Setting up an ELK environment

File tree under the elk directory:

./
├── docker-compose.yml
├── elasticsearch
│   ├── config
│   │   └── elasticsearch.yml
│   ├── data
│   ├── plugins
│   └── logs
├── kibana
│   └── config
│       └── kibana.yml
└── logstash
    ├── config
    │   ├── logstash.yml
    │   └── small-tools
    │       └── demo.config
    └── data
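The layout above can also be created in one pass. The following is a minimal sketch, not part of the original steps: the function name `create_elk_layout` is made up for illustration, and the default root is the `/usr/local/docker/elk` path used throughout this post.

```shell
#!/bin/sh
# Sketch: create the ELK directory layout under a given root.
# create_elk_layout is an illustrative name, not something the post defines.
create_elk_layout() {
  root="${1:-/usr/local/docker/elk}"
  # Every path is listed explicitly, so nothing depends on brace expansion.
  for dir in \
    elasticsearch/config elasticsearch/data elasticsearch/logs elasticsearch/plugins \
    kibana/config \
    logstash/config/small-tools logstash/data
  do
    mkdir -p "$root/$dir" || return 1
  done
  # Same permissive mode the post applies so the containers can write here.
  chmod -R 777 "$root/elasticsearch/data" "$root/logstash/data"
}
```

Calling `create_elk_layout` with no argument uses the default root; passing another path (for example a scratch directory) lets you try the layout without touching `/usr/local`.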

Elasticsearch configuration

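# Create the base directory (-p also creates missing parent directories)

mkdir -p /usr/local/docker/elk

# Create the Elasticsearch directories

mkdir -p /usr/local/docker/elk/elasticsearch/{logs,data,config,plugins}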

Enter the directory

cd /usr/local/docker/elk/elasticsearch

Grant permissions on the data directory

chmod -R 777 data

Enter the config directory

cd config

Create elasticsearch.yml

vim elasticsearch.yml

Contents of elasticsearch.yml

cluster.name: "docker-cluster"
network.host: 0.0.0.0
http.port: 9200
# Enable CORS for Elasticsearch
http.cors.enabled: true
http.cors.allow-origin: "*"
http.cors.allow-headers: Authorization
# Enable security
xpack.security.enabled: true
xpack.security.transport.ssl.enabled: true

Kibana configuration

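Create the kibana directory (a single name must not be wrapped in braces, otherwise the shell creates a directory literally named {config})

mkdir -p /usr/local/docker/elk/kibana/config

Enter the kibana directory

cd /usr/local/docker/elk/kibana

Enter the config directory

cd config

Create kibana.yml

vim kibana.yml

Contents of kibana.yml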

server.name: kibana
server.host: "0.0.0.0"
server.publicBaseUrl: "http://kibana:5601" # already configured; no change needed
elasticsearch.hosts: [ "http://elasticsearch:9200" ] # already configured; no change needed
xpack.monitoring.ui.container.elasticsearch.enabled: true
elasticsearch.username: "elastic" # user account
elasticsearch.password: "123456" # user password
i18n.locale: zh-CN

Logstash configuration

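Create the logstash directory

mkdir -p /usr/local/docker/elk/logstash/{data,config}

Create the small-tools directory

mkdir -p /usr/local/docker/elk/logstash/config/small-tools

Enter the logstash directory

cd /usr/local/docker/elk/logstash

Grant permissions on the data directory

chmod 777 data

Enter the config directory

cd /usr/local/docker/elk/logstash/config

Create the configuration file

vim logstash.yml

Contents of logstash.yml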

http.host: "0.0.0.0"
xpack.monitoring.enabled: true
xpack.monitoring.elasticsearch.hosts: [ "http://elasticsearch:9200" ]
xpack.monitoring.elasticsearch.username: "elastic" # user account
xpack.monitoring.elasticsearch.password: "123456" # user password

Enter small-tools and add the Logstash pipeline configuration for the demo project

cd /usr/local/docker/elk/logstash/config/small-tools

Create demo.config

vim demo.config

Contents of demo.config

input { # input
  tcp {
    mode => "server"
    host => "0.0.0.0" # accept logs from any host
    type => "demo" # set a type to tell the input sources apart
    port => 9999
    codec => json_lines # data format: one JSON object per line
  }
}

filter {
  mutate {
    # Drop fields that should not be indexed
    remove_field => [
      "LOG_MAX_HISTORY_DAY", "LOG_HOME", "APP_NAME",
      "@version", "_score", "port", "level_value", "tags", "_type", "host"
    ]
  }
}

output { # output: console
  stdout {
    codec => rubydebug
  }
}

output { # output: Elasticsearch
  if [type] == "demo" {
    elasticsearch {
      action => "index" # index each event as a document
      hosts => "http://elasticsearch:9200" # ES address and port
      user => "elastic" # ES username
      password => "123456" # ES password
      index => "demo-%{+YYYY.MM.dd}" # index name, one index per day
      codec => "json"
    }
  }
}
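Once the stack is running, the tcp input above can be exercised without a Spring Boot application by sending it one JSON line by hand. The sketch below only builds the line; `make_log_line` is a made-up helper name, and the `message` and `level` fields are an assumed minimal payload, not the full set of fields a real application would send.

```shell
#!/bin/sh
# make_log_line MESSAGE
# Print one JSON object followed by a newline, which is the framing the
# json_lines codec expects. Illustrative helper, not part of the post.
make_log_line() {
  # Escape backslashes and double quotes so the message stays valid JSON.
  # Newlines inside MESSAGE are not handled by this sketch.
  escaped=$(printf '%s' "$1" | sed -e 's/\\/\\\\/g' -e 's/"/\\"/g')
  printf '{"message":"%s","level":"INFO"}\n' "$escaped"
}
```

To send the line, pipe it into a TCP client, for example `make_log_line "hello from the shell" | nc -w 2 localhost 9999`. This assumes `nc` is installed and that port 9999 is published on the local machine; the event should then show up in the Logstash container's stdout through the rubydebug output.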

Add the docker-compose file under the elk directory

cd /usr/local/docker/elk

docker-compose.yml

version: '3.0'

networks:
  elk:
    driver: bridge

services:
  elasticsearch:
    image: registry.cn-hangzhou.aliyuncs.com/zhengqing/elasticsearch:7.14.1
    container_name: elk_elasticsearch
    restart: unless-stopped
    volumes:
      - "/usr/local/docker/elk/elasticsearch/data:/usr/share/elasticsearch/data"
      - "/usr/local/docker/elk/elasticsearch/logs:/usr/share/elasticsearch/logs"
      - "/usr/local/docker/elk/elasticsearch/config/elasticsearch.yml:/usr/share/elasticsearch/config/elasticsearch.yml"
      - "/usr/local/docker/elk/elasticsearch/plugins:/usr/share/elasticsearch/plugins"
    environment:
      TZ: Asia/Shanghai
      LANG: en_US.UTF-8
      TAKE_FILE_OWNERSHIP: "true" # take ownership of the mounted directories
      discovery.type: single-node
      ES_JAVA_OPTS: "-Xmx512m -Xms512m"
      ELASTIC_PASSWORD: "123456" # password for the elastic user
    ports:
      - "9200:9200"
      - "9300:9300"
    networks:
      - elk

  kibana:
    image: registry.cn-hangzhou.aliyuncs.com/zhengqing/kibana:7.14.1
    container_name: elk_kibana
    restart: unless-stopped
    volumes:
      - "/usr/local/docker/elk/kibana/config/kibana.yml:/usr/share/kibana/config/kibana.yml"
    ports:
      - "5601:5601"
    depends_on:
      - elasticsearch
    links:
      - elasticsearch
    networks:
      - elk

  logstash:
    image: registry.cn-hangzhou.aliyuncs.com/zhengqing/logstash:7.14.1
    container_name: elk_logstash
    restart: unless-stopped
    environment:
      LS_JAVA_OPTS: "-Xmx512m -Xms512m"
    volumes:
      - "/usr/local/docker/elk/logstash/data:/usr/share/logstash/data"
      - "/usr/local/docker/elk/logstash/config/logstash.yml:/usr/share/logstash/config/logstash.yml"
      - "/usr/local/docker/elk/logstash/config/small-tools:/usr/share/logstash/config/small-tools"
    command: logstash -f /usr/share/logstash/config/small-tools
    ports:
      - "9600:9600"
      - "9999:9999"
    depends_on:
      - elasticsearch
    networks:
      - elk
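The compose file bind-mounts several single configuration files. If one of them is missing on the host, Docker creates a directory at that path instead, and the container then fails in confusing ways. A small pre-flight check helps here. This is a sketch only: `check_elk_configs` is a made-up name, and the file list assumes the layout used in this post.

```shell
#!/bin/sh
# check_elk_configs [ROOT]
# Report every bind-mounted config file that does not exist as a regular file.
# Returns 0 when all files are present, 1 otherwise. Illustrative helper.
check_elk_configs() {
  root="${1:-/usr/local/docker/elk}"
  missing=0
  for file in \
    elasticsearch/config/elasticsearch.yml \
    kibana/config/kibana.yml \
    logstash/config/logstash.yml \
    logstash/config/small-tools/demo.config
  do
    if [ ! -f "$root/$file" ]; then
      echo "missing: $root/$file" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

A typical use is `check_elk_configs && docker-compose up -d`, so the stack only starts when every file is in place. Running `docker-compose config -q` beforehand additionally validates the syntax of docker-compose.yml itself.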

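View the elk directory tree

yum -y install tree

# Show 4 levels below the current directory

tree -L 4

# Show all files and directories, including hidden ones

tree -a

# Show sizes

tree -s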

[root@devops-01 elk]# pwd

/home/test/demo/elk

[root@devops-01 elk]# tree ./
./
├── docker-compose.yml
├── elasticsearch
│   ├── config
│   │   └── elasticsearch.yml
│   ├── data
│   ├── plugins
│   └── logs
├── kibana
│   └── config
│       └── kibana.yml
└── logstash
    ├── config
    │   ├── logstash.yml
    │   └── small-tools
    │       └── demo.config
    └── data

Start the ELK stack

docker-compose up -d

Once it is up, check that the containers started successfully

[root@devops-01 elk]# docker ps | grep elk
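A running container does not yet mean Elasticsearch is accepting requests, since the JVM needs some time to start. The following sketch polls the cluster health endpoint until it answers. The function name `wait_for_es` and the `ES_WAIT_INTERVAL` variable are made up for illustration; the defaults assume port 9200 is published locally and the `elastic` / `123456` credentials configured earlier.

```shell
#!/bin/sh
# wait_for_es [URL] [USER] [PASSWORD] [RETRIES]
# Poll the cluster health endpoint until it answers or the retries run out.
# Illustrative helper; ES_WAIT_INTERVAL (seconds between attempts) is an
# assumed knob, not something the post defines.
wait_for_es() {
  url="${1:-http://localhost:9200}"
  user="${2:-elastic}"
  pass="${3:-123456}"
  retries="${4:-30}"
  attempt=1
  while [ "$attempt" -le "$retries" ]; do
    # -f makes curl fail on HTTP errors such as 401, -sS keeps it quiet.
    if curl -fsS -u "$user:$pass" "$url/_cluster/health" >/dev/null 2>&1; then
      echo "Elasticsearch answered on attempt $attempt"
      return 0
    fi
    attempt=$((attempt + 1))
    sleep "${ES_WAIT_INTERVAL:-2}"
  done
  echo "Elasticsearch did not answer after $retries attempts" >&2
  return 1
}
```

Running `wait_for_es` right after `docker-compose up -d` gives a clear signal before you open Kibana. If it never succeeds, `docker logs elk_elasticsearch` is the next place to look.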

Then open the Kibana page

http://10.10.22.174:5601/app/home#/

Integrating Spring Boot with Logstash

pom.xml

<!--logstash start-->
<dependency>
    <groupId>net.logstash.logback</groupId>
    <artifactId>logstash-logback-encoder</artifactId>
    <version>6.6</version>
</dependency>
<!--logstash end-->

logback-spring.xml

<springProfile name="uat">
    <appender name="logstash" class="net.logstash.logback.appender.LogstashTcpSocketAppender">
        <destination>10.10.22.174:9999</destination>
        <encoder charset="UTF-8" class="net.logstash.logback.encoder.LogstashEncoder"/>
    </appender>
    <root level="INFO">
        <appender-ref ref="logstash"/>
    </root>
</springProfile>

Start the project; Logstash then collects its logs
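Before moving on to Kibana, it can help to confirm that the day's index actually exists. The sketch below derives the index name that the `demo-%{+YYYY.MM.dd}` setting produces for events arriving today. `demo_index_name` is a made-up helper name; it uses UTC because Logstash formats the date from the event's `@timestamp`, which is stored in UTC.

```shell
#!/bin/sh
# demo_index_name
# Print the index name for events logged today, matching the
# demo-%{+YYYY.MM.dd} pattern. Illustrative helper, not part of the post.
demo_index_name() {
  printf 'demo-%s\n' "$(date -u +%Y.%m.%d)"
}
```

With the stack running you could then query `curl -u elastic:123456 "http://localhost:9200/_cat/indices/$(demo_index_name)?v"`, assuming port 9200 is published locally. A 404 response means no log event has reached Elasticsearch yet today.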

Configuring Kibana to view the logs

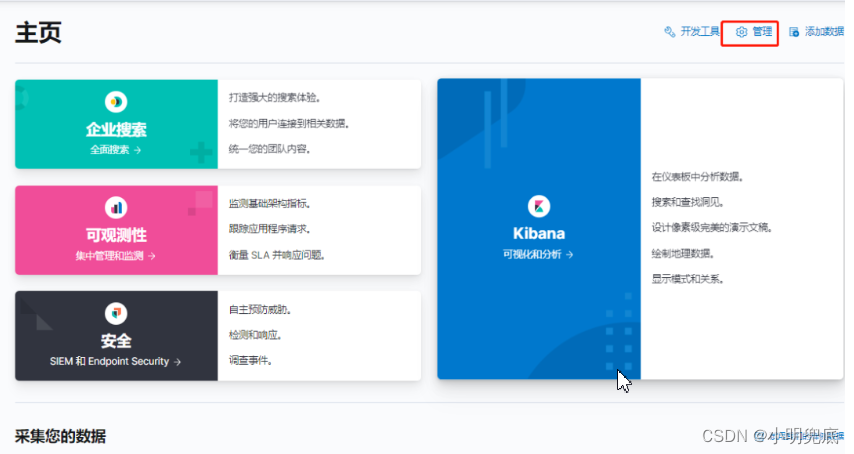

Open http://10.10.22.174:5601/app/home#/ and enter the ES username and password to reach the Kibana console

Click the Management button to open the management page

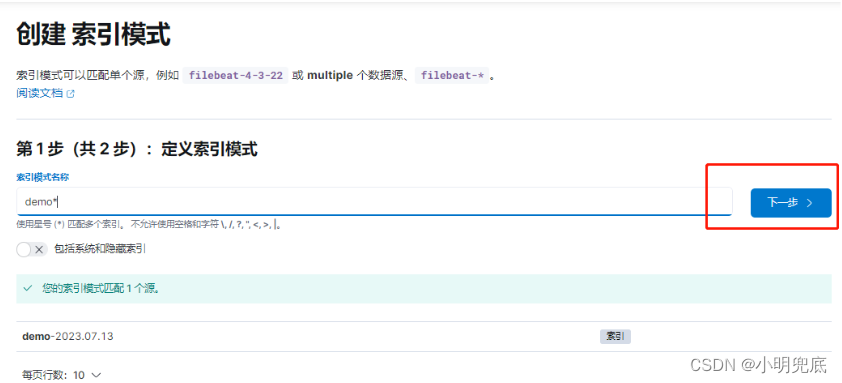

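Click Index Patterns, then Create index pattern

Enter the pattern that matches the log indices (for the index name configured above, something like demo-*), then click Next step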

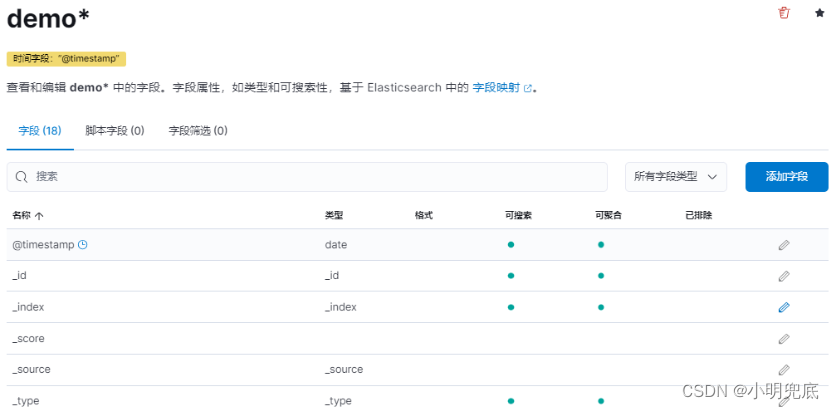

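Select @timestamp as the time field, then click Create index pattern

If the result looks like the following, the index pattern was created successfully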

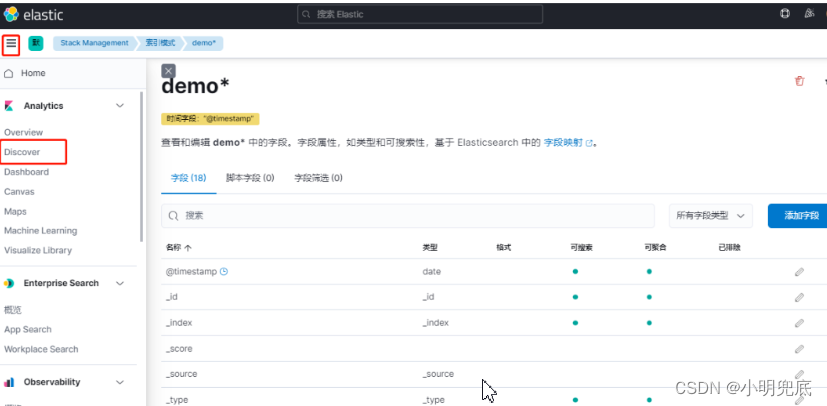

View the logs

In the menu, click Discover
