1. Create two files, file1.txt and file2.txt, in a local folder and type some text into each.
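The post does not show what was typed into the files. Here is a minimal sketch with hypothetical sample sentences. It assumes you run it from `/usr/local/hadoop`, so that the files land in `/usr/local/hadoop/input`, which is the local path the upload commands use:

```shell
# Hypothetical sample contents: the original post does not show what was typed in.
# Run from /usr/local/hadoop so the files end up in input/, the path used by the upload step.
mkdir -p input
printf 'hello world\nhello hadoop\n' > input/file1.txt
printf 'hello hive\nhive hadoop\n' > input/file2.txt
```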

2. Start the Hadoop (HDFS) and HBase services in a terminal:

```shell
start-dfs.sh
start-hbase.sh
```

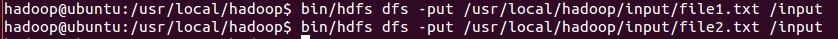

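3. Upload file1.txt and file2.txt to HDFS. The `/input` directory must already exist (create it with `hdfs dfs -mkdir -p /input` if needed). Otherwise the first `-put` creates a file named `/input`, and the second one fails.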

```shell
hdfs dfs -put /usr/local/hadoop/input/file1.txt /input
hdfs dfs -put /usr/local/hadoop/input/file2.txt /input
```

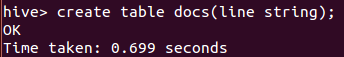

4. Open the Hive shell, create a table, load the data into it, and count the words with a query that Hive runs as MapReduce jobs.

```sql
create table docs(line string);
```

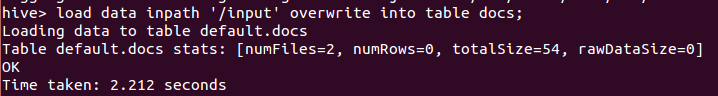

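```sql
load data inpath '/input' overwrite into table docs;
```

Without the `local` keyword, `load data inpath` moves the files under `/input` into the table's storage directory instead of copying them. `overwrite` replaces any data already in `docs`. Next, build the word-count table. `split(line, ' ')` turns each line into an array of words, `explode` emits one row per array element, and the outer query groups and counts them:

```sql
create table word_count as
select word, count(1) as count
from (select explode(split(line, ' ')) as word from docs) w
group by word
order by word;
```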

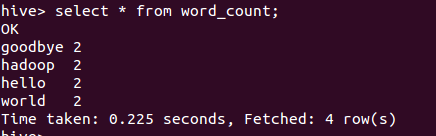

5. Query the result with a `select` statement:

```sql
select * from word_count;
```

The results are correct, and the `word_count` query ran successfully.
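You can check the counts on small inputs by reproducing the query's logic locally. The sketch below is a shell approximation, not what Hive executes, and the function name `local_word_count` is hypothetical. Here `tr ' ' '\n'` stands in for `explode(split(line, ' '))`, and in both cases consecutive spaces yield empty words. `sort | uniq -c` stands in for `group by word ... order by word`. The ordering is expected to match Hive only for plain ASCII words.

```shell
# Hypothetical helper: approximates the Hive word-count query for local sanity checks.
local_word_count() {
    # awk 1: print every line (adding a newline if a file lacks one), one "row" per line
    # tr:    split on single spaces, one word per line (like explode(split(line, ' ')))
    # sort | uniq -c: group identical words and count them, in byte order
    # last awk: reformat "  COUNT WORD" as "WORD<TAB>COUNT"
    awk 1 "$@" | tr ' ' '\n' | LC_ALL=C sort | uniq -c |
        awk '{ c = $1; sub(/^ *[0-9]+ /, ""); print $0 "\t" c }'
}
```

For example, `local_word_count /usr/local/hadoop/input/file1.txt /usr/local/hadoop/input/file2.txt` should print the same word/count pairs as `select * from word_count;`. Run it before the `load data` step, because that step moves the files out of `/input` on HDFS, although the local copies stay in place.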
