This section uses an example to show how to recognize CAPTCHAs with a neural network, using the MNIST dataset.

1. Dataset

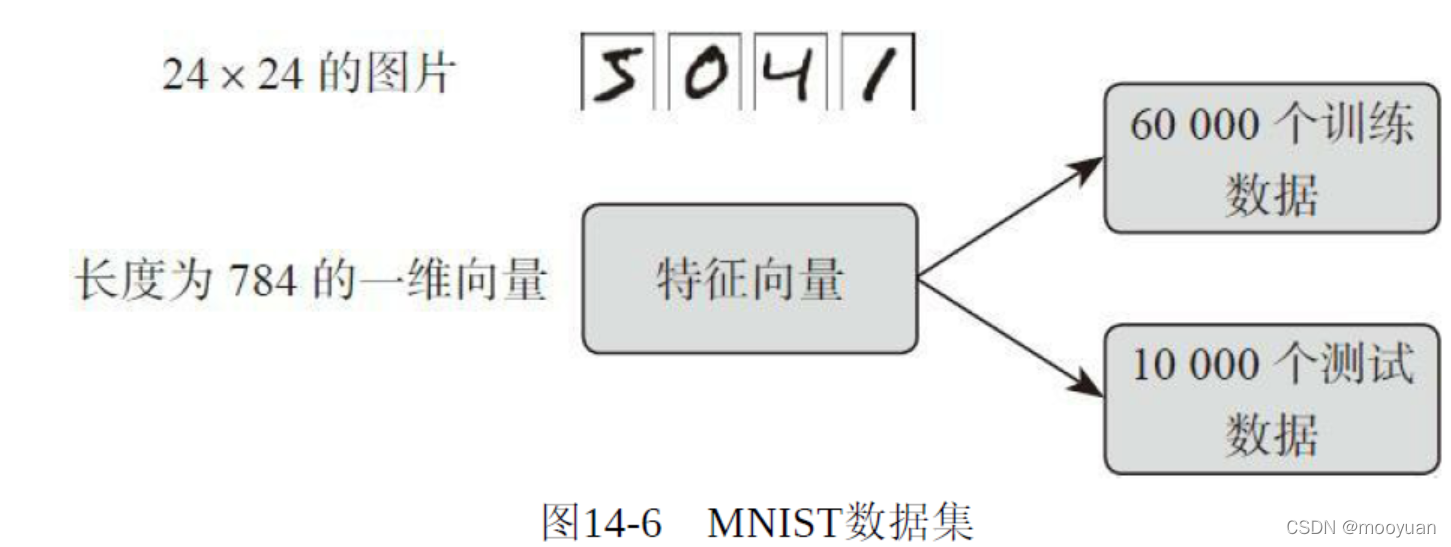

MNIST实际上是计算机视觉入门数据集,如下图14-6所示:

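In the code below, the first 60,000 samples are used as the training set and the remaining samples as the test set. To normalize the data, the pixel values in data are divided by 255 so that they fall in the range 0 to 1.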

mnist = fetch_mldata("MNIST original")
# rescale the data, use the traditional train/test split
X, y = mnist.data / 255., mnist.target
X_train, X_test = X[:60000], X[60000:]
y_train, y_test = y[:60000], y[60000:]

If the dataset fails to download, it can be fetched manually from the following address and placed in the designated directory:

https://raw.githubusercontent.com/amplab/datascience-sp14/master/lab7/mldata/mnist-original.mat

The designated directory can be found as follows:

from sklearn.datasets import get_data_home
print(get_data_home())

While running, the program reported the following warning:

C:\ProgramData\Anaconda3\lib\site-packages\sklearn\utils\deprecation.py:85: DeprecationWarning: Function fetch_mldata is deprecated; fetch_mldata was deprecated in version 0.20 and will be removed in version 0.22. Please use fetch_openml.

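  warnings.warn(msg, category=DeprecationWarning)

This happens because the function used in the book's companion GitHub code is no longer supported: fetch_mldata is deprecated in newer versions of sklearn, and its replacement is fetch_openml. The code can be changed as follows: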

from sklearn.datasets import fetch_openml
mnist = fetch_openml('mnist_784')

2. Neural network training
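Before training, data loaded through fetch_openml may need a little preparation. The following is a minimal sketch, not the book's code. It assumes a recent scikit-learn release, where mnist_784 labels come back as strings and the features may be returned as a pandas DataFrame unless as_frame=False is passed. The helper names load_mnist_openml and prepare are hypothetical, and the tiny demo arrays only stand in for the real data so the sketch runs without a download.

```python
import numpy as np


def load_mnist_openml():
    """Download MNIST from OpenML (needs network access; not called in this sketch)."""
    from sklearn.datasets import fetch_openml
    # as_frame=False asks for NumPy arrays instead of a pandas DataFrame.
    X, y = fetch_openml("mnist_784", version=1, return_X_y=True, as_frame=False)
    return X, y


def prepare(X, y, n_train=60000):
    """Scale pixels to [0, 1], convert labels to integers, split by position."""
    X = np.asarray(X, dtype=np.float64) / 255.0
    y = np.asarray(y).astype(np.int64)  # labels arrive as strings such as "5"
    return X[:n_train], X[n_train:], y[:n_train], y[n_train:]


# Tiny synthetic stand-in (assumption) so the sketch runs offline.
demo_X = np.array([[0, 255], [51, 102], [255, 0]])
demo_y = np.array(["5", "0", "4"])
X_train, X_test, y_train, y_test = prepare(demo_X, demo_y, n_train=2)
print(X_train.max(), y_train.dtype, X_test.shape)
```

With the real data, calling prepare(*load_mnist_openml()) would give the same four arrays that the training step below expects.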

Use a single hidden layer with 50 neurons and train with the sgd solver:

mlp = MLPClassifier(hidden_layer_sizes=(50,), max_iter=10, alpha=1e-4,
                    solver='sgd', verbose=10, tol=1e-4, random_state=1,
                    learning_rate_init=.1)
mlp.fit(X_train, y_train)

3. Complete code

print(__doc__)
import matplotlib.pyplot as plt
# fetch_mldata is deprecated (see the DeprecationWarning shown earlier);
# on newer sklearn versions use fetch_openml('mnist_784') instead
from sklearn.datasets import fetch_mldata
from sklearn.neural_network import MLPClassifier
from sklearn.datasets import get_data_home

# show the directory where sklearn looks for downloaded datasets
print(get_data_home())
mnist = fetch_mldata("MNIST original")
# rescale the data, use the traditional train/test split
X, y = mnist.data / 255., mnist.target
X_train, X_test = X[:60000], X[60000:]
y_train, y_test = y[:60000], y[60000:]

mlp = MLPClassifier(hidden_layer_sizes=(50,), max_iter=10, alpha=1e-4,
                    solver='sgd', verbose=10, tol=1e-4, random_state=1,
                    learning_rate_init=.1)
mlp.fit(X_train, y_train)
print("Training set score: %f" % mlp.score(X_train, y_train))
print("Test set score: %f" % mlp.score(X_test, y_test))

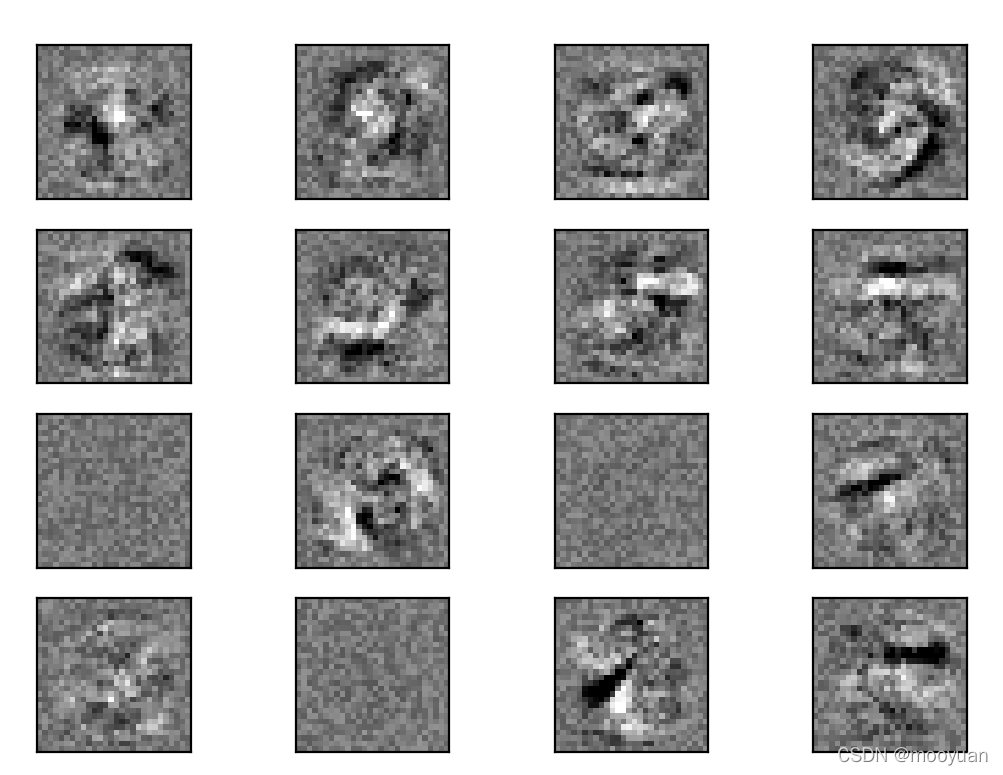

fig, axes = plt.subplots(4, 4)
# use global min / max to ensure all weights are shown on the same scale
vmin, vmax = mlp.coefs_[0].min(), mlp.coefs_[0].max()
for coef, ax in zip(mlp.coefs_[0].T, axes.ravel()):
    ax.matshow(coef.reshape(28, 28), cmap=plt.cm.gray, vmin=.5 * vmin,
               vmax=.5 * vmax)
    ax.set_xticks(())
    ax.set_yticks(())

plt.show()

4. Results

As shown below, accuracy on the test set reaches about 97%, which is a fairly good result.

Iteration 1, loss = 0.32212731
Iteration 2, loss = 0.15738787
Iteration 3, loss = 0.11647274
Iteration 4, loss = 0.09631113
Iteration 5, loss = 0.08074513
Iteration 6, loss = 0.07163224
Iteration 7, loss = 0.06351392
Iteration 8, loss = 0.05694146
Iteration 9, loss = 0.05213487
Iteration 10, loss = 0.04708320
Training set score: 0.985733
Test set score: 0.971000

Visualization

The following warning appeared during the run:

C:\ProgramData\Anaconda3\lib\site-packages\sklearn\neural_network\multilayer_perceptron.py:566: ConvergenceWarning: Stochastic Optimizer: Maximum iterations (10) reached and the optimization hasn't converged yet.

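  % self.max_iter, ConvergenceWarning)

This is because the number of iterations is too small for training to reach the best fit. Increasing the iteration count (max_iter) in the configuration resolves it.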
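The effect of the iteration budget can be tried on a small scale. The sketch below is an illustration under stated assumptions, not the book's code. It swaps MNIST for scikit-learn's bundled load_digits dataset (8x8 images, no download needed) and keeps the same MLPClassifier settings. It then compares two arbitrarily chosen budgets, 5 and 300, recording whether a ConvergenceWarning is raised. The helper name fit_and_check is hypothetical.

```python
import warnings

from sklearn.datasets import load_digits
from sklearn.exceptions import ConvergenceWarning
from sklearn.neural_network import MLPClassifier

# Stand-in data (assumption): 8x8 digit images bundled with scikit-learn.
X, y = load_digits(return_X_y=True)
X = X / 16.0  # load_digits pixel values range from 0 to 16


def fit_and_check(max_iter):
    """Train once; return (iterations run, whether a ConvergenceWarning was raised)."""
    mlp = MLPClassifier(hidden_layer_sizes=(50,), max_iter=max_iter, alpha=1e-4,
                        solver='sgd', tol=1e-4, random_state=1,
                        learning_rate_init=.1)
    with warnings.catch_warnings(record=True) as caught:
        warnings.simplefilter("always")  # make sure the warning is not filtered out
        mlp.fit(X, y)
    warned = any(issubclass(w.category, ConvergenceWarning) for w in caught)
    return mlp.n_iter_, warned


results = {budget: fit_and_check(budget) for budget in (5, 300)}
for budget, (n_iter, warned) in results.items():
    print(f"max_iter={budget}: ran {n_iter} iterations, ConvergenceWarning={warned}")
```

With the small budget the optimizer stops at max_iter and warns. Whether a larger budget is enough to converge depends on the data and settings, so the flag is printed rather than assumed.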
