Article link: http://blog.csdn.net/njnu_mjn/article/details/52882402. Please do not repost.

Indexes in the NDB storage engine

The NDB storage engine supports two index types (see CREATE INDEX Syntax): hash and btree.

| Storage Engine | Permissible Index Types |
|---|---|
| InnoDB | BTREE |
| MyISAM | BTREE |
| MEMORY/HEAP | HASH, BTREE |
| NDB | HASH, BTREE |

hash

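The hash index implemented by MySQL is a one-to-one key-value mapping that does not allow duplicate keys, so a hash index can only be specified on a primary key or a unique column, and USING HASH must be written explicitly. For example: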

create table test

(

id bigint,

seq int,

description varchar(200),

primary key (id, seq) using hash

) engine = ndb

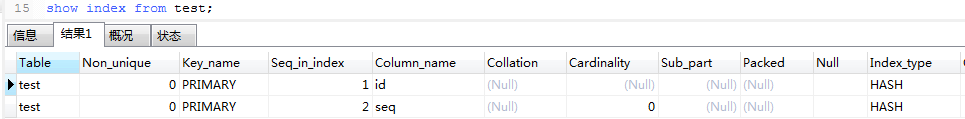

partition by key(id);查看创建的索引show index from test;

An example of using hash on a unique column:

    create table test2
    (
        id int,
        unique (id) using hash
    ) engine = ndb;

btree

The NDB storage engine implements btree indexes with a T-tree data structure (see CREATE INDEX Syntax):

BTREE indexes are implemented by the NDB storage engine as T-tree indexes.

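If a primary key or unique index does not explicitly specify USING HASH, NDB creates an ordered (btree) index on the same columns in addition to the hash index: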

For indexes on NDB table columns, the USING option can be specified only for a unique index or primary key. USING HASH prevents the creation of an ordered index; otherwise, creating a unique index or primary key on an NDB table automatically results in the creation of both an ordered index and a hash index, each of which indexes the same set of columns.
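The practical difference between the two index kinds can be illustrated with a toy model. The sketch below is plain Python, not NDB internals: `ToyUniqueIndex` and its methods are hypothetical names, a `dict` stands in for the hash index, and a sorted list stands in for the ordered index. Passing `ordered=False` models a key declared with USING HASH, where only the hash index exists.

```python
import bisect


class ToyUniqueIndex:
    """Toy model of a unique key backed by a hash index and, optionally, an ordered index."""

    def __init__(self, ordered=True):
        self.hash_index = {}                          # key -> row, at most one row per key
        self.ordered_keys = [] if ordered else None   # None models USING HASH

    def insert(self, key, row):
        # A unique key rejects duplicates.
        if key in self.hash_index:
            raise KeyError(f"duplicate key {key!r}")
        self.hash_index[key] = row
        if self.ordered_keys is not None:
            bisect.insort(self.ordered_keys, key)

    def lookup(self, key):
        # Equality lookup: served by the hash index in both configurations.
        return self.hash_index.get(key)

    def range(self, low, high):
        # Range lookup: cheap with an ordered index, a full scan without one.
        if self.ordered_keys is None:
            return sorted(k for k in self.hash_index if low <= k <= high)
        start = bisect.bisect_left(self.ordered_keys, low)
        stop = bisect.bisect_right(self.ordered_keys, high)
        return self.ordered_keys[start:stop]


if __name__ == "__main__":
    index = ToyUniqueIndex(ordered=True)
    for key in (5, 1, 3):
        index.insert(key, f"row{key}")
    print(index.lookup(3), index.range(2, 5))
```

In this model both configurations answer equality lookups equally well; the ordered structure only pays off for range conditions, which is the trade-off USING HASH makes.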

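Non-unique indexes created with CREATE INDEX are always btree (ordered) indexes; the NDB storage engine accepts the USING keyword only for a unique index or primary key.

For example: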

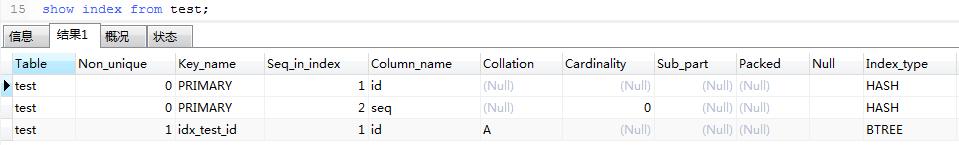

create index idx_test_id on test

(

id

);查看创建的索引show index from test;

Batching operations into a single execute

MySQL NDB lets you define several operations and execute them in one call (see The NdbTransaction Class):

Several operations can be defined on the same NdbTransaction object, in which case they are executed in parallel. When all operations are defined, the execute() method sends them to the NDB kernel for execution.

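You can obtain several NdbOperation objects by calling NdbTransaction::getNdbOperation() repeatedly. Inserts, deletes, and updates defined on an NdbOperation only change local state. When NdbTransaction::execute() is called, all NdbOperation objects under that NdbTransaction are sent to the server together and executed in parallel.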
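The define-then-execute pattern can be modeled in a few lines. The following is a toy Python simulation, not the NDB API: `ToyServer`, `ToyTransaction`, `define_read`, and the round-trip counter are hypothetical names invented for illustration, and the workload sizes are arbitrary. It only shows that defining operations is local and that each `execute()` costs one trip to the server no matter how many operations it carries.

```python
class ToyServer:
    """Stands in for the data nodes and counts the batches it receives."""

    def __init__(self, rows):
        self.rows = rows          # primary key -> row
        self.round_trips = 0

    def run_batch(self, keys):
        self.round_trips += 1     # one round trip per batch, whatever its size
        return [self.rows.get(key) for key in keys]


class ToyTransaction:
    """Hypothetical stand-in for a transaction that buffers operations locally."""

    def __init__(self, server):
        self.server = server
        self.pending = []

    def define_read(self, key):
        # Purely local: nothing is sent to the server yet.
        self.pending.append(key)

    def execute(self):
        # All buffered operations travel to the server together.
        keys, self.pending = self.pending, []
        return self.server.run_batch(keys)


def run_workload(transactions, ops_per_transaction):
    """Return (round trips, rows found) for a read-only workload on one key."""
    server = ToyServer({(1, 0): "this is a description"})
    found = 0
    for _ in range(transactions):
        txn = ToyTransaction(server)
        for _ in range(ops_per_transaction):
            txn.define_read((1, 0))
        found += sum(row is not None for row in txn.execute())
    return server.round_trips, found


if __name__ == "__main__":
    for txns, ops in [(3, 20), (60, 1)]:
        trips, found = run_workload(txns, ops)
        print(f"{txns} transactions x {ops} ops: {found} reads, {trips} round trips")
```

Both workloads perform the same number of reads, but the batched one makes far fewer trips to the server, which is where a batching gain would be expected to come from.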

Performance test

hash

Using the test table created above, insert one test row:

    insert into test(id, seq, description)
    values(1, 0, 'this is a description');

Then look up the record by primary key (that is, through the hash index). The results are:

| Transactions | Operations per transaction | Total time (s) |
|---|---|---|
| 150 | 20 | 0.14578 |
| 300 | 10 | 0.20860 |
| 600 | 5 | 0.35560 |
| 3000 | 1 | 1.47742 |
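Every row of the table performs the same 3000 reads in total, so the rows can be compared directly. The short script below is only arithmetic on the figures in the table: it derives the average cost per operation and per transaction, and the speedup relative to the unbatched case (one operation per transaction). The function and field names are illustrative.

```python
# (transactions, operations per transaction, total seconds), copied from the table
RESULTS = [
    (150, 20, 0.14578),
    (300, 10, 0.20860),
    (600, 5, 0.35560),
    (3000, 1, 1.47742),
]


def summarize(results):
    """Derive per-operation and per-transaction averages for each table row."""
    # The unbatched row (one operation per transaction) is the baseline.
    baseline = next(total for _, ops, total in results if ops == 1)
    rows = []
    for txns, ops, total in results:
        total_ops = txns * ops
        rows.append({
            "transactions": txns,
            "ops_per_txn": ops,
            "total_ops": total_ops,
            "us_per_op": total / total_ops * 1e6,
            "ms_per_txn": total / txns * 1e3,
            "speedup": baseline / total,
        })
    return rows


if __name__ == "__main__":
    for row in summarize(RESULTS):
        print(
            f"{row['transactions']:>5} txns x {row['ops_per_txn']:>2} ops: "
            f"{row['us_per_op']:7.1f} us/op, {row['ms_per_txn']:.3f} ms/txn, "
            f"{row['speedup']:.1f}x vs unbatched"
        )
```

On these numbers, batching 20 reads per transaction is roughly ten times faster overall than issuing one read per transaction, while the cost of a single transaction only about doubles.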

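Take the first row as an example: there are 150 transactions in total, each with 20 operations, and those 20 operations are sent to the server together. The time spent in each function is: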

Func:[close ] Total:[ 0.04425s] Count:[ 150] 0~0.005s[ 150]

Func:[execute ] Total:[ 0.09601s] Count:[ 150] 0~0.005s[ 150]

Func:[readTuple ] Total:[ 0.00031s] Count:[ 3000] 0~0.005s[ 3000]

Func:[getNdbOperation] Total:[ 0.00171s] Count:[ 3000] 0~0.005s[ 3000]

Func:[all ] Total:[ 0.14578s]
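To see where the time goes, the profile above can be turned into shares of the total. The snippet below is only arithmetic on the logged figures; the `(unaccounted)` entry is an illustrative label for the part of the total that none of the four listed functions covers.

```python
# Seconds per function, copied from the profile of the 150 x 20 run
PROFILE = {
    "close": 0.04425,
    "execute": 0.09601,
    "readTuple": 0.00031,
    "getNdbOperation": 0.00171,
}
TOTAL = 0.14578  # the "all" line


def shares(profile, total):
    """Return each function's fraction of the total, plus the unprofiled remainder."""
    result = {name: seconds / total for name, seconds in profile.items()}
    result["(unaccounted)"] = (total - sum(profile.values())) / total
    return result


if __name__ == "__main__":
    for name, share in shares(PROFILE, TOTAL).items():
        print(f"{name:<16} {share:6.1%}")
```

The 3000 calls each to getNdbOperation and readTuple together account for under 2% of the run, which is consistent with operation definition being purely local; execute and close, the calls that involve the server, account for almost all of the rest.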
