import os

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '3'  # or any of {'0', '1', '2'}; set this before importing tensorflow

import tensorflow as tf
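
A typo in the value is easy to miss, so it can help to wrap the assignment in a small helper. The following is only a sketch: `set_tf_log_level` and `VALID_LEVELS` are hypothetical names introduced here, not part of TensorFlow, and the helper does nothing beyond validating the value and writing the environment variable.

```python
import os

# The four values discussed in this post (hypothetical constant, not a TensorFlow name).
VALID_LEVELS = {"0", "1", "2", "3"}


def set_tf_log_level(level):
    """Validate `level` and store it in TF_CPP_MIN_LOG_LEVEL.

    Hypothetical helper: call it before `import tensorflow`.
    """
    level = str(level)
    if level not in VALID_LEVELS:
        raise ValueError(
            "TF_CPP_MIN_LOG_LEVEL must be one of 0, 1, 2, 3; got %r" % level
        )
    os.environ["TF_CPP_MIN_LOG_LEVEL"] = level
    return level


set_tf_log_level(2)
# import tensorflow as tf  # the import would follow here
```

Accepting both `2` and `'2'` is a convenience; the environment variable itself is always stored as a string.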

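import os

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '0'  # default: show all logs (INFO, WARNING, ERROR)

# os.environ['TF_CPP_MIN_LOG_LEVEL'] = '1'  # filter out INFO

# os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'  # filter out INFO and WARNING

# os.environ['TF_CPP_MIN_LOG_LEVEL'] = '3'  # filter out INFO, WARNING and ERROR

import tensorflow as tf  # imported after the variable is set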

v1 = tf.constant([1.0, 2.0, 3.0], shape=[3], name='v1')

v2 = tf.constant([1.0, 2.0, 3.0], shape=[3], name='v2')

sumV12 = v1 + v2

with tf.Session(config=tf.ConfigProto(log_device_placement=True)) as sess:
    print(sess.run(sumV12))

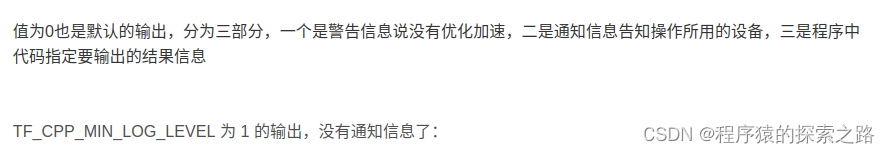

With TF_CPP_MIN_LOG_LEVEL set to '0', both WARNING (W) and INFO (I) messages are printed:

2018-04-21 14:59:09.910415: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.1 instructions, but these are available on your machine and could speed up CPU computations.

2018-04-21 14:59:09.910442: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.2 instructions, but these are available on your machine and could speed up CPU computations.

2018-04-21 14:59:09.910448: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX instructions, but these are available on your machine and could speed up CPU computations.

2018-04-21 14:59:09.910453: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX2 instructions, but these are available on your machine and could speed up CPU computations.

2018-04-21 14:59:09.910457: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use FMA instructions, but these are available on your machine and could speed up CPU computations.

2018-04-21 14:59:09.911260: I tensorflow/core/common_runtime/direct_session.cc:300] Device mapping:

2018-04-21 14:59:09.911816: I tensorflow/core/common_runtime/simple_placer.cc:872] add: (Add)/job:localhost/replica:0/task:0/cpu:0

2018-04-21 14:59:09.911835: I tensorflow/core/common_runtime/simple_placer.cc:872] v2: (Const)/job:localhost/replica:0/task:0/cpu:0

2018-04-21 14:59:09.911841: I tensorflow/core/common_runtime/simple_placer.cc:872] v1: (Const)/job:localhost/replica:0/task:0/cpu:0

Device mapping: no known devices.

add: (Add): /job:localhost/replica:0/task:0/cpu:0

v2: (Const): /job:localhost/replica:0/task:0/cpu:0

v1: (Const): /job:localhost/replica:0/task:0/cpu:0

[ 2. 4. 6.]

With TF_CPP_MIN_LOG_LEVEL set to '1', the INFO (I) messages are filtered out and only the WARNING (W) messages remain:

2018-04-21 14:59:09.910415: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.1 instructions, but these are available on your machine and could speed up CPU computations.

2018-04-21 14:59:09.910442: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use SSE4.2 instructions, but these are available on your machine and could speed up CPU computations.

2018-04-21 14:59:09.910448: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX instructions, but these are available on your machine and could speed up CPU computations.

2018-04-21 14:59:09.910453: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use AVX2 instructions, but these are available on your machine and could speed up CPU computations.

2018-04-21 14:59:09.910457: W tensorflow/core/platform/cpu_feature_guard.cc:45] The TensorFlow library wasn't compiled to use FMA instructions, but these are available on your machine and could speed up CPU computations.

Device mapping: no known devices.

add: (Add): /job:localhost/replica:0/task:0/cpu:0

v2: (Const): /job:localhost/replica:0/task:0/cpu:0

v1: (Const): /job:localhost/replica:0/task:0/cpu:0

[ 2. 4. 6.]

With TF_CPP_MIN_LOG_LEVEL set to '2', the WARNING messages are filtered out as well. '3' gives the same output here, because this run produces no ERROR messages:

Device mapping: no known devices.

add: (Add): /job:localhost/replica:0/task:0/cpu:0

v2: (Const): /job:localhost/replica:0/task:0/cpu:0

v1: (Const): /job:localhost/replica:0/task:0/cpu:0

[ 2. 4. 6.]
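
The outputs above are consistent with a simple severity threshold: a log line is printed only if its severity is at or above TF_CPP_MIN_LOG_LEVEL. The sketch below models that rule in plain Python using three of the log lines from this post. It is an illustration, not TensorFlow's actual implementation; the `SEVERITY` table and the `visible` function are names made up for this example, and the parsing assumes the `<timestamp>: <letter> <source>] <message>` layout seen above.

```python
# Severity letters as they appear after the timestamp, mapped to numeric levels.
SEVERITY = {"I": 0, "W": 1, "E": 2, "F": 3}


def visible(log_lines, min_level):
    """Return the lines whose severity is at or above min_level."""
    kept = []
    for line in log_lines:
        # The first ": " follows the timestamp; the next character is the severity letter.
        letter = line.split(": ", 1)[1][0]
        if SEVERITY[letter] >= min_level:
            kept.append(line)
    return kept


sample = [
    "2018-04-21 14:59:09.910448: W tensorflow/core/platform/cpu_feature_guard.cc:45] "
    "The TensorFlow library wasn't compiled to use AVX instructions, but these are "
    "available on your machine and could speed up CPU computations.",
    "2018-04-21 14:59:09.911260: I tensorflow/core/common_runtime/direct_session.cc:300] "
    "Device mapping:",
    "2018-04-21 14:59:09.911835: I tensorflow/core/common_runtime/simple_placer.cc:872] "
    "v2: (Const)/job:localhost/replica:0/task:0/cpu:0",
]

for level in range(4):
    print(level, len(visible(sample, level)))
```

The unprefixed lines such as `Device mapping: no known devices.` and the result `[ 2. 4. 6.]` carry no severity letter and appear at every level in the outputs above, so they are left out of the sample.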
