One-dimensional case (a single sample):

import torch

import torch.nn as nn

import math

criterion = nn.CrossEntropyLoss()

output = torch.randn(1, 5, requires_grad=True)

label = torch.empty(1, dtype=torch.long).random_(5)

loss = criterion(output, label)

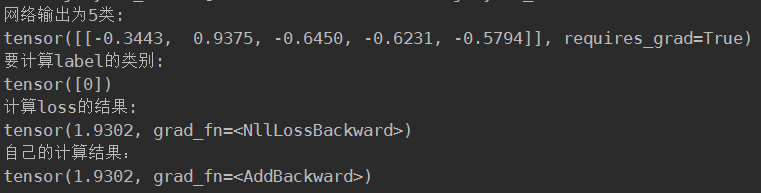

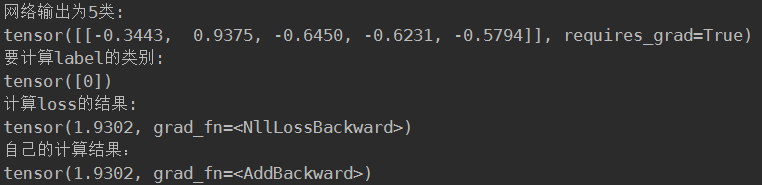

print("Network output for 5 classes:")

print(output)

print("Target class label:")

print(label)

print("Loss computed by nn.CrossEntropyLoss:")

print(loss)

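# First term: the negated logit of the target class.

first = 0

for i in range(1):

    first = -output[i][label[i]]

# Second term: the sum of exp() over all class logits.

second = 0

for i in range(1):

    for j in range(5):

        second += math.exp(output[i][j])

# loss = -x[class] + log(sum_j exp(x[j]))

res = first + math.log(second)

print("Manually computed result:")

print(res)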
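For reference, the manual computation above corresponds to the per-sample formula below. The symbols are my own notation, not the post's: $x$ is the logit vector and $c$ is the target class index. In the code, `first` is $-x_c$ and `second` is $\sum_j \exp(x_j)$.

```latex
\ell(x, c) = -\log\frac{\exp(x_c)}{\sum_j \exp(x_j)} = -x_c + \log\sum_j \exp(x_j)
```

The two forms are equal because $\log(a/b) = \log a - \log b$ and $\log\exp(x_c) = x_c$.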

Multi-dimensional case (a batch of samples):

import torch

import torch.nn as nn

import math

criterion = nn.CrossEntropyLoss()

output = torch.randn(3, 5, requires_grad=True)

label = torch.empty(3, dtype=torch.long).random_(5)

loss = criterion(output, label)

print("Network output for 3 samples, 5 classes each:")

print(output)

print("Target class labels:")

print(label)

print("Loss computed by nn.CrossEntropyLoss:")

print(loss)

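# First term per sample: the negated logit of the target class.

first = [0, 0, 0]

for i in range(3):

    first[i] = -output[i][label[i]]

# Second term per sample: the sum of exp() over all class logits.

second = [0, 0, 0]

for i in range(3):

    for j in range(5):

        second[i] += math.exp(output[i][j])

# Sum the per-sample losses.

res = 0

for i in range(3):

    res += (first[i] + math.log(second[i]))

print("Manually computed result:")

# nn.CrossEntropyLoss averages over the batch by default (reduction='mean').

print(res/3)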
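The batch computation above can also be written in plain Python without PyTorch. The sketch below is not the post's code: the function names `log_sum_exp` and `cross_entropy_mean` are hypothetical, and subtracting the row maximum before exponentiating is an added precaution, since calling `math.exp` directly on a large logit raises `OverflowError`. The sample values are the logits and labels that appear in eg1 of this post.

```python
import math


def log_sum_exp(row):
    """Compute log(sum(exp(x))) for a list of floats, shifted by the max for stability."""
    m = max(row)
    return m + math.log(sum(math.exp(x - m) for x in row))


def cross_entropy_mean(logits, labels):
    """Average over samples of -x[c] + log(sum_j exp(x[j]))."""
    losses = [log_sum_exp(row) - row[c] for row, c in zip(logits, labels)]
    return sum(losses) / len(losses)


# Logits and labels copied from eg1 in this post.
logits = [
    [0.4368, 2.2467, 0.3081, -2.0271, 0.4074],
    [0.3731, 0.8674, -0.5239, -0.3642, -1.4294],
    [-1.9317, -0.4155, 0.1015, -0.7857, 0.4398],
]
labels = [2, 1, 4]

print(cross_entropy_mean(logits, labels))

# A logit of 1000 would overflow math.exp(1000.0); the shifted version does not.
print(cross_entropy_mean([[1000.0, 0.0]], [0]))
```

The printed value for the eg1 data should agree with what `nn.CrossEntropyLoss` returns for the same inputs, up to floating-point precision.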

eg1:

import torch

import torch.nn as nn

import math

criterion = nn.CrossEntropyLoss()

# output = torch.randn(3, 5, requires_grad=True)

# label = torch.empty(3, dtype=torch.long).random_(5)

output=torch.tensor([[ 0.4368, 2.2467, 0.3081, -2.0271, 0.4074],

[ 0.3731, 0.8674, -0.5239, -0.3642, -1.4294],

[-1.9317, -0.4155, 0.1015, -0.7857, 0.4398]])

label=torch.tensor([ 2, 1, 4])

loss = criterion(output, label)

print(loss)

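# First term per sample: the negated logit of the target class.

first = [0, 0, 0]

for i in range(3):

    first[i] = -output[i][label[i]]

print(first)

# Second term per sample: the sum of exp() over all class logits.

second = [0, 0, 0]

for i in range(3):

    for j in range(5):

        second[i] += math.exp(output[i][j])

# Sum the per-sample losses, then average over the 3 samples.

res = 0

for i in range(3):

    res += (first[i] + math.log(second[i]))

print("Manually computed result:")

print(res/3)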

label = torch.empty(3, dtype=torch.long).random_(5)

print(label)

eg2:

import numpy as np

from torch import nn

import torch as t

import math

bbb=t.tensor([[ 0.1736, 0.5441, 0.2823],

])

ccc=t.tensor([0])

aaa=nn.CrossEntropyLoss()

print(bbb,ccc)

print(aaa(bbb,ccc))

print(-bbb[0][0]+np.log((np.exp(0.1736)+np.exp(0.5441)+np.exp(0.2823))))

# print(np.exp(0.1736))

# print(np.exp(0.5441))

# print(np.exp(0.2823))

eg3:

import numpy as np

from torch import nn

import torch as t

import math

bbb=t.tensor([[ 0.1736, 0.5441, 0.2823],

])

ccc=t.tensor([0])

aaa=nn.CrossEntropyLoss()

print(bbb,ccc)

print(aaa(bbb,ccc))

print(-np.log(np.exp(bbb[0][0])/((np.exp(0.1736)+np.exp(0.5441)+np.exp(0.2823)))))

# print(np.exp(0.1736))

# print(np.exp(0.5441))

# print(np.exp(0.2823))

eg4:

loss(predictions,labels)

tensor(1.1652)

labels

tensor([ 2, 1])

predictions

tensor([[ 0.2928, 0.1549, 0.5523],

[ 0.7676, 0.0461, 0.1863]])

-np.log(np.exp(0.0461)/(np.exp(0.7676)+np.exp(0.0461)+np.exp(0.1863)))

1.4369924776751017

-np.log(np.exp(0.5523)/(np.exp(0.2928)+np.exp(0.1549)+np.exp(0.5523)))

0.8934324010163079

1.43+0.89

2.32

2.32/2

1.16
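The hand calculation in eg4 rounds each per-sample loss to two decimals before averaging, which is why it ends at 1.16 rather than 1.1652. A minimal sketch that repeats the same steps without rounding, using only the standard library (the variable names `per_sample` and `mean_loss` are my own):

```python
import math

# Values copied from the eg4 session.
predictions = [[0.2928, 0.1549, 0.5523],
               [0.7676, 0.0461, 0.1863]]
labels = [2, 1]

# Per-sample loss: -log(softmax(row)[target class]).
per_sample = [
    -math.log(math.exp(row[c]) / sum(math.exp(x) for x in row))
    for row, c in zip(predictions, labels)
]

# Average over the batch, as the default 'mean' reduction does.
mean_loss = sum(per_sample) / len(per_sample)

print(per_sample)
print(round(mean_loss, 4))  # close to the tensor(1.1652) reported in the session
```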
