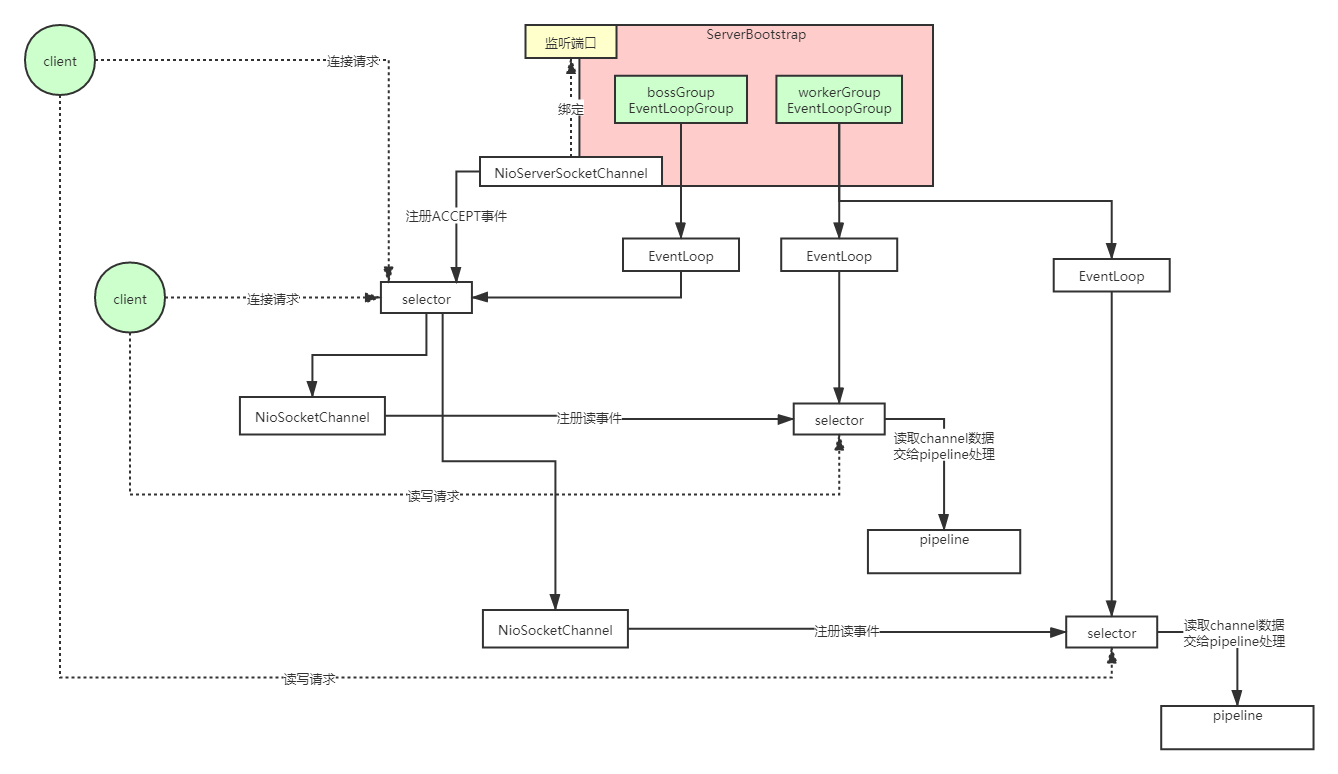

Netty thread model diagram

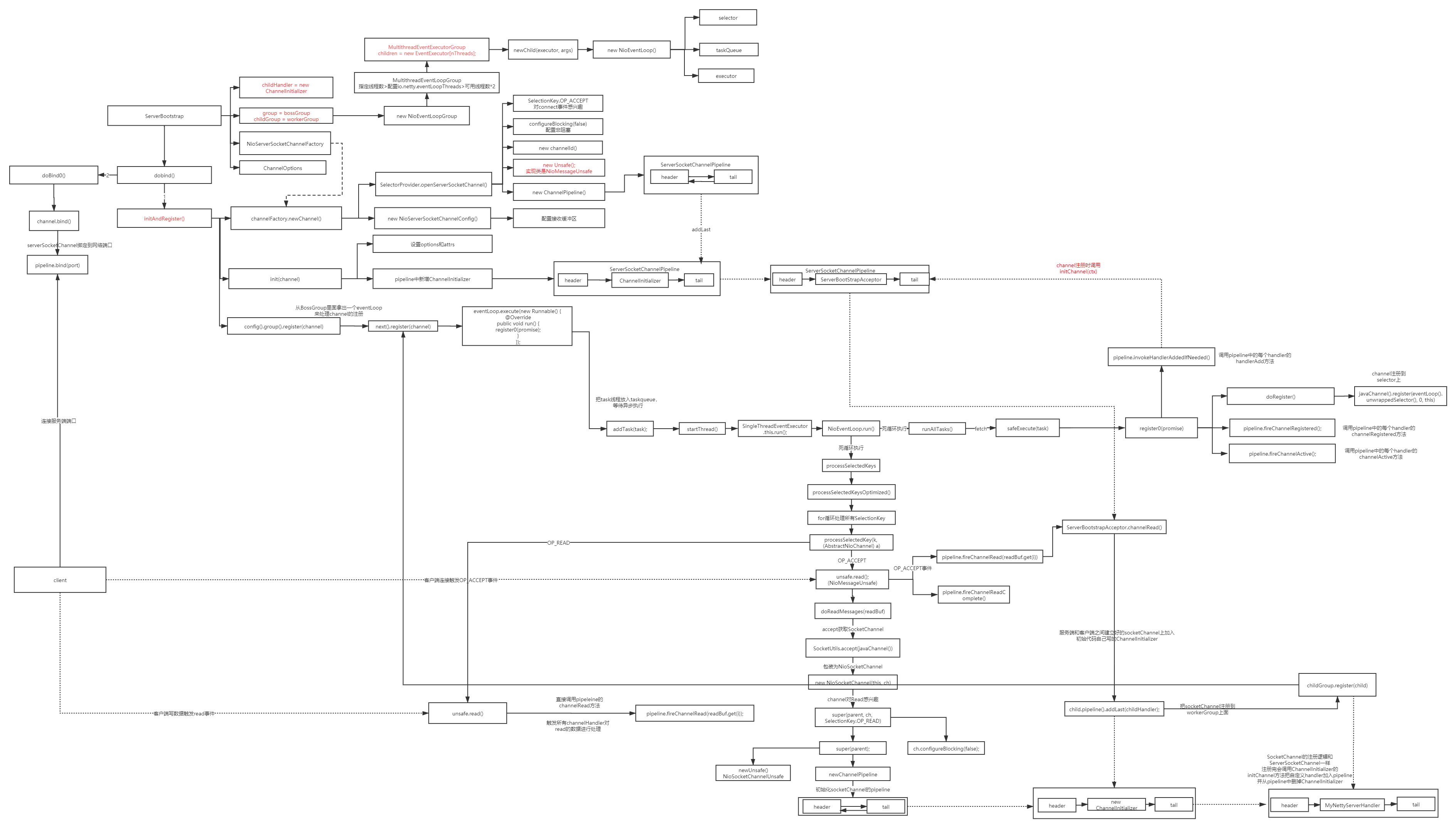

Flowchart of the source-code execution path

Netty server code

// Thread group that accepts connections

EventLoopGroup bossGroup = new NioEventLoopGroup(1);

// Thread group that handles I/O tasks

EventLoopGroup workerGroup = new NioEventLoopGroup();

try {

// Bootstrap the server

ServerBootstrap serverBootstrap = new ServerBootstrap();

serverBootstrap.group(bossGroup, workerGroup)

.channel(NioServerSocketChannel.class)

// Length of the pending-connection (accept) queue

.option(ChannelOption.SO_BACKLOG,1024)

// Create the channel initializer and set its initial parameters

.childHandler(new ChannelInitializer<SocketChannel>() {

@Override

protected void initChannel(SocketChannel ch) throws Exception {

// Add handlers to the channels served by the worker group

ch.pipeline().addLast(new NettyServerHandler());

ch.pipeline().addLast(new Server2Handler());

ch.pipeline().addLast(new Server3Handler());

}

});

// Start the server and bind the port

ChannelFuture channelFuture = serverBootstrap.bind(9000).sync();

System.out.println("netty server start ...");

// Wait for the server channel to close

channelFuture.channel().closeFuture().sync();

} catch (InterruptedException e) {

e.printStackTrace();

} finally {

bossGroup.shutdownGracefully();

workerGroup.shutdownGracefully();

}

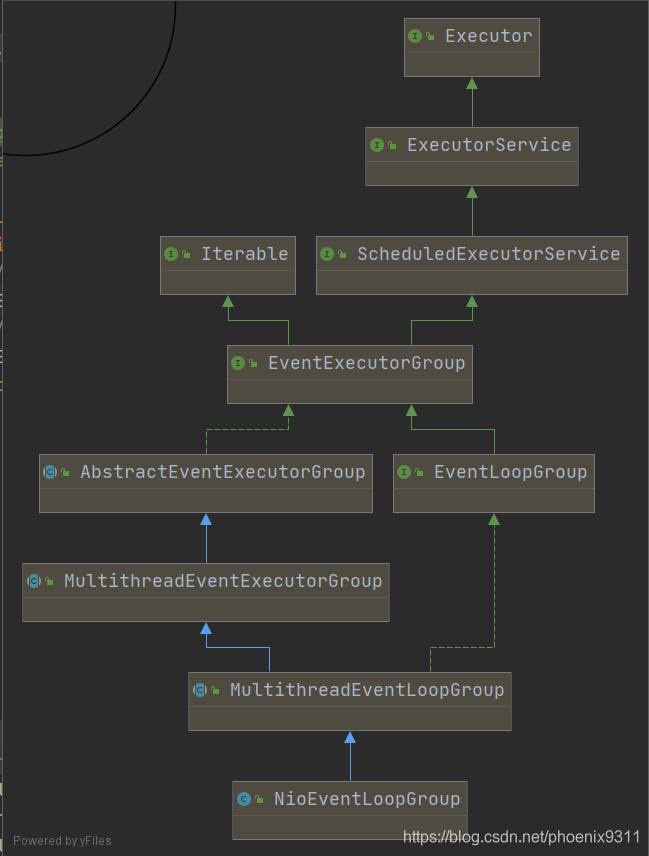

EventLoopGroup inheritance hierarchy

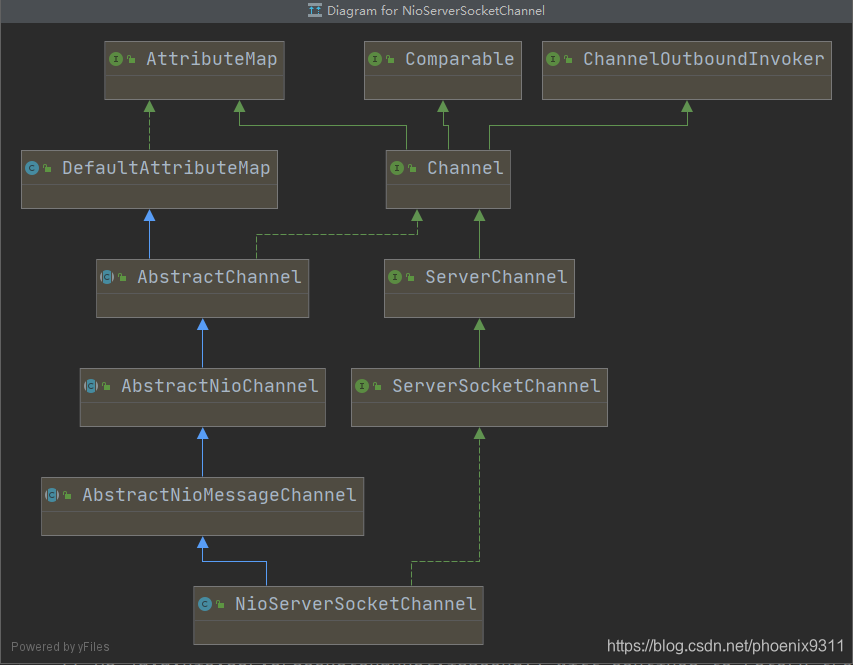

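NioServerSocketChannel inheritance hierarchy

1. Creating the thread groups

Two thread groups of type NioEventLoopGroup are created. One handles client connection events and is named bossGroup; the other handles client read/write requests and is named workerGroup.

The constructor of the parent class MultithreadEventLoopGroup shows that a user-specified thread count takes precedence; if none is given (nThreads == 0), the default thread count is used.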

protected MultithreadEventLoopGroup(int nThreads, Executor executor, Object... args) {

super(nThreads == 0 ? DEFAULT_EVENT_LOOP_THREADS : nThreads, executor, args);

}

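The default thread count is initialized in a static block: the value of the io.netty.eventLoopThreads system property is used if it is set, otherwise the default is twice the number of available processors, and the result is never less than 1.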

private static final int DEFAULT_EVENT_LOOP_THREADS;

static {

DEFAULT_EVENT_LOOP_THREADS = Math.max(1, SystemPropertyUtil.getInt(

"io.netty.eventLoopThreads", NettyRuntime.availableProcessors() * 2));

if (logger.isDebugEnabled()) {

logger.debug("-Dio.netty.eventLoopThreads: {}", DEFAULT_EVENT_LOOP_THREADS);

}

}
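As a rough illustration of the rule above, the following sketch reproduces the same arithmetic with JDK calls only. The class name DefaultThreadsSketch and its two methods are made up for this example, and it reads the processor count from Runtime rather than from Netty's NettyRuntime, so treat it as an approximation and not as Netty's own code.

```java
final class DefaultThreadsSketch {
    // Hypothetical helper: property value if set, else 2 x CPUs, never below 1.
    static int defaultEventLoopThreads() {
        int fallback = Runtime.getRuntime().availableProcessors() * 2;
        return Math.max(1, Integer.getInteger("io.netty.eventLoopThreads", fallback));
    }

    // Hypothetical helper: 0 means "not specified", so the default is used.
    static int resolve(int nThreads) {
        return nThreads == 0 ? defaultEventLoopThreads() : nThreads;
    }

    public static void main(String[] args) {
        System.out.println("boss threads   = " + resolve(1));
        System.out.println("worker threads = " + resolve(0));
    }
}
```

Here resolve(1) models the bossGroup created with an explicit count of 1, and resolve(0) models the workerGroup created without a count.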

Following the constructor chain upward leads to the MultithreadEventExecutorGroup constructor (some exception-handling code is omitted):

protected MultithreadEventExecutorGroup(int nThreads, Executor executor,

EventExecutorChooserFactory chooserFactory, Object... args) {

if (executor == null) {

executor = new ThreadPerTaskExecutor(newDefaultThreadFactory());

}

children = new EventExecutor[nThreads];

for (int i = 0; i < nThreads; i ++) {

boolean success = false;

try {

children[i] = newChild(executor, args);

success = true;

}

chooser = chooserFactory.newChooser(children);

}

Here an EventExecutor array named children is initialized. Each element is created by the newChild method, which for NioEventLoopGroup creates a NioEventLoop object:

protected EventLoop newChild(Executor executor, Object... args) throws Exception {

EventLoopTaskQueueFactory queueFactory = args.length == 4 ? (EventLoopTaskQueueFactory) args[3] : null;

return new NioEventLoop(this, executor, (SelectorProvider) args[0],

((SelectStrategyFactory) args[1]).newSelectStrategy(), (RejectedExecutionHandler) args[2], queueFactory);

}

Internally, the NioEventLoop creates its selector, its taskQueue, and so on:

NioEventLoop(NioEventLoopGroup parent, Executor executor, SelectorProvider selectorProvider,

SelectStrategy strategy, RejectedExecutionHandler rejectedExecutionHandler,

EventLoopTaskQueueFactory queueFactory) {

super(parent, executor, false, newTaskQueue(queueFactory), newTaskQueue(queueFactory),

rejectedExecutionHandler);

this.provider = ObjectUtil.checkNotNull(selectorProvider, "selectorProvider");

this.selectStrategy = ObjectUtil.checkNotNull(strategy, "selectStrategy");

final SelectorTuple selectorTuple = openSelector();

this.selector = selectorTuple.selector;

this.unwrappedSelector = selectorTuple.unwrappedSelector;

}

protected SingleThreadEventLoop(EventLoopGroup parent, Executor executor,

boolean addTaskWakesUp, Queue<Runnable> taskQueue, Queue<Runnable> tailTaskQueue,

RejectedExecutionHandler rejectedExecutionHandler) {

super(parent, executor, addTaskWakesUp, taskQueue, rejectedExecutionHandler);

tailTasks = ObjectUtil.checkNotNull(tailTaskQueue, "tailTaskQueue");

}

2. Building the ServerBootstrap object

The constructor has an empty body and does no work:

private final Map<ChannelOption<?>, Object> childOptions = new LinkedHashMap<ChannelOption<?>, Object>();

private final Map<AttributeKey<?>, Object> childAttrs = new ConcurrentHashMap<AttributeKey<?>, Object>();

private final ServerBootstrapConfig config = new ServerBootstrapConfig(this);

private volatile EventLoopGroup childGroup;

private volatile ChannelHandler childHandler;

public ServerBootstrap() { }

The group method assigns bossGroup to the group field (held by the parent class) and workerGroup to the childGroup field:

public ServerBootstrap group(EventLoopGroup parentGroup, EventLoopGroup childGroup) {

super.group(parentGroup);

if (this.childGroup != null) {

throw new IllegalStateException("childGroup set already");

}

this.childGroup = ObjectUtil.checkNotNull(childGroup, "childGroup");

return this;

}

The channel method sets the channelFactory field to a Channel factory, which later creates instances reflectively through the class's no-arg constructor:

public B channel(Class<? extends C> channelClass) {

return channelFactory(new ReflectiveChannelFactory<C>(

ObjectUtil.checkNotNull(channelClass, "channelClass")

));

}

public ReflectiveChannelFactory(Class<? extends T> clazz) {

ObjectUtil.checkNotNull(clazz, "clazz");

try {

this.constructor = clazz.getConstructor();

} catch (NoSuchMethodException e) {

throw new IllegalArgumentException("Class " + StringUtil.simpleClassName(clazz) +

" does not have a public non-arg constructor", e);

}

}

@Override

public T newChannel() {

try {

return constructor.newInstance();

} catch (Throwable t) {

throw new ChannelException("Unable to create Channel from class " + constructor.getDeclaringClass(), t);

}

}
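The idea behind this factory can be tried out without Netty. The sketch below is a simplified stand-in, assuming a hypothetical class name ReflectiveFactorySketch: it looks up the public no-arg constructor once, fails early if there is none, and creates a fresh instance on every call.

```java
import java.lang.reflect.Constructor;

final class ReflectiveFactorySketch<T> {
    private final Constructor<? extends T> constructor;

    ReflectiveFactorySketch(Class<? extends T> clazz) {
        try {
            // Resolved once up front, so a missing constructor fails at configuration time.
            this.constructor = clazz.getConstructor();
        } catch (NoSuchMethodException e) {
            throw new IllegalArgumentException(
                    clazz.getSimpleName() + " does not have a public no-arg constructor", e);
        }
    }

    T newInstance() {
        try {
            return constructor.newInstance();
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("Unable to create " + constructor.getDeclaringClass(), e);
        }
    }

    public static void main(String[] args) {
        ReflectiveFactorySketch<StringBuilder> factory = new ReflectiveFactorySketch<>(StringBuilder.class);
        System.out.println(factory.newInstance() != factory.newInstance());
    }
}
```

Resolving the constructor in the factory's own constructor is why a class without a public no-arg constructor is rejected when channel(...) is called, not later when the channel is created.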

Parameters are configured through the option method:

public <T> B option(ChannelOption<T> option, T value) {

ObjectUtil.checkNotNull(option, "option");

synchronized (options) {

if (value == null) {

options.remove(option);

} else {

options.put(option, value);

}

}

return self();

}

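A new ChannelInitializer is assigned to the childHandler field. It is used later during startup, so we skip it for now. Internally this is a plain assignment; the call is recorded here for reference: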

.childHandler(new ChannelInitializer<SocketChannel>() {

@Override

protected void initChannel(SocketChannel ch) throws Exception {

// Add handlers to the channels served by the worker group

ch.pipeline().addLast(new NettyServerHandler());

ch.pipeline().addLast(new Server2Handler());

ch.pipeline().addLast(new Server3Handler());

}

});

3. Starting the server and binding the port

serverBootstrap.bind(9000).sync()

Following the call chain leads to AbstractBootstrap#doBind. Two methods matter here: initAndRegister() and doBind0(regFuture, channel, localAddress, promise).

doBind0 is fairly simple and contains no complicated logic; it ultimately calls the JDK API sun.nio.ch.ServerSocketChannelImpl#bind. We therefore concentrate on initAndRegister.

initAndRegister

final ChannelFuture initAndRegister() {

Channel channel = null;

try {

channel = channelFactory.newChannel();

init(channel);

} catch (Throwable t) {

if (channel != null) {

// channel can be null if newChannel crashed (eg SocketException("too many open files"))

channel.unsafe().closeForcibly();

// as the Channel is not registered yet we need to force the usage of the GlobalEventExecutor

return new DefaultChannelPromise(channel, GlobalEventExecutor.INSTANCE).setFailure(t);

}

// as the Channel is not registered yet we need to force the usage of the GlobalEventExecutor

return new DefaultChannelPromise(new FailedChannel(), GlobalEventExecutor.INSTANCE).setFailure(t);

}

ChannelFuture regFuture = config().group().register(channel);

if (regFuture.cause() != null) {

if (channel.isRegistered()) {

channel.close();

} else {

channel.unsafe().closeForcibly();

}

}

return regFuture;

}

We follow three calls in turn: channelFactory.newChannel(), init(channel), and config().group().register(channel).

3.1 channelFactory.newChannel(): creating the ServerSocketChannel object

In step 2, when building the ServerBootstrap, channelFactory was set to a factory that creates NioServerSocketChannel objects reflectively. Calling newChannel() here therefore creates a NioServerSocketChannel through its no-arg constructor:

public NioServerSocketChannel() {

this(newSocket(DEFAULT_SELECTOR_PROVIDER));

}

The newSocket method uses the default SelectorProvider to create a java.nio ServerSocketChannel. Next, step into the this(...) call:

public NioServerSocketChannel(ServerSocketChannel channel) {

super(null, channel, SelectionKey.OP_ACCEPT);

config = new NioServerSocketChannelConfig(this, javaChannel().socket());

}

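NioServerSocketChannelConfig has little logic: it simply wraps the current object (the NioServerSocketChannel) and the ServerSocketChannel's socket. Next, step into super.

Continuing up to the AbstractNioChannel constructor, we can see that the channel records OP_ACCEPT as the event it is interested in and is configured as non-blocking: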

protected AbstractNioChannel(Channel parent, SelectableChannel ch, int readInterestOp) {

super(parent);

this.ch = ch;

this.readInterestOp = readInterestOp;

try {

ch.configureBlocking(false);

} catch (IOException e) {

try {

ch.close();

} catch (IOException e2) {

logger.warn(

"Failed to close a partially initialized socket.", e2);

}

throw new ChannelException("Failed to enter non-blocking mode.", e);

}

}

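One more level up, the parent constructor creates an unsafe object. Because the concrete class is NioServerSocketChannel, the UML diagram shows that the implementation invoked is AbstractNioMessageChannel#newUnsafe, so the unsafe object here is a NioMessageUnsafe.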

protected AbstractChannel(Channel parent) {

this.parent = parent;

id = newId();

unsafe = newUnsafe();

pipeline = newChannelPipeline();

}

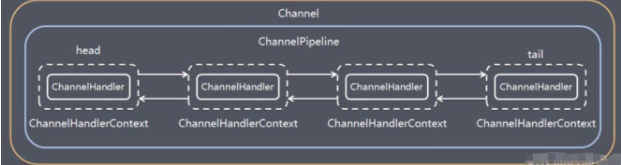

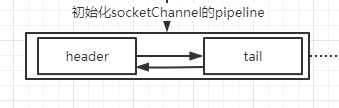

A ChannelPipeline object is also initialized here. It is a doubly linked list of ChannelHandlerContext nodes, each of which holds a ChannelHandler. At this point it contains only head and tail:

protected DefaultChannelPipeline(Channel channel) {

this.channel = ObjectUtil.checkNotNull(channel, "channel");

succeededFuture = new SucceededChannelFuture(channel, null);

voidPromise = new VoidChannelPromise(channel, true);

tail = new TailContext(this);

head = new HeadContext(this);

head.next = tail;

tail.prev = head;

}

3.2 init(channel): initializing the Channel

void init(Channel channel) {

setChannelOptions(channel, newOptionsArray(), logger);

setAttributes(channel, attrs0().entrySet().toArray(EMPTY_ATTRIBUTE_ARRAY));

ChannelPipeline p = channel.pipeline();

final EventLoopGroup currentChildGroup = childGroup;

final ChannelHandler currentChildHandler = childHandler;

final Entry<ChannelOption<?>, Object>[] currentChildOptions;

synchronized (childOptions) {

currentChildOptions = childOptions.entrySet().toArray(EMPTY_OPTION_ARRAY);

}

final Entry<AttributeKey<?>, Object>[] currentChildAttrs = childAttrs.entrySet().toArray(EMPTY_ATTRIBUTE_ARRAY);

p.addLast(new ChannelInitializer<Channel>() {

@Override

public void initChannel(final Channel ch) {

final ChannelPipeline pipeline = ch.pipeline();

ChannelHandler handler = config.handler();

if (handler != null) {

pipeline.addLast(handler);

}

ch.eventLoop().execute(new Runnable() {

@Override

public void run() {

pipeline.addLast(new ServerBootstrapAcceptor(

ch, currentChildGroup, currentChildHandler, currentChildOptions, currentChildAttrs));

}

});

}

});

}

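This first sets the options and attrs. childGroup is the workerGroup we defined, and childHandler is our anonymous ChannelInitializer. Finally, another anonymous ChannelInitializer is added to the pipeline of the ServerSocketChannel created above.

Looking at the addLast method, the handler is first wrapped in a ChannelHandlerContext and then added to the pipeline by addLast0: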

public final ChannelPipeline addLast(EventExecutorGroup group, String name, ChannelHandler handler) {

final AbstractChannelHandlerContext newCtx;

synchronized (this) {

checkMultiplicity(handler);

newCtx = newContext(group, filterName(name, handler), handler);

addLast0(newCtx);

// If the registered is false it means that the channel was not registered on an eventLoop yet.

// In this case we add the context to the pipeline and add a task that will call

// ChannelHandler.handlerAdded(...) once the channel is registered.

if (!registered) {

newCtx.setAddPending();

callHandlerCallbackLater(newCtx, true);

return this;

}

EventExecutor executor = newCtx.executor();

if (!executor.inEventLoop()) {

callHandlerAddedInEventLoop(newCtx, executor);

return this;

}

}

callHandlerAdded0(newCtx);

return this;

}

addLast0 shows that the new context is not really appended at the very end of the list; it is inserted immediately before tail:

private void addLast0(AbstractChannelHandlerContext newCtx) {

AbstractChannelHandlerContext prev = tail.prev;

newCtx.prev = prev;

newCtx.next = tail;

prev.next = newCtx;

tail.prev = newCtx;

}
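The pointer manipulation in addLast0 is easier to follow on a stripped-down model. The sketch below uses hypothetical names (PipelineSketch, Ctx) and stores only a handler name per node, but it keeps the same head and tail sentinels and the same four assignments.

```java
import java.util.ArrayList;
import java.util.List;

final class PipelineSketch {
    // Stand-in for a ChannelHandlerContext: a named node in a doubly linked list.
    static final class Ctx {
        final String name;
        Ctx prev;
        Ctx next;
        Ctx(String name) { this.name = name; }
    }

    private final Ctx head = new Ctx("head");
    private final Ctx tail = new Ctx("tail");

    PipelineSketch() {
        head.next = tail;
        tail.prev = head;
    }

    // Same four assignments as addLast0: the new node goes right before tail.
    PipelineSketch addLast(String name) {
        Ctx newCtx = new Ctx(name);
        Ctx prev = tail.prev;
        newCtx.prev = prev;
        newCtx.next = tail;
        prev.next = newCtx;
        tail.prev = newCtx;
        return this;
    }

    // Walks the list from head to tail and collects the node names.
    List<String> names() {
        List<String> out = new ArrayList<>();
        for (Ctx c = head; c != null; c = c.next) {
            out.add(c.name);
        }
        return out;
    }

    public static void main(String[] args) {
        System.out.println(new PipelineSketch().addLast("initializer").names());
    }
}
```

Because head and tail are always present, insertion never has to handle an empty list or a null neighbour.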

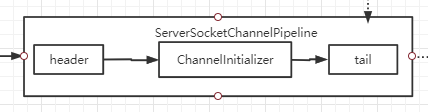

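After the ChannelInitializer has been added, the ServerSocketChannel's pipeline looks like the figure above.

When the ServerSocketChannel is registered with the selector, the initChannel method of this ChannelInitializer is invoked. The exact trigger and call path are covered later, at the point where it fires.

3.3 config().group().register(channel)

config() returns the ServerBootstrapConfig that was created, wrapping this, when the ServerBootstrap was constructed. config().group() returns the ServerBootstrap's group field, that is, the bossGroup we created at the start. So the channel is about to be registered with bossGroup. Because bossGroup is a NioEventLoopGroup, the UML class diagram shows that the method invoked is MultithreadEventLoopGroup#register: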

@Override

public ChannelFuture register(Channel channel) {

return next().register(channel);

}

next() selects one NioEventLoop from bossGroup according to the chooser strategy. Following the UML class diagram, the call continues as follows:

@Override

public ChannelFuture register(Channel channel) {

return register(new DefaultChannelPromise(channel, this));

}

@Override

public ChannelFuture register(final ChannelPromise promise) {

ObjectUtil.checkNotNull(promise, "promise");

promise.channel().unsafe().register(this, promise);

return promise;

}
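Returning briefly to the next() call mentioned above: the chooser is created by chooserFactory.newChooser(children) in the group constructor. To my understanding the default factory hands out children round-robin, with a bit-mask shortcut when the number of children is a power of two. The sketch below models that behaviour under this assumption; the class name RoundRobinChooserSketch is hypothetical and plain strings stand in for the event loops.

```java
import java.util.concurrent.atomic.AtomicInteger;

final class RoundRobinChooserSketch {
    private final AtomicInteger idx = new AtomicInteger();
    private final String[] children; // strings stand in for NioEventLoop instances

    RoundRobinChooserSketch(String... children) {
        this.children = children;
    }

    static boolean isPowerOfTwo(int val) {
        return (val & -val) == val;
    }

    String next() {
        int i = idx.getAndIncrement();
        if (isPowerOfTwo(children.length)) {
            // Cheap modulo for power-of-two sizes.
            return children[i & (children.length - 1)];
        }
        // Math.abs keeps the index valid after the counter overflows.
        return children[Math.abs(i % children.length)];
    }

    public static void main(String[] args) {
        RoundRobinChooserSketch boss = new RoundRobinChooserSketch("boss-0");
        System.out.println(boss.next() + " " + boss.next());
    }
}
```

With a bossGroup of one thread, as in the server code at the top, every call to next() returns the same event loop.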

promise.channel() returns the serverSocketChannel we passed in, and unsafe() returns the NioMessageUnsafe created when the serverSocketChannel was constructed (see 3.1):

public final void register(EventLoop eventLoop, final ChannelPromise promise) {

ObjectUtil.checkNotNull(eventLoop, "eventLoop");

if (isRegistered()) {

promise.setFailure(new IllegalStateException("registered to an event loop already"));

return;

}

if (!isCompatible(eventLoop)) {

promise.setFailure(

new IllegalStateException("incompatible event loop type: " + eventLoop.getClass().getName()));

return;

}

AbstractChannel.this.eventLoop = eventLoop;

if (eventLoop.inEventLoop()) {

register0(promise);

} else {

try {

eventLoop.execute(new Runnable() {

@Override

public void run() {

register0(promise);

}

});

} catch (Throwable t) {

logger.warn(

"Force-closing a channel whose registration task was not accepted by an event loop: {}",

AbstractChannel.this, t);

closeForcibly();

closeFuture.setClosed();

safeSetFailure(promise, t);

}

}

}

The current thread is main, not the NioEventLoop's own thread, so the else branch is taken:

eventLoop.execute(new Runnable() {

@Override

public void run() {

register0(promise);

}

});

We look at this in two parts.

3.3.1 eventLoop.execute

private void execute(Runnable task, boolean immediate) {

boolean inEventLoop = inEventLoop();

addTask(task);

if (!inEventLoop) {

startThread();

if (isShutdown()) {

boolean reject = false;

try {

if (removeTask(task)) {

reject = true;

}

} catch (UnsupportedOperationException e) {

// The task queue does not support removal so the best thing we can do is to just move on and

// hope we will be able to pick-up the task before its completely terminated.

// In worst case we will log on termination.

}

if (reject) {

reject();

}

}

}

if (!addTaskWakesUp && immediate) {

wakeup(inEventLoop);

}

}

The register0 task is first put into taskQueue (addTask ends up in offerTask) to be executed asynchronously:

final boolean offerTask(Runnable task) {

if (isShutdown()) {

reject();

}

return taskQueue.offer(task);

}

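Again, because the caller is the main thread, the if branch is taken and startThread is called to start the event loop's thread.

startThread sets the started state with a CAS and then calls doStartThread: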

private void startThread() {

if (state == ST_NOT_STARTED) {

if (STATE_UPDATER.compareAndSet(this, ST_NOT_STARTED, ST_STARTED)) {

boolean success = false;

try {

doStartThread();

success = true;

} finally {

if (!success) {

STATE_UPDATER.compareAndSet(this, ST_STARTED, ST_NOT_STARTED);

}

}

}

}

}

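The executor (by default the ThreadPerTaskExecutor created in step 1) runs the rest of the work on a new thread, so the main thread returns immediately. Now look at the task that runs inside that thread (the viewpoint switches from the main thread to the executor's thread):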

private void doStartThread() {

assert thread == null;

executor.execute(new Runnable() {

@Override

public void run() {

thread = Thread.currentThread();

if (interrupted) {

thread.interrupt();

}

boolean success = false;

updateLastExecutionTime();

try {

SingleThreadEventExecutor.this.run();

success = true;

}
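The hand-off described so far (queue the task, start the loop thread once with a CAS, let that thread drain the queue) can be modelled with JDK classes alone. This is a minimal sketch under simplifying assumptions: the class LazyEventLoopSketch and its demo() helper are hypothetical, there is no selector, and shutdown handling is left out.

```java
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.atomic.AtomicBoolean;

final class LazyEventLoopSketch {
    private final BlockingQueue<Runnable> taskQueue = new LinkedBlockingQueue<>();
    private final AtomicBoolean started = new AtomicBoolean();
    private volatile Thread thread;

    boolean inEventLoop() {
        return Thread.currentThread() == thread;
    }

    void execute(Runnable task) {
        taskQueue.offer(task); // queue first, like addTask
        // Only the first outside caller wins the CAS and starts the thread.
        if (!inEventLoop() && started.compareAndSet(false, true)) {
            Thread t = new Thread(this::run, "sketch-event-loop");
            t.setDaemon(true);
            t.start();
        }
    }

    private void run() {
        thread = Thread.currentThread(); // remembered so inEventLoop() works
        for (;;) {
            try {
                taskQueue.take().run();
            } catch (InterruptedException e) {
                return;
            }
        }
    }

    // Submits one task from the calling thread and reports where it ran.
    static boolean demo() {
        LazyEventLoopSketch loop = new LazyEventLoopSketch();
        CountDownLatch done = new CountDownLatch(1);
        boolean[] ranOnLoopThread = new boolean[1];
        loop.execute(() -> {
            ranOnLoopThread[0] = loop.inEventLoop();
            done.countDown();
        });
        try {
            return done.await(5, TimeUnit.SECONDS) && ranOnLoopThread[0] && !loop.inEventLoop();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        }
    }

    public static void main(String[] args) {
        System.out.println("task ran on the loop thread: " + demo());
    }
}
```

The caller plays the role of the main thread: it only enqueues the task and returns, while the task itself (register0 in Netty's case) runs on the loop thread.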

We follow the main path, SingleThreadEventExecutor.this.run(), and ignore the other exception handling and state transitions for now.

protected void run() {

int selectCnt = 0;

for (;;) {

try {

int strategy;

try {

strategy = selectStrategy.calculateStrategy(selectNowSupplier, hasTasks());

switch (strategy) {

case SelectStrategy.CONTINUE:

continue;

case SelectStrategy.BUSY_WAIT:

// fall-through to SELECT since the busy-wait is not supported with NIO

case SelectStrategy.SELECT:

long curDeadlineNanos = nextScheduledTaskDeadlineNanos();

if (curDeadlineNanos == -1L) {

curDeadlineNanos = NONE; // nothing on the calendar

}

nextWakeupNanos.set(curDeadlineNanos);

try {

if (!hasTasks()) {

strategy = select(curDeadlineNanos);

}

} finally {

// This update is just to help block unnecessary selector wakeups

// so use of lazySet is ok (no race condition)

nextWakeupNanos.lazySet(AWAKE);

}

// fall through

default:

}

} catch (IOException e) {

// If we receive an IOException here its because the Selector is messed up. Let's rebuild

// the selector and retry. https://github.com/netty/netty/issues/8566

rebuildSelector0();

selectCnt = 0;

handleLoopException(e);

continue;

}

selectCnt++;

cancelledKeys = 0;

needsToSelectAgain = false;

final int ioRatio = this.ioRatio;

boolean ranTasks;

if (ioRatio == 100) {

try {

if (strategy > 0) {

processSelectedKeys();

}

} finally {

// Ensure we always run tasks.

ranTasks = runAllTasks();

}

} else if (strategy > 0) {

final long ioStartTime = System.nanoTime();

try {

processSelectedKeys();

} finally {

// Ensure we always run tasks.

final long ioTime = System.nanoTime() - ioStartTime;

ranTasks = runAllTasks(ioTime * (100 - ioRatio) / ioRatio);

}

} else {

ranTasks = runAllTasks(0); // This will run the minimum number of tasks

}

if (ranTasks || strategy > 0) {

if (selectCnt > MIN_PREMATURE_SELECTOR_RETURNS && logger.isDebugEnabled()) {

logger.debug("Selector.select() returned prematurely {} times in a row for Selector {}.",

selectCnt - 1, selector);

}

selectCnt = 0;

} else if (unexpectedSelectorWakeup(selectCnt)) { // Unexpected wakeup (unusual case)

selectCnt = 0;

}

} catch (CancelledKeyException e) {

// Harmless exception - log anyway

if (logger.isDebugEnabled()) {

logger.debug(CancelledKeyException.class.getSimpleName() + " raised by a Selector {} - JDK bug?",

selector, e);

}

} catch (Throwable t) {

handleLoopException(t);

}

// Always handle shutdown even if the loop processing threw an exception.

try {

if (isShuttingDown()) {

closeAll();

if (confirmShutdown()) {

return;

}

}

} catch (Throwable t) {

handleLoopException(t);

}

}

}

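The thread started by the NioEventLoop runs this logic in an endless for loop. On the first iteration, taskQueue already contains the register0 task added earlier, so hasTasks() returns true. No accept or read/write events are pending on the selector yet (the Netty server has not finished starting), so strategy is 0 and the switch does nothing. ioRatio still has its initial value of 50 and strategy is not greater than 0, so execution falls into the final else branch and calls runAllTasks(0).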
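The middle branch of the loop, which did not run in this first iteration, gives tasks a time budget derived from ioRatio. The arithmetic is small enough to check by hand; the sketch below wraps the same expression in a hypothetical helper, taskBudgetNanos, and excludes ioRatio == 100 because the loop handles that case in its own branch.

```java
final class IoRatioSketch {
    // Same expression as in the run loop: ioTime * (100 - ioRatio) / ioRatio.
    static long taskBudgetNanos(long ioTimeNanos, int ioRatio) {
        if (ioRatio <= 0 || ioRatio >= 100) {
            throw new IllegalArgumentException("ioRatio must be in 1..99 for this sketch: " + ioRatio);
        }
        return ioTimeNanos * (100 - ioRatio) / ioRatio;
    }

    public static void main(String[] args) {
        long ioTime = 1_000_000L; // pretend I/O processing took 1 ms
        for (int ratio : new int[] {20, 50, 80}) {
            System.out.println("ioRatio=" + ratio + " -> task budget " + taskBudgetNanos(ioTime, ratio) + " ns");
        }
    }
}
```

With the default of 50 the tasks get as much time as the I/O just took; a higher ioRatio shrinks the task budget and a lower one enlarges it.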

runAllTasks

This runs the tasks in the task queue. At this point the queue holds a single task, the register0 added earlier:

protected boolean runAllTasks(long timeoutNanos) {

fetchFromScheduledTaskQueue();

Runnable task = pollTask();

if (task == null) {

afterRunningAllTasks();

return false;

}

final long deadline = timeoutNanos > 0 ? ScheduledFutureTask.nanoTime() + timeoutNanos : 0;

long runTasks = 0;

long lastExecutionTime;

for (;;) {

safeExecute(task);

runTasks ++;

// Check timeout every 64 tasks because nanoTime() is relatively expensive.

// XXX: Hard-coded value - will make it configurable if it is really a problem.

if ((runTasks & 0x3F) == 0) {

lastExecutionTime = ScheduledFutureTask.nanoTime();

if (lastExecutionTime >= deadline) {

break;

}

}

task = pollTask();

if (task == null) {

lastExecutionTime = ScheduledFutureTask.nanoTime();

break;

}

}

afterRunningAllTasks();

this.lastExecutionTime = lastExecutionTime;

return true;

}
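One detail of this method is easy to miss: the deadline is only checked after every 64th task, so even a budget of 0 lets up to 64 queued tasks run. The sketch below imitates that loop on a plain queue. RunAllTasksSketch, queueOf and the relative nanoTime() helper are hypothetical names, and scheduled tasks are left out.

```java
import java.util.ArrayDeque;
import java.util.Queue;

final class RunAllTasksSketch {
    private static final long START = System.nanoTime();

    // Time relative to class initialization, so it is never negative.
    static long nanoTime() {
        return System.nanoTime() - START;
    }

    // Runs queued tasks and returns how many were executed.
    static int runAllTasks(Queue<Runnable> queue, long timeoutNanos) {
        Runnable task = queue.poll();
        if (task == null) {
            return 0;
        }
        final long deadline = timeoutNanos > 0 ? nanoTime() + timeoutNanos : 0;
        int runTasks = 0;
        for (;;) {
            task.run();
            runTasks++;
            // The clock is consulted only on every 64th task.
            if ((runTasks & 0x3F) == 0 && nanoTime() >= deadline) {
                break;
            }
            task = queue.poll();
            if (task == null) {
                break;
            }
        }
        return runTasks;
    }

    // Builds a queue of n no-op tasks for experiments.
    static Queue<Runnable> queueOf(int n) {
        Queue<Runnable> q = new ArrayDeque<>();
        for (int i = 0; i < n; i++) {
            q.add(() -> { });
        }
        return q;
    }

    public static void main(String[] args) {
        System.out.println(runAllTasks(queueOf(100), 0));
    }
}
```

This is why the single register0 task still runs during the first loop iteration even though runAllTasks is called with a timeout of 0.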

3.3.2 register0(promise)

The doRegister method registers the ServerSocketChannel with the unwrappedSelector of one of the NioEventLoops in bossGroup:

private void register0(ChannelPromise promise) {

try {

// check if the channel is still open as it could be closed in the mean time when the register

// call was outside of the eventLoop

if (!promise.setUncancellable() || !ensureOpen(promise)) {

return;

}

boolean firstRegistration = neverRegistered;

doRegister();

neverRegistered = false;

registered = true;

// Ensure we call handlerAdded(...) before we actually notify the promise. This is needed as the

// user may already fire events through the pipeline in the ChannelFutureListener.

pipeline.invokeHandlerAddedIfNeeded();

safeSetSuccess(promise);

pipeline.fireChannelRegistered();

// Only fire a channelActive if the channel has never been registered. This prevents firing

// multiple channel actives if the channel is deregistered and re-registered.

if (isActive()) {

if (firstRegistration) {

pipeline.fireChannelActive();

} else if (config().isAutoRead()) {

// This channel was registered before and autoRead() is set. This means we need to begin read

// again so that we process inbound data.

//

// See https://github.com/netty/netty/issues/4805

beginRead();

}

}

} catch (Throwable t) {

// Close the channel directly to avoid FD leak.

closeForcibly();

closeFuture.setClosed();

safeSetFailure(promise, t);

}

}
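doRegister itself is not shown above. From memory of the Netty 4.1 source, it registers the underlying JDK channel with interest ops 0 and the Netty channel as the attachment, and the OP_ACCEPT interest recorded in 3.1 is applied later when reading begins. Taking that as an assumption, the same two-step pattern looks like this with the plain JDK API (the class name NioRegisterSketch and the placeholder attachment are hypothetical):

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.channels.SelectionKey;
import java.nio.channels.Selector;
import java.nio.channels.ServerSocketChannel;

final class NioRegisterSketch {
    // Returns true if the key behaved as described in the comments below.
    static boolean demo() {
        try (Selector selector = Selector.open();
             ServerSocketChannel ch = ServerSocketChannel.open()) {
            ch.configureBlocking(false); // required before registering with a selector
            Object attachment = new Object(); // stands in for the Netty channel

            // Step 1: register without any interest, only to obtain the key.
            SelectionKey key = ch.register(selector, 0, attachment);
            boolean noInterestYet = key.interestOps() == 0;

            // Step 2: later, switch on the interest that was stored at construction time.
            key.interestOps(key.interestOps() | SelectionKey.OP_ACCEPT);

            return noInterestYet
                    && key.interestOps() == SelectionKey.OP_ACCEPT
                    && key.attachment() == attachment;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        System.out.println("registered in two steps: " + demo());
    }
}
```

The attachment is what lets the event loop map a ready key back to its channel, which is the k.attachment() lookup that appears later in processSelectedKeysOptimized.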

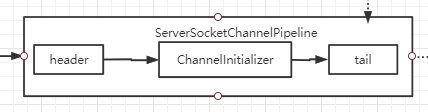

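Next, the pipeline's invokeHandlerAddedIfNeeded method is called. At this point the pipeline looks like the figure above (see the end of 3.2).

Starting from pendingHandlerCallbackHead, the execute method of each node in the linked list is called in turn. The list holds only one node here, because the pipeline contains only one handler (apart from head and tail):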

private void callHandlerAddedForAllHandlers() {

final PendingHandlerCallback pendingHandlerCallbackHead;

synchronized (this) {

assert !registered;

// This Channel itself was registered.

registered = true;

pendingHandlerCallbackHead = this.pendingHandlerCallbackHead;

// Null out so it can be GC'ed.

this.pendingHandlerCallbackHead = null;

}

// This must happen outside of the synchronized(...) block as otherwise handlerAdded(...) may be called while

// holding the lock and so produce a deadlock if handlerAdded(...) will try to add another handler from outside

// the EventLoop.

PendingHandlerCallback task = pendingHandlerCallbackHead;

while (task != null) {

task.execute();

task = task.next;

}

}

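What is pendingHandlerCallbackHead, and where is it initialized?

When the channel was initialized in 3.2, a ChannelInitializer was added to the pipeline. When addLast ran, the channel had not yet been registered with the selector of a NioEventLoop in bossGroup, so the if branch was taken: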

if (!registered) {

newCtx.setAddPending();

callHandlerCallbackLater(newCtx, true);

return this;

}

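This calls callHandlerCallbackLater.

It creates a PendingHandlerAddedTask and appends it to the end of the linked list. This class is a Runnable and wraps a ChannelHandlerContext field.

So at this point the list contains a single PendingHandlerAddedTask, whose ChannelHandlerContext wraps the ChannelInitializer (a ChannelHandler subclass):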

private void callHandlerCallbackLater(AbstractChannelHandlerContext ctx, boolean added) {

assert !registered;

PendingHandlerCallback task = added ? new PendingHandlerAddedTask(ctx) : new PendingHandlerRemovedTask(ctx);

PendingHandlerCallback pending = pendingHandlerCallbackHead;

if (pending == null) {

pendingHandlerCallbackHead = task;

} else {

// Find the tail of the linked-list.

while (pending.next != null) {

pending = pending.next;

}

pending.next = task;

}

}
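The interplay between addLast before registration, the pending list, and the later replay can be condensed into a small model. In the sketch below, PendingCallbackSketch and its members are hypothetical names, and a log entry stands in for the real handlerAdded callback.

```java
import java.util.ArrayList;
import java.util.List;

final class PendingCallbackSketch {
    // Singly linked list node, like PendingHandlerCallback.
    private static final class Pending {
        final Runnable callback;
        Pending next;
        Pending(Runnable callback) { this.callback = callback; }
    }

    final List<String> log = new ArrayList<>();
    private Pending pendingHead;
    private boolean registered;

    void addLast(String handlerName) {
        Runnable handlerAdded = () -> log.add("handlerAdded:" + handlerName);
        if (!registered) {
            callLater(handlerAdded); // not registered yet: remember the callback
            return;
        }
        handlerAdded.run();          // already registered: fire immediately
    }

    private void callLater(Runnable callback) {
        Pending task = new Pending(callback);
        if (pendingHead == null) {
            pendingHead = task;
            return;
        }
        Pending pending = pendingHead;
        while (pending.next != null) { // find the tail of the list
            pending = pending.next;
        }
        pending.next = task;
    }

    // Models callHandlerAddedForAllHandlers: replay in insertion order, once.
    void register() {
        registered = true;
        Pending task = pendingHead;
        pendingHead = null;
        while (task != null) {
            task.callback.run();
            task = task.next;
        }
    }

    public static void main(String[] args) {
        PendingCallbackSketch p = new PendingCallbackSketch();
        p.addLast("initializer");
        p.register();
        System.out.println(p.log);
    }
}
```

Appending at the tail preserves insertion order, so handlers receive handlerAdded in the order in which they were added to the pipeline.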

The task.execute() call above therefore ends up in PendingHandlerAddedTask.execute:

@Override

void execute() {

EventExecutor executor = ctx.executor();

if (executor.inEventLoop()) {

callHandlerAdded0(ctx);

} else {

try {

executor.execute(this);

} catch (RejectedExecutionException e) {

if (logger.isWarnEnabled()) {

logger.warn(

"Can't invoke handlerAdded() as the EventExecutor {} rejected it, removing handler {}.",

executor, ctx.name(), e);

}

atomicRemoveFromHandlerList(ctx);

ctx.setRemoved();

}

}

}

Whichever thread executes it, the call ends up in callHandlerAdded0 => ctx.callHandlerAdded() => handler().handlerAdded(this).

Because the handler here is a ChannelInitializer, the method entered is:

public void handlerAdded(ChannelHandlerContext ctx) throws Exception {

if (ctx.channel().isRegistered()) {

// This should always be true with our current DefaultChannelPipeline implementation.

// The good thing about calling initChannel(...) in handlerAdded(...) is that there will be no ordering

// surprises if a ChannelInitializer will add another ChannelInitializer. This is as all handlers

// will be added in the expected order.

if (initChannel(ctx)) {

// We are done with init the Channel, removing the initializer now.

removeState(ctx);

}

}

}

The ServerSocketChannel has been registered by now, so initChannel is executed. Its implementation:

private boolean initChannel(ChannelHandlerContext ctx) throws Exception {

if (initMap.add(ctx)) { // Guard against re-entrance.

try {

initChannel((C) ctx.channel());

} catch (Throwable cause) {

// Explicitly call exceptionCaught(...) as we removed the handler before calling initChannel(...).

// We do so to prevent multiple calls to initChannel(...).

exceptionCaught(ctx, cause);

} finally {

ChannelPipeline pipeline = ctx.pipeline();

if (pipeline.context(this) != null) {

pipeline.remove(this);

}

}

return true;

}

return false;

}

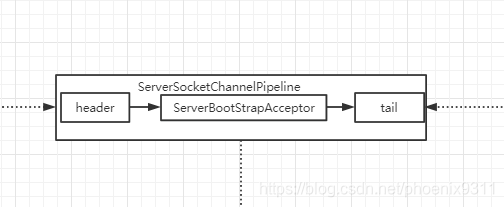

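This calls the ChannelInitializer's initChannel method. As the channel initialization in 3.2 showed, that method adds a ServerBootstrapAcceptor to the pipeline (through a task submitted to the event loop), and in the finally block the initializer removes itself from the pipeline. The pipeline now looks like the figure above.

Back in register0, the channelRegistered callback of the handlers in the pipeline is invoked, and if the channel is active, channelActive is fired. The only handler here (the ServerBootstrapAcceptor) has no specific implementation of these callbacks, so we do not go deeper.

pipeline.fireChannelRegistered();

The loop inside SingleThreadEventExecutor.this.run() is still running. The server has started successfully but no client has connected yet, so the method blocks in selector.select, waiting for clients to connect and send data. Next, we start a client.

When the client starts, the server receives a connection request and handles it in processSelectedKeys: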

private void processSelectedKeys() {

if (selectedKeys != null) {

processSelectedKeysOptimized();

} else {

processSelectedKeysPlain(selector.selectedKeys());

}

}

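selectedKeys is non-null whenever Netty managed to install its optimized key set when the selector was opened, which is the usual case. processSelectedKeysOptimized then runs, and its for loop handles each ready key (accept, connect, or read/write) in turn: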

private void processSelectedKeysOptimized() {

for (int i = 0; i < selectedKeys.size; ++i) {

final SelectionKey k = selectedKeys.keys[i];

// null out entry in the array to allow to have it GC'ed once the Channel close

// See https://github.com/netty/netty/issues/2363

selectedKeys.keys[i] = null;

final Object a = k.attachment();

if (a instanceof AbstractNioChannel) {

processSelectedKey(k, (AbstractNioChannel) a);

} else {

@SuppressWarnings("unchecked")

NioTask<SelectableChannel> task = (NioTask<SelectableChannel>) a;

processSelectedKey(k, task);

}

if (needsToSelectAgain) {

// null out entries in the array to allow to have it GC'ed once the Channel close

// See https://github.com/netty/netty/issues/2363

selectedKeys.reset(i + 1);

selectAgain();

i = -1;

}

}

}

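Next comes processSelectedKey. For a connect event it finishes the connection and establishes the SocketChannel; for a write event it flushes the buffered data; for a read or accept event it calls unsafe.read():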

private void processSelectedKey(SelectionKey k, AbstractNioChannel ch) {

final AbstractNioChannel.NioUnsafe unsafe = ch.unsafe();

if (!k.isValid()) {

final EventLoop eventLoop;

try {

eventLoop = ch.eventLoop();

} catch (Throwable ignored) {

return;

}

if (eventLoop == this) {

unsafe.close(unsafe.voidPromise());

}

return;

}

try {

int readyOps = k.readyOps();

if ((readyOps & SelectionKey.OP_CONNECT) != 0) {

int ops = k.interestOps();

ops &= ~SelectionKey.OP_CONNECT;

k.interestOps(ops);

unsafe.finishConnect();

}

if ((readyOps & SelectionKey.OP_WRITE) != 0) {

ch.unsafe().forceFlush();

}

if ((readyOps & (SelectionKey.OP_READ | SelectionKey.OP_ACCEPT)) != 0 || readyOps == 0) {

unsafe.read();

}

} catch (CancelledKeyException ignored) {

unsafe.close(unsafe.voidPromise());

}

}
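The bit tests on readyOps decide which of the three actions run, and more than one can apply to the same key. The sketch below isolates that decision so it can be tried with different masks; ReadyOpsSketch and dispatch are hypothetical names, and the returned strings merely name the unsafe methods that the real code would call.

```java
import java.nio.channels.SelectionKey;
import java.util.ArrayList;
import java.util.List;

final class ReadyOpsSketch {
    // Returns, in order, the actions processSelectedKey would take for this mask.
    static List<String> dispatch(int readyOps) {
        List<String> actions = new ArrayList<>();
        if ((readyOps & SelectionKey.OP_CONNECT) != 0) {
            actions.add("finishConnect");
        }
        if ((readyOps & SelectionKey.OP_WRITE) != 0) {
            actions.add("forceFlush");
        }
        // readyOps == 0 is also routed to read().
        if ((readyOps & (SelectionKey.OP_READ | SelectionKey.OP_ACCEPT)) != 0 || readyOps == 0) {
            actions.add("read");
        }
        return actions;
    }

    public static void main(String[] args) {
        System.out.println("OP_ACCEPT       -> " + dispatch(SelectionKey.OP_ACCEPT));
        System.out.println("OP_READ|OP_WRITE -> " + dispatch(SelectionKey.OP_READ | SelectionKey.OP_WRITE));
    }
}
```

For the server channel only OP_ACCEPT is ever ready, so the single action is read(), which is where the walkthrough continues.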

Our channel here is the serverSocketChannel, so we focus on read and accept events and step into unsafe.read:

public void read() {

assert eventLoop().inEventLoop();

final ChannelConfig config = config();

final ChannelPipeline pipeline = pipeline();

final RecvByteBufAllocator.Handle allocHandle = unsafe().recvBufAllocHandle();

allocHandle.reset(config);

boolean closed = false;

Throwable exception = null;

try {

try {

do {

int localRead = doReadMessages(readBuf);

if (localRead == 0) {

break;

}

if (localRead < 0) {

closed = true;

break;

}

allocHandle.incMessagesRead(localRead);

} while (allocHandle.continueReading());

} catch (Throwable t) {

exception = t;

}

int size = readBuf.size();

for (int i = 0; i < size; i ++) {

readPending = false;

pipeline.fireChannelRead(readBuf.get(i));

}

readBuf.clear();

allocHandle.readComplete();

pipeline.fireChannelReadComplete();

if (exception != null) {

closed = closeOnReadError(exception);

pipeline.fireExceptionCaught(exception);

}

if (closed) {

inputShutdown = true;

if (isOpen()) {

close(voidPromise());

}

}

} finally {

// Check if there is a readPending which was not processed yet.

// This could be for two reasons:

// * The user called Channel.read() or ChannelHandlerContext.read() in channelRead(...) method

// * The user called Channel.read() or ChannelHandlerContext.read() in channelReadComplete(...) method

//

// See https://github.com/netty/netty/issues/2254

if (!readPending && !config.isAutoRead()) {

removeReadOp();

}

}

}

First look at doReadMessages. It accepts the client connection to obtain a SocketChannel, wraps it in a NioSocketChannel, and adds that object to buf:

protected int doReadMessages(List<Object> buf) throws Exception {

SocketChannel ch = SocketUtils.accept(javaChannel());

try {

if (ch != null) {

buf.add(new NioSocketChannel(this, ch));

return 1;

}

} catch (Throwable t) {

logger.warn("Failed to create a new channel from an accepted socket.", t);

try {

ch.close();

} catch (Throwable t2) {

logger.warn("Failed to close a socket.", t2);

}

}

return 0;

}

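A NioSocketChannel is initialized much like a NioServerSocketChannel, so it too has a freshly initialized pipeline.

Next the pipeline's fireChannelRead method runs. Note that this is the serverSocketChannel's pipeline, and the argument readBuf.get(i) is the NioSocketChannel for the connection just accepted. The pipeline contains only a ServerBootstrapAcceptor at this point, so we go straight to its channelRead method: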

public void channelRead(ChannelHandlerContext ctx, Object msg) {

final Channel child = (Channel) msg;

child.pipeline().addLast(childHandler);

setChannelOptions(child, childOptions, logger);

setAttributes(child, childAttrs);

try {

childGroup.register(child).addListener(new ChannelFutureListener() {

@Override

public void operationComplete(ChannelFuture future) throws Exception {

if (!future.isSuccess()) {

forceClose(child, future.cause());

}

}

});

} catch (Throwable t) {

forceClose(child, t);

}

}

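It first adds childHandler to the NioSocketChannel's pipeline. This is the ChannelInitializer we defined at the very beginning, which sets up the business-logic handlers. It then sets the options and attrs.

The key point is that the NioSocketChannel is registered with childGroup (that is, workerGroup). Registration works the same way as before, only with a different group, so it is not repeated here.

Finally, when a client writes data to the server, the selector of a NioEventLoop in workerGroup detects it and unsafe.read is called as before. This time the channel is a NioSocketChannel, so the unsafe object is a NioByteUnsafe and the logic becomes the following: it fires channelRead and channelReadComplete on the handlers in the pipeline, which finally invokes the business logic we wrote: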

do {

byteBuf = allocHandle.allocate(allocator);

allocHandle.lastBytesRead(doReadBytes(byteBuf));

if (allocHandle.lastBytesRead() <= 0) {

// nothing was read. release the buffer.

byteBuf.release();

byteBuf = null;

close = allocHandle.lastBytesRead() < 0;

if (close) {

// There is nothing left to read as we received an EOF.

readPending = false;

}

break;

}

allocHandle.incMessagesRead(1);

readPending = false;

pipeline.fireChannelRead(byteBuf);

byteBuf = null;

} while (allocHandle.continueReading());

allocHandle.readComplete();

pipeline.fireChannelReadComplete();

if (close) {

closeOnRead(pipeline);

}
