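A note up front: OPEN_MR works on the Shujia (数加) platform. I have not yet managed to get results out of ODPS_MR, so this post first demonstrates basic usage of OPEN_MR.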

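First, create a Hadoop project. You can follow the Yushanfang (御膳房) documentation to set up the environment (the only goal is to get the code to compile). My own Yushanfang project is attached further down; once Maven is configured, it can be imported directly into Eclipse.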

Set up the environment with the Yushanfang documentation:

http://setting.tenant.yushanfang.com/portal/help/doc.html?spm=0.0.0.0.LBzMEE&file=MrUdfLocalDev

Download the source code:

http://download.csdn.net/detail/q383700092/9551145

Sample code:

    package hadoop.TianChiMapreduce;

    import java.io.IOException;
    import java.util.Iterator;

    import com.aliyun.odps.data.Record;
    import com.aliyun.odps.data.TableInfo;
    import com.aliyun.odps.mapred.JobClient;
    import com.aliyun.odps.mapred.MapperBase;
    import com.aliyun.odps.mapred.ReducerBase;
    import com.aliyun.odps.mapred.TaskContext;
    import com.aliyun.odps.mapred.conf.JobConf;
    import com.aliyun.odps.mapred.utils.InputUtils;
    import com.aliyun.odps.mapred.utils.OutputUtils;
    import com.aliyun.odps.mapred.utils.SchemaUtils;

    public class WordCount {

        /**
         * A mapper class that emits (word, 1) for every column value of each input record.
         **/
        public static class TokenizerMapper extends MapperBase {
            private Record word;
            private Record one;

            @Override
            public void setup(TaskContext context) throws IOException {
                // Reuse one key record and one value record for all map() calls.
                word = context.createMapOutputKeyRecord();
                one = context.createMapOutputValueRecord();
                one.set(new Object[] { 1L });
                System.out.println("TaskID:" + context.getTaskID().toString());
            }

            @Override
            public void map(long recordNum, Record record, TaskContext context)
                    throws IOException {
                for (int i = 0; i < record.getColumnCount(); i++) {
                    // Read column i; reading column 0 on every pass would count
                    // the first column once per column and ignore the others.
                    word.set(new Object[] { record.get(i).toString() });
                    context.write(word, one);
                }
            }
        }

        /**
         * A combiner class that combines map output by summing the counts.
         **/
        public static class SumCombiner extends ReducerBase {
            private Record count;

            @Override
            public void setup(TaskContext context) throws IOException {
                // The combiner emits the same value schema as the mapper.
                count = context.createMapOutputValueRecord();
            }

            @Override
            public void reduce(Record key, Iterator<Record> values, TaskContext context)
                    throws IOException {
                long c = 0;
                while (values.hasNext()) {
                    Record val = values.next();
                    c += (Long) val.get(0);
                }
                count.set(0, c);
                context.write(key, count);
            }
        }

        /**
         * A reducer class that just emits the sum of the input values.
         **/
        public static class SumReducer extends ReducerBase {
            private Record result = null;

            @Override
            public void setup(TaskContext context) throws IOException {
                // The output record follows the schema of the output table.
                result = context.createOutputRecord();
            }

            @Override
            public void reduce(Record key, Iterator<Record> values, TaskContext context)
                    throws IOException {
                long count = 0;
                while (values.hasNext()) {
                    Record val = values.next();
                    count += (Long) val.get(0);
                }
                // Column 0 holds the word, column 1 holds its total count.
                result.set(0, key.get(0));
                result.set(1, count);
                context.write(result);
            }
        }

        public static void main(String[] args) throws Exception {
            if (args.length != 2) {
                System.err.println("Usage: WordCount <in_table> <out_table>");
                System.exit(2);
            }

            JobConf job = new JobConf();
            job.setMapperClass(TokenizerMapper.class);
            job.setCombinerClass(SumCombiner.class);
            job.setReducerClass(SumReducer.class);

            // Schemas of the intermediate key/value records passed from map to reduce.
            job.setMapOutputKeySchema(SchemaUtils.fromString("word:string"));
            job.setMapOutputValueSchema(SchemaUtils.fromString("count:bigint"));

            // args[0] is the input table, args[1] is the output table.
            InputUtils.addTable(TableInfo.builder().tableName(args[0]).build(), job);
            OutputUtils.addTable(TableInfo.builder().tableName(args[1]).build(), job);

            JobClient.runJob(job);
        }
    }
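To check the counting logic before uploading anything, the map, combine, and reduce flow can be imitated with plain Java collections. This is only a sketch: `LocalWordCount` and its methods are hypothetical names that are not part of the ODPS SDK, input splits are modelled as lists of string arrays, and every column value is assumed to count as one word.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical local stand-in for the WordCount job; it does not use the ODPS SDK.
class LocalWordCount {

    // Map + combine for one input split: each column value counts as one word,
    // and the counts are summed locally, as a combiner would do per mapper.
    static Map<String, Long> mapAndCombine(List<String[]> split) {
        Map<String, Long> partial = new TreeMap<>();
        for (String[] row : split) {
            for (String column : row) {
                partial.merge(column, 1L, Long::sum);
            }
        }
        return partial;
    }

    // Reduce: sum the partial counts produced for every split.
    static Map<String, Long> reduce(List<Map<String, Long>> partials) {
        Map<String, Long> totals = new TreeMap<>();
        for (Map<String, Long> partial : partials) {
            for (Map.Entry<String, Long> entry : partial.entrySet()) {
                totals.merge(entry.getKey(), entry.getValue(), Long::sum);
            }
        }
        return totals;
    }

    // Run the whole flow over all splits.
    static Map<String, Long> count(List<List<String[]>> splits) {
        List<Map<String, Long>> partials = new ArrayList<>();
        for (List<String[]> split : splits) {
            partials.add(mapAndCombine(split));
        }
        return reduce(partials);
    }

    public static void main(String[] args) {
        List<String[]> first = new ArrayList<>();
        first.add(new String[] { "hello", "odps" });
        first.add(new String[] { "hello", "world" });

        List<String[]> second = new ArrayList<>();
        second.add(new String[] { "odps", "hello" });

        List<List<String[]>> splits = new ArrayList<>();
        splits.add(first);
        splits.add(second);

        // prints {hello=3, odps=2, world=1}
        System.out.println(count(splits));
    }
}
```

Because addition is associative, summing per split first and then summing the partial results gives the same totals as summing everything at once, which is why the same summing logic can serve as both combiner and reducer.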

The project structure is shown below.

Only the source file highlighted in red needs to be kept; the rest is not used.

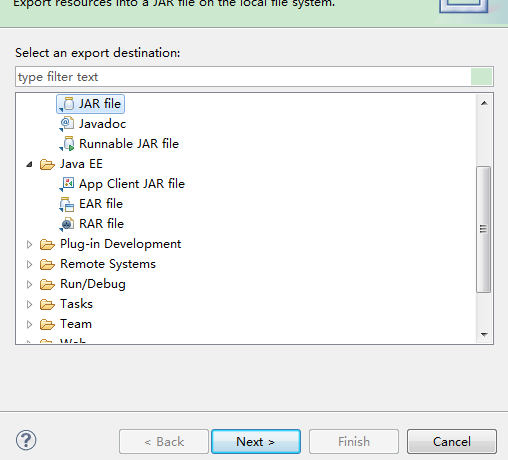

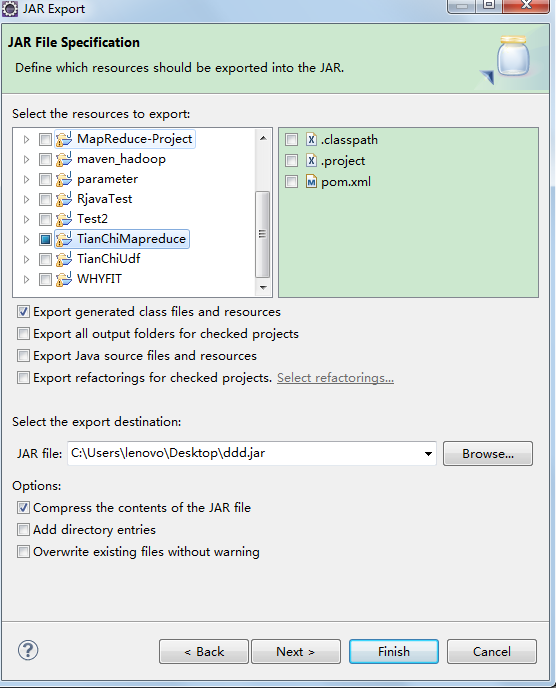

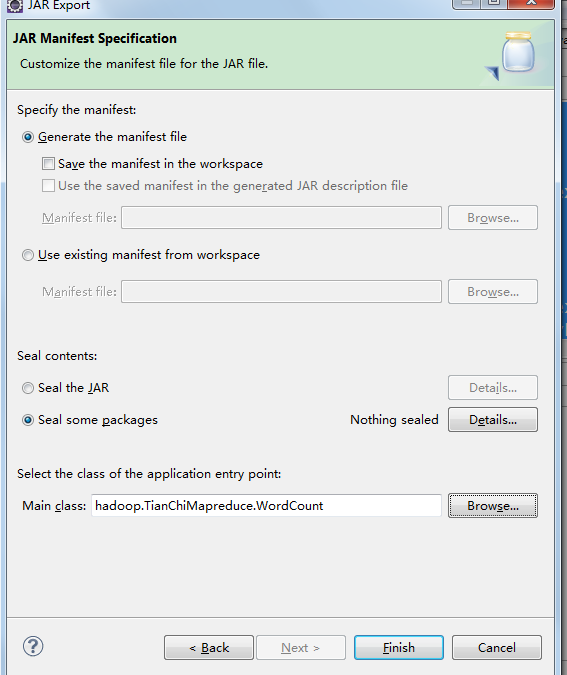

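Right-click WordCount.java and choose Export to package it as a JAR.

Open the Shujia platform and click to upload the JAR as a resource.

Drag the OPEN_MR component onto the blank canvas on the right.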

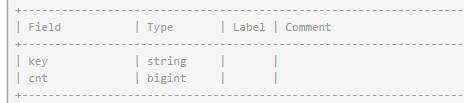

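Double-click the component and fill it in as shown: the first two fields are the JAR you want to run, and mapper and reducer are the map class and reduce class to execute. The output table must be created in advance, and key and value correspond to the columns of the output table.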

SQL to create the table: create table if not exists wcout1 (key string, cnt bigint);
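The mapper and reducer in this example are nested classes, and Java has two spellings for a nested class name. The sketch below prints both for a hypothetical stand-in class, `WordCountNames`. Which spelling the OPEN_MR form expects is not stated here, so compare against the screenshot above before filling in the fields.

```java
// Hypothetical stand-in for WordCount; the nested classes are empty because
// only their names matter here.
class WordCountNames {
    static class TokenizerMapper {}
    static class SumReducer {}

    public static void main(String[] args) {
        // Binary name: the nested class is joined to the outer class with '$'.
        System.out.println(TokenizerMapper.class.getName());
        // Canonical name: the form written in source code, joined with '.'.
        System.out.println(TokenizerMapper.class.getCanonicalName());
        System.out.println(SumReducer.class.getName());
        System.out.println(SumReducer.class.getCanonicalName());
    }
}
```

For the real class in package `hadoop.TianChiMapreduce`, the binary name of the mapper is `hadoop.TianChiMapreduce.WordCount$TokenizerMapper` and the canonical name is `hadoop.TianChiMapreduce.WordCount.TokenizerMapper`.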

Save and run.

Schema and results of wcout1
