#!/usr/bin/env python
# coding=utf-8

import tensorflow as tf
import input_mnist

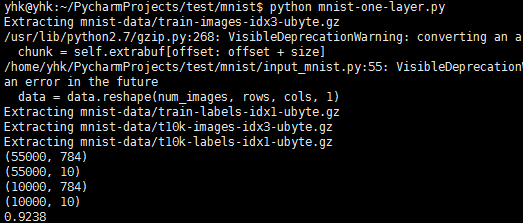

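# Load the MNIST data set with one-hot encoded labels
mnist = input_mnist.read_data_sets("mnist-data/", one_hot=True)

# Print the shapes of the training and test arrays
print(mnist.train.images.shape)
print(mnist.train.labels.shape)
print(mnist.test.images.shape)
print(mnist.test.labels.shape)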

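# Create the model: a single softmax layer, y = softmax(x * W + b)
W = tf.Variable(tf.zeros([784, 10]))  # weights: 784 input pixels -> 10 classes
b = tf.Variable(tf.zeros([10]))       # one bias per class
x = tf.placeholder(tf.float32, [None, 784])  # batch of flattened 28x28 images
y = tf.nn.softmax(tf.matmul(x, W) + b)       # predicted class probabilities
y_ = tf.placeholder(tf.float32, [None, 10])  # one-hot ground-truth labels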

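# Define loss and optimizer
# Cross-entropy: -sum(y_ * log(y)) per example, averaged over the batch
cross_entropy = tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(y), reduction_indices=[1]))
train_step = tf.train.GradientDescentOptimizer(0.5).minimize(cross_entropy)

# Initialize all variables and start a session
# (later TensorFlow 1.x releases deprecate this call in favor of
# tf.global_variables_initializer())
init = tf.initialize_all_variables()
sess = tf.Session()
sess.run(init)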

# Train: 10000 steps of gradient descent on mini-batches of 100 examples
for i in range(10000):
    batch_xs, batch_ys = mnist.train.next_batch(100)
    sess.run(train_step, feed_dict={x: batch_xs, y_: batch_ys})

# Test trained model
# A prediction is correct when the most probable class matches the label
correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
print(sess.run(accuracy, feed_dict={x: mnist.test.images, y_: mnist.test.labels}))
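
# For reference: a minimal NumPy sketch of what the softmax model and the
# cross-entropy loss above compute, worked on a toy batch. The helper names
# softmax_np and cross_entropy_np are hypothetical illustrations, not
# TensorFlow APIs, and the max-subtraction step is an added stability trick
# that the TensorFlow code above does not perform explicitly.
import numpy as np


def softmax_np(logits):
    # Subtract the row-wise max before exponentiating for numerical stability.
    shifted = logits - np.max(logits, axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / np.sum(exp, axis=1, keepdims=True)


def cross_entropy_np(probs, one_hot_labels):
    # Mean over the batch of -sum(y_ * log(y)) per example.
    return np.mean(-np.sum(one_hot_labels * np.log(probs), axis=1))


# With all-zero weights and biases (the initial W and b), every logit is 0,
# so each of the 10 classes gets probability 1/10 and the loss is log(10).
toy_probs = softmax_np(np.zeros((2, 10)))
toy_labels = np.eye(10)[[3, 7]]  # two one-hot labels, classes 3 and 7
toy_loss = cross_entropy_np(toy_probs, toy_labels)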
