Deep learning practice: code and comments that record every step.

1. Preparation

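import numpy as np  # used for np.argmax in step 10
import tensorflow as tf
import matplotlib.pyplot as plt
from tensorflow.keras import datasets, layers, models

TensorFlow 2.7 and matplotlib must be installed beforehand.

The code was run in the Heywhale community (和鲸社区) workbench.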

2. Loading the dataset

(train_data, train_labels), (test_data, test_labels) = datasets.mnist.load_data("/home/mw/input/mnist2539/mnist.npz")

3. Data visualization

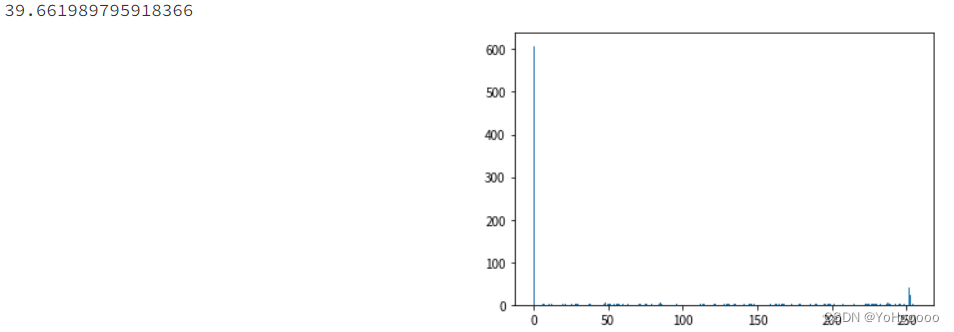

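The dataset consists of images of handwritten digits, so here we plot the grayscale histogram of one of them. The main purpose is to practice data visualization with matplotlib. The printed number is the mean gray level of the image, and the plot is its grayscale histogram.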

# Sum all 28x28 pixel values of the second training image
avg_image = 0.0
for i in range(0, 28):
    for j in range(0, 28):
        avg_image += train_data[1][i][j]
# Mean gray level of the image
print(avg_image / (28 * 28))
# plt.imshow(train_data[1], cmap=plt.cm.binary)
# Histogram of the flattened image with 256 bins
plt.hist(train_data[1].ravel(), 256)
plt.show()

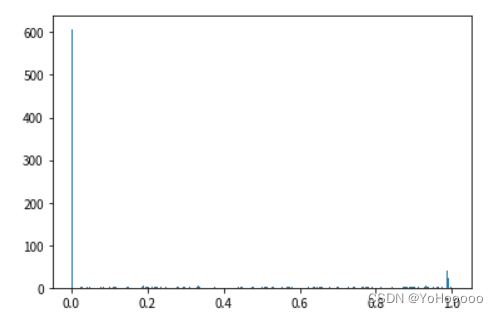
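The nested loops above can also be written as a single NumPy reduction. The following is a minimal sketch, not part of the original notebook: it uses a synthetic 28×28 `uint8` array named `image` (a made-up stand-in for `train_data[1]`) so that it runs without the dataset, and it compares the loop result with `ndarray.mean()`.

```python
import numpy as np

# Hypothetical stand-in for train_data[1]: a 28x28 grayscale image (uint8, 0-255).
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(28, 28), dtype=np.uint8)

# Explicit double loop, as in the code above.
loop_sum = 0.0
for i in range(28):
    for j in range(28):
        loop_sum += image[i][j]
loop_mean = loop_sum / (28 * 28)

# Equivalent vectorized form: NumPy averages over all 784 pixels at once.
vector_mean = image.mean()

print(loop_mean, vector_mean)
```

With the real data, the same idea would read `train_data[1].mean()`.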

4. Data normalization

As the figure shows, after normalization all pixel values lie in the range 0 to 1.

# Scale pixel values from 0-255 to 0-1
train_data = train_data / 255.0
test_data = test_data / 255.0
avg_image = 0.0
for i in range(0, 28):
    for j in range(0, 28):
        avg_image += train_data[1][i][j]
        # print(train_data[1][i][j])
# Mean of the normalized image (note the parentheses around 28*28)
print(avg_image / (28 * 28))
# plt.subplot(1,2,1)
# plt.imshow(train_data[1], cmap=plt.cm.binary)
# plt.subplot(1,2,2)
plt.hist(train_data[1].ravel(), 256)
plt.show()

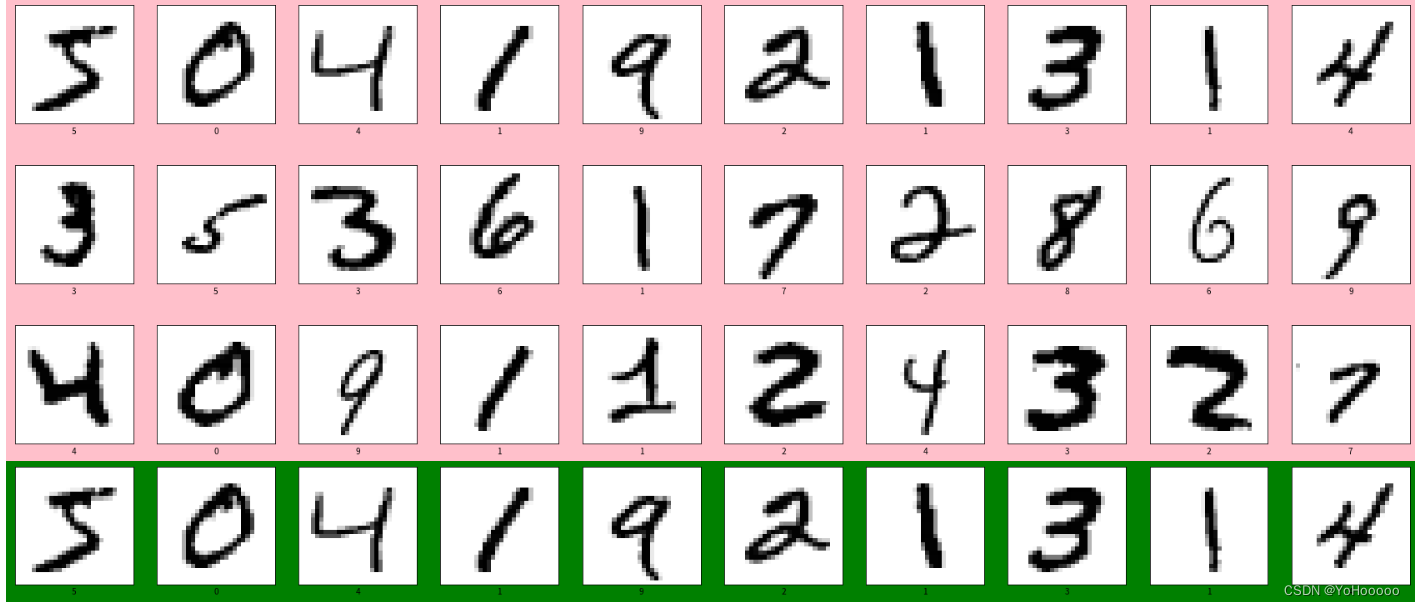
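To make the effect of the division concrete, here is a small sketch on a made-up `uint8` array (`raw` is a hypothetical stand-in for the image data, not taken from MNIST). It shows that dividing by `255.0` converts the data to floating point, maps it into the range 0 to 1, and scales the mean by the same factor.

```python
import numpy as np

# Hypothetical stand-in for a batch of raw images: uint8 values in 0..255.
raw = np.array([[0, 51, 255], [102, 204, 153]], dtype=np.uint8)

# Dividing by a float converts the array to float64 and rescales it to [0, 1].
scaled = raw / 255.0

print(scaled.dtype, scaled.min(), scaled.max())
# The mean is rescaled by the same factor of 1/255.
print(raw.mean() / 255.0, scaled.mean())
```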

5. Displaying training images

In plt.figure(1,figsize=(30,10),facecolor='pink'), figure creates the canvas, figsize sets its size, and facecolor sets its background color. The code below creates two figures.

# Figure 1: the first 30 training images in a 3x10 grid
plt.figure(1, figsize=(30, 10), facecolor='pink')
for i in range(30):
    plt.subplot(3, 10, i + 1)
    plt.xticks([])
    plt.yticks([])
    plt.grid(False)
    plt.imshow(train_data[i], cmap=plt.cm.binary)
    plt.xlabel(train_labels[i])
# Figure 2: the first 10 training images (only the first row of the grid is filled)
plt.figure(2, figsize=(30, 10), facecolor='green')
for i in range(10):
    plt.subplot(3, 10, i + 1)
    plt.xticks([])
    plt.yticks([])
    plt.grid(False)
    plt.imshow(train_data[i], cmap=plt.cm.binary)
    plt.xlabel(train_labels[i])
plt.show()

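6. Data preparation

So that the convolutional layers can consume the images, each array is reshaped from 3 to 4 dimensions by adding a trailing channel axis of size 1 (MNIST images are grayscale).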

print(train_data.shape, test_data.shape)
train_data = train_data.reshape((60000, 28, 28, 1))
test_data = test_data.reshape((10000, 28, 28, 1))
print(train_data.shape, test_data.shape)

(60000, 28, 28) (10000, 28, 28)
(60000, 28, 28, 1) (10000, 28, 28, 1)

The output is shown above.
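The reshape above hard-codes the sample counts 60000 and 10000. As an alternative sketch (not the original author's code), the channel axis can be added without knowing the number of samples. The array `images` below is a small hypothetical stand-in for the real data.

```python
import numpy as np

# Hypothetical stand-in for the image array: 5 images of 28x28 pixels.
images = np.zeros((5, 28, 28), dtype=np.float64)

# Append a trailing channel axis of length 1; the sample count is not hard-coded.
images_4d = images[..., np.newaxis]

# Equivalent reshape that lets NumPy infer the number of samples with -1.
images_4d_alt = images.reshape((-1, 28, 28, 1))

print(images.shape, images_4d.shape, images_4d_alt.shape)
```

Both forms keep the pixel values unchanged and only alter the shape.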

7. Building the model

Here we directly use the convolution, pooling, and fully connected (dense) layers that ship with TensorFlow.

model = models.Sequential([
    layers.Conv2D(32, (3, 3), activation='relu', input_shape=(28, 28, 1)),
    layers.MaxPooling2D((2, 2)),
    layers.Conv2D(64, (3, 3), activation='relu'),
    layers.MaxPooling2D((2, 2)),
    layers.Flatten(),
    layers.Dense(64, activation='relu'),
    layers.Dense(10)  # raw scores (logits) for the 10 digit classes
])
model.summary()

Model: "sequential"
_________________________________________________________________
Layer (type)                 Output Shape              Param #
=================================================================
conv2d (Conv2D)              (None, 26, 26, 32)        320
_________________________________________________________________
max_pooling2d (MaxPooling2D) (None, 13, 13, 32)        0
_________________________________________________________________
conv2d_1 (Conv2D)            (None, 11, 11, 64)        18496
_________________________________________________________________
max_pooling2d_1 (MaxPooling2 (None, 5, 5, 64)          0
_________________________________________________________________
flatten (Flatten)            (None, 1600)              0
_________________________________________________________________
dense (Dense)                (None, 64)                102464
_________________________________________________________________
dense_1 (Dense)              (None, 10)                650
=================================================================
Total params: 121,930
Trainable params: 121,930
Non-trainable params: 0
_________________________________________________________________

The result is shown above.
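The output shapes and parameter counts in the summary can be checked by hand. The sketch below reproduces the arithmetic in plain Python, assuming the Keras defaults used by the model (unpadded "valid" convolutions with stride 1, and pooling with a stride equal to the pool size). The helper function names are made up for this illustration.

```python
def conv_output_size(size, kernel):
    """Spatial size after a 'valid' convolution with stride 1."""
    return size - kernel + 1

def pool_output_size(size, pool):
    """Spatial size after non-overlapping max pooling (floor division)."""
    return size // pool

def conv_params(kernel, in_channels, filters):
    """Weights (kernel*kernel*in_channels per filter) plus one bias per filter."""
    return (kernel * kernel * in_channels + 1) * filters

def dense_params(inputs, units):
    """One weight per input-unit pair plus one bias per unit."""
    return (inputs + 1) * units

size = 28
size = conv_output_size(size, 3)   # 26
size = pool_output_size(size, 2)   # 13
size = conv_output_size(size, 3)   # 11
size = pool_output_size(size, 2)   # 5
flat = size * size * 64            # 1600

params = [
    conv_params(3, 1, 32),    # conv2d: 320
    conv_params(3, 32, 64),   # conv2d_1: 18496
    dense_params(flat, 64),   # dense: 102464
    dense_params(64, 10),     # dense_1: 650
]
total = sum(params)
print(flat, params, total)
```

The total of 121930 matches the "Total params" line of the summary.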

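8. Model configuration and training

The optimizer argument selects the optimization algorithm; here it is Adam, which uses momentum-style moving averages of the gradients. For the loss we use TensorFlow's built-in sparse categorical cross-entropy. Because the final Dense(10) layer outputs raw scores rather than softmax probabilities, from_logits must be set to True.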
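To illustrate what a sparse categorical cross-entropy computes from logits, here is a plain-Python sketch for a single sample. It is a conceptual illustration only, not the Keras implementation; the function names and the example logits are made up.

```python
import math

def softmax(logits):
    """Convert raw scores (logits) to probabilities that sum to 1."""
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def sparse_cross_entropy_from_logits(logits, label):
    """Cross-entropy for one sample, given raw logits and an integer class label."""
    return -math.log(softmax(logits)[label])

# Hypothetical logits for a 3-class problem; the true class is index 2.
logits = [1.0, 2.0, 3.0]
loss = sparse_cross_entropy_from_logits(logits, 2)
print(softmax(logits), loss)
```

The label is an integer class index rather than a one-hot vector, which is why the "sparse" variant of the loss fits the MNIST labels directly.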

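model.compile(optimizer='adam',
              # from_logits must be the boolean True, not a string
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
              metrics=['accuracy'])
# Train for 20 epochs and evaluate on the test set after each epoch
history = model.fit(train_data, train_labels,
                    epochs=20,
                    validation_data=(test_data, test_labels))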

Epoch 1/20
1875/1875 [==============================] - 70s 37ms/step - loss: 0.1370 - accuracy: 0.9580 - val_loss: 0.0446 - val_accuracy: 0.9853
Epoch 2/20
1875/1875 [==============================] - 67s 36ms/step - loss: 0.0060 - accuracy: 0.9981 - val_loss: 0.0463 - val_accuracy: 0.9891
Epoch 12/20
1875/1875 [==============================] - 67s 36ms/step - loss: 0.0053 - accuracy: 0.9981 - val_loss: 0.0356 - val_accuracy: 0.9913
Epoch 13/20
1875/1875 [==============================] - 67s 36ms/step - loss: 0.0049 - accuracy: 0.9983 - val_loss: 0.0320 - val_accuracy: 0.9914
Epoch 14/20
1875/1875 [==============================] - 67s 36ms/step - loss: 0.0038 - accuracy: 0.9988 - val_loss: 0.0434 - val_accuracy: 0.9913
Epoch 15/20
1875/1875 [==============================] - 67s 36ms/step - loss: 0.0046 - accuracy: 0.9986 - val_loss: 0.0333 - val_accuracy: 0.9919
Epoch 16/20
1875/1875 [==============================] - 67s 36ms/step - loss: 0.0034 - accuracy: 0.9988 - val_loss: 0.0478 - val_accuracy: 0.9903
Epoch 17/20
1875/1875 [==============================] - 67s 36ms/step - loss: 0.0036 - accuracy: 0.9989 - val_loss: 0.0454 - val_accuracy: 0.9911
Epoch 18/20
1875/1875 [==============================] - 66s 35ms/step - loss: 0.0044 - accuracy: 0.9984 - val_loss: 0.0537 - val_accuracy: 0.9901
Epoch 19/20
1875/1875 [==============================] - 66s 35ms/step - loss: 0.0032 - accuracy: 0.9990 - val_loss: 0.0463 - val_accuracy: 0.9913
Epoch 20/20
1875/1875 [==============================] - 66s 35ms/step - loss: 0.0037 - accuracy: 0.9990 - val_loss: 0.0459 - val_accuracy: 0.9917
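The validation accuracy in the log fluctuates from epoch to epoch, so the last epoch is not necessarily the best one. As a small sketch, the values below are copied by hand from the log (only the epochs that appear in it) to find the epoch with the highest validation accuracy. With a live training run, the same numbers are available in `history.history['val_accuracy']`.

```python
# val_accuracy values copied from the training log above (only the epochs shown there).
val_accuracy = {
    1: 0.9853, 2: 0.9891, 12: 0.9913, 13: 0.9914, 14: 0.9913, 15: 0.9919,
    16: 0.9903, 17: 0.9911, 18: 0.9901, 19: 0.9913, 20: 0.9917,
}

# Epoch with the highest validation accuracy among the logged ones.
best_epoch = max(val_accuracy, key=val_accuracy.get)
print(best_epoch, val_accuracy[best_epoch])
```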

9. Saving and loading the model

model.save(r'/home/mw/project/model.h5')

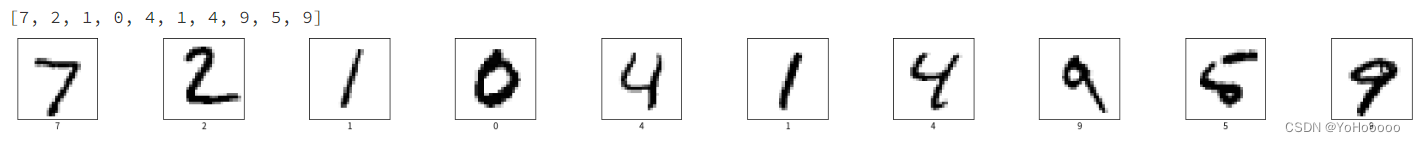

model = tf.keras.models.load_model(r'/home/mw/project/model.h5')

10. Loading the model and making predictions

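# Drop the channel axis again so that imshow can draw the images
test_data_pl = test_data.reshape((10000, 28, 28))
# One row of 10 class scores per test image
pre = model.predict(test_data)
# Predicted digit for each of the first 10 test images
test_lab = []
for i in range(0, 10):
    test_lab.append(np.argmax(pre[i]))
print(test_lab)
plt.figure(figsize=(30, 10))
for i in range(10):
    plt.subplot(5, 10, i + 1)
    plt.xticks([])
    plt.yticks([])
    plt.imshow(test_data_pl[i], cmap=plt.cm.binary)
    plt.xlabel(test_lab[i])
plt.show()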

The result is shown in the figure.
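The per-image loop over `np.argmax` can also be applied to the whole prediction array at once, which makes it easy to compute an accuracy. The sketch below uses made-up scores and labels (`pre` and `labels` here are hypothetical stand-ins for the model output and `test_labels`) so that it runs without the trained model.

```python
import numpy as np

# Hypothetical model outputs (logits) for 4 samples and 3 classes.
pre = np.array([
    [2.0, 0.1, -1.0],
    [0.3, 1.5, 0.2],
    [-0.5, 0.0, 4.0],
    [1.0, 3.0, 0.5],
])
# Hypothetical true labels for the same 4 samples.
labels = np.array([0, 1, 2, 0])

# argmax along axis 1 picks the highest-scoring class for every row at once.
predicted = np.argmax(pre, axis=1)

# Fraction of samples whose predicted class matches the label.
accuracy = np.mean(predicted == labels)
print(predicted.tolist(), accuracy)
```

Because argmax only depends on the ordering of the scores, it can be applied to the logits directly, without a softmax.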
