CountVectorizer会统计特定文档中单词出现的次数,并且会根据单词的频率进行排序,频率高的排在前面,当频率相同时,则它的位置个人感觉是随机的。因为太过例子跑出来,每一次都不相同。

##语料被称为文本文档的完整集合。

##标记化,将指定语句或文本文档的词语集合划分成单位/独立词语的方法被称为标记化

from pyspark.sql import SparkSession####引入对象 创建RDD

spark = SparkSession.builder.appName('nlp').getOrCreate()df = spark.createDataFrame([(1, 'T really liked this movie'),

(2, 'I would recommend this movie to my friends'),

(3, 'movie was alright but acting was horrible'),

(4, 'I am never watching that movie ever again i liked it')],

['user_id', 'review'])####引入Tokenizer 标记化

from pyspark.ml.feature import Tokenizer

tokenization = Tokenizer(inputCol = 'review', outputCol = 'tokens')

tokenized_df = tokenization.transform(df)

tokenized_df.show(4, False)

###移除停用词

###在pyspark中,可以使用StopWordsRemover来移除停用词

from pyspark.ml.feature import StopWordsRemover

stopword_removal = StopWordsRemover(inputCol = 'tokens', outputCol = 'refind_tokens')

refind_df = stopword_removal.transform(tokenized_df)

refind_df.select(['user_id', 'tokens', 'refind_tokens']).show(4, False)####词袋 数值形式表示文本数据

###计数向量器 会统计特定文档中单词出现的次数

from pyspark.ml.feature import CountVectorizer

count_vec = CountVectorizer(inputCol = 'refind_tokens', outputCol = 'features')

cv_df = count_vec.fit(refind_df).transform(refind_df)

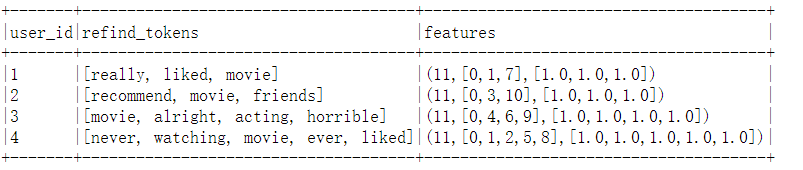

cv_df.select(['user_id', 'refind_tokens', 'features']).show(4, False)

其中11是单词的个数,可以看到movie出现的频率最高,所以排在最前面。[0,1,7]分别表示单词位于第0个、第1个和第7个位置,[1.0,1.0,1.0]表示单词在本文档中出现的次数。

540

540

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?