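This article collects hands-on experience running the model; the steps below have been verified to work.

It walks through building a neural-network image recognition system based on the inception-v3 model.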

1. System setup

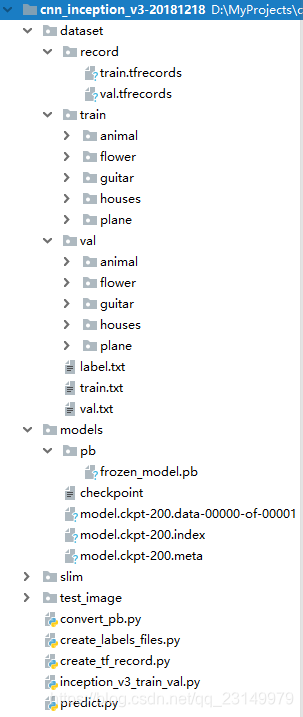

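Before building the system, set up the project folders. Figure 1 shows the file structure of the project.

The project is named cnn_inception_v3-20181218.

The layout is as follows: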

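    |-dataset                                   # datasets
    |   |-record                                # record files
    |   |   train.tfrecords                     # record file for train
    |   |   val.tfrecords                       # record file for val
    |   |-train                                 # training images, stored by class, 5 classes
    |   |   |-animal                            # a number of animal images
    |   |   |-flower
    |   |   |-guitar
    |   |   |-houses
    |   |   |-plane
    |   |-val                                   # evaluation images, stored by class, 5 classes
    |   |   |-animal
    |   |   |-flower
    |   |   |-guitar
    |   |   |-houses
    |   |   |-plane
    |   label.txt                               # the 5 label names
    |   train.txt                               # labels of the training set
    |   val.txt                                 # labels of the evaluation set
    |-models                                    # models
    |   |-pb                                    # pb models
    |   |   frozen_model.pb                     # pb model obtained from training
    |   checkpoint                              # checkpoint file; lists all model files saved in the directory
    |   model.ckpt-200.data-00000-of-00001      # value of every variable in the model
    |   model.ckpt-200.index
    |   model.ckpt-200.meta                     # structure of the TensorFlow computation graph, i.e. the
    |                                           # network structure; tf.train.import_meta_graph can load
    |                                           # this file into the current default graph
    |-slim                                      # the slim library
    |-test_image                                # files used for testing
    convert_pb.py                               # converts a ckpt model to a pb model
    create_labels_files.py                      # creates the label files for the data
    create_tf_record.py                         # converts the data to record format
    inception_v3_train_val.py                   # trains the model
    predict.py                                  # tests the model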
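The folder layout above can be created up front with a few lines of Python. This is only a sketch: the helper name `make_skeleton` and the constant `CLASS_NAMES` are hypothetical and not part of the original project, and the nesting (for example `pb` under `models`) is inferred from the paths used in the scripts that follow.

```python
import os
import tempfile

# The five class folders named in the layout above.
CLASS_NAMES = ["animal", "flower", "guitar", "houses", "plane"]


def make_skeleton(project_root):
    """Create the empty folder layout under project_root.

    Returns the sorted list of relative directories that were requested.
    """
    dirs = [
        os.path.join("dataset", "record"),
        os.path.join("models", "pb"),
        "slim",
        "test_image",
    ]
    for split in ("train", "val"):
        for name in CLASS_NAMES:
            dirs.append(os.path.join("dataset", split, name))
    for rel_dir in dirs:
        # exist_ok=True makes the call safe to repeat on an existing project
        os.makedirs(os.path.join(project_root, rel_dir), exist_ok=True)
    return sorted(dirs)


if __name__ == "__main__":
    # Build the skeleton in a temporary location so nothing real is touched.
    root = os.path.join(tempfile.mkdtemp(), "cnn_inception_v3-20181218")
    for rel_dir in make_skeleton(root):
        print(rel_dir)
```

The scripts themselves and the slim library still have to be copied into the project by hand; the sketch only prepares the empty directories.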

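1.1 Creating the data labels

Store the image dataset under dataset/train and dataset/val. There are five classes of images: flower, guitar, animal, houses and plane, with roughly 800 images per class. create_labels_files.py generates the txt label files for the train and val sets directly.

The code of create_labels_files.py is as follows: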

    # Import libraries
    import os
    # os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'
    import os.path

    def write_txt(content, filename, mode='w'):
        """Save data to a txt file
        :param content: data to save, type->list
        :param filename: file name
        :param mode: file mode: 'w' or 'a'
        :return: void
        """
        with open(filename, mode) as f:
            for line in content:
                str_line = ""
                for col, data in enumerate(line):
                    if not col == len(line) - 1:
                        # use a space as the separator
                        str_line = str_line + str(data) + " "
                    else:
                        # end the last item of each line with a newline "\n"
                        str_line = str_line + str(data) + "\n"
                f.write(str_line)

    def get_files_list(dir):
        '''
        Walk through dir and collect all files (including files in subfolders)
        :param dir: the folder to scan
        :return: list of [relative path, label] entries -> list
        '''
        # parent: parent directory, dirnames: subfolders of that directory,
        # filenames: file names in that directory
        files_list = []
        for parent, dirnames, filenames in os.walk(dir):
            for filename in filenames:
                # print("parent is: " + parent)
                # print("filename is: " + filename)
                # print(os.path.join(parent, filename))  # print every file under the root dir (including subfolders)
                curr_file = parent.split(os.sep)[-1]
                if curr_file == 'flower':
                    labels = 0
                elif curr_file == 'guitar':
                    labels = 1
                elif curr_file == 'animal':
                    labels = 2
                elif curr_file == 'houses':
                    labels = 3
                elif curr_file == 'plane':
                    labels = 4
                else:
                    # skip files outside the five class folders; otherwise
                    # labels would be undefined or left over from a previous file
                    continue
                files_list.append([os.path.join(curr_file, filename), labels])
        return files_list

    if __name__ == '__main__':
        train_dir = 'dataset/train'
        train_txt = 'dataset/train.txt'
        train_data = get_files_list(train_dir)
        write_txt(train_data, train_txt, mode='w')

        val_dir = 'dataset/val'
        val_txt = 'dataset/val.txt'
        val_data = get_files_list(val_dir)
        write_txt(val_data, val_txt, mode='w')
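The folder-to-label mapping in get_files_list can also be written as a dictionary lookup, which makes the handling of unknown folders explicit. The following is a minimal sketch, not the author's code: `CLASS_TO_LABEL`, `label_for`, `format_line` and `parse_line` are hypothetical names, and the label numbers are copied from the if/elif chain above.

```python
import os

# Same numbering as the if/elif chain in get_files_list.
CLASS_TO_LABEL = {"flower": 0, "guitar": 1, "animal": 2, "houses": 3, "plane": 4}


def label_for(path, class_to_label=CLASS_TO_LABEL):
    """Return the label of a file from its parent folder name, or None if unknown."""
    class_name = os.path.basename(os.path.dirname(path))
    return class_to_label.get(class_name)


def format_line(rel_path, label):
    """Format one entry the way write_txt does: 'path label' plus a newline."""
    return "{} {}\n".format(rel_path, label)


def parse_line(line):
    """Parse one entry the way load_labels_file does (split on single spaces)."""
    parts = line.rstrip().split(" ")
    return parts[0], int(parts[1])


if __name__ == "__main__":
    path = os.path.join("dataset", "train", "guitar", "001.jpg")
    line = format_line(os.path.join("guitar", "001.jpg"), label_for(path))
    print(repr(line))
    print(parse_line(line))
```

Because the file is split on spaces, an image name that itself contains a space would not parse correctly, so it is safer to keep spaces out of file names.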

1.2 Creating the tfrecords data

With train.txt and val.txt in place, we can create the train.tfrecords and val.tfrecords files. create_tf_record.py is as follows.

    # -*-coding: utf-8 -*-
    """
    Functions for converting images to vectors (TFRecord files) and reading them back
    """
    ##########################################################################
    import tensorflow as tf
    import numpy as np
    import os
    import cv2
    import matplotlib.pyplot as plt
    import random
    from PIL import Image
    ##########################################################################

    # Build an integer attribute
    def _int64_feature(value):
        return tf.train.Feature(int64_list=tf.train.Int64List(value=[value]))

    # Build a string (bytes) attribute
    def _bytes_feature(value):
        return tf.train.Feature(bytes_list=tf.train.BytesList(value=[value]))

    # Build a float attribute (value must already be a list)
    def float_list_feature(value):
        return tf.train.Feature(float_list=tf.train.FloatList(value=value))

    def get_example_nums(tf_records_filenames):
        '''
        Count the number of images (examples) in a tf_records file
        :param tf_records_filenames: path of the tf_records file
        :return: number of examples
        '''
        nums = 0
        for record in tf.python_io.tf_record_iterator(tf_records_filenames):
            nums += 1
        return nums

    def show_image(title, image):
        '''
        Display an image
        :param title: title of the image
        :param image: image data
        :return:
        '''
        # plt.figure("show_image")
        # print(image.dtype)
        plt.imshow(image)
        plt.axis('on')    # use 'off' to hide the axes
        plt.title(title)  # image title
        plt.show()

    def load_labels_file(filename, labels_num=1, shuffle=False):
        '''
        Load a txt file in which every line describes one image, separated by spaces:
        image path, label 1, label 2, e.g. test_image/1.jpg 0 2
        :param filename: path of the txt file
        :param labels_num: number of labels per line
        :param shuffle: whether to shuffle the order
        :return: images type->list
        :return: labels type->list
        '''
        images = []
        labels = []
        with open(filename) as f:
            lines_list = f.readlines()
            if shuffle:
                random.shuffle(lines_list)
            for lines in lines_list:
                line = lines.rstrip().split(' ')
                label = []
                for i in range(labels_num):
                    label.append(int(line[i + 1]))
                images.append(line[0])
                labels.append(label)
        return images, labels

    def read_image(filename, resize_height, resize_width, normalization=False):
        '''
        Read image data; the default return type is uint8, [0,255]
        :param filename: path of the image
        :param resize_height: target height
        :param resize_width: target width
        :param normalization: whether to normalize to [0.,1.0]
        :return: the image data
        '''
        bgr_image = cv2.imread(filename)
        if bgr_image is None:  # cv2.imread returns None when the file cannot be read
            raise IOError("cannot read image: {}".format(filename))
        if len(bgr_image.shape) == 2:  # convert grayscale images to three channels
            print("Warning:gray image", filename)
            bgr_image = cv2.cvtColor(bgr_image, cv2.COLOR_GRAY2BGR)
        rgb_image = cv2.cvtColor(bgr_image, cv2.COLOR_BGR2RGB)  # convert BGR to RGB
        # show_image(filename,rgb_image)
        # rgb_image=Image.open(filename)
        if resize_height > 0 and resize_width > 0:
            rgb_image = cv2.resize(rgb_image, (resize_width, resize_height))
        rgb_image = np.asanyarray(rgb_image)
        if normalization:
            # do not write: rgb_image=rgb_image/255
            rgb_image = rgb_image / 255.0
        # show_image("src resize image",image)
        return rgb_image

    def get_batch_images(images, labels, batch_size, labels_nums, one_hot=False, shuffle=False, num_threads=1):
        '''
        :param images: images
        :param labels: labels
        :param batch_size: batch size
        :param labels_nums: number of label classes
        :param one_hot: whether to convert labels to one_hot form
        :param shuffle: whether to shuffle; usually shuffle=True for training and shuffle=False for validation
        :param num_threads: number of threads that fill the queue
        :return: a batch of images and labels
        '''
        min_after_dequeue = 200
        capacity = min_after_dequeue + 3 * batch_size  # capacity must be larger than min_after_dequeue
        if shuffle:
            images_batch, labels_batch = tf.train.shuffle_batch([images, labels],
                                                                batch_size=batch_size,
                                                                capacity=capacity,
                                                                min_after_dequeue=min_after_dequeue,
                                                                num_threads=num_threads)
        else:
            images_batch, labels_batch = tf.train.batch([images, labels],
                                                        batch_size=batch_size,
                                                        capacity=capacity,
                                                        num_threads=num_threads)
        if one_hot:
            labels_batch = tf.one_hot(labels_batch, labels_nums, 1, 0)
        return images_batch, labels_batch

    def read_records(filename, resize_height, resize_width, type=None):
        '''
        Parse a record file. The stored image data is RGB, uint8, [0,255];
        training data usually needs to be normalized to [0,1]
        :param filename: path of the record file
        :param resize_height: height the images were stored with
        :param resize_width: width the images were stored with
        :param type: selects the type of the returned image data
             None: by default convert uint8-[0,255] to float32-[0,255]
             normalization: normalize to float32-[0,1]
             centralization: normalize to float32-[0,1], then subtract the mean to center
        :return: image tensor and label tensor
        '''
        # create the filename queue, with no limit on the number of reads
        filename_queue = tf.train.string_input_producer([filename])
        # create a reader from file queue
        reader = tf.TFRecordReader()
        # the reader reads one serialized example from the filename queue
        _, serialized_example = reader.read(filename_queue)
        # get feature from serialized example
        # parse the serialized example
        features = tf.parse_single_example(
            serialized_example,
            features={
                'image_raw': tf.FixedLenFeature([], tf.string),
                'height': tf.FixedLenFeature([], tf.int64),
                'width': tf.FixedLenFeature([], tf.int64),
                'depth': tf.FixedLenFeature([], tf.int64),
                'label': tf.FixedLenFeature([], tf.int64)
            }
        )
        tf_image = tf.decode_raw(features['image_raw'], tf.uint8)  # get the raw image data

        tf_height = features['height']
        tf_width = features['width']
        tf_depth = features['depth']
        tf_label = tf.cast(features['label'], tf.int32)
        # PS: to restore the raw image data, the reshape size must match the
        # shape the image had when it was saved, otherwise an error occurs
        # tf_image=tf.reshape(tf_image, [-1])    # convert to a row vector
        tf_image = tf.reshape(tf_image, [resize_height, resize_width, 3])  # set the image dimensions

        # resize_images can only be applied after the data is restored: input uint -> output float32
        # tf_image=tf.image.resize_images(tf_image,[224, 224])

        # the stored image type is uint8; for training in tensorflow the data must be tf.float32
        if type is None:
            tf_image = tf.cast(tf_image, tf.float32)
        elif type == 'normalization':  # [1] to normalize, use:
            # convert_image_dtype only rescales [0,255] when the input data is uint8
            # tf_image = tf.image.convert_image_dtype(tf_image, tf.float32)
            tf_image = tf.cast(tf_image, tf.float32) * (1. / 255.0)  # normalize
        elif type == 'centralization':
            # to normalize and center, assuming a mean of 0.5, use:
            tf_image = tf.cast(tf_image, tf.float32) * (1. / 255) - 0.5  # center

        # only the image and the label are returned here
        # return tf_image, tf_height,tf_width,tf_depth,tf_label
        return tf_image, tf_label

    def create_records(image_dir, file, output_record_dir, resize_height, resize_width, shuffle, log=5):
        '''
        Save the raw image data, label, height, width and other information to a record file.
        Note: the image data is read as uint8 by default and stored as a tf BytesList string;
        convert the type as needed when parsing
        :param image_dir: directory of the original images
        :param file: txt file with the image information (image_dir + file entry gives the image path)
        :param output_record_dir: path of the record file to write
        :param resize_height: target height
        :param resize_width: target width
        PS: when resize_height or resize_width is 0, no resize is performed
        :param shuffle: whether to shuffle the order
        :param log: interval for printing log messages
        '''
        # load the file, reading only one label per image
        images_list, labels_list = load_labels_file(file, 1, shuffle)

        writer = tf.python_io.TFRecordWriter(output_record_dir)
        for i, [image_name, labels] in enumerate(zip(images_list, labels_list)):
            image_path = os.path.join(image_dir, image_name)
            if not os.path.exists(image_path):
                print('Err:no image', image_path)
                continue
            image = read_image(image_path, resize_height, resize_width)
            image_raw = image.tobytes()  # tobytes() replaces the deprecated tostring()
            if i % log == 0 or i == len(images_list) - 1:
                print('------------processing:%d-th------------' % (i))
                print('current image_path=%s' % (image_path), 'shape:{}'.format(image.shape), 'labels:{}'.format(labels))
            # only one label is saved here; for multiple labels add more "'label': _int64_feature(label)" entries
            label = labels[0]
            example = tf.train.Example(features=tf.train.Features(feature={
                'image_raw': _bytes_feature(image_raw),
                'height': _int64_feature(image.shape[0]),
                'width': _int64_feature(image.shape[1]),
                'depth': _int64_feature(image.shape[2]),
                'label': _int64_feature(label)
            }))
            writer.write(example.SerializeToString())
        writer.close()

    def disp_records(record_file, resize_height, resize_width, show_nums=4):
        '''
        Parse a record file and display show_nums images; mainly used to check
        that the record file was generated successfully
        :param record_file: path of the record file
        :param show_nums: number of images to display
        :return:
        '''
        # read the record file
        tf_image, tf_label = read_records(record_file, resize_height, resize_width, type='normalization')
        # display the first show_nums images
        init_op = tf.global_variables_initializer()  # replaces the deprecated tf.initialize_all_variables()
        with tf.Session() as sess:
            sess.run(init_op)
            coord = tf.train.Coordinator()
            threads = tf.train.start_queue_runners(sess=sess, coord=coord)
            for i in range(show_nums):
                image, label = sess.run([tf_image, tf_label])  # fetch image and label in the session
                # image = tf_image.eval()
                # an image parsed directly from the record is a vector and must be reshaped for display
                # image = image.reshape([height,width,depth])
                print('shape:{},type:{},labels:{}'.format(image.shape, image.dtype, label))
                # pilimg = Image.fromarray(np.asarray(image_eval_reshape))
                # pilimg.show()
                show_image("image:%d" % (label), image)
            coord.request_stop()
            coord.join(threads)

    def batch_test(record_file, resize_height, resize_width):
        '''
        :param record_file: path of the record file
        :param resize_height: height the images were stored with
        :param resize_width: width the images were stored with
        :return:
        :PS: image_batch and label_batch are usually used as the network input
        '''
        # read the record file
        tf_image, tf_label = read_records(record_file, resize_height, resize_width, type='normalization')
        image_batch, label_batch = get_batch_images(tf_image, tf_label, batch_size=4, labels_nums=5, one_hot=False, shuffle=False)

        init = tf.global_variables_initializer()
        with tf.Session() as sess:  # start a session
            sess.run(init)
            coord = tf.train.Coordinator()
            threads = tf.train.start_queue_runners(coord=coord)
            for i in range(4):
                # fetch images and labels in the session
                images, labels = sess.run([image_batch, label_batch])
                # only the first image of each batch is displayed here
                show_image("image", images[0, :, :, :])
                print('shape:{},type:{},labels:{}'.format(images.shape, images.dtype, labels))

            # stop all threads
            coord.request_stop()
            coord.join(threads)

    if __name__ == '__main__':
        # parameter settings
        resize_height = 224  # height of the stored images
        resize_width = 224   # width of the stored images
        shuffle = True
        log = 5

        # create the train record file
        image_dir = 'dataset/train'
        train_labels = 'dataset/train.txt'  # file listing image paths and labels
        train_record_output = 'dataset/record/train.tfrecords'
        create_records(image_dir, train_labels, train_record_output, resize_height, resize_width, shuffle, log)
        train_nums = get_example_nums(train_record_output)
        print("save train example nums={}".format(train_nums))

        # create the val record file
        image_dir = 'dataset/val'
        val_labels = 'dataset/val.txt'  # file listing image paths and labels
        val_record_output = 'dataset/record/val.tfrecords'
        create_records(image_dir, val_labels, val_record_output, resize_height, resize_width, shuffle, log)
        val_nums = get_example_nums(val_record_output)
        print("save val example nums={}".format(val_nums))

        # test the display functions
        # disp_records(train_record_output,resize_height, resize_width)
        batch_test(train_record_output, resize_height, resize_width)
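create_records stores each image as a flat run of raw bytes, and read_records has to reshape those bytes back to [resize_height, resize_width, 3]. The sketch below imitates that round trip with the standard library only, to show why the two sizes must agree. It does not use TensorFlow or NumPy, and the function names `flatten_image` and `restore_image` are hypothetical.

```python
def flatten_image(pixels):
    """Flatten a nested [height][width][channel] list of 0..255 ints to raw bytes.

    Returns the bytes together with the (height, width, depth) shape,
    mirroring what create_records stores in 'image_raw', 'height', 'width', 'depth'.
    """
    height, width, depth = len(pixels), len(pixels[0]), len(pixels[0][0])
    raw = bytes(value for row in pixels for pixel in row for value in pixel)
    return raw, (height, width, depth)


def restore_image(raw, shape):
    """Rebuild the nested list from raw bytes; the shape must match the saved one."""
    height, width, depth = shape
    if len(raw) != height * width * depth:
        raise ValueError("raw data has {} values, shape {} needs {}".format(
            len(raw), shape, height * width * depth))
    values = iter(raw)
    return [[[next(values) for _ in range(depth)]
             for _ in range(width)]
            for _ in range(height)]


def normalize(pixels):
    """Scale every value to [0, 1], like type='normalization' in read_records."""
    return [[[value / 255.0 for value in pixel] for pixel in row] for row in pixels]


if __name__ == "__main__":
    image = [[[0, 128, 255], [10, 20, 30]],
             [[40, 50, 60], [70, 80, 90]]]  # a tiny 2 x 2 RGB image
    raw, shape = flatten_image(image)
    print(len(raw), shape)
    print(restore_image(raw, shape) == image)
```

If the record was written with one resize_height and resize_width and later read with different values, the element counts no longer match, which is the error the PS comment in read_records warns about.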

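1.3 Implementing the training procedure

This project trains inception_v3 on images with height, width = 224, 224, the size that create_tf_record.py stores (slim's inception_v3 itself declares a default image size of 299). The training data train.tfrecords and the validation data val.tfrecords were produced with create_tf_record.py. The code of inception_v3_train_val.py is as follows: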

    #coding=utf-8
    import tensorflow as tf
    import numpy as np
    import pdb
    import os
    from datetime import datetime
    import slim.nets.inception_v3 as inception_v3
    from create_tf_record import *
    import tensorflow.contrib.slim as slim

    labels_nums = 5      # number of classes
    batch_size = 16      # number of images per batch
    resize_height = 224  # height of the stored images
    resize_width = 224   # width of the stored images
    depths = 3
    data_shape = [batch_size, resize_height, resize_width, depths]

    # input_images holds the image data
    input_images = tf.placeholder(dtype=tf.float32, shape=[None, resize_height, resize_width, depths], name='input')
    # input_labels holds the labels data
    # input_labels = tf.placeholder(dtype=tf.int32, shape=[None], name='label')
    input_labels = tf.placeholder(dtype=tf.int32, shape=[None, labels_nums], name='label')

    # keep probability for dropout
    keep_prob = tf.placeholder(tf.float32, name='keep_prob')
    is_training = tf.placeholder(tf.bool, name='is_training')

    def net_evaluation(sess, loss, accuracy, val_images_batch, val_labels_batch, val_nums):
        # number of full batches in the validation set (the remainder is not evaluated in one pass)
        val_max_steps = int(val_nums / batch_size)
        val_losses = []
        val_accs = []
        for _ in range(val_max_steps):
            val_x, val_y = sess.run([val_images_batch, val_labels_batch])
            # print('labels:',val_y)
            # val_loss = sess.run(loss, feed_dict={x: val_x, y: val_y, keep_prob: 1.0})
            # val_acc = sess.run(accuracy,feed_dict={x: val_x, y: val_y, keep_prob: 1.0})
            val_loss, val_acc = sess.run([loss, accuracy], feed_dict={input_images: val_x, input_labels: val_y, keep_prob: 1.0, is_training: False})
            val_losses.append(val_loss)
            val_accs.append(val_acc)
        mean_loss = np.array(val_losses, dtype=np.float32).mean()
        mean_acc = np.array(val_accs, dtype=np.float32).mean()
        return mean_loss, mean_acc

    def step_train(train_op, loss, accuracy,
                   train_images_batch, train_labels_batch, train_nums, train_log_step,
                   val_images_batch, val_labels_batch, val_nums, val_log_step,
                   snapshot_prefix, snapshot):
        '''
        The iterative training loop
        :param train_op: training op
        :param loss: loss function
        :param accuracy: accuracy function
        :param train_images_batch: training images data
        :param train_labels_batch: training labels data
        :param train_nums: total number of training examples
        :param train_log_step: interval for printing the training log
        :param val_images_batch: validation images data
        :param val_labels_batch: validation labels data
        :param val_nums: total number of validation examples
        :param val_log_step: interval for printing the validation log
        :param snapshot_prefix: path prefix for saving the model
        :param snapshot: interval for saving the model
        :return: None
        '''
        saver = tf.train.Saver()
        max_acc = 0.0
        with tf.Session() as sess:
            sess.run(tf.global_variables_initializer())
            sess.run(tf.local_variables_initializer())
            coord = tf.train.Coordinator()
            threads = tf.train.start_queue_runners(sess=sess, coord=coord)
            # note: max_steps is the module-level variable set in the __main__ block
            for i in range(max_steps + 1):
                batch_input_images, batch_input_labels = sess.run([train_images_batch, train_labels_batch])
                _, train_loss = sess.run([train_op, loss], feed_dict={input_images: batch_input_images,
                                                                      input_labels: batch_input_labels,
                                                                      keep_prob: 0.5, is_training: True})
                # train test (only one batch of the training set is tested here)
                if i % train_log_step == 0:
                    train_acc = sess.run(accuracy, feed_dict={input_images: batch_input_images,
                                                              input_labels: batch_input_labels,
                                                              keep_prob: 1.0, is_training: False})
                    print("%s: Step [%d]  train Loss : %f, training accuracy :  %g" % (
                        datetime.now(), i, train_loss, train_acc))

                # val test (tests the whole val data)
                if i % val_log_step == 0:
                    mean_loss, mean_acc = net_evaluation(sess, loss, accuracy, val_images_batch, val_labels_batch, val_nums)
                    print("%s: Step [%d]  val Loss : %f, val accuracy :  %g" % (datetime.now(), i, mean_loss, mean_acc))

                # save the model every snapshot iterations or at the last iteration
                if (i % snapshot == 0 and i > 0) or i == max_steps:
                    print('-----save:{}-{}'.format(snapshot_prefix, i))
                    saver.save(sess, snapshot_prefix, global_step=i)
                # save the model with the highest val accuracy
                # (mean_acc is first set at i == 0 and keeps its latest value between val tests)
                if mean_acc > max_acc and mean_acc > 0.7:
                    max_acc = mean_acc
                    path = os.path.dirname(snapshot_prefix)
                    best_models = os.path.join(path, 'best_models_{}_{:.4f}.ckpt'.format(i, max_acc))
                    print('------save:{}'.format(best_models))
                    saver.save(sess, best_models)

            coord.request_stop()
            coord.join(threads)

    def train(train_record_file,
              train_log_step,
              train_param,
              val_record_file,
              val_log_step,
              labels_nums,
              data_shape,
              snapshot,
              snapshot_prefix):
        '''
        :param train_record_file: tfrecord file for training
        :param train_log_step: interval for printing the training log
        :param train_param: train parameters [base_lr, max_steps]
        :param val_record_file: tfrecord file for validation
        :param val_log_step: interval for printing the validation log
        :param labels_nums: number of labels
        :param data_shape: shape of the input data
        :param snapshot: interval for saving the model
        :param snapshot_prefix: file name prefix for saved models
        :return:
        '''
        [base_lr, max_steps] = train_param
        [batch_size, resize_height, resize_width, depths] = data_shape

        # get the number of training and validation examples
        train_nums = get_example_nums(train_record_file)
        val_nums = get_example_nums(val_record_file)
        print('train nums:%d,val nums:%d' % (train_nums, val_nums))

        # read images and labels from the record files
        # train data: training data is usually shuffled, shuffle=True
        train_images, train_labels = read_records(train_record_file, resize_height, resize_width, type='normalization')
        train_images_batch, train_labels_batch = get_batch_images(train_images, train_labels,
                                                                  batch_size=batch_size, labels_nums=labels_nums,
                                                                  one_hot=True, shuffle=True)
        # val data: validation data does not need to be shuffled
        val_images, val_labels = read_records(val_record_file, resize_height, resize_width, type='normalization')
        val_images_batch, val_labels_batch = get_batch_images(val_images, val_labels,
                                                              batch_size=batch_size, labels_nums=labels_nums,
                                                              one_hot=True, shuffle=False)

        # Define the model:
        with slim.arg_scope(inception_v3.inception_v3_arg_scope()):
            out, end_points = inception_v3.inception_v3(inputs=input_images, num_classes=labels_nums, dropout_keep_prob=keep_prob, is_training=is_training)

        # Specify the loss function: losses defined with tf.losses are added to the
        # loss collection automatically, so add_loss() is not needed
        tf.losses.softmax_cross_entropy(onehot_labels=input_labels, logits=out)  # add the cross-entropy loss, loss=1.6
        # slim.losses.add_loss(my_loss)
        loss = tf.losses.get_total_loss(add_regularization_losses=True)  # add the regularization loss, loss=2.2
        accuracy = tf.reduce_mean(tf.cast(tf.equal(tf.argmax(out, 1), tf.argmax(input_labels, 1)), tf.float32))

        # Specify the optimization scheme:
        optimizer = tf.train.GradientDescentOptimizer(learning_rate=base_lr)

        # global_step = tf.Variable(0, trainable=False)
        # learning_rate = tf.train.exponential_decay(0.05, global_step, 150, 0.9)
        #
        # optimizer = tf.train.MomentumOptimizer(learning_rate, 0.9)
        # # train_tensor = optimizer.minimize(loss, global_step)
        # train_op = slim.learning.create_train_op(loss, optimizer,global_step=global_step)

        # When `batch_norm` layers are used, the `average` and `variance` parameters of every
        # layer have to be updated. These updates are not part of the normal training step,
        # so they have to be triggered manually as shown below.
        # Use `tf.get_collection` to get all `op`s that need to be updated
        update_ops = tf.get_collection(tf.GraphKeys.UPDATE_OPS)
        # use tensorflow control dependencies: run the update ops first, then the training op
        with tf.control_dependencies(update_ops):
            # create_train_op that ensures that when we evaluate it to get the loss,
            # the update_ops are done and the gradient updates are computed.
            # train_op = slim.learning.create_train_op(total_loss=loss,optimizer=optimizer)
            train_op = slim.learning.create_train_op(total_loss=loss, optimizer=optimizer)

        # the iterative training loop
        step_train(train_op, loss, accuracy,
                   train_images_batch, train_labels_batch, train_nums, train_log_step,
                   val_images_batch, val_labels_batch, val_nums, val_log_step,
                   snapshot_prefix, snapshot)

    if __name__ == '__main__':
        train_record_file = 'dataset/record/train.tfrecords'
        val_record_file = 'dataset/record/val.tfrecords'

        train_log_step = 100
        base_lr = 0.01   # learning rate
        max_steps = 200  # number of iterations; 10000 is an option, or 100000 if resources allow
        train_param = [base_lr, max_steps]

        val_log_step = 10  # could be set to 200
        snapshot = 200     # interval for saving the model
        snapshot_prefix = 'models/model.ckpt'
        train(train_record_file=train_record_file,
              train_log_step=train_log_step,
              train_param=train_param,
              val_record_file=val_record_file,
              val_log_step=val_log_step,
              labels_nums=labels_nums,
              data_shape=data_shape,
              snapshot=snapshot,
              snapshot_prefix=snapshot_prefix)
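To judge whether max_steps = 200 is enough, it helps to translate the settings above into data coverage. The sketch below only reproduces the arithmetic used in the scripts (the loop over range(max_steps + 1), int(val_nums / batch_size) in net_evaluation, and the queue capacity in get_batch_images). The function name `training_budget` is hypothetical, and the example counts of 4000 training and 500 validation images are assumptions for illustration, not numbers reported by the author.

```python
def training_budget(train_nums, val_nums, batch_size, max_steps, min_after_dequeue=200):
    """Summarize how much data the training loop touches.

    Mirrors the arithmetic in inception_v3_train_val.py and get_batch_images.
    """
    train_iterations = max_steps + 1                 # the loop runs range(max_steps + 1)
    images_seen = train_iterations * batch_size      # images drawn from the training queue
    epochs = images_seen / float(train_nums)         # passes over the training set
    val_steps = int(val_nums / batch_size)           # full batches per evaluation
    val_remainder = val_nums - val_steps * batch_size
    queue_capacity = min_after_dequeue + 3 * batch_size
    return {
        "train_iterations": train_iterations,
        "images_seen": images_seen,
        "epochs": epochs,
        "val_steps": val_steps,
        "val_remainder": val_remainder,
        "queue_capacity": queue_capacity,
    }


if __name__ == "__main__":
    # Assumed dataset sizes, for illustration only.
    budget = training_budget(train_nums=4000, val_nums=500, batch_size=16, max_steps=200)
    for key in sorted(budget):
        print(key, budget[key])
```

Under these assumed sizes, 200 steps with a batch size of 16 cover less than one pass over the training set, which is consistent with the comment in the script suggesting 10000 steps or more.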

1.4 Model prediction

The program for testing the model, predict.py, is as follows:

    #coding=utf-8
    import tensorflow as tf
    import numpy as np
    import pdb
    import cv2
    import os
    import glob
    import slim.nets.inception_v3 as inception_v3
    from create_tf_record import *
    import tensorflow.contrib.slim as slim

    def predict(models_path, image_dir, labels_filename, labels_nums, data_format):
        [batch_size, resize_height, resize_width, depths] = data_format

        labels = np.loadtxt(labels_filename, str, delimiter='\t')
        input_images = tf.placeholder(dtype=tf.float32, shape=[None, resize_height, resize_width, depths], name='input')

        with slim.arg_scope(inception_v3.inception_v3_arg_scope()):
            out, end_points = inception_v3.inception_v3(inputs=input_images, num_classes=labels_nums, dropout_keep_prob=1.0, is_training=False)

        # apply softmax to the output, then take the class with the highest probability
        score = tf.nn.softmax(out, name='pre')
        class_id = tf.argmax(score, 1)

        sess = tf.InteractiveSession()
        sess.run(tf.global_variables_initializer())
        saver = tf.train.Saver()
        saver.restore(sess, models_path)
        images_list = glob.glob(os.path.join(image_dir, '*.jpg'))
        for image_path in images_list:
            im = read_image(image_path, resize_height, resize_width, normalization=True)
            im = im[np.newaxis, :]  # add the batch dimension
            #pred = sess.run(f_cls, feed_dict={x:im, keep_prob:1.0})
            pre_score, pre_label = sess.run([score, class_id], feed_dict={input_images: im})
            max_score = pre_score[0, pre_label]
            print("{} is: pre labels:{},name:{} score: {}".format(image_path, pre_label, labels[pre_label], max_score))
        sess.close()

    if __name__ == '__main__':
        class_nums = 5
        image_dir = 'test_image'
        labels_filename = 'dataset/label.txt'
        models_path = 'models/model.ckpt-200'

        batch_size = 1       # one image per prediction
        resize_height = 224  # height of the stored images
        resize_width = 224   # width of the stored images
        depths = 3
        data_format = [batch_size, resize_height, resize_width, depths]
        predict(models_path, image_dir, labels_filename, class_nums, data_format)
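The last step of predict.py, softmax followed by argmax and a lookup in the label list, can be illustrated without TensorFlow. This is a plain-Python sketch under stated assumptions: `softmax` and `top_prediction` are hypothetical helper names, the logits are made-up numbers, and the order of `LABEL_NAMES` assumes that label.txt lists the names in the same order as the label ids assigned in create_labels_files.py (the content of label.txt is not shown in this article).

```python
import math

# Assumed to match the label ids from create_labels_files.py:
# flower=0, guitar=1, animal=2, houses=3, plane=4.
LABEL_NAMES = ["flower", "guitar", "animal", "houses", "plane"]


def softmax(logits):
    """Turn raw network outputs into probabilities that sum to 1."""
    largest = max(logits)  # subtracting the maximum keeps exp() numerically stable
    exps = [math.exp(value - largest) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


def top_prediction(logits, label_names=LABEL_NAMES):
    """Return (class id, class name, probability) of the most likely class."""
    scores = softmax(logits)
    class_id = max(range(len(scores)), key=scores.__getitem__)
    return class_id, label_names[class_id], scores[class_id]


if __name__ == "__main__":
    example_logits = [0.1, 2.0, -1.0, 0.3, 0.0]  # made-up values for one image
    print(top_prediction(example_logits))
```

If label.txt uses a different order than the ids in train.txt, the predicted class id is still correct but the printed name is wrong, so the two files should be checked against each other.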
