When using Nacos as the registry, first add the Nacos dependencies, then enable registration and discovery with either the @EnableNacosDiscovery or the @EnableDiscoveryClient annotation.

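@EnableNacosDiscovery is Nacos's own annotation. It enables Nacos service registration and discovery and only applies when Nacos is the registry.

@EnableDiscoveryClient is Spring Cloud's generic annotation. It enables service registration and discovery against different registries, such as Eureka, Consul, or ZooKeeper, as long as the matching dependency is added.

Both annotations can enable Nacos registration and discovery. @EnableNacosDiscovery is more concise and direct, while @EnableDiscoveryClient is more general and flexible.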

<dependency>
    <groupId></groupId>
    <artifactId></artifactId>
    <version></version>
</dependency>
<dependency>
    <groupId></groupId>
    <artifactId></artifactId>
    <version></version>
</dependency>

Next, configure the registry in the service's yml file. One property to note is ephemeral: the default, true, makes the service an ephemeral instance, and false makes it a persistent instance. The two instance types use different health check mechanisms.

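Ephemeral instance: by default this type of instance is registered only in Nacos memory and is not persisted to disk. Health checking runs in client mode: the client actively reports its health to the server, similar to a push model. The default heartbeat interval is 5 seconds. If the server receives no heartbeat for 15 seconds, it marks the instance as unhealthy. If a heartbeat arrives before 30 seconds have passed, the instance returns to healthy; otherwise the server removes it from memory. In other words, the server clears dead ephemeral instances automatically.

Persistent instance: the instance is registered in Nacos memory and also persisted to disk. Health checking runs in server mode: the Nacos registry actively probes the client, similar to a pull model, every 20 seconds by default. When a probe fails the instance is marked unhealthy, but because it is persisted to disk it is not removed automatically and has to be handled explicitly.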
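The two thresholds for ephemeral instances can be illustrated with a small classifier. This is a minimal sketch rather than Nacos code: the class name BeatStatusSketch, the Status enum, and the classify() helper are hypothetical, and only the 15 second and 30 second values come from the text above.

```java
import java.time.Duration;

// Hypothetical sketch (not Nacos source): classify an ephemeral instance
// by how long ago its last heartbeat arrived.
class BeatStatusSketch {
    enum Status { HEALTHY, UNHEALTHY, EXPIRED }

    // Values taken from the text: 15 s without a beat -> unhealthy, 30 s -> removed.
    static final Duration HEARTBEAT_TIMEOUT = Duration.ofSeconds(15);
    static final Duration DELETE_TIMEOUT = Duration.ofSeconds(30);

    static Status classify(long lastBeatMillis, long nowMillis) {
        long silence = nowMillis - lastBeatMillis;
        if (silence > DELETE_TIMEOUT.toMillis()) {
            return Status.EXPIRED;      // the server would drop the instance
        }
        if (silence > HEARTBEAT_TIMEOUT.toMillis()) {
            return Status.UNHEALTHY;    // still registered, flagged unhealthy
        }
        return Status.HEALTHY;
    }

    public static void main(String[] args) {
        long now = System.currentTimeMillis();
        System.out.println(classify(now - 5_000, now));
        System.out.println(classify(now - 20_000, now));
        System.out.println(classify(now - 40_000, now));
    }
}
```

A heartbeat that arrives while the instance is UNHEALTHY simply resets the last beat time, so the next classification returns HEALTHY again.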

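Nacos has two health check mechanisms: active reporting by the client and active probing by the server. With active reporting, the client periodically sends heartbeat requests to the server to show that it is alive. As mentioned above, the default interval is 5 seconds, and it can be changed through the naming.client.beat.interval setting. With active probing, the server periodically sends a request or heartbeat packet to the client to check that it is alive. The default interval is 10 seconds, and it can be changed through the naming.healthCheckTaskInterval setting.

When a service provider starts, it collects its own service information (service name, IP, port, and so on) and sends a registration request to Nacos. It also starts a scheduled task that reports heartbeats. When the Nacos registry receives the request, it parses the service information, wraps it in an Instance object, and stores it in a ConcurrentHashMap named serviceMap. This is the service registry. It is a two-level map: the outer key is namespaceId and the outer value is the inner map; the inner key is group::serviceName and the inner value is the Service object, which in turn holds the Instance objects.

Health protection: each Service in the Nacos registry has a protectThreshold property, the protection threshold, which applies to the instances of that one Service. It is a value between 0 and 1 (a newly created Service in the Nacos source starts at 0, so the threshold has to be set for protection to take effect). With a threshold of 0.85, for example, protection is triggered when the proportion of healthy instances falls below 85%. Consumers then choose among all instances, so a call may reach an unhealthy one.

To understand the Nacos registry client, start with its core interface, NamingService. It provides service registration and deregistration, instance queries, instance lists filtered by health status, selection of a single healthy instance by weighted random choice, listener subscription and unsubscription, paged queries of the service list, and more. A service that integrates Nacos parses the Nacos settings in its yaml at startup and calls createNamingService() on NamingFactory to create the NamingService. By default the returned implementation is NacosNamingService.

static {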

try {

namingService = NamingFactory . createNamingService ( SERVER_ADDR ) ;

} catch ( NacosException e) {

logger.error("An error occurred while connecting to Nacos: ", e);

throw new RpcException ( RpcError . FAILED_TO_CONNECT_TO_SERVICE_REGISTRY ) ;

}

}
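The protection threshold described above can be illustrated with a short selection routine. This is a sketch under stated assumptions, not the Nacos implementation: ProtectThresholdSketch, Inst, and selectable() are hypothetical names, and the comparison follows the wording of the text (protection applies when the healthy ratio is below the threshold).

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch (not Nacos source) of the protection threshold decision.
class ProtectThresholdSketch {
    static final class Inst {
        final String address;
        final boolean healthy;

        Inst(String address, boolean healthy) {
            this.address = address;
            this.healthy = healthy;
        }
    }

    // Returns the instances a consumer may choose from.
    static List<Inst> selectable(List<Inst> all, float protectThreshold) {
        if (all.isEmpty()) {
            return all;
        }
        List<Inst> healthy = new ArrayList<>();
        for (Inst inst : all) {
            if (inst.healthy) {
                healthy.add(inst);
            }
        }
        float healthyRatio = (float) healthy.size() / all.size();
        // Below the threshold: expose every instance, healthy or not, so the
        // few healthy ones are not overwhelmed by all of the traffic.
        return healthyRatio < protectThreshold ? all : healthy;
    }

    // Builds a list of `total` instances of which the first `healthyCount` are healthy.
    static List<Inst> sample(int total, int healthyCount) {
        List<Inst> list = new ArrayList<>();
        for (int i = 0; i < total; i++) {
            list.add(new Inst("10.0.0." + i + ":8080", i < healthyCount));
        }
        return list;
    }
}
```

With ten instances and a threshold of 0.85, eight healthy instances give a ratio of 0.8 and all ten are returned, while nine healthy instances give 0.9 and only those nine are returned.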

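NacosNamingService has an init() method that creates several core objects: BeatReactor, which manages client heartbeats; HostReactor, which manages the local service list; EventDispatcher, which manages service events; and NamingProxy, the proxy that communicates with the Nacos server.

private void init(Properties properties) throws NacosException {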

ValidatorUtils . checkInitParam ( properties) ;

this . namespace = InitUtils . initNamespaceForNaming ( properties) ;

InitUtils . initSerialization ( ) ;

initServerAddr ( properties) ;

InitUtils . initWebRootContext ( ) ;

initCacheDir ( ) ;

initLogName ( properties) ;

this . eventDispatcher = new EventDispatcher ( ) ;

this . serverProxy = new NamingProxy ( this . namespace, this . endpoint, this . serverList, properties) ;

this . beatReactor = new BeatReactor ( this . serverProxy, initClientBeatThreadCount ( properties) ) ;

this . hostReactor = new HostReactor ( this . eventDispatcher, this . serverProxy, beatReactor, this . cacheDir,

isLoadCacheAtStart ( properties) , initPollingThreadCount ( properties) ) ;

}

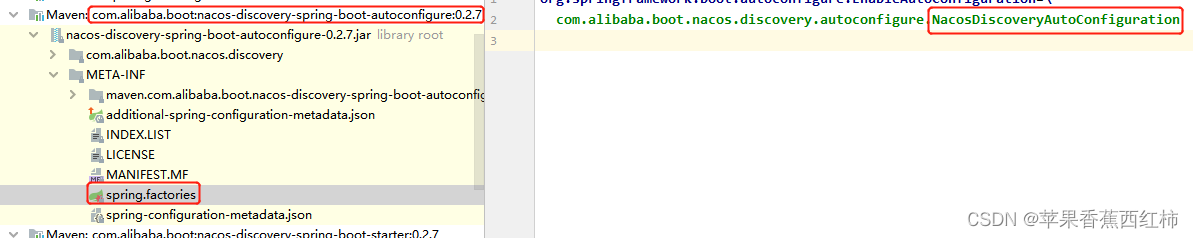

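EventDispatcher: its source creates a single-thread executor that runs a Notifier task. The task's run method loops forever, takes entries from the blocking queue changedServices, and sends NamingEvent notifications.

NamingProxy: communicates with the Nacos server.

BeatReactor: handles heartbeat tasks, explained below.

HostReactor: keeps the service list up to date through UDP communication with the Nacos registry, explained below.

When Nacos is integrated through the nacos-discovery-spring-boot-starter dependency, the spring.factories file under META-INF, following Spring Boot's SPI-style auto-configuration, registers a NacosDiscoveryAutoConfiguration in the container.

NacosDiscoveryAutoConfiguration declares a @Bean method, discoveryAutoRegister(), which creates a NacosDiscoveryAutoRegister in the container.

import com.alibaba.boot.nacos.discovery.properties.NacosDiscoveryProperties;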

import com. alibaba. nacos. spring. context. annotation. discovery. EnableNacosDiscovery ;

import org. springframework. boot. autoconfigure. condition. ConditionalOnClass ;

import org. springframework. boot. autoconfigure. condition. ConditionalOnMissingBean ;

import org. springframework. boot. autoconfigure. condition. ConditionalOnProperty ;

import org. springframework. boot. context. properties. EnableConfigurationProperties ;

import org. springframework. context. annotation. Bean ;

@ConditionalOnProperty (

name = { "nacos.discovery.enabled" } ,

matchIfMissing = true

)

@ConditionalOnMissingBean (

name = { "globalNacosProperties$discovery" }

)

@EnableNacosDiscovery

@EnableConfigurationProperties ( { NacosDiscoveryProperties . class } )

@ConditionalOnClass (

name = { "org.springframework.boot.context.properties.bind.Binder" }

)

public class NacosDiscoveryAutoConfiguration {

public NacosDiscoveryAutoConfiguration ( ) {

}

@Bean

public NacosDiscoveryAutoRegister discoveryAutoRegister ( ) {

return new NacosDiscoveryAutoRegister ( ) ;

}

}

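NacosDiscoveryAutoRegister implements ApplicationListener. Following Spring's event-driven model, once the container has been refreshed and the web server is up, the event is published and NacosDiscoveryAutoRegister's onApplicationEvent() method runs, which performs the registration. (When Spring finishes its core refresh logic in finishRefresh(), WebServerInitializedEvent is published. In the Spring Cloud integration this event is handled by AbstractAutoServiceRegistration's onApplicationEvent(), which starts the automatic registration flow.)

At startup Spring calls refresh() to initialize the container, create beans, and register them in the IoC container. Looking only at the event-related steps: refresh() first calls initApplicationEventMulticaster() to create the event multicaster. It then calls registerListeners(), which collects all ApplicationListener beans and registers them. NacosDiscoveryAutoRegister is registered at this point because it implements ApplicationListener for WebServerInitializedEvent. registerListeners() also picks up any events that were published earlier (earlyApplicationEvents) and, if there are any, broadcasts each one through the multicaster's multicastEvent() method. At the end of refresh(), finishRefresh() completes bean initialization and the creation of the IoC container and publishes the context-refreshed event through publishEvent(). WebServerInitializedEvent itself is published once the web server has finished initializing, which finally triggers NacosDiscoveryAutoRegister's onApplicationEvent().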
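The ordering described here (events published before listeners exist are buffered, then replayed when listeners are registered) can be sketched without Spring. Everything in this block is hypothetical: MiniMulticasterSketch is not a Spring class, and events are reduced to plain strings to keep the example self-contained.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Consumer;

// Hypothetical stand-in for the multicaster and early-event handling;
// it only mirrors the order of steps, not Spring's actual API.
class MiniMulticasterSketch {
    private final List<Consumer<String>> listeners = new ArrayList<>();
    // Non-null until listeners are registered; events published before that are parked here.
    private List<String> earlyEvents = new ArrayList<>();

    void publish(String event) {
        if (earlyEvents != null) {
            earlyEvents.add(event);   // no listeners yet: buffer the event
            return;
        }
        multicast(event);
    }

    // Plays the role of registerListeners(): add listeners, then replay buffered events.
    void registerListeners(List<Consumer<String>> found) {
        listeners.addAll(found);
        List<String> buffered = earlyEvents;
        earlyEvents = null;
        for (String event : buffered) {
            multicast(event);
        }
    }

    private void multicast(String event) {
        for (Consumer<String> listener : listeners) {
            listener.accept(event);
        }
    }
}
```

A listener registered after an early event still receives it, followed by any later event such as the one that signals the web server is ready.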

When the event fires, NacosDiscoveryAutoRegister's onApplicationEvent() runs. The key call inside it is registerInstance() on NacosNamingService.

import com.alibaba.boot.nacos.discovery.properties.NacosDiscoveryProperties;

import com. alibaba. boot. nacos. discovery. properties. Register ;

import com. alibaba. nacos. api. annotation. NacosInjected ;

import com. alibaba. nacos. api. exception. NacosException ;

import com. alibaba. nacos. api. naming. NamingService ;

import com. alibaba. nacos. client. naming. utils. NetUtils ;

import org. apache. commons. lang3. StringUtils ;

import org. slf4j. Logger ;

import org. slf4j. LoggerFactory ;

import org. springframework. beans. factory. annotation. Autowired ;

import org. springframework. beans. factory. annotation. Value ;

import org. springframework. boot. web. context. WebServerInitializedEvent ;

import org. springframework. context. ApplicationListener ;

import org. springframework. stereotype. Component ;

@Component

public class NacosDiscoveryAutoRegister implements ApplicationListener < WebServerInitializedEvent > {

private static final Logger logger = LoggerFactory . getLogger ( NacosDiscoveryAutoRegister . class ) ;

@NacosInjected

private NamingService namingService;

@Autowired

private NacosDiscoveryProperties discoveryProperties;

@Value ( "${spring.application.name:}" )

private String applicationName;

public NacosDiscoveryAutoRegister ( ) {

}

public void onApplicationEvent ( WebServerInitializedEvent event) {

if ( this . discoveryProperties. isAutoRegister ( ) ) {

Register register = this . discoveryProperties. getRegister ( ) ;

if ( StringUtils . isEmpty ( register. getIp ( ) ) ) {

register. setIp ( NetUtils . localIP ( ) ) ;

}

if ( register. getPort ( ) == 0 ) {

register. setPort ( event. getWebServer ( ) . getPort ( ) ) ;

}

register. getMetadata ( ) . put ( "preserved.register.source" , "SPRING_BOOT" ) ;

register. setInstanceId ( "" ) ;

String serviceName = register. getServiceName ( ) ;

if ( StringUtils . isEmpty ( serviceName) ) {

if ( StringUtils . isEmpty ( this . applicationName) ) {

throw new AutoRegisterException ( "serviceName notNull" ) ;

}

serviceName = this . applicationName;

}

try {

this . namingService. registerInstance ( serviceName, register. getGroupName ( ) , register) ;

logger. info ( "Finished auto register service : {}, ip : {}, port : {}" , new Object [ ] { serviceName, register. getIp ( ) , register. getPort ( ) } ) ;

} catch ( NacosException var5) {

throw new AutoRegisterException ( var5) ;

}

}

}

}

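Now look at registerInstance() in NacosNamingService.

Instances are ephemeral by default. For an ephemeral instance the method builds a BeatInfo object with the service's IP, port, and other details, sets the heartbeat period (5 seconds by default), and calls addBeatInfo() on BeatReactor to start heartbeats to the Nacos server. It then calls registerService() on NamingProxy, which registers the instance through the Nacos Open API.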

public class NacosNamingService implements NamingService {

@Override

public void registerInstance ( String serviceName, String groupName, Instance instance) throws NacosException {

if ( instance. isEphemeral ( ) ) {

BeatInfo beatInfo = new BeatInfo ( ) ;

beatInfo. setServiceName ( NamingUtils . getGroupedName ( serviceName, groupName) ) ;

beatInfo. setIp ( instance. getIp ( ) ) ;

beatInfo. setPort ( instance. getPort ( ) ) ;

beatInfo. setCluster ( instance. getClusterName ( ) ) ;

beatInfo. setWeight ( instance. getWeight ( ) ) ;

beatInfo. setMetadata ( instance. getMetadata ( ) ) ;

beatInfo. setScheduled ( false ) ;

beatInfo. setPeriod ( instance. getInstanceHeartBeatInterval ( ) ) ;

beatReactor. addBeatInfo ( NamingUtils . getGroupedName ( serviceName, groupName) , beatInfo) ;

}

serverProxy. registerService ( NamingUtils . getGroupedName ( serviceName, groupName) , groupName, instance) ;

}

}

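As noted earlier, the init() method of NacosNamingService creates several member objects when the class is initialized. They are used later for heartbeats and registration. One of them is BeatReactor, which maintains heartbeats with Nacos and wraps a thread pool internally.

private void init(Properties properties) {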

namespace = InitUtils . initNamespaceForNaming ( properties) ;

initServerAddr ( properties) ;

InitUtils . initWebRootContext ( ) ;

initCacheDir ( ) ;

initLogName ( properties) ;

eventDispatcher = new EventDispatcher ( ) ;

serverProxy = new NamingProxy ( namespace, endpoint, serverList, properties) ;

beatReactor = new BeatReactor ( serverProxy, initClientBeatThreadCount ( properties) ) ;

hostReactor = new HostReactor ( eventDispatcher, serverProxy, cacheDir, isLoadCacheAtStart ( properties) ,

initPollingThreadCount ( properties) ) ;

}

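registerInstance() first checks the instance type. Instances are ephemeral by default, so it builds a BeatInfo with the service's IP, port, and other details, sets the heartbeat period (5 seconds by default), and calls addBeatInfo() on BeatReactor. The key step in addBeatInfo() is executorService.schedule(), which schedules a BeatTask on the scheduled thread pool to send heartbeats to Nacos.

public void addBeatInfo(String serviceName, BeatInfo beatInfo) {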

LogUtils . NAMING_LOGGER . info ( "[BEAT] adding beat: {} to beat map." , beatInfo) ;

String key = this . buildKey ( serviceName, beatInfo. getIp ( ) , beatInfo. getPort ( ) ) ;

BeatInfo existBeat = null ;

if ( ( existBeat = ( BeatInfo ) this . dom2Beat. remove ( key) ) != null ) {

existBeat. setStopped ( true ) ;

}

this . dom2Beat. put ( key, beatInfo) ;

this . executorService. schedule ( new BeatReactor. BeatTask ( beatInfo) , beatInfo. getPeriod ( ) , TimeUnit . MILLISECONDS ) ;

MetricsMonitor . getDom2BeatSizeMonitor ( ) . set ( ( double ) this . dom2Beat. size ( ) ) ;

}
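The scheduling pattern in addBeatInfo() is a one-shot schedule() call whose task schedules its own successor, rather than a fixed-rate timer. A minimal sketch of that pattern follows. It is not the Nacos class: BeatSchedulerSketch, sawBeats(), and the Runnable standing in for the heartbeat request are all hypothetical.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.Executors;
import java.util.concurrent.RejectedExecutionException;
import java.util.concurrent.ScheduledExecutorService;
import java.util.concurrent.TimeUnit;

// Hypothetical sketch of a self-rescheduling heartbeat task.
class BeatSchedulerSketch {
    private final ScheduledExecutorService executor =
            Executors.newSingleThreadScheduledExecutor(r -> {
                Thread t = new Thread(r, "beat-sender-sketch");
                t.setDaemon(true);
                return t;
            });
    private final long periodMillis;
    private final Runnable sendBeat;   // stands in for the HTTP heartbeat call
    private volatile boolean stopped;

    BeatSchedulerSketch(long periodMillis, Runnable sendBeat) {
        this.periodMillis = periodMillis;
        this.sendBeat = sendBeat;
    }

    void start() {
        scheduleNext();
    }

    void stop() {
        stopped = true;
        executor.shutdownNow();
    }

    private void scheduleNext() {
        try {
            executor.schedule(this::beatOnce, periodMillis, TimeUnit.MILLISECONDS);
        } catch (RejectedExecutionException ignored) {
            // The executor was shut down between the stopped check and this call.
        }
    }

    private void beatOnce() {
        if (stopped) {
            return;                    // like BeatInfo.isStopped(): end the chain
        }
        try {
            sendBeat.run();
        } catch (RuntimeException e) {
            // A failed beat must not end the chain; the next one is still scheduled.
        }
        scheduleNext();
    }

    // Demo helper: true if `expected` beats are observed before the timeout.
    static boolean sawBeats(int expected, long periodMillis, long timeoutMillis) {
        CountDownLatch latch = new CountDownLatch(expected);
        BeatSchedulerSketch scheduler = new BeatSchedulerSketch(periodMillis, latch::countDown);
        scheduler.start();
        try {
            return latch.await(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        } finally {
            scheduler.stop();
        }
    }
}
```

Rescheduling from inside the task lets each run choose the delay for the next one, which is what allows the server to adjust the client's heartbeat interval through its response.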

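Look at the run() method of the BeatTask class.

It calls sendBeat() on NamingProxy, which requests the Nacos API at "/nacos/v1/ns/instance/beat" to report the heartbeat.

If the response carries the RESOURCE_NOT_FOUND code, it calls registerService() to register the instance again.

After the report, whether it succeeded or threw a NacosException, it calls schedule() on executorService again to run the next BeatTask, so heartbeats keep being reported on a schedule.

Heartbeats come in two forms: the first report after startup is a heavyweight beat that carries the BeatInfo object, and later reports are ordinary lightweight beats that do not carry it.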

@Override

public void run ( ) {

if ( beatInfo. isStopped ( ) ) {

return ;

}

long nextTime = beatInfo. getPeriod ( ) ;

try {

JSONObject result = serverProxy. sendBeat ( beatInfo, BeatReactor . this . lightBeatEnabled) ;

long interval = result. getIntValue ( "clientBeatInterval" ) ;

boolean lightBeatEnabled = false ;

if ( result. containsKey ( CommonParams . LIGHT_BEAT_ENABLED ) ) {

lightBeatEnabled = result. getBooleanValue ( CommonParams . LIGHT_BEAT_ENABLED ) ;

}

BeatReactor . this . lightBeatEnabled = lightBeatEnabled;

if ( interval > 0 ) {

nextTime = interval;

}

int code = NamingResponseCode . OK ;

if ( result. containsKey ( CommonParams . CODE ) ) {

code = result. getIntValue ( CommonParams . CODE ) ;

}

if ( code == NamingResponseCode . RESOURCE_NOT_FOUND ) {

Instance instance = new Instance ( ) ;

instance. setPort ( beatInfo. getPort ( ) ) ;

instance. setIp ( beatInfo. getIp ( ) ) ;

instance. setWeight ( beatInfo. getWeight ( ) ) ;

instance. setMetadata ( beatInfo. getMetadata ( ) ) ;

instance. setClusterName ( beatInfo. getCluster ( ) ) ;

instance. setServiceName ( beatInfo. getServiceName ( ) ) ;

instance. setInstanceId ( instance. getInstanceId ( ) ) ;

instance. setEphemeral ( true ) ;

try {

serverProxy. registerService ( beatInfo. getServiceName ( ) ,

NamingUtils . getGroupName ( beatInfo. getServiceName ( ) ) , instance) ;

} catch ( Exception ignore) {

}

}

} catch ( NacosException ne) {

NAMING_LOGGER . error ( "[CLIENT-BEAT] failed to send beat: {}, code: {}, msg: {}" ,

JSON . toJSONString ( beatInfo) , ne. getErrCode ( ) , ne. getErrMsg ( ) ) ;

}

executorService. schedule ( new BeatTask ( beatInfo) , nextTime, TimeUnit . MILLISECONDS ) ;

}
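The decision logic of run() can be isolated as a pure function over the server's response. The sketch below assumes the response has already been parsed into a Map; BeatResultSketch and Decision are hypothetical names, and the numeric codes and JSON keys are assumptions modeled on the constants referenced in the source above (NamingResponseCode.OK, NamingResponseCode.RESOURCE_NOT_FOUND, CommonParams.CODE, CommonParams.LIGHT_BEAT_ENABLED).

```java
import java.util.Map;

// Hypothetical sketch: turn a parsed heartbeat response into the three
// decisions BeatTask makes (next delay, re-register, light beat).
class BeatResultSketch {
    static final int OK = 10200;                  // assumed value
    static final int RESOURCE_NOT_FOUND = 20404;  // assumed value

    static final class Decision {
        final long nextDelayMillis;
        final boolean reRegister;
        final boolean lightBeat;

        Decision(long nextDelayMillis, boolean reRegister, boolean lightBeat) {
            this.nextDelayMillis = nextDelayMillis;
            this.reRegister = reRegister;
            this.lightBeat = lightBeat;
        }
    }

    static Decision decide(Map<String, Object> response, long defaultPeriodMillis) {
        // The server may override the client's heartbeat interval.
        long next = defaultPeriodMillis;
        Object interval = response.get("clientBeatInterval");
        if (interval instanceof Number && ((Number) interval).longValue() > 0) {
            next = ((Number) interval).longValue();
        }
        // A missing flag means heavyweight beats stay in use.
        boolean light = Boolean.TRUE.equals(response.get("lightBeatEnabled"));
        // A missing code is treated as success.
        int code = OK;
        Object rawCode = response.get("code");
        if (rawCode instanceof Number) {
            code = ((Number) rawCode).intValue();
        }
        return new Decision(next, code == RESOURCE_NOT_FOUND, light);
    }
}
```

Keeping the decision separate from the network call makes the re-registration path easy to exercise without a running server.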

The registration itself, done by registerService() on NamingProxy from within registerInstance(), is simple: it collects the instance information, such as IP and port, into a map and calls the Nacos API through NamingProxy to register the service.

public void registerService(String serviceName, String groupName, Instance instance) throws NacosException {

LogUtils . NAMING_LOGGER . info ( "[REGISTER-SERVICE] {} registering service {} with instance: {}" , new Object [ ] { this . namespaceId, serviceName, instance} ) ;

Map<String, String> params = new HashMap<>(9);

params. put ( "namespaceId" , this . namespaceId) ;

params. put ( "serviceName" , serviceName) ;

params. put ( "groupName" , groupName) ;

params. put ( "clusterName" , instance. getClusterName ( ) ) ;

params. put ( "ip" , instance. getIp ( ) ) ;

params. put ( "port" , String . valueOf ( instance. getPort ( ) ) ) ;

params. put ( "weight" , String . valueOf ( instance. getWeight ( ) ) ) ;

params. put ( "enable" , String . valueOf ( instance. isEnabled ( ) ) ) ;

params. put ( "healthy" , String . valueOf ( instance. isHealthy ( ) ) ) ;

params. put ( "ephemeral" , String . valueOf ( instance. isEphemeral ( ) ) ) ;

params. put ( "metadata" , JSON . toJSONString ( instance. getMetadata ( ) ) ) ;

this . reqAPI ( UtilAndComs . NACOS_URL_INSTANCE , params, "POST" ) ;

}
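What registerService() hands to reqAPI() is a flat map of string parameters. The sketch below shows how such a map could be turned into a form-encoded body using only the JDK. RegisterParamsSketch and its methods are hypothetical, only a subset of the parameters is included, and the actual encoding performed inside reqAPI() is not shown in the source.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.StringJoiner;

// Hypothetical sketch: build registration parameters and form-encode them.
class RegisterParamsSketch {
    static Map<String, String> buildParams(String namespaceId, String serviceName,
                                           String ip, int port, boolean ephemeral) {
        // LinkedHashMap keeps insertion order so the encoded output is predictable.
        Map<String, String> params = new LinkedHashMap<>();
        params.put("namespaceId", namespaceId);
        params.put("serviceName", serviceName);
        params.put("ip", ip);
        params.put("port", String.valueOf(port));
        params.put("ephemeral", String.valueOf(ephemeral));
        return params;
    }

    static String encode(Map<String, String> params) {
        StringJoiner joiner = new StringJoiner("&");
        for (Map.Entry<String, String> entry : params.entrySet()) {
            joiner.add(enc(entry.getKey()) + "=" + enc(entry.getValue()));
        }
        return joiner.toString();
    }

    private static String enc(String value) {
        try {
            return URLEncoder.encode(value, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            throw new IllegalStateException("UTF-8 is always available", e);
        }
    }
}
```

Note that the grouped service name contains "@@", which has to be percent-encoded when it travels as a form parameter.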

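The request first reaches the controller layer of the Nacos registry. Look at the register() method of InstanceController, which handles the registration request.

It first parses the data in the registration request, then calls parseInstance() to build the Instance object that represents the service instance, and finally calls registerInstance() on ServiceManager to save the instance in the registry.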

@CanDistro

@PostMapping

@Secured ( parser = NamingResourceParser . class , action = ActionTypes . WRITE )

public String register ( HttpServletRequest request) throws Exception {

final String namespaceId = WebUtils . optional ( request, CommonParams . NAMESPACE_ID , Constants . DEFAULT_NAMESPACE_ID ) ;

final String serviceName = WebUtils . required ( request, CommonParams . SERVICE_NAME ) ;

checkServiceNameFormat ( serviceName) ;

final Instance instance = parseInstance ( request) ;

serviceManager. registerInstance ( namespaceId, serviceName, instance) ;

return "ok" ;

}

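The request now reaches the manager layer. The key code is in registerInstance() of ServiceManager.

One point to note: the method reads the ephemeral flag, and true means the instance being registered is ephemeral. It first calls createEmptyService() to create an empty Service and save it in the registry, then calls addInstance() to add the instance being registered to that Service.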

public void registerInstance ( String namespaceId, String serviceName, Instance instance) throws NacosException {

createEmptyService ( namespaceId, serviceName, instance. isEphemeral ( ) ) ;

Service service = getService ( namespaceId, serviceName) ;

if ( service == null ) {

throw new NacosException ( NacosException . INVALID_PARAM ,

"service not found, namespace: " + namespaceId + ", service: " + serviceName) ;

}

addInstance ( namespaceId, serviceName, instance. isEphemeral ( ) , instance) ;

}

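createEmptyService() creates the empty Service and saves it in the registry. Internally the key call is createServiceIfAbsent().

The method uses the namespaceId and service name from the request to check whether the registry already holds a Service for this service, and if so does nothing more. If none exists, it creates a Service object and calls putServiceAndInit() to add it to the registry. If local is false, it also calls addOrReplaceService() to persist the Service to the other Nacos nodes.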

public void createServiceIfAbsent ( String namespaceId, String serviceName, boolean local, Cluster cluster)

throws NacosException {

Service service = getService ( namespaceId, serviceName) ;

if ( service == null ) {

Loggers . SRV_LOG . info ( "creating empty service {}:{}" , namespaceId, serviceName) ;

service = new Service ( ) ;

service. setName ( serviceName) ;

service. setNamespaceId ( namespaceId) ;

service. setGroupName ( NamingUtils . getGroupName ( serviceName) ) ;

service. setLastModifiedMillis ( System . currentTimeMillis ( ) ) ;

service. recalculateChecksum ( ) ;

if ( cluster != null ) {

cluster. setService ( service) ;

service. getClusterMap ( ) . put ( cluster. getName ( ) , cluster) ;

}

service. validate ( ) ;

putServiceAndInit ( service) ;

if ( ! local) {

addOrReplaceService ( service) ;

}

}

}

public Service getService ( String namespaceId, String serviceName) {

if ( serviceMap. get ( namespaceId) == null ) {

return null ;

}

return chooseServiceMap ( namespaceId) . get ( serviceName) ;

}

public Map < String , Service > chooseServiceMap ( String namespaceId) {

return serviceMap. get ( namespaceId) ;

}

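While creating the Service and adding it to the registry, createServiceIfAbsent() also calls recalculateChecksum() to recompute the checksum.

The method collects all instances under the service, sorts them, and builds a string from each instance's IP, port, weight, health status, and cluster name. It then runs MD5Utils.md5Hex() on that string to get a hexadecimal checksum and stores it in the Service's checksum field as a fingerprint of the service's current state. When instances are added, removed, or modified, the method is called again to compute a new checksum, which is compared with the old one. If the two differ, the service has changed and the change needs to be synchronized.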

public synchronized void recalculateChecksum ( ) {

List<Instance> ips = allIPs();

StringBuilder ipsString = new StringBuilder ( ) ;

ipsString. append ( getServiceString ( ) ) ;

if ( Loggers . SRV_LOG . isDebugEnabled ( ) ) {

Loggers . SRV_LOG . debug ( "service to json: " + getServiceString ( ) ) ;

}

if ( CollectionUtils . isNotEmpty ( ips) ) {

Collections . sort ( ips) ;

}

for ( Instance ip : ips) {

String string = ip. getIp ( ) + ":" + ip. getPort ( ) + "_" + ip. getWeight ( ) + "_" + ip. isHealthy ( ) + "_" + ip

. getClusterName ( ) ;

ipsString. append ( string) ;

ipsString. append ( "," ) ;

}

checksum = MD5Utils . md5Hex ( ipsString. toString ( ) , Constants . ENCODE ) ;

}

public List < Instance > allIPs ( ) {

List<Instance> result = new ArrayList<>();

for (Map.Entry<String, Cluster> entry : clusterMap.entrySet()) {

result. addAll ( entry. getValue ( ) . allIPs ( ) ) ;

}

return result;

}

public List < Instance > allIPs ( ) {

List<Instance> allInstances = new ArrayList<>();

allInstances. addAll ( persistentInstances) ;

allInstances. addAll ( ephemeralInstances) ;

return allInstances;

}
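The checksum idea (sort, concatenate, hash) can be reproduced with the JDK's MessageDigest. This is a minimal sketch, not the Nacos method: ChecksumSketch is a hypothetical class, and instances are represented as pre-built strings in the ip:port_weight_healthy_cluster form used by the source above.

```java
import java.nio.charset.StandardCharsets;
import java.security.MessageDigest;
import java.security.NoSuchAlgorithmException;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical sketch of an order-independent MD5 fingerprint of a service.
class ChecksumSketch {
    static String checksum(String serviceString, List<String> instanceStrings) {
        // Sort a copy so the result does not depend on registration order.
        List<String> sorted = new ArrayList<>(instanceStrings);
        Collections.sort(sorted);

        StringBuilder text = new StringBuilder(serviceString);
        for (String instance : sorted) {
            text.append(instance).append(',');
        }
        try {
            byte[] digest = MessageDigest.getInstance("MD5")
                    .digest(text.toString().getBytes(StandardCharsets.UTF_8));
            StringBuilder hex = new StringBuilder();
            for (byte b : digest) {
                hex.append(String.format("%02x", b));
            }
            return hex.toString();
        } catch (NoSuchAlgorithmException e) {
            throw new IllegalStateException("MD5 is required by the Java platform", e);
        }
    }
}
```

Two nodes holding the same instances in a different order compute the same checksum, while a single flipped health flag produces a different one, which is enough to detect that a service has changed without comparing full instance lists.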

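After the Service is created, putServiceAndInit() in ServiceManager writes it into the registry. The method does three things.

It calls putService() to add the Service of the service being registered to the registry serviceMap. It calls init() on the Service to start the Service's internal health check task (this is the important one). It calls consistencyService.listen() to add listeners for both the persistent and the ephemeral instances of the service in the Nacos cluster.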

private void putServiceAndInit ( Service service) throws NacosException {

putService(service);

service. init ( ) ;

consistencyService. listen ( KeyBuilder . buildInstanceListKey ( service. getNamespaceId ( ) , service. getName ( ) , true ) , service) ;

consistencyService.listen(KeyBuilder.buildInstanceListKey(service.getNamespaceId(), service.getName(), false), service);

Loggers . SRV_LOG . info ( "[NEW-SERVICE]" , service. toJson ( ) ) ;

}

public void putService ( Service service) {

if ( ! serviceMap. containsKey ( service. getNamespaceId ( ) ) ) {

synchronized ( putServiceLock) {

if ( ! serviceMap. containsKey ( service. getNamespaceId ( ) ) ) {

serviceMap. put ( service. getNamespaceId ( ) , new ConcurrentHashMap < > ( 16 ) ) ;

}

}

}

serviceMap. get ( service. getNamespaceId ( ) ) . put ( service. getName ( ) , service) ;

}
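The two-level registry map can be sketched in a few lines. This is a simplified illustration, not the ServiceManager code: RegistrySketch is hypothetical, the Service value is reduced to a plain String, and computeIfAbsent replaces the explicit double-checked lock used by putService() above.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical sketch of Map(namespace, Map(group::serviceName, Service)).
class RegistrySketch {
    private final Map<String, Map<String, String>> serviceMap = new ConcurrentHashMap<>();

    void putService(String namespaceId, String groupedName, String service) {
        // Atomically creates the inner map the first time a namespace is seen.
        serviceMap.computeIfAbsent(namespaceId, ns -> new ConcurrentHashMap<>(16))
                  .put(groupedName, service);
    }

    String getService(String namespaceId, String groupedName) {
        Map<String, String> inner = serviceMap.get(namespaceId);
        return inner == null ? null : inner.get(groupedName);
    }
}
```

The same service name registered in two namespaces ends up in two separate inner maps, which is how namespaces isolate services from each other.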

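The way Nacos processes a registration can be understood in terms of MVC-style layers.

The request first reaches register() of InstanceController in the controller layer, which parses the HTTP request. The controller then calls registerInstance() on ServiceManager, which looks the service up in the registry's service list. If it does not exist, this is the first registration, so a Service is created and added to serviceMap. The Instance is then built and addInstance() adds it to the Service, which completes the registration and sets up the scheduled health check tasks. Next we look at addInstance(), focusing on how Nacos handles AP and CP. This brings us to the service layer of Nacos.

Nacos chooses AP or CP based on whether the instance is ephemeral or persistent, which is set through spring.cloud.nacos.discovery.ephemeral (default true, meaning ephemeral). addInstance() adds instances to the given service. Internally it calls put(key, instances) on DelegateConsistencyServiceImpl to place the instance list in the consistency service, which keeps instance information consistent across the cluster.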

public void addInstance ( String namespaceId, String serviceName, boolean ephemeral, Instance . . . ips) throws NacosException {

String key = KeyBuilder . buildInstanceListKey ( namespaceId, serviceName, ephemeral) ;

Service service = getService ( namespaceId, serviceName) ;

synchronized ( service) {

List<Instance> instanceList = addIpAddresses(service, ephemeral, ips);

Instances instances = new Instances ( ) ;

instances. setInstanceList ( instanceList) ;

consistencyService. put ( key, instances) ;

}

}

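DelegateConsistencyServiceImpl holds an ephemeralConsistencyService, which represents AP, alongside a persistentConsistencyService, and picks one of them based on the key.

@DependsOn("ProtocolManager")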

@Service ( "consistencyDelegate" )

public class DelegateConsistencyServiceImpl implements ConsistencyService {

private final PersistentConsistencyService persistentConsistencyService;

private final EphemeralConsistencyService ephemeralConsistencyService;

public DelegateConsistencyServiceImpl (

PersistentConsistencyService persistentConsistencyService,

EphemeralConsistencyService ephemeralConsistencyService) {

this . persistentConsistencyService = persistentConsistencyService;

this . ephemeralConsistencyService = ephemeralConsistencyService;

}

@Override

public void put ( String key, Record value) throws NacosException {

mapConsistencyService ( key) . put ( key, value) ;

}

private ConsistencyService mapConsistencyService ( String key) {

return KeyBuilder . matchEphemeralKey ( key) ? ephemeralConsistencyService : persistentConsistencyService;

}

}
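The delegation above comes down to inspecting the key. A minimal sketch of that routing follows. ConsistencyRoutingSketch and its Mode enum are hypothetical, and the key prefix, the "ephemeral." marker, and the "##" separator are assumptions about the key layout made only for illustration.

```java
// Hypothetical sketch of routing by key between the AP and CP paths.
class ConsistencyRoutingSketch {
    enum Mode { AP_EPHEMERAL, CP_PERSISTENT }

    // Assumed key layout: <prefix>[ephemeral.]<namespaceId>##<serviceName>
    static final String INSTANCE_LIST_PREFIX = "com.alibaba.nacos.naming.iplist.";
    static final String EPHEMERAL_MARK = "ephemeral.";

    static String buildInstanceListKey(String namespaceId, String serviceName, boolean ephemeral) {
        return INSTANCE_LIST_PREFIX + (ephemeral ? EPHEMERAL_MARK : "")
                + namespaceId + "##" + serviceName;
    }

    static boolean matchEphemeralKey(String key) {
        return key.startsWith(INSTANCE_LIST_PREFIX + EPHEMERAL_MARK);
    }

    // Mirrors mapConsistencyService(): the key alone decides which path handles the write.
    static Mode route(String key) {
        return matchEphemeralKey(key) ? Mode.AP_EPHEMERAL : Mode.CP_PERSISTENT;
    }
}
```

Because the instance type is baked into the key when it is built, callers such as addInstance() never need to know which consistency implementation ends up handling the data.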

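The method to focus on is put() in the EphemeralConsistencyService implementation, which synchronizes registration data. Internally it does two things.

It calls onPut(key, value) to register the instances and hands a task with action ApplyAction.CHANGE to the notifier. It calls taskDispatcher.addTask(key) to synchronize the instances to the other cluster nodes.

In onPut(), KeyBuilder.matchEphemeralInstanceListKey(key) first checks whether the key matches the ephemeral instance list pattern. If it does, the instance data is wrapped in a Datum<Instances> object and stored in the data store with dataStore.put(key, datum), and the change is processed asynchronously by the Notifier thread. Processing asynchronously avoids blocking the calling thread and improves throughput.

After the data is processed, Nacos publishes a ServiceChangeEvent, which triggers a UDP push that notifies subscribed clients of the instance change. Compared with ZooKeeper's long-lived TCP connections this saves resources, which matters most when many nodes are updated at once. UDP push does not guarantee delivery, so the Nacos client also polls for service data on a timer (every 1 second) as a fallback, which gives eventual consistency. This combination of server-side UDP push and client-side polling balances timeliness and reliability in many practical scenarios.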

@DependsOn ( "ProtocolManager" )

@org.springframework.stereotype.Service ( "distroConsistencyService" )

public class DistroConsistencyServiceImpl implements EphemeralConsistencyService {

private final DistroMapper distroMapper;

private final DataStore dataStore;

private final TaskDispatcher taskDispatcher;

private final ServerMemberManager memberManager;

private final GlobalConfig globalConfig;

private volatile Notifier notifier = new Notifier ( ) ;

private Map<String, ConcurrentLinkedQueue<RecordListener>> listeners = new ConcurrentHashMap<>();

private Map<String, String> syncChecksumTasks = new ConcurrentHashMap<>(16);

public DistroConsistencyServiceImpl ( DistroMapper distroMapper, DataStore dataStore,

TaskDispatcher taskDispatcher, Serializer serializer,

ServerMemberManager memberManager, SwitchDomain switchDomain,

GlobalConfig globalConfig) {

this . distroMapper = distroMapper;

this . dataStore = dataStore;

this . taskDispatcher = taskDispatcher;

this . serializer = serializer;

this . memberManager = memberManager;

this . switchDomain = switchDomain;

this . globalConfig = globalConfig;

}

@PostConstruct

public void init ( ) {

GlobalExecutor . submit ( loadDataTask) ;

GlobalExecutor . submitDistroNotifyTask ( notifier) ;

}

@Override

public void put ( String key, Record value) throws NacosException {

onPut ( key, value) ;

taskDispatcher. addTask ( key) ;

}

}
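The store-then-notify flow of put(), and the blocking-queue loop that both this Notifier and the client-side EventDispatcher use, can be sketched with a queue and one worker thread. NotifierSketch is hypothetical: values are plain strings, the listener is a Consumer, and replication to other nodes is left out.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.CopyOnWriteArrayList;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;
import java.util.function.Consumer;

// Hypothetical sketch: synchronous store, asynchronous notification.
class NotifierSketch {
    private final Map<String, String> dataStore = new ConcurrentHashMap<>();
    private final BlockingQueue<String> tasks = new LinkedBlockingQueue<>();
    private final Consumer<String> listener;

    NotifierSketch(Consumer<String> listener) {
        this.listener = listener;
    }

    void start() {
        Thread worker = new Thread(() -> {
            try {
                while (true) {
                    String key = tasks.take();   // blocks until a change is queued
                    listener.accept(key + "=" + dataStore.get(key));
                }
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            }
        }, "notifier-sketch");
        worker.setDaemon(true);
        worker.start();
    }

    void put(String key, String value) {
        dataStore.put(key, value);   // the caller sees the data stored immediately
        tasks.offer(key);            // listeners are told later, on the worker thread
    }

    // Demo helper: returns the notifications observed for two writes.
    static List<String> demo() {
        CountDownLatch latch = new CountDownLatch(2);
        List<String> seen = new CopyOnWriteArrayList<>();
        NotifierSketch sketch = new NotifierSketch(change -> {
            seen.add(change);
            latch.countDown();
        });
        sketch.start();
        sketch.put("svc-a", "10.0.0.1:8080");
        sketch.put("svc-b", "10.0.0.2:8080");
        try {
            latch.await(5, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return seen;
    }
}
```

A single worker draining a FIFO queue means notifications are delivered in the order the changes were queued, and a slow listener delays later notifications rather than the writers.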

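Likewise, look at put() in RaftConsistencyServiceImpl, which synchronizes service data for persistent instances.

It wraps RaftCore, the class that implements the consensus algorithm, and calls signalPublish() on it. If the current node is not the leader, signalPublish() forwards the request to the leader. Otherwise it obtains majorityCount, the number of Nacos nodes that must acknowledge the write, and uses a CountDownLatch to track acknowledgements while HttpClient.asyncHttpPostLarge() sends the data to the other nodes. If fewer than majorityCount nodes respond successfully within the timeout, the publish is considered failed and an exception is thrown.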

@DependsOn ( "ProtocolManager" )

@Service

public class RaftConsistencyServiceImpl implements PersistentConsistencyService {

@Autowired

private RaftCore raftCore;

@Autowired

private RaftPeerSet peers;

@Autowired

private SwitchDomain switchDomain;

@Override

public void put ( String key, Record value) throws NacosException {

try {

raftCore. signalPublish ( key, value) ;

} catch ( Exception e) {

Loggers . RAFT . error ( "Raft put failed." , e) ;

throw new NacosException ( NacosException . SERVER_ERROR , "Raft put failed, key:" + key + ", value:" + value, e) ;

}

}

}

@DependsOn ( "ProtocolManager" )

@Component

public class RaftCore {

// ... omitted

public void signalPublish ( String key, Record value) throws Exception {

if ( ! isLeader ( ) ) {

ObjectNode params = JacksonUtils . createEmptyJsonNode ( ) ;

params. put ( "key" , key) ;

params. replace ( "value" , JacksonUtils . transferToJsonNode ( value) ) ;

Map<String, String> parameters = new HashMap<>(1);

parameters. put ( "key" , key) ;

final RaftPeer leader = getLeader ( ) ;

raftProxy. proxyPostLarge ( leader. ip, API_PUB , params. toString ( ) , parameters) ;

return ;

}

try {

OPERATE_LOCK . lock ( ) ;

long start = System . currentTimeMillis ( ) ;

final Datum datum = new Datum ( ) ;

datum. key = key;

datum. value = value;

if ( getDatum ( key) == null ) {

datum. timestamp. set ( 1L ) ;

} else {

datum. timestamp. set ( getDatum ( key) . timestamp. incrementAndGet ( ) ) ;

}

ObjectNode json = JacksonUtils . createEmptyJsonNode ( ) ;

json. replace ( "datum" , JacksonUtils . transferToJsonNode ( datum) ) ;

json. replace ( "source" , JacksonUtils . transferToJsonNode ( peers. local ( ) ) ) ;

onPublish ( datum, peers. local ( ) ) ;

final String content = json. toString ( ) ;

final CountDownLatch latch = new CountDownLatch ( peers. majorityCount ( ) ) ;

for ( final String server : peers. allServersIncludeMyself ( ) ) {

if ( isLeader ( server) ) {

latch. countDown ( ) ;

continue ;

}

final String url = buildURL ( server, API_ON_PUB ) ;

HttpClient . asyncHttpPostLarge ( url, Arrays . asList ( "key=" + key) , content, new AsyncCompletionHandler < Integer > ( ) {

@Override

public Integer onCompleted ( Response response) throws Exception {

if ( response. getStatusCode ( ) != HttpURLConnection . HTTP_OK ) {

Loggers . RAFT . warn ( "[RAFT] failed to publish data to peer, datumId={}, peer={}, http code={}" ,

datum. key, server, response. getStatusCode ( ) ) ;

return 1 ;

}

latch. countDown ( ) ;

return 0 ;

}

@Override

public STATE onContentWriteCompleted ( ) {

return STATE . CONTINUE ;

}

} ) ;

}

if ( ! latch. await ( UtilsAndCommons . RAFT_PUBLISH_TIMEOUT , TimeUnit . MILLISECONDS ) ) {

Loggers . RAFT . error ( "data publish failed, caused failed to notify majority, key={}" , key) ;

throw new IllegalStateException ( "data publish failed, caused failed to notify majority, key=" + key) ;

}

long end = System . currentTimeMillis ( ) ;

} finally {

OPERATE_LOCK . unlock ( ) ;

}

}

}
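The majority wait in signalPublish() can be reduced to a latch sized to the majority and counted down once per acknowledgement. The sketch below is an illustration under assumptions: MajorityPublishSketch is hypothetical, the HTTP call to each peer is replaced by a Predicate, and majority is taken to be more than half of the cluster.

```java
import java.util.List;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.function.Predicate;

// Hypothetical sketch of waiting for a majority of acknowledgements.
class MajorityPublishSketch {
    static int majorityCount(int clusterSize) {
        return clusterSize / 2 + 1;
    }

    // followers: every node except the leader. sendToPeer returns true on a successful ack.
    static boolean publish(List<String> followers, Predicate<String> sendToPeer, long timeoutMillis) {
        int clusterSize = followers.size() + 1;
        CountDownLatch latch = new CountDownLatch(majorityCount(clusterSize));
        latch.countDown();   // the leader counts as the first acknowledgement

        ExecutorService pool = Executors.newFixedThreadPool(Math.max(1, followers.size()));
        try {
            for (String peer : followers) {
                pool.execute(() -> {
                    if (sendToPeer.test(peer)) {
                        latch.countDown();   // only successful responses count
                    }
                });
            }
            // True only if enough acknowledgements arrive before the timeout.
            return latch.await(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        } finally {
            pool.shutdownNow();
        }
    }
}
```

In a three-node cluster the leader plus one follower is already a majority, so one unreachable follower does not block the write, while two unreachable followers make it time out.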

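When a client stops sending heartbeats, the Instance stored on the server expires, and the server has to clean it up. As described above, after the registry receives a registration request it parses the request data, builds the Instance and Service objects, adds the instance being registered to the registry serviceMap, and then calls init() on the Service to start the Service's internal health check task.

init() calls HealthCheckReactor.scheduleCheck() to run ClientBeatCheckTask with an initial delay of 5 seconds and a period of 5 seconds. This starts the scheduled task that clears expired instances. It then iterates over every Cluster contained in the Service and calls init() on each, which starts the Cluster's internal health check task. This second task can be seen as the health check for persistent instances.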

public void init ( ) {

HealthCheckReactor . scheduleCheck ( clientBeatCheckTask) ;

for (Map.Entry<String, Cluster> entry : clusterMap.entrySet()) {

entry. getValue ( ) . setService ( this ) ;

entry. getValue ( ) . init ( ) ;

}

}

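First look at ClientBeatCheckTask, which HealthCheckReactor.scheduleCheck() runs every 5 seconds after a 5 second delay. Its run() method does the following.

It gets all ephemeral instances of the service. If an instance's last heartbeat is more than 15 seconds old, healthy is set to false. If the last heartbeat is more than 30 seconds old, deleteIp() removes the instance. Expired persistent instances are skipped.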

@Override

public void run ( ) {

try {

if ( ! getDistroMapper ( ) . responsible ( service. getName ( ) ) ) {

return ;

}

if ( ! getSwitchDomain ( ) . isHealthCheckEnabled ( ) ) {

return ;

}

List<Instance> instances = service.allIPs(true);

for ( Instance instance : instances) {

if ( System . currentTimeMillis ( ) - instance. getLastBeat ( ) > instance. getInstanceHeartBeatTimeOut ( ) ) {

if ( ! instance. isMarked ( ) ) {

if ( instance. isHealthy ( ) ) {

instance. setHealthy ( false ) ;

Loggers . EVT_LOG

. info ( "{POS} {IP-DISABLED} valid: {}:{}@{}@{}, region: {}, msg: client timeout after {}, last beat: {}" ,

instance. getIp ( ) , instance. getPort ( ) , instance. getClusterName ( ) ,

service. getName ( ) , UtilsAndCommons . LOCALHOST_SITE ,

instance. getInstanceHeartBeatTimeOut ( ) , instance. getLastBeat ( ) ) ;

getPushService ( ) . serviceChanged ( service) ;

ApplicationUtils . publishEvent ( new InstanceHeartbeatTimeoutEvent ( this , instance) ) ;

}

}

}

}

if ( ! getGlobalConfig ( ) . isExpireInstance ( ) ) {

return ;

}

for ( Instance instance : instances) {

if ( instance. isMarked ( ) ) {

continue ;

}

if ( System . currentTimeMillis ( ) - instance. getLastBeat ( ) > instance. getIpDeleteTimeout ( ) ) {

Loggers . SRV_LOG . info ( "[AUTO-DELETE-IP] service: {}, ip: {}" , service. getName ( ) ,

JacksonUtils . toJson ( instance) ) ;

deleteIp ( instance) ;

}

}

} catch ( Exception e) {

Loggers . SRV_LOG . warn ( "Exception while processing client beat time out." , e) ;

}

}

Look at getPushService().serviceChanged(service), which publishes the service change event.

public void serviceChanged(Service service) {

if ( futureMap. containsKey ( UtilsAndCommons . assembleFullServiceName ( service. getNamespaceId ( ) , service. getName ( ) ) ) ) {

return ;

}

this . applicationContext. publishEvent ( new ServiceChangeEvent ( this , service) ) ;

}

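PushService implements ApplicationListener<ServiceChangeEvent>, so it also listens for the event it publishes. When the service change event arrives, it iterates over all clients and broadcasts the change to them over UDP.

@Override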

public void onApplicationEvent ( ServiceChangeEvent event) {

Service service = event. getService ( ) ;

String serviceName = service. getName ( ) ;

String namespaceId = service. getNamespaceId ( ) ;

Future future = GlobalExecutor . scheduleUdpSender ( ( ) -> {

try {

Loggers . PUSH . info ( serviceName + " is changed, add it to push queue." ) ;

ConcurrentMap<String, PushClient> clients = clientMap

. get ( UtilsAndCommons . assembleFullServiceName ( namespaceId, serviceName) ) ;

if ( MapUtils . isEmpty ( clients) ) {

return ;

}

Map<String, Object> cache = new HashMap<>(16);

long lastRefTime = System . nanoTime ( ) ;

for ( PushClient client : clients. values ( ) ) {

if ( client. zombie ( ) ) {

Loggers . PUSH . debug ( "client is zombie: " + client. toString ( ) ) ;

clients. remove ( client. toString ( ) ) ;

Loggers . PUSH . debug ( "client is zombie: " + client. toString ( ) ) ;

continue ;

}

Receiver. AckEntry ackEntry;

Loggers . PUSH . debug ( "push serviceName: {} to client: {}" , serviceName, client. toString ( ) ) ;

String key = getPushCacheKey ( serviceName, client. getIp ( ) , client. getAgent ( ) ) ;

byte [ ] compressData = null ;

Map<String, Object> data = null;

if ( switchDomain. getDefaultPushCacheMillis ( ) >= 20000 && cache. containsKey ( key) ) {

org.javatuples.Pair pair = (org.javatuples.Pair) cache.get(key);

compressData = ( byte [ ] ) ( pair. getValue0 ( ) ) ;

data = ( Map < String , Object > ) pair. getValue1 ( ) ;

Loggers . PUSH . debug ( "[PUSH-CACHE] cache hit: {}:{}" , serviceName, client. getAddrStr ( ) ) ;

}

if ( compressData != null ) {

ackEntry = prepareAckEntry ( client, compressData, data, lastRefTime) ;

} else {

ackEntry = prepareAckEntry ( client, prepareHostsData ( client) , lastRefTime) ;

if ( ackEntry != null ) {

cache. put ( key, new org. javatuples. Pair< > ( ackEntry. origin. getData ( ) , ackEntry. data) ) ;

}

}

Loggers . PUSH . info ( "serviceName: {} changed, schedule push for: {}, agent: {}, key: {}" ,

client. getServiceName ( ) , client. getAddrStr ( ) , client. getAgent ( ) ,

( ackEntry == null ? null : ackEntry. key) ) ;

udpPush ( ackEntry) ;

}

} catch ( Exception e) {

Loggers . PUSH . error ( "[NACOS-PUSH] failed to push serviceName: {} to client, error: {}" , serviceName, e) ;

} finally {

futureMap. remove ( UtilsAndCommons . assembleFullServiceName ( namespaceId, serviceName) ) ;

}

} , 1000 , TimeUnit . MILLISECONDS ) ;

futureMap. put ( UtilsAndCommons . assembleFullServiceName ( namespaceId, serviceName) , future) ;

}

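So how are persistent instances checked? Nacos has two health-check mechanisms: client-side reporting and server-side probing. Persistent instances use the second one: the Nacos server periodically sends a request or heartbeat packet to the client to check whether it is alive. The default interval is 10 seconds and can be changed through the naming.healthCheckTaskInterval setting. Depending on the protocol type chosen by the client, Nacos provides three built-in probe protocols: HTTP, TCP and MySQL. The code lives in Cluster's init() method, which is triggered by Service's init() shown above.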

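- HTTP: the registry sends an HTTP request to the client. A status code between 200 and 400 counts as healthy, anything else as unhealthy (the default Spring Cloud setup uses HTTP). Implemented by HttpHealthCheckProcessor.
- TCP: the registry opens a TCP connection to the client. If the connection succeeds the instance is healthy, otherwise it is unhealthy. Implemented by TcpHealthCheckProcessor.
- MySQL: the registry sends a MySQL query to the client. If the query succeeds the instance is healthy, otherwise it is unhealthy. Implemented by MysqlHealthCheckProcessor.
- None: no probing at all; health is judged only from the client's heartbeat reports.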
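To make the TCP probe type concrete, here is a minimal sketch of a connect-based check. It is not the TcpHealthCheckProcessor implementation; the class name TcpProbeSketch, the method names and the 500 ms timeout are assumptions chosen for illustration. The helper probeOpenThenClosed() only exists to demonstrate the probe against a local port.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.InetAddress;
import java.net.InetSocketAddress;
import java.net.ServerSocket;
import java.net.Socket;

// Hypothetical sketch: "healthy" simply means a TCP connection can be opened.
class TcpProbeSketch {

    /** Returns true if a TCP connection to host:port succeeds within the timeout. */
    static boolean tcpHealthy(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            return false; // refused, unreachable or timed out
        }
    }

    /** Demo helper: probes a local listening port, then the same port after it is closed. */
    static boolean[] probeOpenThenClosed() {
        try {
            ServerSocket server = new ServerSocket(0, 1, InetAddress.getByName("127.0.0.1"));
            int port = server.getLocalPort();
            boolean whileOpen = tcpHealthy("127.0.0.1", port, 500);
            server.close();
            boolean afterClose = tcpHealthy("127.0.0.1", port, 500);
            return new boolean[] {whileOpen, afterClose};
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

The probe deliberately treats every I/O failure the same way, which matches the description above: connection succeeded means healthy, anything else means unhealthy.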

Look at the source of Cluster's init(), which initialises the cluster's internal health-check task. As with the ephemeral-instance check, it runs a HealthCheckTask through HealthCheckReactor.scheduleCheck():

public void init() {

if ( inited) {

return ;

}

checkTask = new HealthCheckTask(this);

HealthCheckReactor . scheduleCheck ( checkTask) ;

inited = true;

}

Look at the run() method of HealthCheckTask. The key step is the call to healthCheckProcessor's process() method; in the default Spring Cloud setup the processor is HttpHealthCheckProcessor:

@Override

public void run ( ) {

try {

if ( distroMapper. responsible ( cluster. getService ( ) . getName ( ) ) && switchDomain

. isHealthCheckEnabled ( cluster. getService ( ) . getName ( ) ) ) {

healthCheckProcessor. process ( this ) ;

if ( Loggers . EVT_LOG . isDebugEnabled ( ) ) {

Loggers . EVT_LOG

. debug ( "[HEALTH-CHECK] schedule health check task: {}" , cluster. getService ( ) . getName ( ) ) ;

}

}

} catch ( Throwable e) {

Loggers . SRV_LOG

. error ( "[HEALTH-CHECK] error while process health check for {}:{}" , cluster. getService ( ) . getName ( ) ,

cluster. getName ( ) , e) ;

} finally {

if ( ! cancelled) {

HealthCheckReactor . scheduleCheck ( this ) ;

if ( this . getCheckRtWorst ( ) > 0 && switchDomain. isHealthCheckEnabled ( cluster. getService ( ) . getName ( ) )

&& distroMapper. responsible ( cluster. getService ( ) . getName ( ) ) ) {

long diff = ( ( this . getCheckRtLast ( ) - this . getCheckRtLastLast ( ) ) * 10000 ) / this . getCheckRtLastLast ( ) ;

this . setCheckRtLastLast ( this . getCheckRtLast ( ) ) ;

Cluster cluster = this . getCluster ( ) ;

if ( Loggers . CHECK_RT . isDebugEnabled ( ) ) {

Loggers . CHECK_RT . debug ( "{}:{}@{}->normalized: {}, worst: {}, best: {}, last: {}, diff: {}" ,

cluster. getService ( ) . getName ( ) , cluster. getName ( ) , cluster. getHealthChecker ( ) . getType ( ) ,

this . getCheckRtNormalized ( ) , this . getCheckRtWorst ( ) , this . getCheckRtBest ( ) ,

this . getCheckRtLast ( ) , diff) ;

}

}

}

}

}

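Look at HttpHealthCheckProcessor's process() method. (By default ephemeral instances are health-checked through periodic client reports. To have ephemeral instances checked by HttpHealthCheckProcessor as well, set the health-checker-type property to HTTP in the instance metadata, or set naming.default.health-checker.type to HTTP in nacos-server.properties.)

- It first calls getCluster() and fetches all persistent instances.
- It iterates over the instances. An instance that is marked is skipped, and an instance whose previous check has not finished yet is skipped as well.
- For each remaining instance it creates a Beat health-check task object.
- It calls taskQueue.add() to put the task on a blocking queue, so the check itself runs asynchronously.
- Later the registry takes the task from taskQueue and executes it, contacting the client to decide whether the client is still alive.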

public void process ( HealthCheckTask task) {

List<Instance> ips = task.getCluster().allIPs(false);

if ( CollectionUtils . isEmpty ( ips) ) {

return ;

}

for ( Instance ip : ips) {

if ( ip. isMarked ( ) ) {

if ( SRV_LOG . isDebugEnabled ( ) ) {

SRV_LOG . debug ( "http check, ip is marked as to skip health check, ip:" + ip. getIp ( ) ) ;

}

continue ;

}

if ( ! ip. markChecking ( ) ) {

SRV_LOG . warn ( "http check started before last one finished, service: " + task. getCluster ( ) . getService ( )

. getName ( ) + ":" + task. getCluster ( ) . getName ( ) + ":" + ip. getIp ( ) + ":" + ip. getPort ( ) ) ;

healthCheckCommon. reEvaluateCheckRT ( task. getCheckRtNormalized ( ) * 2 , task, switchDomain. getHttpHealthParams ( ) ) ;

continue ;

}

Beat beat = new Beat ( ip, task) ;

taskQueue. add ( beat) ;

MetricsMonitor . getHttpHealthCheckMonitor ( ) . incrementAndGet ( ) ;

}

}
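The enqueue step above can be sketched without any Nacos classes. This is a simplified illustration, not the HttpHealthCheckProcessor code: Node, TASK_QUEUE and the use of an AtomicBoolean for markChecking() are assumptions that stand in for Instance, taskQueue and the real in-flight flag.

```java
import java.util.List;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.atomic.AtomicBoolean;

// Hypothetical sketch of "skip marked instances, skip in-flight checks, enqueue the rest".
class HealthCheckQueueSketch {

    /** Stand-in for a registered instance. */
    static class Node {
        final String ip;
        final boolean marked; // marked instances are excluded from health checks
        final AtomicBoolean checking = new AtomicBoolean(false);

        Node(String ip, boolean marked) {
            this.ip = ip;
            this.marked = marked;
        }

        /** Returns true only if no check was already in flight for this node. */
        boolean markChecking() {
            return checking.compareAndSet(false, true);
        }
    }

    /** Probe tasks wait here until a worker picks them up. */
    static final BlockingQueue<Node> TASK_QUEUE = new LinkedBlockingQueue<>();

    /** Enqueues one probe per eligible node and returns how many were queued. */
    static int process(List<Node> nodes) {
        int queued = 0;
        for (Node node : nodes) {
            if (node.marked) {
                continue; // excluded from checking
            }
            if (!node.markChecking()) {
                continue; // the previous check has not finished yet
            }
            TASK_QUEUE.add(node);
            queued++;
        }
        return queued;
    }
}
```

A worker would normally reset the checking flag after it finishes a probe; that part is left out here, which is why a second process() call on the same nodes queues nothing.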

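(I could not find the code, or a blog post, showing where the tasks are taken off taskQueue and executed against the client.)

As mentioned earlier, when a service provider starts it calls Nacos's "/nacos/v1/ns/instance/beat" endpoint to send a heartbeat showing that the service is alive. There are two kinds of heartbeat: the heavyweight report sent on first start, which carries a beatInfo object, and the lightweight reports sent afterwards, which do not. Look at the source of the registry's /beat endpoint:

- It parses the request and obtains the unique identity of the service that sent the heartbeat, then uses it to check whether the service is already registered.
- It also checks whether the request carries beatInfo. If it does, this is the heartbeat sent on first registration; otherwise it is an ordinary keep-alive heartbeat sent after registration succeeded.
- If the service is not registered and the request carries no beatInfo, it returns the error NamingResponseCode.RESOURCE_NOT_FOUND.
- If the service is not registered but the request carries the first-registration beatInfo, the registration request was probably lost or delayed by network jitter while the first heartbeat arrived ahead of it. In that case the registry registers the service from the data in beatInfo: it wraps the IP, port and so on in an Instance representing the service instance and stores it in serviceMap, keyed by the service's namespaceId.
- If the service is already registered and the request is an ordinary heartbeat without beatInfo, it calls processClientBeat() to handle the heartbeat.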

@CanDistro

@PutMapping ( "/beat" )

@Secured ( parser = NamingResourceParser . class , action = ActionTypes . WRITE )

public ObjectNode beat ( HttpServletRequest request) throws Exception {

ObjectNode result = JacksonUtils . createEmptyJsonNode ( ) ;

result. put ( SwitchEntry . CLIENT_BEAT_INTERVAL , switchDomain. getClientBeatInterval ( ) ) ;

String beat = WebUtils . optional ( request, "beat" , StringUtils . EMPTY ) ;

RsInfo clientBeat = null ;

if ( StringUtils . isNotBlank ( beat) ) {

clientBeat = JacksonUtils . toObj ( beat, RsInfo . class ) ;

}

String clusterName = WebUtils

. optional ( request, CommonParams . CLUSTER_NAME , UtilsAndCommons . DEFAULT_CLUSTER_NAME ) ;

String ip = WebUtils . optional ( request, "ip" , StringUtils . EMPTY ) ;

int port = Integer . parseInt ( WebUtils . optional ( request, "port" , "0" ) ) ;

if ( clientBeat != null ) {

if ( StringUtils . isNotBlank ( clientBeat. getCluster ( ) ) ) {

clusterName = clientBeat. getCluster ( ) ;

} else {

clientBeat. setCluster ( clusterName) ;

}

ip = clientBeat. getIp ( ) ;

port = clientBeat. getPort ( ) ;

}

String namespaceId = WebUtils . optional ( request, CommonParams . NAMESPACE_ID , Constants . DEFAULT_NAMESPACE_ID ) ;

String serviceName = WebUtils . required ( request, CommonParams . SERVICE_NAME ) ;

checkServiceNameFormat ( serviceName) ;

Loggers . SRV_LOG . debug ( "[CLIENT-BEAT] full arguments: beat: {}, serviceName: {}" , clientBeat, serviceName) ;

Instance instance = serviceManager. getInstance ( namespaceId, serviceName, clusterName, ip, port) ;

if ( instance == null ) {

if ( clientBeat == null ) {

result. put ( CommonParams . CODE , NamingResponseCode . RESOURCE_NOT_FOUND ) ;

return result;

}

Loggers . SRV_LOG . warn ( "[CLIENT-BEAT] The instance has been removed for health mechanism, "

+ "perform data compensation operations, beat: {}, serviceName: {}" , clientBeat, serviceName) ;

instance = new Instance ( ) ;

instance. setPort ( clientBeat. getPort ( ) ) ;

instance. setIp ( clientBeat. getIp ( ) ) ;

instance. setWeight ( clientBeat. getWeight ( ) ) ;

instance. setMetadata ( clientBeat. getMetadata ( ) ) ;

instance. setClusterName ( clusterName) ;

instance. setServiceName ( serviceName) ;

instance. setInstanceId ( instance. getInstanceId ( ) ) ;

instance. setEphemeral ( clientBeat. isEphemeral ( ) ) ;

serviceManager. registerInstance ( namespaceId, serviceName, instance) ;

}

Service service = serviceManager. getService ( namespaceId, serviceName) ;

if ( service == null ) {

throw new NacosException ( NacosException . SERVER_ERROR ,

"service not found: " + serviceName + "@" + namespaceId) ;

}

if ( clientBeat == null ) {

clientBeat = new RsInfo ( ) ;

clientBeat. setIp ( ip) ;

clientBeat. setPort ( port) ;

clientBeat. setCluster ( clusterName) ;

}

service. processClientBeat ( clientBeat) ;

result. put ( CommonParams . CODE , NamingResponseCode . OK ) ;

if ( instance. containsMetadata ( PreservedMetadataKeys . HEART_BEAT_INTERVAL ) ) {

result. put ( SwitchEntry . CLIENT_BEAT_INTERVAL , instance. getInstanceHeartBeatInterval ( ) ) ;

}

result. put ( SwitchEntry . LIGHT_BEAT_ENABLED , switchDomain. isLightBeatEnabled ( ) ) ;

return result;

}
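The branching in beat() reduces to a small decision on two facts: whether the instance is already registered and whether the request carries beatInfo. The sketch below restates that decision only; BeatDecisionSketch and the Action names are hypothetical and do not exist in Nacos.

```java
// Hypothetical restatement of the branches in the /beat endpoint.
class BeatDecisionSketch {

    enum Action {
        RESOURCE_NOT_FOUND,    // unknown instance and no beatInfo to rebuild it from
        REGISTER_THEN_PROCESS, // unknown instance, but beatInfo allows compensating registration
        PROCESS_BEAT           // known instance, just refresh its heartbeat
    }

    static Action decide(boolean instanceRegistered, boolean hasBeatInfo) {
        if (!instanceRegistered) {
            return hasBeatInfo ? Action.REGISTER_THEN_PROCESS : Action.RESOURCE_NOT_FOUND;
        }
        return Action.PROCESS_BEAT;
    }
}
```

Note that in the real method the compensating registration is followed by the normal beat processing, which is why the middle case is named REGISTER_THEN_PROCESS here.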

Look at the source of processClientBeat(), which handles the heartbeat. It uses HealthCheckReactor's scheduleNow() to run the ClientBeatProcessor task's run() method immediately:

public void processClientBeat(final RsInfo rsInfo) {

ClientBeatProcessor clientBeatProcessor= new ClientBeatProcessor ( ) ;

clientBeatProcessor. setService ( this ) ;

clientBeatProcessor. setRsInfo ( rsInfo) ;

HealthCheckReactor . scheduleNow ( clientBeatProcessor) ;

}

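Look at the run() method of ClientBeatProcessor:

- It fetches all ephemeral instances of the service that sent the heartbeat; only ephemeral instances send heartbeats.
- It iterates over them looking for the instance that sent this heartbeat, comparing each stored instance's IP and port with those in the heartbeat. On a match it updates the last-heartbeat time.
- If the matching instance is currently unhealthy, this heartbeat makes it healthy again, and getPushService().serviceChanged(service) publishes a service-change event so that consumers refresh their locally cached information.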

public void run ( ) {

Service service = this . service;

if ( Loggers . EVT_LOG . isDebugEnabled ( ) ) {

Loggers . EVT_LOG . debug ( "[CLIENT-BEAT] processing beat: {}" , rsInfo. toString ( ) ) ;

}

String ip = rsInfo. getIp ( ) ;

String clusterName = rsInfo. getCluster ( ) ;

int port = rsInfo. getPort ( ) ;

Cluster cluster = service. getClusterMap ( ) . get ( clusterName) ;

List<Instance> instances = cluster.allIPs(true);

for ( Instance instance : instances) {

if ( instance. getIp ( ) . equals ( ip) && instance. getPort ( ) == port) {

if ( Loggers . EVT_LOG . isDebugEnabled ( ) ) {

Loggers . EVT_LOG . debug ( "[CLIENT-BEAT] refresh beat: {}" , rsInfo. toString ( ) ) ;

}

instance. setLastBeat ( System . currentTimeMillis ( ) ) ;

if ( ! instance. isMarked ( ) ) {

if ( ! instance. isHealthy ( ) ) {

instance. setHealthy ( true ) ;

Loggers . EVT_LOG

. info ( "service: {} {POS} {IP-ENABLED} valid: {}:{}@{}, region: {}, msg: client beat ok" ,

cluster. getService ( ) . getName ( ) , ip, port, cluster. getName ( ) ,

UtilsAndCommons . LOCALHOST_SITE ) ;

getPushService ( ) . serviceChanged ( service) ;

}

}

}

}

}
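The heartbeat handling above boils down to "refresh the timestamp, and report a change only when an unhealthy instance becomes healthy". A minimal sketch under that reading follows; Node and onBeat() are hypothetical names, and the boolean return value stands in for the call to serviceChanged().

```java
import java.util.List;

// Hypothetical sketch of the ClientBeatProcessor logic without Nacos types.
class BeatRefreshSketch {

    static class Node {
        final String ip;
        final int port;
        boolean healthy;
        boolean marked;  // marked instances keep their health status untouched
        long lastBeat;

        Node(String ip, int port, boolean healthy) {
            this.ip = ip;
            this.port = port;
            this.healthy = healthy;
        }
    }

    /** Returns true when subscribers would need to be notified of a change. */
    static boolean onBeat(List<Node> nodes, String ip, int port, long now) {
        boolean changed = false;
        for (Node node : nodes) {
            if (node.ip.equals(ip) && node.port == port) {
                node.lastBeat = now; // always refresh the heartbeat time
                if (!node.marked && !node.healthy) {
                    node.healthy = true; // recovered
                    changed = true;
                }
            }
        }
        return changed;
    }
}
```

An ordinary heartbeat from an already healthy instance therefore only moves lastBeat forward and triggers no push.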

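To recap: when the registry handles a heartbeat in beat(), it takes the IP, port and other details of the sender and compares them with the corresponding instance stored in the serviceMap registry. If they match, it updates the heartbeat time and returns. If they differ, or this is the first heartbeat received, or the instance's health status has changed, it calls "getPushService().serviceChanged(service);" to publish a service-change event. Inside serviceChanged(), applicationContext.publishEvent() publishes a ServiceChangeEvent, and the event listener eventually invokes PushService's onApplicationEvent() method.

The registry uses the PushService class to push information to connected clients. It supports two push modes, TCP and UDP: TCP pushes over a long-lived connection, UDP pushes by sending UDP packets. TCP is more reliable but consumes more resources and ports; UDP is lightweight but cannot guarantee delivery. Nacos pushes over UDP by default and adds an ACK mechanism to improve reliability: after the Nacos server sends a UDP packet to a Nacos client it waits for the client to reply with an ACK. If no ACK arrives within a certain time, the server treats the packet as lost and retries. Once the retry count reaches the maximum (3 by default), it gives up and logs the failure.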

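Look at the source of PushService's onApplicationEvent() method:

- Using the namespaceId and serviceName of the changed service, it looks up in clientMap all clients subscribed to that service (clientMap holds the service subscribers).
- It iterates over those clients. For each client that is still alive it calls udpPush(), which talks to the client over UDP and pushes the change to it.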

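Look at the udpPush() method in PushService:

- There is a failedPush counter that is incremented whenever a push fails, and each entry has a retryTimes counter recording how many times it has been sent.
- Before sending, the request is cached in ackMap and udpSendTimeMap.
- udpSocket.send() then sends the UDP packet to the client and retryTimes is incremented.
- Delivery is confirmed through ACKs. After udpSocket.send(), GlobalExecutor.scheduleRetransmitter() schedules a task that runs 10 seconds later to deal with ACK timeouts: if ackMap still holds the ackEntry.key, no ACK has arrived and the packet is sent again.
- The retry goes through udpPush() as well. It first checks whether retryTimes exceeds MAX_RETRY_TIMES (3 by default). If so, the cached entries are removed from ackMap and udpSendTimeMap, a log entry is written, and the push is abandoned.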

private static Receiver. AckEntry udpPush ( Receiver. AckEntry ackEntry) {

if ( ackEntry == null ) {

Loggers . PUSH . error ( "[NACOS-PUSH] ackEntry is null." ) ;

return null ;

}

if ( ackEntry. getRetryTimes ( ) > MAX_RETRY_TIMES ) {

Loggers . PUSH . warn ( "max re-push times reached, retry times {}, key: {}" , ackEntry. retryTimes, ackEntry. key) ;

ackMap. remove ( ackEntry. key) ;

udpSendTimeMap. remove ( ackEntry. key) ;

failedPush += 1 ;

return ackEntry;

}

try {

if ( ! ackMap. containsKey ( ackEntry. key) ) {

totalPush++ ;

}

ackMap. put ( ackEntry. key, ackEntry) ;

udpSendTimeMap. put ( ackEntry. key, System . currentTimeMillis ( ) ) ;

Loggers . PUSH . info ( "send udp packet: " + ackEntry. key) ;

udpSocket. send ( ackEntry. origin) ;

ackEntry. increaseRetryTime ( ) ;

GlobalExecutor . scheduleRetransmitter ( new Retransmitter ( ackEntry) , TimeUnit . NANOSECONDS . toMillis ( ACK_TIMEOUT_NANOS ) , TimeUnit . MILLISECONDS ) ;

return ackEntry;

} catch ( Exception e) {

Loggers . PUSH . error ( "[NACOS-PUSH] failed to push data: {} to client: {}, error: {}" , ackEntry. data,

ackEntry. origin. getAddress ( ) . getHostAddress ( ) , e) ;

ackMap. remove ( ackEntry. key) ;

udpSendTimeMap. remove ( ackEntry. key) ;

failedPush += 1 ;

return null ;

}

}
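The ACK bookkeeping in udpPush() can be simulated without sockets. The sketch below is an illustration only: the class AckRetrySketch, its methods and the per-key attempt counter are assumptions, and MAX_RETRY_TIMES is set to 3 simply because that is the value this article states. As in the code above, the limit is tested with "greater than" before each send.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical simulation of "send, wait for ack, resend until the retry budget is used up".
class AckRetrySketch {

    static final int MAX_RETRY_TIMES = 3; // assumed, taken from the article text

    /** Unacknowledged pushes: key -> number of sends so far. */
    static final Map<String, Integer> ACK_MAP = new ConcurrentHashMap<>();

    static int failedPush = 0;

    /** Sends or re-sends a push; returns false once the retry budget is exhausted. */
    static boolean push(String key) {
        int attempts = ACK_MAP.getOrDefault(key, 0);
        if (attempts > MAX_RETRY_TIMES) {
            ACK_MAP.remove(key); // give up on this push
            failedPush++;
            return false;
        }
        ACK_MAP.put(key, attempts + 1); // a real implementation would send the UDP packet here
        return true;
    }

    /** Called when the client's ack arrives; returns true if the push was still pending. */
    static boolean onAck(String key) {
        return ACK_MAP.remove(key) != null;
    }

    /** Called by the retransmit timer; re-sends only if the push is still unacknowledged. */
    static boolean onAckTimeout(String key) {
        return ACK_MAP.containsKey(key) && push(key);
    }
}
```

With this check a push is sent once and re-sent up to three more times before it is dropped, because the counter is compared before the send and incremented after it.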

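Continuing with PushService: it has a static initializer block that schedules removeClientIfZombie() to run periodically, every 20 seconds by default, removing any PushClient that has not been refreshed for more than 10 seconds:

static {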

try {

udpSocket = new DatagramSocket ( ) ;

Receiver receiver = new Receiver ( ) ;

Thread inThread = new Thread ( receiver) ;

inThread. setDaemon ( true ) ;

inThread. setName ( "com.alibaba.nacos.naming.push.receiver" ) ;

inThread. start ( ) ;

GlobalExecutor.scheduleRetransmitter(() -> {

try {

removeClientIfZombie ( ) ;

} catch ( Throwable e) {

Loggers. PUSH. warn ( "[NACOS-PUSH] failed to remove client zombie" ) ;

}

} , 0 , 20 , TimeUnit. SECONDS) ;

} catch ( SocketException e) {

Loggers. SRV_LOG. error ( "[NACOS-PUSH] failed to init push service" ) ;

}

}

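The Receiver created in the static block above implements Runnable and runs on the daemon thread started there. After the server has sent UDP change notifications to clients, this task handles the ACK responses coming back: it runs an infinite loop that receives ACKs from clients, and when an ACK arrives it removes the matching ackKey from ackMap:

public void run() {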

while ( true ) {

byte [ ] buffer = new byte [ 1024 * 64 ] ;

DatagramPacket packet = new DatagramPacket ( buffer, buffer. length) ;

try {

udpSocket. receive ( packet) ;

String json = new String ( packet. getData ( ) , 0 , packet. getLength ( ) , StandardCharsets . UTF_8 ) . trim ( ) ;

AckPacket ackPacket = JacksonUtils . toObj ( json, AckPacket . class ) ;

InetSocketAddress socketAddress = ( InetSocketAddress ) packet. getSocketAddress ( ) ;

String ip = socketAddress. getAddress ( ) . getHostAddress ( ) ;

int port = socketAddress. getPort ( ) ;

if ( System . nanoTime ( ) - ackPacket. lastRefTime > ACK_TIMEOUT_NANOS ) {

Loggers . PUSH . warn ( "ack takes too long from {} ack json: {}" , packet. getSocketAddress ( ) , json) ;

}

String ackKey = getAckKey ( ip, port, ackPacket. lastRefTime) ;

AckEntry ackEntry = ackMap. remove ( ackKey) ;

if ( ackEntry == null ) {

throw new IllegalStateException (

"unable to find ackEntry for key: " + ackKey + ", ack json: " + json) ;

}

long pushCost = System . currentTimeMillis ( ) - udpSendTimeMap. get ( ackKey) ;

Loggers . PUSH

. info ( "received ack: {} from: {}:{}, cost: {} ms, unacked: {}, total push: {}" , json, ip,

port, pushCost, ackMap. size ( ) , totalPush) ;

pushCostMap. put ( ackKey, pushCost) ;

udpSendTimeMap. remove ( ackKey) ;

} catch ( Throwable e) {

Loggers . PUSH . error ( "[NACOS-PUSH] error while receiving ack data" , e) ;

}

}

}

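Handling a deregistration request is fairly simple. The request reaches Nacos's deregister() or deregisterInstance() endpoint, and the flow is roughly:

- Parse the request to obtain the Instance that should go offline.
- Look up the corresponding Service.
- Remove that service instance from Nacos.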

@CanDistro

@DeleteMapping

@Secured ( parser = NamingResourceParser . class , action = ActionTypes . WRITE )

public String deregister ( HttpServletRequest request) throws Exception {

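// Abridged: format checks and the handling of a missing service are omitted.

Instance instance = getIpAddress(request);

String namespaceId = WebUtils.optional(request, CommonParams.NAMESPACE_ID, Constants.DEFAULT_NAMESPACE_ID);

String serviceName = WebUtils.required(request, CommonParams.SERVICE_NAME);

Service service = serviceManager.getService(namespaceId, serviceName);

serviceManager.removeInstance(namespaceId, serviceName, instance.isEphemeral(), instance);

return "ok";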

}

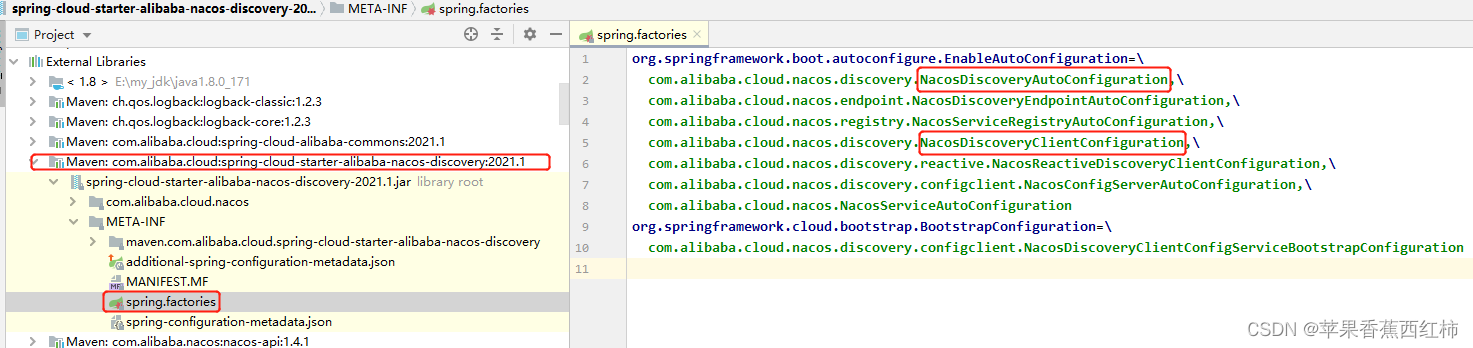

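At startup a service consumer holds the name of the service provider. It connects to the registry, fetches the list of callable addresses by that name, and at request time picks one address through local load balancing. So how is the list fetched, how is it stored, and how is it kept up to date?

To fetch a target service through the Nacos registry, the consumer first adds the Nacos dependency, enables service discovery with @EnableDiscoveryClient, and configures the relevant settings in its YAML file. This walkthrough uses the spring-cloud-starter-alibaba-nacos-discovery dependency:

<dependency>
<groupId></groupId>
<artifactId>spring-cloud-starter-alibaba-nacos-discovery</artifactId>
<version></version>
</dependency>

Once spring-cloud-starter-alibaba-nacos-discovery is on the classpath, Spring Boot's SPI mechanism reads META-INF/spring.factories. That file registers several important classes, including NacosDiscoveryAutoConfiguration and NacosDiscoveryClientConfiguration. NacosDiscoveryClientConfiguration adds a NacosDiscoveryClient to the container, which is the consumer's entry point for fetching target services:

@Configuration (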

proxyBeanMethods = false

)

@ConditionalOnDiscoveryEnabled

@ConditionalOnBlockingDiscoveryEnabled

@ConditionalOnNacosDiscoveryEnabled

@AutoConfigureBefore ( { SimpleDiscoveryClientAutoConfiguration . class , CommonsClientAutoConfiguration . class } )

@AutoConfigureAfter ( { NacosDiscoveryAutoConfiguration . class } )

public class NacosDiscoveryClientConfiguration {

public NacosDiscoveryClientConfiguration ( ) {

}

@Bean

public DiscoveryClient nacosDiscoveryClient ( NacosServiceDiscovery nacosServiceDiscovery) {

return new NacosDiscoveryClient ( nacosServiceDiscovery) ;

}

@Bean

@ConditionalOnMissingBean

@ConditionalOnProperty (

value = { "spring.cloud.nacos.discovery.watch.enabled" } ,

matchIfMissing = true

)

public NacosWatch nacosWatch(NacosServiceManager nacosServiceManager, NacosDiscoveryProperties nacosDiscoveryProperties, ObjectProvider<ThreadPoolTaskScheduler> taskExecutorObjectProvider) {

return new NacosWatch ( nacosServiceManager, nacosDiscoveryProperties, taskExecutorObjectProvider) ;

}

}

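Look at the source of NacosDiscoveryClient. It provides getServices() and getInstances(), the entry points for fetching target service information. getServices() is called when the consumer starts, to fetch the list of services; getInstances() is called when a service interface is invoked, to fetch the target service's instances:

public class NacosDiscoveryClient implements DiscoveryClient {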

private static final Logger log = LoggerFactory. getLogger ( NacosDiscoveryClient. class) ;

public static final String DESCRIPTION = "Spring Cloud Nacos Discovery Client" ;

private NacosServiceDiscovery serviceDiscovery;

public NacosDiscoveryClient ( NacosServiceDiscovery nacosServiceDiscovery) {

this. serviceDiscovery = nacosServiceDiscovery;

}

public String description ( ) {

return "Spring Cloud Nacos Discovery Client" ;

}

public List< ServiceInstance> getInstances ( String serviceId) {

try {

return this. serviceDiscovery. getInstances ( serviceId) ;

} catch ( Exception var3) {

throw new RuntimeException ( "Can not get hosts from nacos server. serviceId: " + serviceId, var3) ;

}

}

public List< String> getServices ( ) {

try {

return this. serviceDiscovery. getServices ( ) ;

} catch ( Exception var2) {

log. error ( "get service name from nacos server fail," , var2) ;

return Collections. emptyList ( ) ;

}

}

}

Look at NacosDiscoveryClient's getInstances(). It calls getInstances() on NacosServiceDiscovery, which obtains the NacosNamingService object and calls its selectInstances() method; that call eventually reaches getServiceInfo() in HostReactor.

public List < ServiceInstance > getInstances ( String serviceId) throws NacosException {

String group = this . discoveryProperties. getGroup ( ) ;

List<Instance> instances = this.namingService().selectInstances(serviceId, group, true);

return hostToServiceInstanceList ( instances, serviceId) ;

}

public List<Instance> selectInstances(String serviceName, String groupName, List<String> clusters, boolean healthy, boolean subscribe) throws NacosException {

ServiceInfo serviceInfo;

if ( subscribe) {

serviceInfo = this . hostReactor. getServiceInfo ( NamingUtils . getGroupedName ( serviceName, groupName) , StringUtils . join ( clusters, "," ) ) ;

} else {

serviceInfo = this . hostReactor. getServiceInfoDirectlyFromServer ( NamingUtils . getGroupedName ( serviceName, groupName) , StringUtils . join ( clusters, "," ) ) ;

}

return this . selectInstances ( serviceInfo, healthy) ;

}

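Look at the source of getServiceInfo() in HostReactor:

- It calls getSerivceInfo0() to check, by the target service's serviceName, whether the service already exists locally.
- If it does not, it calls updateServiceNow() to request the service from the Nacos registry and stores the result in serviceInfoMap. To avoid concurrency problems, the service being fetched is first recorded in updatingMap and removed again once the fetch completes. A later caller that finds the service in updatingMap knows an update is in progress, so it takes the lock and waits for UPDATE_HOLD_INTERVAL (1000 ms by default) before reading.
- Once the service has been fetched, or if it already existed, scheduleUpdateIfAbsent() creates an UpdateTask and schedules it as a delayed task that queries the Nacos server again to refresh the service addresses.
- Finally the fetched service is returned.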

public ServiceInfo getServiceInfo ( final String serviceName, final String clusters) {

NAMING_LOGGER . debug ( "failover-mode: " + failoverReactor. isFailoverSwitch ( ) ) ;

String key = ServiceInfo . getKey ( serviceName, clusters) ;

if ( failoverReactor. isFailoverSwitch ( ) ) {

return failoverReactor. getService ( key) ;

}

ServiceInfo serviceObj = getSerivceInfo0 ( serviceName, clusters) ;

if ( null == serviceObj) {

serviceObj = new ServiceInfo ( serviceName, clusters) ;

serviceInfoMap. put ( serviceObj. getKey ( ) , serviceObj) ;

updatingMap. put ( serviceName, new Object ( ) ) ;

updateServiceNow ( serviceName, clusters) ;

updatingMap. remove ( serviceName) ;

} else if ( updatingMap. containsKey ( serviceName) ) {

if ( updateHoldInterval > 0 ) {

synchronized ( serviceObj) {

try {

serviceObj. wait ( updateHoldInterval) ;

} catch ( InterruptedException e) {

NAMING_LOGGER . error ( "[getServiceInfo] serviceName:" + serviceName + ", clusters:" + clusters, e) ;

}

}

}

}

scheduleUpdateIfAbsent ( serviceName, clusters) ;

return serviceInfoMap. get ( serviceObj. getKey ( ) ) ;

}
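The "check the local map first, go to the registry only on a miss" part of getServiceInfo() can be sketched as follows. This is a deliberately reduced illustration: ServiceCacheSketch, the remoteLookup function and the use of address strings in place of ServiceInfo are all assumptions, and the updatingMap wait and the background refresh are left out. The plain int counter is not thread-safe and is only there to make the behaviour observable.

```java
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.Function;

// Hypothetical sketch of a consumer-side service cache.
class ServiceCacheSketch {

    private final Map<String, List<String>> serviceInfoMap = new ConcurrentHashMap<>();
    private final Function<String, List<String>> remoteLookup; // stands in for the call to the registry
    int remoteCalls = 0;

    ServiceCacheSketch(Function<String, List<String>> remoteLookup) {
        this.remoteLookup = remoteLookup;
    }

    /** Returns the cached addresses, fetching them from the registry on first use. */
    List<String> getServiceInfo(String serviceName) {
        List<String> cached = serviceInfoMap.get(serviceName);
        if (cached == null) {
            remoteCalls++;
            cached = remoteLookup.apply(serviceName);
            serviceInfoMap.put(serviceName, cached);
        }
        return cached;
    }
}
```

The second and later lookups for the same service name are served from the map, which is why the real client needs the periodic refresh and the UDP push described in the rest of this article to keep the cache current.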

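Look at the source of updateServiceNow(), which fetches the target service information:

- The call to "pushReceiver.getUDPPort()" is important. It returns this service's UDP port. As mentioned in the registration part, the Nacos server actively sends UDP packets to clients, and this is where the client's UDP port is handed to the server.
- The method then builds the request data and calls the registry's /list endpoint to fetch the service information.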

public void updateServiceNow ( String serviceName, String clusters) {

ServiceInfo oldService = getSerivceInfo0 ( serviceName, clusters) ;

try {

String result = serverProxy. queryList ( serviceName, clusters, pushReceiver. getUDPPort ( ) , false ) ;

if ( StringUtils . isNotEmpty ( result) ) {

processServiceJSON ( result) ;

}

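} catch (Exception e) {

NAMING_LOGGER.error("[NA] failed to update serviceName: " + serviceName, e);

} finally {

// Wake up callers waiting on the old ServiceInfo in getServiceInfo().

if (oldService != null) {

synchronized (oldService) {

oldService.notifyAll();

}

}

}

}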

public String queryList ( String serviceName, String clusters, int udpPort, boolean healthyOnly)

throws NacosException {

final Map<String, String> params = new HashMap<String, String>(8);

params. put ( CommonParams . NAMESPACE_ID , namespaceId) ;

params. put ( CommonParams . SERVICE_NAME , serviceName) ;

params. put ( "clusters" , clusters) ;

params. put ( "udpPort" , String . valueOf ( udpPort) ) ;

params. put ( "clientIP" , NetUtils . localIP ( ) ) ;

params. put ( "healthyOnly" , String . valueOf ( healthyOnly) ) ;

return reqAPI ( UtilAndComs . NACOS_URL_BASE + "/instance/list" , params, HttpMethod . GET ) ;

}

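As described above, consumer startup triggers a fetch of the target service information. Once the service has been fetched, scheduleUpdateIfAbsent() sets up a delayed task. Its source shows that it creates an UpdateTask and runs it through executor.schedule(), so that after DEFAULT_DELAY a request goes to the Nacos server to query the service addresses again and refresh the local copy. (The cap used by UpdateTask is DEFAULT_DELAY * 60 and the longest interval is one minute, so DEFAULT_DELAY works out to 1 second; naming.healthCheckTaskInterval is a server-side setting and does not control this task.) After each run the task calls executor.schedule() again to queue its next run, so it behaves like a periodic task. With no errors the refresh interval is 6 seconds, and it backs off to at most once per minute.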

public void scheduleUpdateIfAbsent ( String serviceName, String groupName, String clusters) {

String serviceKey = ServiceInfo . getKey ( NamingUtils . getGroupedName ( serviceName, groupName) , clusters) ;

if ( futureMap. get ( serviceKey) != null ) {

return ;

}

synchronized ( futureMap) {

if ( futureMap. get ( serviceKey) != null ) {

return ;

}

ScheduledFuture<?> future = addTask(new UpdateTask(serviceName, groupName, clusters));

futureMap. put ( serviceKey, future) ;

}

}

private synchronized ScheduledFuture < ? > addTask ( UpdateTask task) {

return executor. schedule ( task, DEFAULT_DELAY , TimeUnit . MILLISECONDS ) ;

}

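Look at the run() method of UpdateTask, which sends the query to the Nacos server:

- It reads the locally cached target service. If the service's last refresh time is less than or equal to the refresh time recorded by the task, it requests the service from the Nacos registry and updates the local copy.
- After the update it records the new refresh time.
- Finally it calls executor.schedule() with a delay derived from failCount, which tracks failed or empty results. With no errors (failCount equal to 0) the cached instances are refreshed every 6 seconds; the delay grows to at most 1 minute.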

public void run ( ) {

long delayTime = DEFAULT_DELAY ;

try {

if ( ! changeNotifier. isSubscribed ( groupName, serviceName, clusters) && ! futureMap. containsKey ( serviceKey) ) {

NAMING_LOGGER

. info ( "update task is stopped, service:" + groupedServiceName + ", clusters:" + clusters) ;

return ;

}

ServiceInfo serviceObj = serviceInfoHolder. getServiceInfoMap ( ) . get ( serviceKey) ;

if ( serviceObj == null ) {

serviceObj = namingClientProxy. queryInstancesOfService ( serviceName, groupName, clusters, 0 , false ) ;

serviceInfoHolder. processServiceInfo ( serviceObj) ;

lastRefTime = serviceObj. getLastRefTime ( ) ;

return ;

}

if ( serviceObj. getLastRefTime ( ) <= lastRefTime) {

serviceObj = namingClientProxy. queryInstancesOfService ( serviceName, groupName, clusters, 0 , false ) ;

serviceInfoHolder. processServiceInfo ( serviceObj) ;

}

lastRefTime = serviceObj. getLastRefTime ( ) ;

if ( CollectionUtils . isEmpty ( serviceObj. getHosts ( ) ) ) {

incFailCount ( ) ;

return ;

}

delayTime = serviceObj. getCacheMillis ( ) * DEFAULT_UPDATE_CACHE_TIME_MULTIPLE ;

resetFailCount ( ) ;

} catch ( Throwable e) {

incFailCount ( ) ;

NAMING_LOGGER . warn ( "[NA] failed to update serviceName: " + groupedServiceName, e) ;

} finally {

executor. schedule ( this , Math . min ( delayTime << failCount, DEFAULT_DELAY * 60 ) , TimeUnit . MILLISECONDS ) ;

}

}
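The delay passed to executor.schedule() in the finally block is a capped exponential backoff. The sketch below isolates that one expression; the class name UpdateBackoffSketch is hypothetical, and DEFAULT_DELAY = 1000 ms is an assumption inferred from the one-minute cap mentioned in the text.

```java
// Hypothetical isolation of the backoff expression used by UpdateTask.
class UpdateBackoffSketch {

    static final long DEFAULT_DELAY = 1000L; // assumed: one second, so the cap is 60 seconds

    /** Doubles the delay for every consecutive failure, capped at DEFAULT_DELAY * 60. */
    static long nextDelay(long delayTime, int failCount) {
        return Math.min(delayTime << failCount, DEFAULT_DELAY * 60);
    }
}
```

With a base delay of 6000 ms, the sequence for failCount 0, 1, 2, 3 is 6000, 12000, 24000 and 48000 ms; from failCount 4 onwards the shifted value (96000 ms) exceeds the cap and the task runs once per minute.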

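Back to the registration logic. After a service registers successfully it starts a scheduled task that calls Nacos's beat endpoint, every 5 seconds by default, to keep the heartbeat alive. When the registry receives a heartbeat through beat(), it reads the sender's IP, port and so on and compares them with the corresponding instance stored in the serviceMap registry. If they match, it updates the heartbeat time and returns. If they differ, or this is the first heartbeat, or the instance's health status has changed, it calls "getPushService().serviceChanged(service);" to publish a service-change event. By default the change is pushed to the service's subscribers over UDP with an ACK mechanism. (UDP is lightweight but cannot guarantee delivery, so a scheduled task runs 10 seconds later by default to check for the ACK; once the retry count reaches the maximum, 3 by default, the push is abandoned and a failure is logged.) So how does the registry find the right clients?

After a client starts, it asks the registry for its target services. With that request it calls "pushReceiver.getUDPPort()" and reports its own UDP port along with the query, so the registry now has the client's address. How is that address allocated, and how does the client later receive and handle the change notifications that Nacos pushes over UDP?

Go back to the startup of a service that integrates the Nacos registry. At startup it parses the Nacos settings in the YAML file and calls createNamingService() on NamingFactory to create the NamingService; by default this returns a NacosNamingService. That class's init() method creates several core objects, among them a HostReactor that handles UDP communication with the Nacos server. Look at the HostReactor constructor; the part to focus on here is the creation of the PushReceiver:

public HostReactor(NamingProxy serverProxy, BeatReactor beatReactor, String cacheDir, boolean loadCacheAtStart, boolean pushEmptyProtection, int pollingThreadCount) {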

this . futureMap = new HashMap ( ) ;

this . executor = new ScheduledThreadPoolExecutor ( pollingThreadCount, new ThreadFactory ( ) {

public Thread newThread ( Runnable r) {

Thread thread = new Thread ( r) ;

thread. setDaemon ( true ) ;

thread. setName ( "com.alibaba.nacos.client.naming.updater" ) ;

return thread;

}

} ) ;

this . beatReactor = beatReactor;

this . serverProxy = serverProxy;

this . cacheDir = cacheDir;

if ( loadCacheAtStart) {

this . serviceInfoMap = new ConcurrentHashMap ( DiskCache . read ( this . cacheDir) ) ;

} else {

this . serviceInfoMap = new ConcurrentHashMap ( 16 ) ;

}

this . pushEmptyProtection = pushEmptyProtection;

this . updatingMap = new ConcurrentHashMap ( ) ;

this . failoverReactor = new FailoverReactor ( this , cacheDir) ;

this . pushReceiver = new PushReceiver ( this ) ;

this . notifier = new InstancesChangeNotifier ( ) ;

NotifyCenter . registerToPublisher ( InstancesChangeEvent . class , 16384 ) ;

NotifyCenter . registerSubscriber ( this . notifier) ;

}

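PushReceiver itself implements Runnable, so it is a task class. Look at its constructor:

- It allocates the UDP port.
- It creates a ScheduledThreadPoolExecutor thread pool and submits itself to it as the task.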

public PushReceiver ( HostReactor hostReactor) {

try {

this . hostReactor = hostReactor;

String udpPort = getPushReceiverUdpPort ( ) ;

if ( StringUtils . isEmpty ( udpPort) ) {

this . udpSocket = new DatagramSocket ( ) ;

} else {

this . udpSocket = new DatagramSocket ( new InetSocketAddress ( Integer . parseInt ( udpPort) ) ) ;

}

this . executorService = new ScheduledThreadPoolExecutor ( 1 , new ThreadFactory ( ) {

@Override

public Thread newThread ( Runnable r) {

Thread thread = new Thread ( r) ;

thread. setDaemon ( true ) ;

thread. setName ( "com.alibaba.nacos.naming.push.receiver" ) ;

return thread;

}

} ) ;

this . executorService. execute ( this ) ;

} catch ( Exception e) {

NAMING_LOGGER . error ( "[NA] init udp socket failed" , e) ;

}

}

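Look at PushReceiver's run() method, which does the actual work:

- It runs a loop that calls udpSocket.receive(packet) to receive service-change notifications from the Nacos registry.
- It calls processServiceJson() on HostReactor to apply the change to the local copy.
- It calls udpSocket.send() to send an ACK back to the registry.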

public void run ( ) {

while ( ! this . closed) {

try {

byte [ ] buffer = new byte [ 65536 ] ;

DatagramPacket packet = new DatagramPacket ( buffer, buffer. length) ;

this . udpSocket. receive ( packet) ;

String json = ( new String ( IoUtils . tryDecompress ( packet. getData ( ) ) , UTF_8 ) ) . trim ( ) ;

LogUtils . NAMING_LOGGER . info ( "received push data: " + json + " from " + packet. getAddress ( ) . toString ( ) ) ;

PushReceiver. PushPacket pushPacket = ( PushReceiver. PushPacket ) JacksonUtils . toObj ( json, PushReceiver. PushPacket . class ) ;

String ack;

if ( ! "dom" . equals ( pushPacket. type) && ! "service" . equals ( pushPacket. type) ) {

if ( "dump" . equals ( pushPacket. type) ) {

ack = "{\"type\": \"dump-ack\", \"lastRefTime\": \"" + pushPacket. lastRefTime + "\", \"data\":\"" + StringUtils . escapeJavaScript ( JacksonUtils . toJson ( this . hostReactor. getServiceInfoMap ( ) ) ) + "\"}" ;

} else {

ack = "{\"type\": \"unknown-ack\", \"lastRefTime\":\"" + pushPacket. lastRefTime + "\", \"data\":\"\"}" ;

}

} else {

this . hostReactor. processServiceJson ( pushPacket. data) ;

ack = "{\"type\": \"push-ack\", \"lastRefTime\":\"" + pushPacket. lastRefTime + "\", \"data\":\"\"}" ;

}

this . udpSocket. send ( new DatagramPacket ( ack. getBytes ( UTF_8 ) , ack. getBytes ( UTF_8 ) . length, packet. getSocketAddress ( ) ) ) ;

} catch ( Exception var6) {

if ( this . closed) {

return ;

}

LogUtils . NAMING_LOGGER . error ( "[NA] error while receiving push data" , var6) ;

}

}

}
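The push-then-ack exchange can be tried out on the loopback interface with two plain DatagramSocket objects. The following sketch is not Nacos code: UdpAckSketch, the "push-ack:" text format and the 2-second timeouts are assumptions, and both ends run sequentially in one method only to keep the example short.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.DatagramPacket;
import java.net.DatagramSocket;
import java.net.InetAddress;
import java.nio.charset.StandardCharsets;

// Hypothetical loopback demo: the "server" pushes a payload, the "client" replies with an ack.
class UdpAckSketch {

    /** Pushes the payload from server to client and returns the ack text the server receives. */
    static String pushAndAwaitAck(String payload) {
        try (DatagramSocket server = new DatagramSocket(0, InetAddress.getByName("127.0.0.1"));
             DatagramSocket client = new DatagramSocket(0, InetAddress.getByName("127.0.0.1"))) {
            server.setSoTimeout(2000); // never block forever if a packet is lost
            client.setSoTimeout(2000);

            // Server side: push the change to the client's UDP port.
            byte[] out = payload.getBytes(StandardCharsets.UTF_8);
            server.send(new DatagramPacket(out, out.length, client.getLocalSocketAddress()));

            // Client side: receive the push.
            byte[] buffer = new byte[65536];
            DatagramPacket pushed = new DatagramPacket(buffer, buffer.length);
            client.receive(pushed);
            String received = new String(pushed.getData(), 0, pushed.getLength(), StandardCharsets.UTF_8);

            // Client side: acknowledge to the address the push came from.
            byte[] ack = ("push-ack:" + received).getBytes(StandardCharsets.UTF_8);
            client.send(new DatagramPacket(ack, ack.length, pushed.getSocketAddress()));

            // Server side: receive the ack.
            DatagramPacket ackPacket = new DatagramPacket(new byte[1024], 1024);
            server.receive(ackPacket);
            return new String(ackPacket.getData(), 0, ackPacket.getLength(), StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

As in PushReceiver, the client replies to packet.getSocketAddress(), so it never needs to know the server's port in advance; the server, in contrast, must have learned the client's UDP port beforehand, which is what the udpPort parameter of the /list request is for.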

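The Nacos registry receives subscription requests through the /list endpoint of InstanceController. Look at its source:

- It parses the request data, such as namespaceId, serviceName, agent (which identifies the type of client that sent the request), clusters, clientIP, udpPort (used later for UDP communication), app and tenant.
- The important work happens in doSrvIpxt(), called at the end, which creates the matching ClientInfo for the agent and then handles the subscription.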

@GetMapping ( "/list" )

@Secured ( parser = NamingResourceParser . class , action = ActionTypes . READ )

public ObjectNode list ( HttpServletRequest request) throws Exception {

String namespaceId = WebUtils . optional ( request, CommonParams . NAMESPACE_ID , Constants . DEFAULT_NAMESPACE_ID ) ;

String serviceName = WebUtils . required ( request, CommonParams . SERVICE_NAME ) ;

NamingUtils . checkServiceNameFormat ( serviceName) ;

String agent = WebUtils . getUserAgent ( request) ;

String clusters = WebUtils . optional ( request, "clusters" , StringUtils . EMPTY ) ;

String clientIP = WebUtils . optional ( request, "clientIP" , StringUtils . EMPTY ) ;

int udpPort = Integer . parseInt ( WebUtils . optional ( request, "udpPort" , "0" ) ) ;

String env = WebUtils . optional ( request, "env" , StringUtils . EMPTY ) ;

boolean isCheck = Boolean . parseBoolean ( WebUtils . optional ( request, "isCheck" , "false" ) ) ;

String app = WebUtils . optional ( request, "app" , StringUtils . EMPTY ) ;

String tenant = WebUtils . optional ( request, "tid" , StringUtils . EMPTY ) ;

boolean healthyOnly = Boolean . parseBoolean ( WebUtils . optional ( request, "healthyOnly" , "false" ) ) ;

return doSrvIpxt ( namespaceId, serviceName, agent, clusters, clientIP, udpPort, env, isCheck, app, tenant,

healthyOnly) ;

}

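Look at the source of doSrvIpxt():

- It first creates a ClientInfo for the given agent; there are types for java, c, c++, go, nginx, dnsf and others.
- It then checks whether the client's UDP port, udpPort, was supplied. If so, it calls pushService.addClient(), which wraps the client in a PushClient and adds it to the registry's clientMap.
- In other words, it adds PushClient objects to the clientMap collection, and each PushClient holds the details of a client that pulled the service. After a provider starts it sends heartbeats to the registry, and the registry also runs its own health checks. When the registry detects a change on the provider side, it calls PushService's serviceChanged(service) to publish an event; the event handler notifies the clients subscribed to that service over UDP with ACKs, and those clients are looked up in clientMap.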

public ObjectNode doSrvIpxt(String namespaceId, String serviceName, String agent, String clusters, String clientIP,
        int udpPort, String env, boolean isCheck, String app, String tid, boolean healthyOnly) throws Exception {

    ClientInfo clientInfo = new ClientInfo(agent);
    ObjectNode result = JacksonUtils.createEmptyJsonNode();
    Service service = serviceManager.getService(namespaceId, serviceName);
    long cacheMillis = switchDomain.getDefaultCacheMillis();

    // Register the caller as a push client if it supplied a UDP port.
    try {
        if (udpPort > 0 && pushService.canEnablePush(agent)) {
            pushService
                    .addClient(namespaceId, serviceName, clusters, agent, new InetSocketAddress(clientIP, udpPort),
                            pushDataSource, tid, app);
            cacheMillis = switchDomain.getPushCacheMillis(serviceName);
        }
    } catch (Exception e) {
        Loggers.SRV_LOG
                .error("[NACOS-API] failed to added push client {}, {}:{}", clientInfo, clientIP, udpPort, e);
        cacheMillis = switchDomain.getDefaultCacheMillis();
    }

    // Unknown service: return an empty host list.
    if (service == null) {
        if (Loggers.SRV_LOG.isDebugEnabled()) {
            Loggers.SRV_LOG.debug("no instance to serve for service: {}", serviceName);
        }
        result.put("name", serviceName);
        result.put("clusters", clusters);
        result.put("cacheMillis", cacheMillis);
        result.replace("hosts", JacksonUtils.createEmptyArrayNode());
        return result;
    }

    checkIfDisabled(service);

    List<Instance> srvedIPs;
    srvedIPs = service.srvIPs(Arrays.asList(StringUtils.split(clusters, ",")));

    // Filter by the service's selector, if one is configured.
    if (service.getSelector() != null && StringUtils.isNotBlank(clientIP)) {
        srvedIPs = service.getSelector().select(clientIP, srvedIPs);
    }

    if (CollectionUtils.isEmpty(srvedIPs)) {
        if (Loggers.SRV_LOG.isDebugEnabled()) {
            Loggers.SRV_LOG.debug("no instance to serve for service: {}", serviceName);
        }
        if (clientInfo.type == ClientInfo.ClientType.JAVA
                && clientInfo.version.compareTo(VersionUtil.parseVersion("1.0.0")) >= 0) {
            result.put("dom", serviceName);
        } else {
            result.put("dom", NamingUtils.getServiceName(serviceName));
        }
        result.put("name", serviceName);
        result.put("cacheMillis", cacheMillis);
        result.put("lastRefTime", System.currentTimeMillis());
        result.put("checksum", service.getChecksum());
        result.put("useSpecifiedURL", false);
        result.put("clusters", clusters);
        result.put("env", env);
        result.set("hosts", JacksonUtils.createEmptyArrayNode());
        result.set("metadata", JacksonUtils.transferToJsonNode(service.getMetadata()));
        return result;
    }

    // Split the instances into healthy (TRUE) and unhealthy (FALSE) buckets.
    Map<Boolean, List<Instance>> ipMap = new HashMap<>(2);
    ipMap.put(Boolean.TRUE, new ArrayList<>());
    ipMap.put(Boolean.FALSE, new ArrayList<>());
    for (Instance ip : srvedIPs) {
        ipMap.get(ip.isHealthy()).add(ip);
    }

    if (isCheck) {
        result.put("reachProtectThreshold", false);
    }

    // Protection threshold: if too few instances are healthy, return all of them as healthy.
    double threshold = service.getProtectThreshold();
    if ((float) ipMap.get(Boolean.TRUE).size() / srvedIPs.size() <= threshold) {
        Loggers.SRV_LOG.warn("protect threshold reached, return all ips, service: {}", serviceName);
        if (isCheck) {
            result.put("reachProtectThreshold", true);
        }
        ipMap.get(Boolean.TRUE).addAll(ipMap.get(Boolean.FALSE));
        ipMap.get(Boolean.FALSE).clear();
    }

    if (isCheck) {
        result.put("protectThreshold", service.getProtectThreshold());
        result.put("reachLocalSiteCallThreshold", false);
        return JacksonUtils.createEmptyJsonNode();
    }

    // Build the "hosts" array, skipping disabled instances (and unhealthy ones if healthyOnly is set).
    ArrayNode hosts = JacksonUtils.createEmptyArrayNode();
    for (Map.Entry<Boolean, List<Instance>> entry : ipMap.entrySet()) {
        List<Instance> ips = entry.getValue();
        if (healthyOnly && !entry.getKey()) {
            continue;
        }
        for (Instance instance : ips) {
            if (!instance.isEnabled()) {
                continue;
            }
            ObjectNode ipObj = JacksonUtils.createEmptyJsonNode();
            ipObj.put("ip", instance.getIp());
            ipObj.put("port", instance.getPort());
            ipObj.put("valid", entry.getKey());
            ipObj.put("healthy", entry.getKey());
            ipObj.put("marked", instance.isMarked());
            ipObj.put("instanceId", instance.getInstanceId());
            ipObj.set("metadata", JacksonUtils.transferToJsonNode(instance.getMetadata()));
            ipObj.put("enabled", instance.isEnabled());
            ipObj.put("weight", instance.getWeight());
            ipObj.put("clusterName", instance.getClusterName());
            if (clientInfo.type == ClientInfo.ClientType.JAVA
                    && clientInfo.version.compareTo(VersionUtil.parseVersion("1.0.0")) >= 0) {
                ipObj.put("serviceName", instance.getServiceName());
            } else {
                ipObj.put("serviceName", NamingUtils.getServiceName(instance.getServiceName()));
            }
            ipObj.put("ephemeral", instance.isEphemeral());
            hosts.add(ipObj);
        }
    }

    result.replace("hosts", hosts);
    if (clientInfo.type == ClientInfo.ClientType.JAVA
            && clientInfo.version.compareTo(VersionUtil.parseVersion("1.0.0")) >= 0) {
        result.put("dom", serviceName);
    } else {
        result.put("dom", NamingUtils.getServiceName(serviceName));
    }
    result.put("name", serviceName);
    result.put("cacheMillis", cacheMillis);
    result.put("lastRefTime", System.currentTimeMillis());
    result.put("checksum", service.getChecksum());
    result.put("useSpecifiedURL", false);
    result.put("clusters", clusters);
    result.put("env", env);
    result.replace("metadata", JacksonUtils.transferToJsonNode(service.getMetadata()));
    return result;
}
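To make the protection-threshold branch of doSrvIpxt() easier to follow in isolation, here is a minimal sketch of just that step. DemoInstance and ProtectThresholdDemo are hypothetical names invented for this example, not Nacos classes; only the comparison `healthy / total <= threshold` is taken from the listing above.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical, simplified stand-in for a registered instance; not the Nacos Instance class. */
final class DemoInstance {
    final String ip;
    final boolean healthy;

    DemoInstance(String ip, boolean healthy) {
        this.ip = ip;
        this.healthy = healthy;
    }
}

final class ProtectThresholdDemo {

    /**
     * Returns the instances that end up in the "healthy" bucket:
     * the truly healthy ones, or every instance once the healthy
     * ratio is at or below the protection threshold.
     */
    static List<DemoInstance> healthyBucket(List<DemoInstance> all, double threshold) {
        List<DemoInstance> healthy = new ArrayList<>();
        for (DemoInstance instance : all) {
            if (instance.healthy) {
                healthy.add(instance);
            }
        }
        if (all.isEmpty()) {
            return healthy; // nothing to protect; also avoids dividing by zero
        }
        // Same comparison as in doSrvIpxt(): healthy / total <= threshold.
        if ((float) healthy.size() / all.size() <= threshold) {
            return new ArrayList<>(all);
        }
        return healthy;
    }

    public static void main(String[] args) {
        List<DemoInstance> all = new ArrayList<>();
        all.add(new DemoInstance("10.0.0.1", true));
        all.add(new DemoInstance("10.0.0.2", false));
        all.add(new DemoInstance("10.0.0.3", false));
        all.add(new DemoInstance("10.0.0.4", false));

        // Healthy ratio is 1/4 = 0.25.
        System.out.println(healthyBucket(all, 0.5).size()); // threshold reached: 4
        System.out.println(healthyBucket(all, 0.2).size()); // threshold not reached: 1
    }
}
```

With a threshold of 0.5 the single healthy instance would otherwise take all the traffic, so every instance is handed back; with 0.2 only the healthy one is.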

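Note: what follows is something I read on someone else's blog. I could not find the corresponding source code myself, so treat it as a record that may not be accurate. After the consumer has obtained the target service, it calls subscribe() in NacosNamingService to subscribe to that service. Underneath, this works the same way as above: it requests the target service's information from the Nacos registry, caches it in the local serviceInfoMap, and starts a scheduled task to keep it up to date.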

public void subscribe(String serviceName, String groupName, List<String> clusters, EventListener listener)
        throws NacosException {
    if (null == listener) {
        return;
    }
    String clusterString = StringUtils.join(clusters, ",");
    // Register the listener locally, then subscribe through the client proxy.
    changeNotifier.registerListener(groupName, serviceName, clusterString, listener);
    clientProxy.subscribe(serviceName, groupName, clusterString);
}

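Looking at subscribe() in NamingClientProxyDelegate: it reads the target service from the local cache and, if the cached value is null, sends a subscribe request through grpcClientProxy.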

@Override
public ServiceInfo subscribe(String serviceName, String groupName, String clusters) throws NacosException {
    String serviceNameWithGroup = NamingUtils.getGroupedName(serviceName, groupName);
    String serviceKey = ServiceInfo.getKey(serviceNameWithGroup, clusters);
    ServiceInfo result = serviceInfoHolder.getServiceInfoMap().get(serviceKey);
    if (null == result) {
        result = grpcClientProxy.subscribe(serviceName, groupName, clusters);
    }
    serviceInfoUpdateService.scheduleUpdateIfAbsent(serviceName, groupName, clusters);
    serviceInfoHolder.processServiceInfo(result);
    return result;
}
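The "check the local cache, go remote only on a miss, schedule a refresh once" flow of this method can be sketched without any Nacos dependency. Everything below is hypothetical and for illustration only: SubscribeCacheDemo, the String payload standing in for ServiceInfo, the key layout, and the remoteSubscribe function standing in for the gRPC call.

```java
import java.util.Map;
import java.util.Set;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;
import java.util.function.Function;

final class SubscribeCacheDemo {
    // Stand-in for the client's local service cache (serviceInfoMap).
    private final Map<String, String> serviceInfoMap = new ConcurrentHashMap<>();
    // Keys that already have a refresh task scheduled.
    private final Set<String> scheduledKeys = ConcurrentHashMap.newKeySet();
    // Stand-in for the remote subscribe call (hypothetical).
    private final Function<String, String> remoteSubscribe;

    SubscribeCacheDemo(Function<String, String> remoteSubscribe) {
        this.remoteSubscribe = remoteSubscribe;
    }

    String subscribe(String serviceName, String groupName, String clusters) {
        // Illustrative key layout; an assumption, not necessarily the real format.
        String key = groupName + "@@" + serviceName + "@@" + clusters;
        String result = serviceInfoMap.get(key);
        if (result == null) {
            result = remoteSubscribe.apply(key); // remote call only on a cache miss
        }
        scheduledKeys.add(key);          // no-op if the key was already scheduled
        serviceInfoMap.put(key, result); // refresh the local cache
        return result;
    }

    int scheduledCount() {
        return scheduledKeys.size();
    }

    public static void main(String[] args) {
        AtomicInteger remoteCalls = new AtomicInteger();
        SubscribeCacheDemo demo = new SubscribeCacheDemo(key -> {
            remoteCalls.incrementAndGet();
            return "hosts-of-" + key;
        });

        demo.subscribe("order-service", "DEFAULT_GROUP", "");
        demo.subscribe("order-service", "DEFAULT_GROUP", "");

        System.out.println("remote calls: " + remoteCalls.get());        // 1
        System.out.println("scheduled tasks: " + demo.scheduledCount()); // 1
    }
}
```

The second subscribe() is served from the cache, which is why the remote function runs only once.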

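Differences

Feature scope: Nacos is both a registry and a configuration center. It manages service configuration and supports online editing and dynamic refresh. Eureka is only a registry; configuration management requires another component (such as Spring Cloud Config or Consul), and Eureka itself offers no configuration UI or dynamic refresh.

Consistency protocol: Nacos can switch between AP and CP and picks the consistency mechanism according to the data and the scenario. In AP mode Nacos only registers ephemeral instances and checks health through client heartbeats. In CP mode Nacos registers persistent instances and checks health by actively probing from the server. Eureka supports AP only: it checks health through client heartbeats and, when a network partition occurs, enters self-preservation mode to avoid evicting services by mistake.

Service push: Nacos pushes service-list changes to clients. It keeps a long-lived TCP connection (Netty based) and delivers updates in near real time. Eureka clients obtain the service list by short polling: they periodically request it from the server and cache it in local memory.

Cluster deployment: a Nacos cluster typically persists its data in MySQL, and the cluster IPs must be configured before startup. Eureka needs no MySQL or other database; configuring the Eureka Servers to register with each other is enough to form a cluster.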

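My own summary from the CAP point of view: whether Nacos behaves as AP or CP depends on whether the instance is ephemeral or persistent. This is set with spring.cloud.nacos.discovery.ephemeral, which defaults to true (ephemeral instance); false means a persistent instance. Ephemeral instances use AP and persistent instances use CP.

For AP mode Nacos provides EphemeralConsistencyService. For CP mode it provides RaftConsistencyServiceImpl, which wraps the RaftCore consistency class and calls RaftCore.signalPublish() to synchronize data. That method first computes majorityCount, the number of Nacos server nodes that must confirm the write. It then uses a CountDownLatch while calling HttpClient.asyncHttpPostLarge() to send the data to the other nodes and wait for a majority to acknowledge. If fewer than majorityCount nodes respond successfully within the time limit, the publish is treated as failed and an exception is thrown. Eureka, by contrast, supports AP mode only.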
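The majority-confirmation idea described for signalPublish() can be sketched as follows. This is not the RaftCore code: MajorityAckDemo is a hypothetical class, peers are simulated by Supplier<Boolean> instead of HTTP calls, and the method returns false where the real implementation is described as throwing an exception.

```java
import java.util.Arrays;
import java.util.List;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;
import java.util.function.Supplier;

final class MajorityAckDemo {

    /** Returns true if a majority of peers acknowledged within the timeout. */
    static boolean publish(List<Supplier<Boolean>> peers, long timeoutMillis) {
        int majorityCount = peers.size() / 2 + 1;
        CountDownLatch latch = new CountDownLatch(majorityCount);
        ExecutorService pool = Executors.newFixedThreadPool(Math.max(1, peers.size()));
        try {
            for (Supplier<Boolean> peer : peers) {
                // Each simulated request counts down only on a successful reply.
                pool.submit(() -> {
                    if (peer.get()) {
                        latch.countDown();
                    }
                });
            }
            // True only if majorityCount acknowledgements arrived in time.
            return latch.await(timeoutMillis, TimeUnit.MILLISECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        } finally {
            pool.shutdownNow();
        }
    }

    public static void main(String[] args) {
        List<Supplier<Boolean>> twoOfThree = Arrays.asList(() -> true, () -> true, () -> false);
        List<Supplier<Boolean>> oneOfThree = Arrays.asList(() -> true, () -> false, () -> false);

        System.out.println(publish(twoOfThree, 500)); // true: 2 of 3 is a majority
        System.out.println(publish(oneOfThree, 200)); // false: times out with 1 ack
    }
}
```

Initializing the latch to majorityCount rather than to the number of peers is what lets the caller return as soon as a majority has answered, without waiting for slow or failed nodes.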

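Removing failed services

Eureka: by default a client sends a heartbeat to the Eureka Server every 30 seconds. If the server receives no heartbeat for 90 seconds (the default), the client becomes eligible for removal from the registry. The removal itself is done by the server's eviction task, which runs every 60 seconds by default and takes the Eureka client offline.

Nacos: a client reports its health to the Nacos registry through heartbeats, every 5 seconds by default. If Nacos receives no heartbeat for more than 15 seconds it marks the instance as unhealthy; the instance stays registered and can still receive requests. After more than 30 seconds Nacos deletes the instance, and it no longer receives requests.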
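The Nacos timings above (15 seconds to unhealthy, 30 seconds to removal) amount to a simple classification on the time since the last heartbeat. The sketch below uses a hypothetical HeartbeatCheckDemo class with those two defaults hard-coded; it illustrates the rule only and is not the server's health-check task.

```java
final class HeartbeatCheckDemo {

    enum Status { HEALTHY, UNHEALTHY, REMOVED }

    // Defaults quoted in the text above.
    static final long HEARTBEAT_TIMEOUT_MS = 15_000L;
    static final long DELETE_TIMEOUT_MS = 30_000L;

    /** Classifies an ephemeral instance by how long it has been silent. */
    static Status check(long lastBeatMillis, long nowMillis) {
        long silence = nowMillis - lastBeatMillis;
        if (silence > DELETE_TIMEOUT_MS) {
            return Status.REMOVED;
        }
        if (silence > HEARTBEAT_TIMEOUT_MS) {
            return Status.UNHEALTHY;
        }
        return Status.HEALTHY;
    }

    public static void main(String[] args) {
        long lastBeat = 0L;
        System.out.println(check(lastBeat, 10_000L)); // HEALTHY
        System.out.println(check(lastBeat, 20_000L)); // UNHEALTHY
        System.out.println(check(lastBeat, 31_000L)); // REMOVED
    }
}
```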

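Self-protection mechanisms

Eureka: the server tracks the proportion of failed lease renewals over a short window. If it reaches a certain threshold, self-preservation is triggered, and while it is active no service is evicted.

Nacos: when the ratio of healthy instances to all instances of a service falls below the threshold, Nacos returns every instance to the client, healthy or not. Some traffic is lost this way, but the remaining healthy instances in the cluster keep working normally. The threshold used here is the service's protectThreshold, the value doSrvIpxt() reads through service.getProtectThreshold().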
