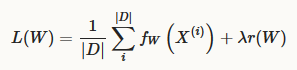

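The solver methods address the problem of minimizing the loss. Given a dataset D, the optimization objective is the average loss over all instances in the dataset: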

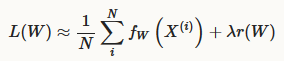

When the dataset D is very large, the loss is instead estimated on a mini-batch of N << |D| instances.
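For reference, here is a sketch of the two objectives in LaTeX, following the formulation in the Caffe solver documentation. The symbols are assumptions, since they are not defined in the text itself: $f_W(X^{(i)})$ is the loss on instance $X^{(i)}$, $r(W)$ is a regularization term, and $\lambda$ is its weight.

$$
L(W) = \frac{1}{|D|} \sum_{i=1}^{|D|} f_W\left(X^{(i)}\right) + \lambda\, r(W)
$$

$$
L(W) \approx \frac{1}{N} \sum_{i=1}^{N} f_W\left(X^{(i)}\right) + \lambda\, r(W)
$$

The second expression is a stochastic approximation of the first: each iteration draws a fresh mini-batch of N instances, so the cost of one step does not grow with |D|.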

Caffe provides the following six gradient-descent (GD) methods:

1. Stochastic Gradient Descent (type: "SGD")
2. AdaDelta (type: "AdaDelta")
3. Adaptive Gradient (type: "AdaGrad")
4. Adam (type: "Adam")
5. Nesterov's Accelerated Gradient (type: "Nesterov")
6. RMSprop (type: "RMSProp")

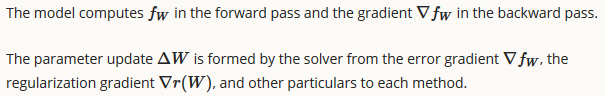

The chosen GD method is then used to update the weights and carry out the optimization.
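As an illustration, the update used by the default SGD solver can be written as follows, as given in the Caffe solver documentation. The symbols are assumptions, since the text does not name them: $\alpha$ is the learning rate (`base_lr`), $\mu$ is the momentum (`momentum`), and $V_t$ is the previous weight update.

$$
V_{t+1} = \mu V_t - \alpha \nabla L(W_t)
$$

$$
W_{t+1} = W_t + V_{t+1}
$$

The other five solvers replace this rule with their own, for example by scaling the gradient with a running estimate of its magnitude.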

The file "./caffe-master/src/caffe/proto/caffe.proto" shows how to configure the GD optimization algorithm in the solver.prototxt file.

    message SolverParameter {
      //////////////////////////////////////////////////////////////////////////////
      // Specifying the train and test networks
      //
      // Exactly one train net must be specified using one of the following fields:
      //     train_net_param, train_net, net_param, net
      // One or more test nets may be specified using any of the following fields:
      //     test_net_param, test_net, net_param, net
      // If more than one test net field is specified (e.g., both net and
      // test_net are specified), they will be evaluated in the field order given
      // above: (1) test_net_param, (2) test_net, (3) net_param/net.
      // A test_iter must be specified for each test_net.
      // A test_level and/or a test_stage may also be specified for each test_net.
      //////////////////////////////////////////////////////////////////////////////

      // type of the solver
      optional string type = 40 [default = "SGD"];

      // numerical stability for RMSProp, AdaGrad and AdaDelta and Adam
      optional float delta = 31 [default = 1e-8];

      // parameters for the Adam solver
      optional float momentum2 = 39 [default = 0.999];

      // RMSProp decay value
      // MeanSquare(t) = rms_decay*MeanSquare(t-1) + (1-rms_decay)*SquareGradient(t)
      optional float rms_decay = 38;

      // If true, print information about the state of the net that may help with
      // debugging learning problems.
      optional bool debug_info = 23 [default = false];

      // If false, don't save a snapshot after training finishes.
      optional bool snapshot_after_train = 28 [default = true];

      // DEPRECATED: old solver enum types, use string instead
      enum SolverType {
        SGD = 0;
        NESTEROV = 1;
        ADAGRAD = 2;
        RMSPROP = 3;
        ADADELTA = 4;
        ADAM = 5;
      }

      // DEPRECATED: use type instead of solver_type
      optional SolverType solver_type = 30 [default = SGD];
    }
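As a minimal sketch of how these fields are used, a solver.prototxt that selects the Adam solver might look like the following. The net path, snapshot prefix, and all numeric values are hypothetical placeholders chosen for illustration, not settings taken from this article.

```
# Hypothetical network definition; replace with your own file.
net: "path/to/train_val.prototxt"

# One test_iter is required per test net.
test_iter: 100
test_interval: 500

# Solver selection: one of "SGD", "AdaDelta", "AdaGrad", "Adam",
# "Nesterov", "RMSProp". Use the string field rather than the
# deprecated solver_type enum.
type: "Adam"

# Illustrative hyperparameters (assumed values).
base_lr: 0.001
lr_policy: "fixed"
momentum: 0.9      # first-moment decay for Adam
momentum2: 0.999   # second-moment decay; matches the proto default
delta: 1e-8        # numerical-stability term; matches the proto default

display: 100
max_iter: 10000
snapshot: 5000
snapshot_prefix: "path/to/snapshots/model"  # hypothetical prefix
solver_mode: GPU
```

Switching to another method should mostly be a matter of changing `type` and the method-specific fields, for instance setting `rms_decay` when using "RMSProp". Training is then typically launched with `caffe train --solver=solver.prototxt`.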
