MindSpore 25-Day Learning Camp, Day 11 | FCN Image Semantic Segmentation

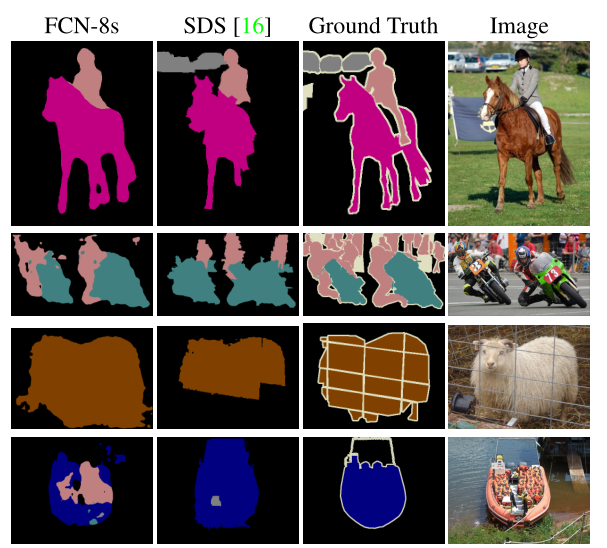

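Semantic segmentation is widely used in face recognition, object detection, medical imaging, satellite image analysis, autonomous driving, and other fields.

The goal of semantic segmentation is to classify every pixel in an image. The output has the same size as the input image, and each output pixel gives the class of the corresponding input pixel.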
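As a minimal illustration of per-pixel classification (a toy sketch with made-up scores, not part of the tutorial's pipeline), the snippet below turns a hypothetical C×H×W score array into an H×W label map by picking the highest-scoring class at each pixel. The function name `label_map` and the values are assumptions chosen for the example.

```python
def label_map(scores):
    """Return an H x W map of class indices from C x H x W per-class scores."""
    num_classes = len(scores)
    height, width = len(scores[0]), len(scores[0][0])
    return [
        [max(range(num_classes), key=lambda c: scores[c][y][x]) for x in range(width)]
        for y in range(height)
    ]


# Hypothetical scores for 2 classes on a 2 x 3 image.
toy_scores = [
    [[0.9, 0.2, 0.4], [0.1, 0.8, 0.3]],  # class 0
    [[0.1, 0.8, 0.6], [0.9, 0.2, 0.7]],  # class 1
]
toy_labels = label_map(toy_scores)
print(toy_labels)  # [[0, 1, 1], [1, 0, 1]]
```

The output has the same height and width as the input scores, which is the property the definition above describes.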

The FCN Model

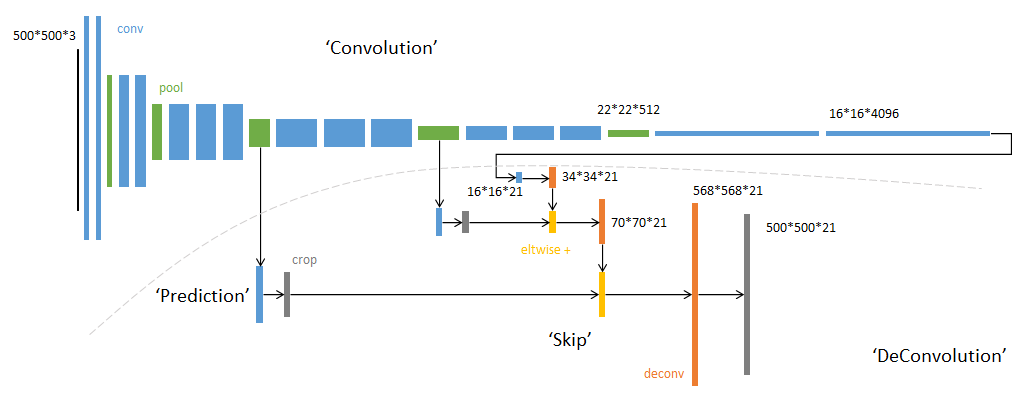

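A Fully Convolutional Network (FCN) is an end-to-end segmentation method: it makes pixel-level predictions and directly produces a label map of the same size as the original image.

FCN relies mainly on the following three techniques: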

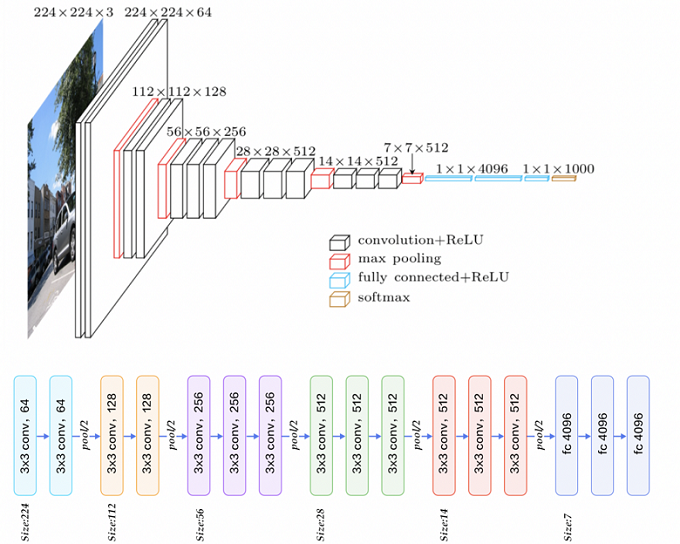

- Convolutionalization: VGG-16 is used as the FCN backbone.

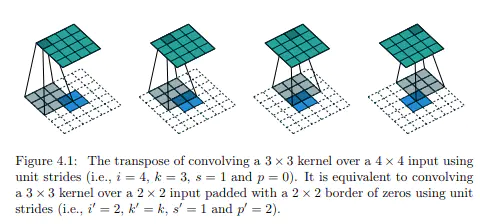

- Upsampling: deconvolution (transposed convolution) upsamples the feature map to restore the resolution of the input image.

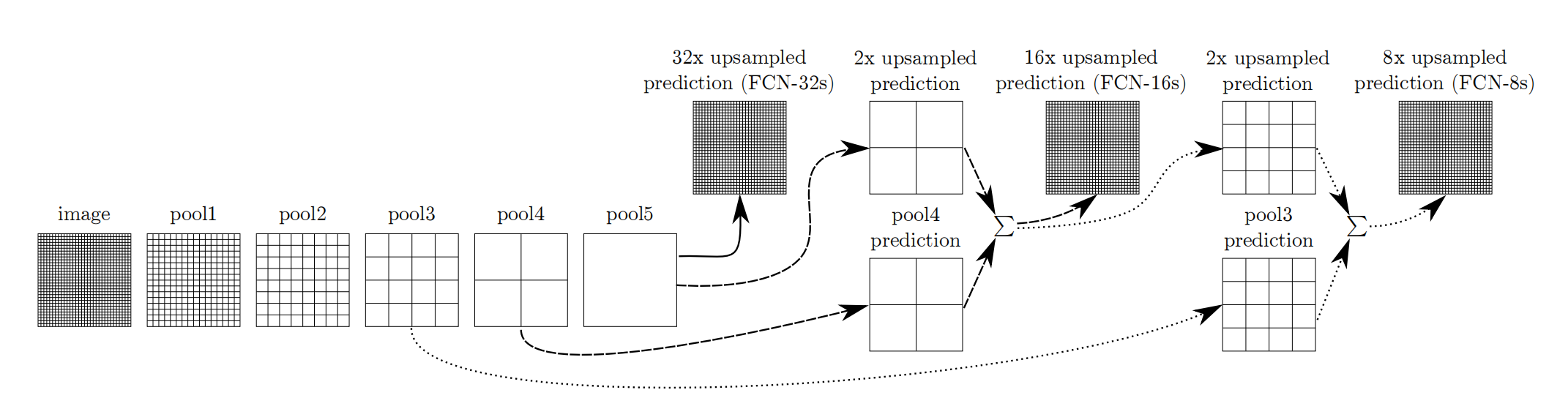

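- Skip structure: the final feature map is too small and has lost too much detail, so skip connections combine the prediction from the last layer, which carries more global information, with predictions from shallower layers, giving the result more local detail.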
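To make the upsampling step concrete, here is a 1-D toy sketch of a transposed convolution without padding. It is an illustration under stated assumptions, not the layer used in the network: the kernel `[0.5, 1.0, 0.5]` is fixed by hand so that the result looks like linear interpolation, whereas in FCN the kernel weights are learned, and `transposed_conv1d` is a hypothetical helper name.

```python
def transposed_conv1d(signal, kernel, stride):
    """1-D transposed convolution without padding.

    Each input value scatters a scaled copy of the kernel into the output,
    with consecutive copies shifted by `stride` positions.
    """
    out = [0.0] * ((len(signal) - 1) * stride + len(kernel))
    for i, value in enumerate(signal):
        for j, weight in enumerate(kernel):
            out[i * stride + j] += value * weight
    return out


upsampled = transposed_conv1d([1.0, 2.0, 3.0], [0.5, 1.0, 0.5], stride=2)
print(upsampled)  # [0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 1.5]
```

Three input samples become seven output samples; the interior values 1.0, 1.5, 2.0, 2.5, 3.0 interpolate between the inputs.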

Data Processing

Download the dataset

from download import download

url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/notebook/datasets/dataset_fcn8s.tar"

download(url, "./dataset", kind="tar", replace=True)

Create the training set

import cv2
import mindspore.dataset as ds
import numpy as np


class SegDataset:
    def __init__(
        self,
        image_mean,
        image_std,
        data_file="",
        batch_size=32,
        crop_size=512,
        max_scale=2.0,
        min_scale=0.5,
        ignore_label=255,
        num_classes=21,
        num_readers=2,
        num_parallel_calls=4,
    ):
        self.data_file = data_file
        self.batch_size = batch_size
        self.crop_size = crop_size
        self.image_mean = np.array(image_mean, dtype=np.float32)
        self.image_std = np.array(image_std, dtype=np.float32)
        self.max_scale = max_scale
        self.min_scale = min_scale
        self.ignore_label = ignore_label
        self.num_classes = num_classes
        self.num_readers = num_readers
        self.num_parallel_calls = num_parallel_calls
        # The random scale range must be non-empty.
        assert max_scale > min_scale

    def preprocess_dataset(self, image, label):
        # Decode the raw bytes into a BGR image and a single-channel label map.
        image_out = cv2.imdecode(np.frombuffer(image, dtype=np.uint8), cv2.IMREAD_COLOR)
        label_out = cv2.imdecode(
            np.frombuffer(label, dtype=np.uint8), cv2.IMREAD_GRAYSCALE
        )

        # Random scaling; nearest-neighbor keeps label values valid class ids.
        sc = np.random.uniform(self.min_scale, self.max_scale)
        new_h, new_w = int(sc * image_out.shape[0]), int(sc * image_out.shape[1])
        image_out = cv2.resize(image_out, (new_w, new_h), interpolation=cv2.INTER_CUBIC)
        label_out = cv2.resize(
            label_out, (new_w, new_h), interpolation=cv2.INTER_NEAREST
        )

        # Normalization
        image_out = (image_out - self.image_mean) / self.image_std

        # Pad up to at least crop_size; padded label pixels get ignore_label.
        out_h, out_w = max(new_h, self.crop_size), max(new_w, self.crop_size)
        pad_h, pad_w = out_h - new_h, out_w - new_w
        if pad_h > 0 or pad_w > 0:
            image_out = cv2.copyMakeBorder(
                image_out, 0, pad_h, 0, pad_w, cv2.BORDER_CONSTANT, value=0
            )
            label_out = cv2.copyMakeBorder(
                label_out,
                0,
                pad_h,
                0,
                pad_w,
                cv2.BORDER_CONSTANT,
                value=self.ignore_label,
            )

        # Random crop to crop_size x crop_size
        offset_h = np.random.randint(0, out_h - self.crop_size + 1)
        offset_w = np.random.randint(0, out_w - self.crop_size + 1)
        image_out = image_out[
            offset_h : offset_h + self.crop_size,
            offset_w : offset_w + self.crop_size,
            :,
        ]
        label_out = label_out[
            offset_h : offset_h + self.crop_size, offset_w : offset_w + self.crop_size
        ]

        # Random horizontal flip
        if np.random.uniform(0.0, 1.0) > 0.5:
            image_out = image_out[:, ::-1, :]
            label_out = label_out[:, ::-1]

        # HWC -> CHW; copy() makes the sliced arrays contiguous.
        image_out = image_out.transpose((2, 0, 1))
        image_out = image_out.copy()
        label_out = label_out.copy()
        label_out = label_out.astype("int32")
        return image_out, label_out

    def get_dataset(self):
        ds.config.set_numa_enable(True)
        dataset = ds.MindDataset(
            self.data_file,
            columns_list=["data", "label"],
            shuffle=True,
            num_parallel_workers=self.num_readers,
        )
        transforms_list = self.preprocess_dataset
        dataset = dataset.map(
            operations=transforms_list,
            input_columns=["data", "label"],
            output_columns=["data", "label"],
            num_parallel_workers=self.num_parallel_calls,
        )
        dataset = dataset.shuffle(buffer_size=self.batch_size * 10)
        dataset = dataset.batch(self.batch_size, drop_remainder=True)
        return dataset


# Parameters for creating the dataset
IMAGE_MEAN = [103.53, 116.28, 123.675]
IMAGE_STD = [57.375, 57.120, 58.395]
DATA_FILE = "dataset/dataset_fcn8s/mindname.mindrecord"

# Model training parameters
train_batch_size = 4
crop_size = 512
min_scale = 0.5
max_scale = 2.0
ignore_label = 255
num_classes = 21

# Instantiate the dataset
dataset = SegDataset(
    image_mean=IMAGE_MEAN,
    image_std=IMAGE_STD,
    data_file=DATA_FILE,
    batch_size=train_batch_size,
    crop_size=crop_size,
    max_scale=max_scale,
    min_scale=min_scale,
    ignore_label=ignore_label,
    num_classes=num_classes,
    num_readers=2,
    num_parallel_calls=4,
)
dataset = dataset.get_dataset()

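In the SegDataset class above:

- __init__() initializes the parameters.
- preprocess_dataset() defines the series of transformations applied to each input image:
  - randomly scale the image and label;
  - normalize the image;
  - pad or crop the image to crop_size × crop_size;
  - randomly flip it horizontally;
  - convert the image from <H,W,C> to <C,H,W>;
  - convert the label data to int32.
- get_dataset() defines the dataset and its transformations and configures parallel processing.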
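The pad-then-crop arithmetic in preprocess_dataset() can be checked in isolation. The sketch below is a simplified stand-in that only computes the padding and crop offsets for a given image size; `pad_and_crop_window` is a hypothetical helper, and it uses the standard library's `random` module (whose `randint` has an inclusive upper bound) in place of `np.random.randint`.

```python
import random


def pad_and_crop_window(height, width, crop_size, rng):
    """Return (pad_h, pad_w, offset_h, offset_w) for a pad-then-random-crop step."""
    # Pad bottom/right so both sides are at least crop_size.
    out_h, out_w = max(height, crop_size), max(width, crop_size)
    pad_h, pad_w = out_h - height, out_w - width
    # Pick the top-left corner so the crop window stays inside the padded image.
    offset_h = rng.randint(0, out_h - crop_size)  # inclusive upper bound
    offset_w = rng.randint(0, out_w - crop_size)
    return pad_h, pad_w, offset_h, offset_w


rng = random.Random(0)
# A 300 x 700 image is padded by 212 rows, then cropped horizontally.
small = pad_and_crop_window(300, 700, 512, rng)
print(small)
```

For an image shorter than crop_size the vertical offset is always 0, so every padded row (filled with ignore_label in the label map) ends up inside the crop.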

Visualize the training set

import matplotlib.pyplot as plt
import numpy as np

plt.figure(figsize=(16, 8))

# Display samples from the training set
for i in range(1, 9):
    plt.subplot(2, 4, i)
    show_data = next(dataset.create_dict_iterator())
    show_images = show_data["data"].asnumpy()
    show_images = np.clip(show_images, 0, 1)
    # Convert the image to HWC layout before displaying it
    plt.imshow(show_images[0].transpose(1, 2, 0))
    plt.axis("off")
    plt.subplots_adjust(wspace=0.05, hspace=0)
plt.show()

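Network Construction

Network structure

The layers below are defined inside the __init__ method of the FCN8s network class, which takes n_class as an argument and is instantiated in the training section.

- First convolution block: the input is a $512\times512\times3$ image, and every convolution is followed by an nn.BatchNorm2d(out_channels) and an nn.ReLU().

self.conv1 = nn.SequentialCell(
    nn.Conv2d(
        in_channels=3,
        out_channels=64,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(64),
    nn.ReLU(),
    nn.Conv2d(
        in_channels=64,
        out_channels=64,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(64),
    nn.ReLU(),
)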

- First pooling layer: max pooling, which reduces the feature map to $1/2$ of the original image size.

self.pool1 = nn.MaxPool2d(kernel_size=2, stride=2)

- Second convolution block:

self.conv2 = nn.SequentialCell(
    nn.Conv2d(
        in_channels=64,
        out_channels=128,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(128),
    nn.ReLU(),
    nn.Conv2d(
        in_channels=128,
        out_channels=128,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(128),
    nn.ReLU(),
)

- Second pooling layer: the feature map becomes $1/4$ of the original image size.

self.pool2 = nn.MaxPool2d(kernel_size=2, stride=2)

- Third convolution block:

self.conv3 = nn.SequentialCell(
    nn.Conv2d(
        in_channels=128,
        out_channels=256,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(256),
    nn.ReLU(),
    nn.Conv2d(
        in_channels=256,
        out_channels=256,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(256),
    nn.ReLU(),
    nn.Conv2d(
        in_channels=256,
        out_channels=256,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(256),
    nn.ReLU(),
)

- Third pooling layer: the feature map becomes $1/8$ of the original image size.

self.pool3 = nn.MaxPool2d(kernel_size=2, stride=2)

- Fourth convolution block:

self.conv4 = nn.SequentialCell(
    nn.Conv2d(
        in_channels=256,
        out_channels=512,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(512),
    nn.ReLU(),
    nn.Conv2d(
        in_channels=512,
        out_channels=512,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(512),
    nn.ReLU(),
    nn.Conv2d(
        in_channels=512,
        out_channels=512,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(512),
    nn.ReLU(),
)

- Fourth pooling layer: the feature map becomes $1/16$ of the original image size.

self.pool4 = nn.MaxPool2d(kernel_size=2, stride=2)

- Fifth convolution block:

self.conv5 = nn.SequentialCell(
    nn.Conv2d(
        in_channels=512,
        out_channels=512,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(512),
    nn.ReLU(),
    nn.Conv2d(
        in_channels=512,
        out_channels=512,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(512),
    nn.ReLU(),
    nn.Conv2d(
        in_channels=512,
        out_channels=512,
        kernel_size=3,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(512),
    nn.ReLU(),
)

- Fifth pooling layer: the feature map becomes $1/32$ of the original image size.

self.pool5 = nn.MaxPool2d(kernel_size=2, stride=2)

- Sixth and seventh convolution blocks: these keep the feature-map size unchanged and replace the fully connected layers.

self.conv6 = nn.SequentialCell(
    nn.Conv2d(
        in_channels=512,
        out_channels=4096,
        kernel_size=7,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(4096),
    nn.ReLU(),
)

self.conv7 = nn.SequentialCell(
    nn.Conv2d(
        in_channels=4096,
        out_channels=4096,
        kernel_size=1,
        weight_init="xavier_uniform",
    ),
    nn.BatchNorm2d(4096),
    nn.ReLU(),
)

self.score_fr = nn.Conv2d(
    in_channels=4096,
    out_channels=self.n_class,
    kernel_size=1,
    weight_init="xavier_uniform",
)

- Deconvolution layers:

self.upscore2 = nn.Conv2dTranspose(
    in_channels=self.n_class,
    out_channels=self.n_class,
    kernel_size=4,
    stride=2,
    weight_init="xavier_uniform",
)

self.score_pool4 = nn.Conv2d(
    in_channels=512,
    out_channels=self.n_class,
    kernel_size=1,
    weight_init="xavier_uniform",
)

self.upscore_pool4 = nn.Conv2dTranspose(
    in_channels=self.n_class,
    out_channels=self.n_class,
    kernel_size=4,
    stride=2,
    weight_init="xavier_uniform",
)

self.score_pool3 = nn.Conv2d(
    in_channels=256,
    out_channels=self.n_class,
    kernel_size=1,
    weight_init="xavier_uniform",
)

self.upscore8 = nn.Conv2dTranspose(
    in_channels=self.n_class,
    out_channels=self.n_class,
    kernel_size=16,
    stride=8,
    weight_init="xavier_uniform",
)

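Tensor operations

def construct(self, x):
    # VGG-16 style encoder: five conv blocks, each followed by 2x max pooling
    x1 = self.conv1(x)
    p1 = self.pool1(x1)
    x2 = self.conv2(p1)
    p2 = self.pool2(x2)
    x3 = self.conv3(p2)
    p3 = self.pool3(x3)
    x4 = self.conv4(p3)
    p4 = self.pool4(x4)
    x5 = self.conv5(p4)
    p5 = self.pool5(x5)

    # Convolutional replacements for the fully connected layers
    x6 = self.conv6(p5)
    x7 = self.conv7(x6)

    # Per-class scores at 1/32 resolution, upsampled 2x and fused with pool4
    sf = self.score_fr(x7)
    u2 = self.upscore2(sf)
    s4 = self.score_pool4(p4)
    f4 = s4 + u2

    # Upsample 2x again and fuse with pool3
    u4 = self.upscore_pool4(f4)
    s3 = self.score_pool3(p3)
    f3 = s3 + u4

    # Final 8x upsampling back to the input resolution
    out = self.upscore8(f3)
    return out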
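The additions `s4 + u2` and `s3 + u4` only work if the fused tensors have matching spatial sizes. The sketch below traces those sizes with plain integer arithmetic. It rests on assumptions that are not stated in the text above: that `nn.Conv2d` and `nn.Conv2dTranspose` are used with the "same" padding mode (output size `ceil(in / stride)` and `in * stride` respectively) and that `nn.MaxPool2d` uses "valid" padding. All helper names are hypothetical.

```python
import math


def conv_same(size, stride=1):
    """Output size of a convolution with 'same' padding (assumed)."""
    return math.ceil(size / stride)


def max_pool_valid(size, kernel=2, stride=2):
    """Output size of max pooling with 'valid' padding (assumed)."""
    return (size - kernel) // stride + 1


def transposed_conv_same(size, stride):
    """Output size of a transposed convolution with 'same' padding (assumed)."""
    return size * stride


def fcn8s_spatial_sizes(size=512):
    """Trace the feature-map side length through the construct() steps."""
    sizes = {"input": size}
    for name in ("pool1", "pool2", "pool3", "pool4", "pool5"):
        size = max_pool_valid(conv_same(size))
        sizes[name] = size
    sizes["score_fr"] = conv_same(sizes["pool5"])  # conv6, conv7, score_fr keep the size
    sizes["upscore2"] = transposed_conv_same(sizes["score_fr"], 2)
    assert sizes["upscore2"] == sizes["pool4"]  # needed for f4 = s4 + u2
    sizes["upscore_pool4"] = transposed_conv_same(sizes["upscore2"], 2)
    assert sizes["upscore_pool4"] == sizes["pool3"]  # needed for f3 = s3 + u4
    sizes["upscore8"] = transposed_conv_same(sizes["upscore_pool4"], 8)
    return sizes


sizes = fcn8s_spatial_sizes(512)
print(sizes)
```

Under these assumptions a 512-pixel side shrinks to 16 after the fifth pooling layer and is restored to 512 by the three upsampling steps (2 × 2 × 8 = 32).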

Training Preparation

Load part of the pretrained VGG-16 weights:

from download import download
from mindspore import load_checkpoint, load_param_into_net

url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/notebook/datasets/fcn8s_vgg16_pretrain.ckpt"
download(url, "fcn8s_vgg16_pretrain.ckpt", replace=True)


def load_vgg16():
    ckpt_vgg16 = "fcn8s_vgg16_pretrain.ckpt"
    param_vgg = load_checkpoint(ckpt_vgg16)
    # `net` is the global FCN8s instance created in the training section
    load_param_into_net(net, param_vgg)

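Loss function

Semantic segmentation classifies each pixel in an image, so it is still a classification problem; the cross-entropy loss nn.CrossEntropyLoss() is therefore used.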
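For intuition, here is a framework-free sketch of a per-pixel cross-entropy that skips pixels carrying an ignore label, in the spirit of the `ignore_index=255` argument passed to the loss in the training code. It is not MindSpore's implementation: the function name, the flat list-of-pixels layout, and averaging over the non-ignored pixels are assumptions made for the example.

```python
import math


def pixel_cross_entropy(logits, labels, ignore_index=255):
    """Mean cross-entropy over pixels whose label is not `ignore_index`.

    logits: one list of per-class scores per pixel; labels: one int per pixel.
    """
    total, count = 0.0, 0
    for scores, label in zip(logits, labels):
        if label == ignore_index:
            continue  # padded or unlabeled pixel: contributes nothing
        peak = max(scores)  # subtract the max for numerical stability
        log_sum_exp = peak + math.log(sum(math.exp(s - peak) for s in scores))
        total += log_sum_exp - scores[label]
        count += 1
    return total / count if count else 0.0


# Three hypothetical pixels with two classes; the last one is ignored.
toy_logits = [[2.0, 0.0], [0.0, 0.0], [5.0, -5.0]]
toy_targets = [0, 1, 255]
loss_value = pixel_cross_entropy(toy_logits, toy_targets)
print(round(loss_value, 4))
```

Ignoring label 255 matters here because preprocess_dataset() pads label maps with ignore_label, and those padded pixels should not influence training.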

Model evaluation metrics

These metrics are used to judge how good the trained model is. In the formulas below, $p_{ij}$ is the number of pixels of class $i$ predicted as class $j$, and there are $k+1$ classes.

- Pixel Accuracy (PA): the proportion of correctly labeled pixels among all pixels:

$$PA = \frac{\sum_{i=0}^k p_{ii}}{\sum_{i=0}^k\sum_{j=0}^k p_{ij}}$$

import mindspore as ms
import mindspore.nn as nn
import mindspore.train as train
import numpy as np


class PixelAccuracy(train.Metric):
    def __init__(self, num_class=21):
        super(PixelAccuracy, self).__init__()
        self.num_class = num_class

    def _generate_matrix(self, gt_image, pre_image):
        # Keep only pixels with a valid class id (drops ignored labels such as 255).
        mask = (gt_image >= 0) & (gt_image < self.num_class)
        # Encode each (ground truth, prediction) pair as a single index.
        label = self.num_class * gt_image[mask].astype("int") + pre_image[mask]
        count = np.bincount(label, minlength=self.num_class**2)
        confusion_matrix = count.reshape(self.num_class, self.num_class)
        return confusion_matrix

    def clear(self):
        self.confusion_matrix = np.zeros((self.num_class,) * 2)

    def update(self, *inputs):
        y_pred = inputs[0].asnumpy().argmax(axis=1)
        # Match the prediction's (batch, H, W) shape instead of hard-coding it.
        y = inputs[1].asnumpy().reshape(y_pred.shape)
        self.confusion_matrix += self._generate_matrix(y, y_pred)

    def eval(self):
        pixel_accuracy = (
            np.diag(self.confusion_matrix).sum() / self.confusion_matrix.sum()
        )
        return pixel_accuracy

- Mean Pixel Accuracy (MPA): compute the proportion of correctly classified pixels within each class, then average over the classes:

$$MPA = \frac{1}{k+1}\sum_{i=0}^k\frac{p_{ii}}{\sum_{j=0}^k p_{ij}}$$

class PixelAccuracyClass(train.Metric):
    def __init__(self, num_class=21):
        super(PixelAccuracyClass, self).__init__()
        self.num_class = num_class

    def _generate_matrix(self, gt_image, pre_image):
        mask = (gt_image >= 0) & (gt_image < self.num_class)
        label = self.num_class * gt_image[mask].astype("int") + pre_image[mask]
        count = np.bincount(label, minlength=self.num_class**2)
        confusion_matrix = count.reshape(self.num_class, self.num_class)
        return confusion_matrix

    def update(self, *inputs):
        y_pred = inputs[0].asnumpy().argmax(axis=1)
        # Match the prediction's (batch, H, W) shape instead of hard-coding it.
        y = inputs[1].asnumpy().reshape(y_pred.shape)
        self.confusion_matrix += self._generate_matrix(y, y_pred)

    def clear(self):
        self.confusion_matrix = np.zeros((self.num_class,) * 2)

    def eval(self):
        # Per-class accuracy: diagonal divided by the ground-truth row sums.
        mean_pixel_accuracy = np.diag(
            self.confusion_matrix
        ) / self.confusion_matrix.sum(axis=1)
        # nanmean skips classes that never appear in the ground truth.
        mean_pixel_accuracy = np.nanmean(mean_pixel_accuracy)
        return mean_pixel_accuracy

- Mean Intersection over Union (MIoU): the ratio of the intersection to the union of two sets (the ground truth and the prediction), averaged over the classes:

$$MIoU = \frac{1}{k+1}\sum_{i=0}^k\frac{p_{ii}}{\sum_{j=0}^k p_{ij}+\sum_{j=0}^k p_{ji}-p_{ii}}$$

class MeanIntersectionOverUnion(train.Metric):
    def __init__(self, num_class=21):
        super(MeanIntersectionOverUnion, self).__init__()
        self.num_class = num_class

    def _generate_matrix(self, gt_image, pre_image):
        mask = (gt_image >= 0) & (gt_image < self.num_class)
        label = self.num_class * gt_image[mask].astype("int") + pre_image[mask]
        count = np.bincount(label, minlength=self.num_class**2)
        confusion_matrix = count.reshape(self.num_class, self.num_class)
        return confusion_matrix

    def update(self, *inputs):
        y_pred = inputs[0].asnumpy().argmax(axis=1)
        # Match the prediction's (batch, H, W) shape instead of hard-coding it.
        y = inputs[1].asnumpy().reshape(y_pred.shape)
        self.confusion_matrix += self._generate_matrix(y, y_pred)

    def clear(self):
        self.confusion_matrix = np.zeros((self.num_class,) * 2)

    def eval(self):
        # Intersection is the diagonal; union is row sum + column sum - diagonal.
        mean_iou = np.diag(self.confusion_matrix) / (
            np.sum(self.confusion_matrix, axis=1)
            + np.sum(self.confusion_matrix, axis=0)
            - np.diag(self.confusion_matrix)
        )
        mean_iou = np.nanmean(mean_iou)
        return mean_iou

- Frequency Weighted Intersection over Union (FWIoU): each class's IoU is weighted by how frequently that class appears in the ground truth:

$$FWIoU = \frac{1}{\sum_{i=0}^k\sum_{j=0}^k p_{ij}}\sum_{i=0}^k\frac{\left(\sum_{j=0}^k p_{ij}\right)p_{ii}}{\sum_{j=0}^k p_{ij}+\sum_{j=0}^k p_{ji}-p_{ii}}$$

class FrequencyWeightedIntersectionOverUnion(train.Metric):
    def __init__(self, num_class=21):
        super(FrequencyWeightedIntersectionOverUnion, self).__init__()
        self.num_class = num_class

    def _generate_matrix(self, gt_image, pre_image):
        mask = (gt_image >= 0) & (gt_image < self.num_class)
        label = self.num_class * gt_image[mask].astype("int") + pre_image[mask]
        count = np.bincount(label, minlength=self.num_class**2)
        confusion_matrix = count.reshape(self.num_class, self.num_class)
        return confusion_matrix

    def update(self, *inputs):
        y_pred = inputs[0].asnumpy().argmax(axis=1)
        # Match the prediction's (batch, H, W) shape instead of hard-coding it.
        y = inputs[1].asnumpy().reshape(y_pred.shape)
        self.confusion_matrix += self._generate_matrix(y, y_pred)

    def clear(self):
        self.confusion_matrix = np.zeros((self.num_class,) * 2)

    def eval(self):
        # Class frequency: share of ground-truth pixels belonging to each class.
        freq = np.sum(self.confusion_matrix, axis=1) / np.sum(self.confusion_matrix)
        iu = np.diag(self.confusion_matrix) / (
            np.sum(self.confusion_matrix, axis=1)
            + np.sum(self.confusion_matrix, axis=0)
            - np.diag(self.confusion_matrix)
        )
        frequency_weighted_iou = (freq[freq > 0] * iu[freq > 0]).sum()
        return frequency_weighted_iou
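The four metrics above all derive from one confusion matrix, so they can be checked by hand on a tiny example. The following sketch (plain NumPy, independent of `train.Metric`; the function names and the toy arrays are made up for illustration) builds the matrix with the same `bincount` encoding and evaluates the four formulas.

```python
import numpy as np


def confusion_matrix(gt, pred, num_class):
    """Rows index the ground-truth class, columns the predicted class."""
    mask = (gt >= 0) & (gt < num_class)  # drops ignored labels such as 255
    index = num_class * gt[mask].astype(int) + pred[mask]
    return np.bincount(index, minlength=num_class**2).reshape(num_class, num_class)


def segmentation_metrics(cm):
    """Compute PA, MPA, MIoU and FWIoU from a confusion matrix."""
    cm = cm.astype(float)
    diag, rows, cols = np.diag(cm), cm.sum(axis=1), cm.sum(axis=0)
    with np.errstate(divide="ignore", invalid="ignore"):
        class_acc = diag / rows  # NaN for classes absent from the ground truth
        iou = diag / (rows + cols - diag)
    freq = rows / cm.sum()
    return {
        "PA": diag.sum() / cm.sum(),
        "MPA": np.nanmean(class_acc),
        "MIoU": np.nanmean(iou),
        "FWIoU": (freq[freq > 0] * iou[freq > 0]).sum(),
    }


# Toy 2 x 3 label maps with 3 classes; the pixel labeled 255 is ignored.
gt = np.array([[0, 0, 1], [1, 2, 255]])
pred = np.array([[0, 1, 1], [1, 2, 0]])
cm = confusion_matrix(gt, pred, num_class=3)
metrics = segmentation_metrics(cm)
print(cm.tolist())  # [[1, 1, 0], [0, 2, 0], [0, 0, 1]]
print(metrics)
```

Of the five valid pixels, four are correct, so PA is 0.8; the per-class IoUs are 1/2, 2/3 and 1, giving an MIoU of about 0.722 and an FWIoU of about 0.667.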

Model Training

Load the pretrained VGG-16 parameters, define the hyperparameters, instantiate the loss function and optimizer, compile the network with the Model interface, and then start training:

import mindspore
import mindspore.nn as nn
from mindspore import Tensor
from mindspore.train import (
    CheckpointConfig,
    LossMonitor,
    Model,
    ModelCheckpoint,
    TimeMonitor,
)

device_target = "Ascend"
mindspore.set_context(mode=mindspore.PYNATIVE_MODE, device_target=device_target)

train_batch_size = 4
num_classes = 21

# Initialize the model structure
net = FCN8s(n_class=21)

# Load the pretrained VGG-16 parameters
load_vgg16()

# Compute the learning rate
min_lr = 0.0005
base_lr = 0.05
train_epochs = 1
iters_per_epoch = dataset.get_dataset_size()
total_step = iters_per_epoch * train_epochs

lr_scheduler = mindspore.nn.cosine_decay_lr(
    min_lr, base_lr, total_step, iters_per_epoch, decay_epoch=2
)
lr = Tensor(lr_scheduler[-1])

# Define the loss function
loss = nn.CrossEntropyLoss(ignore_index=255)

# Define the optimizer
optimizer = nn.Momentum(
    params=net.trainable_params(), learning_rate=lr, momentum=0.9, weight_decay=0.0001
)

# Define the loss scale
scale_factor = 4
scale_window = 3000
# Pass by keyword: the first positional parameter is init_loss_scale,
# so positional arguments would not set scale_factor and scale_window.
loss_scale_manager = mindspore.amp.DynamicLossScaleManager(
    scale_factor=scale_factor, scale_window=scale_window
)

# Initialize the model
if device_target == "Ascend":
    model = Model(
        net,
        loss_fn=loss,
        optimizer=optimizer,
        loss_scale_manager=loss_scale_manager,
        metrics={
            "pixel accuracy": PixelAccuracy(),
            "mean pixel accuracy": PixelAccuracyClass(),
            "mean IoU": MeanIntersectionOverUnion(),
            "frequency weighted IoU": FrequencyWeightedIntersectionOverUnion(),
        },
    )
else:
    model = Model(
        net,
        loss_fn=loss,
        optimizer=optimizer,
        metrics={
            "pixel accuracy": PixelAccuracy(),
            "mean pixel accuracy": PixelAccuracyClass(),
            "mean IoU": MeanIntersectionOverUnion(),
            "frequency weighted IoU": FrequencyWeightedIntersectionOverUnion(),
        },
    )

# Set the parameters for saving ckpt files
time_callback = TimeMonitor(data_size=iters_per_epoch)
loss_callback = LossMonitor()
callbacks = [time_callback, loss_callback]
save_steps = 330
keep_checkpoint_max = 5
config_ckpt = CheckpointConfig(
    save_checkpoint_steps=10, keep_checkpoint_max=keep_checkpoint_max
)
ckpt_callback = ModelCheckpoint(prefix="FCN8s", directory="./ckpt", config=config_ckpt)
callbacks.append(ckpt_callback)

model.train(train_epochs, dataset, callbacks=callbacks)
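To see what kind of list the learning-rate schedule produces, here is a standalone re-implementation sketch. It assumes the epoch-wise cosine formula given in the MindSpore documentation for `cosine_decay_lr`, namely `min_lr + 0.5 * (max_lr - min_lr) * (1 + cos(pi * epoch / decay_epoch))` with `epoch = floor(step / step_per_epoch)`; treat it as an approximation for intuition rather than the library's code. The function name and the 3-steps-per-epoch setting are hypothetical.

```python
import math


def cosine_decay_schedule(min_lr, max_lr, total_step, step_per_epoch, decay_epoch):
    """Per-step learning rates that decay once per epoch along a cosine curve."""
    delta = 0.5 * (max_lr - min_lr)
    schedule = []
    for step in range(total_step):
        epoch = min(step // step_per_epoch, decay_epoch)
        schedule.append(min_lr + delta * (1 + math.cos(math.pi * epoch / decay_epoch)))
    return schedule


# Two epochs of 3 steps each (a made-up size) with the tutorial's lr bounds.
two_epochs = cosine_decay_schedule(0.0005, 0.05, 6, 3, 2)
# A single epoch, as in the training code above (train_epochs = 1).
one_epoch = cosine_decay_schedule(0.0005, 0.05, 3, 3, 2)
print(two_epochs)
print(one_epoch)
```

Under this assumed formula, every step of a single-epoch run has epoch index 0, so the last entry of the schedule equals base_lr; the training code above takes that last entry as a constant learning rate.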

Model Evaluation

IMAGE_MEAN = [103.53, 116.28, 123.675]
IMAGE_STD = [57.375, 57.120, 58.395]
DATA_FILE = "dataset/dataset_fcn8s/mindname.mindrecord"

# Download the trained weights file
url = "https://mindspore-website.obs.cn-north-4.myhuaweicloud.com/notebook/datasets/FCN8s.ckpt"
download(url, "FCN8s.ckpt", replace=True)

net = FCN8s(n_class=num_classes)

ckpt_file = "FCN8s.ckpt"
param_dict = load_checkpoint(ckpt_file)
load_param_into_net(net, param_dict)

if device_target == "Ascend":
    model = Model(
        net,
        loss_fn=loss,
        optimizer=optimizer,
        loss_scale_manager=loss_scale_manager,
        metrics={
            "pixel accuracy": PixelAccuracy(),
            "mean pixel accuracy": PixelAccuracyClass(),
            "mean IoU": MeanIntersectionOverUnion(),
            "frequency weighted IoU": FrequencyWeightedIntersectionOverUnion(),
        },
    )
else:
    model = Model(
        net,
        loss_fn=loss,
        optimizer=optimizer,
        metrics={
            "pixel accuracy": PixelAccuracy(),
            "mean pixel accuracy": PixelAccuracyClass(),
            "mean IoU": MeanIntersectionOverUnion(),
            "frequency weighted IoU": FrequencyWeightedIntersectionOverUnion(),
        },
    )

# Instantiate the dataset
dataset = SegDataset(
    image_mean=IMAGE_MEAN,
    image_std=IMAGE_STD,
    data_file=DATA_FILE,
    batch_size=train_batch_size,
    crop_size=crop_size,
    max_scale=max_scale,
    min_scale=min_scale,
    ignore_label=ignore_label,
    num_classes=num_classes,
    num_readers=2,
    num_parallel_calls=4,
)
dataset_eval = dataset.get_dataset()

model.eval(dataset_eval)

Model Inference

Run inference with the trained network:

import cv2
import matplotlib.pyplot as plt

net = FCN8s(n_class=num_classes)

# Load the trained weights
ckpt_file = "FCN8s.ckpt"
param_dict = load_checkpoint(ckpt_file)
load_param_into_net(net, param_dict)

eval_batch_size = 4
img_lst = []
mask_lst = []
res_lst = []

# Show the inference results (top row: input images, bottom row: predictions)
plt.figure(figsize=(8, 5))
show_data = next(dataset_eval.create_dict_iterator())
show_images = show_data["data"].asnumpy()
mask_images = show_data["label"].reshape([4, 512, 512])
show_images = np.clip(show_images, 0, 1)

for i in range(eval_batch_size):
    img_lst.append(show_images[i])
    mask_lst.append(mask_images[i])

# Predicted class per pixel: argmax over the class dimension
res = net(show_data["data"]).asnumpy().argmax(axis=1)

for i in range(eval_batch_size):
    plt.subplot(2, 4, i + 1)
    plt.imshow(img_lst[i].transpose(1, 2, 0))
    plt.axis("off")
    plt.subplots_adjust(wspace=0.05, hspace=0.02)
    plt.subplot(2, 4, i + 5)
    plt.imshow(res[i])
    plt.axis("off")
    plt.subplots_adjust(wspace=0.05, hspace=0.02)
plt.show()

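Summary

This section introduced the FCN network for image semantic segmentation. Starting from the network structure, it covered how to transform and create the image dataset, the complete network architecture and its tensor operations, and how to load a pretrained model. It also introduced four metrics for evaluating model performance. Working through this section gave me a rough idea of how to build a network model from the architecture described in a paper.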
