阿里研发的一个离线数据同步工具

1.Windows安装datax

1.1在java环境基础上,还要安装python,因为datax启动命令是执行的python命令

在bin目录下,打开控制台执行就行

python datax.py {YOUR_JOB.json}1.2.安装datax

2.datax出现乱码

输入CHCP 65001 回车,进入新的页面输入命令,打印日志就不会乱码

3.实践datax迁移的三种情况

1.mysqlTOmysql

2.csvTOmysql

3.csvTOcsv----(这个生成文件名不对,还请路过大神指教)

以下为json文件配置,亲测有效

三个json文件以及输出下载地址

datax的job文件夹job.zip-互联网其他资源-CSDN下载

1.mysqlTOmysql

配置mysqlreader,mysqlwriter,以及各自的数据库连接,表名,用户名,密码

踩坑记录

java.sql.SQLException: No suitable driver found for ["jdbc:mysql://localhost:3306/错误原因: reader过程jdbcurl有[],writer过程jdbcurl没有[]

{

"job":{

"content":[

{

"reader":{

"name":"mysqlreader",

"parameter":{

"column":[

"order1",

"order2"

],

"connection":[

{

"jdbcUrl":[

"jdbc:mysql://127.0.0.1:3306/devdb?useUnicode=true&characterEncoding=utf-8&serverTimezone=Asia/Shanghai&multiple&allowMultiQueries=true"

],

"table":[

"testorder"

]

}

],

"password":"root",

"username":"root"

}

},

"writer":{

"name":"mysqlwriter",

"parameter":{

"column":[

"order1",

"order2"

],

"connection":[

{

"jdbcUrl":

"jdbc:mysql://127.0.0.1:3306/devdb?useUnicode=true&characterEncoding=utf-8&serverTimezone=Asia/Shanghai&multiple&allowMultiQueries=true"

,

"table":[

"testorder2"

]

}

],

"password":"root",

"username":"root"

}

}

}

],

"setting":{

"speed":{

"channel":"1"

}

}

}

}

2.csvTOmysql

配置txtfilereader,mysqlwriter,csv文件路径和mysql连接信息

{

"job": {

"content": [

{

"reader": {

"name": "txtfilereader",

"parameter": {

"path": ["D:/UTIL/datax/datax/job/testcsv.csv"],

"encoding":"utf-8",

"column": [

{

"index": 0,

"type": "string"

},

{

"index": 1,

"type": "string"

}

],

"skipHeader": "true",

"fieldDelimiter":","

}

},

"writer":{

"name":"mysqlwriter",

"parameter":{

"column":[

"order1",

"order2"

],

"connection":[

{

"jdbcUrl":

"jdbc:mysql://127.0.0.1:3306/devdb?useUnicode=true&characterEncoding=utf-8&serverTimezone=Asia/Shanghai&multiple&allowMultiQueries=true"

,

"table":[

"testorder2"

]

}

],

"password":"root",

"username":"root",

"writeMode":"insert"

}

}

}

],

"setting": {

"speed": {

"channel": "2"

}

}

}

}3.csvTOcsv

踩坑记录

1.控制台输出日志报脏数据

错误原因:csv文件创建方式不对

我之前错误创建:直接把创建好的.xlsx文件,后缀改成了.csv

正确方式:如图

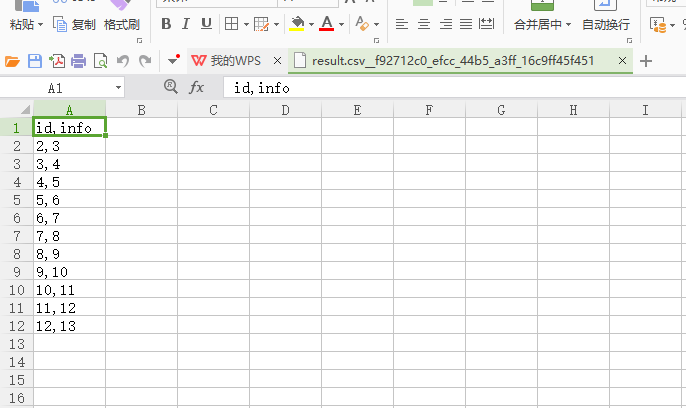

2.开始,输出的csv文件如下图,没有按照列分割

解决办法:在输出的csv配置分隔符为制表符 \t

"fieldDelimiter":"\t"

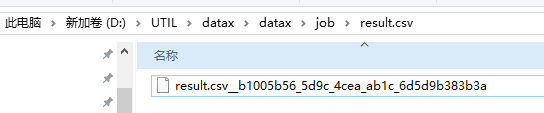

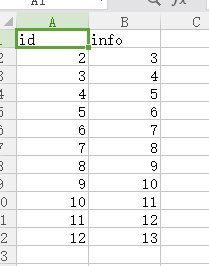

正确输出格式(文件名问题至今未解决)

json文件

{

"job": {

"content": [

{

"reader": {

"name": "txtfilereader",

"parameter": {

"path": ["D:/UTIL/datax/datax/job/testcsv.csv"],

"encoding":"utf-8",

"column": [

{

"index": 0,

"type": "string"

},

{

"index": 1,

"type": "string"

}

],

"skipHeader": "true"

}

},

"writer": {

"name": "txtfilewriter",

"parameter": {

"path": "D:/UTIL/datax/datax/job/result.csv",

"fileName": "result.csv",

"writeMode": "truncate",

"fileType": "csv",

"header":["id", "info"],

"fieldDelimiter":"\t"

}

}

}

],

"setting": {

"speed": {

"channel": "2"

}

}

}

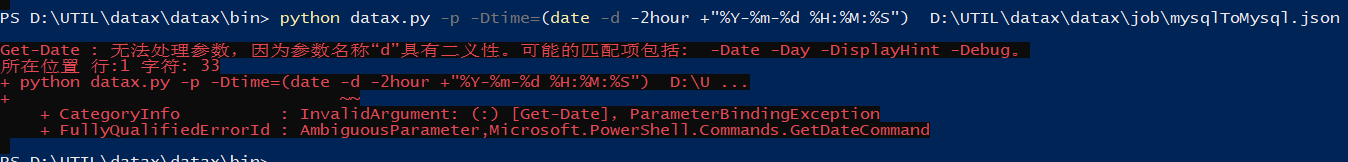

}4.动态传参 -p -D

python datax.py -p -Dtime="'2020-07-28'" D:\UTIL\datax\datax\job\mysqlToMysql.json"where":"removeTime<=${time}",5.当无法动态传参时,将语句直接写到json文件里

6.querySql

与where区别:写到connection中 ,有querySql的情况下,connection中不需要table属性,parameter中不需要column属性

6.1不带querySql的

"reader":{

"name":"mysqlreader",

"parameter":{

"column":[

"order1",

"order2"

],

"connection":[

{

"jdbcUrl":[

"jdbc:mysql://127.0.0.1:3306/devdb?useUnicode=true&characterEncoding=utf-8&serverTimezone=Asia/Shanghai&multiple&allowMultiQueries=true"

],

"table":[

"testorder1"

]

}

],

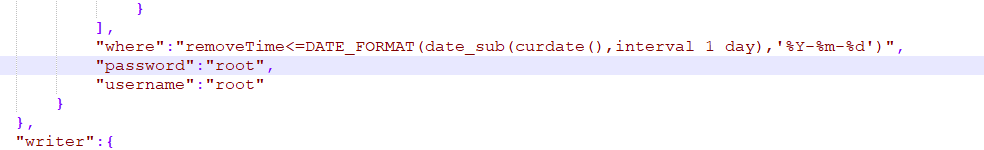

"where":"removeTime<=DATE_FORMAT(date_sub(curdate(),interval 1 day),'%Y-%m-%d')",

"password":"root",

"username":"root"

}

},6.2带querySql的

"reader":{

"name":"mysqlreader",

"parameter":{

"connection":[

{

"jdbcUrl":[

"jdbc:mysql://127.0.0.1:3306/devdb?useUnicode=true&characterEncoding=utf-8&serverTimezone=Asia/Shanghai&multiple&allowMultiQueries=true"

],

"querySql":[

"SELECT count(order1) ,order1 from testorder1 GROUP BY order1;"

]

}

],

"password":"root",

"username":"root"

}

}

905

905

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?