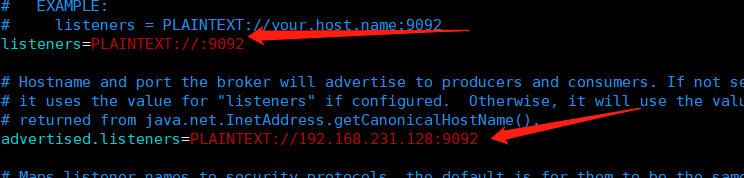

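First, start Kafka. Note that you must edit the broker configuration file to allow remote connections (typically the `listeners` / `advertised.listeners` settings in `config/server.properties`).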
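Before wiring up Spring Boot, it can help to confirm that the broker port is reachable from the development machine. Below is a minimal JDK-only sketch. It assumes the broker address from step 2 (`192.168.231.128:9092`), and `BrokerProbe` / `isReachable` are hypothetical names. It only checks that the TCP port accepts connections. If `advertised.listeners` still points at `localhost`, this probe can succeed while the Kafka client later fails when it connects to the addresses returned in the broker metadata.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

// Hypothetical helper: a plain TCP reachability check for a Kafka listener.
// It does not speak the Kafka protocol and does not validate advertised.listeners.
final class BrokerProbe {

    // Returns true if a TCP connection to host:port succeeds within timeoutMs.
    static boolean isReachable(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            // Connection refused, timed out, or host could not be resolved.
            return false;
        }
    }

    public static void main(String[] args) {
        // Defaults to the broker address used in the Spring Boot configuration (assumption).
        String host = args.length > 0 ? args[0] : "192.168.231.128";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 9092;
        System.out.println(host + ":" + port + " reachable = " + isReachable(host, port, 3000));
    }
}
```

If this prints `false`, check the broker's listener settings and any firewall on the Kafka host before debugging the Spring side.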

1. Create a Spring Boot project and add the Kafka dependency

```xml
<!-- No <version> needed: it is managed by the Spring Boot parent/BOM -->
<dependency>
    <groupId>org.springframework.kafka</groupId>
    <artifactId>spring-kafka</artifactId>
</dependency>
```

2. Configure Kafka in the main configuration file (`application.properties`)

```properties
#============== kafka ===================
# Kafka broker address(es); separate multiple brokers with commas
spring.kafka.bootstrap-servers=192.168.231.128:9092

#=============== producer =======================
# Number of retries when a send fails (0 = no retries)
spring.kafka.producer.retries=0
# Maximum size of a per-partition batch, in bytes (not a message count)
spring.kafka.producer.batch-size=16384
# Total memory (bytes) the producer may use to buffer records waiting to be sent
spring.kafka.producer.buffer-memory=33554432
# Serializers for the message key and value
spring.kafka.producer.key-serializer=org.apache.kafka.common.serialization.StringSerializer
spring.kafka.producer.value-serializer=org.apache.kafka.common.serialization.StringSerializer

#=============== consumer =======================
# Default consumer group id
spring.kafka.consumer.group-id=test-hello-group
# Start from the earliest offset when the group has no committed offset
spring.kafka.consumer.auto-offset-reset=earliest
# Commit offsets automatically, every 100 ms
spring.kafka.consumer.enable-auto-commit=true
spring.kafka.consumer.auto-commit-interval=100
# Deserializers for the message key and value
spring.kafka.consumer.key-deserializer=org.apache.kafka.common.serialization.StringDeserializer
spring.kafka.consumer.value-deserializer=org.apache.kafka.common.serialization.StringDeserializer
```
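Spring Boot translates these `spring.kafka.*` properties into native Kafka client settings. To make that mapping explicit, which also helps when reading the official Kafka configuration reference, here is a minimal sketch that builds the equivalent `java.util.Properties` by hand. `KafkaClientConfigs` and its methods are hypothetical names. Properties like these are what the plain `kafka-clients` `KafkaProducer` / `KafkaConsumer` constructors accept. With Spring Boot you normally do not write this code yourself.

```java
import java.util.Properties;

// Hypothetical helper: the Spring Boot settings above, expressed with the
// native Kafka client keys that the spring.kafka.* properties map onto.
final class KafkaClientConfigs {

    static final String BOOTSTRAP = "192.168.231.128:9092";

    static Properties producerProps() {
        Properties p = new Properties();
        p.put("bootstrap.servers", BOOTSTRAP);
        p.put("retries", "0");                // spring.kafka.producer.retries
        p.put("batch.size", "16384");         // spring.kafka.producer.batch-size (bytes)
        p.put("buffer.memory", "33554432");   // spring.kafka.producer.buffer-memory (bytes)
        p.put("key.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        p.put("value.serializer", "org.apache.kafka.common.serialization.StringSerializer");
        return p;
    }

    static Properties consumerProps() {
        Properties p = new Properties();
        p.put("bootstrap.servers", BOOTSTRAP);
        p.put("group.id", "test-hello-group");      // spring.kafka.consumer.group-id
        p.put("auto.offset.reset", "earliest");     // spring.kafka.consumer.auto-offset-reset
        p.put("enable.auto.commit", "true");        // spring.kafka.consumer.enable-auto-commit
        p.put("auto.commit.interval.ms", "100");    // spring.kafka.consumer.auto-commit-interval
        p.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        p.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
        return p;
    }

    public static void main(String[] args) {
        producerProps().forEach((k, v) -> System.out.println("producer " + k + "=" + v));
        consumerProps().forEach((k, v) -> System.out.println("consumer " + k + "=" + v));
    }
}
```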

3. Write the producer

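```java
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.kafka.core.KafkaTemplate;
import org.springframework.stereotype.Component;

@Component
public class KafkaProvider {

    // KafkaTemplate is auto-configured by Spring Boot from the producer settings above
    @Autowired
    private KafkaTemplate<String, String> kafkaTemplate;

    // Sends "hello kafka" (with no key) to the "codesheep" topic; send() is asynchronous
    public void sendMessage() {
        kafkaTemplate.send("codesheep", "hello kafka");
    }
}
```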

4. Write the consumer

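```java
import org.apache.kafka.clients.consumer.ConsumerRecord;
import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.stereotype.Component;

@Component
public class KafkaConsumer {

    // The topic name here must match the one the producer sends to;
    // the group id comes from spring.kafka.consumer.group-id
    @KafkaListener(topics = {"codesheep"})
    public void receive(ConsumerRecord<String, String> consumerRecord) {
        System.out.println("consumer is invoked");
        String topic = consumerRecord.topic();
        String value = consumerRecord.value(); // already a String thanks to StringDeserializer
        System.out.println("value " + value);
        System.out.println(topic);
    }
}
```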
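The listener can read the record value as a `String` because both sides use String (de)serializers. The producer's `StringSerializer` turns `"hello kafka"` into bytes, and the consumer's `StringDeserializer` turns those bytes back into a string. The JDK-only sketch below mimics that round trip using the default UTF-8 encoding. It is an illustration, not Kafka's own classes, and `StringCodecDemo` is a hypothetical name.

```java
import java.nio.charset.StandardCharsets;

// Hypothetical stand-ins that mirror what Kafka's StringSerializer and
// StringDeserializer do with their default UTF-8 encoding.
final class StringCodecDemo {

    // Producer side: String -> bytes (null stays null).
    static byte[] serialize(String data) {
        return data == null ? null : data.getBytes(StandardCharsets.UTF_8);
    }

    // Consumer side: bytes -> String (null stays null).
    static String deserialize(byte[] data) {
        return data == null ? null : new String(data, StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        byte[] wire = serialize("hello kafka"); // roughly what is stored as the record value
        System.out.println(wire.length + " bytes -> " + deserialize(wire));
    }
}
```

Running `main` prints `11 bytes -> hello kafka`. If the producer and consumer were configured with mismatched (de)serializers, this round trip would break, which is why the two sets of properties in step 2 mirror each other.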
