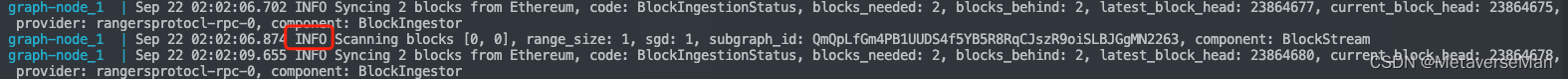

The logs show that block syncing has already started, but queries return nothing.

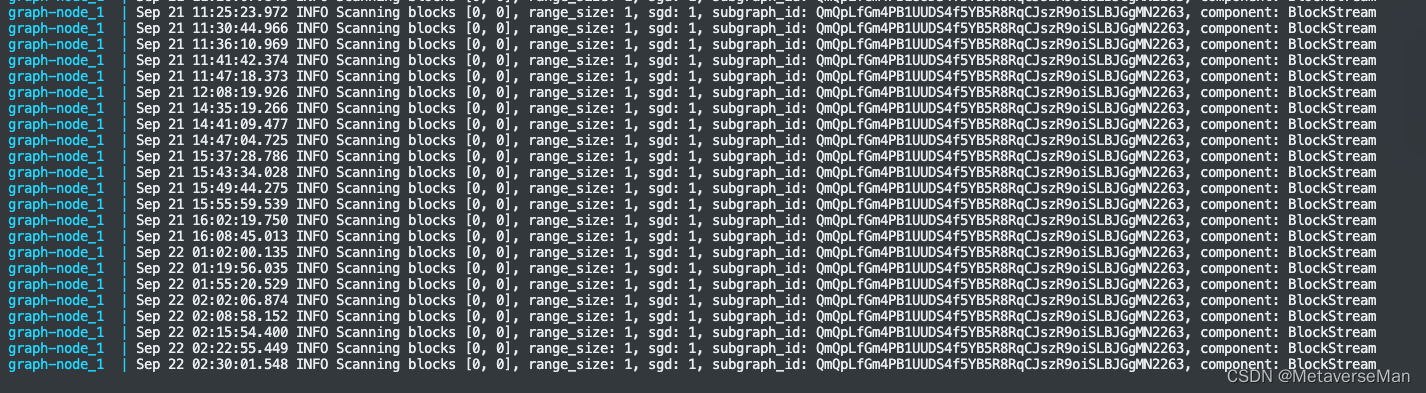

At first I suspected that `[0, 0]` was wrong, but then I found this page, which says it is correct: https://docs.skale.network/develop/using-graph

Check the sync logs with:

`docker-compose logs -f | grep Scanning`

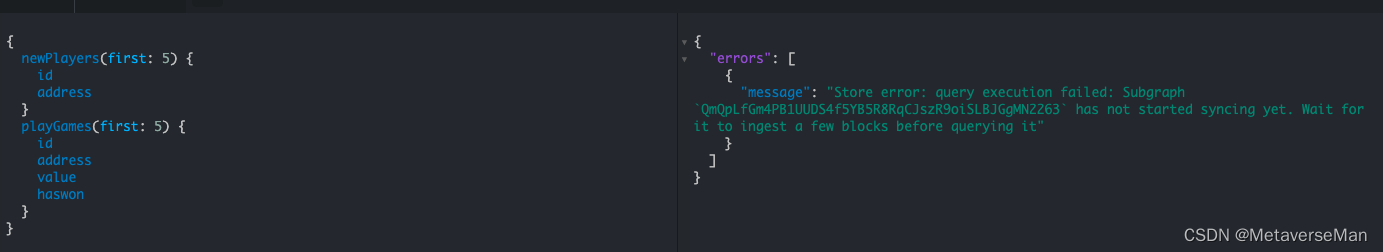

Syncing is clearly happening, yet I still can't query any data.

------------------------ divider ------------------------

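Folks, I got it working!!! It turns out the deployment itself was fine; the problem was the RPC node of the chain I had chosen. And `[0, 0]` is NOT correct: the scanned block range should keep growing.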

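After a few small tweaks to the points I thought might be wrong, I redeployed on the Rangers chain I had been using, and it still didn't work!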

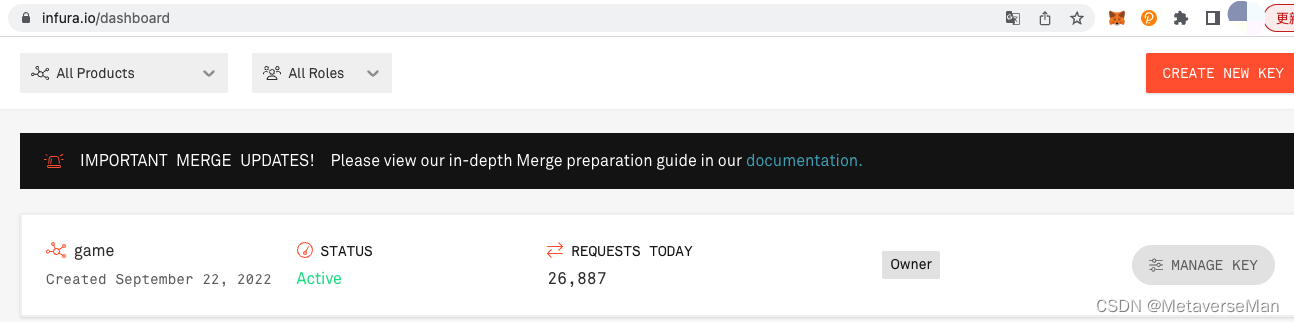

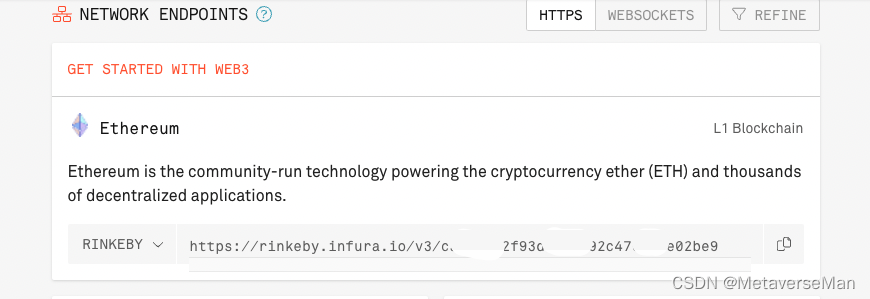

So I decided to try a different chain. I deployed the contract to the Rinkeby testnet and used an Infura node:

`https://rinkeby.infura.io/v3/<your infura api key>`
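Since the root cause turned out to be the RPC node, it may be worth checking an endpoint before pointing graph-node at it. Below is a minimal Python sketch, assuming only the standard library and the standard Ethereum JSON-RPC method `eth_blockNumber`; the helper names are hypothetical. A successful reply only shows that the endpoint is reachable and returns blocks; it does not prove the node serves everything graph-node needs (for example `eth_getLogs` over large block ranges).

```python
import json
import urllib.request

# Assumption: rpc_url is the same endpoint you put in docker-compose.yml,
# e.g. https://rinkeby.infura.io/v3/<your infura api key>.


def build_block_number_request(rpc_url):
    """Build (but do not send) a JSON-RPC 2.0 eth_blockNumber request."""
    body = json.dumps({"jsonrpc": "2.0", "method": "eth_blockNumber",
                       "params": [], "id": 1}).encode()
    return urllib.request.Request(rpc_url, data=body,
                                  headers={"Content-Type": "application/json"},
                                  method="POST")


def parse_block_number(raw):
    """Decode the hex "result" field; raise if the node returned an error."""
    reply = json.loads(raw)
    if "error" in reply:
        raise RuntimeError(f"RPC error: {reply['error']}")
    return int(reply["result"], 16)


def latest_block(rpc_url, timeout=10):
    """Send the request and return the node's latest block number."""
    with urllib.request.urlopen(build_block_number_request(rpc_url),
                                timeout=timeout) as resp:
        return parse_block_number(resp.read())
```

Calling `latest_block(...)` a few times with your endpoint should return a number that keeps increasing; an exception, or a number that never moves, points at the RPC node rather than at the subgraph.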

Then I deployed following the same steps as in my previous post. The graph-node itself does not need to be redeployed, though; just run:

`docker-compose down`

`vim docker-compose.yml`

(change this line: `ethereum: 'rinkeby:https://rinkeby.infura.io/v3/<your infura api key>'`)

`docker-compose up -d`
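The `ethereum` value has the form `'<network>:<rpc url>'`, and the network name (`rinkeby` here) must match the `network:` field of the data source in `subgraph.yaml`. If you would rather script this edit than do it in vim, a minimal Python sketch could look like the following. It assumes the compose file contains exactly one single-quoted `ethereum:` entry, as in the line above; `set_ethereum_endpoint` is a hypothetical helper.

```python
import re

# Matches the graph-node `ethereum: '<network>:<rpc url>'` environment entry.
ETHEREUM_LINE = re.compile(r"^([ \t]*ethereum:[ \t]*)'[^']*'", re.MULTILINE)


def set_ethereum_endpoint(compose_text, network, rpc_url):
    """Return compose_text with the ethereum entry set to '<network>:<rpc_url>'."""
    # A function replacement avoids backslash escaping issues in the URL.
    new_text, count = ETHEREUM_LINE.subn(
        lambda m: f"{m.group(1)}'{network}:{rpc_url}'", compose_text)
    if count != 1:
        raise ValueError(f"expected exactly one ethereum: line, found {count}")
    return new_text
```

Run it between `docker-compose down` and `docker-compose up -d`, for example with `path = pathlib.Path("docker-compose.yml")` and `path.write_text(set_ethereum_endpoint(path.read_text(), "rinkeby", url))`.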

Then, as in the previous post, deploy the subgraph to the private node.

graph-node_1 | Sep 22 08:19:00.563 INFO Received subgraph_deploy request, params: SubgraphDeployParams { name: SubgraphName("MetaverseMan/Game"), ipfs_hash: DeploymentHash("QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F"), node_id: None, debug_fork: None }, component: JsonRpcServer

graph-node_1 | Sep 22 08:19:05.581 INFO Resolve schema, link: /ipfs/QmdKLtNAeVDpuKL1yYEQHn7Bdh7iqjbVHtsgwHL9GTobBu, sgd: 0, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphRegistrar

graph-node_1 | Sep 22 08:19:05.582 INFO Resolve data source, source_start_block: 0, source_address: Some(0xc716e9df2bb6b6b5b3660f4d210d222e952abfce), name: Game, sgd: 0, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphRegistrar

graph-node_1 | Sep 22 08:19:05.583 INFO Resolve mapping, link: /ipfs/QmcmqtAfWdyR2ZdtmpEKtRQDwzrCPw7U2c8woJyZad7b3P, sgd: 0, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphRegistrar

graph-node_1 | Sep 22 08:19:05.583 INFO Resolve ABI, link: /ipfs/QmapPy7RyHEGVX7Fpp8ZgjdeeDXGN3JA2xnvJzbV78R2rP, name: Game, sgd: 0, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphRegistrar

graph-node_1 | Sep 22 08:19:05.594 INFO Set subgraph start block, block: None, sgd: 0, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphRegistrar

graph-node_1 | Sep 22 08:19:05.594 INFO Graft base, block: None, base: None, sgd: 0, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphRegistrar

graph-node_1 | Sep 22 08:19:06.255 INFO Starting subgraph writer, queue_size: 5, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphInstanceManager

graph-node_1 | Sep 22 08:19:06.266 INFO Resolve subgraph files using IPFS, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphInstanceManager

graph-node_1 | Sep 22 08:19:06.266 INFO Resolve schema, link: /ipfs/QmdKLtNAeVDpuKL1yYEQHn7Bdh7iqjbVHtsgwHL9GTobBu, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphInstanceManager

graph-node_1 | Sep 22 08:19:06.266 INFO Resolve data source, source_start_block: 0, source_address: Some(0xc716e9df2bb6b6b5b3660f4d210d222e952abfce), name: Game, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphInstanceManager

graph-node_1 | Sep 22 08:19:06.267 INFO Resolve mapping, link: /ipfs/QmcmqtAfWdyR2ZdtmpEKtRQDwzrCPw7U2c8woJyZad7b3P, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphInstanceManager

graph-node_1 | Sep 22 08:19:06.267 INFO Resolve ABI, link: /ipfs/QmapPy7RyHEGVX7Fpp8ZgjdeeDXGN3JA2xnvJzbV78R2rP, name: Game, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphInstanceManager

graph-node_1 | Sep 22 08:19:06.268 INFO Successfully resolved subgraph files using IPFS, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphInstanceManager

graph-node_1 | Sep 22 08:19:06.268 INFO Data source count at start: 1, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: SubgraphInstanceManager

graph-node_1 | Sep 22 08:19:06.520 INFO Scanning blocks [0, 0], range_size: 1, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:07.216 INFO Scanning blocks [1, 10], range_size: 10, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:07.915 INFO Scanning blocks [11, 110], range_size: 100, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:08.605 INFO Scanning blocks [111, 1110], range_size: 1000, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:09.310 INFO Scanning blocks [1111, 3110], range_size: 2000, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:10.000 INFO Scanning blocks [3111, 5110], range_size: 2000, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:10.763 INFO Scanning blocks [5111, 7110], range_size: 2000, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:11.463 INFO Scanning blocks [7111, 9110], range_size: 2000, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:12.190 INFO Scanning blocks [9111, 11110], range_size: 2000, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:12.900 INFO Scanning blocks [11111, 13110], range_size: 2000, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:13.603 INFO Scanning blocks [13111, 15110], range_size: 2000, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

graph-node_1 | Sep 22 08:19:14.296 INFO Scanning blocks [15111, 17110], range_size: 2000, sgd: 1, subgraph_id: QmaKiVgqUe29bhNm8ZJ5EpbosyjaTNQBaKH9hMmrXjeV5F, component: BlockStream

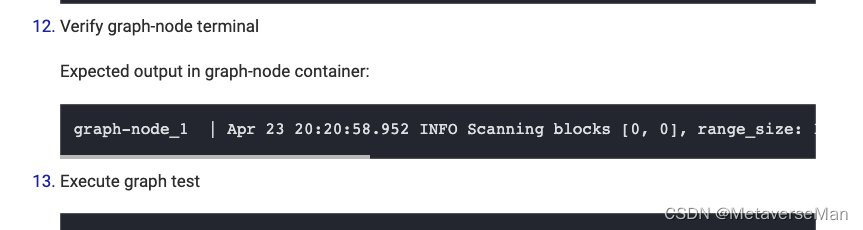

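The scanned block ranges keep growing instead of staying at `[0, 0]`: only the very first scan is `[0, 0]` (`range_size: 1`), after which the ranges advance to `[1, 10]`, `[11, 110]`, and so on. That article misled me here too; I had taken `[0, 0]` to be the normal state, when even a moment's thought says the range should be increasing.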
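To check this without eyeballing the output, here is a minimal Python sketch that reads the lines printed by `docker-compose logs | grep Scanning` (without `-f`, so the input actually ends) and reports whether the scanned range advances. It assumes the `Scanning blocks [start, end]` format shown in the log above; the function names are hypothetical.

```python
import re

# Matches graph-node BlockStream lines such as
# "... INFO Scanning blocks [111, 1110], range_size: 1000, ..."
SCAN_RE = re.compile(r"Scanning blocks \[(\d+), (\d+)\]")


def scanned_ranges(lines):
    """Collect the (start, end) block ranges from the given log lines."""
    ranges = []
    for line in lines:
        m = SCAN_RE.search(line)
        if m:
            ranges.append((int(m.group(1)), int(m.group(2))))
    return ranges


def is_advancing(ranges):
    """True if the latest range ends past the first one.

    A healthy node logs [0, 0] once and then [1, 10], [11, 110], ...;
    a node that keeps logging [0, 0] is not advancing.
    """
    return len(ranges) >= 2 and ranges[-1][1] > ranges[0][1]


def report(lines):
    """One-line summary of the scan progress found in lines."""
    ranges = scanned_ranges(lines)
    if not ranges:
        return "no 'Scanning blocks' lines found"
    state = "advancing" if is_advancing(ranges) else "NOT advancing"
    return f"last range {ranges[-1]}: {state}"
```

Saved as, say, `check_scan.py` (a hypothetical name), it can be used as `docker-compose logs | grep Scanning | python3 -c "import sys, check_scan; print(check_scan.report(sys.stdin))"`.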

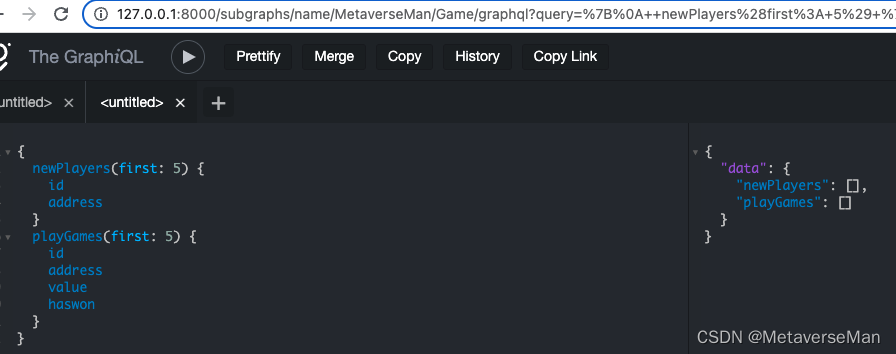

Querying from the dashboard at this point no longer returns `query execution failed: Subgraph has not started syncing yet`; the results display normally, with no error.
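The same check can be made over HTTP. Below is a minimal Python sketch, assuming graph-node's default GraphQL port 8000 and the subgraph name `MetaverseMan/Game` from the deploy log; it asks for the built-in `_meta` field, which reports the block the subgraph has indexed up to. While the subgraph is not ready, the error text (such as the message quoted above) comes back in the response's `errors` list, which the hypothetical `indexed_block` helper raises as an exception.

```python
import json
import urllib.request

# Assumptions: graph-node's default GraphQL HTTP port (8000) and the
# subgraph name from the deploy log above.
QUERY_URL = "http://localhost:8000/subgraphs/name/MetaverseMan/Game"

# _meta is a built-in field reporting how far the subgraph has indexed.
META_QUERY = "{ _meta { block { number } hasIndexingErrors } }"


def build_query_request(url, query):
    """Build (but do not send) a GraphQL POST request."""
    body = json.dumps({"query": query}).encode()
    return urllib.request.Request(url, data=body,
                                  headers={"Content-Type": "application/json"},
                                  method="POST")


def indexed_block(response_body):
    """Return the indexed block number, raising if the response carries errors."""
    reply = json.loads(response_body)
    if reply.get("errors"):
        raise RuntimeError(reply["errors"][0].get("message", "query failed"))
    return reply["data"]["_meta"]["block"]["number"]
```

Sending it with `urllib.request.urlopen(build_query_request(QUERY_URL, META_QUERY))` and passing the response body to `indexed_block` should give a number that climbs toward the contract's block while syncing.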

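Syncing hasn't finished yet. My contract is at block 11423609, so it looks like it will take roughly another hour; I'll query again once it's done. Note that scanning starts from block 0 (the deploy log shows `source_start_block: 0`), while syncing starts from the block height at the time you deploy.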
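The "roughly an hour" estimate can be checked against the log above. Between 08:19:10.000 (range ending at 5110) and 08:19:14.296 (range ending at 17110), the node scanned 12,000 blocks in 4.296 s, about 2,793 blocks/s; the remaining 11,423,609 - 17,110 = 11,406,499 blocks would then take about 4,080 s, or roughly 68 minutes. The minimal Python sketch below does the same arithmetic from two log lines. It assumes the rate stays constant, which it will not exactly (graph-node adjusts `range_size`, and blocks containing the contract's events may take longer to process); the function names are hypothetical. Since the data source was resolved with `source_start_block: 0`, setting `startBlock` on the data source in `subgraph.yaml` to the contract's deployment block would likely skip most of this wait.

```python
import re
from datetime import datetime

# Matches the timestamp and scanned range in a graph-node BlockStream line, e.g.
# "Sep 22 08:19:10.000 INFO Scanning blocks [3111, 5110], range_size: 2000"
SCAN_LINE = re.compile(
    r"(\d{2}:\d{2}:\d{2}\.\d{3}) INFO Scanning blocks \[(\d+), (\d+)\]")


def parse_scan_line(line):
    """Return (time, end_block) for one Scanning line."""
    m = SCAN_LINE.search(line)
    if m is None:
        raise ValueError(f"not a Scanning line: {line!r}")
    return datetime.strptime(m.group(1), "%H:%M:%S.%f"), int(m.group(3))


def eta_seconds(first_line, last_line, target_block):
    """Estimate seconds until scanning reaches target_block at a constant rate."""
    t0, end0 = parse_scan_line(first_line)
    t1, end1 = parse_scan_line(last_line)
    elapsed = (t1 - t0).total_seconds()
    if elapsed <= 0 or end1 <= end0:
        raise ValueError("need two lines with increasing time and block range")
    rate = (end1 - end0) / elapsed  # blocks per second
    return (target_block - end1) / rate
```

Fed the 08:19:10.000 and 08:19:14.296 lines from the log with `target_block=11423609`, it returns about 4,084 seconds.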

---------------------------------- divider ---------------------------

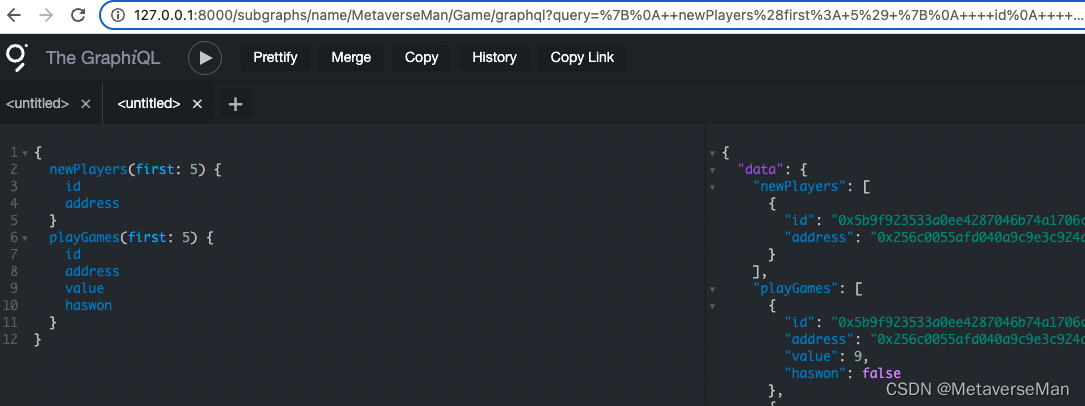

Syncing is complete, and queries work normally.
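To confirm completion without opening the dashboard, graph-node also exposes an indexing-status GraphQL API, by default on port 8030 at `/graphql`. The sketch below is a minimal example, assuming that default port and the subgraph name `MetaverseMan/Game`; the field names are based on graph-node's index-node schema and may differ between versions, and the helper names are hypothetical.

```python
import json
import urllib.request

# Assumption: graph-node's index-node status API on its default port 8030.
STATUS_URL = "http://localhost:8030/graphql"

STATUS_QUERY = """
{
  indexingStatusForCurrentVersion(subgraphName: "MetaverseMan/Game") {
    synced
    health
    chains { chainHeadBlock { number } latestBlock { number } }
  }
}
"""


def fetch_status(url=STATUS_URL, timeout=10):
    """POST the status query and return the raw response body."""
    body = json.dumps({"query": STATUS_QUERY}).encode()
    req = urllib.request.Request(url, data=body,
                                 headers={"Content-Type": "application/json"},
                                 method="POST")
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return resp.read()


def summarize_status(response_body):
    """Return (synced, health, blocks_behind_chain_head) for the first chain."""
    status = json.loads(response_body)["data"]["indexingStatusForCurrentVersion"]
    chain = status["chains"][0]
    # Block numbers are BigInt in this schema and may arrive as strings.
    head = int(chain["chainHeadBlock"]["number"])
    latest = int(chain["latestBlock"]["number"])
    return status["synced"], status["health"], head - latest
```

`summarize_status(fetch_status())` should report `synced` as true and only a small block lag once the subgraph has caught up.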
