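The official Flink site lists many built-in sources and sinks, which cover most use cases.

Well, most of them…

Product manager:

Me:

Product manager: "I want to read data directly from MySQL…"

Me:

So let's write a custom one. First we need to learn how to define a custom data source. The Flink developers clearly anticipated this scenario and give us three interfaces (strictly speaking, the last one is an abstract class):

- SourceFunction: a non-parallel data source

- ParallelSourceFunction: a parallel data source

- RichParallelSourceFunction: (Rich?? I know this one, it means "rich"; I didn't score 300-something on the CET-4 for nothing) a "rich" parallel data source

Let's go through them one by one: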

SourceFunction: a non-parallel data source

- Define the MyNoParallelSourceScala class

package hctang.tech.streaming.custormSource

import org.apache.flink.streaming.api.functions.source.SourceFunction
import org.apache.flink.streaming.api.functions.source.SourceFunction.SourceContext

/**
 * A custom source with a parallelism of 1.
 * Emits an increasing sequence of numbers starting from 1, one per second.
 */
class MyNoParallelSourceScala extends SourceFunction[Long] {

  var count = 1L

  // cancel() is called from a different thread than run(), so the flag is volatile
  @volatile var isRunning = true

  override def run(sourceContext: SourceContext[Long]): Unit = {
    while (isRunning) {
      sourceContext.collect(count)
      count += 1
      Thread.sleep(1000)
    }
  }

  override def cancel(): Unit = {
    isRunning = false
  }
}

Usage

package hctang.tech.streaming.custormSource

import org.apache.flink.streaming.api.scala.StreamExecutionEnvironment
import org.apache.flink.streaming.api.windowing.time.Time

object StreamingDemoWithNoParallelSourceScala {

  def main(args: Array[String]): Unit = {
    // Get the execution environment
    val env = StreamExecutionEnvironment.getExecutionEnvironment

    // Implicit conversions that derive the TypeInformation for each stream
    import org.apache.flink.api.scala._

    val text = env.addSource(new MyNoParallelSourceScala)

    val mapData = text.map(line => {
      println("Received: " + line)
      line
    })

    // Sum everything that arrives within each 5-second window
    val sum = mapData.timeWindowAll(Time.seconds(5)).sum(0)

    sum.print().setParallelism(1)

    env.execute("StreamingFromCollectionScala")
  }
}

The result is shown in the figure:
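To see what "non-parallel" means in practice, here is a small sketch that asks for a parallelism of 2 on a plain SourceFunction. As far as I can tell, Flink 1.9 rejects this with an IllegalArgumentException as soon as setParallelism is called, before the job even starts. The names OneShotSource and NonParallelCheck are made up for this check and are not part of the example project.

```scala
import org.apache.flink.streaming.api.functions.source.SourceFunction
import org.apache.flink.streaming.api.functions.source.SourceFunction.SourceContext
import org.apache.flink.streaming.api.scala._

// Hypothetical minimal non-parallel source, used only for this check
class OneShotSource extends SourceFunction[Long] {
  override def run(ctx: SourceContext[Long]): Unit = ctx.collect(1L)
  override def cancel(): Unit = ()
}

object NonParallelCheck {
  // Returns true if Flink refuses a parallelism of 2 for a plain SourceFunction
  def rejectsParallelism(): Boolean = {
    val env = StreamExecutionEnvironment.getExecutionEnvironment
    try {
      env.addSource(new OneShotSource).setParallelism(2)
      false
    } catch {
      case _: IllegalArgumentException => true
    }
  }

  def main(args: Array[String]): Unit =
    println("parallelism > 1 rejected: " + rejectsParallelism())
}
```

If the check behaves as assumed, this is the reason the next section switches to ParallelSourceFunction before raising the parallelism.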

Defining a parallel data source

- Define MyParallelSourceScala

package hctang.tech.streaming.custormSource

import org.apache.flink.streaming.api.functions.source.ParallelSourceFunction
import org.apache.flink.streaming.api.functions.source.SourceFunction.SourceContext

/**
 * A custom parallel source.
 * Every parallel instance runs its own copy of run(), so each one emits 1, 2, 3, …
 */
class MyParallelSourceScala extends ParallelSourceFunction[Long] {

  var count = 1L

  // cancel() is called from a different thread than run(), so the flag is volatile
  @volatile var isRunning = true

  override def run(sourceContext: SourceContext[Long]): Unit = {
    while (isRunning) {
      sourceContext.collect(count)
      count += 1
      Thread.sleep(1000)
    }
  }

  override def cancel(): Unit = {
    isRunning = false
  }
}

- Define StreamingDemoWithParallelSourceScala, which uses MyParallelSourceScala

package hctang.tech.streaming.custormSource

import org.apache.flink.streaming.api.scala.StreamExecutionEnvironment
import org.apache.flink.streaming.api.windowing.time.Time

object StreamingDemoWithParallelSourceScala {

  def main(args: Array[String]): Unit = {
    // Get the execution environment
    val env = StreamExecutionEnvironment.getExecutionEnvironment

    // Implicit conversions that derive the TypeInformation for each stream
    import org.apache.flink.api.scala._

    // Two parallel instances of the source, each emitting its own sequence
    val text = env.addSource(new MyParallelSourceScala).setParallelism(2)

    val mapData = text.map(line => {
      println("Received: " + line)
      line
    })

    // Sum everything that arrives within each 5-second window
    val sum = mapData.timeWindowAll(Time.seconds(5)).sum(0)

    sum.print().setParallelism(1)

    env.execute("StreamingFromCollectionScala")
  }
}

- Result

Defining a rich parallel data source…

- Define MyRichParallelSourceScala

package hctang.tech.streaming.custormSource

import org.apache.flink.configuration.Configuration
import org.apache.flink.streaming.api.functions.source.RichParallelSourceFunction
import org.apache.flink.streaming.api.functions.source.SourceFunction.SourceContext

/**
 * A custom rich parallel source.
 * Every parallel instance emits an increasing sequence starting from 1.
 */
class MyRichParallelSourceScala extends RichParallelSourceFunction[Long] {

  var count = 1L

  // cancel() is called from a different thread than run(), so the flag is volatile
  @volatile var isRunning = true

  override def run(sourceContext: SourceContext[Long]): Unit = {
    while (isRunning) {
      sourceContext.collect(count)
      count += 1
      Thread.sleep(1000)
    }
  }

  override def cancel(): Unit = {
    isRunning = false
  }

  // Rich: called once per parallel instance before run(), e.g. to open connections
  override def open(parameters: Configuration): Unit = super.open(parameters)

  // Rich: called once per parallel instance on shutdown, e.g. to release resources
  override def close(): Unit = super.close()
}

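Note: compare this with the code above. See the two extra methods, open and close? That's right, Rich!! open is called once per parallel instance before run, and close once when the instance shuts down.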

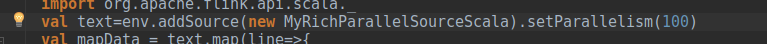
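The open method is also where the runtime context becomes useful. As a minimal sketch, the variant below reads the subtask index in open so that parallel instances emit disjoint numbers instead of each counting 1, 2, 3, … on its own. PartitionedCounterSource and Partitioning are names invented for this illustration; getIndexOfThisSubtask and getNumberOfParallelSubtasks are methods on Flink's RuntimeContext.

```scala
import org.apache.flink.configuration.Configuration
import org.apache.flink.streaming.api.functions.source.RichParallelSourceFunction
import org.apache.flink.streaming.api.functions.source.SourceFunction.SourceContext

object Partitioning {
  // k-th value (k = 0, 1, 2, ...) emitted by the subtask with the given index
  def valueFor(subtaskIndex: Int, parallelism: Int, k: Long): Long =
    1L + subtaskIndex + k * parallelism
}

// Hypothetical variant of MyRichParallelSourceScala that avoids duplicate numbers
class PartitionedCounterSource extends RichParallelSourceFunction[Long] {

  @volatile private var isRunning = true
  private var subtaskIndex = 0
  private var parallelism = 1

  override def open(parameters: Configuration): Unit = {
    // The runtime context is only available in Rich functions
    subtaskIndex = getRuntimeContext.getIndexOfThisSubtask
    parallelism = getRuntimeContext.getNumberOfParallelSubtasks
  }

  override def run(ctx: SourceContext[Long]): Unit = {
    var k = 0L
    while (isRunning) {
      ctx.collect(Partitioning.valueFor(subtaskIndex, parallelism, k))
      k += 1
      Thread.sleep(1000)
    }
  }

  override def cancel(): Unit = {
    isRunning = false
  }
}
```

With a parallelism of 2, subtask 0 would emit 1, 3, 5, … and subtask 1 would emit 2, 4, 6, …, so the window sums no longer count every number twice.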

- Usage

package hctang.tech.streaming.custormSource

import org.apache.flink.streaming.api.scala.StreamExecutionEnvironment
import org.apache.flink.streaming.api.windowing.time.Time

object StreamingDemoWithRichParallelSourceScala {

  def main(args: Array[String]): Unit = {
    // Get the execution environment
    val env = StreamExecutionEnvironment.getExecutionEnvironment

    // Implicit conversions that derive the TypeInformation for each stream
    import org.apache.flink.api.scala._

    val text = env.addSource(new MyRichParallelSourceScala).setParallelism(100)

    val mapData = text.map(line => {
      println("Received: " + line)
      line
    })

    // Sum everything that arrives within each 10-second window
    val sum = mapData.timeWindowAll(Time.seconds(10)).sum(0)

    sum.print().setParallelism(1)

    env.execute("StreamingFromCollectionScala")
  }
}

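- Result

Hmm… that's wrong. Let's try again: the parallelism was set to 100 just now.

Change the parallelism to 2.

Warm-up over. Back to the original scenario: reading data from MySQL. A MySQL connection has a URL, has to be opened, and has to be closed when it is no longer needed, so we clearly need RichSourceFunction (which has the open and close methods).

- The SQL_source class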

package hctang.tech.streaming.custormSource

import java.sql.{Connection, DriverManager, PreparedStatement}

import org.apache.flink.configuration.Configuration
import org.apache.flink.streaming.api.functions.source.RichSourceFunction
import org.apache.flink.streaming.api.functions.source.SourceFunction.SourceContext

class SQL_source extends RichSourceFunction[Student] {

  private var connection: Connection = null
  private var ps: PreparedStatement = null

  // Set to false by cancel() so that run() stops reading early
  @volatile private var isRunning = true

  // Called once before run(): open the connection and prepare the query
  override def open(parameters: Configuration): Unit = {
    // Adjust the host, database and credentials to your environment
    val driver = "com.mysql.jdbc.Driver"
    val url = "jdbc:mysql://local:3306/test"
    val username = "root"
    val password = "root"
    Class.forName(driver)
    connection = DriverManager.getConnection(url, username, password)
    val sql = "select id , name , addr , sex from student"
    ps = connection.prepareStatement(sql)
  }

  // Called once on shutdown: release the statement first, then the connection
  override def close(): Unit = {
    if (ps != null) {
      ps.close()
    }
    if (connection != null) {
      connection.close()
    }
  }

  // Run the query once and emit one Student per row
  override def run(sourceContext: SourceContext[Student]): Unit = {
    val resultSet = ps.executeQuery()
    try {
      while (isRunning && resultSet.next()) {
        val stuid = resultSet.getInt("id")
        val stuname = resultSet.getString("name")
        val stuaddr = resultSet.getString("addr")
        val stusex = resultSet.getString("sex")
        sourceContext.collect(Student(stuid, stuname, stuaddr, stusex))
      }
    } finally {
      resultSet.close()
    }
  }

  override def cancel(): Unit = {
    isRunning = false
  }
}

case class Student(stuid: Int, stuname: String, stuaddr: String, stusex: String) {
  override def toString: String = {
    "stuid:" + stuid + " stuname:" + stuname + " stuaddr:" + stuaddr + " stusex:" + stusex
  }
}

- The MysqlSourceScala object

package hctang.tech.streaming.custormSource

import org.apache.flink.streaming.api.scala.{DataStream, StreamExecutionEnvironment}
import org.apache.flink.api.scala._

object MysqlSourceScala {

  def main(args: Array[String]): Unit = {
    val env = StreamExecutionEnvironment.getExecutionEnvironment

    // Read the student table through the custom source
    val source: DataStream[Student] = env.addSource(new SQL_source)

    source.print()

    env.execute()
  }
}
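SQL_source reads the table once and then finishes, which makes the job behave like a bounded read. If the product manager later wants fresh rows to keep flowing, one possible approach is a polling variant that re-runs the query at a fixed interval. This is only a sketch under my own assumptions: PollingStudentSource, StudentRow and the constructor parameters are invented for illustration, and every poll re-emits all rows, so a real job would need something like a last-seen id to avoid duplicates.

```scala
import java.sql.{Connection, DriverManager, PreparedStatement}

import org.apache.flink.configuration.Configuration
import org.apache.flink.streaming.api.functions.source.RichSourceFunction
import org.apache.flink.streaming.api.functions.source.SourceFunction.SourceContext

// Hypothetical row type for this sketch; it mirrors the Student case class
case class StudentRow(id: Int, name: String, addr: String, sex: String)

// Hypothetical polling source: re-runs the query every intervalMs milliseconds
class PollingStudentSource(url: String, user: String, password: String, intervalMs: Long)
  extends RichSourceFunction[StudentRow] {

  @transient private var connection: Connection = _
  @transient private var ps: PreparedStatement = _
  @volatile private var isRunning = true

  override def open(parameters: Configuration): Unit = {
    connection = DriverManager.getConnection(url, user, password)
    ps = connection.prepareStatement("select id, name, addr, sex from student")
  }

  override def run(ctx: SourceContext[StudentRow]): Unit = {
    while (isRunning) {
      val rs = ps.executeQuery()
      try {
        while (isRunning && rs.next()) {
          ctx.collect(StudentRow(
            rs.getInt("id"), rs.getString("name"), rs.getString("addr"), rs.getString("sex")))
        }
      } finally {
        rs.close()
      }
      // Wait before the next poll unless the job has been cancelled
      if (isRunning) Thread.sleep(intervalMs)
    }
  }

  override def cancel(): Unit = {
    isRunning = false
  }

  override def close(): Unit = {
    if (ps != null) ps.close()
    if (connection != null) connection.close()
  }
}
```

It would be wired in the same way as SQL_source, for example env.addSource(new PollingStudentSource("jdbc:mysql://localhost:3306/test", "root", "root", 5000L)), where the URL and credentials are placeholders.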

Note: don't forget to add the dependencies (adjust them to your own environment)

- pom.xml

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.hctang.flink</groupId>

<artifactId>firstcode</artifactId>

<version>1.0-SNAPSHOT</version>

<dependencies>

<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-scala -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-java</artifactId>

<version>1.9.0</version>

<!--<scope>provided</scope>-->

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-java_2.11</artifactId>

<version>1.9.0</version>

<!--<scope>provided</scope>-->

<!-- Dependency scope: when running on a cluster, many of these jars are already provided and need not be bundled -->

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-scala_2.11</artifactId>

<version>1.9.0</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-streaming-scala -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-streaming-scala_2.11</artifactId>

<version>1.9.0</version>

</dependency><!--flink kafka connector-->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-connector-kafka-0.11_2.11</artifactId>

<version>1.9.0</version>

</dependency>

<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-shaded-hadoop-2 -->

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-shaded-hadoop-2</artifactId>

<version>2.7.5-9.0</version>

</dependency>

<dependency>

<groupId>org.apache.flink</groupId>

<artifactId>flink-hadoop-fs</artifactId>

<version>1.9.0</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-common</artifactId>

<version>2.7.3</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-hdfs</artifactId>

<version>2.7.3</version>

</dependency>

<!-- Logging -->

<dependency>

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

<version>1.7.7</version>

<scope>runtime</scope>

</dependency>

<dependency>

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

<version>1.2.17</version>

<scope>runtime</scope>

</dependency>

<!--alibaba fastjson-->

<dependency>

<groupId>com.alibaba</groupId>

<artifactId>fastjson</artifactId>

<version>1.2.51</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.46</version>

</dependency>

</dependencies>

<build>

<plugins>

<!-- Compiler plugin -->

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.6.0</version>

<configuration>

<source>1.8</source>

<target>1.8</target>

<encoding>UTF-8</encoding>

</configuration>

</plugin>

<!-- Scala compiler plugin -->

<plugin>

<groupId>net.alchim31.maven</groupId>

<artifactId>scala-maven-plugin</artifactId>

<version>3.1.6</version>

<configuration>

<scalaCompatVersion>2.11</scalaCompatVersion>

<scalaVersion>2.11.8</scalaVersion>

<encoding>UTF-8</encoding>

</configuration>

<executions>

<execution>

<id>compile-scala</id>

<phase>compile</phase>

<goals>

<goal>add-source</goal>

<goal>compile</goal>

</goals>

</execution>

<execution>

<id>test-compile-scala</id>

<phase>test-compile</phase>

<goals>

<goal>add-source</goal>

<goal>testCompile</goal>

</goals>

</execution>

</executions>

</plugin>

<!-- Jar packaging plugin (bundles all dependencies) -->

<plugin>

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-assembly-plugin</artifactId>

<version>2.6</version>

<configuration>

<descriptorRefs>

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

<archive>

<manifest>

<!-- Entry-point class of the jar (optional) -->

<mainClass>hctang.tech.bacth.Bacth.BatchWordCount</mainClass>

</manifest>

</archive>

</configuration>

<executions>

<execution>

<id>make-assembly</id>

<phase>package</phase>

<goals>

<goal>single</goal>

</goals>

</execution>

</executions>

</plugin>

</plugins>

</build>

</project>

# Sink to MySQL

This also needs a custom implementation, much like the source.

import org.apache.flink.streaming.api.scala._

// The enclosing object is missing from the original listing; MysqlSinkScala is a placeholder name
object MysqlSinkScala {

  def main(args: Array[String]): Unit = {
    // 1. Create the stream execution environment
    val env = StreamExecutionEnvironment.getExecutionEnvironment

    // 2. Prepare the data
    val dataStream: DataStream[Student] = env.fromElements(Student(8, "xiaoming", "beijing biejing", "female"))

    // 3. Write the stream to MySQL through the custom sink, StudentSinkToMysql
    dataStream.addSink(new StudentSinkToMysql)

    dataStream.print()

    // 4. Trigger execution
    env.execute("Flink add sink")
  }
}

import java.sql.{Connection, DriverManager, PreparedStatement}

import org.apache.flink.configuration.Configuration
import org.apache.flink.streaming.api.functions.sink.RichSinkFunction

class StudentSinkToMysql extends RichSinkFunction[Student] {

  private var connection: Connection = null
  private var ps: PreparedStatement = null

  override def open(parameters: Configuration): Unit = {
    val driver = "com.mysql.jdbc.Driver"
    val url = "jdbc:mysql://node03:3306/test"
    val username = "root"
    val password = "root"
    // 1. Load the driver
    Class.forName(driver)
    // 2. Create the connection
    connection = DriverManager.getConnection(url, username, password)
    val sql = "insert into student(id , name , addr , sex) values(?,?,?,?);"
    // 3. Prepare the statement
    ps = connection.prepareStatement(sql)
  }

  // Release the statement first, then the connection
  override def close(): Unit = {
    if (ps != null) {
      ps.close()
    }
    if (connection != null) {
      connection.close()
    }
  }

  // invoke is called once per element; each call inserts one row
  override def invoke(stu: Student): Unit = {
    try {
      // 4. Bind the fields and run the insert
      ps.setInt(1, stu.stuid)
      ps.setString(2, stu.stuname)
      ps.setString(3, stu.stuaddr)
      ps.setString(4, stu.stusex)
      ps.executeUpdate()
    } catch {
      case e: Exception => println(e.getMessage)
    }
  }
}
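Running one executeUpdate per element is fine for a demo, but it costs a round trip to MySQL for every record. A possible refinement, sketched here under my own assumptions, is to buffer rows with the standard JDBC addBatch and executeBatch calls and flush every batchSize elements. BatchingStudentSink, StudentRecord and the constructor parameters are hypothetical names, and the sketch ignores checkpointing, so rows still waiting in the buffer would be lost if the job failed.

```scala
import java.sql.{Connection, DriverManager, PreparedStatement}

import org.apache.flink.configuration.Configuration
import org.apache.flink.streaming.api.functions.sink.RichSinkFunction

// Hypothetical record type for this sketch; it mirrors the Student case class
case class StudentRecord(id: Int, name: String, addr: String, sex: String)

// Hypothetical batching sink: buffers rows and writes them with executeBatch()
class BatchingStudentSink(url: String, user: String, password: String, batchSize: Int)
  extends RichSinkFunction[StudentRecord] {

  @transient private var connection: Connection = _
  @transient private var ps: PreparedStatement = _
  private var pending = 0

  override def open(parameters: Configuration): Unit = {
    connection = DriverManager.getConnection(url, user, password)
    ps = connection.prepareStatement(
      "insert into student(id, name, addr, sex) values(?,?,?,?)")
  }

  // Add the row to the current batch and flush once the batch is full
  override def invoke(stu: StudentRecord): Unit = {
    ps.setInt(1, stu.id)
    ps.setString(2, stu.name)
    ps.setString(3, stu.addr)
    ps.setString(4, stu.sex)
    ps.addBatch()
    pending += 1
    if (pending >= batchSize) flush()
  }

  private def flush(): Unit = {
    if (pending > 0) {
      ps.executeBatch()
      pending = 0
    }
  }

  // Write whatever is still buffered, then release the statement and the connection
  override def close(): Unit = {
    if (ps != null) {
      flush()
      ps.close()
    }
    if (connection != null) connection.close()
  }
}
```

The trade-off is latency: a row only reaches MySQL once its batch fills up or the sink closes, so a small batchSize keeps the table fresher while a large one reduces round trips.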
