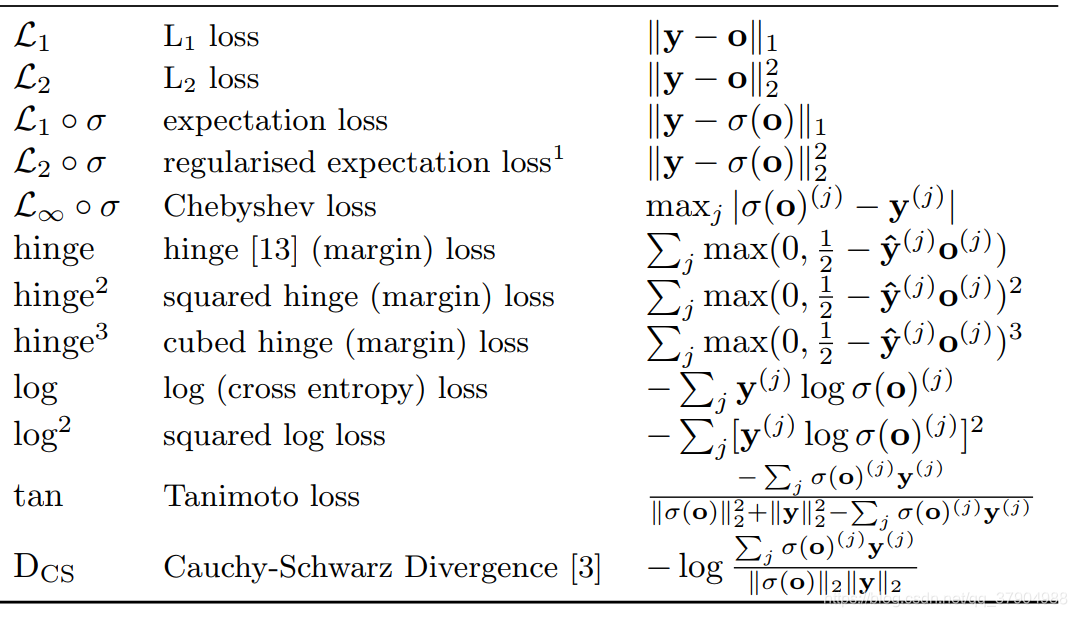

分类问题中的另一类loss函数

In particular, for purely accuracy focused research, squared hinge loss seems

to be a better choice at it converges faster as well as provides better performance.

It is also more robust to noise in the training set labelling and

slightly more robust to noise in the input space. However, if one works

with highly noised dataset (both input and output spaces) – the expectation

losses described in detail in this paper – seem to be the best choice,

both from theoretical and empirical perspective.

At the same time this topic is far from being exhausted, with a large

amount of possible paths to follow and questions to be answered. In

particular, non-classical loss functions such as Tanimoto loss(噪声大的情况) and CauchySchwarz

Divergence(无噪声) are worth further investigation.

4604

4604

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?