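We start by stepping into job.waitForCompletion.

Because the job state is already DEFINE, execution goes straight into the submit method.

1. Stepping into the submit method: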

public void submit() throws IOException, InterruptedException, ClassNotFoundException {

// check the job state once more

this.ensureState(Job.JobState.DEFINE);

/*

// Select the new API. The old and new APIs use different configuration key names:

old: mapred.mapper.class / mapred.reducer.class

new: mapreduce.job.map.class / mapreduce.job.reduce.class

*/

this.setUseNewAPI();

this.connect(); // ********* stepped into in 1.1

final JobSubmitter submitter = this.getJobSubmitter(this.cluster.getFileSystem(), this.cluster.getClient());

this.status = (JobStatus)this.ugi.doAs(new PrivilegedExceptionAction<JobStatus>() {

public JobStatus run() throws IOException, InterruptedException, ClassNotFoundException {

return submitter.submitJobInternal(Job.this, Job.this.cluster);

}

});

this.state = Job.JobState.RUNNING;

LOG.info("The url to track the job: " + this.getTrackingURL());

}

1.1 Stepping into the connect method called from submit:

Purpose: create the Cluster object.

private synchronized void connect() throws IOException, InterruptedException, ClassNotFoundException {

/*

No Cluster object has been created yet at this point, so cluster is null

*/

if (this.cluster == null) {

this.cluster = (Cluster)this.ugi.doAs(new PrivilegedExceptionAction<Cluster>() {

public Cluster run() throws IOException, InterruptedException, ClassNotFoundException {

// step into this constructor next

return new Cluster(Job.this.getConfiguration()); // *********

}

});

}

}

public Cluster(InetSocketAddress jobTrackAddr, Configuration conf) throws IOException {

.......

this.initialize(jobTrackAddr /* null here */, conf);

}

1.1.1 Stepping into the initialize method

Purpose of initialize: obtain a client protocol provider, then use it to create the ClientProtocol object.

private void initialize(InetSocketAddress jobTrackAddr, Configuration conf) throws IOException {

synchronized(frameworkLoader) {

Iterator var4 = frameworkLoader.iterator();

while(var4.hasNext()) {

/*

private static ServiceLoader<ClientProtocolProvider> frameworkLoader = ServiceLoader.load(ClientProtocolProvider.class);

1. frameworkLoader is created by the service loader first; iterating over it then yields each provider

*/

ClientProtocolProvider provider = (ClientProtocolProvider)var4.next();

LOG.debug("Trying ClientProtocolProvider : " + provider.getClass().getName());

ClientProtocol clientProtocol = null;

try {

if (jobTrackAddr == null) {

// this branch is taken

clientProtocol = provider.create(conf);

/*

public ClientProtocol create(Configuration conf) throws IOException {

String framework = conf.get("mapreduce.framework.name", "local");

if (!"local".equals(framework)) { // framework == "local" in this run

return null;

} else {

conf.setInt("mapreduce.job.maps", 1);

return new LocalJobRunner(conf);

}

}

*/

} else {

clientProtocol = provider.create(jobTrackAddr, conf);

}

}
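The provider loop above can be illustrated with a small stand-alone sketch. It is not Hadoop code: the `Provider` interface, the two provider classes, and the string return values are hypothetical stand-ins, and a plain list replaces the ServiceLoader so the example runs without any META-INF/services registration. It only shows the selection rule: each provider is asked in turn, and the first one that returns a non-null client wins.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class ProviderSelectionSketch {

    /** Hypothetical stand-in for ClientProtocolProvider; returns a client name or null. */
    interface Provider {
        String create(Map<String, String> conf);
    }

    /** Mirrors the local provider shown above: the framework name defaults to "local". */
    static final class LocalProvider implements Provider {
        public String create(Map<String, String> conf) {
            String framework = conf.getOrDefault("mapreduce.framework.name", "local");
            return "local".equals(framework) ? "LocalJobRunner" : null;
        }
    }

    /** Assumed YARN counterpart: only answers when the framework name is "yarn". */
    static final class YarnProvider implements Provider {
        public String create(Map<String, String> conf) {
            return "yarn".equals(conf.get("mapreduce.framework.name")) ? "YARNRunner" : null;
        }
    }

    static List<Provider> defaultProviders() {
        return Arrays.asList(new YarnProvider(), new LocalProvider());
    }

    /** Asks each provider in turn and returns the first non-null client. */
    static String select(List<Provider> providers, Map<String, String> conf) {
        for (Provider provider : providers) {
            String client = provider.create(conf);
            if (client != null) {
                return client;
            }
        }
        throw new IllegalStateException("No provider accepted the configuration");
    }

    public static void main(String[] args) {
        Map<String, String> conf = new HashMap<>();
        System.out.println(select(defaultProviders(), conf)); // no framework name set
        conf.put("mapreduce.framework.name", "yarn");
        System.out.println(select(defaultProviders(), conf)); // framework name is yarn
    }
}
```

With an empty configuration only the local provider answers, which matches the debugging session in this post where jobTrackAddr is null and mapreduce.framework.name is left at its default.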

1.2 Next, the key part: the code that follows connect()

1. Create the submitter object from the cluster object created earlier in connect

/*

[root@l4 tmp]# ll

total 12

-rw-r--r--. 1 root root 9442 Mar 20 14:04 debugger-agent-storage6349796582743942141jar

drwxr-xr-x. 3 root root 20 Mar 20 11:21 hadoop-root

drwxr-xr-x. 2 root root 32 Mar 20 14:04 hsperfdata_root

*/

final JobSubmitter submitter = this.getJobSubmitter(this.cluster.getFileSystem(), this.cluster.getClient()); // the directories and files created are shown in the listing above

this.status = (JobStatus)this.ugi.doAs(new PrivilegedExceptionAction<JobStatus>() {

public JobStatus run() throws IOException, InterruptedException, ClassNotFoundException {

// step into submitJobInternal

return submitter.submitJobInternal(Job.this, Job.this.cluster);

}

});

Stepping into submitJobInternal()

JobStatus submitJobInternal(Job job, Cluster cluster) throws ClassNotFoundException, InterruptedException, IOException {

this.checkSpecs(job); // check that the output path does not already exist; this calls output.checkOutputSpecs(job)

/*

output.checkOutputSpecs(job); press F7 to step into this method

======================

Path outDir = getOutputPath(job);

if (outDir == null) {

throw new InvalidJobConfException("Output directory not set.");

} else {

TokenCache.obtainTokensForNamenodes(job.getCredentials(), new Path[]{outDir}, job.getConfiguration());

if (outDir.getFileSystem(job.getConfiguration()).exists(outDir)) {

throw new FileAlreadyExistsException("Output directory " + outDir + " already exists");

}

}

}

*/

===========================================================

Obtain a new job ID

JobID jobId = this.submitClient.getNewJobID();

// jobId has the form job_local<randid>_<jobid>, with jobid zero-padded to four digits

/*

public synchronized org.apache.hadoop.mapreduce.JobID getNewJobID() {

// randid comes from: this.randid = this.rand.nextInt(2147483647);

// jobid is a static field: private static int jobid = 0;

return new org.apache.hadoop.mapreduce.JobID("local" + this.randid, ++jobid);

// The JobID constructor called above:

// public JobID(String jtIdentifier, int id) {

// super(id);

// this.jtIdentifier = new Text(jtIdentifier);

// }

}

*/

job.setJobID(jobId);

Path submitJobDir = new Path(jobStagingArea, jobId.toString());

JobStatus status = null;

}
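To make the job ID format concrete, here is a minimal sketch that builds the same kind of string in plain Java. The class name `JobIdSketch` and the `format` helper are hypothetical and are not part of Hadoop; the sketch only assumes the layout described above (prefix job, then the identifier, then the sequence number zero-padded to four digits).

```java
import java.util.Locale;

final class JobIdSketch {

    /** Builds "job_<jtIdentifier>_<id padded to 4 digits>" (assumed layout). */
    static String format(String jtIdentifier, int id) {
        return String.format(Locale.ROOT, "job_%s_%04d", jtIdentifier, id);
    }

    public static void main(String[] args) {
        int randid = 123456789; // illustrative value; the real one is random
        int jobid = 0;          // plays the role of the static counter
        System.out.println(format("local" + randid, ++jobid));
    }
}
```

Because the counter is incremented before use, the first job submitted by a local runner gets sequence number 1, so its ID ends in _0001.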

=======================================================

Stepping into the input-split source code:

int maps = this.writeSplits(job, submitJobDir);

private int writeSplits(Job . .. . {

JobConf jConf = (JobConf)job.getConfiguration();

int maps;

if (jConf.getUseNewMapper()) {

maps = this.writeNewSplits(job, jobSubmitDir); // *******

} else {

maps = this.writeOldSplits(jConf, jobSubmitDir);

}

return maps;

}

}

Stepping into maps = this.writeNewSplits(job, jobSubmitDir):

Configuration conf = job.getConfiguration();

InputFormat<?, ?> input = (InputFormat)ReflectionUtils.newInstance(job.getInputFormatClass(), conf);

List<InputSplit> splits = input.getSplits(job); // step into this method

/*

StopWatch sw = (new StopWatch()).start();

// minSize: 1L (default value)

long minSize = Math.max(this.getFormatMinSplitSize(), getMinSplitSize(job));

// maxSize: 9223372036854775807L (default value, Long.MAX_VALUE)

long maxSize = getMaxSplitSize(job);

List<InputSplit> splits = new ArrayList();

// list all input files

List<FileStatus> files = this.listStatus(job);

// loop over the files one by one

while(true) {

while(true) {

while(var9.hasNext()) {

FileStatus file = (FileStatus)var9.next();

// get the file's path and length

Path path = file.getPath();

long length = file.getLen();

. . . . . .

if (this.isSplitable(job, path)) {

long blockSize = file.getBlockSize(); // e.g. 128 MB, the HDFS default block size

// compute the split size; with the default minSize and maxSize it equals blockSize

long splitSize = this.computeSplitSize(blockSize, minSize, maxSize);

/*

protected long computeSplitSize(long blockSize, long minSize, long maxSize) {

// returns blockSize under the default settings

return Math.max(minSize, Math.min(maxSize, blockSize));

}

*/

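Split planning:

/* The for loop produces the splits: s1 = 0-128 MB, s2 = 128-256 MB, ...

Before each cut, check whether the remaining bytes exceed 1.1 times the split size; if they do not, the remainder becomes a single final split. */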

for(bytesRemaining = length; (double)bytesRemaining / (double)splitSize > 1.1D; bytesRemaining -= splitSize) {

blkIndex = this.getBlockIndex(blkLocations, length - bytesRemaining);

splits.add(this.makeSplit(path, length - bytesRemaining, splitSize, blkLocations[blkIndex].getHosts(), blkLocations[blkIndex].getCachedHosts()));

}
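The split-size formula and the 1.1 rule can be tried out in isolation. The sketch below is a simplified stand-alone version, not the Hadoop class: `SplitPlanSketch` and `planSplits` are hypothetical names, splits are represented as {offset, length} pairs instead of InputSplit objects, and block locations are ignored. The final `if` that emits the leftover bytes is not shown in the excerpt above and is included here on the assumption that the remainder becomes the last split.

```java
import java.util.ArrayList;
import java.util.List;

final class SplitPlanSketch {

    /** A remainder up to 10% larger than splitSize is kept as one split. */
    static final double SPLIT_SLOP = 1.1;

    /** Same formula as computeSplitSize above. */
    static long computeSplitSize(long blockSize, long minSize, long maxSize) {
        return Math.max(minSize, Math.min(maxSize, blockSize));
    }

    /** Returns {offset, length} pairs covering a file of the given length. */
    static List<long[]> planSplits(long fileLength, long splitSize) {
        List<long[]> splits = new ArrayList<>();
        long bytesRemaining = fileLength;
        // Cut full-size splits while the remainder is more than 1.1 x splitSize.
        while ((double) bytesRemaining / (double) splitSize > SPLIT_SLOP) {
            splits.add(new long[] {fileLength - bytesRemaining, splitSize});
            bytesRemaining -= splitSize;
        }
        // Whatever is left (at most 1.1 x splitSize) becomes the last split.
        if (bytesRemaining != 0) {
            splits.add(new long[] {fileLength - bytesRemaining, bytesRemaining});
        }
        return splits;
    }

    public static void main(String[] args) {
        long mb = 1024L * 1024L;
        long splitSize = computeSplitSize(128 * mb, 1L, Long.MAX_VALUE);
        for (long[] split : planSplits(260 * mb, splitSize)) {
            System.out.println("offset=" + split[0] / mb + "MB length=" + split[1] / mb + "MB");
        }
    }
}
```

With a 128 MB split size, a 260 MB file yields two splits (128 MB and 132 MB) rather than three, because after the first cut the remaining 132 MB is less than 1.1 x 128 MB. A 129 MB file yields a single split for the same reason.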

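Summary of the steps:

1. The job is submitted first, which enters the submit method.

2. One of the most important calls inside submit is connect, whose job is to create a Cluster object.

3. Constructing the Cluster object runs initialize. It iterates over Cluster's static member frameworkLoader to obtain ClientProtocolProvider instances, and a provider in turn creates clientProtocol, the client protocol instance used to communicate with the cluster.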

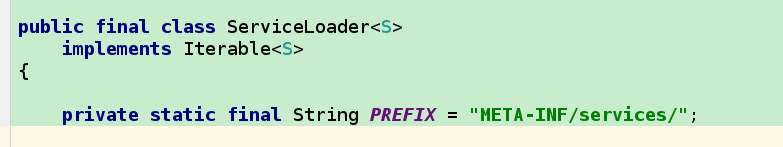

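4. Hadoop creates the static member frameworkLoader through ServiceLoader's dynamic loading:

private static ServiceLoader<ClientProtocolProvider> frameworkLoader = ServiceLoader.load(ClientProtocolProvider.class);

5. ServiceLoader locates the implementation classes listed in the provider-configuration files under META-INF/services in the jars on the classpath and instantiates them. Internally this also relies on reflection.

6. ClientProtocolProvider and ClientProtocol are both held as private member variables of the Cluster class.

7. In step 4, ServiceLoader needs ClientProtocolProvider in order to create objects. The providers it yields are instances of the ClientProtocolProvider subclasses, which is how local mode and YARN mode are told apart.

ClientProtocolProvider has two subclasses: LocalClientProtocolProvider, used for local submission, and YarnClientProtocolProvider, used for YARN submission.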

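8. What the LocalClientProtocolProvider source does:

------- reads mapreduce.framework.name from the configuration; the default is local

------- sets the job's number of map tasks to 1

------- creates a LocalJobRunner object

9. What the YarnClientProtocolProvider source does:

------- reads mapreduce.framework.name from the configuration and checks whether it is yarn

------- if so, creates a YARNRunner object; otherwise returns null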

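10. Next, the YARNRunner source:

----- First, its member variables

The most important member is resMgrDelegate, a ResourceMgrDelegate instance that acts as the proxy for the ResourceManager. In YARN mode the whole MapReduce client relies on it to communicate with the YARN cluster: submitting jobs, querying job status, and fetching cluster information. Internally it holds a YarnClient instance that talks to YARN, along with application-specific members such as ApplicationId and ApplicationSubmissionContext.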

private static final Log LOG = LogFactory.getLog(YARNRunner.class);

private static final RecordFactory recordFactory = RecordFactoryProvider.getRecordFactory((Configuration)null);

public static final Priority AM_CONTAINER_PRIORITY;

private ResourceMgrDelegate resMgrDelegate;

private ClientCache clientCache;

private Configuration conf;

private final FileContext defaultFileContext;

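----- Three constructors in total:

YARNRunner provides three constructors. When the WordCount job mentioned earlier is submitted, the one-argument constructor is the one called. It first builds the ResourceMgrDelegate instance (the ResourceManager proxy) and calls the two-argument constructor, which builds the ClientCache instance and calls the three-argument constructor. That final constructor only assigns the member variables and then obtains the FileContext instance defaultFileContext through the static FileContext.getFileContext() method.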
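The constructor chain described above follows a common Java pattern, sketched here with hypothetical stand-in classes. `Runner`, `Delegate`, and `Cache` are not the Hadoop types, a plain String stands in for the Configuration, and the assumption that the cache is built from the configuration plus the delegate is illustrative only. The point is the shape: each shorter constructor builds one more collaborator and forwards to the next, and only the longest one assigns fields.

```java
final class ConstructorChainSketch {

    /** Stand-in for the ResourceManager proxy. */
    static final class Delegate {
        final String conf;
        Delegate(String conf) { this.conf = conf; }
    }

    /** Stand-in for the client cache, assumed to be built from conf and the delegate. */
    static final class Cache {
        final String conf;
        final Delegate delegate;
        Cache(String conf, Delegate delegate) {
            this.conf = conf;
            this.delegate = delegate;
        }
    }

    /** Stand-in for the runner with three chained constructors. */
    static final class Runner {
        final String conf;
        final Delegate delegate;
        final Cache cache;

        // One argument: build the delegate, then forward.
        Runner(String conf) {
            this(conf, new Delegate(conf));
        }

        // Two arguments: build the cache, then forward.
        Runner(String conf, Delegate delegate) {
            this(conf, delegate, new Cache(conf, delegate));
        }

        // Three arguments: plain field assignment only.
        Runner(String conf, Delegate delegate, Cache cache) {
            this.conf = conf;
            this.delegate = delegate;
            this.cache = cache;
        }
    }

    public static void main(String[] args) {
        Runner runner = new Runner("demo-conf");
        System.out.println(runner.cache.delegate == runner.delegate);
    }
}
```

A side benefit of this layout is testability: a test can call the two- or three-argument constructor directly and pass in its own delegate or cache.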
