import numpy as np
import torch
from torchvision import transforms
from torchvision import datasets
from torch.utils.data import DataLoader
import torch.nn.functional as F
import torch.optim as optim
import matplotlib.pyplot as plt

accuracy_list = []
epoch_list = []

# prepare data
batch_size = 64

# convert PIL image to Tensor, then normalize with the MNIST mean and std
transform = transforms.Compose([transforms.ToTensor(),
                                transforms.Normalize((0.1307,), (0.3081,))])

train_dataset = datasets.MNIST(root='./dataset/mnist',
                               train=True,
                               download=True,
                               transform=transform)
train_loader = DataLoader(train_dataset,
                          shuffle=True,
                          batch_size=batch_size)

test_dataset = datasets.MNIST(root='./dataset/mnist',
                              train=False,
                              download=True,
                              transform=transform)
test_loader = DataLoader(test_dataset,
                         shuffle=False,
                         batch_size=batch_size)

class ResidualBlock(torch.nn.Module):
    def __init__(self, channels):
        super(ResidualBlock, self).__init__()
        self.channels = channels
        # 3x3 convolutions with padding=1 keep both channel count and spatial size
        self.conv1 = torch.nn.Conv2d(channels, channels,
                                     kernel_size=3, padding=1)
        self.conv2 = torch.nn.Conv2d(channels, channels,
                                     kernel_size=3, padding=1)

    def forward(self, x):
        y = F.relu(self.conv1(x))
        y = self.conv2(y)
        # skip connection: add the input before the final activation
        return F.relu(x + y)

class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 16, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(16, 32, kernel_size=5)
        self.mp = torch.nn.MaxPool2d(2)

        self.rblock1 = ResidualBlock(16)
        self.rblock2 = ResidualBlock(32)

        # 32 channels * 4 * 4 spatial positions = 512 features
        self.fc = torch.nn.Linear(512, 10)

    def forward(self, x):
        in_size = x.size(0)
        x = self.mp(F.relu(self.conv1(x)))
        x = self.rblock1(x)
        x = self.mp(F.relu(self.conv2(x)))
        x = self.rblock2(x)
        # flatten to (batch, 512) for the fully connected layer
        x = x.view(in_size, -1)
        x = self.fc(x)
        return x

model = Net()
device = torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')
model.to(device)

criterion = torch.nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=0.01, momentum=0.5)

def train(epoch):
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, target = data
        inputs, target = inputs.to(device), target.to(device)
        optimizer.zero_grad()

        # forward + backward + update
        outputs = model(inputs)
        loss = criterion(outputs, target)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_idx % 300 == 299:
            print('[%d, %5d] loss: %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0

# test
def test():
    correct = 0
    total = 0
    with torch.no_grad():
        for data in test_loader:
            images, labels = data
            images, labels = images.to(device), labels.to(device)
            outputs = model(images)
            _, predicted = torch.max(outputs.data, dim=1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy on test set: %d %%' % (100 * correct / total))
    accuracy_list.append(100 * correct / total)

if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()
        epoch_list.append(epoch)

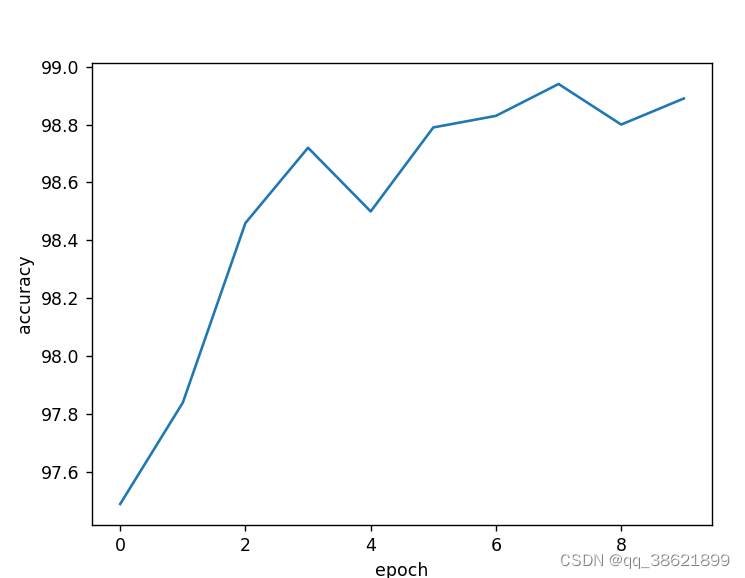

# plot test accuracy per epoch
plt.plot(epoch_list, accuracy_list)
plt.ylabel('accuracy')
plt.xlabel('epoch')
plt.show()
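The `torch.nn.Linear(512, 10)` layer in `Net` depends on the feature-map size after the two convolution and pooling stages. The sketch below traces that size for a 28x28 MNIST image using the standard output-size formula for convolutions. The helper names `conv_out`, `pool_out`, and `flattened_features` are made up for this illustration and are not part of the listing above; the sketch uses plain Python so it runs without PyTorch.

```python
def conv_out(size, kernel, padding=0, stride=1):
    """Output side length of a square convolution (hypothetical helper)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel):
    """Output side length of max pooling whose stride equals its kernel size."""
    return size // kernel


def flattened_features(side=28, out_channels=32):
    """Trace the spatial size through the layers used in Net.forward."""
    side = pool_out(conv_out(side, kernel=5), 2)  # conv1 + pool: 28 -> 24 -> 12
    side = conv_out(conv_out(side, 3, padding=1), 3, padding=1)  # rblock1: 12 -> 12
    side = pool_out(conv_out(side, kernel=5), 2)  # conv2 + pool: 12 -> 8 -> 4
    side = conv_out(conv_out(side, 3, padding=1), 3, padding=1)  # rblock2: 4 -> 4
    return out_channels * side * side


print(flattened_features())  # 32 * 4 * 4 = 512
```

If the input size or kernel sizes change, the first argument of `torch.nn.Linear` has to change to match the value this calculation gives.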

Results:

[1, 300] loss: 0.608
[1, 600] loss: 0.159
[1, 900] loss: 0.115
Accuracy on test set: 97 %
[2, 300] loss: 0.084
[2, 600] loss: 0.081
[2, 900] loss: 0.074
Accuracy on test set: 97 %
[3, 300] loss: 0.060
[3, 600] loss: 0.057
[3, 900] loss: 0.055
Accuracy on test set: 98 %
[4, 300] loss: 0.046
[4, 600] loss: 0.050
[4, 900] loss: 0.047
Accuracy on test set: 98 %
[5, 300] loss: 0.041
[5, 600] loss: 0.037
[5, 900] loss: 0.040
Accuracy on test set: 98 %
[6, 300] loss: 0.033
[6, 600] loss: 0.034
[6, 900] loss: 0.036
Accuracy on test set: 98 %
[7, 300] loss: 0.032
[7, 600] loss: 0.028
[7, 900] loss: 0.032
Accuracy on test set: 98 %
[8, 300] loss: 0.025
[8, 600] loss: 0.028
[8, 900] loss: 0.026
Accuracy on test set: 98 %
[9, 300] loss: 0.023
[9, 600] loss: 0.024
[9, 900] loss: 0.027
Accuracy on test set: 98 %
[10, 300] loss: 0.022
[10, 600] loss: 0.021
[10, 900] loss: 0.022
Accuracy on test set: 98 %
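The log shows the same whole-number accuracy for most epochs because `test()` formats a float with `%d`, which drops the fractional part. A minimal sketch of the difference follows; the function name `accuracy_strings` and the counts passed to it are hypothetical and are not taken from the run above.

```python
def accuracy_strings(correct, total):
    """Format one accuracy value two ways (hypothetical helper)."""
    accuracy = 100 * correct / total
    truncated = '%d %%' % accuracy   # same format string as in test()
    precise = '%.2f %%' % accuracy   # keeps two decimal places
    return truncated, precise


# Hypothetical counts, chosen only to illustrate the formatting
truncated, precise = accuracy_strings(9876, 10000)
print('truncated:', truncated)
print('precise:  ', precise)
```

Switching the format string in `test()` to `%.2f` would make small epoch-to-epoch improvements visible in the log; the values stored in `accuracy_list` are already unrounded floats, so the plot is not affected.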
