Analyzing the Robots Protocol

The book uses Jianshu as its example for analyzing a robots.txt file.

robots.txt

The contents of Jianshu's robots.txt file are as follows:

# See http://www.robotstxt.org/wc/norobots.html for documentation on how to use the robots.txt file
#
# To ban all spiders from the entire site uncomment the next two lines:
User-agent: *
Disallow: /search
Disallow: /convos/
Disallow: /notes/
Disallow: /admin/
Disallow: /adm/
Disallow: /p/0826cf4692f9
Disallow: /p/d8b31d20a867
Disallow: /collections/*/recommended_authors
Disallow: /trial/*
Disallow: /keyword_notes
Disallow: /stats-2017/*

User-agent: trendkite-akashic-crawler
Request-rate: 1/2 # load 1 page per 2 seconds
Crawl-delay: 60

User-agent: YisouSpider
Request-rate: 1/10 # load 1 page per 10 seconds
Crawl-delay: 60

User-agent: Cliqzbot
Disallow: /

User-agent: Googlebot
Request-rate: 2/1 # load 2 page per 1 seconds
Crawl-delay: 10
Allow: /

User-agent: Mediapartners-Google
Allow: /

Crawler code:

The book's code returns True, False, but whenever I ran it myself I got False, False:

from urllib.robotparser import RobotFileParser
import urllib.request

rp = RobotFileParser()
rp.set_url('http://www.jianshu.com/robots.txt')
# Essential step, even though it returns nothing
rp.read()
print(rp.can_fetch('*', 'http://www.jianshu.com/p/b67554025d7d'))
print(rp.can_fetch('*', "http://www.jianshu.com/search?q=python&page=l&type=collections"))

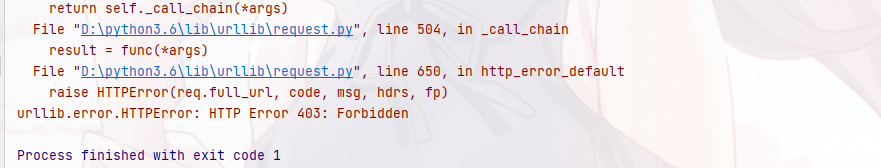

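print(rp.mtime())

So I started digging into the read() method of RobotFileParser and found that it calls urlopen. I suspected that urlopen sends the request without any browser header information, so the server treats it as a crawler and refuses access.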
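Why a refused request would show up as False, False can be checked without any network access. The sketch below is only an illustration under that assumption: it sets the disallow_all flag by hand, which is the flag read() sets when the server answers 401 or 403, and also shows that a parser which has never read or parsed anything denies every URL.

```python
from urllib.robotparser import RobotFileParser

url = 'http://www.jianshu.com/p/b67554025d7d'

rp_demo = RobotFileParser()
# Before any read() or parse(), the parser has no data and denies everything.
never_read = rp_demo.can_fetch('*', url)

# Simulate by hand what read() does when the server replies 401 or 403.
rp_demo.disallow_all = True
after_refusal = rp_demo.can_fetch('*', url)

print(never_read, after_refusal)  # False False
```

Either situation produces False for every URL, which matches the False, False I kept getting.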

# Try opening this link with urlopen directly
f = urllib.request.urlopen('http://www.jianshu.com/robots.txt')
print(f)

The result was exactly as suspected.
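To see the refusal as a status code rather than a traceback, the request can be wrapped in a small helper. This is a minimal sketch: probe and fake_forbidden are hypothetical names of my own, and fake_forbidden only stands in for a server that answers 403 so the example runs offline.

```python
import urllib.error
import urllib.request


def probe(url, opener=urllib.request.urlopen):
    """Return the HTTP status for url, or the error code if the server refuses."""
    try:
        with opener(url) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code


def fake_forbidden(url):
    """Hypothetical stand-in for a server that rejects the request with 403."""
    raise urllib.error.HTTPError(url, 403, 'Forbidden', None, None)


print(probe('http://www.jianshu.com/robots.txt', opener=fake_forbidden))  # 403
```

Calling probe with only the URL uses the real urlopen, so it reports whatever the server actually answers at that moment.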

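There are two ways to solve this:

①: Modify the read() method so that urlopen receives a Request object carrying a User-Agent header instead of the bare URL:

The original source of read() is: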

def read(self):
    """Reads the robots.txt URL and feeds it to the parser."""
    try:
        f = urllib.request.urlopen(self.url)
    except urllib.error.HTTPError as err:
        if err.code in (401, 403):
            self.disallow_all = True
        elif err.code >= 400 and err.code < 500:
            self.allow_all = True
    else:
        raw = f.read()
        self.parse(raw.decode("utf-8").splitlines())

The modified read() method:

def read(self):
    """Reads the robots.txt URL and feeds it to the parser."""
    try:
        # Send a browser-like User-Agent so the server does not reject the request
        req = urllib.request.Request(self.url, headers={'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:23.0) Gecko/20100101 Firefox/23.0'})
        # urlopen must stay inside the try block: it is the call that raises HTTPError
        f = urllib.request.urlopen(req)
    except urllib.error.HTTPError as err:
        if err.code in (401, 403):
            self.disallow_all = True
        elif err.code >= 400 and err.code < 500:
            self.allow_all = True
    else:
        raw = f.read()
        self.parse(raw.decode("utf-8").splitlines())

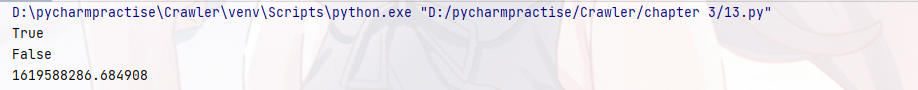

Running the code again now succeeds!
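Editing the standard library file in place affects every program on the machine, so the same change could instead live in a subclass. The following is a sketch under that assumption: HeaderRobotFileParser and its user_agent parameter are hypothetical names I introduce here, not part of urllib.

```python
import urllib.error
import urllib.request
from urllib.robotparser import RobotFileParser


class HeaderRobotFileParser(RobotFileParser):
    """Hypothetical subclass whose read() sends a custom User-Agent header."""

    def __init__(self, url='', user_agent='Mozilla/5.0'):
        super().__init__(url)
        self.user_agent = user_agent

    def read(self):
        """Fetch robots.txt with the header set, then feed it to the parser."""
        req = urllib.request.Request(
            self.url, headers={'User-Agent': self.user_agent})
        try:
            f = urllib.request.urlopen(req)
        except urllib.error.HTTPError as err:
            # Same status handling as the standard read()
            if err.code in (401, 403):
                self.disallow_all = True
            elif 400 <= err.code < 500:
                self.allow_all = True
        else:
            raw = f.read()
            self.parse(raw.decode('utf-8').splitlines())
```

It would be used like the original class: create HeaderRobotFileParser('http://www.jianshu.com/robots.txt'), call read(), then call can_fetch() as before.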

②: Skip read() and use parse() instead, that is, fetch robots.txt yourself and pass its lines to parse() for analysis.

The code is as follows:

rps = RobotFileParser()
headers = {'User-Agent': 'Mozilla/5.0 (Windows NT 6.1; WOW64; rv:23.0) Gecko/20100101 Firefox/23.0'}
request = urllib.request.Request('http://www.jianshu.com/robots.txt', headers=headers)
lines = urllib.request.urlopen(request).read().decode('utf-8').splitlines()
# Parse the contents of robots.txt
rps.parse(lines)
# With a Request that carries headers, the first check now returns True
print(rps.can_fetch('*', 'http://www.jianshu.com/p/b67554025d7d'))
print(rps.can_fetch('*', "http://www.jianshu.com/search?q=python&page=l&type=collections"))
print(rps.mtime())

The result: success.
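Because parse() accepts any list of lines, the rules quoted at the top of this post can also be checked with no network access at all. The sketch below uses a shortened excerpt of that file, held in a local string I call ROBOTS_TXT (my own name), and also reads back the Crawl-delay and Request-rate values with crawl_delay() and request_rate().

```python
from urllib.robotparser import RobotFileParser

# Excerpt of the rules quoted above, kept as a local string (no network access).
ROBOTS_TXT = """\
User-agent: *
Disallow: /search
Disallow: /notes/

User-agent: YisouSpider
Request-rate: 1/10 # load 1 page per 10 seconds
Crawl-delay: 60

User-agent: Cliqzbot
Disallow: /
"""

offline = RobotFileParser()
offline.parse(ROBOTS_TXT.splitlines())

article = 'http://www.jianshu.com/p/b67554025d7d'
search = 'http://www.jianshu.com/search?q=python'

print(offline.can_fetch('*', article))         # True
print(offline.can_fetch('*', search))          # False
print(offline.can_fetch('Cliqzbot', article))  # False
print(offline.crawl_delay('YisouSpider'))      # 60
print(offline.request_rate('YisouSpider'))     # RequestRate(requests=1, seconds=10)
```

The first two results are the True, False that the book reports for the same two URLs.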
