For installing the base Docker environment, see:

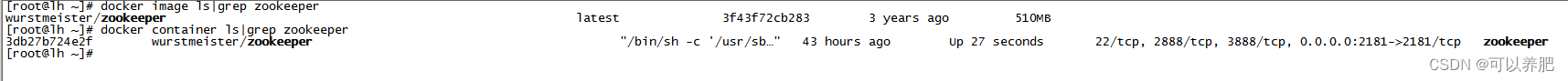

1. Pull and start ZooKeeper

docker pull wurstmeister/zookeeper

docker run -d --name zookeeper -p 2181:2181 -t wurstmeister/zookeeper

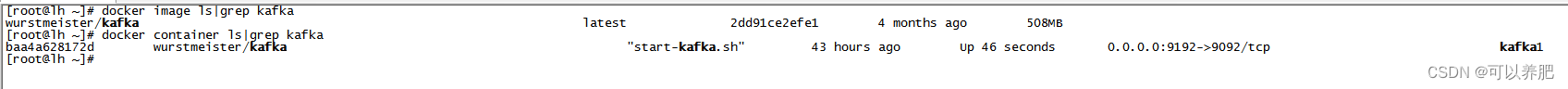

2. Pull and start Kafka

docker pull wurstmeister/kafka

docker run -d --name kafka1 -p 9192:9092 \
-e KAFKA_BROKER_ID=1 \
-e KAFKA_ZOOKEEPER_CONNECT=172.17.0.14:2181 \
-e KAFKA_ADVERTISED_LISTENERS=PLAINTEXT://x.x.x.x:9192 \
-e KAFKA_LISTENERS=PLAINTEXT://0.0.0.0:9092 \
-t wurstmeister/kafka

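Here x.x.x.x is the cloud server's public IP address, and 9192 is the host port mapped for external access. Clients connect to the address given in KAFKA_ADVERTISED_LISTENERS, so it must be reachable from wherever the producers and consumers run.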
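Before moving on, it can help to confirm that the mapped port is actually reachable from a client machine. The following is a minimal sketch using only the Java standard library; the class name `PortCheck` and the default host and port in `main` are assumptions made for illustration. It only verifies that a TCP connection can be opened, not that the advertised listener is configured correctly.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

public class PortCheck {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMillis. */
    public static boolean isReachable(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, timed out, or the host could not be resolved
            return false;
        }
    }

    public static void main(String[] args) {
        // Hypothetical defaults; pass the real public address and mapped port as arguments
        String host = args.length > 0 ? args[0] : "127.0.0.1";
        int port = args.length > 1 ? Integer.parseInt(args[1]) : 9192;
        System.out.println(host + ":" + port + " reachable: " + isReachable(host, port, 3000));
    }
}
```

If the check fails from outside the server but succeeds on the server itself, the cloud provider's firewall or security group rules for port 9192 are a likely place to look.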

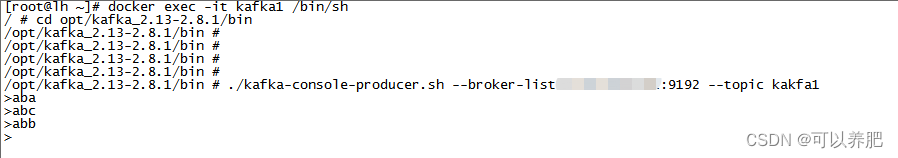

3. Test Kafka producing and consuming

1. Enter the Kafka container

docker exec -it kafka1 /bin/sh

2. Change to the Kafka bin directory

cd /opt/kafka_2.13-2.8.1/bin

3. Create a test topic

./kafka-topics.sh --create --zookeeper 172.17.0.14 --replication-factor 1 --partitions 1 --topic kakfa1

4. Produce messages

./kafka-console-producer.sh --broker-list x.x.x.x:9192 --topic kakfa1

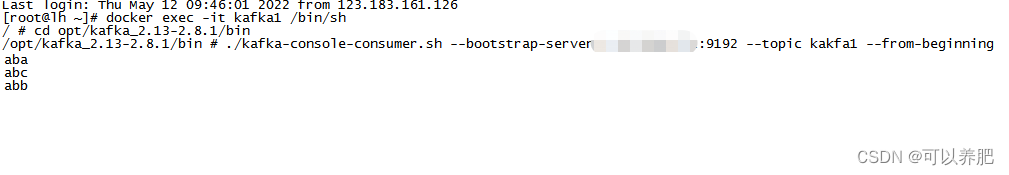

5. Consume messages

./kafka-console-consumer.sh --bootstrap-server x.x.x.x:9192 --topic kakfa1 --from-beginning

Producing

Consuming


4. Write the Java program

pom.xml

<?xml version="1.0" encoding="UTF-8"?>
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <groupId>com.lihao</groupId>
    <artifactId>zike</artifactId>
    <version>1.0-SNAPSHOT</version>
    <packaging>jar</packaging>
    <name>Spring Boot Blank Project (from https://github.com/making/spring-boot-blank)</name>
    <parent>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-starter-parent</artifactId>
        <version>2.6.0</version>
    </parent>
    <properties>
        <project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>
        <start-class>com.lihao.App</start-class>
        <java.version>1.8</java.version>
        <lombok.version>1.14.8</lombok.version>
    </properties>
    <dependencies>
        <dependency>
            <groupId>org.springframework.boot</groupId>
            <artifactId>spring-boot-starter-web</artifactId>
        </dependency>
        <dependency>
            <groupId>org.projectlombok</groupId>
            <artifactId>lombok</artifactId>
            <version>${lombok.version}</version>
            <scope>provided</scope>
        </dependency>
        <dependency>
            <groupId>org.springframework.kafka</groupId>
            <artifactId>spring-kafka</artifactId>
        </dependency>
    </dependencies>
    <build>
        <plugins>
            <plugin>
                <groupId>org.springframework.boot</groupId>
                <artifactId>spring-boot-maven-plugin</artifactId>
                <dependencies>
                    <dependency>
                        <groupId>org.springframework</groupId>
                        <artifactId>springloaded</artifactId>
                        <version>1.2.8.RELEASE</version>
                    </dependency>
                </dependencies>
            </plugin>
        </plugins>
    </build>
</project>

Configuration file

# Development environment configuration
server:
  port: 8081
topic:
spring:
  application:
    name: kakfa1
  kafka:
    bootstrap-servers:

Configuration class

package com.lihao.config;

import org.apache.kafka.clients.consumer.ConsumerConfig;
import org.apache.kafka.common.serialization.StringDeserializer;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.kafka.annotation.EnableKafka;
import org.springframework.kafka.config.ConcurrentKafkaListenerContainerFactory;
import org.springframework.kafka.core.ConsumerFactory;
import org.springframework.kafka.core.DefaultKafkaConsumerFactory;

import java.util.HashMap;
import java.util.Map;

@Configuration
@EnableKafka
public class KafkaConnectConfig {

    @Value("${spring.kafka.bootstrap-servers}")
    private String bootStrapServer;

    /**
     * Listener container factory referenced by name from @KafkaListener.
     */
    @Bean("containerFactory")
    public ConcurrentKafkaListenerContainerFactory<String, String> kafkaListenerContainerFactory() {
        ConcurrentKafkaListenerContainerFactory<String, String> factory = new ConcurrentKafkaListenerContainerFactory<>();
        factory.setConsumerFactory(consumerFactory());
        return factory;
    }

    /**
     * Consumer factory configuration.
     *
     * @return a consumer factory built from consumerProps()
     */
    @Bean
    public ConsumerFactory<String, String> consumerFactory() {
        return new DefaultKafkaConsumerFactory<>(consumerProps());
    }

    /**
     * Consumer properties.
     *
     * @return the Kafka consumer configuration map
     */
    @Bean
    public Map<String, Object> consumerProps() {
        Map<String, Object> props = new HashMap<>();
        props.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, bootStrapServer);
        props.put(ConsumerConfig.GROUP_ID_CONFIG, "1");
        // Start from the earliest offset when the group has no committed offset
        props.put(ConsumerConfig.AUTO_OFFSET_RESET_CONFIG, "earliest");
        props.put(ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG, true);
        props.put(ConsumerConfig.AUTO_COMMIT_INTERVAL_MS_CONFIG, "100");
        props.put(ConsumerConfig.SESSION_TIMEOUT_MS_CONFIG, "15000");
        props.put(ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);
        props.put(ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG, StringDeserializer.class);
        return props;
    }
}
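For readers who have not seen them before, the `ConsumerConfig` constants used in `consumerProps()` are just names for plain Kafka property keys. The sketch below builds the same settings using the literal keys and only the Java standard library; the class name `ConsumerPropsSketch` is an assumption for illustration, and the deserializers are given as class-name strings so that the example does not depend on the Kafka client jar.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class ConsumerPropsSketch {

    /** Builds the consumer settings from the configuration class using literal Kafka property names. */
    public static Map<String, Object> consumerProps(String bootstrapServers) {
        Map<String, Object> props = new LinkedHashMap<>();
        props.put("bootstrap.servers", bootstrapServers);      // ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG
        props.put("group.id", "1");                            // ConsumerConfig.GROUP_ID_CONFIG
        props.put("auto.offset.reset", "earliest");            // ConsumerConfig.AUTO_OFFSET_RESET_CONFIG
        props.put("enable.auto.commit", true);                 // ConsumerConfig.ENABLE_AUTO_COMMIT_CONFIG
        props.put("auto.commit.interval.ms", "100");           // ConsumerConfig.AUTO_COMMIT_INTERVAL_MS_CONFIG
        props.put("session.timeout.ms", "15000");              // ConsumerConfig.SESSION_TIMEOUT_MS_CONFIG
        props.put("key.deserializer",                          // ConsumerConfig.KEY_DESERIALIZER_CLASS_CONFIG
                "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer",                        // ConsumerConfig.VALUE_DESERIALIZER_CLASS_CONFIG
                "org.apache.kafka.common.serialization.StringDeserializer");
        return props;
    }

    public static void main(String[] args) {
        // x.x.x.x:9192 is the same placeholder address used throughout this article
        for (Map.Entry<String, Object> entry : consumerProps("x.x.x.x:9192").entrySet()) {
            System.out.println(entry.getKey() + " = " + entry.getValue());
        }
    }
}
```

Seeing the literal keys makes it easier to compare these settings against the Kafka consumer configuration documentation.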

Consumer listener class

package com.lihao.kafka;

import org.springframework.kafka.annotation.KafkaListener;
import org.springframework.kafka.support.KafkaHeaders;
import org.springframework.messaging.MessageHeaders;
import org.springframework.messaging.handler.annotation.Header;
import org.springframework.messaging.handler.annotation.Headers;
import org.springframework.messaging.handler.annotation.Payload;
import org.springframework.stereotype.Component;

@Component
public class KafkaConsumerServiceImpl {

    // The topic property may hold a comma-separated list of topic names
    @KafkaListener(topics = {"#{'${topic}'.split(',')}"}, containerFactory = "containerFactory")
    public void topicMessage(@Payload String data,
                             @Header(KafkaHeaders.RECEIVED_TOPIC) String topic,
                             @Headers MessageHeaders messageHeaders,
                             @Header(KafkaHeaders.OFFSET) String offset) {
        if (data != null) {
            System.out.println("=============>>");
            System.out.println("data:" + data);
            System.out.println("topic:" + topic);
            System.out.println("messageHeaders:" + messageHeaders);
            System.out.println("offset:" + offset);
        }
    }
}
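The `topics` expression in the listener, `#{'${topic}'.split(',')}`, first substitutes the `topic` property and then calls `String.split(",")` on the result. A minimal standard-library sketch of that split step follows; the class name `TopicSplitSketch` and the second topic name `orders` are hypothetical.

```java
import java.util.Arrays;

public class TopicSplitSketch {

    /** Splits a comma-separated topic property into individual topic names. */
    public static String[] splitTopics(String topicProperty) {
        return topicProperty.split(",");
    }

    public static void main(String[] args) {
        // A single topic, as used in this article
        System.out.println(Arrays.toString(splitTopics("kakfa1")));        // prints [kakfa1]
        // Two topics; "orders" is a made-up name for illustration
        System.out.println(Arrays.toString(splitTopics("kakfa1,orders"))); // prints [kakfa1, orders]
    }
}
```

Note that `split` does not trim whitespace, so a value such as `kakfa1, orders` would yield a second topic name with a leading space.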

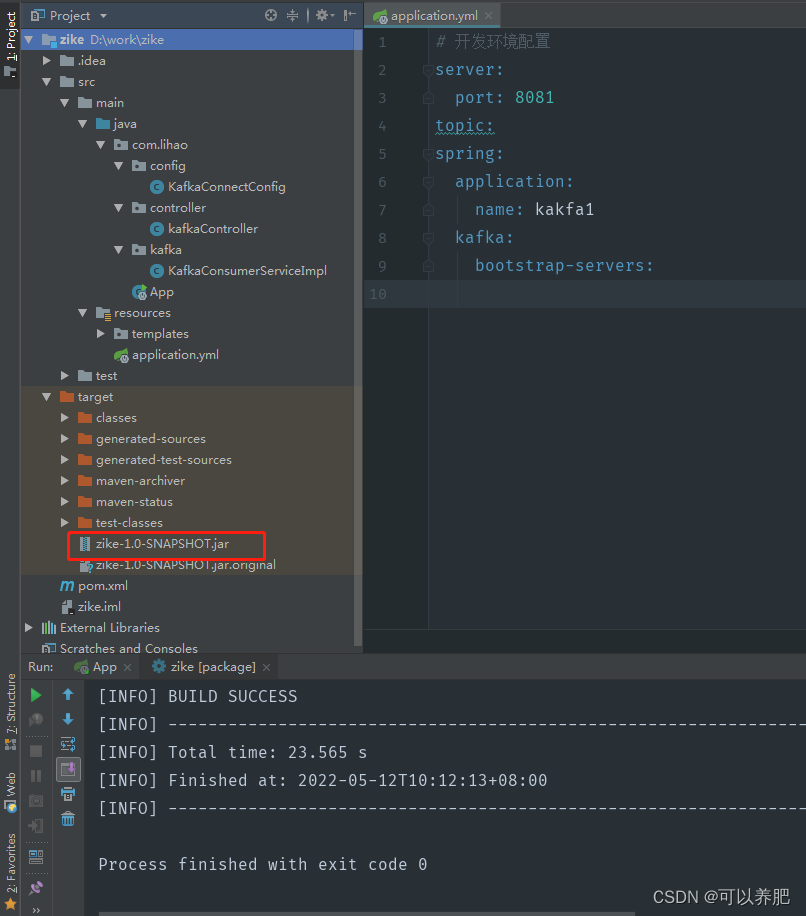

Packaging

5. Build the image

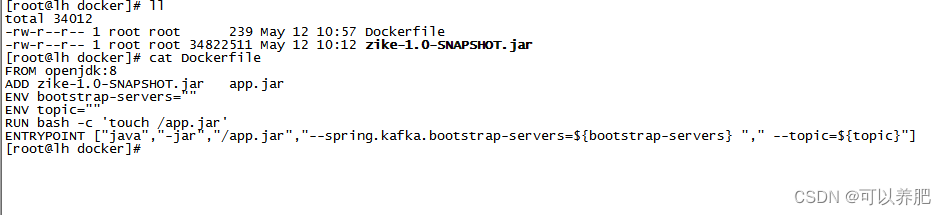

Upload the jar and the Dockerfile to the server

Dockerfile

FROM openjdk:8

ADD zike-1.0-SNAPSHOT.jar app.jar

ENV bootstrap-servers=""

ENV topic=""

RUN bash -c 'touch /app.jar'

ENTRYPOINT ["java","-jar","/app.jar","--spring.kafka.bootstrap-servers=${bootstrap-servers} "," --topic=${topic}"]

Note that this ENTRYPOINT uses the exec (JSON array) form, so Docker does not start a shell and does not expand ${bootstrap-servers} or ${topic}. The placeholders reach the application as literal text, where Spring Boot's property placeholder resolution can look them up in the container's environment variables.

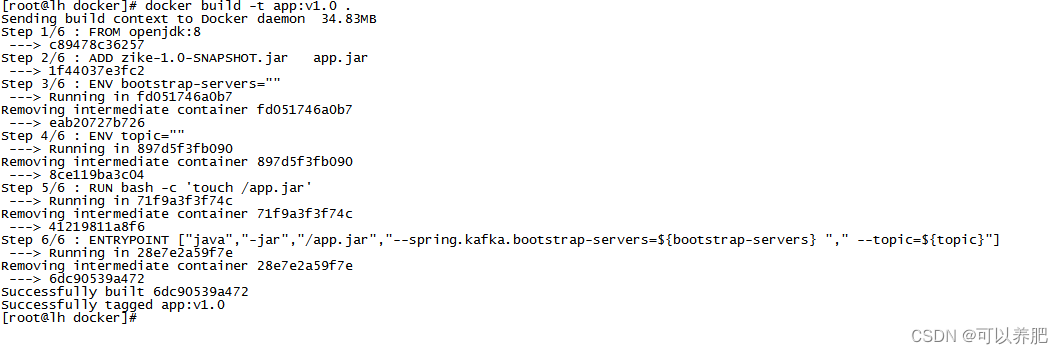

Build the local image

docker build -t app:v1.0 .

6. Run a container from the custom image to test it

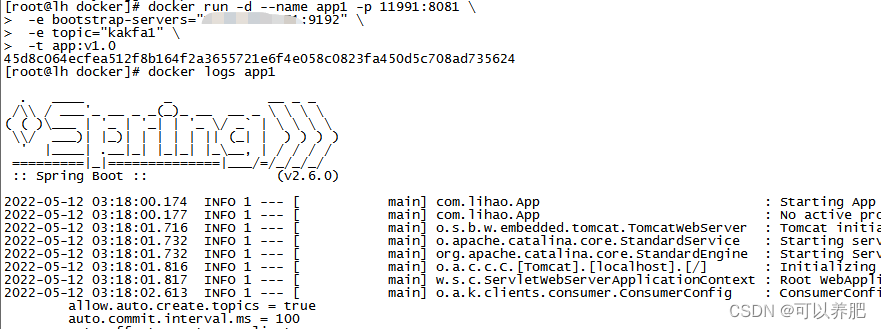

Option 1 (docker command)

docker run -d --name app1 -p 11991:8081 \
-e bootstrap-servers=x.x.x.x:9192 \
-e topic="kakfa1" \
-t app:v1.0

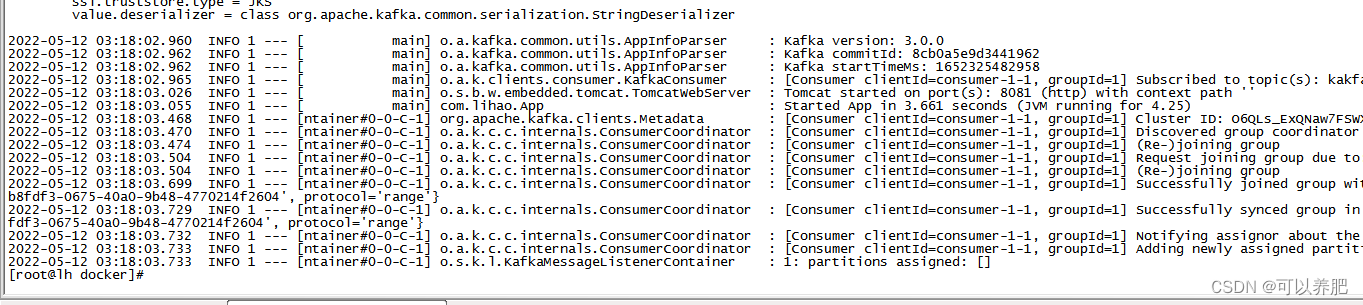

Startup log


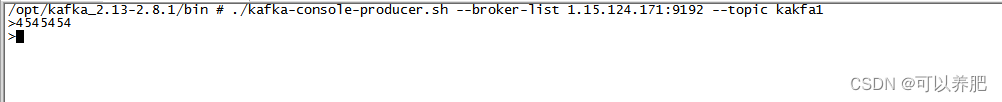

生产

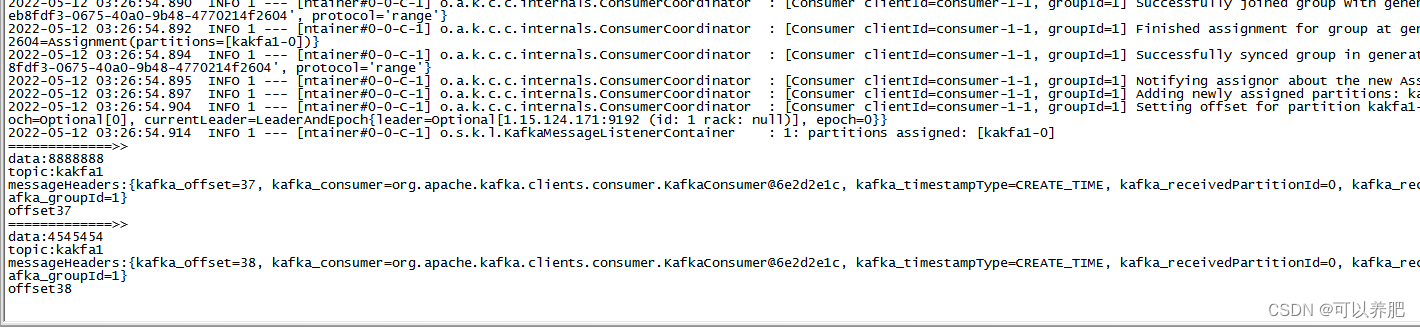

消费

Option 2 (Docker API)

Enable the Docker API

vim /lib/systemd/system/docker.service

Find the ExecStart line and append the extra -H option so the daemon also listens on TCP port 2222:

ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.sock -H 0.0.0.0:2222

Reload the unit files and restart Docker

systemctl daemon-reload

systemctl restart docker

Test the Docker API

For the remaining operations, see the Docker API documentation.
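As a starting point for testing, the sketch below sends plain HTTP GET requests to the daemon using only the Java standard library. It assumes the daemon is listening on port 2222 as configured above and is reachable without TLS; the class name `DockerApiSketch` and the default base URL are assumptions. `/version` and `/containers/json` are Docker Engine API endpoints that return JSON.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.HttpURLConnection;
import java.net.URL;
import java.nio.charset.StandardCharsets;

public class DockerApiSketch {

    /** Sends a GET request and returns the response body as a single string. */
    public static String get(String url) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(url).openConnection();
        conn.setRequestMethod("GET");
        conn.setConnectTimeout(3000);
        conn.setReadTimeout(3000);
        try (BufferedReader reader = new BufferedReader(
                new InputStreamReader(conn.getInputStream(), StandardCharsets.UTF_8))) {
            StringBuilder body = new StringBuilder();
            String line;
            while ((line = reader.readLine()) != null) {
                body.append(line);
            }
            return body.toString();
        } finally {
            conn.disconnect();
        }
    }

    public static void main(String[] args) throws IOException {
        // Hypothetical default; pass e.g. http://x.x.x.x:2222 as the first argument
        String base = args.length > 0 ? args[0] : "http://127.0.0.1:2222";
        System.out.println(get(base + "/version"));          // daemon and API version info
        System.out.println(get(base + "/containers/json"));  // list of running containers
    }
}
```

Creating and starting the application container through the API follows the same pattern with POST requests, as described in the Docker API documentation.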
