import numpy as np
import torch
from skimage.measure import shannon_entropy
from torch.utils.data import DataLoader, Dataset

hr_data_dir: Ji/Data/IXI_npy/4Scale/train/IXI_training_hr_t2_scale_by_4_imgs.npy
lr_data_dir: Ji/Data/IXI_npy/4Scale/train/IXI_training_lr_t2_scale_by_4_imgs.npy
other_data_dir: Ji/Data/IXI_npy/4Scale/train/IXI_training_hr_t1_scale_by_4_imgs.npy

brain_dataset = load_superres_data(

    args.hr_data_dir,
    args.lr_data_dir,
    args.other_data_dir,
)

def load_superres_data(hr_data_dir, lr_data_dir, other_data_dir):
    # Load the super-resolution data from the given .npy paths.
    return load_data(
        hr_data_dir=hr_data_dir,
        lr_data_dir=lr_data_dir,
        other_data_dir=other_data_dir,
    )

def load_data(

    *,
    hr_data_dir,
    lr_data_dir,
    other_data_dir,
    deterministic=False,  # accepted but not used in this snippet
):
    return BraTSMRI(hr_data_dir, lr_data_dir, other_data_dir)

class BraTSMRI(Dataset):

    def __init__(self, hr_data_name, lr_data_name, other_data_name):
        # Load each file with np.load in read-only memory-mapped mode
        # (mmap_mode="r"), then keep the 20 middle slices of every volume
        # (indices 40 through 59; the upper bound 60 is exclusive).
        self.hr_data = np.load(hr_data_name, mmap_mode="r")[:, 40:60]
        self.lr_data = np.load(lr_data_name, mmap_mode="r")[:, 40:60]
        self.other_data = np.load(other_data_name, mmap_mode="r")[:, 40:60]
        # Merge the subject and slice axes so that every 2D slice is one sample.
        num_subject, num_slice, h, w = self.hr_data.shape
        self.hr_data = self.hr_data.reshape(num_subject * num_slice, h, w)
        self.lr_data = self.lr_data.reshape(num_subject * num_slice, h, w)
        self.other_data = self.other_data.reshape(num_subject * num_slice, h, w)

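        # data_dict maps a rounded Shannon entropy value to the indices of
        # the HR slices that have that entropy.
        data_dict = {}
        for s in range(len(self.hr_data)):
            # Compute the Shannon (information) entropy of the slice and
            # round it to the nearest integer.
            entropy = np.round(shannon_entropy(self.hr_data[s]))
            if entropy in data_dict:
                # Entropy value already seen: append this slice index to its list.
                data_dict[entropy].append(s)
            else:
                # New entropy value: create its entry with this slice index.
                data_dict[entropy] = [s]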

        self.hr_data = torch.from_numpy(self.hr_data).float()
        self.lr_data = torch.from_numpy(self.lr_data).float()
        self.other_data = torch.from_numpy(self.other_data).float()
        # torch.unsqueeze inserts a channel axis at dim 1, giving tensors of
        # shape (N, 1, H, W), which the network needs downstream.
        self.hr_data = torch.unsqueeze(self.hr_data, 1)
        self.lr_data = torch.unsqueeze(self.lr_data, 1)
        self.other_data = torch.unsqueeze(self.other_data, 1)
        self.data_dict = data_dict
        print(self.hr_data.shape, self.lr_data.shape, self.other_data.shape)

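    def __len__(self):
        # Size of the first dimension of self.hr_data, i.e. the total number
        # of slices across all subjects.
        return self.hr_data.shape[0]

    def __getitem__(self, index):
        return self.hr_data[index], self.lr_data[index], self.other_data[index]

# The two methods below use self.data and self.batch_size, which BraTSMRI does
# not define, so they belong to another class that is not shown in this excerpt.
def get_dataloader(self):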

    loader = self.load_dataloader()
    for hr_data, lr_data, other_data in loader:
        # Pack the LR batch and the other-contrast batch as keyword arguments for the model.
        model_kwargs = {"low_res": lr_data, "other": other_data}
        yield hr_data, model_kwargs

def load_dataloader(self, deterministic=False):

    # deterministic=True keeps the sample order fixed; otherwise every pass is shuffled.
    loader = DataLoader(
        self.data,
        batch_size=self.batch_size,
        shuffle=not deterministic,
        num_workers=8,
        drop_last=True,
        pin_memory=True,
    )
    # Iterate over the loader forever so the caller never runs out of batches.
    while True:
        yield from loader
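The `while True: yield from loader` idiom in `load_dataloader` turns a finite loader into an endless stream, and `get_dataloader` repackages each batch on top of it. Below is a minimal sketch of that pattern using only the standard library. The names `cycle_forever`, `with_model_kwargs`, and `fake_loader` are made up for illustration and do not appear in the DisC-Diff code; a plain list of tuples stands in for the `DataLoader`.

```python
from itertools import islice


def cycle_forever(loader):
    """Yield items from `loader` endlessly, restarting it after each pass."""
    while True:
        # Each `yield from` calls iter(loader) again, so a shuffling
        # DataLoader would draw a new order on every pass.
        yield from loader


def with_model_kwargs(batches):
    """Repack (hr, lr, other) triples as (hr, model_kwargs) pairs."""
    for hr, lr, other in batches:
        yield hr, {"low_res": lr, "other": other}


# Hypothetical stand-in for a DataLoader that yields two batches per pass.
fake_loader = [("hr0", "lr0", "t1_0"), ("hr1", "lr1", "t1_1")]

stream = with_model_kwargs(cycle_forever(fake_loader))

# The stream never ends, so the consumer has to bound it explicitly.
first_three = list(islice(stream, 3))
for hr, kwargs in first_three:
    print(hr, kwargs)
```

Because the generator never raises `StopIteration`, the training loop has to decide when to stop, for example by counting steps.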

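Going back to `BraTSMRI.__init__`, the slicing, reshaping, and entropy grouping can be checked on synthetic data without the IXI files. The sketch below is only an illustration under stated assumptions: the array sizes are invented, `shannon_entropy_bits` is a NumPy-only stand-in written for this example (base-2 entropy of the empirical pixel-value distribution, assumed to approximate what `skimage.measure.shannon_entropy` returns), and `np.newaxis` replaces `torch.unsqueeze` so that the block needs only NumPy.

```python
import numpy as np


def shannon_entropy_bits(image):
    """Base-2 entropy of the empirical distribution of pixel values."""
    _, counts = np.unique(image, return_counts=True)
    p = counts / counts.sum()
    return float(-np.sum(p * np.log2(p)))


rng = np.random.default_rng(0)
# Synthetic stand-in for an array laid out as (subjects, slices, H, W).
volumes = rng.integers(0, 256, size=(2, 100, 8, 8)).astype(np.float32)

# Keep slices 40..59 of every subject, then merge the first two axes.
mid = volumes[:, 40:60]
num_subject, num_slice, h, w = mid.shape
slices = mid.reshape(num_subject * num_slice, h, w)

# Group slice indices by rounded entropy, as data_dict does.
buckets = {}
for idx, img in enumerate(slices):
    key = int(np.round(shannon_entropy_bits(img)))
    buckets.setdefault(key, []).append(idx)

# Add the channel axis: (N, H, W) -> (N, 1, H, W).
batch_ready = slices[:, np.newaxis]
print(slices.shape, batch_ready.shape)
```

After the reshape, flat index `s` corresponds to subject `s // num_slice` and to slice `40 + s % num_slice` of the original volume.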
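[MICCAI 2023, Early Accept] DisC-Diff: Disentangled Conditional Diffusion Model for Multi-Contrast MRI Super-Resolution

This post introduces DisC-Diff, a disentangled conditional diffusion model for multi-contrast MRI super-resolution that was accepted early at MICCAI 2023. It covers the dataset link and a walkthrough of the code.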


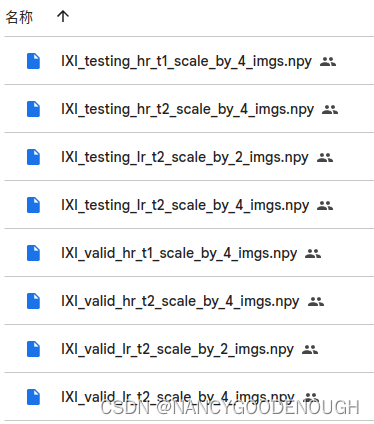

https://drive.google.com/drive/folders/1i2nj-xnv0zBRC-jOtu079Owav12WIpDE

