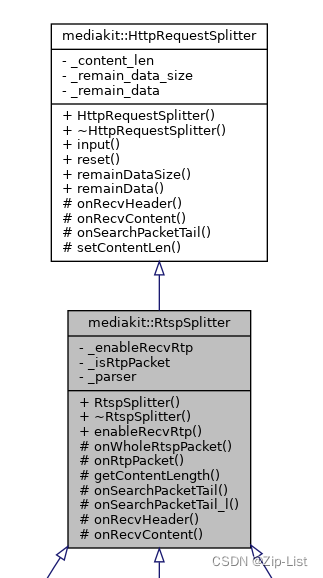

RtspSplitter

Structure

```cpp
bool _enableRecvRtp = false; // whether interleaved RTP may be received
bool _isRtpPacket = false;   // whether the current packet is an RTP packet
Parser _parser;              // RTSP message parser
```

Overrides from HttpRequestSplitter

```cpp
ssize_t onRecvHeader(const char *data, size_t len) override;
void onRecvContent(const char *data, size_t len) override;
const char *onSearchPacketTail(const char *data, size_t len) override;
```

To be implemented by subclasses

```cpp
/**
 * Called for every complete RTSP message, including content such as the SDP
 * @param parser the RTSP message
 */
virtual void onWholeRtspPacket(Parser &parser) = 0;

/**
 * Called for every RTP packet
 * @param data
 * @param len
 */
virtual void onRtpPacket(const char *data, size_t len) = 0;
```

override

onSearchPacketTail

```cpp
const char *RtspSplitter::onSearchPacketTail(const char *data, size_t len) {
    auto ret = onSearchPacketTail_l(data, len);
    if (ret) {
        return ret;
    }
    if (len > 256 * 1024) {
        // buffered RTP data exceeds 256 KB: resync on the next '$'
        ret = (char *) memchr(data, '$', len);
        if (!ret) {
            WarnL << "rtp buffer overflow:" << hexdump(data, 1024);
            reset();
        }
    }
    return ret;
}
```

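Why does data starting with **$** mean an RTP packet?

When RTP is carried over the RTSP TCP connection (interleaved mode, RFC 2326 section 10.12), every RTP/RTCP packet is framed as `$` (0x24), a 1-byte channel identifier and a 2-byte big-endian length, followed by the packet itself. RTSP text messages begin with a method name or `RTSP/1.0`, never with `$`, so the first byte is enough to tell them apart.

Further reading (CSDN blog of 土豆西瓜大芝麻): "rtp载荷H264解包过程分析,ffmpeg解码qt展示" (analysis of H.264 RTP payload depacketization, decoding with ffmpeg and displaying in Qt)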

```cpp
const char *RtspSplitter::onSearchPacketTail_l(const char *data, size_t len) {
    if (!_enableRecvRtp || data[0] != '$') {
        // this is an RTSP message
        _isRtpPacket = false;
        return HttpRequestSplitter::onSearchPacketTail(data, len);
    }
    // this is an RTP packet
    if (len < 4) {
        // not enough data yet
        return nullptr;
    }
    uint16_t length = (((uint8_t *)data)[2] << 8) | ((uint8_t *)data)[3];
    if (len < (size_t)(length + 4)) {
        // not enough data yet
        return nullptr;
    }
    // return the end of the RTP packet
    _isRtpPacket = true;
    return data + 4 + length;
}
```
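The same framing check can be written as a standalone function outside ZLMediaKit: it reads the `$`, the channel byte and the 16-bit big-endian length, and reports how many bytes make up one complete interleaved frame. This is a sketch; `InterleavedFrame`, `parseInterleaved` and the -1 / 0 / byte-count return convention are hypothetical choices made for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// One RTSP interleaved frame: '$' | channel (1 byte) | length (2 bytes, big-endian) | payload
struct InterleavedFrame {
    uint8_t channel = 0;              // channel id negotiated with "interleaved=" in SETUP
    uint16_t payload_len = 0;         // length of the RTP/RTCP packet after the 4-byte header
    const uint8_t *payload = nullptr; // points into the caller's buffer
};

// Returns the frame size (4 + payload_len) when a whole frame is buffered,
// 0 when more bytes are needed, and -1 when the data is not interleaved binary data.
long parseInterleaved(const uint8_t *data, size_t len, InterleavedFrame &out) {
    if (len == 0) {
        return 0; // nothing buffered yet
    }
    if (data[0] != '$') {
        return -1; // an RTSP text message, not RTP/RTCP
    }
    if (len < 4) {
        return 0; // 4-byte header incomplete
    }
    uint16_t length = static_cast<uint16_t>((data[2] << 8) | data[3]);
    if (len < static_cast<size_t>(length) + 4) {
        return 0; // payload incomplete
    }
    out.channel = data[1];
    out.payload_len = length;
    out.payload = data + 4;
    return 4 + static_cast<long>(length);
}
```

A caller would consume the returned number of bytes from its receive buffer and keep the rest for the next call, which is what returning the packet tail pointer achieves in RtspSplitter.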

onRecvHeader

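- If the data is RTP interleaved in the RTSP stream (it starts with `$`), call onRtpPacket.
- If it is an RTSP message without content, such as OPTIONS, SETUP or RECORD, call onWholeRtspPacket right away.
- If it is an RTSP message with content, such as ANNOUNCE (which carries the SDP), return the content length to HttpRequestSplitter::input, which collects the body and then calls onRecvContent.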

```cpp
ssize_t RtspSplitter::onRecvHeader(const char *data, size_t len) {
    PrintD("onRecvHeader%s", data);
    if (_isRtpPacket) {
        onRtpPacket(data, len);
        return 0;
    }
    _parser.Parse(data);
    auto ret = getContentLength(_parser);
    if (ret == 0) {
        onWholeRtspPacket(_parser);
        _parser.Clear();
    }
    return ret;
}
```

onRecvContent

```cpp
void RtspSplitter::onRecvContent(const char *data, size_t len) {
    PrintD("onRecvContent%s", data);
    _parser.setContent(string(data, len));
    onWholeRtspPacket(_parser);
    _parser.Clear();
}
```

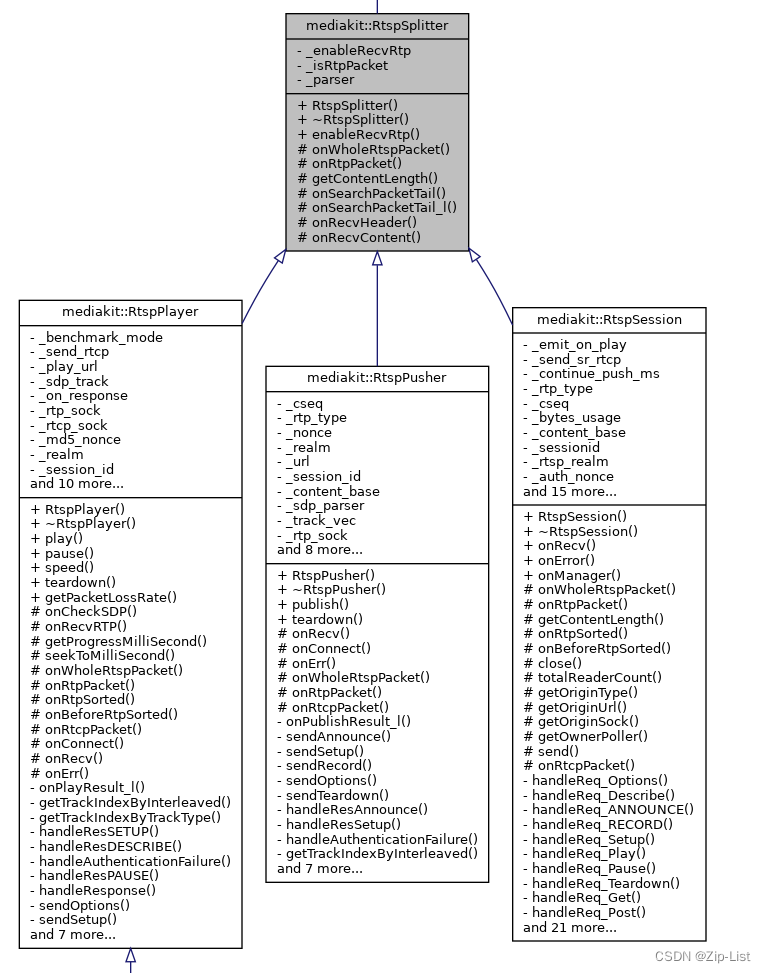

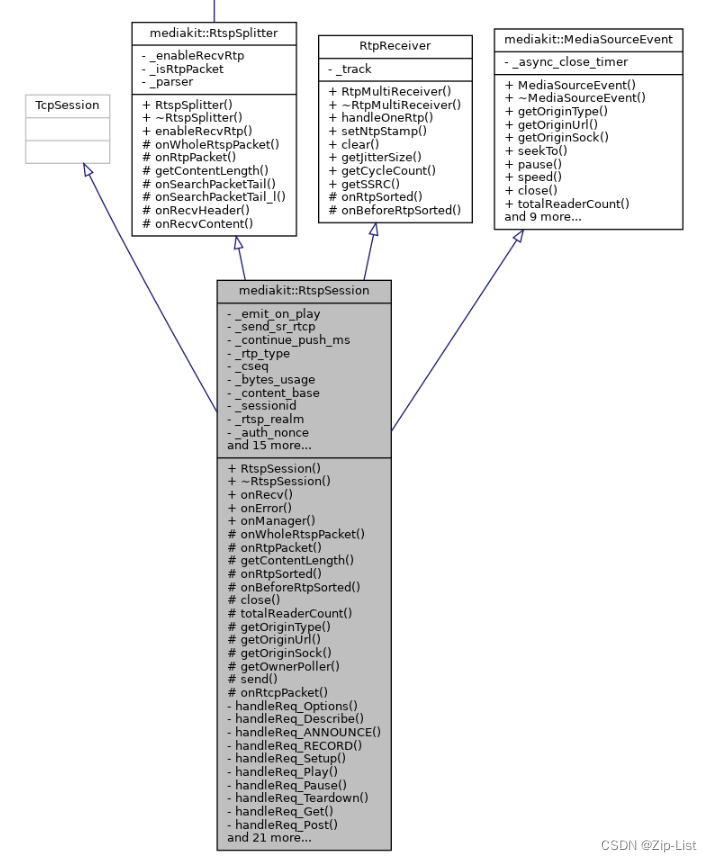

RtspSession

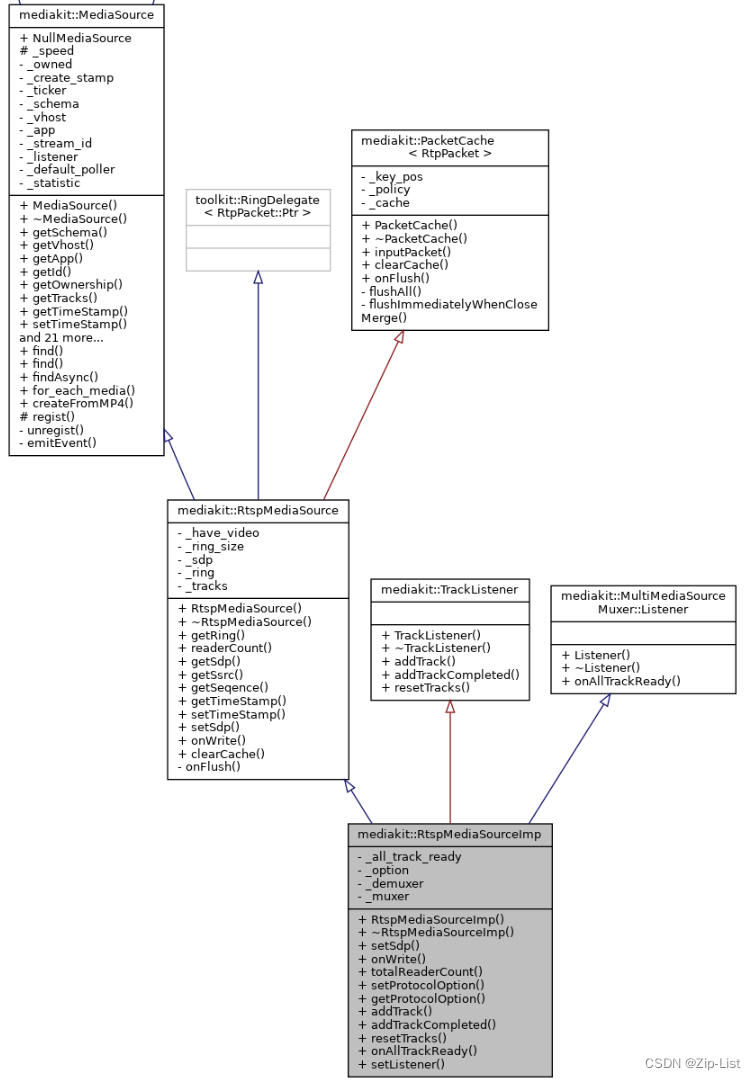

UML

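Data members

has a RtspMediaSourceImp

```cpp
// whether the on_play event has already been emitted
bool _emit_on_play = false;
bool _send_sr_rtcp[2] = {true, true};
// delay for continuing a push after a disconnect
uint32_t _continue_push_ms = 0;
// RTP transport used by the pushing or pulling client
Rtsp::eRtpType _rtp_type = Rtsp::RTP_Invalid;
// CSeq of the received request, echoed back in the reply
int _cseq = 0;
// total traffic consumed
uint64_t _bytes_usage = 0;
// ContentBase
std::string _content_base;
// session id
std::string _sessionid;
// records whether RTSP-specific authentication is required, so the event is not emitted repeatedly
std::string _rtsp_realm;
// nonce for login authentication
std::string _auth_nonce;
// used to detect client timeouts
toolkit::Ticker _alive_ticker;
// information saved after parsing the URL
MediaInfo _media_info;
// source bound to an RTSP push
RtspMediaSourceImp::Ptr _push_src;
// ownership of the pushed source
std::shared_ptr<void> _push_src_ownership;
// live source bound to an RTSP player
std::weak_ptr<RtspMediaSource> _play_src;
// reader of the live source
RtspMediaSource::RingType::RingReader::Ptr _play_reader;
// valid tracks from the SDP, audio and/or video
std::vector<SdpTrack::Ptr> _sdp_track;

// ---- RTP over UDP ----
// RTP sockets, indexed by track index
toolkit::Socket::Ptr _rtp_socks[2];
// RTCP sockets, indexed by track index
toolkit::Socket::Ptr _rtcp_socks[2];
// marks whether the player's UDP hole-punching packet has arrived;
// only after it arrives is the player's public UDP port known
std::unordered_set<int> _udp_connected_flags;

// ---- RTP over UDP multicast ----
// shared RTP multicast object
RtpMultiCaster::Ptr _multicaster;

// ---- RTSP over HTTP ----
// QuickTime's RTSP requests open two TCP connections,
// one sending GET and one sending POST, tied together by x-sessioncookie
std::string _http_x_sessioncookie;
std::function<void(const toolkit::Buffer::Ptr &)> _on_recv;

// ---- RTCP ----
// RTCP send time, indexed by track index
toolkit::Ticker _rtcp_send_tickers[2];
// RTP statistics used to send RTCP
std::vector<RtcpContext::Ptr> _rtcp_context;
```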

RtspSplitter override

onRtpPacket

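The second byte of an RTSP interleaved frame, the channel identifier, tells RTP from RTCP. Channels are assigned per track by the `interleaved=` parameter in SETUP; with the usual assignment (an even channel for RTP, the next odd channel for that track's RTCP) this session uses:

0x00 video RTP, 0x01 video RTCP, 0x02 audio RTP, 0x03 audio RTCP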

```cpp
void RtspSession::onRtpPacket(const char *data, size_t len) {
    uint8_t interleaved = data[1];
    if (interleaved % 2 == 0) {
        auto track_idx = getTrackIndexByInterleaved(interleaved);
        handleOneRtp(track_idx, _sdp_track[track_idx]->_type, _sdp_track[track_idx]->_samplerate,
                     (uint8_t *) data + RtpPacket::kRtpTcpHeaderSize, len - RtpPacket::kRtpTcpHeaderSize); // RtpMultiReceiver::handleOneRtp
    } else {
        auto track_idx = getTrackIndexByInterleaved(interleaved - 1);
        onRtcpPacket(track_idx, _sdp_track[track_idx], data + RtpPacket::kRtpTcpHeaderSize, len - RtpPacket::kRtpTcpHeaderSize);
    }
}
```
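A standalone sketch of the even/odd channel rule, assuming each track remembers the even RTP channel it was given in SETUP (as `trackRef->_interleaved` does). `TrackChannel`, `ChannelLookup` and `lookupChannel` are hypothetical names, and this version reports an unknown channel with -1 instead of indexing `_sdp_track` with it.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

struct TrackChannel {
    int rtp_channel; // even channel from SETUP, e.g. 0 for "interleaved=0-1"
};

struct ChannelLookup {
    int track_index; // -1 when no track uses this channel
    bool is_rtcp;    // odd channel = RTCP, even channel = RTP
};

ChannelLookup lookupChannel(const std::vector<TrackChannel> &tracks, uint8_t channel) {
    bool is_rtcp = (channel % 2) != 0;
    // RTCP shares the track of the RTP channel just below it
    int rtp_channel = is_rtcp ? channel - 1 : channel;
    for (size_t i = 0; i < tracks.size(); ++i) {
        if (tracks[i].rtp_channel == rtp_channel) {
            return {static_cast<int>(i), is_rtcp};
        }
    }
    return {-1, is_rtcp};
}
```

With the two SETUPs of this article (video `interleaved=0-1`, audio `interleaved=2-3`), channels 0/1 map to track 0 and channels 2/3 map to track 1.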

onWholeRtspPacket

Dispatches each request to the handler for its RTSP method.

```cpp
void RtspSession::onWholeRtspPacket(Parser &parser) {
    string method = parser.Method(); // extract the request method
    _cseq = atoi(parser["CSeq"].data());
    if (_content_base.empty() && method != "GET") {
        _content_base = parser.Url();
        _media_info.parse(parser.FullUrl());
        _media_info._schema = RTSP_SCHEMA;
    }

    using rtsp_request_handler = void (RtspSession::*)(const Parser &parser);
    static unordered_map<string, rtsp_request_handler> s_cmd_functions;
    static onceToken token([]() {
        s_cmd_functions.emplace("OPTIONS", &RtspSession::handleReq_Options);
        s_cmd_functions.emplace("DESCRIBE", &RtspSession::handleReq_Describe);
        s_cmd_functions.emplace("ANNOUNCE", &RtspSession::handleReq_ANNOUNCE);
        s_cmd_functions.emplace("RECORD", &RtspSession::handleReq_RECORD);
        s_cmd_functions.emplace("SETUP", &RtspSession::handleReq_Setup);
        s_cmd_functions.emplace("PLAY", &RtspSession::handleReq_Play);
        s_cmd_functions.emplace("PAUSE", &RtspSession::handleReq_Pause);
        s_cmd_functions.emplace("TEARDOWN", &RtspSession::handleReq_Teardown);
        s_cmd_functions.emplace("GET", &RtspSession::handleReq_Get);
        s_cmd_functions.emplace("POST", &RtspSession::handleReq_Post);
        s_cmd_functions.emplace("SET_PARAMETER", &RtspSession::handleReq_SET_PARAMETER);
        s_cmd_functions.emplace("GET_PARAMETER", &RtspSession::handleReq_SET_PARAMETER);
    });

    auto it = s_cmd_functions.find(method);
    if (it == s_cmd_functions.end()) {
        sendRtspResponse("403 Forbidden");
        throw SockException(Err_shutdown, StrPrinter << "403 Forbidden:" << method);
    }
    (this->*(it->second))(parser);
    parser.Clear();
}
```
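The same dispatch technique (a static table from method name to pointer-to-member-function) in a self-contained form. `MiniSession` and its handlers are hypothetical; a function-local `static const` map is used here instead of onceToken, relying on C++11's thread-safe initialization of local statics. As in the table above, GET_PARAMETER and SET_PARAMETER share one handler.

```cpp
#include <cassert>
#include <string>
#include <unordered_map>

class MiniSession {
public:
    // Returns false for an unknown method; RtspSession answers "403 Forbidden" and closes instead.
    bool dispatch(const std::string &method) {
        using Handler = void (MiniSession::*)();
        static const std::unordered_map<std::string, Handler> table = {
            {"OPTIONS", &MiniSession::onOptions},
            {"DESCRIBE", &MiniSession::onDescribe},
            {"GET_PARAMETER", &MiniSession::onKeepAlive},
            {"SET_PARAMETER", &MiniSession::onKeepAlive},
        };
        auto it = table.find(method);
        if (it == table.end()) {
            return false;
        }
        (this->*(it->second))(); // call the member function through the pointer
        return true;
    }

    std::string last; // name of the handler that ran last, for illustration

private:
    void onOptions() { last = "options"; }
    void onDescribe() { last = "describe"; }
    void onKeepAlive() { last = "keepalive"; }
};
```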

RtpReceiver override

Called while RtpTrack sorts the packets.

onRtpSorted

```cpp
if (_push_src) {
    // write into the source bound to this RTSP push
    _push_src->onWrite(std::move(rtp), false); // RtspMediaSourceImp::onWrite
} else {
    WarnL << "Not a rtsp push!";
}
```

onBeforeRtpSorted

updateRtcpContext

```cpp
void RtspSession::updateRtcpContext(const RtpPacket::Ptr &rtp)
{
    // send rtcp every 5 seconds (body omitted)
}
```
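A minimal sketch of a 5-second RTCP gate, assuming the caller supplies a monotonic time in milliseconds (the session keeps one toolkit::Ticker per track in `_rtcp_send_tickers` for this purpose). `RtcpTimer` is hypothetical and only decides *when* to send; building the report is left to the RTCP context.

```cpp
#include <cassert>
#include <cstdint>

struct RtcpTimer {
    uint64_t interval_ms = 5000; // "send rtcp every 5 seconds"
    uint64_t last_send_ms = 0;
    bool sent_once = false;

    // Returns true (and restarts the interval) when a report should be sent at now_ms.
    bool due(uint64_t now_ms) {
        if (!sent_once || now_ms - last_send_ms >= interval_ms) {
            sent_once = true;
            last_send_ms = now_ms;
            return true;
        }
        return false;
    }
};
```

Checking the gate on every incoming RTP packet, rather than on a separate timer, means no RTCP is sent while the stream is idle.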

OPTIONS request: handleReq_Options

The server replies with its capabilities (the methods it supports).

ANNOUNCE request: handleReq_ANNOUNCE

The ANNOUNCE response carries the session id.

It emits kBroadcastMediaPublish, the broadcast event for publishing (registering) a media source:

```cpp
auto flag = NoticeCenter::Instance().emitEvent(Broadcast::kBroadcastMediaPublish, MediaOriginType::rtsp_push, _media_info, invoker, static_cast<SockInfo &>(*this));
```

The event is declared in config.h; currently installWebHook in WebHook.cpp subscribes to it.

```cpp
// Broadcast when an rtsp/rtmp push is received; this event is used to authenticate the push
extern const std::string kBroadcastMediaPublish;
// Parameter-list macro, so listeners do not have to spell out the parameters
#define BroadcastMediaPublishArgs \
    const MediaOriginType &type, const MediaInfo &args, const Broadcast::PublishAuthInvoker &invoker, SockInfo &sender
```

The existing webhook subscription (abridged):

```cpp
NoticeCenter::Instance().addListener(nullptr, Broadcast::kBroadcastMediaPublish, [](BroadcastMediaPublishArgs) {
    ...
    invoker("", ProtocolOption()); // call the invoker
    return;
});
```
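The publish event follows a callback-with-invoker pattern: the session emits the event together with an invoker, and a listener gives its verdict by calling that invoker, possibly later (for example after an HTTP hook returns). A simplified, single-threaded sketch of the pattern; `MiniNoticeCenter`, `PublishInvoker` and `PublishListener` are hypothetical and much narrower than ZLToolKit's NoticeCenter (an empty error string means "allowed" here).

```cpp
#include <cassert>
#include <cstddef>
#include <functional>
#include <map>
#include <string>
#include <utility>
#include <vector>

// err == "" means the push is allowed; anything else is the rejection reason.
using PublishInvoker = std::function<void(const std::string &err)>;
using PublishListener = std::function<void(const std::string &stream, const PublishInvoker &)>;

class MiniNoticeCenter {
public:
    void addListener(const std::string &event, PublishListener cb) {
        _listeners[event].push_back(std::move(cb));
    }

    // Returns how many listeners received the event (0 = nobody will call the invoker).
    size_t emitEvent(const std::string &event, const std::string &stream, const PublishInvoker &invoker) {
        auto it = _listeners.find(event);
        if (it == _listeners.end()) {
            return 0;
        }
        for (auto &cb : it->second) {
            cb(stream, invoker);
        }
        return it->second.size();
    }

private:
    std::map<std::string, std::vector<PublishListener>> _listeners;
};
```

Because the verdict only arrives through the invoker, a caller of this sketch that gets 0 back from emitEvent has to decide on its own (for example, allow by default), otherwise the push would wait forever.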

onRes

```cpp
// creates _push_src; the ring buffer size of RtspMediaSourceImp (derived from RtspMediaSource) defaults to RTP_GOP_SIZE = 512
// supports continuing the push after a disconnect
SdpParser sdpParser(parser.Content()); // parse the SDP and initialize the audio/video tracks
_sessionid = makeRandStr(12);          // generate a new session id
_sdp_track = sdpParser.getAvailableTrack();
_rtcp_context.clear();
for (auto &track : _sdp_track) {
    _rtcp_context.emplace_back(std::make_shared<RtcpContextForRecv>()); // one RTCP context per track
}
if (!_push_src) {
    _push_src = std::make_shared<RtspMediaSourceImp>(_media_info._vhost, _media_info._app, _media_info._streamid);
    // take ownership
    _push_src_ownership = _push_src->getOwnership();
    _push_src->setProtocolOption(option);
    _push_src->setSdp(parser.Content());
}
```

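SETUP request: handleReq_Setup

A stream with audio and video usually sends SETUP twice, once per track.

Video (V):

```
SETUP rtsp://127.0.0.1:554/live/test1/streamid=0 RTSP/1.0
Transport: RTP/AVP/TCP;unicast;interleaved=0-1;mode=record
CSeq: 3
User-Agent: Lavf58.29.100
Session: YEtFIGQP5dCI
```

`mode=record` marks the client as a pusher.

```cpp
// initialize the transport requested by the client: RTP/AVP/TCP
// (member) RTP transport used by the pushing or pulling client
Rtsp::eRtpType _rtp_type = Rtsp::RTP_Invalid;

if (2 == sscanf(key_values["interleaved"].data(), "%d-%d", &interleaved_rtp, &interleaved_rtcp)) {
    trackRef->_interleaved = interleaved_rtp; // the video track is bound to channel 0
}
```

Audio (A):

```
SETUP rtsp://127.0.0.1:554/live/test1/streamid=1 RTSP/1.0
Transport: RTP/AVP/TCP;unicast;interleaved=2-3;mode=record
CSeq: 4
User-Agent: Lavf58.29.100
Session: YEtFIGQP5dCI
```

The transport was already initialized from the video SETUP, so the audio track must use the same one.

The audio track is bound to channel 2.

Because the client uses TCP, nothing more is needed.

With **UDP** unicast, the server also has to allocate an RTP/RTCP UDP port pair for each track (four ports for audio plus video) to carry the UDP packets.

With MULTICAST, unlike UDP unicast, all connections share the multicast group's ports, i.e. one-to-many, which saves ports.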
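Both SETUP requests above boil down to the same step: split the Transport header on `;` and read the channel pair with sscanf. A self-contained sketch, where `parseTransport` and `parseInterleavedPair` are hypothetical helpers standing in for how handleReq_Setup builds and reads `key_values`; spaces and quoted values, which real headers may contain, are not handled.

```cpp
#include <cassert>
#include <cstdio>
#include <map>
#include <sstream>
#include <string>

// Split a Transport value on ';' into key=value pairs; bare tokens ("unicast") get an empty value.
std::map<std::string, std::string> parseTransport(const std::string &value) {
    std::map<std::string, std::string> kv;
    std::stringstream ss(value);
    std::string token;
    while (std::getline(ss, token, ';')) {
        auto eq = token.find('=');
        if (eq == std::string::npos) {
            kv[token] = "";
        } else {
            kv[token.substr(0, eq)] = token.substr(eq + 1);
        }
    }
    return kv;
}

// Read "interleaved=0-1" into the RTP and RTCP channel numbers.
bool parseInterleavedPair(const std::map<std::string, std::string> &kv, int &rtp, int &rtcp) {
    auto it = kv.find("interleaved");
    return it != kv.end() && std::sscanf(it->second.c_str(), "%d-%d", &rtp, &rtcp) == 2;
}
```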

RECORD request: handleReq_RECORD

Start recording, i.e. start pushing:

```
RTSP/1.0 200 OK
CSeq: 5
Date: Fri, Jul 22 2022 09:12:43 GMT
RTP-Info: url=rtsp://127.0.0.1:554/live/test1/streamid=0,url=rtsp://127.0.0.1:554/live/test1/streamid=1
Server: ZLMediaKit(git hash:305722e,branch:master,build time:Jul 22 2022 02:07:24)
Session: YEtFIGQP5dCI
```

Merge-write option: the server accumulates packets before acknowledging the pusher and before sending to players. This improves server performance but increases latency on both the push and the play side.

```cpp
GET_CONFIG(int, mergeWriteMS, General::kMergeWriteMS);
if (mergeWriteMS > 0) {
    // push mode: disabling TCP_NODELAY adds latency for the pusher, but server performance improves
    SockUtil::setNoDelay(getSock()->rawFD(), false);
    // play mode: enabling MSG_MORE adds latency, but improves send performance
    setSendFlags(SOCKET_DEFAULE_FLAGS | FLAG_MORE);
}
```

toPlayer

DESCRIBE request: handleReq_Describe

The client asks the server for the stream's SDP; authentication happens here and the session is allocated.

emitOnPlay()

onAuthSuccess()

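SETUP request: handleReq_Setup

SETUP from a pusher

It additionally carries Session and Transport headers and declares itself the pushing side (mode=record):

```
Transport: RTP/AVP/TCP;unicast;interleaved=0-1;mode=record
Session: YEtFIGQP5dCI
```

The reply carries ssrc=0.

SETUP from a player declares how it wants to receive the stream:

```
Transport: RTP/AVP/TCP;unicast;interleaved=0-1
```

Open question: the first SETUP does not carry the session returned by DESCRIBE; only the second one does. (In RFC 2326 the session identifier is normally issued in the response to SETUP, which would explain why a client only sends it from the second SETUP on.)

The reply carries ssrc=A93E689E, taken from the pushed stream: every client pulling the same stream gets the same ssrc.

PLAY request: handleReq_Play

```cpp
void RtspSession::handleReq_Play(const Parser &parser)
{
    // check that the stream and its source still exist
    // decide useGOP from the Range header (Range: npt=0.000-)
    // fill in the RTP-Info field of the RTSP response
    // send the response (rsp)
    _play_reader = play_src->getRing()->attach(getPoller(), useGOP); // bind a reader
    // adds the reader to play_src's ring, in the read dispatcher that belongs to this thread's poller
    _play_reader->setDetachCB(...); // called when the reader is detached
    _play_reader->setReadCB(...);   // how the reader consumes packets
}
```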

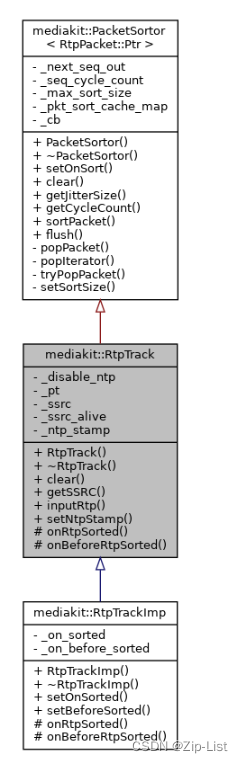

RtpReceiver/RtpMultiReceiver<2>

```cpp
/**
 * Build an RTP packet from the input data and sort it
 * @param index track index
 * @param type track type
 * @param samplerate RTP timestamp clock rate: 90000 for video, the sample rate for audio
 * @param ptr pointer to the RTP data
 * @param len length of the RTP data
 * @return true if parsing succeeded
 */ // RtpMultiReceiver::handleOneRtp
bool handleOneRtp(int index, TrackType type, int sample_rate, uint8_t *ptr, size_t len) {
    assert(index < kCount && index >= 0);
    // RtpTrack::inputRtp
    return _track[index].inputRtp(type, sample_rate, ptr, len).operator bool();
}
```

RtpTrack

UML

PacketSortor

Structure

```cpp
// next SEQ to output
SEQ _next_seq_out = 0;
// number of times seq has wrapped around
size_t _seq_cycle_count = 0;
// sort cache length
size_t _max_sort_size = kMin;
// packet sort cache, ordered by seq
std::map<SEQ, T> _pkt_sort_cache_map;
// callback
std::function<void(SEQ seq, T &packet)> _cb;
```

sortPacket

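Sorting packets eventually triggers RtspSession::onRtpSorted.

```cpp
/**
 * Input a packet and sort it
 * @param seq sequence number
 * @param packet packet payload
 */ // PacketSortor::sortPacket
void sortPacket(SEQ seq, T packet) {
    if (seq < _next_seq_out) {
        if (_next_seq_out < seq + kMax) {
            // drop packets whose seq went backwards (except on wraparound)
            return;
        }
    } else if (_next_seq_out && seq - _next_seq_out > ((std::numeric_limits<SEQ>::max)() >> 1)) {
        // drop packets whose seq jumped very far ahead (guards against a huge seq arriving out of order around wraparound)
        return;
    }
    // put into the sort cache
    _pkt_sort_cache_map.emplace(seq, std::move(packet));
    // try to output the sorted packets
    tryPopPacket();
}
```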

flush/popIterator

```cpp
void flush() {
    // empty the cache
    while (!_pkt_sort_cache_map.empty()) {
        popIterator(_pkt_sort_cache_map.begin());
    }
}

// pop one packet
void popIterator(typename std::map<SEQ, T>::iterator it) {
    auto seq = it->first;
    auto data = std::move(it->second);
    _pkt_sort_cache_map.erase(it);
    _next_seq_out = seq + 1; // the next packet to pop is this packet's seq + 1
    _cb(seq, data);
}
```

popIterator, the key step

Popping a packet out of _pkt_sort_cache_map is the moment the pusher's data is actually written onward: from here, the subscribed clients receive the packet with that sequence number. The callback chain is noted in the comments:

```cpp
void popIterator(typename std::map<SEQ, T>::iterator it) {
    auto seq = it->first;
    auto data = std::move(it->second);
    _pkt_sort_cache_map.erase(it);
    _next_seq_out = seq + 1;
    _cb(seq, data);
    // RtpTrack::RtpTrack onRtpSorted(std::move(packet));
    // RtpTrackImp::onRtpSorted _on_sorted(std::move(rtp));
    // RtpMultiReceiver::RtpMultiReceiver() --> onRtpSorted(std::move(rtp), index);
    // RtspSession::onRtpSorted --> _push_src->onWrite(std::move(rtp), false);
    // RtspMediaSourceImp::onWrite
}
```
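A simplified reorder buffer in the spirit of PacketSortor, written so it compiles on its own. It is not ZLMediaKit's algorithm: wraparound is handled by extending the 16-bit RTP sequence number to 32 bits (instead of `_seq_cycle_count`), late packets are dropped, and when more than `max_cache` packets wait behind a gap, the sorter gives up on the gap and pops the oldest one. `SimpleSorter` and its members are hypothetical.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <functional>
#include <map>
#include <utility>

template <typename T>
class SimpleSorter {
public:
    using Callback = std::function<void(uint16_t seq, T &pkt)>;

    SimpleSorter(size_t max_cache, Callback cb) : _max_cache(max_cache), _cb(std::move(cb)) {}

    void input(uint16_t seq, T pkt) {
        if (!_started) {
            _started = true;
            _next_ext = seq; // the first packet defines the starting point
        }
        // Signed 16-bit distance from the next expected seq; handles 65535 -> 0 wraparound.
        int16_t diff = static_cast<int16_t>(static_cast<uint16_t>(seq - static_cast<uint16_t>(_next_ext)));
        if (diff < 0) {
            return; // older than what was already output (late or duplicate): drop
        }
        _cache.emplace(_next_ext + static_cast<uint32_t>(diff), std::move(pkt));
        // Pop packets that are contiguous, or force a pop while the cache is over capacity.
        while (!_cache.empty() && (_cache.begin()->first == _next_ext || _cache.size() > _max_cache)) {
            pop();
        }
    }

private:
    void pop() {
        auto it = _cache.begin();
        uint32_t ext = it->first;
        T pkt = std::move(it->second);
        _cache.erase(it);
        _next_ext = ext + 1; // like _next_seq_out = seq + 1
        _cb(static_cast<uint16_t>(ext), pkt);
    }

    size_t _max_cache;
    Callback _cb;
    bool _started = false;
    uint32_t _next_ext = 0;       // extended (wrap-counted) next expected sequence number
    std::map<uint32_t, T> _cache; // ordered by extended seq, so order survives wraparound
};
```

Keying the map by the extended number keeps `std::map` ordering correct across the wrap, which is the problem the seq-cycle counter and the `kMax` checks address in the real class.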

inputRtp

```cpp
RtpPacket::Ptr RtpTrack::inputRtp(TrackType type, int sample_rate, uint8_t *ptr, size_t len)
{
    ...
    // after each packet, check whether RTCP needs to be sent (5 s interval)
    onBeforeRtpSorted(rtp); // RtpMultiReceiver()->track.setBeforeSorted, RtspSession::onBeforeRtpSorted --> updateRtcpContext(rtp);
    sortPacket(rtp->getSeq(), rtp); // PacketSortor::sortPacket
}
```

RtspMediaSourceImp

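Overrides onWrite of RtspMediaSource (a RingDelegate)

It first demuxes the RTP when needed, then, in direct-proxy mode, writes the original RTP into the ring buffer.

onWrite

```cpp
void onWrite(RtpPacket::Ptr rtp, bool key_pos) override {
    if (_all_track_ready && !_muxer->isEnabled()) {
        // all tracks are known and protocol conversion is off, so the RTP need not be demuxed;
        // without demuxing, key frames cannot be detected, which may break instant start or show a corrupted picture when playback begins
        key_pos = rtp->type == TrackVideo;
    } else {
        // demux the RTP
        key_pos = _demuxer->inputRtp(rtp);
        // RtspDemuxer::inputRtp: key_pos is only known after demuxing
        // H264RtpDecoder::inputRtp
    }
    GET_CONFIG(bool, directProxy, Rtsp::kDirectProxy);
    if (directProxy) {
        // only direct-proxy mode uses the original RTP directly
        RtspMediaSource::onWrite(std::move(rtp), key_pos);
        // RtspMediaSource::onWrite
    }
}
```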

RtspMediaSource

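* Data abstraction of an RTSP media source
* RTSP has two key elements: the SDP and the RTP packets
* Once both are produced, implementing RTSP pushing and an RTSP server is straightforward
* In RTSP push/pull, the SDP is exchanged first, then the transport (TCP/UDP/multicast) is negotiated, and after that RTP flows continuously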

onWrite

```cpp
void onWrite(RtpPacket::Ptr rtp, bool keyPos) override {
    _speed[rtp->type] += rtp->size();
    assert(rtp->type >= 0 && rtp->type < TrackMax);
    auto &track = _tracks[rtp->type];
    auto stamp = rtp->getStampMS();
    if (track) {
        track->_seq = rtp->getSeq();
        track->_time_stamp = rtp->getStamp() * uint64_t(1000) / rtp->sample_rate;
        track->_ssrc = rtp->getSSRC();
    }
    if (!_ring) {
        std::weak_ptr<RtspMediaSource> weakSelf = std::dynamic_pointer_cast<RtspMediaSource>(shared_from_this());
        auto lam = [weakSelf](int size) {
            auto strongSelf = weakSelf.lock();
            if (!strongSelf) {
                return;
            }
            strongSelf->onReaderChanged(size);
        };
        // The GOP cache holds 512 groups of RTP packets by default; packets in a group share one timestamp
        // (with merge-write enabled, a group is all RTP packets within the merge-write window).
        // The first RTP packet of each key frame clears the GOP cache (the new key frame still allows instant start).
        _ring = std::make_shared<RingType>(_ring_size, std::move(lam));
        onReaderChanged(0);
        if (!_sdp.empty()) {
            regist();
        }
    }
    bool is_video = rtp->type == TrackVideo;
    PacketCache<RtpPacket>::inputPacket(stamp, is_video, std::move(rtp), keyPos);
    // PacketCache::inputPacket
}
```
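onWrite above reads seq, timestamp and SSRC from each packet and converts the timestamp into milliseconds. A standalone sketch of both steps, assuming a bare RFC 3550 fixed header (CSRCs and header extensions are ignored); `RtpFixedHeader`, `parseRtpHeader` and `rtpStampToMs` are hypothetical names, not RtpPacket's API. With a 90 kHz video clock, 3000 ticks (one frame at 30 fps) is 33 ms; an AAC frame of 1024 samples at 48 kHz is 21 ms.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

// Fixed 12-byte RTP header (RFC 3550, section 5.1)
struct RtpFixedHeader {
    uint8_t version = 0;
    bool marker = false;
    uint8_t payload_type = 0;
    uint16_t seq = 0;
    uint32_t stamp = 0; // in ticks of the track's clock rate (sample_rate)
    uint32_t ssrc = 0;
};

static uint32_t readBe32(const uint8_t *p) {
    return (uint32_t(p[0]) << 24) | (uint32_t(p[1]) << 16) | (uint32_t(p[2]) << 8) | uint32_t(p[3]);
}

bool parseRtpHeader(const uint8_t *p, size_t len, RtpFixedHeader &h) {
    if (len < 12 || (p[0] >> 6) != 2) {
        return false; // too short, or not RTP version 2
    }
    h.version = 2;
    h.marker = (p[1] & 0x80) != 0;
    h.payload_type = static_cast<uint8_t>(p[1] & 0x7F);
    h.seq = static_cast<uint16_t>((p[2] << 8) | p[3]);
    h.stamp = readBe32(p + 4);
    h.ssrc = readBe32(p + 8);
    return true;
}

// Same arithmetic as `rtp->getStamp() * uint64_t(1000) / rtp->sample_rate`:
// widen to 64 bits before multiplying so a large 32-bit stamp cannot overflow.
uint64_t rtpStampToMs(uint32_t stamp, uint32_t sample_rate) {
    assert(sample_rate > 0);
    return static_cast<uint64_t>(stamp) * 1000 / sample_rate;
}
```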

onFlush

```cpp
/**
 * Called when a batch of RTP packets is flushed
 * @param rtp_list list of RTP packets
 * @param key_pos whether the batch contains a key frame
 */
void onFlush(std::shared_ptr<toolkit::List<RtpPacket::Ptr> > rtp_list, bool key_pos) override {
    // without video a GOP cache is pointless, so is_key is always true, which keeps clearing the GOP cache
    _ring->write(std::move(rtp_list), _have_video ? key_pos : true);
    // RingBuffer::write
}
```
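A sketch of the GOP-cache rule described in the comments above: a key-frame write clears the cache so a newly attached player can start from the latest key frame, and streams without video pass `is_key = true` every time so nothing accumulates. `GopCache` is hypothetical; in particular it simply drops the oldest entry when `max_size` is exceeded, which may not be how ZLToolKit's RingBuffer handles overflow.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>
#include <utility>

template <typename T>
class GopCache {
public:
    explicit GopCache(size_t max_size) : _max_size(max_size) {}

    // is_key marks the first packet of a key frame; have_video == false forces it to true.
    void write(T item, bool is_key, bool have_video) {
        if (!have_video) {
            is_key = true; // no video: no GOP worth keeping, always start over
        }
        if (is_key) {
            _cache.clear(); // new key frame: older packets are not needed for instant start
        }
        _cache.push_back(std::move(item));
        if (_cache.size() > _max_size) {
            _cache.pop_front(); // assumption: drop the oldest entry on overflow
        }
    }

    // What a newly attached player would be sent first.
    const std::deque<T> &snapshot() const { return _cache; }

private:
    size_t _max_size;
    std::deque<T> _cache;
};
```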

RTSP

SdpParser

load

```
v=0
o=- 0 0 IN IP4 127.0.0.1
s=No Name
c=IN IP4 127.0.0.1
t=0 0
a=tool:libavformat 58.29.100
m=video 0 RTP/AVP 96
a=rtpmap:96 H264/90000
a=fmtp:96 packetization-mode=1; sprop-parameter-sets=Z2QAKKzZQHgCJ+XARAAAAwAEAAADAPA8YMZY,aOvjyyLA; profile-level-id=640028
a=control:streamid=0
m=audio 0 RTP/AVP 97
b=AS:128
a=rtpmap:97 MPEG4-GENERIC/48000/2
a=fmtp:97 profile-level-id=1;mode=AAC-hbr;sizelength=13;indexlength=3;indexdeltalength=3; config=119056E500
a=control:streamid=1
```

```cpp
void SdpParser::load(const string &sdp)
{
    std::vector<std::string> lines = split(sdp, "\n"); // split on "\n"
    // ...
    trim(line); // strip "\r"
    // ...
    for (auto &track_ptr : _track_vec) // 3 entries; each m= line corresponds to a new SdpTrack
    {
        // ...
        for (it = track._attr.find("rtpmap"); it != track._attr.end() && it->first == "rtpmap";)
        {
            // ... (earlier branches omitted)
            else if (3 == sscanf(rtpmap.data(), "%d %15[^/]/%d", &pt, codec, &samplerate)) // "96 H264/90000"
            // reads payload_type, codec and samplerate (payload type, encoding, clock rate)
        }
        for (it = track._attr.find("fmtp"); it != track._attr.end() && it->first == "fmtp";)
        {
            track._fmtp = ...; // packetization-mode=1; sprop-parameter-sets=Z2QAKKzZQHgCJ+XARAAAAwAEAAADAPA8YMZY,aOvjyyLA; profile-level-id=640028
        }
    }
}
```
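A self-contained sketch of the part of SdpParser::load discussed above: split on `\n`, strip a trailing `\r`, start a new track at each `m=` line, and read `a=rtpmap:` with the same `"%d %15[^/]/%d"` format. `MiniTrack` and `parseSdp` are hypothetical; session-level attributes, `a=fmtp` and `a=control` are ignored here.

```cpp
#include <cassert>
#include <cstdio>
#include <sstream>
#include <string>
#include <vector>

struct MiniTrack {
    std::string media;    // "video" / "audio"
    int payload_type = -1;
    std::string codec;    // e.g. "H264", "MPEG4-GENERIC"
    int sample_rate = 0;  // RTP clock rate
};

std::vector<MiniTrack> parseSdp(const std::string &sdp) {
    std::vector<MiniTrack> tracks;
    std::stringstream ss(sdp);
    std::string line;
    while (std::getline(ss, line)) { // split on '\n'
        if (!line.empty() && line.back() == '\r') {
            line.pop_back(); // strip '\r'
        }
        if (line.compare(0, 2, "m=") == 0) {
            MiniTrack t;
            t.media = line.substr(2, line.find(' ') - 2); // "m=video 0 RTP/AVP 96" -> "video"
            tracks.push_back(t);
        } else if (line.compare(0, 9, "a=rtpmap:") == 0 && !tracks.empty()) {
            int pt = 0, rate = 0;
            char codec[16] = {0};
            // "96 H264/90000" -> pt = 96, codec = "H264", rate = 90000 (%15 keeps codec within the buffer)
            if (std::sscanf(line.c_str() + 9, "%d %15[^/]/%d", &pt, codec, &rate) == 3) {
                tracks.back().payload_type = pt;
                tracks.back().codec = codec;
                tracks.back().sample_rate = rate;
            }
        }
    }
    return tracks;
}
```

For `97 MPEG4-GENERIC/48000/2` the format stops after the clock rate, so the trailing channel count is simply ignored.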

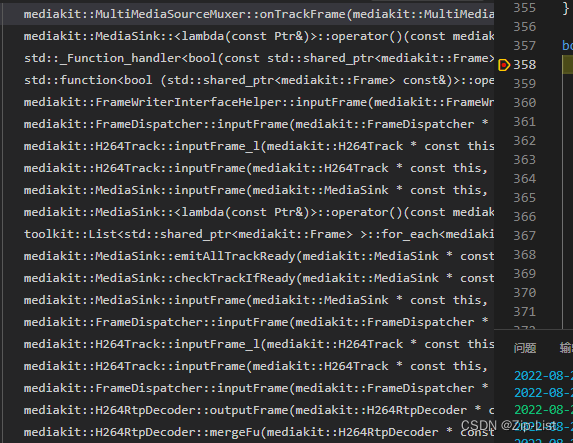

Server call stack during a push

Call stack for pushed RTP/RTSP data

EventPoller::runLoop

(*cb)(toPoller(ev.events));

Socket::attachEvent

strong_self->onRead(strong_sock, is_udp);

Socket::onRead

_on_read(_read_buffer, (struct sockaddr *)&addr, len);

TcpServer::onAcceptConnection

strong_session->onRecv(buf);

RtspSession::onRecv

input(buf->data(), buf->size());

HttpRequestSplitter::input

_content_len = onRecvHeader(header_ptr, header_size);

RtspSplitter::onRecvHeader

onRtpPacket(data,len);

RtspSession::onRtpPacket

rtsp/rtp

handleOneRtp

onRtcpPacket

Call stack for handling RTP

RtspSession::onRtpPacket

handleOneRtp

RtpMultiReceiver::handleOneRtp

return _track[index].inputRtp(type, sample_rate, ptr, len).operator bool();

RtpTrack::inputRtp

onBeforeRtpSorted

RtpTrackImp::onBeforeRtpSorted

_on_before_sorted(rtp);

RtpMultiReceiver()

onBeforeRtpSorted(rtp, index);

RtpTrack::inputRtp

onBeforeRtpSorted(rtp); // afterwards: sortPacket(rtp->getSeq(), rtp);

RtspSession::onBeforeRtpSorted

updateRtcpContext(rtp);

RtspSession::updateRtcpContext

rtcp_ctx->onRtp(...);

RtcpContextForRecv::onRtp

updates the RTCP counters _packets/_bytes/_last_rtp_stamp/_last_ntp_stamp_ms

RtpTrack::inputRtp

sortPacket(rtp->getSeq(), rtp);

PacketSortor

sortPacket

// put into the sort cache

_pkt_sort_cache_map.emplace(seq, std::move(packet));//3398:pkg 3399:pkg

...popIterator

_cb(seq, data);

//RtpTrack::RtpTrack onRtpSorted(std::move(packet));

//RtpTrackImp::onRtpSorted _on_sorted(std::move(rtp));

//RtpMultiReceiver::RtpMultiReceiver() --> onRtpSorted(std::move(rtp), index);

//RtspSession::onRtpSorted --> _push_src->onWrite(std::move(rtp), false);

//RtspMediaSourceImp::onWrite

Demuxing

RtspMediaSourceImp::onWrite

key_pos = _demuxer->inputRtp(rtp);

RtspDemuxer::inputRtp

return _video_rtp_decoder->inputRtp(rtp, true);

H264RtpDecoder::inputRtp

auto ret = decodeRtp(rtp);

// NAL type 24: STAP-A, 28: FU-A, 1-23: single NAL unit (singleFrame)

H264RtpDecoder::decodeRtp

outputFrame(rtp, _frame);

H264RtpDecoder::outputFrame

RtpCodec::inputFrame(frame); // write the frame and dispatch it

FrameDispatcher::inputFrame // not the same FrameDispatcher as the one below

H264Track::inputFrame

H264Track::inputFrame_l

insertConfigFrame(frame); // insert SPS/PPS

VideoTrack::inputFrame(frame);
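H264RtpDecoder::decodeRtp branches on the NAL unit type in the first payload byte (the `24 STAP-A, 28 FU-A` comment above). A standalone sketch of that classification following RFC 6184, plus the FU-A header bits that mark where a fragmented NAL unit starts and ends; `H264RtpKind`, `classify` and `parseFuHeader` are hypothetical names, and reassembly itself is left out.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>

enum class H264RtpKind { Single, StapA, FuA, Other };

// The low 5 bits of the first payload byte are the NAL unit type.
H264RtpKind classify(const uint8_t *payload, size_t len) {
    if (len < 1) {
        return H264RtpKind::Other;
    }
    uint8_t nal_type = payload[0] & 0x1F;
    if (nal_type >= 1 && nal_type <= 23) {
        return H264RtpKind::Single; // one complete NAL unit
    }
    if (nal_type == 24) {
        return H264RtpKind::StapA; // several NAL units aggregated in one packet
    }
    if (nal_type == 28) {
        return H264RtpKind::FuA; // one NAL unit fragmented over several packets
    }
    return H264RtpKind::Other; // STAP-B, MTAP, FU-B, ...: not covered here
}

// FU-A: the second byte is the FU header with S (start), E (end) and the original NAL type.
struct FuHeader {
    bool start;
    bool end;
    uint8_t nal_type;
};

FuHeader parseFuHeader(uint8_t fu_header) {
    return {(fu_header & 0x80) != 0, (fu_header & 0x40) != 0, static_cast<uint8_t>(fu_header & 0x1F)};
}
```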

---------------------------------------------------------

FrameDispatcher::inputFrame // not the same FrameDispatcher as the one below

MediaSink::inputFrame

MediaSink::inputFrame

auto ret = it->second.first->inputFrame(frame); // track added by MediaSink::addTrack when the SDP is loaded during ANNOUNCE

H264Track::inputFrame

FrameDispatcher::inputFrame

MediaSink::inputFrame

FrameWriterInterfaceHelper::inputFrame // not the same FrameDispatcher as the one below

return _writeCallback(frame); // after passing through 3 levels of FrameDispatcher::inputFrame

MediaSink::addTrack

track->addDelegate ---> return onTrackFrame(frame);

MultiMediaSourceMuxer::onTrackFrame

// added when MultiMediaSourceMuxer::MultiMediaSourceMuxer initializes

RtmpMediaSourceMuxer::Ptr _rtmp;

RtspMediaSourceMuxer::Ptr _rtsp;

TSMediaSourceMuxer::Ptr _ts;

MediaSinkInterface::Ptr _mp4;

HlsRecorder::Ptr _hls;

--------------------------------------------------------

if (_rtmp) {

ret = _rtmp->inputFrame(frame) ? true : ret;

}

if (_rtsp) {

ret = _rtsp->inputFrame(frame) ? true : ret;

}

if (_ts) {

ret = _ts->inputFrame(frame) ? true : ret;

}

--------------------------rtmp----------------------------

RtmpMediaSourceMuxer::inputFrame

RtmpMuxer::inputFrame

H264RtmpEncoder::inputFrame

--------------------------rtsp----------------------------

RtspMediaSourceMuxer::inputFrame

RtspMuxer::inputFrame

H264RtpEncoder::inputFrame

--------------------------ts----------------------------

TSMediaSourceMuxer::inputFrame

MpegMuxer::inputFrame

--------------------------hls----------------------------

HlsRecorder::inputFrame

MpegMuxer::inputFrame

--------------------------_fmp4----------------------------

FMP4MediaSourceMuxer::inputFrame

MP4MuxerMemory::inputFrame

Player call stack when pulling a stream

test_player.cpp

TcpClient::onSockConnect

strong_self->onRecv(pBuf);

RtspPlayer::onRecv

input(buf->data(), buf->size());

HttpRequestSplitter::input

_content_len = onRecvHeader(header_ptr, header_size);

RtspSplitter::onRecvHeader

onRtpPacket(data,len);

RtspPlayer::onRtpPacket

handleOneRtp(trackIdx, _sdp_track[trackIdx]->_type,

bool RtpMultiReceiver::handleOneRtp

return _track[index].inputRtp(type, sample_rate, ptr, len).operator bool();

RtpTrack::inputRtp

sortPacket(rtp->getSeq(), rtp);

PacketSortor::sortPacket

tryPopPacket

popPacket

popIterator : _cb(seq, data)

RtpTrack::RtpTrack()

onRtpSorted(std::move(packet));

RtpTrackImp::onRtpSorted

_on_sorted(std::move(rtp));

RtpMultiReceiver()

onRtpSorted(std::move(rtp), index);

RtspPlayer::onRtpSorted

onRecvRTP(std::move(rtppt), _sdp_track[trackidx]);

RtspPlayerImp::onRecvRTP

_demuxer->inputRtp(rtp);

RtspDemuxer::inputRtp

return _video_rtp_decoder->inputRtp(rtp, true);

H264RtpDecoder::inputRtp

decodeRtp(rtp);

singleFrame(rtp, frame, payload_size, stamp);

outputFrame(rtp, _frame);

RtpCodec::inputFrame(frame);

FrameDispatcher::inputFrame // in RtpCodec::inputFrame, _delegates == 1

H264Track::inputFrame

splitH264(frame->data(), ...

H264Track::inputFrame_l

VideoTrack::inputFrame

FrameDispatcher::inputFrame // in VideoTrack::inputFrame, _delegates == 1

FrameWriterInterfaceHelper ---> return _writeCallback(frame);

auto delegate = std::make_shared<FrameWriterInterfaceHelper> // test_player.cpp's own FFmpegDecoder

return decoder->inputFrame(frame, false, true);

addDecodeTask(frame->keyFrame(), [this, live, frame_cache, enable_merge]() // queue the frame for asynchronous decoding

{

inputFrame_l(frame_cache, live, enable_merge);

});

// on the async thread

------------------------------------onDecode----------------------------------------

// the usual routine: inputFrame_l calls ffmpeg's avcodec_send_packet/avcodec_receive_frame to get decoded frames

onDecode(out_frame);

decoder->setOnDecode([displayer](const FFmpegFrame::Ptr &yuv)//test_player.cpp

// render the decoded frame as a picture

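Summary

- The role of SDP: how the parser extracts SDP fields, and how sscanf is used
- How RTSP interleaved frames distinguish RTP from RTCP
- How PacketSortor reorders packets
- How RTP over UDP, TCP and multicast is handled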
