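Problem description:

The task is to classify the flower_photos dataset. We apply transfer learning to VGG19 and append a fully connected layer with 5 neurons, turning VGG19's 1000-class classifier into a 5-class one.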
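The added layer is simply a linear map from the 1000 VGG19 outputs to 5 class scores. The NumPy sketch below only illustrates the shapes involved; the random arrays are stand-ins for real network outputs and trained weights, and the variable names are chosen for illustration.

```python
import numpy as np

BOTTLENECK_SIZE = 1000  # width of VGG19's original 1000-class output
NUM_CLASSES = 5         # daisy, dandelion, roses, sunflowers, tulips

rng = np.random.default_rng(0)

# Stand-in for the VGG19 output of a batch of 2 images (random, shapes only).
vgg_output = rng.normal(size=(2, BOTTLENECK_SIZE))

# The added fully connected layer: one weight matrix and one bias vector.
weights = rng.normal(scale=0.1, size=(BOTTLENECK_SIZE, NUM_CLASSES))
biases = np.full(NUM_CLASSES, 0.1)

logits = vgg_output @ weights + biases     # shape (2, 5)
predicted_class = logits.argmax(axis=1)    # one class index per image
```

Only `weights` and `biases` are new parameters; everything before them comes from the pretrained network.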

Dataset download URL:

http://download.tensorflow.org/example_images/flower_photos.tgz

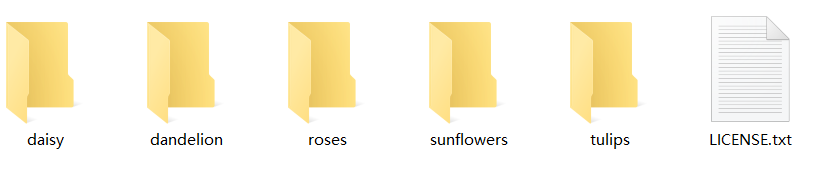

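The download is a .tgz file. After extraction, the folder contains 5 sub-folders, each holding the images of one flower class; the name of the sub-folder is the name of the class, as shown in the figure above.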
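Because the class name is the folder name, the label set can be read straight from the directory layout. The following is a minimal sketch using only the standard library; the helper names are hypothetical, and the demo runs on a throwaway directory that mimics the layout rather than on the real dataset.

```python
import os
import tempfile


def list_classes(root):
    """Return the sorted names of the class sub-folders directly under root."""
    return sorted(
        name for name in os.listdir(root)
        if os.path.isdir(os.path.join(root, name))
    )


def count_images(root):
    """Map each class name to the number of files in its folder."""
    return {
        name: len(os.listdir(os.path.join(root, name)))
        for name in list_classes(root)
    }


# Demo on a temporary directory with the same structure as flower_photos.
with tempfile.TemporaryDirectory() as demo_root:
    for cls in ["daisy", "dandelion", "roses", "sunflowers", "tulips"]:
        os.makedirs(os.path.join(demo_root, cls))
        open(os.path.join(demo_root, cls, "example.jpg"), "w").close()
    # A stray top-level file is skipped because it is not a directory.
    open(os.path.join(demo_root, "notes.txt"), "w").close()

    demo_classes = list_classes(demo_root)
    demo_counts = count_images(demo_root)
```

Sorting the folder names gives a stable class order, so the index of a name in `demo_classes` can serve as its integer label.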

Dataset splitting code

File flower_list.py: returns the lists of image file paths and the corresponding lists of labels

import tensorflow as tf
import numpy as np
import random
import os
import math
from matplotlib import pyplot as plt


def get_files(file_dir):
    """
    Build the lists of image file paths and labels.

    :param file_dir: root folder that contains one sub-folder per flower class
    :return: tra_images, tra_labels, val_images, val_labels
             (paths and labels of the training split, then of the held-out split)
    """
    # Step 1: collect the image paths and attach a label to each one.
    # One pair of lists per class: file paths and their labels.
    daisy = []
    label_daisy = []
    dandelion = []
    label_dandelion = []
    roses = []
    label_roses = []
    sunflowers = []
    label_sunflowers = []
    tulips = []
    label_tulips = []

    for file in os.listdir(file_dir + "/daisy"):
        daisy.append(file_dir + "/daisy" + "/" + file)
        label_daisy.append(0)
    for file in os.listdir(file_dir + "/dandelion"):
        dandelion.append(file_dir + "/dandelion" + "/" + file)
        label_dandelion.append(1)
    for file in os.listdir(file_dir + "/roses"):
        roses.append(file_dir + "/roses" + "/" + file)
        label_roses.append(2)
    for file in os.listdir(file_dir + "/sunflowers"):
        sunflowers.append(file_dir + "/sunflowers" + "/" + file)
        label_sunflowers.append(3)
    for file in os.listdir(file_dir + "/tulips"):
        tulips.append(file_dir + "/tulips" + "/" + file)
        label_tulips.append(4)

    # Step 2: shuffle the image paths and labels together.
    # Merge all image paths into one array and all labels into another.
    images_list = np.hstack([daisy, dandelion, roses, sunflowers, tulips])
    labels_list = np.hstack([label_daisy, label_dandelion, label_roses, label_sunflowers, label_tulips])

    # Shuffle row-wise so that each path stays paired with its label.
    temp = np.array([images_list, labels_list]).transpose()
    np.random.shuffle(temp)

    # Take the path column and the label column back out of the shuffled array.
    image_list = list(temp[:, 0])
    label_list = list(temp[:, 1])
    # Stacking labels with paths turned them into strings; convert back to int.
    label_list_new = [int(i) for i in label_list]

    # Split the lists in two: one part for training (tra), one held out (val).
    # ratio is the fraction of samples that is held out.
    ratio = 0.2
    n_sample = len(label_list)
    n_val = int(math.ceil(n_sample * ratio))  # number of held-out samples
    n_train = n_sample - n_val  # number of training samples

    tra_images = image_list[0:n_train]
    tra_labels = label_list_new[0:n_train]
    # Slice to the end of the list; stopping at -1 would drop the last sample.
    val_images = image_list[n_train:]
    val_labels = label_list_new[n_train:]

    return tra_images, tra_labels, val_images, val_labels

Training data generation
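The shuffle-and-split arithmetic in `get_files` can be checked in isolation before any images are loaded. The sketch below is an alternative written with the standard library only, not the author's code: it shuffles (path, label) pairs so the labels never pass through a string array, and the function name and `seed` parameter are assumptions added for illustration.

```python
import math
import random


def shuffle_and_split(paths, labels, ratio=0.2, seed=None):
    """Shuffle (path, label) pairs together, then hold out `ratio` of them."""
    pairs = list(zip(paths, labels))
    random.Random(seed).shuffle(pairs)

    n_val = int(math.ceil(len(pairs) * ratio))  # held-out samples
    n_train = len(pairs) - n_val                # training samples

    # The second slice runs to the end of the list, so no sample is lost.
    return pairs[:n_train], pairs[n_train:]


demo_paths = ["img_%02d.jpg" % i for i in range(10)]
demo_labels = [i % 5 for i in range(10)]
train_pairs, val_pairs = shuffle_and_split(demo_paths, demo_labels, ratio=0.2, seed=0)
```

With 10 samples and `ratio=0.2` this yields 8 training pairs and 2 held-out pairs, and every label is still an `int`.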

File flower_data.py: generates the sample data for the training, validation, and test sets

import os
os.environ["CUDA_VISIBLE_DEVICES"] = "-1"  # hide all GPUs so TensorFlow runs on the CPU
import pandas as pd
import cv2
import numpy as np
import flower_list as flower
# import psutil


class Dataset():
    def __init__(self, split_ratio=(0.8, 0.2)):
        mypath = "flower_photos"
        tra_image, tra_label, test_image, test_label = flower.get_files(mypath)

        ###========================== Split Data =============================###
        # The split held out by get_files becomes the test set. The remaining
        # images are split again: the first split_ratio[0] of them are used for
        # training and the rest for validation.
        image_total = len(tra_image)
        self.train_total = int(image_total * split_ratio[0])
        self.train_image, self.train_label = tra_image[:self.train_total], tra_label[:self.train_total]
        self.val_image, self.val_label = tra_image[self.train_total:], tra_label[self.train_total:]
        self.test_image, self.test_label = test_image, test_label
        self.start = 0  # cursor into the training set, advanced by train_next_batch

    def train(self):
        """Load the whole training set as lists of images and one-hot labels."""
        xs, ys = [], []
        for x, y in zip(self.train_image, self.train_label):
            ###========================== read image =============================###
            image = cv2.imread(x)
            image_cvt = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
            image_resize = cv2.resize(image_cvt, (224, 224))
            image_normal = image_resize / (255 / 2.0) - 1  # scale pixels to [-1, 1]
            # image_normal = image_resize / 255.0

            ###========================== general label =============================###
            label = [0] * 5  # one-hot label over the 5 classes
            label[y] = 1

            xs.append(image_normal)
            ys.append(label)
        return xs, ys

    def validation(self):
        """Load a fixed slice of 16 validation samples as NumPy arrays."""
        xs, ys = [], []
        for x, y in zip(self.val_image[16:32], self.val_label[16:32]):
            ###========================== read image =============================###
            image = cv2.imread(x)
            image_cvt = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
            image_resize = cv2.resize(image_cvt, (224, 224))
            image_normal = image_resize / (255 / 2.0) - 1  # scale pixels to [-1, 1]
            # image_normal = image_resize / 255.0

            ###========================== general label =============================###
            label = [0] * 5  # one-hot label over the 5 classes
            label[y] = 1

            xs.append(image_normal)
            ys.append(label)
        # return xs, ys
        return np.array(xs, dtype=np.float32), np.array(ys, dtype=np.int64)

    def test(self):
        """Load the whole test set as lists of images and one-hot labels."""
        xs, ys = [], []
        for x, y in zip(self.test_image, self.test_label):
            ###========================== read image =============================###
            image = cv2.imread(x)
            image_cvt = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
            image_resize = cv2.resize(image_cvt, (224, 224))
            image_normal = image_resize / (255 / 2.0) - 1  # scale pixels to [-1, 1]
            # image_normal = image_resize / 255.0

            ###========================== general label =============================###
            label = [0] * 5  # one-hot label over the 5 classes
            label[y] = 1

            xs.append(image_normal)
            ys.append(label)
        return xs, ys

    def train_next_batch(self, batch_size):
        """Return the next batch_size training samples, wrapping around at the end."""
        xs, ys = [], []
        while True:
            x, y = self.train_image[self.start], self.train_label[self.start]

            ###========================== read image =============================###
            image = cv2.imread(x)
            image_cvt = cv2.cvtColor(image, cv2.COLOR_BGR2RGB)
            image_resize = cv2.resize(image_cvt, (224, 224))
            image_normal = image_resize / (255 / 2.0) - 1  # scale pixels to [-1, 1]
            # image_normal = image_resize / 255.0

            ###========================== general label =============================###
            label = [0] * 5  # one-hot label over the 5 classes
            label[y] = 1

            xs.append(image_normal)
            ys.append(label)

            self.start += 1
            # Go back to the first training image after the last one.
            if self.start >= self.train_total:
                self.start = 0
            if len(xs) >= batch_size:
                break
        return np.array(xs, dtype=np.float32), np.array(ys, dtype=np.int64)
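Every loader in `Dataset` applies the same two steps to a sample: scale the pixels to [-1, 1] and turn the integer label into a one-hot vector. A small NumPy sketch of just those steps follows (no OpenCV needed; the function names are made up for illustration).

```python
import numpy as np

NUM_CLASSES = 5


def normalize(image_uint8):
    """Scale pixel values from [0, 255] to [-1, 1]."""
    return image_uint8.astype(np.float32) / (255 / 2.0) - 1


def to_unit_range(image_normal):
    """Map values from [-1, 1] back to [0, 1]."""
    return (image_normal + 1) / 2


def one_hot(label, num_classes=NUM_CLASSES):
    """Return a list with a single 1 at position `label`."""
    vec = [0] * num_classes
    vec[label] = 1
    return vec


demo_image = np.array([[[0, 127, 255]]], dtype=np.uint8)  # a 1x1 RGB image
demo_normal = normalize(demo_image)
demo_unit = to_unit_range(demo_normal)
demo_label = one_hot(3)
```

One thing that may be worth checking: the docstring of `Vgg19_simple_api` describes its input as scaled to [0, 1], while these loaders produce [-1, 1]. The commented-out `(input_image + 1) / 2` in flower.py corresponds to `to_unit_range` here.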

VGG19 model

File model.py: builds the VGG19 model
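As a rough check on the layer sizes in model.py: each of the five convolution blocks ends with a 2x2, stride-2 max-pool using 'SAME' padding, which maps a spatial size n to ceil(n / 2), while the stride-1 'SAME' convolutions leave the size unchanged. The sketch below traces a 224x224 input down to the flatten layer; the helper name is an assumption for illustration.

```python
import math


def same_pool_output(size, stride=2):
    """Spatial size after a pooling layer with 'SAME' padding and the given stride."""
    return math.ceil(size / stride)


size = 224
trace = [size]
for _ in range(5):  # pool1 .. pool5
    size = same_pool_output(size)
    trace.append(size)

# After pool5 the feature map is size x size with 512 channels.
flatten_units = size * size * 512
```

The trace agrees with the shape comments in the model code: 14x14x512 after pool4 and 7x7x512 after pool5, so fc6 receives 25088 inputs.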

import numpy as np
import tensorflow as tf
import tensorlayer as tl
from tensorlayer.layers import *
import time
import os
# os.environ["CUDA_VISIBLE_DEVICES"]="-1"


def Vgg19_simple_api(rgb, reuse):
    """
    Build the VGG 19 Model

    Parameters
    -----------
    rgb : rgb image placeholder [batch, height, width, 3] values scaled [0, 1]
    """
    VGG_MEAN = [103.939, 116.779, 123.68]
    with tf.variable_scope("VGG19", reuse=reuse) as vs:
        start_time = time.time()
        print("build model started")
        rgb_scaled = rgb * 255.0
        # Convert RGB to BGR
        red, green, blue = tf.split(rgb_scaled, 3, 3)
        assert red.get_shape().as_list()[1:] == [224, 224, 1]
        assert green.get_shape().as_list()[1:] == [224, 224, 1]
        assert blue.get_shape().as_list()[1:] == [224, 224, 1]
        bgr = tf.concat(
            [
                blue - VGG_MEAN[0],
                green - VGG_MEAN[1],
                red - VGG_MEAN[2],
            ], axis=3)
        assert bgr.get_shape().as_list()[1:] == [224, 224, 3]

        """ input layer """

        net_in = InputLayer(bgr, name='input')

        """ conv1 """
        network = Conv2d(net_in, n_filter=64, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv1_1')
        network = Conv2d(network, n_filter=64, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv1_2')
        network = MaxPool2d(network, filter_size=(2, 2), strides=(2, 2), padding='SAME', name='pool1')

        """ conv2 """
        network = Conv2d(network, n_filter=128, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv2_1')
        network = Conv2d(network, n_filter=128, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv2_2')
        network = MaxPool2d(network, filter_size=(2, 2), strides=(2, 2), padding='SAME', name='pool2')

        """ conv3 """
        network = Conv2d(network, n_filter=256, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv3_1')
        network = Conv2d(network, n_filter=256, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv3_2')
        network = Conv2d(network, n_filter=256, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv3_3')
        network = Conv2d(network, n_filter=256, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv3_4')
        network = MaxPool2d(network, filter_size=(2, 2), strides=(2, 2), padding='SAME', name='pool3')

        """ conv4 """
        network = Conv2d(network, n_filter=512, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv4_1')
        network = Conv2d(network, n_filter=512, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv4_2')
        network = Conv2d(network, n_filter=512, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv4_3')
        network = Conv2d(network, n_filter=512, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv4_4')
        network = MaxPool2d(network, filter_size=(2, 2), strides=(2, 2), padding='SAME',
                            name='pool4')  # (batch_size, 14, 14, 512)
        conv = network

        """ conv5 """
        network = Conv2d(network, n_filter=512, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv5_1')
        network = Conv2d(network, n_filter=512, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv5_2')
        network = Conv2d(network, n_filter=512, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv5_3')
        network = Conv2d(network, n_filter=512, filter_size=(3, 3), strides=(1, 1), act=tf.nn.relu, padding='SAME',
                         name='conv5_4')
        network = MaxPool2d(network, filter_size=(2, 2), strides=(2, 2), padding='SAME',
                            name='pool5')  # (batch_size, 7, 7, 512)

        """ fc 6~8 """
        network = FlattenLayer(network, name='flatten')
        network = DenseLayer(network, n_units=4096, act=tf.nn.relu, name='fc6')
        network = DenseLayer(network, n_units=4096, act=tf.nn.relu, name='fc7')
        network = DenseLayer(network, n_units=1000, act=tf.identity, name='fc8')
        print("build model finished: %fs" % (time.time() - start_time))
        return network, conv

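# Not called by flower.py. Note that n_units=200 does not match the 5 flower
# classes and would have to be changed before this head could be used here.
def my_model(my_tensors, reuse):
    with tf.variable_scope("VGG19", reuse=reuse) as vs:
        net_in = InputLayer(my_tensors, name="input")
        net_out = DenseLayer(net_in, n_units=200, act=tf.identity, name="fc9")
        return net_out

Training code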
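The training script scores the 5-class logits with a softmax cross-entropy loss and an argmax accuracy, both computed from one-hot labels. A NumPy sketch of those two quantities can help when checking values by hand; the function names are illustrative and not part of the script.

```python
import numpy as np


def softmax_cross_entropy(logits, one_hot_labels):
    """Mean cross-entropy between softmax(logits) and one-hot labels."""
    # Subtract the row maximum before exponentiating for numerical stability.
    shifted = logits - logits.max(axis=1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    class_ids = one_hot_labels.argmax(axis=1)
    return float(-log_probs[np.arange(len(class_ids)), class_ids].mean())


def accuracy(logits, one_hot_labels):
    """Fraction of rows whose highest logit matches the labelled class."""
    return float((logits.argmax(axis=1) == one_hot_labels.argmax(axis=1)).mean())


demo_logits = np.array([[2.0, 0.0, 0.0, 0.0, 0.0],
                        [0.0, 0.0, 0.0, 0.0, 0.0]])
demo_labels = np.array([[1, 0, 0, 0, 0],
                        [0, 0, 1, 0, 0]])

demo_loss = softmax_cross_entropy(demo_logits, demo_labels)
demo_acc = accuracy(demo_logits, demo_labels)
uniform_loss = softmax_cross_entropy(np.zeros((1, 5)), np.array([[0, 0, 1, 0, 0]]))
```

An untrained head that outputs equal logits for all 5 classes has a loss of ln 5 (about 1.61), which is a useful reference point for the first training steps.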

File flower.py: trains and tests the model

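import os
os.environ["CUDA_VISIBLE_DEVICES"] = "-1"  # hide all GPUs so TensorFlow runs on the CPU
import argparse
import psutil
import tensorlayer as tl
import tensorflow as tf
import numpy as np
# model.py defines only Vgg19_simple_api, so that is the only name imported.
from model import Vgg19_simple_api
from flower_data import Dataset


class Main():
    '''
    Projects must inherit from the FlyAI class, otherwise they fail when run online.
    (This standalone script does not inherit from it.)
    '''

    def __init__(self):
        self.bottleneck_size = 1000  # width of the VGG19 fc8 output
        self.num_class = 5  # number of flower classes

    def memory_usage(self):
        # Returns (memory used by this process, available system memory) in MB.
        mem_available = psutil.virtual_memory().available
        mem_process = psutil.Process(os.getpid()).memory_info().rss
        return round(mem_process / 1024 / 1024, 2), round(mem_available / 1024 / 1024, 2)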

    def train(self):
        batch_size = 16
        max_steps = 5000
        data = Dataset()
        input_image = tf.placeholder(dtype=tf.float32, shape=[None, 224, 224, 3], name='input_image')
        label_image = tf.placeholder(dtype=tf.float32, shape=[None, 5], name='label_image')
        net_vgg, vgg_target_emb = Vgg19_simple_api(input_image, reuse=False)

        ###============================= Create Model ===============================###
        # New fully connected layer that maps the 1000 VGG19 outputs to 5 classes.
        weights = tf.Variable(tf.truncated_normal([self.bottleneck_size, self.num_class], stddev=0.1), name="fc9/w")
        biases = tf.Variable(tf.constant(0.1, shape=[self.num_class]), name="fc9/b")
        logits = tf.matmul(net_vgg.outputs, weights) + biases

        # Loss and accuracy
        loss = tf.reduce_mean(
            tf.nn.sparse_softmax_cross_entropy_with_logits(labels=tf.argmax(label_image, 1), logits=logits))
        # loss=tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=label_image,logits=net_vgg.outputs))
        accuracy = tf.reduce_mean(
            tf.cast(tf.equal(tf.argmax(label_image, 1), tf.argmax(logits, 1)), tf.float32))

        # Optimizer
        optimizer = tf.train.GradientDescentOptimizer(learning_rate=0.00001).minimize(loss=loss)
        # optimizer=tf.train.AdamOptimizer(learning_rate=0.001).minimize(loss=loss)

        ###============================= LOAD VGG ===============================###
        vgg19_npy_path = "vgg19.npy"
        if not os.path.isfile(vgg19_npy_path):
            print("Please download vgg19.npy from : https://github.com/machrisaa/tensorflow-vgg")
            exit()
        # The file stores a pickled dict, which recent NumPy versions only load
        # when allow_pickle=True is passed.
        npz = np.load(vgg19_npy_path, encoding='latin1', allow_pickle=True).item()
        params = []
        for val in sorted(npz.items()):
            W = np.asarray(val[1][0])
            b = np.asarray(val[1][1])
            print(" Loading %s: %s, %s" % (val[0], W.shape, b.shape))
            params.extend([W, b])
        print('ok')

        saver = tf.train.Saver(var_list=[weights, biases])
        init_op = tf.global_variables_initializer()
        config = tf.ConfigProto(allow_soft_placement=True, log_device_placement=False)
        with tf.Session(config=config) as sess:
            tl.layers.initialize_global_variables(sess)
            sess.run(init_op)
            tl.files.assign_params(sess, params, net_vgg)

            ###========================== RESTORE MODEL =============================###
            # tl.files.load_and_assign_npz(sess=sess,name="data/output/model/my_vgg.npz",network=net_vgg)
            tl.files.load_and_assign_npz(sess=sess, name=os.path.join('model', 'my_vgg.npz'), network=net_vgg)
            try:
                saver.restore(sess=sess, save_path=os.path.join('model', 'model.ckpt'))
            except Exception:
                print("load model err")

            ###========================== TRAIN MODEL =============================###
            os.makedirs('model', exist_ok=True)  # make sure the save folder exists
            val_image, val_label = data.validation()
            validate_feed = {input_image: val_image, label_image: val_label}
            # Each iteration is one mini-batch step, although the log calls it "Epoch".
            for epoch in range(max_steps):
                # Train on one batch
                train_image, train_label = data.train_next_batch(batch_size)
                train_feed = {input_image: train_image, label_image: train_label}
                # The names receiving the results must differ from the tensors passed to sess.run()
                cur_loss, _ = sess.run([loss, optimizer], feed_dict=train_feed)
                print("Epoch:%d,loss %.2f" % (epoch, cur_loss * 100), self.memory_usage())

                if epoch != 0 and epoch % 50 == 0:
                    # Validate the model
                    my_accuracy = sess.run(accuracy, feed_dict=validate_feed)
                    print("Epoch:%d ,accuracy: %.2f%%" % (epoch, my_accuracy * 100))
                    # Save the model
                    tl.files.save_npz(net_vgg.all_params, name=os.path.join('model', 'my_vgg.npz'), sess=sess)
                    saver.save(sess=sess, save_path=os.path.join('model', 'model.ckpt'))

    def test(self):
        data = Dataset()
        input_image = tf.placeholder('float32', [None, 224, 224, 3], name='input_image')
        label_image = tf.placeholder('float32', [None, 5], name='label_image')
        # net_vgg, vgg_target_emb = Vgg19_simple_api((input_image + 1) / 2, reuse=False)
        net_vgg, vgg_target_emb = Vgg19_simple_api(input_image, reuse=False)

        ###============================= Create Model ===============================###
        weights = tf.Variable(tf.truncated_normal([self.bottleneck_size, self.num_class], stddev=0.1), name="fc9/w")
        biases = tf.Variable(tf.constant(0.1, shape=[self.num_class]), name="fc9/b")
        logits = tf.matmul(net_vgg.outputs, weights) + biases
        # model_out=my_model(net_vgg.outputs,reuse=False)

        # Loss and accuracy
        loss = tf.reduce_mean(
            tf.nn.sparse_softmax_cross_entropy_with_logits(labels=tf.argmax(label_image, 1), logits=logits))
        # loss=tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(labels=label_image,logits=net_vgg.outputs))
        accuracy = tf.reduce_mean(
            tf.cast(tf.equal(tf.argmax(label_image, 1), tf.argmax(logits, 1)), tf.float32))

        # No optimizer is needed for testing
        # optimizer=tf.train.GradientDescentOptimizer(learning_rate=0.00001).minimize(loss=loss)
        # optimizer = tf.train.AdamOptimizer(learning_rate=0.0001).minimize(loss=loss)

        ###============================= LOAD VGG ===============================###
        saver = tf.train.Saver(var_list=[weights, biases])
        config = tf.ConfigProto(allow_soft_placement=True, log_device_placement=False)
        with tf.Session(config=config) as sess:
            ###========================== RESTORE MODEL =============================###
            tl.files.load_and_assign_npz(sess=sess, name=os.path.join('model', 'my_vgg.npz'), network=net_vgg)
            # tl.files.load_and_assign_npz(sess=sess, name=os.path.join(MODEL_PATH, 'my_vgg_fc9.npz'), network=model_out)
            # if os.path.exists(os.path.join(MODEL_PATH, 'model.ckpt')):
            saver.restore(sess=sess, save_path=os.path.join('model', 'model.ckpt'))

            ###========================== TEST MODEL =============================###
            test_image, test_label = data.test()
            my_accuracy = 0
            # Feed one image at a time and add up the per-image accuracy (0 or 1).
            for x_test, y_test in zip(test_image, test_label):
                validate_feed = {input_image: [x_test], label_image: [y_test]}
                # The name receiving the result must differ from the tensor passed to sess.run()
                acc = sess.run(accuracy, feed_dict=validate_feed)
                my_accuracy += acc
            print(my_accuracy)
            print("Accuracy %.2f" % (my_accuracy / len(test_image)))


if __name__ == '__main__':
    main = Main()
    # main.train()
    main.test()
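The test loop feeds one image per `sess.run` call and averages the per-image results, which amounts to counting correct predictions and dividing by the number of test images. If memory allows, the same figure could be computed in larger chunks. The plain-Python sketch below shows the counting logic only, with hypothetical helper names and made-up class indices.

```python
def iter_batches(items, batch_size):
    """Yield consecutive slices of at most batch_size items."""
    for start in range(0, len(items), batch_size):
        yield items[start:start + batch_size]


def overall_accuracy(predicted, expected, batch_size=16):
    """Count matches batch by batch, then divide by the total sample count."""
    correct = 0
    batches = zip(iter_batches(predicted, batch_size), iter_batches(expected, batch_size))
    for pred_batch, true_batch in batches:
        correct += sum(p == t for p, t in zip(pred_batch, true_batch))
    return correct / len(expected)


# Made-up class indices: 5 of the 6 predictions match.
demo_predicted = [0, 1, 2, 3, 4, 0]
demo_expected = [0, 1, 2, 3, 0, 0]
demo_accuracy = overall_accuracy(demo_predicted, demo_expected, batch_size=4)
```

Summing correct counts rather than averaging per-batch accuracies keeps the result exact even when the last batch is smaller than the others.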

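Results:

Final accuracy of the trained model on the test set: 98%

This article presents a flower image classification project that uses transfer learning. A pretrained VGG19 model is adapted to a dataset of five flower species to perform 5-class classification. The project covers dataset preparation, model construction, and training.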
