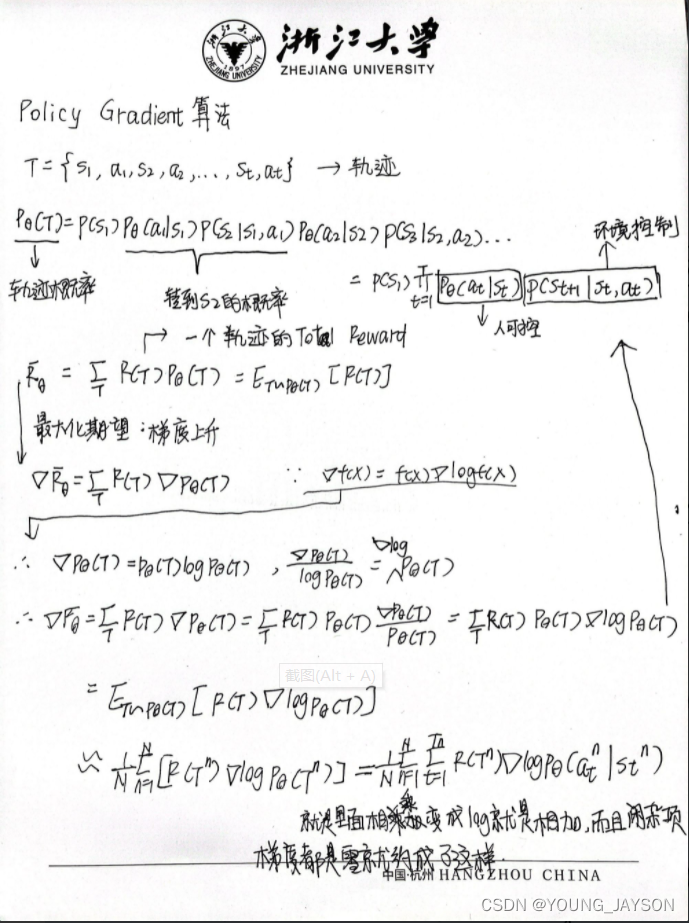

这差不多就是最后的式子了,然后就可以将其带入pytorch的框架里写代码了。

当然还可以继续进行优化,

- 加一个baseline来相减,这样就可以有正有负,更好地去进行优化

- 第二个就是对于一个episode里面如果没有状态都使用 R ( T n ) R(T^n) R(Tn)来是不是很公平的,所以可以采用只算上之后所获得的奖励,也就是说 ∑ k = t T n r k n − b \sum_{k = t}^{T_n}r_k^n-b ∑k=tTnrkn−br

- 接着还可以就是乘上gamma来优化变成了 ∑ k = t T n γ k − t r k n − b \sum_{k = t}^{T_n}\gamma ^{k-t}r_k^n-b ∑k=tTnγk−trkn−b,可以这样简单的理解就是越往后没有那么重要

所以最后的式子如下:

1

N

∑

n

=

1

N

∑

t

=

1

T

n

∑

k

=

t

T

n

(

γ

k

−

t

r

k

n

−

b

)

g

r

a

d

i

e

n

l

o

g

p

θ

(

a

t

n

∣

s

t

n

)

\frac{1}{N}\sum_{n=1}^{N}\sum_{t=1}^{T_n}\sum_{k = t}^{T_n}(\gamma ^{k-t}r_k^n-b)gradien\ logp_{\theta}(a_t^n|s_t^n)

N1n=1∑Nt=1∑Tnk=t∑Tn(γk−trkn−b)gradien logpθ(atn∣stn)

代码实现

import torch

import gym

import argparse

import torch.nn as nn

import torch.nn.functional as F

import numpy as np

import torch.optim as optim

from torch.distributions import Categorical

GAMMA = 0.98

env = gym.make("CartPole-v1")

N_ACTION = env.action_space.n

N_STATE = env.observation_space.shape[0]

EPS = np.finfo(np.float32).eps.item() # 非负的最小值,使得归一化时分母不为0

print(N_ACTION,N_STATE)

class Net(nn.Module):

def __init__(self,input,output):

super(Net,self).__init__()

self.net = nn.Sequential(nn.Linear(input,64),nn.Tanh(),nn.Linear(64,output))

def forward(self,x):

x = self.net(x)

return F.softmax(x,dim = 1)

class agent():

def __init__(self) -> None:

self.episode_reward = []

self.net = Net(N_STATE,N_ACTION)

self.probs_log = []

self.optimizer = optim.Adam(self.net.parameters(),lr = 1e-2)

def choose_action(self,state):

state = torch.as_tensor(state).unsqueeze(0)

probs = self.net(state)

m = Categorical(probs)

action = m.sample()

#print(m.log_prob(action))

self.probs_log.append(m.log_prob(action))

#print(action.item())

return action.item()

RL = agent()

TOTAL_EPISODE = 500

render_run = False

for episode in range(TOTAL_EPISODE):

state = env.reset() # 先初始化

total_reward = 0

while True:

a = RL.choose_action(state)

state_,reward,done,info = env.step(a)

if render_run > 50:

env.render()

RL.episode_reward.append(reward)

total_reward += reward

state = state_

#env.render()

if done :

rewards_tmp = []

loss = []

R = 0

for r in RL.episode_reward[::-1]:

R = reward + GAMMA * R

rewards_tmp.insert(0,R)

#进行归一化操作

rewards_tmp = torch.tensor(rewards_tmp)

rewards_tmp = (rewards_tmp - rewards_tmp.mean())/(rewards_tmp.std() + EPS)

#print(len(rewards_tmp))

#print(rewards_tmp)

for log_prob, r in zip(RL.probs_log, rewards_tmp):

loss.append(-log_prob * r) # 损失函数为交叉熵

#for i in range(len(rewards_tmp)):

# loss.append(rewards_tmp[i] * (-RL.probs_log[i]))

RL.optimizer.zero_grad()

loss = torch.cat(loss).sum()

loss.backward()

RL.optimizer.step()

del RL.probs_log[:]

del RL.episode_reward[:]

if total_reward > 400:

render_run += 1

print("the reward of the {}th episode is :{}".format(episode,total_reward))

break

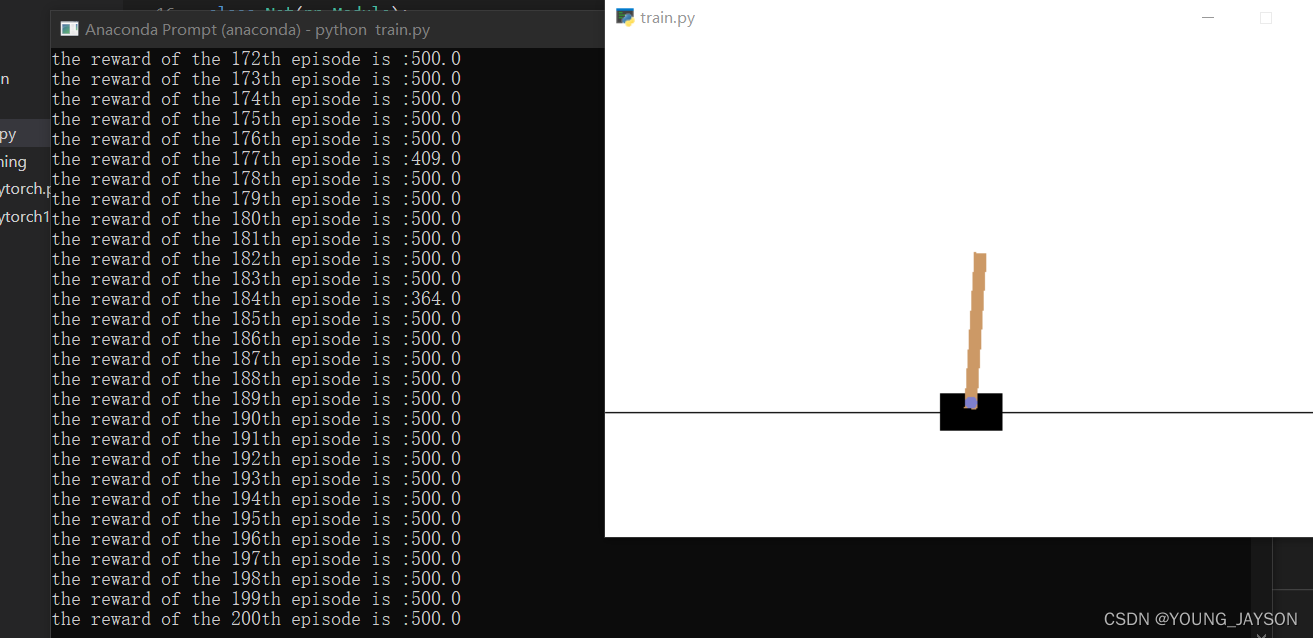

实验结果

1244

1244

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?