#导入模块

import matplotlib.pyplot as plt

import numpy as np

from scipy.io import loadmat

import matplotlib

import scipy.optimize as opt

from sklearn.metrics import classification_report#这个包是评价报告

第一步:数据可视化

#导入数据

data =loadmat('ex4data1.mat')

X =data['X']

y =data['y']

#对X,y进行处理

#对X做转置

#数字是旋转的需要对其进行转置

X1 =np.array([im.reshape(20,20).T for im in X])

X =np.array([im.reshape(400) for im in X1])

#对y做平铺

y =y.reshape(y.shape[0])

#输出100个数字图像

#随机选取X中的100个数字图像

image_index =np.random.choice(X.shape[0],100)

image_100 =X[image_index,:]

fig,ax =plt.subplots(nrows =10,ncols =10,figsize =(10,10),sharey=True, sharex=True,)

for i in range(10):

for j in range(10):

#不要下标

ax[i,j].matshow(image_100[i*10+j,:].reshape(20,20),cmap =matplotlib.cm.binary)

plt.xticks(np.array([]))

plt.yticks(np.array([]))

第二步:模型展示

#导入数据

data =loadmat('ex4data1.mat')

X =data['X']

y =data['y']

#对X,y进行处理

#对y做平铺

y =y.reshape(y.shape[0])

#对X第一列插入1值

X_train =np.insert(X,0,np.ones(X.shape[0]),axis =1)

#X_train.shape,y.shape,y

#((5000, 401), (5000,), array([10, 10, 10, ..., 9, 9, 9], dtype=uint8))

ln =[]

#对数据y1进行处理,例如只看数字10为标签,则10的位置为1,其他地方为0

#因此,对10,1,2.。。数字进行分类标签处理,每个数字映射为0,1标签(5000*1)

for num in range(1,11):

ln.append((y==num).astype(int))

#ln =[ln[-1]]+ln[:-1]

y_train =np.array(ln).T

#y_train.shape,y_train

#读取权重

weights =loadmat('ex4weights.mat')

theta1 =weights['Theta1']

theta2 =weights['Theta2']

print(theta1.shape,theta2.shape)

#定义权重的合并与还原

#定义合并

def serialize(a, b):

# 扁平化参数,25*401+10*26=10285

return np.concatenate((np.ravel(a), np.ravel(b)))

#定义还原

def deserialize(seq):

# 返回 (25, 401), (10, 26)

return seq[:25 * 401].reshape(25, 401), seq[25 * 401:].reshape(10, 26)

定义前向传播函数

#定义前向传播函数

theta =serialize(theta1,theta2)

print(theta)

def sigmoid(z):

return 1 / (1 + np.exp(-z))

def feed_forward(theta,X):

theta1 ,theta2 =deserialize(theta)

#第一层

a1 =X #5000*401

#第二层

z2 =a1@theta1.T # 5000*25 = 5000*401 @ 401*25

a2 =sigmoid(z2)

a2 =np.insert(a2, 0, values=np.ones(a2.shape[0]), axis=1) #5000*26

#第三层

z3 =a2@theta2.T # 5000*10 = 5000*26 @ 26*10

h =sigmoid(z3) # 5000*10

# h 是最终输出的预测结果

return a1,z2,a2,z3,h #反向传播时用到

_, _, _, _, h = feed_forward(theta, X_train)

h.shape,h

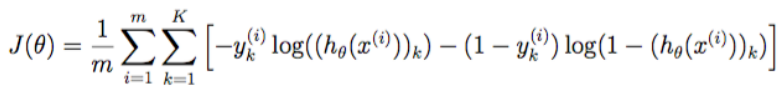

代价函数

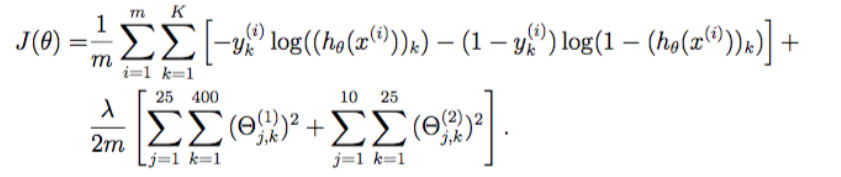

正则化代价函数

#代价函数

def cost(theta, X, y):

#计算损失值

#输入:theta 10285*1,X 5000*401, y 5000*10

#输出:损失值

m = X.shape[0]

# h 是前向传播输出的预测结果 5000*10

_, _, _, _, h = feed_forward(theta, X)

# 计算代价函数的括号里的东西5000*10

pair_computation = -np.multiply(y, np.log(h)) - np.multiply((1 - y), np.log(1 - h))

return pair_computation.sum() / m

#正则化代价函数

def regularized_cost(theta, X, y, lr=1):

#计算正则化损失值

#输入:theta 10285*1,X 5000*401, y 5000*10 lr(learn rate) 学习步长

#输出:损失值

#展开theta1和theta2的值 theta1 25*401 theta2 10*26

t1, t2 = deserialize(theta)

m = X.shape[0]

#正则化参数theta第一项不参与,从下标为1开始

reg_t1 = (lr / (2 * m)) * np.power(t1[:, 1:], 2).sum()

reg_t2 = (lr / (2 * m)) * np.power(t2[:, 1:], 2).sum()

return cost(theta, X, y) + reg_t1 + reg_t2

cost(theta, X_train, y_train)

#0.2876291651613189

regularized_cost(theta, X_train, y_train)

#0.38376985909092365

反向传播

正则化梯度

def sigmoid_gradient(z):

#计算激活函数sigmoid的梯度值

return np.multiply(sigmoid(z), 1 - sigmoid(z))

#反向传播迭代梯度函数

def gradient(theta, X, y):

#输入 theta 10285*1,X 5000*401, y 5000*10

#输出 迭代的梯度值

#展开theta1和theta2的值

#theta1 25*401 theta2 10*26

t1, t2 = deserialize(theta)

m = X.shape[0]

delta1 = np.zeros(t1.shape) # (25, 401)

delta2 = np.zeros(t2.shape) # (10, 26)

#前向传播,得到最后结果h,前面几层计算的结果a1,z2,a2,z3

a1, z2, a2, z3, h = feed_forward(theta, X)

for i in range(m):

a1i = a1[i, :] # (1, 401)

z2i = z2[i, :] # (1, 25)

a2i = a2[i, :] # (1, 26)

hi = h[i, :] # (1, 10)

yi = y[i, :] # (1, 10)

d3i = hi - yi # (1, 10)

z2i = np.insert(z2i, 0, np.ones(1)) # (1, 26) <--插入1值

d2i = np.multiply(t2.T @ d3i, sigmoid_gradient(z2i)) # (1, 26)

# 计算迭代梯度

delta2 += np.matrix(d3i).T @ np.matrix(a2i) # (1, 10).T @ (1, 26) -> (10, 26)

delta1 += np.matrix(d2i[1:]).T @ np.matrix(a1i) # (1, 25).T @ (1, 401) -> (25, 401)

delta1 = delta1 / m

delta2 = delta2 / m

return serialize(delta1, delta2)

#正则化反向传播梯度

def regularized_gradient(theta, X, y, lr=1):

#输入 theta 10285*1,X 5000*401, y 5000*10 lr(learn rate) 学习步长

#输出 梯度值

m = X.shape[0]

delta1, delta2 = deserialize(gradient(theta, X, y))

#展开theta1和theta2的值

#theta1 25*401 theta2 10*26

t1, t2 = deserialize(theta)

#正则化从下标为1开始,对下标为0不做处理

t1[:, 0] = 0

reg_term_d1 = (lr / m) * t1

delta1 = delta1 + reg_term_d1

t2[:, 0] = 0

reg_term_d2 = (lr / m) * t2

delta2 = delta2 + reg_term_d2

return serialize(delta1, delta2)

第三步 训练模型和评价

#初始化参数theta的值

theta =np.random.uniform(-0.12, 0.12, 10285) #25*401 + 10*26

theta.shape

#训练模型

def nn_training(theta,X, y,lr=1):

res = opt.minimize(fun=regularized_cost,

x0=theta,

args=(X, y, lr),

method='TNC',

jac=regularized_gradient,

options={'maxiter': 400})

return res

result =nn_training(theta,X_train,y_train)

预测与评价

final_theta =result.x

#5000*10 ,要得到预测的结果是5000*1,

#也就是说,每一行中数值最大的那个数对应的数字预测结果可能性最大

#所以每一行返回数值最大的对应的下标,将5000*10映射为5000*1,

#由于索引是从0开始,所以再加上1便是结果

_, _, _, _, predict = feed_forward(final_theta, X_train)

predict_y =np.argmax(predict,axis =1)+1

real_y =y.copy()

print(classification_report(real_y,predict_y))

301

301

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?