import torch

import torch.utils.data as data

import torch.nn as nn

import torch.optim as optim

import torch.nn.functional as F

import matplotlib.pyplot as plt

lr = 1e-2

batch_size = 32

epochs = 12

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

x = torch.unsqueeze(torch.linspace(-1, 1, 1000), dim=1)

y = torch.pow(x, 2) + 0.1 * torch.normal(torch.zeros_like(x))

dataset = data.TensorDataset(x, y)

loader = data.DataLoader(dataset=dataset, batch_size=batch_size, shuffle=True)

class Net(nn.Module):
    """Small regression network: one hidden layer of 20 ReLU units."""

    def __init__(self):
        super(Net, self).__init__()
        self.hidden = nn.Linear(1, 20)
        self.predict = nn.Linear(20, 1)

    def forward(self, x):
        x = F.relu(self.hidden(x))
        x = self.predict(x)
        return x

net_SGD = Net()

net_Momentum = Net()

net_RMSProp = Net()

net_Adam = Net()

# Move every network to the selected device so it matches the batches.
nets = [net.to(device) for net in (net_SGD, net_Momentum, net_RMSProp, net_Adam)]

# Each optimizer must update the parameters of its own network.
opt_SGD = optim.SGD(net_SGD.parameters(), lr=lr)
opt_Momentum = optim.SGD(net_Momentum.parameters(), lr=lr, momentum=0.9)
opt_RMSProp = optim.RMSprop(net_RMSProp.parameters(), lr=lr, alpha=0.9)
opt_Adam = optim.Adam(net_Adam.parameters(), lr=lr, betas=(0.9, 0.99))

optimizers = [opt_SGD, opt_Momentum, opt_RMSProp, opt_Adam]

loss_func = nn.MSELoss()

loss_his = [[], [], [], []]

for epoch in range(epochs):
    for step, (batch_x, batch_y) in enumerate(loader):
        # Move the batch once; every network trains on the same data.
        batch_x = batch_x.to(device)
        batch_y = batch_y.to(device)
        for net, opt, l_his in zip(nets, optimizers, loss_his):
            out = net(batch_x)
            loss = loss_func(out, batch_y)
            # .item() returns a Python float and also works for CUDA tensors.
            l_his.append(loss.item())
            opt.zero_grad()
            loss.backward()
            opt.step()

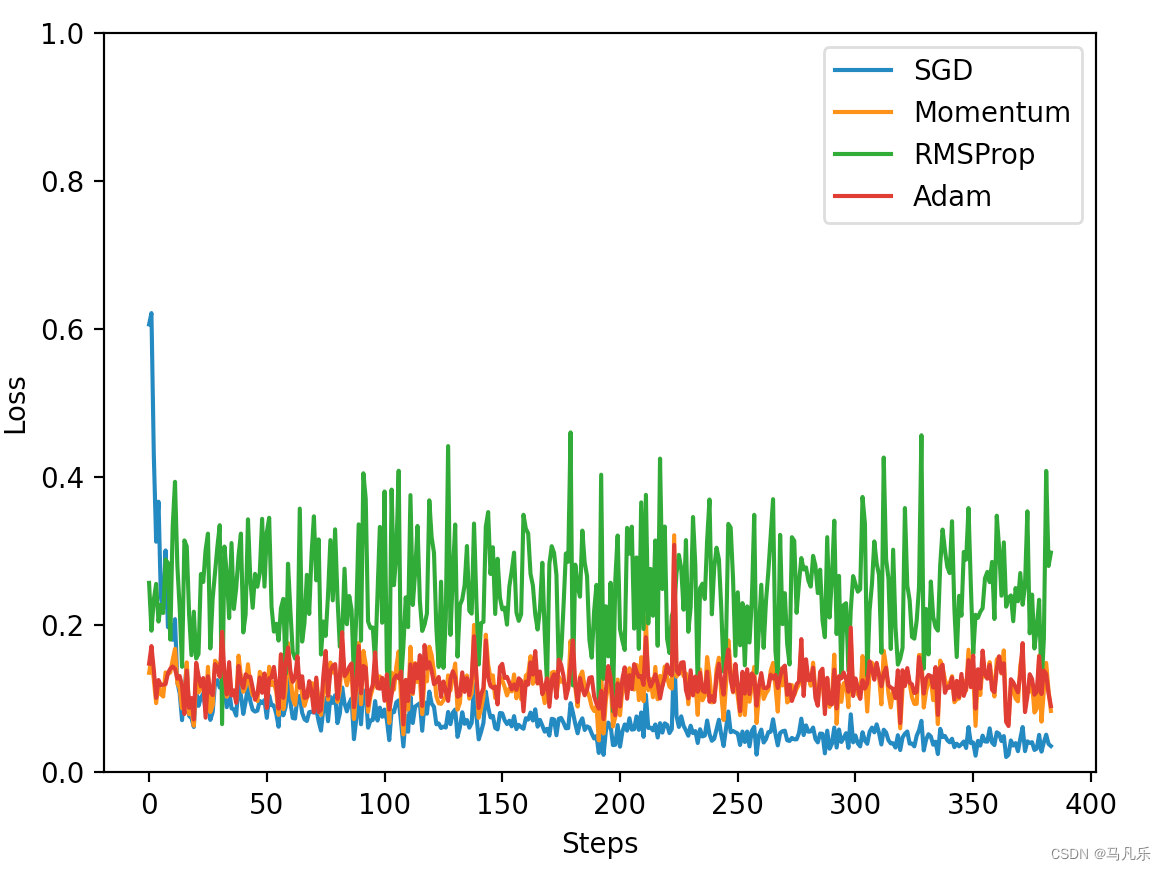

labels = ['SGD', 'Momentum', 'RMSProp', 'Adam']

for i, l_his in enumerate(loss_his):
    plt.plot(l_his, label=labels[i])

plt.legend(loc='best')

plt.xlabel('Steps')

plt.ylabel('Loss')

plt.ylim((0, 1))

plt.show()
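
To make the difference between the four optimizers above more concrete, here is a minimal sketch of their textbook update rules applied to the one-dimensional function f(w) = w**2. It is an illustration under stated assumptions, not PyTorch's actual implementation: the helper name `minimize`, the starting point `w0`, the step count, and the `1e-8` epsilon are all hypothetical choices made for this example. The decay constants mirror the script (`momentum=0.9`, `alpha=0.9`, `betas=(0.9, 0.99)`).

```python
import math


def minimize(rule, steps=200, lr=1e-2, w0=1.0):
    """Minimize f(w) = w**2 with one of four textbook update rules.

    Hypothetical helper for illustration only; the real optimizers in
    torch.optim have more options (weight decay, dampening, and so on).
    """
    w = w0
    v = 0.0  # momentum buffer (Momentum) or first-moment estimate (Adam)
    s = 0.0  # running average of squared gradients (RMSProp, Adam)
    for t in range(1, steps + 1):
        g = 2.0 * w  # gradient of w**2
        if rule == "sgd":
            # Plain gradient step.
            w -= lr * g
        elif rule == "momentum":
            # Accumulate past gradients, then step along the accumulated direction.
            v = 0.9 * v + g
            w -= lr * v
        elif rule == "rmsprop":
            # Scale the step by the root of a running mean of squared gradients.
            s = 0.9 * s + 0.1 * g * g
            w -= lr * g / (math.sqrt(s) + 1e-8)
        elif rule == "adam":
            # Combine a momentum-style average with RMSProp-style scaling,
            # correcting both averages for their zero initialization.
            v = 0.9 * v + 0.1 * g
            s = 0.99 * s + 0.01 * g * g
            v_hat = v / (1 - 0.9 ** t)
            s_hat = s / (1 - 0.99 ** t)
            w -= lr * v_hat / (math.sqrt(s_hat) + 1e-8)
        else:
            raise ValueError(f"unknown rule: {rule}")
    return w


if __name__ == "__main__":
    for rule in ("sgd", "momentum", "rmsprop", "adam"):
        print(rule, minimize(rule))
```

All four rules move `w` from 1.0 toward the minimum at 0, but at different rates. That difference in rate is what the loss curves plotted by the script compare on the noisy quadratic regression task.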
