Introduction to LVS, Load Balancing, keepalived, and LVS High Availability

Lab machines:

server1:172.25.15.1

server2:172.25.15.2

server3:172.25.15.3

server4:172.25.15.4

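Physical host: 172.25.254.15

I. LVS (Linux Virtual Server)

An LVS cluster uses IP load balancing and content-based request dispatching. The scheduler (director) offers high throughput, spreads requests evenly across different servers, and automatically masks server failures, so a group of servers forms one high-performance, highly available virtual server. The structure of the cluster is transparent to clients, and neither the client nor the server programs need to be modified. The design therefore has to account for transparency, scalability, high availability, and manageability.

Three-tier cluster architecture

In general, an LVS cluster has a three-tier structure with the following main components:

A. Load balancer (director): the front end of the whole cluster. It forwards client requests to a group of servers, while clients see the service as coming from a single IP address (the virtual IP address, or VIP).

B. Server pool: the group of servers that actually handle client requests, running services such as web, mail, FTP, and DNS.

C. Shared storage: a storage area shared by the server pool, which makes it easy for every server in the pool to hold the same content and provide the same service.

II. Load Balancing

1. Environment setup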

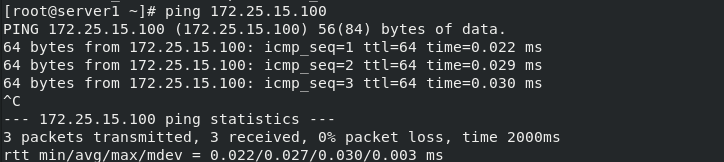

(1)server1

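[root@server1 ~]# yum install ipvsadm.x86_64 -y # install ipvsadm

[root@server1 ~]# ip addr add 172.25.15.100/24 dev eth0 # add the VIP

[root@server1 ~]# ip addr show # show the addresses

[root@server1 ~]# ping 172.25.15.100 # check that the VIP is reachable

[root@server1 ~]# ipvsadm -A -t 172.25.15.100:80 -s rr # add a TCP virtual service on the VIP with the round-robin scheduler

[root@server1 ~]# ipvsadm -a -t 172.25.15.100:80 -r 172.25.15.2:80 -g # add server2 as a real server, direct routing (-g)

[root@server1 ~]# ipvsadm -a -t 172.25.15.100:80 -r 172.25.15.3:80 -g # add server3 as a real server, direct routing (-g)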

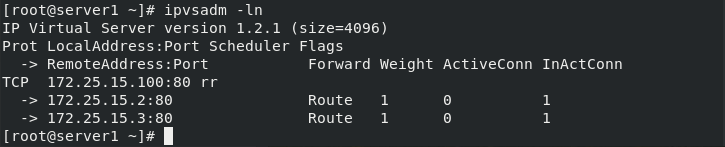

[root@server1 ~]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.15.100:80 rr

-> 172.25.15.2:80 Route 1 0 0

-> 172.25.15.3:80 Route 1 0 0

[root@server1 ~]#

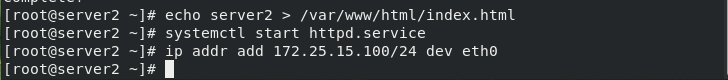
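The `rr` scheduler chosen with `-s rr` hands each new connection to the next real server in turn. The following is only a toy model of that idea in POSIX shell, not the kernel's IPVS code; the names `backends`, `next`, and `pick_backend` are made up for this illustration, and the order in which the real scheduler starts may differ.

```shell
# Toy model of round-robin selection, not the kernel's IPVS code.
# The list mirrors the two real servers added with `ipvsadm -a` above.
backends="172.25.15.2 172.25.15.3"
next=0   # index of the backend that receives the next request

# Stores the chosen backend in the global variable `picked`.
pick_backend() {
    set -- $backends            # split the list into positional parameters
    count=$#
    shift "$next"               # skip ahead to the current index
    picked=$1
    next=$(( (next + 1) % count ))
}

for i in 1 2 3 4; do
    pick_backend
    echo "request $i -> $picked"
done
```

With equal weights, as in the `ipvsadm -ln` output above, the two addresses simply alternate.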

(2) server2 and server3

[root@server2 ~]# yum install -y httpd

[root@server2 ~]# echo server2 > /var/www/html/index.html # write the default page served by httpd

[root@server2 ~]# systemctl start httpd.service

[root@server2 ~]# ip addr add 172.25.15.100/24 dev eth0

[root@server2 ~]#

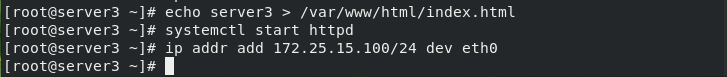

[root@server3 ~]# yum install -y httpd

[root@server3 ~]# echo server3 > /var/www/html/index.html

[root@server3 ~]# systemctl start httpd

[root@server3 ~]# ip addr add 172.25.15.100/24 dev eth0

[root@server3 ~]#

Configuration complete.

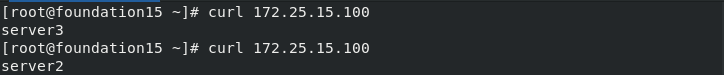

[root@foundation15 ~]# curl 172.25.15.100

server3

[root@foundation15 ~]# curl 172.25.15.100

server2

[root@server1 ~]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.15.100:80 rr

-> 172.25.15.2:80 Route 1 0 1

-> 172.25.15.3:80 Route 1 0 1

[root@server1 ~]#

2. Testing

(1) Load balancing appears to work, but the result can be a coincidence

Send six requests in a loop:

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done

server3

server2

server3

server2

server3

server2

[root@foundation15 ~]#

The requests are balanced evenly.

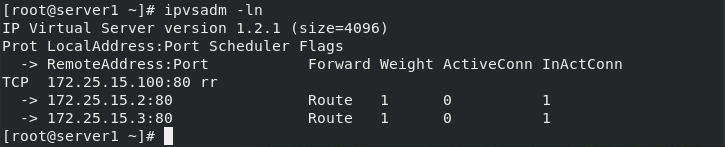

(2) Clear the ARP cache and repeat the test several times

[root@foundation15 ~]# arp -an | grep 100

? (172.25.15.100) at 52:54:00:0c:35:41 [ether] on br0

[root@foundation15 ~]# arp -d 172.25.15.100

[root@foundation15 ~]# arp -an | grep 100

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done

server2

server2

server2

server2

server2

server2

This time the requests are not load balanced.

Repeat the test a few more times:

[root@foundation15 ~]# arp -d 172.25.15.100

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done

server3

server2

server3

server2

server3

server2

[root@foundation15 ~]# arp -d 172.25.15.100

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done

server2

server2

server2

server2

server2

server2

[root@foundation15 ~]#

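The VIP is configured on server1, server2, and server3 alike, so whichever machine answers the client's ARP request first takes over the traffic, and the outcome is effectively random. When a real server wins, the client reaches it directly and bypasses the scheduler.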
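To see which machine won the ARP race, it helps to pull the MAC address for the VIP out of the client's ARP table and compare it with the MAC of eth0 on each server. The helper below is a small sketch; the name `vip_mac` is made up here, and it assumes the `arp -an` line format shown in the transcript.

```shell
# Print the MAC address that `arp -an` style output (on stdin) lists for an IP.
vip_mac() {
    awk -v ip="($1)" '$2 == ip { print $4 }'
}

# Sample line copied from the transcript above.
echo '? (172.25.15.100) at 52:54:00:0c:35:41 [ether] on br0' | vip_mac 172.25.15.100
```

On the physical host this would be run as `arp -an | vip_mac 172.25.15.100` after each `arp -d`.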

(3) So only the scheduler should answer ARP for the VIP; server2 and server3 must not answer on their own

[root@server2 ~]# yum install arptables -y

[root@server2 ~]# arptables -L

Chain INPUT (policy ACCEPT)

Chain OUTPUT (policy ACCEPT)

Chain FORWARD (policy ACCEPT)

[root@server2 ~]# arptables -A INPUT -d 172.25.15.100 -j DROP # drop incoming ARP packets addressed to the VIP

[root@server2 ~]# arptables -A OUTPUT -d 172.25.15.100 -j mangle --mangle-ip-s 172.25.15.2

# rewrite the source IP of matching outgoing ARP packets to 172.25.15.2

[root@server2 ~]# arptables-save

*filter

:INPUT ACCEPT

:OUTPUT ACCEPT

:FORWARD ACCEPT

-A INPUT -j DROP -d 172.25.15.100

-A OUTPUT -j mangle -d 172.25.15.100 --mangle-ip-s 172.25.15.2

[root@server2 ~]# arptables-save >/etc/sysconfig/arptables # save the rules

[root@server2 ~]# systemctl restart arptables.service

[root@server2 ~]# arptables -nL

Chain INPUT (policy ACCEPT)

-j DROP -d 172.25.15.100

Chain OUTPUT (policy ACCEPT)

-j mangle -d 172.25.15.100 --mangle-ip-s 172.25.15.2

Chain FORWARD (policy ACCEPT)

[root@server2 ~]#

[root@server3 ~]# yum install arptables -y

[root@server3 ~]# arptables -L

Chain INPUT (policy ACCEPT)

Chain OUTPUT (policy ACCEPT)

Chain FORWARD (policy ACCEPT)

[root@server3 ~]# arptables -A INPUT -d 172.25.15.100 -j DROP # drop incoming ARP packets addressed to the VIP

[root@server3 ~]# arptables -A OUTPUT -d 172.25.15.100 -j mangle --mangle-ip-s 172.25.15.3 # rewrite the source IP of matching outgoing ARP packets to 172.25.15.3

[root@server3 ~]# arptables-save

*filter

:INPUT ACCEPT

:OUTPUT ACCEPT

:FORWARD ACCEPT

-A INPUT -j DROP -d 172.25.15.100

-A OUTPUT -j mangle -d 172.25.15.100 --mangle-ip-s 172.25.15.3

[root@server3 ~]# arptables-save >/etc/sysconfig/arptables # save the rules

[root@server3 ~]# systemctl restart arptables.service

[root@server3 ~]# arptables -nL

Chain INPUT (policy ACCEPT)

-j DROP -d 172.25.15.100

Chain OUTPUT (policy ACCEPT)

-j mangle -d 172.25.15.100 --mangle-ip-s 172.25.15.3

Chain FORWARD (policy ACCEPT)

[root@server3 ~]#
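The two real servers get the same pair of rules and differ only in their own address. As a sketch, a small helper can print the rules for any VIP and real-server IP so they can be reviewed before being run; `print_arp_rules` is a made-up name, and it only echoes the commands in the form used in this walkthrough. The arptables examples commonly published for direct routing match the OUTPUT rule on the source address (`-s <VIP>`) rather than `-d <VIP>`, so it is worth checking which form suits your setup.

```shell
# Print (do not execute) the two arptables rules for one real server.
# $1: the shared virtual IP; $2: this real server's own address.
print_arp_rules() {
    vip=$1
    rip=$2
    echo "arptables -A INPUT -d $vip -j DROP"
    echo "arptables -A OUTPUT -d $vip -j mangle --mangle-ip-s $rip"
}

print_arp_rules 172.25.15.100 172.25.15.2
print_arp_rules 172.25.15.100 172.25.15.3
```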

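(4) Now only the scheduler answers for the VIP and load balancing is fully configured. Clearing the ARP cache no longer affects the balancing.

(5) Final test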

[root@foundation15 ~]# arp -an | grep 100

? (172.25.15.100) at 52:54:00:41:8c:ed [ether] on br0

[root@foundation15 ~]# arp -d 172.25.15.100 # clear the ARP cache entry

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done

server3

server2

server3

server2

server3

server2

[root@foundation15 ~]# arp -d 172.25.15.100

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done

server3

server2

server3

server2

server3

server2

[root@foundation15 ~]# arp -d 172.25.15.100

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done

server3

server2

server3

server2

server3

server2

[root@foundation15 ~]#

The load is balanced on every run.

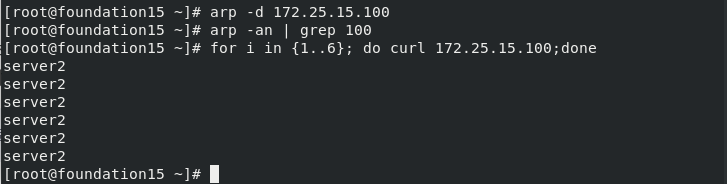
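Reading alternating lines by eye works for six requests, but a per-server count is easier to check for longer runs. The following is a minimal sketch; `tally` is a made-up helper name, and it assumes each response is a single line such as `server2`.

```shell
# Count how many responses came from each backend.
# Reads one backend name per line on stdin, prints "name count" lines.
tally() {
    sort | uniq -c | awk '{ print $2, $1 }'
}

# Sample input copied from one of the six-request runs above.
printf '%s\n' server3 server2 server3 server2 server3 server2 | tally
```

Against the live VIP, the loop output can be piped in directly: `for i in $(seq 1 6); do curl -s 172.25.15.100; done | tally`.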

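III. keepalived (configuring DR-mode load balancing)

1. Environment setup

Edit the configuration file and restart the service; keepalived then sets up the DR load balancing automatically.

[root@server1 ~]# yum install keepalived -y # install keepalived

[root@server1 ~]# cd /etc/keepalived/ # enter the configuration directory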

[root@server1 keepalived]# ls

keepalived.conf

[root@server1 keepalived]# vim keepalived.conf # edit the configuration file

[root@server1 keepalived]# cat keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

root@localhost # changed

}

notification_email_from keepalived@localhost # changed

smtp_server 127.0.0.1 # changed

smtp_connect_timeout 30

router_id LVS_DEVEL

vrrp_skip_check_adv_addr

#vrrp_strict # commented out

vrrp_garp_interval 0

vrrp_gna_interval 0

}

vrrp_instance VI_1 {

state MASTER # this machine is the primary director

interface eth0 # network interface to use

virtual_router_id 15 # changed

priority 100 # priority of this director

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

virtual_ipaddress { # virtual IP (VIP)

172.25.15.100

}

}

virtual_server 172.25.15.100 80 { # virtual service on the VIP

delay_loop 6

lb_algo rr

lb_kind DR # direct routing mode

#persistence_timeout 50 # commented out

protocol TCP

real_server 172.25.15.2 80 { # real server (RS) entry

weight 1

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

real_server 172.25.15.3 80 {

weight 1

TCP_CHECK {

connect_timeout 3

nb_get_retry 3

delay_before_retry 3

}

}

}

Delete everything after this point in the default file.

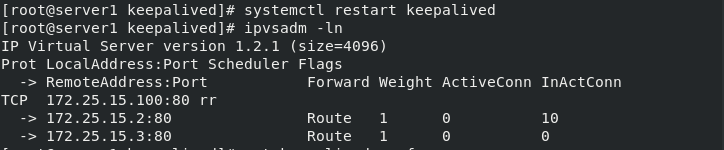
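Each `TCP_CHECK` block in the configuration above asks keepalived to probe the real server's port 80 (every `delay_loop` seconds, with a 3-second `connect_timeout`) and to drop the server from the IPVS table when the connection fails. The function below is a rough stand-in for one such probe, useful for checking a real server by hand; `tcp_check` is a made-up name, it relies on bash's `/dev/tcp` and the coreutils `timeout` command, and keepalived itself does not run it.

```shell
# Succeed if a TCP connection to host:port opens within `secs` seconds.
# $1: host, $2: port, $3: timeout in seconds.
tcp_check() {
    host=$1
    port=$2
    secs=$3
    timeout "$secs" bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null
}

# Example (run on the director):
#   tcp_check 172.25.15.2 80 3 && echo "server2 up" || echo "server2 down"
```

Stopping httpd on one real server and watching `ipvsadm -ln` on the director is a simple way to observe the real health check removing that entry.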

2. Restart the keepalived service to bring up load balancing

[root@server1 keepalived]# ip addr del 172.25.15.100/24 dev eth0 # remove the manually added VIP

[root@server1 keepalived]# ip addr show

[root@server1 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.15.100:80 rr

-> 172.25.15.2:80 Route 1 0 0

-> 172.25.15.3:80 Route 1 0 0

[root@server1 keepalived]# ipvsadm -C # clear the existing IPVS rules

[root@server1 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

[root@server1 keepalived]# systemctl restart keepalived # restart the service

[root@server1 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.15.100:80 rr

-> 172.25.15.2:80 Route 1 0 10

-> 172.25.15.3:80 Route 1 0 0

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done # loop to check that requests are load balanced

server3

server2

server3

server2

server3

server2

[root@foundation15 ~]#

IV. LVS (High Availability)

1. Environment setup

Four virtual machines:

server1

server2

server3

server4

Configure server4:

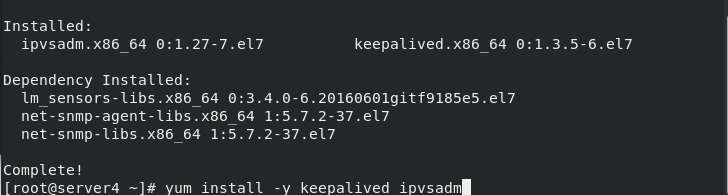

[root@server4 ~]# yum install -y keepalived ipvsadm # install ipvsadm and keepalived

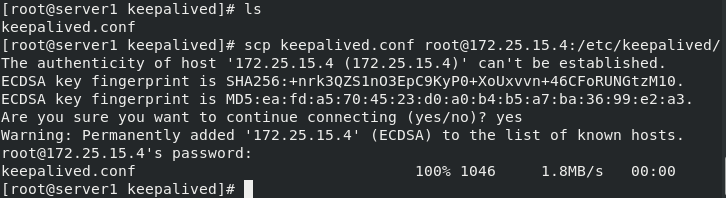

[root@server1 keepalived]# ls

keepalived.conf

[root@server1 keepalived]# scp keepalived.conf root@172.25.15.4:/etc/keepalived/ # copy the keepalived.conf configured on server1 to server4

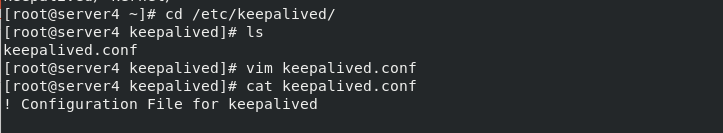

[root@server4 ~]# cd /etc/keepalived/

[root@server4 keepalived]# ls

keepalived.conf

[root@server4 keepalived]# vim keepalived.conf # edit the configuration file

[root@server4 keepalived]# cat keepalived.conf

! Configuration File for keepalived

....

vrrp_instance VI_1 {

state BACKUP # changed to backup

interface eth0

virtual_router_id 15

priority 50 # lower priority than server1

advert_int 1

authentication {

auth_type PASS

auth_pass 1111

}

....

[root@server4 keepalived]# systemctl start keepalived.service # start the service

[root@server4 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.15.100:80 rr

-> 172.25.15.2:80 Route 1 0 0

-> 172.25.15.3:80 Route 1 0 0

[root@server4 keepalived]# ip addr # show the addresses; the VIP is not present

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP group default qlen 1000

link/ether 52:54:00:93:d0:19 brd ff:ff:ff:ff:ff:ff

inet 172.25.15.4/24 brd 172.25.15.255 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::5054:ff:fe93:d019/64 scope link

valid_lft forever preferred_lft forever

[root@server4 keepalived]#
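The only differences between the two directors' files are the `state` and `priority` lines in `vrrp_instance`. As a sketch of that edit, the same change made in vim above can be expressed as a `sed` filter; `to_backup` is a made-up name, and the patterns assume the exact values used on server1 (`MASTER`, `100`).

```shell
# Turn a MASTER vrrp_instance stanza into the BACKUP variant used on server4.
# Reads config text on stdin and writes the edited text to stdout.
to_backup() {
    sed -e 's/state MASTER/state BACKUP/' \
        -e 's/priority 100/priority 50/'
}

# Small sample containing just the relevant lines.
printf 'state MASTER\ninterface eth0\npriority 100\n' | to_backup
```

The `virtual_router_id` and `auth_pass` values must stay identical on both machines, which is why the filter leaves them alone.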

2. Testing

(1) Stop the keepalived service on server1

[root@server1 keepalived]# ipvsadm -ln

IP Virtual Server version 1.2.1 (size=4096)

Prot LocalAddress:Port Scheduler Flags

-> RemoteAddress:Port Forward Weight ActiveConn InActConn

TCP 172.25.15.100:80 rr

-> 172.25.15.2:80 Route 1 0 0

-> 172.25.15.3:80 Route 1 0 0

[root@server1 keepalived]# systemctl stop keepalived.service

[root@server1 keepalived]#

(2) Check the log on server4: after the service on server1 stops, server4 automatically switches to MASTER

[root@server4 keepalived]# cat /var/log/messages

Jul 10 04:39:42 server4 Keepalived_vrrp[23598]: VRRP_Instance(VI_1) Entering MASTER STATE

Jul 10 04:39:42 server4 Keepalived_vrrp[23598]: VRRP_Instance(VI_1) setting protocol VIPs.

[root@server4 keepalived]# ip addr # the VIP now appears in the address list
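Whether a director currently holds the VIP can also be checked in a script instead of by reading the `ip addr` output. The helper below is a minimal sketch; `has_vip` is a made-up name, and the two sample lines are hypothetical output in the one-line format of `ip -o addr show`, not taken from this lab.

```shell
# Succeed if `ip -o addr show` style output on stdin lists the given IPv4 address.
has_vip() {
    grep -qF "inet $1/"
}

# Hypothetical sample: the machine's own address plus the VIP.
sample='2: eth0    inet 172.25.15.4/24 brd 172.25.15.255 scope global eth0
2: eth0    inet 172.25.15.100/32 scope global eth0'

if printf '%s\n' "$sample" | has_vip 172.25.15.100; then
    echo "VIP present"
else
    echo "VIP absent"
fi
```

On a director this would be used as `ip -o addr show dev eth0 | has_vip 172.25.15.100`; after the failover it should succeed on server4 and fail on server1.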

(3) Load balancing is unaffected; the high-availability setup is complete

Access test from the physical host:

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done

server3

server2

server3

server2

server3

server2

[root@foundation15 ~]# for i in {1..6}; do curl 172.25.15.100;done

server3

server2

server3

server2

server3

server2

[root@foundation15 ~]#
