HPA, Helm installation, deploying a Redis cluster, mychart, NFS, and metrics-server

I. HPA Example

1. Pull the image and push it to the registry

[root@server1 ~]# lftp 172.25.15.250

lftp 172.25.15.250:~> cd pub/docs/k8s/

lftp 172.25.15.250:/pub/docs/k8s> get hpa-example.tar

505652224 bytes transferred in 4 seconds (118.75M/s)

lftp 172.25.15.250:/pub/docs/k8s> exit

[root@server1 ~]# docker load -i hpa-example.tar

[root@server1 ~]# docker tag mirrorgooglecontainers/hpa-example:latest reg.westos.org/library/hpa-example:latest

[root@server1 ~]# docker push reg.westos.org/library/hpa-example:latest

2. Run the php-apache server

[root@server4 ~]# mkdir hpa

[root@server4 ~]# cd hpa/

[root@server4 hpa]# ls

[root@server4 hpa]# vim deploy.yaml

[root@server4 hpa]# cat deploy.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: php-apache
spec:
  selector:
    matchLabels:
      run: php-apache
  replicas: 1
  template:
    metadata:
      labels:
        run: php-apache
    spec:
      containers:
      - name: php-apache
        image: library/hpa-example # change this to your own private registry
        ports:
        - containerPort: 80
        resources:
          limits:
            cpu: 500m
          requests:
            cpu: 200m
---
apiVersion: v1
kind: Service
metadata:
  name: php-apache
  labels:
    run: php-apache
spec:
  ports:
  - port: 80
  selector:
    run: php-apache

[root@server4 hpa]# kubectl get pod

[root@server4 hpa]# kubectl apply -f deploy.yaml

[root@server4 hpa]# kubectl get pod

[root@server4 hpa]# kubectl describe svc php-apache

[root@server4 hpa]# curl 10.110.112.153

OK![root@server4 hpa]#

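3. Create the Horizontal Pod Autoscaler

With the php-apache server running, we create a Horizontal Pod Autoscaler (HPA) with the kubectl autoscale command. The command below creates an HPA that controls the Deployment from the previous step and keeps the number of Pod replicas between 1 and 10. Roughly speaking, the HPA increases or decreases the replica count (through the Deployment) to keep the average CPU utilization across all Pods at about 50%. Because each Pod requests 200 millicores of CPU, this corresponds to an average CPU usage of 100 millicores. A change in the replica count can take a few minutes to complete. In short, the HPA adjusts the number of Pods dynamically according to their CPU utilization.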

[root@server4 hpa]# kubectl autoscale deployment php-apache --cpu-percent=50 --min=1 --max=10

horizontalpodautoscaler.autoscaling/php-apache autoscaled

[root@server4 hpa]# kubectl get deployments.apps

[root@server4 hpa]# kubectl get hpa

[root@server4 hpa]# kubectl get hpa

[root@server4 hpa]# kubectl top pod

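**Note that the current CPU utilization is 0% because no requests have been sent to the server yet. (The TARGETS column shows the average CPU utilization across all Pods controlled by the Deployment.)**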

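4. Increase the load

Next, see how the autoscaler reacts to increased load. We start a container that sends queries to the php-apache server in an endless loop.

[root@server4 hpa]# kubectl run -i --tty load-generator --rm --image=busybox --restart=Never -- /bin/sh -c "while sleep 0.01; do wget -q -O- http://php-apache; done" # send an endless stream of requests to the php-apache server

## Open another terminal window

[root@server4 ~]# kubectl get hpa # the CPU load has gone up

Because of the extra requests, CPU utilization has risen to 305% of the requested value, and the Deployment has grown to 7 replicas: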

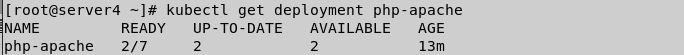

[root@server4 ~]# kubectl get deployment php-apache

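Note: it may take a few minutes for the replica count to stabilize. Because environments differ, the final replica count in your environment may not match the one in this example.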

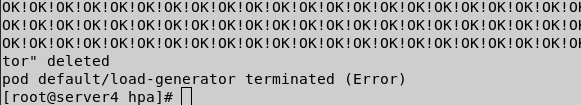

5. Stop the load

In the terminal where the busybox container was created, press <Ctrl> + C to stop generating load.

Then check the load status again (wait a few minutes):

[root@server4 ~]# kubectl get hpa

[root@server4 ~]# kubectl get deployment php-apache

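At this point CPU utilization has dropped to 0, so the HPA automatically scales the replica count back down to 1.

Note: a change in the replica count can take a few minutes to complete.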

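6. Autoscaling on multiple metrics and custom metrics

6.1 Overview

The HPA scaling process:

Collect the recent CPU usage (CPU utilization) of all Pods controlled by the HPA.

Compare it with the CPU target recorded in the scaling condition (CPUUtilization).

Adjust the number of instances, staying within the minimum and maximum replica counts.

Repeat the scaling decision every 30 s (the interval is set by the kube-controller-manager flag --horizontal-pod-autoscaler-sync-period; recent Kubernetes releases default to 15 s).

CPU utilization is computed as CPU usage (the average over the last minute, available directly from the metrics API) divided by the CPU request (the number of CPU cores specified when the container was created). The result is an average that can be read as the share of its requested CPU that each Pod is using on average.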
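The calculation above can be sketched in a few lines of Python. This is only an illustration: the function name `cpu_utilization_percent` and the sample usage values are assumptions, while the 200m request comes from deploy.yaml.

```python
def cpu_utilization_percent(usage_millicores, request_millicores):
    """Average CPU usage across Pods as a percentage of the per-Pod CPU request."""
    if not usage_millicores:
        raise ValueError("need at least one Pod sample")
    average_usage = sum(usage_millicores) / len(usage_millicores)
    return 100 * average_usage / request_millicores


# Each php-apache Pod requests 200m, so an average usage of 100m is 50%.
print(cpu_utilization_percent([100], 200))           # 50.0
# Three Pods with different (made-up) usage still average out to 100m.
print(cpu_utilization_percent([80, 120, 100], 200))  # 50.0
```

Because the denominator is the request and not the limit, utilization can exceed 100%: a single Pod using 610m against a 200m request reports 305%.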

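The HPA scaling algorithm:

Formula: TargetNumOfPods = ceil(sum(CurrentPodsCPUUtilization) / Target)

ceil() returns the smallest integer greater than or equal to its argument.

After each scale-up, the HPA waits 3 minutes before scaling up again; a scale-down has to wait 5 minutes. (These values come from older Kubernetes releases; newer releases use a 5-minute scale-down stabilization window and no fixed scale-up delay by default.)

When the current Pod CPU utilization is close to the target, no scale-up or scale-down is triggered:

Trigger condition: avg(CurrentPodsConsumption) / Target > 1.1 or < 0.9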
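A minimal Python sketch of the formula and the trigger condition, assuming the 1 to 10 replica bounds used earlier. The function name `target_num_of_pods` and the sample inputs (other than the 305% reading from step 4) are made up for illustration; this is not the controller's actual code.

```python
import math

TOLERANCE = 0.1  # no scaling while avg / target stays within 0.9 .. 1.1


def target_num_of_pods(pod_utilizations, target, min_pods=1, max_pods=10):
    """TargetNumOfPods = ceil(sum(utilizations) / target), with the tolerance band."""
    current = len(pod_utilizations)
    ratio = (sum(pod_utilizations) / current) / target
    if abs(ratio - 1.0) <= TOLERANCE:
        return current  # close enough to the target: keep the replica count
    wanted = math.ceil(sum(pod_utilizations) / target)
    return max(min_pods, min(max_pods, wanted))  # clamp to the HPA bounds


print(target_num_of_pods([305], 50))         # 7: one Pod at 305%, target 50%
print(target_num_of_pods([52, 48, 50], 50))  # 3: within tolerance, unchanged
print(target_num_of_pods([0] * 7, 50))       # 1: idle Pods, clamped to the minimum
```

The first call reproduces the replica count of 7 observed under load, and the last one matches the scale-down to 1 after the load generator is stopped.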

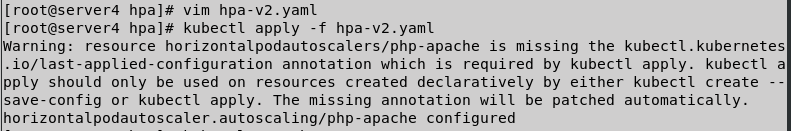

6.2 Write hpa-v2.yaml

[root@server4 hpa]# vim hpa-v2.yaml

apiVersion: autoscaling/v2beta2
kind: HorizontalPodAutoscaler
metadata:
  name: php-apache
spec:
  maxReplicas: 10
  minReplicas: 1
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: php-apache
  metrics:
  - type: Resource
    resource:
      name: cpu
      target:
        averageUtilization: 60
        type: Utilization
  - type: Resource
    resource:
      name: memory
      target:
        averageValue: 50Mi
        type: AverageValue

[root@server4 hpa]# kubectl apply -f hpa-v2.yaml

[root@server4 hpa]# kubectl get hpa

NAME REFERENCE TARGETS MINPODS MAXPODS REPLICAS AGE

php-apache Deployment/php-apache 19161088/50Mi, 0%/60% 1 10 1 55m

[root@server4 hpa]# kubectl top pod
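The memory figure in the TARGETS column above is shown in bytes while the target is written as 50Mi, which makes the two hard to compare at a glance. A small Python sketch of the conversion (the helper name `bytes_to_mib` is an assumption made for this example):

```python
MIB = 1024 * 1024  # bytes per mebibyte (the "Mi" suffix)


def bytes_to_mib(value_bytes):
    """Convert a byte count to mebibytes."""
    return value_bytes / MIB


current = 19161088  # average memory per Pod, from the TARGETS column
target = 50 * MIB   # averageValue: 50Mi in hpa-v2.yaml

print(round(bytes_to_mib(current), 2))  # 18.27
print(round(current / target, 3))       # 0.365
```

At roughly 18.27Mi against a 50Mi target, memory is well below the target, and CPU is at 0% of 60%, so the HPA keeps the Deployment at the minimum of 1 replica.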

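II. Helm

1. Introduction

Helm is the package manager for Kubernetes applications. It is mainly used to manage charts, much like yum on Linux.

A Helm chart is a set of YAML files that packages a Kubernetes-native application. Some of the application's metadata can be customized at deployment time, which makes the application easier to distribute.

For publishers, Helm can package an application, manage its dependencies and versions, and publish it to a chart repository.

For users, Helm removes the need to write complex deployment manifests: applications can be searched for, installed, upgraded, rolled back, and uninstalled on Kubernetes in a simple way.

2. Install Helm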

[root@server4 ~]# mkdir helm

[root@server4 ~]# cd helm/

[root@server4 helm]# ls

[root@server4 helm]# lftp 172.25.15.250

lftp 172.25.15.250:~> cd pub/docs/k8s

cd ok, cwd=/pub/docs/k8s

lftp 172.25.15.250:/pub/docs/k8s> get helm-v3.4.1-linux-amd64.tar.gz

13323294 bytes transferred

lftp 172.25.15.250:/pub/docs/k8s> exit

[root@server4 helm]# ls

helm-v3.4.1-linux-amd64.tar.gz

[root@server4 helm]# tar zxf helm-v3.4.1-linux-amd64.tar.gz

[root@server4 helm]# cd linux-amd64/

[root@server4 linux-amd64]# cp helm /usr/local/bin/

[root@server4 ~]# echo "source <(helm completion bash)" >> ~/.bashrc # enable helm command completion

[root@server4 ~]# source .bashrc # reload the shell configuration

[root@server4 ~]# helm search hub wordpress # search the official Helm Hub chart repository

[root@server4 ~]# helm repo add bitnami https://charts.bitnami.com/bitnami # add a repository for testing

[root@server2 ~]# helm repo list

3. Upload the image

Create a project named bitnami in the Harbor registry.

[root@server1 ~]# docker pull bitnami/redis-cluster:6.2.5-debian-10-r0

[root@server1 ~]# docker tag bitnami/redis-cluster:6.2.5-debian-10-r0 reg.westos.org/bitnami/redis-cluster:6.2.5-debian-10-r0

[root@server1 ~]# docker push reg.westos.org/bitnami/redis-cluster:6.2.5-debian-10-r0

4. Deploy the Redis cluster

[root@server4 ~]# helm pull bitnami/redis-cluster

[root@server4 ~]# ls

calico ingress mysql-xtrabackup.tar roles

configmap ingress-nginx-v0.48.1.tar nfs-client schedu

dashboard kube-flannel.yml nfs-client-provisioner-v4.0.0.tar statefulset

deploy.yaml limit pod volumes

helm metallb psp

hpa metallb-v0.10.2.tar redis-cluster-6.3.2.tgz

[root@server4 ~]# mv redis-cluster-6.3.2.tgz helm/

[root@server4 ~]# cd helm/

[root@server4 helm]# ls

helm-v3.4.1-linux-amd64.tar.gz linux-amd64 redis-cluster-6.3.2.tgz

[root@server4 helm]# rm -rf linux-amd64/ helm-v3.4.1-linux-amd64.tar.gz

[root@server4 helm]# ls

redis-cluster-6.3.2.tgz

[root@server4 helm]# tar zxf redis-cluster-6.3.2.tgz

[root@server4 helm]# ls

redis-cluster redis-cluster-6.3.2.tgz

[root@server4 helm]# cd redis-cluster/

[root@server4 redis-cluster]# ls

Chart.lock charts Chart.yaml img README.md templates values.yaml

[root@server4 redis-cluster]# vim values.yaml # point the image at your own registry

[root@server4 redis-cluster]# helm install redis-cluster .

[root@server4 redis-cluster]# kubectl get pod

III. Deploying the mychart application

1. Write the application deployment information

[root@server4 ~]# cd helm/

[root@server4 helm]# ls

redis-cluster redis-cluster-6.3.2.tgz

[root@server4 helm]# helm create mychart

Creating mychart

[root@server4 helm]# cd mychart/

[root@server4 mychart]# ls

charts Chart.yaml templates values.yaml

[root@server4 mychart]# vim values.yaml

[root@server4 mychart]# helm lint . # check that dependencies and templates are configured correctly

==> Linting .

[INFO] Chart.yaml: icon is recommended

1 chart(s) linted, 0 chart(s) failed

[root@server4 mychart]# cd ..

[root@server4 helm]# ls

mychart redis-cluster redis-cluster-6.3.2.tgz

[root@server4 helm]# helm package mychart/ # package the application

Successfully packaged chart and saved it to: /root/helm/mychart-0.1.0.tgz

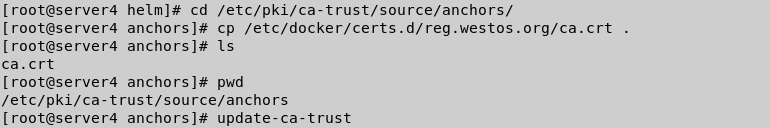

2. Set up a local chart repository

## Certificate trust

[root@server4 helm]# cd /etc/pki/ca-trust/source/anchors/

[root@server4 anchors]# cp /etc/docker/certs.d/reg.westos.org/ca.crt .

[root@server4 anchors]# ls

ca.crt

[root@server4 anchors]# pwd

/etc/pki/ca-trust/source/anchors

[root@server4 anchors]# update-ca-trust

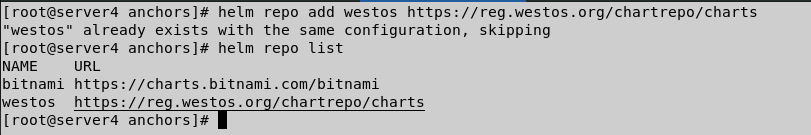

[root@server4 anchors]# helm repo add westos https://reg.westos.org/chartrepo/charts

[root@server4 anchors]# helm repo list

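3. Install the helm-push plugin

$ helm plugin install https://github.com/chartmuseum/helm-push # online installation

Access to the external network is too slow, so we install offline.

Offline installation

$ helm env # find the plugin directory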

$ mkdir ~/.local/share/helm/plugins/push

$ tar zxf helm-push_0.8.1_linux_amd64.tar.gz -C ~/.local/share/helm/plugins/push

$ helm push --help

[root@server4 ~]# cd helm/

[root@server4 helm]# ls

mychart mychart-0.1.0.tgz redis-cluster redis-cluster-6.3.2.tgz

[root@server4 helm]# lftp 172.25.15.250

lftp 172.25.15.250:~> cd pub/docs/k8s/

lftp 172.25.15.250:/pub/docs/k8s> get helm-push_0.9.0_linux_amd64.tar.gz

8943728 bytes transferred

lftp 172.25.15.250:/pub/docs/k8s> exit

[root@server4 helm]# ls

helm-push_0.9.0_linux_amd64.tar.gz redis-cluster

mychart redis-cluster-6.3.2.tgz

mychart-0.1.0.tgz

[root@server4 helm]# helm env

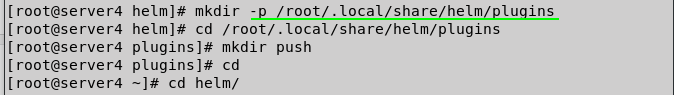

[root@server4 helm]# mkdir -p /root/.local/share/helm/plugins

[root@server4 helm]# cd /root/.local/share/helm/plugins

[root@server4 plugins]# mkdir push

[root@server4 plugins]# cd

[root@server4 ~]# cd helm/

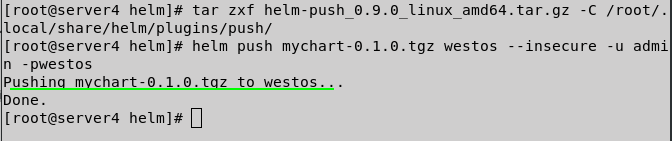

[root@server4 helm]# tar zxf helm-push_0.9.0_linux_amd64.tar.gz -C /root/.local/share/helm/plugins/push/

[root@server4 helm]# helm push mychart-0.1.0.tgz westos --insecure -u admin -pwestos # upload to the repository

Pushing mychart-0.1.0.tgz to westos...

Done.

4. Deploy the mychart application to the k8s cluster

[root@server4 helm]# helm repo update # refresh the repository index

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "westos" chart repository

^C

[root@server4 helm]# helm search repo mychart # look up the chart

NAME CHART VERSION APP VERSION DESCRIPTION

westos/mychart 0.1.0 1.16.0 A Helm chart for Kubernetes

[root@server4 helm]# helm install mychart westos/mychart # install the release

[root@server4 helm]# kubectl get all # check the deployment status

service/mychart ClusterIP 10.97.108.48 <none> 80/TCP 2m55s

[root@server4 helm]# curl 10.97.108.48 # test

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

5. Upgrade

[root@server4 ~]# kubectl get pod # list the pods

NAME READY STATUS RESTARTS AGE

mychart-6675bd6ffd-9w2xd 1/1 Running 1 16h

[root@server4 ~]# cd helm/mychart/

[root@server4 mychart]# ls

charts Chart.yaml templates values.yaml

[root@server4 mychart]# vim values.yaml

[root@server4 mychart]# vim Chart.yaml

[root@server4 mychart]# vim values.yaml

[root@server4 mychart]# cd ..

[root@server4 helm]# helm lint mychart # check that dependencies and templates are configured correctly

==> Linting mychart

[INFO] Chart.yaml: icon is recommended

1 chart(s) linted, 0 chart(s) failed

[root@server4 helm]# helm package mychart # package the chart

Successfully packaged chart and saved it to: /root/helm/mychart-0.2.0.tgz

[root@server4 helm]# ls

helm-push_0.9.0_linux_amd64.tar.gz mychart-0.2.0.tgz

mychart redis-cluster

mychart-0.1.0.tgz redis-cluster-6.3.2.tgz

[root@server4 helm]# helm push mychart-0.2.0.tgz westos --insecure -u admin -p westos # upload to the repository

Pushing mychart-0.2.0.tgz to westos...

Done.

[root@server4 helm]# helm repo update # refresh the repository index

Hang tight while we grab the latest from your chart repositories...

...Successfully got an update from the "westos" chart repository

^C

[root@server4 helm]# helm search repo mychart # look up the chart

NAME CHART VERSION APP VERSION DESCRIPTION

westos/mychart 0.2.0 v2 A Helm chart for Kubernetes

[root@server4 helm]# helm search repo mychart -l # list all available versions

NAME CHART VERSION APP VERSION DESCRIPTION

westos/mychart 0.2.0 v2 A Helm chart for Kubernetes

westos/mychart 0.1.0 1.16.0 A Helm chart for Kubernetes

[root@server4 mychart]# helm upgrade mychart westos/mychart # upgrade the release

[root@server4 mychart]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 10d

mychart ClusterIP 10.97.108.48 <none> 80/TCP 16h

php-apache ClusterIP 10.110.112.153 <none> 80/TCP 22h

[root@server4 mychart]# curl 10.97.108.48

Hello MyApp | Version: v2 | <a href="hostname.html">Pod Name</a>

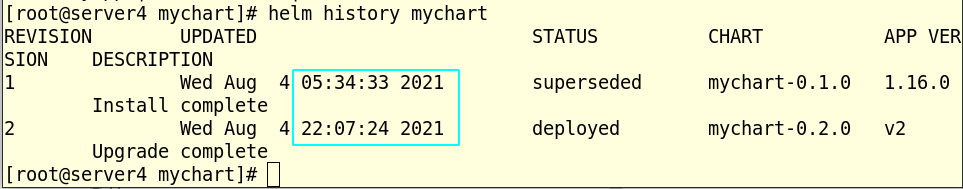

[root@server4 mychart]# helm history mychart

6. Rollback

[root@server4 mychart]# helm rollback mychart 1 # roll back to revision 1

Rollback was a success! Happy Helming!

[root@server4 mychart]# helm history mychart

REVISION UPDATED STATUS CHART APP VERSION DESCRIPTION

1 Wed Aug 4 05:34:33 2021 superseded mychart-0.1.0 1.16.0 Install complete

2 Wed Aug 4 22:07:24 2021 superseded mychart-0.2.0 v2 Upgrade complete

3 Wed Aug 4 22:13:38 2021 deployed mychart-0.1.0 1.16.0 Rollback to 1

[root@server4 mychart]# curl 10.97.108.48

Hello MyApp | Version: v1 | <a href="hostname.html">Pod Name</a>

[root@server4 mychart]#
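The history output above shows that a rollback does not delete revision 2: it appends revision 3, which reuses the chart from revision 1. The following Python sketch is a toy model of that bookkeeping only. It is not Helm's implementation, and the `rollback` function and the dictionary layout are assumptions made for illustration.

```python
def rollback(history, to_revision):
    """Append a new revision that reuses the chart of an earlier revision."""
    source = next(r for r in history if r["revision"] == to_revision)
    for r in history:
        if r["status"] == "deployed":
            r["status"] = "superseded"  # the old release is kept, not removed
    history.append({
        "revision": history[-1]["revision"] + 1,
        "chart": source["chart"],
        "status": "deployed",
        "description": f"Rollback to {to_revision}",
    })
    return history


# State before the rollback, taken from the helm history output in this section.
history = [
    {"revision": 1, "chart": "mychart-0.1.0", "status": "superseded",
     "description": "Install complete"},
    {"revision": 2, "chart": "mychart-0.2.0", "status": "deployed",
     "description": "Upgrade complete"},
]

rollback(history, 1)
for r in history:
    print(r["revision"], r["status"], r["chart"], r["description"])
```

Since every revision stays in the list, it remains possible to roll forward again by targeting revision 2.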

IV. Deploying NFS

1. Download the image and push it to the registry

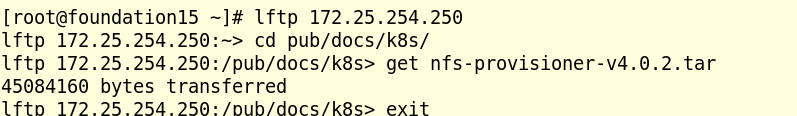

[root@foundation15 ~]# lftp 172.25.254.250

lftp 172.25.254.250:~> cd pub/docs/k8s/

lftp 172.25.254.250:/pub/docs/k8s> get nfs-provisioner-v4.0.2.tar # download

45084160 bytes transferred

lftp 172.25.254.250:/pub/docs/k8s> exit

[root@foundation15 ~]# scp nfs-provisioner-v4.0.2.tar 172.25.15.1:

root@172.25.15.1's password:

nfs-provisioner-v4.0.2.tar 100% 43MB 97.9MB/s 00:00

[root@foundation15 ~]#

[root@server1 ~]# docker load -i nfs-provisioner-v4.0.2.tar # load the image

[root@server1 ~]# docker push reg.westos.org/sig-storage/nfs-subdir-external-provisioner

2. Write the NFS deployment configuration

[root@server4 helm]# helm repo list # list the repositories

[root@server4 helm]# helm repo add nfs-subdir-external-provisioner https://kubernetes-sigs.github.io/nfs-subdir-external-provisioner/ # add the repository

"nfs-subdir-external-provisioner" has been added to your repositories

[root@server4 helm]# helm repo list

[root@server4 helm]# helm pull nfs-subdir-external-provisioner/nfs-subdir-external-provisioner # download the chart

[root@server4 helm]# ls

[root@server4 helm]# tar zxf nfs-subdir-external-provisioner-4.0.13.tgz

[root@server4 helm]# cd nfs-subdir-external-provisioner/

[root@server4 nfs-subdir-external-provisioner]# ls

Chart.yaml ci README.md templates values.yaml

[root@server4 nfs-subdir-external-provisioner]# vim values.yaml

repository: reg.westos.org/sig-storage/nfs-subdir-external-provisioner
tag: v4.0.2
pullPolicy: IfNotPresent
imagePullSecrets: []
nfs:
  server: 172.25.15.1
  path: /mnt/nfs
  mountOptions:
defaultClass: true
archiveOnDelete: false

[root@server4 nfs-subdir-external-provisioner]# kubectl create namespace nfs-provisioner

namespace/nfs-provisioner created

## Clean up the environment

[root@server4 nfs-subdir-external-provisioner]# helm list --all-namespaces

NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION

mychart default 3 2021-08-04 22:13:38.868761606 -0400 EDT deployed mychart-0.1.0 1.16.0

[root@server4 nfs-subdir-external-provisioner]# helm uninstall mychart

release "mychart" uninstalled

[root@server4 nfs-subdir-external-provisioner]# helm list --all-namespaces

NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION

3. Deploy the NFS provisioner to the k8s cluster

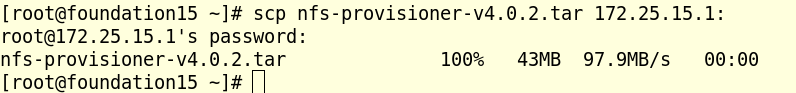

[root@server4 nfs-subdir-external-provisioner]# helm install nfs-subdir-external-provisioner . -n nfs-provisioner # install the release

NAME: nfs-subdir-external-provisioner

LAST DEPLOYED: Wed Aug 4 23:40:24 2021

NAMESPACE: nfs-provisioner

STATUS: deployed

REVISION: 1

TEST SUITE: None

[root@server4 nfs-subdir-external-provisioner]# helm list -n nfs-provisioner

[root@server4 nfs-subdir-external-provisioner]# kubectl get sc

[root@server4 nfs-subdir-external-provisioner]# kubectl get pod -n kube-system

[root@server4 nfs-subdir-external-provisioner]# kubectl get all -n nfs-provisioner

[root@server4 nfs-subdir-external-provisioner]# vim pvc.yaml

[root@server4 nfs-subdir-external-provisioner]# cat pvc.yaml

kind: PersistentVolumeClaim
apiVersion: v1
metadata:
  name: test-claim
spec:
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 1Mi

[root@server4 nfs-subdir-external-provisioner]# kubectl apply -f pvc.yaml

persistentvolumeclaim/test-claim created

[root@server4 nfs-subdir-external-provisioner]# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

pvc-2c936f9d-cb23-4e52-9a18-2c241cb9a5f7 1Mi RWX Delete Bound default/test-claim nfs-client 5s

[root@server4 nfs-subdir-external-provisioner]# kubectl get pvc

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

test-claim Bound pvc-2c936f9d-cb23-4e52-9a18-2c241cb9a5f7 1Mi RWX nfs-client 12s

[root@server4 nfs-subdir-external-provisioner]# kubectl delete -f pvc.yaml --force

warning: Immediate deletion does not wait for confirmation that the running resource has been terminated. The resource may continue to run on the cluster indefinitely.

persistentvolumeclaim "test-claim" force deleted

V. Deploying metrics-server

1. Download the image and push it to the registry

[root@server1 ~]# docker pull bitnami/metrics-server:0.5.0-debian-10-r59

0.5.0-debian-10-r59: Pulling from bitnami/metrics-server

2e4c6c15aa52: Pull complete

a894671f5dca: Pull complete

049ba86a208b: Pull complete

5d7966168fc3: Pull complete

9804d459b822: Pull complete

a416b953cdc3: Pull complete

Digest: sha256:118158c95578aa18b42d49d60290328c23cbdb8252b812d4a7c142d46ecabcf6

Status: Downloaded newer image for bitnami/metrics-server:0.5.0-debian-10-r59

docker.io/bitnami/metrics-server:0.5.0-debian-10-r59

[root@server1 ~]# docker tag docker.io/bitnami/metrics-server:0.5.0-debian-10-r59 reg.westos.org/bitnami/metrics-server:0.5.0-debian-10-r59

[root@server1 ~]# docker push reg.westos.org/bitnami/metrics-server:0.5.0-debian-10-r59

The push refers to repository [reg.westos.org/bitnami/metrics-server]

c30a7821a361: Pushed

5ecebc0f05f5: Pushed

6e8fc12b0093: Pushed

7f6d39b64fd8: Pushed

ade06b968aa1: Pushed

209a01d06165: Pushed

0.5.0-debian-10-r59: digest: sha256:118158c95578aa18b42d49d60290328c23cbdb8252b812d4a7c142d46ecabcf6 size: 1578

[root@server1 ~]#

2. Clean up the environment and deploy

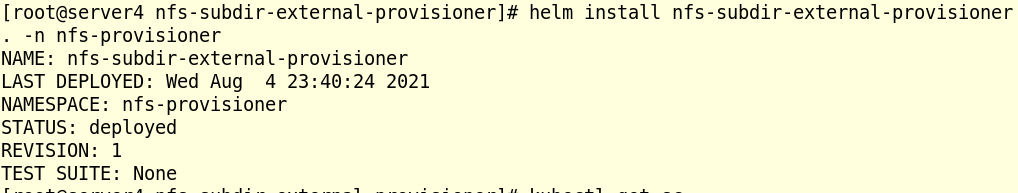

[root@server4 helm]# helm search repo metrics-server

NAME CHART VERSION APP VERSION DESCRIPTION

bitnami/metrics-server 5.9.2 0.5.0 Metrics Server is a cluster-wide aggregator of ...

[root@server4 helm]# helm pull bitnami/metrics-server

[root@server4 helm]# ls

[root@server4 helm]# tar zxf metrics-server-5.9.2.tgz

[root@server4 helm]# ls

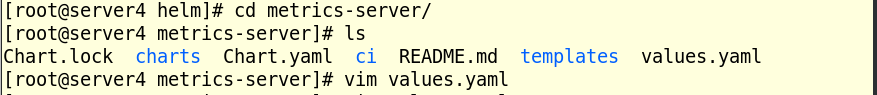

[root@server4 helm]# cd metrics-server/

[root@server4 metrics-server]# ls

Chart.lock charts Chart.yaml ci README.md templates values.yaml

[root@server4 metrics-server]# vim values.yaml

## Clean up the environment: metrics-server was previously deployed from a YAML file

[root@server4 ~]# cd metrics-server/

[root@server4 metrics-server]# ls

components.yaml

[root@server4 metrics-server]# kubectl delete -f components.yaml

[root@server4 metrics-server]# ls

Chart.lock charts Chart.yaml ci README.md templates values.yaml

[root@server4 metrics-server]# kubectl create namespace metrics-server

namespace/metrics-server created

[root@server4 metrics-server]# vim values.yaml

create: true

[root@server4 metrics-server]# helm install metrics-server . -n metrics-server

NAME: metrics-server

LAST DEPLOYED: Thu Aug 5 03:29:09 2021

NAMESPACE: metrics-server

STATUS: deployed

REVISION: 1

TEST SUITE: None

NOTES:

** Please be patient while the chart is being deployed **

The metric server has been deployed.

In a few minutes you should be able to list metrics using the following

command:

kubectl get --raw "/apis/metrics.k8s.io/v1beta1/nodes"

[root@server4 metrics-server]#

3. Deployment complete

[root@server4 metrics-server]# helm list -n metrics-server

NAME NAMESPACE REVISION UPDATED STATUS CHART APP VERSION

metrics-server metrics-server 1 2021-08-05 03:29:09.799526572 -0400 EDT deployed metrics-server-5.9.2 0.5.0

[root@server4 metrics-server]# kubectl -n metrics-server get pod

NAME READY STATUS RESTARTS AGE

metrics-server-6b4db5d56b-l6gq4 1/1 Running 0 7m11s

[root@server4 metrics-server]# kubectl -n metrics-server get all

NAME READY STATUS RESTARTS AGE

pod/metrics-server-6b4db5d56b-l6gq4 1/1 Running 0 7m15s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

service/metrics-server ClusterIP 10.101.158.139 <none> 443/TCP 7m15s

NAME READY UP-TO-DATE AVAILABLE AGE

deployment.apps/metrics-server 1/1 1 1 7m15s

NAME DESIRED CURRENT READY AGE

replicaset.apps/metrics-server-6b4db5d56b 1 1 1 7m15s

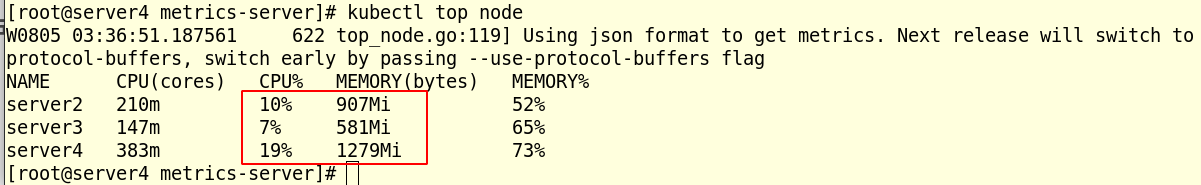

[root@server4 metrics-server]# kubectl top node # show CPU and memory usage for each cluster node

W0805 03:36:51.187561 622 top_node.go:119] Using json format to get metrics. Next release will switch to protocol-buffers, switch early by passing --use-protocol-buffers flag

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

server2 210m 10% 907Mi 52%

server3 147m 7% 581Mi 65%

server4 383m 19% 1279Mi 73%
