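I dug up my notes from some old code I wrote for 零基础入门NLP (an introduction to NLP for complete beginners), where I used machine learning algorithms to make predictions. The dataset for this task comes in CSV format, and the code for reading the file is: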

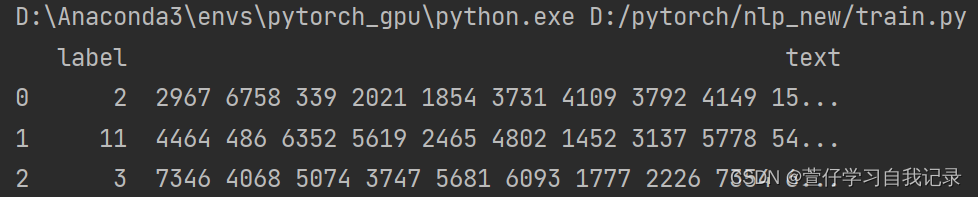

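    df = pd.read_csv(file_path, sep='\t')

The file is tab-separated and contains two columns, label and text. The official site has already processed the text so that it cannot be labeled by hand. The extracted data is shown in the screenshot.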
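To make the reading step concrete, here is a minimal sketch that parses a few made-up rows from memory instead of the real file. The sample rows, the helper name load_dataset, and the assumption that the processed text looks like space-separated number tokens are all mine, not taken from the official data.

```python
import io

import pandas as pd

# Invented rows standing in for the real file: a header line, then
# tab-separated label and text columns (the token format is an assumption).
SAMPLE_TSV = (
    "label\ttext\n"
    "2\t2967 6758 339\n"
    "11\t4464 486 6352\n"
    "3\t7346 4068\n"
)


def load_dataset(source):
    """Read a tab-separated file (path or file-like object) into a DataFrame."""
    return pd.read_csv(source, sep="\t")


df = load_dataset(io.StringIO(SAMPLE_TSV))
print(df.columns.tolist())         # column names found in the header
print(df.shape)                    # (number of rows, number of columns)
print(df["label"].value_counts())  # how many rows each label has
```

Passing a real path such as './train_set.csv' to the same helper should work the same way, provided the file really is tab-separated.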

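For this run I tried several algorithms, random forest among them, for training and prediction. The dataset has about 200,000 rows and my computer does not have enough memory for it, so I first split the training set into 10 parts and trained on just one of them.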
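As a side note, the same memory problem can also be approached without intermediate files by letting pandas read the file in chunks. The following is only a sketch under assumptions: the helper name iter_parts and the 25 made-up rows are hypothetical, and I did not use this approach in the original run.

```python
import io

import pandas as pd


def iter_parts(source, rows_per_part, sep="\t"):
    """Yield DataFrames of at most rows_per_part rows, one chunk at a time."""
    # With chunksize set, read_csv returns an iterator instead of one big frame,
    # so only the current chunk has to fit in memory.
    for chunk in pd.read_csv(source, sep=sep, chunksize=rows_per_part):
        yield chunk


# 25 invented rows in the same label/text layout as the competition file.
lines = ["label\ttext"] + [f"{i % 3}\t{i + 10} {i + 11}" for i in range(25)]
sample = "\n".join(lines) + "\n"

sizes = [len(part) for part in iter_parts(io.StringIO(sample), rows_per_part=10)]
print(sizes)  # [10, 10, 5]
```

For the real training set, a rows_per_part of 20,000 would correspond to one tenth of roughly 200,000 rows. What I actually did was write the ten parts to disk.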

Here is the splitting code:

    import pandas as pd

    def split_csv_file(filename, num_files):
        # The file is tab-separated, so read and write it with sep='\t'
        df = pd.read_csv(filename, sep='\t')

        # Rows per output file, rounded up so that no rows are dropped
        rows_per_file = len(df) // num_files
        if len(df) % num_files != 0:
            rows_per_file += 1

        for i in range(num_files):
            start_row = i * rows_per_file
            end_row = min(start_row + rows_per_file, len(df))
            df_subset = df.iloc[start_row:end_row]

            output_filename = f'split_{i + 1}.csv'
            df_subset.to_csv(output_filename, sep='\t', index=False)
            print(f'Saved {output_filename}')

    split_csv_file('./train_set.csv', 10)

Random forest algorithm

    import pandas as pd
    from sklearn.ensemble import RandomForestClassifier
    from sklearn.feature_extraction.text import CountVectorizer
    from sklearn.metrics import accuracy_score
    from sklearn.model_selection import train_test_split

    # Load one of the ten parts produced by the splitting step
    df = pd.read_csv('./split_1.csv', sep='\t')
    print(df.head(3))

    # Hold out 30% of the rows for evaluation
    X_train, X_test, y_train, y_test = train_test_split(df['text'], df['label'], test_size=0.3, random_state=42)

    # Bag-of-words features; the vocabulary is fitted on the training split only
    vectorizer = CountVectorizer()
    X_train = vectorizer.fit_transform(X_train)
    X_test = vectorizer.transform(X_test)

    # Train the random forest and predict on the held-out rows
    classifier = RandomForestClassifier()
    classifier.fit(X_train, y_train)
    y_pred = classifier.predict(X_test)

    accuracy = accuracy_score(y_test, y_pred)
    print(f'Accuracy: {accuracy:.2f}')
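Since random forest was only one of the algorithms I tried, the comparison can be sketched as a loop over several scikit-learn classifiers that share the same bag-of-words features. This is a sketch under assumptions: the toy corpus is invented, the helper name compare_classifiers is hypothetical, and LogisticRegression and MultinomialNB are stand-ins I picked for illustration, not a record of which other algorithms were actually used.

```python
import random

from sklearn.ensemble import RandomForestClassifier
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import MultinomialNB


def compare_classifiers(texts, labels, classifiers):
    """Fit each classifier on the same count features and return its accuracy."""
    X_train_txt, X_test_txt, y_train, y_test = train_test_split(
        texts, labels, test_size=0.3, random_state=42, stratify=labels
    )
    vectorizer = CountVectorizer()
    X_train = vectorizer.fit_transform(X_train_txt)  # vocabulary from training data only
    X_test = vectorizer.transform(X_test_txt)

    scores = {}
    for name, clf in classifiers.items():
        clf.fit(X_train, y_train)
        scores[name] = accuracy_score(y_test, clf.predict(X_test))
    return scores


# Invented toy corpus: two-digit tokens, because CountVectorizer's default
# token pattern skips single-character tokens.
rng = random.Random(0)


def make_text(low, high, n_tokens=8):
    return " ".join(str(rng.randint(low, high)) for _ in range(n_tokens))


texts = [make_text(10, 19) for _ in range(30)] + [make_text(20, 29) for _ in range(30)]
labels = [0] * 30 + [1] * 30

scores = compare_classifiers(
    texts,
    labels,
    {
        "random_forest": RandomForestClassifier(random_state=0),
        "logistic_regression": LogisticRegression(max_iter=1000),
        "naive_bayes": MultinomialNB(),
    },
)
for name, score in scores.items():
    print(f"{name}: {score:.2f}")
```

On the real data, texts and labels would be df['text'] and df['label'] from one of the split files. The toy scores say nothing about how these models rank on the actual task.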
