大数据系列之运维(自主搭建的大数据平台)

(4)Hive运维

- 启动 Hvie 客户端,通过 Hive 查看 hadoop 所有文件路径

[root@master apache-hive-2.1.1-bin]# bin/hive

hive> dfs -ls /;

Found 6 items

drwxr-xr-x - root supergroup 0 2020-03-29 22:12 /1daoyun

drwxr-xr-x - root supergroup 0 2020-03-29 21:54 /app

drwxr-xr-x - root supergroup 0 2020-03-30 21:01 /hbase

drwxr-xr-x - root supergroup 0 2020-03-29 22:32 /output

drwxrwxr-x - root supergroup 0 2020-03-29 22:04 /tmp

drwxr-xr-x - root supergroup 0 2020-03-29 22:04 /user

还有一种是

hive> dfs -ls;

区别是前一个查看的是HDFS的根目录下所有文件路径,后一个查看的是HDFS下所在用户的所有文件路径。

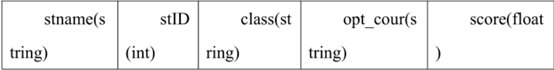

- 使用 Hive 工具来创建数据表 xd_phy_course,将 phy_course_xd.txt 导入到该表中,其中 xd_phy_course 表的数据结构如下表所示。导入完成后,通过hive 查询数据表 xd_phy_course 中数据在 HDFS 所处的文件位置列表信息。

hive> create table xd_phy_course (stname string,stID int,class string,opt_cour string) row format delimited fields terminated by '\t' lines terminated by '\n' stored as textfile;

OK

Time taken: 3.45 seconds

hive> load data local inpath'/root/tiku/Hive/phy_course_xd.txt' into table xd_phy_course;

Loading data to table default.xd_phy_course

OK

Time taken: 1.442 seconds

hive> dfs -ls /user/hive_remote/warehouse;

Found 1 items

drwxrwxr-x - root supergroup 0 2020-03-30 22:41 /user/hive_remote/warehouse/xd_phy_course

- 使用 Hive 工具来创建数据表 xd_phy_course,并定义该表为外部表,外部存储位置为/1daoyun/data/hive,将 phy_course_xd.txt 导入到该表中,其中xd_phy_course 表的数据结构如下表所示。导入完成后,在 hive 中查询数据表 xd_phy_course 的数据结构信息。

hive> create external table xd_phy_course (stname string,stID int,class string,opt_cour string) row format delimited fields terminated by '\t' lines terminated by '\n' location '/1daoyun/data/hive';

OK

Time taken: 0.211 seconds

hive> load data local inpath'/root/tiku/Hive/phy_course_xd.txt' into table xd_phy_course;

Loading data to table default.xd_phy_course

OK

Time taken: 0.577 seconds

hive> describe xd_phy_course;

OK

stname string

stid int

class string

opt_cour string

Time taken: 0.114 seconds, Fetched: 4 row(s)

- 使用 Hive 工具来查找出 phy_course_xd.txt 文件中某高校 Software_1403 班级报名选修 volleyball 的成员所有信息,其中 phy_course_xd.txt 文件数据结构如下表所示,选修科目字段为 opt_cour,班级字段为 class。

hive> create table phy_course_xd (stname string,stID int,class string,opt_cour string) row format delimited fields terminated by '\t' lines terminated by '\n';

OK

Time taken: 0.177 seconds

hive> load data local inpath'/root/tiku/Hive/phy_course_xd.txt' into table phy_course_xd;

Loading data to table default.phy_course_xd

OK

Time taken: 0.537 seconds

hive> select * from phy_course_xd where class='Software_1403' and opt_cour='volleyball';

OK

student409 10120408 Software_1403 volleyball

student411 10120410 Software_1403 volleyball

student413 10120412 Software_1403 volleyball

student419 10120418 Software_1403 volleyball

student421 10120420 Software_1403 volleyball

student422 10120421 Software_1403 volleyball

student424 10120423 Software_1403 volleyball

student432 10120431 Software_1403 volleyball

student438 10120437 Software_1403 volleyball

student447 10120446 Software_1403 volleyball

Time taken: 2.072 seconds, Fetched: 10 row(s)

- 使用 Hive 工具来统计 phy_course_xd.txt 文件中某高校报名选修各个体育科目的总人数,其中 phy_course_xd.txt 文件数据结构如下表所示,选修科目字段为 opt_cour,将统计的结果导入到表 phy_opt_count 中,通过 SELECT语句查询表 phy_opt_count 内容。

hive> create table phy_course_xd (stname string,stID int,class string,opt_cour string) row format delimited fields terminated by '\t' lines terminated by '\n';

OK

Time taken: 0.177 seconds

hive> load data local inpath'/root/tiku/Hive/phy_course_xd.txt' into table phy_course_xd;

Loading data to table default.phy_course_xd

OK

Time taken: 0.537 seconds

hive> create table phy_opt_count (opt_cour string,cour_count int) row format delimited fields terminated by '\t' lines terminated by '\n';

OK

Time taken: 0.152 seconds

hive> insert overwrite table phy_opt_count select phy_course_xd.opt_cour,count(distinct phy_course_xd.stID) from phy_course_xd group by phy_course_xd.opt_cour;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Query ID = root_20200330231614_ff660b65-5065-439b-9661-6ecb9bb5f4c9

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks not specified. Estimated from input data size: 1

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

Starting Job = job_1585573247854_0001, Tracking URL = http://master:18088/proxy/application_1585573247854_0001/

Kill Command = /usr/hadoop/hadoop-2.7.3/bin/hadoop job -kill job_1585573247854_0001

Hadoop job information for Stage-1: number of mappers: 1; number of reducers: 1

2020-03-30 23:16:37,906 Stage-1 map = 0%, reduce = 0%

2020-03-30 23:16:54,728 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 8.5 sec

2020-03-30 23:17:12,782 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 16.93 sec

MapReduce Total cumulative CPU time: 16 seconds 930 msec

Ended Job = job_1585573247854_0001

Loading data to table default.phy_opt_count

MapReduce Jobs Launched:

Stage-Stage-1: Map: 1 Reduce: 1 Cumulative CPU: 16.93 sec HDFS Read: 101059 HDFS Write: 216 SUCCESS

Total MapReduce CPU Time Spent: 16 seconds 930 msec

OK

Time taken: 61.419 seconds

hive> select * from phy_opt_count;

OK

badminton 234

basketball 224

football 206

gymnastics 220

opt_cour 0

swimming 234

table tennis 277

taekwondo 222

tennis 223

volleyball 209

Time taken: 0.156 seconds, Fetched: 10 row(s)

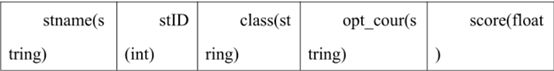

- 使用 Hive 工具来查找出 phy_course_score_xd.txt 文件中某高校Software_1403 班级体育选修成绩在 90 分以上的成员所有信息,其中phy_course_score_xd.txt 文件数据结构如下表所示,选修科目字段为 opt_cour,成绩字段为 score。

hive> create table phy_course_score_xd (stname string,stID int,class string,opt_cour string,score float) row format delimited fields terminated by '\t' lines terminated by '\n';

OK

Time taken: 3.367 seconds

hive> load data local inpath'/root/tiku/Hive/phy_course_score_xd.txt' into table phy_course_score_xd;

Loading data to table default.phy_course_score_xd

OK

Time taken: 2.704 seconds

hive> select * from phy_course_score_xd where class='Software_1403' and score>90;

OK

student403 10120402 Software_1403 swimming 94.0

student413 10120412 Software_1403 volleyball 91.8

student427 10120426 Software_1403 taekwondo 98.6

student428 10120427 Software_1403 table tennis 91.4

student449 10120448 Software_1403 badminton 97.9

Time taken: 3.008 seconds, Fetched: 5 row(s)

- 使用 Hive 工具来统计 phy_course_score_xd.txt 文件中某高校各个班级体育课的平均成绩,使用 round 函数保留两位小数。其中 phy_course_score_xd.txt文件数据结构如下表所示,班级字段为 class,成绩字段为 score。

hive> select class,round(avg(score),2) from phy_course_score_xd group by class;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Query ID = root_20200331113210_24c326ce-a5d2-4394-b5d3-48e9b94de263

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks not specified. Estimated from input data size: 1

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

Starting Job = job_1585624368328_0002, Tracking URL = http://master:18088/proxy/application_1585624368328_0002/

Kill Command = /usr/hadoop/hadoop-2.7.3/bin/hadoop job -kill job_1585624368328_0002

Hadoop job information for Stage-1: number of mappers: 1; number of reducers: 1

2020-03-31 11:32:25,660 Stage-1 map = 0%, reduce = 0%

2020-03-31 11:32:37,608 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 5.31 sec

2020-03-31 11:32:48,501 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 11.23 sec

MapReduce Total cumulative CPU time: 11 seconds 230 msec

Ended Job = job_1585624368328_0002

MapReduce Jobs Launched:

Stage-Stage-1: Map: 1 Reduce: 1 Cumulative CPU: 11.23 sec HDFS Read: 111370 HDFS Write: 1313 SUCCESS

Total MapReduce CPU Time Spent: 11 seconds 230 msec

OK

ClOfih_1401 80.83

ClOfih_1402 79.59

ClOfih_1403 79.75

ClOfih_1404 79.35

ClOfih_1405 79.43

ClOfih_1406 83.18

ClOfih_1407 82.25

Computer_1401 79.25

Computer_1402 82.06

Computer_1403 79.63

Computer_1404 80.84

Computer_1405 79.75

IntOfTh_1401 83.21

IntOfTh_1402 79.22

IntOfTh_1403 79.59

IntOfTh_1404 79.59

IntOfTh_1405 80.54

IntOfTh_1406 80.26

IntOfTh_1407 81.36

IntOfTh_1408 79.63

IntOfTh_1409 78.21

Mobile_1401 82.44

Mobile_1402 79.36

Mobile_1403 78.8

Mobile_1404 80.21

Mobile_1405 80.29

Mobile_1406 80.79

Network_1401 80.83

Network_1402 80.97

Network_1403 80.65

Network_1404 80.47

Network_1405 79.2

Software_1401 79.2

Software_1402 81.03

Software_1403 81.49

Software_1404 80.15

Software_1405 80.18

Software_1406 79.51

Software_1407 81.07

class NULL

Time taken: 40.442 seconds, Fetched: 40 row(s)

- 使用 Hive 工具来统计 phy_course_score_xd.txt 文件中某高校各个班级体育课的最高成绩。其中 phy_course_score_xd.txt 文件数据结构如下表所示,班级字段为 class,成绩字段为 score。

hive> select class,max(score) from phy_course_score_xd group by class;

WARNING: Hive-on-MR is deprecated in Hive 2 and may not be available in the future versions. Consider using a different execution engine (i.e. spark, tez) or using Hive 1.X releases.

Query ID = root_20200331113621_7d430176-398a-4d0d-be0e-17688fa4967b

Total jobs = 1

Launching Job 1 out of 1

Number of reduce tasks not specified. Estimated from input data size: 1

In order to change the average load for a reducer (in bytes):

set hive.exec.reducers.bytes.per.reducer=<number>

In order to limit the maximum number of reducers:

set hive.exec.reducers.max=<number>

In order to set a constant number of reducers:

set mapreduce.job.reduces=<number>

Starting Job = job_1585624368328_0003, Tracking URL = http://master:18088/proxy/application_1585624368328_0003/

Kill Command = /usr/hadoop/hadoop-2.7.3/bin/hadoop job -kill job_1585624368328_0003

Hadoop job information for Stage-1: number of mappers: 1; number of reducers: 1

2020-03-31 11:36:36,187 Stage-1 map = 0%, reduce = 0%

2020-03-31 11:36:55,042 Stage-1 map = 100%, reduce = 0%, Cumulative CPU 9.65 sec

2020-03-31 11:37:05,837 Stage-1 map = 100%, reduce = 100%, Cumulative CPU 14.41 sec

MapReduce Total cumulative CPU time: 14 seconds 410 msec

Ended Job = job_1585624368328_0003

MapReduce Jobs Launched:

Stage-Stage-1: Map: 1 Reduce: 1 Cumulative CPU: 14.41 sec HDFS Read: 110404 HDFS Write: 1278 SUCCESS

Total MapReduce CPU Time Spent: 14 seconds 410 msec

OK

ClOfih_1401 99.2

ClOfih_1402 97.0

ClOfih_1403 90.8

ClOfih_1404 98.7

ClOfih_1405 98.7

ClOfih_1406 99.4

ClOfih_1407 99.8

Computer_1401 100.0

Computer_1402 99.2

Computer_1403 98.3

Computer_1404 98.6

Computer_1405 98.1

IntOfTh_1401 98.7

IntOfTh_1402 98.6

IntOfTh_1403 94.7

IntOfTh_1404 99.3

IntOfTh_1405 97.1

IntOfTh_1406 98.3

IntOfTh_1407 99.3

IntOfTh_1408 98.6

IntOfTh_1409 96.4

Mobile_1401 99.5

Mobile_1402 99.3

Mobile_1403 97.9

Mobile_1404 99.2

Mobile_1405 99.2

Mobile_1406 99.8

Network_1401 97.2

Network_1402 99.7

Network_1403 99.8

Network_1404 99.8

Network_1405 99.9

Software_1401 99.1

Software_1402 99.5

Software_1403 98.6

Software_1404 99.8

Software_1405 99.8

Software_1406 97.7

Software_1407 99.6

class NULL

Time taken: 45.152 seconds, Fetched: 40 row(s)

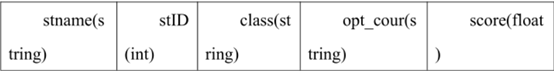

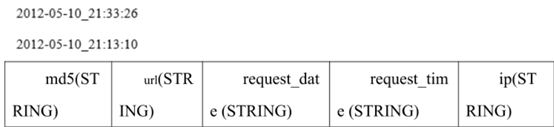

- 在 Hive 数据仓库将网络日志 weblog_entries.txt 中分开的 request_date 和request_time 字段进行合并,并以一个下划线“_”进行分割,如下图所示,其中 weblog_entries.txt 的数据结构如下表所示。

hive> create table weblog_entries (md5 STRING,url STRING,request_date STRING,request_time STRING,ip STRING) row format delimited fields terminated by '\t' lines terminated by '\n';

hive> load data local inpath'/root/tiku/Hive/weblog_entries.txt' into table weblog_entries;

hive> select concat_ws('_', request_date,request_time) from weblog_entries;

2012-05-10_21:29:01

2012-05-10_21:13:47

2012-05-10_21:12:37

2012-05-10_21:34:20

2012-05-10_21:27:00

2012-05-10_21:33:53

2012-05-10_21:10:19

2012-05-10_21:12:05

2012-05-10_21:25:58

2012-05-10_21:34:28

。。。。。。

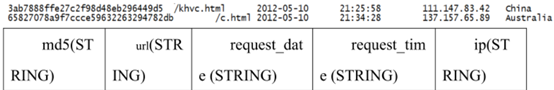

- 在 Hive 数据仓库将网络日志 weblog_entries.txt 中的 IP 字段与ip_to_country 中 IP 对应的国家进行简单的内链接,输出结果如下图所示,其中 weblog_entries.txt 的数据结构如下表所示。

hive> create table ip_to_country (ip string,country string) row format delimited fields terminated by '\t' lines terminated by '\n';

OK

Time taken: 0.167 seconds

hive> load data local inpath'/root/tiku/Hive/ip_to_country.txt' into table ip_to_country;

Loading data to table default.ip_to_country

OK

Time taken: 0.613 seconds

hive> select wlg.*,itc.country from weblog_entries wlg join ip_to_country itc on wlg.ip=itc.ip;

22b2549649dcc284ba8bf7d4993ac62 /e.html 2012-05-10 21:12:05 105.57.100.182 Morocco

3ab7888ffe27c2f98d48eb296449d5 /khvc.html 2012-05-10 21:25:58 111.147.83.42 China

65827078a9f7ccce59632263294782db /c.html 2012-05-10 21:34:28 137.157.65.89 Australia

- 使用 Hive 动态地关于网络日志 weblog_entries.txt 的查询结果创建 Hive表。通过创建一张名为 weblog_entries_url_length 的新表来定义新的网络日志数据库的三个字段,分别是 url,request_date,request_time。此外,在表中定义一个获取 url 字符串长度名为“url_length”的新字段,其中weblog_entries.txt 的数据结构如下表所示。完成后查询weblog_entries_url_length 表文件内容。

hive> create table weblog_entries_url_length as select url, request_date, request_time,length(url) as url_length from weblog_entries;

hive> select * from weblog_entries_url_length;

/hjmdhaoogwqhp.html 2012-05-10 21:34:20 19

/angjbmea.html 2012-05-10 21:27:00 14

/mmdttqsnjfifkihcvqu.html 2012-05-10 21:33:53 25

/eorxuryjadhkiwsf.html 2012-05-10 21:10:19 22

/e.html 2012-05-10 21:12:05 7

/khvc.html 2012-05-10 21:25:58 10

/c.html 2012-05-10 21:34:28 7

在此感谢先电云提供的题库。

感谢Apache开源技术服务支持

感谢抛物线、mn525520、菜鸟一枚2019三位博主的相关博客。

897

897

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?