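Hadoop Running Modes: Fully Distributed

1. Prepare three virtual machines (disable the firewall, configure static IPs and hostnames)

2. Install the JDK and Hadoop, and configure the environment variables for both

3. Configure the fully distributed cluster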

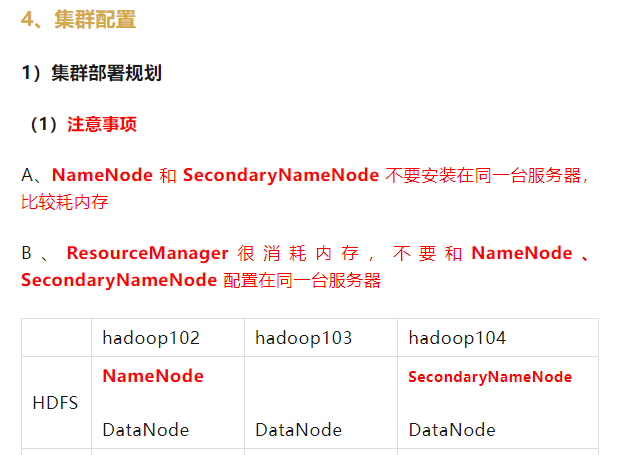

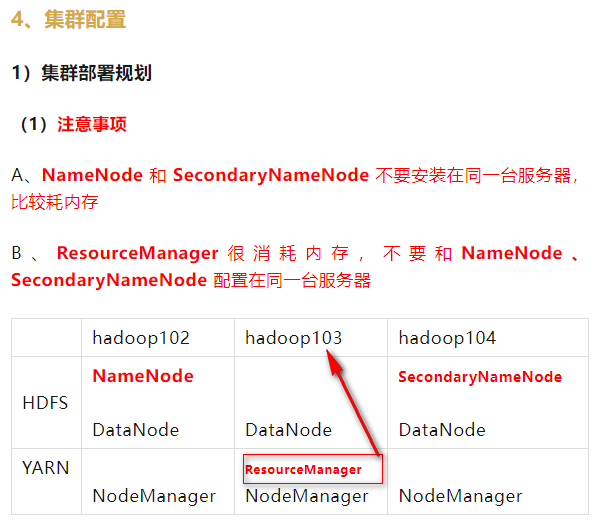

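4. Cluster configuration

1) Cluster deployment plan

(1) Notes

A. Do not install the NameNode and the SecondaryNameNode on the same server; both consume a lot of memory.

B. The ResourceManager is also memory-hungry; do not place it on the same server as the NameNode or the SecondaryNameNode.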

| | hadoop102 | hadoop103 | hadoop104 |
|---|---|---|---|
| HDFS | NameNode, DataNode | DataNode | SecondaryNameNode, DataNode |
| YARN | NodeManager | ResourceManager, NodeManager | NodeManager |
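The two placement rules above can be checked mechanically. The following is only a sketch: `check_plan` is a hypothetical helper, not part of Hadoop. It takes the hosts planned for the NameNode, the SecondaryNameNode and the ResourceManager and reports whether all three differ.

```shell
#!/bin/sh
# Hypothetical helper (not part of Hadoop): succeeds only when the three
# memory-heavy daemons are planned on three different hosts.
check_plan() {
  nn=$1; snn=$2; rm_host=$3
  if [ "$nn" = "$snn" ] || [ "$nn" = "$rm_host" ] || [ "$snn" = "$rm_host" ]; then
    echo "conflict: NameNode=$nn SecondaryNameNode=$snn ResourceManager=$rm_host" >&2
    return 1
  fi
  echo "ok: NameNode=$nn SecondaryNameNode=$snn ResourceManager=$rm_host"
}

# The plan from the table above.
check_plan hadoop102 hadoop104 hadoop103
```

Any plan that puts two of these daemons on one host makes the function return a non-zero status, so it can act as a guard in a setup script.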

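2) Configuration file overview

Hadoop configuration files fall into two categories:

default configuration files and custom (site-specific) configuration files. You only need to edit a custom configuration file, changing the relevant property value, when you want to override a default.

(1) Default configuration files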

| Default configuration file | Location inside the Hadoop jar |
|---|---|
| [core-default.xml] | hadoop-common-3.1.3.jar/core-default.xml |
| [hdfs-default.xml] | hadoop-hdfs-3.1.3.jar/hdfs-default.xml |
| [yarn-default.xml] | hadoop-yarn-common-3.1.3.jar/yarn-default.xml |
| [mapred-default.xml] | hadoop-mapreduce-client-core-3.1.3.jar/mapred-default.xml |
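Because the default files live inside jars, reading one means looking into the jar. The sketch below maps each default file to its jar and prints it with `unzip -p`. The helper names `default_jar` and `show_default` are hypothetical, and the jar directories assume the standard Hadoop 3.1.3 binary layout under /opt/module/hadoop-3.1.3.

```shell
#!/bin/sh
# Assumption: the standard Hadoop 3.1.3 binary layout under this HADOOP_HOME.
HADOOP_HOME=${HADOOP_HOME:-/opt/module/hadoop-3.1.3}

# Hypothetical helper: print the jar that holds a given *-default.xml file.
default_jar() {
  case $1 in
    core-default.xml)   echo "$HADOOP_HOME/share/hadoop/common/hadoop-common-3.1.3.jar" ;;
    hdfs-default.xml)   echo "$HADOOP_HOME/share/hadoop/hdfs/hadoop-hdfs-3.1.3.jar" ;;
    yarn-default.xml)   echo "$HADOOP_HOME/share/hadoop/yarn/hadoop-yarn-common-3.1.3.jar" ;;
    mapred-default.xml) echo "$HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-client-core-3.1.3.jar" ;;
    *) echo "unknown default file: $1" >&2; return 1 ;;
  esac
}

# Hypothetical helper: print a default file without unpacking the whole jar
# (requires the unzip command).
show_default() {
  jar=$(default_jar "$1") || return 1
  unzip -p "$jar" "$1"
}

# Usage on a node with Hadoop installed:
#   show_default core-default.xml | less
```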

(2) Custom configuration files

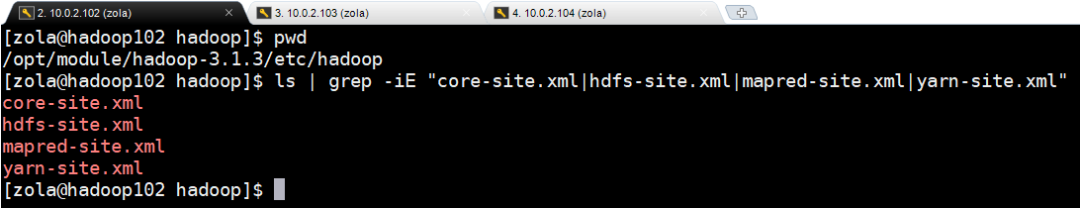

The four configuration files core-site.xml, hdfs-site.xml, yarn-site.xml and mapred-site.xml are stored under $HADOOP_HOME/etc/hadoop. You can modify them to suit the needs of your project.

The path in this environment:

    cd /opt/module/hadoop-3.1.3/etc/hadoop
    ls | grep -iE "core-site.xml|hdfs-site.xml|mapred-site.xml|yarn-site.xml"

3) Configure the cluster

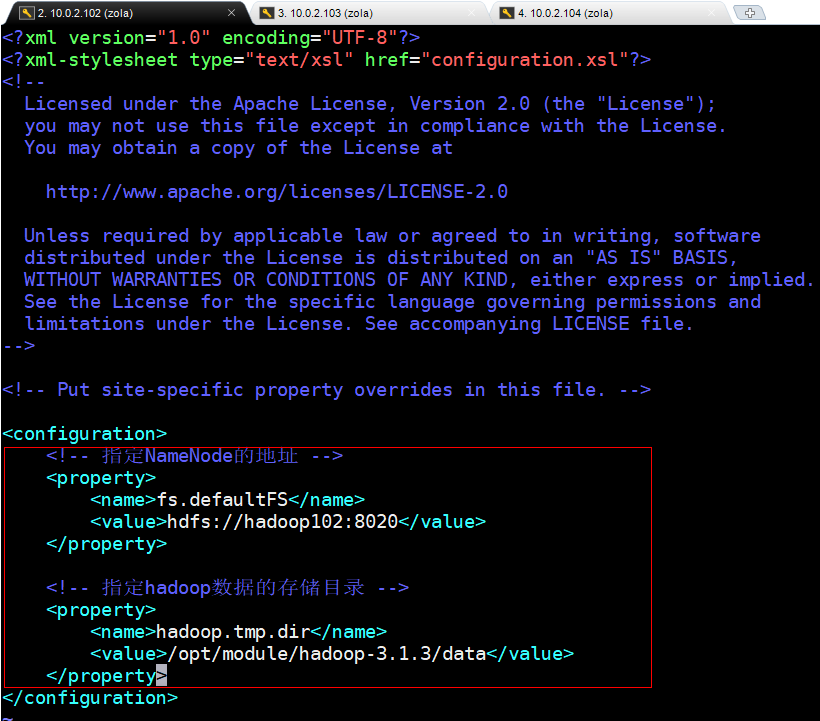

(1) Core configuration file

Configure core-site.xml on hadoop102:

    cd $HADOOP_HOME/etc/hadoop
    vim core-site.xml

The contents of core-site.xml are as follows:

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
        <!-- Address of the NameNode -->
        <property>
            <name>fs.defaultFS</name>
            <!-- Address used for Hadoop internal communication -->
            <value>hdfs://hadoop102:8020</value>
        </property>
        <!-- Directory where Hadoop stores its data -->
        <property>
            <name>hadoop.tmp.dir</name>
            <value>/opt/module/hadoop-3.1.3/data</value>
        </property>
        <!-- Static user for the HDFS web UI: zola -->
        <property>
            <name>hadoop.http.staticuser.user</name>
            <value>zola</value>
        </property>
    </configuration>

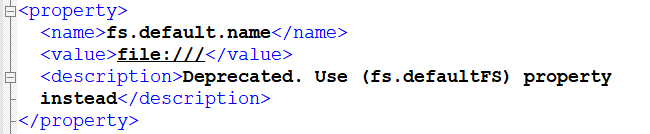

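The fs.defaultFS property:

Fill this in according to the planning table in 1) Cluster deployment plan. It specifies the address of the NameNode. The default value is file:///, a local address that uses the file protocol, as shown in Figure 1. Change it to hdfs://hadoop102:8020: the hdfs protocol, deployed on hadoop102, with service port 8020. Ports 9000 and 9820 also work, but 8020 is recommended.

Figure 1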

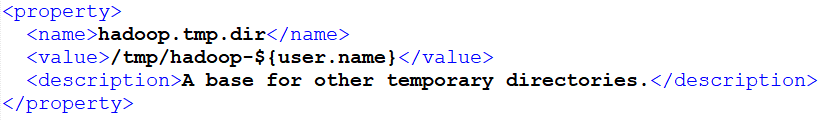

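The hadoop.tmp.dir property:

HDFS needs a directory in which to store its data. The default is /tmp/hadoop-${user.name} (see Figure 2), which in this Linux environment resolves to /tmp/hadoop-zola. However, /tmp is a temporary directory that the system cleans up periodically (commonly about once a month, though the exact interval depends on the distribution's settings), so data kept there can be lost. Change the value to /opt/module/hadoop-3.1.3/data; the data directory is created automatically if it does not exist.

Figure 2

The hadoop.http.staticuser.user property:

Sets the static user for the HDFS web UI to zola. This parameter can be left unset for now and configured later when it is needed. The default value is shown in Figure 3.

Figure 3

Content added to the configuration file: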

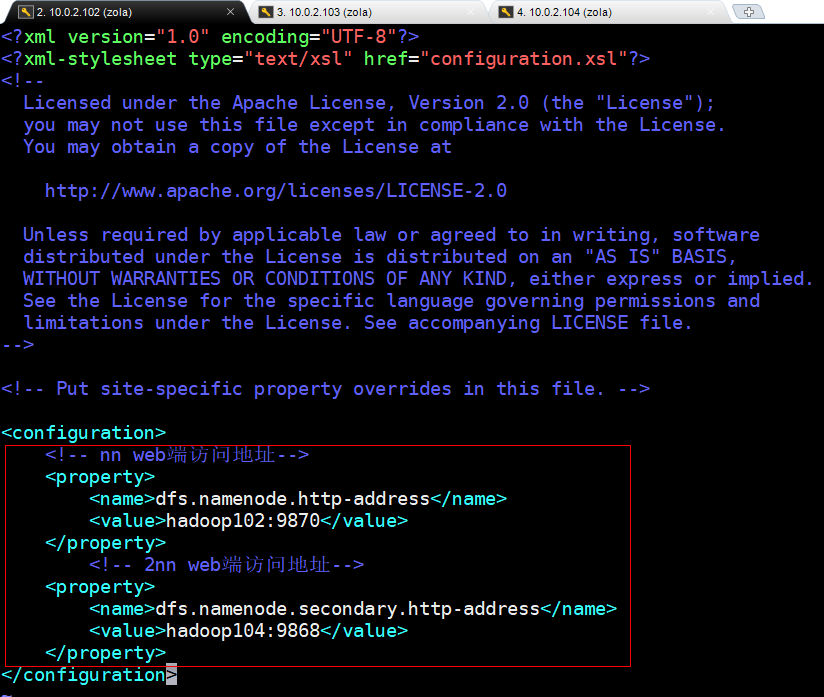
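After editing, it can be useful to read a property back from the file to confirm what was written. The sketch below does that with awk. `get_prop` is a hypothetical helper, and it assumes the layout used in this article, where each `<name>` and `<value>` element sits on its own line. It is not a general XML parser.

```shell
#!/bin/sh
# Hypothetical helper: print the <value> of one property in a *-site.xml file.
# Assumes <name> appears before its <value>, each on a single line.
get_prop() {
  awk -v want="$2" '
    /<name>/ {
      n = $0; sub(/.*<name>/, "", n); sub(/<\/name>.*/, "", n)
      found = (n == want)
    }
    found && /<value>/ {
      v = $0; sub(/.*<value>/, "", v); sub(/<\/value>.*/, "", v)
      print v; exit
    }
  ' "$1"
}

# Usage on a configured node:
#   get_prop "$HADOOP_HOME/etc/hadoop/core-site.xml" fs.defaultFS
```

On a node where Hadoop is installed, `hdfs getconf -confKey fs.defaultFS` reports the value Hadoop actually resolves, which also covers properties that were left at their defaults.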

(2) HDFS configuration file

Configure hdfs-site.xml on hadoop102:

    cd $HADOOP_HOME/etc/hadoop
    vim hdfs-site.xml

The contents of hdfs-site.xml are as follows:

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
        <!-- NameNode web UI address, the interface exposed for external access -->
        <property>
            <name>dfs.namenode.http-address</name>
            <value>hadoop102:9870</value>
        </property>
        <!-- SecondaryNameNode web UI address -->
        <property>
            <name>dfs.namenode.secondary.http-address</name>
            <value>hadoop104:9868</value>
        </property>
    </configuration>

The dfs.namenode.http-address property:

The NameNode (nn) must expose a port so that HDFS can be reached from a web page. Here it is set to hadoop102:9870.

The dfs.namenode.secondary.http-address property:

The SecondaryNameNode (2nn) likewise must expose a port for web access. Following the planning table in 1) Cluster deployment plan, it is set to hadoop104:9868.

Content added to the configuration file:

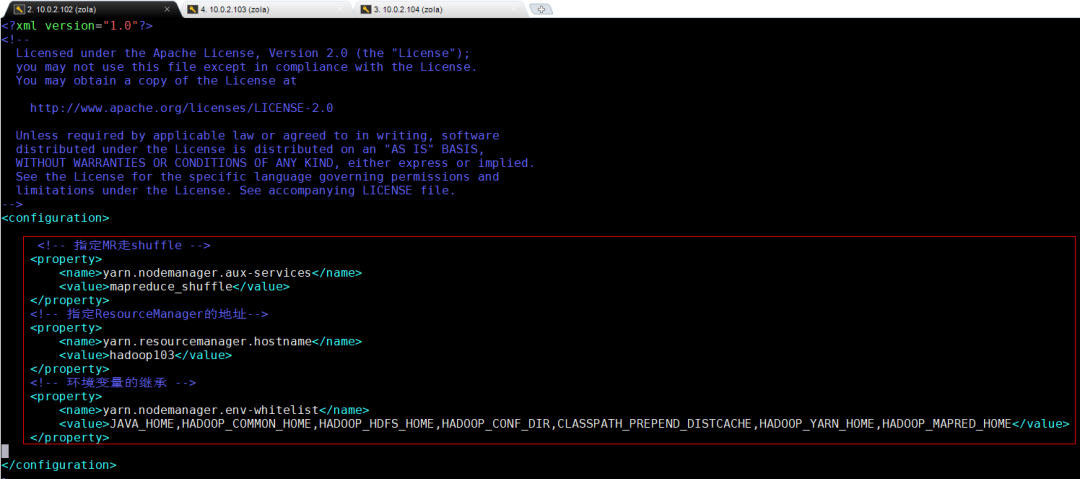

(3) YARN configuration file

Configure yarn-site.xml on hadoop102:

    cd $HADOOP_HOME/etc/hadoop
    vim yarn-site.xml

The contents of yarn-site.xml are as follows:

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
        <!-- Use the shuffle service for MR -->
        <property>
            <name>yarn.nodemanager.aux-services</name>
            <value>mapreduce_shuffle</value>
        </property>
        <!-- Address of the ResourceManager -->
        <property>
            <name>yarn.resourcemanager.hostname</name>
            <value>hadoop103</value>
        </property>
        <!-- Inheritance of environment variables -->
        <property>
            <name>yarn.nodemanager.env-whitelist</name>
            <value>JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME</value>
        </property>
    </configuration>

The yarn.nodemanager.aux-services property:

Specifies the auxiliary service that each NodeManager runs. The recommended value is mapreduce_shuffle, which enables the shuffle service that MapReduce jobs rely on to move map output to the reducers.

The yarn.resourcemanager.hostname property:

The host on which the ResourceManager is deployed. Following the planning table in 1) Cluster deployment plan, the ResourceManager address is set to hadoop103.

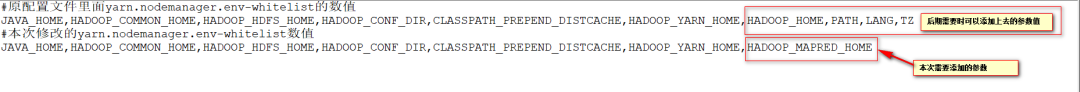

The yarn.nodemanager.env-whitelist property:

Hadoop 3.1.3 has a small bug in environment-variable inheritance. From Hadoop 3.2.x onward this setting is no longer needed, and HADOOP_MAPRED_HOME is included automatically. Compare the value in the original configuration with the value used here:

    # Original value of yarn.nodemanager.env-whitelist
    JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_HOME,PATH,LANG,TZ
    # Value of yarn.nodemanager.env-whitelist used here
    JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME

Content added to the configuration file:

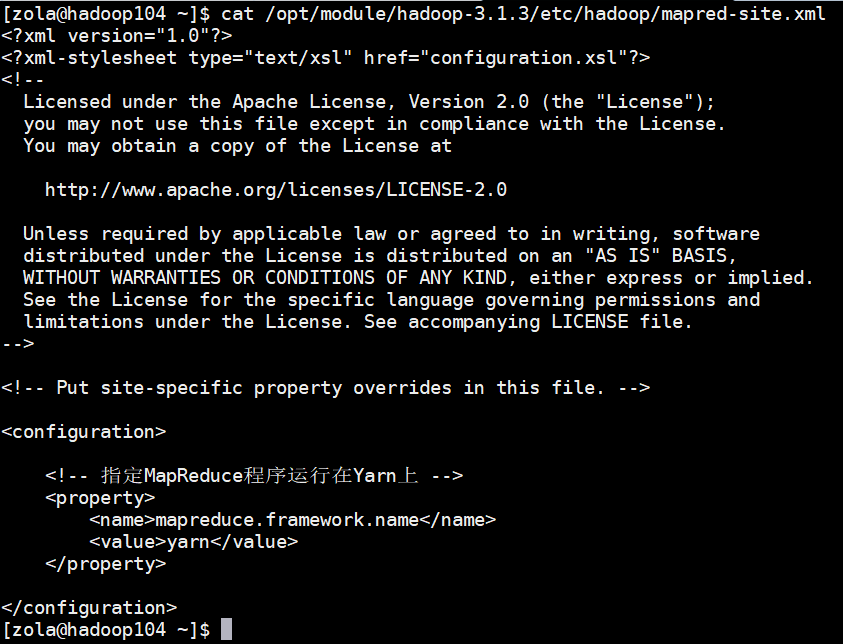
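The difference between the two whitelist values is easy to miss by eye. The sketch below prints the entries that appear in one comma-separated list but not in the other. `only_in_first` is a hypothetical helper, and the two strings are copied from the comparison above.

```shell
#!/bin/sh
# Values copied from the comparison above.
default_list="JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_HOME,PATH,LANG,TZ"
custom_list="JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME"

# Hypothetical helper: print entries of comma-separated list $1 missing from $2.
# The body runs in a subshell so the IFS change does not leak to the caller.
only_in_first() (
  IFS=,
  for item in $1; do
    case ",$2," in
      *",$item,"*) ;;
      *) printf '%s\n' "$item" ;;
    esac
  done
)

echo "Added by this configuration:"
only_in_first "$custom_list" "$default_list"
echo "Dropped from the original value:"
only_in_first "$default_list" "$custom_list"
```

With these two strings, the only added entry is HADOOP_MAPRED_HOME, while HADOOP_HOME, PATH, LANG and TZ are absent from the value used here.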

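(4) MapReduce configuration file

Configure mapred-site.xml on hadoop102:

    cd $HADOOP_HOME/etc/hadoop
    vim mapred-site.xml

The contents of mapred-site.xml are as follows:

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
        <!-- Run MapReduce programs on YARN -->
        <property>
            <name>mapreduce.framework.name</name>
            <value>yarn</value>
        </property>
    </configuration>

The mapreduce.framework.name property:

By default MapReduce runs locally (local); here it is set to run on YARN (yarn). Before editing a configuration file, it helps to look up the default value in the corresponding default file, in this case mapred-default.xml.

Content added to the configuration file:

With steps (1) to (4) done, the cluster configuration is complete on hadoop102. The same configuration is still needed on hadoop103 and hadoop104 before the cluster is fully configured.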

(5) From hadoop102, distribute the finished Hadoop configuration files to the other nodes

    # Run the distribution script
    xsync /opt/module/hadoop-3.1.3/etc/hadoop/
    # Output:
    ==================== hadoop102 ====================
    sending incremental file list

    sent 916 bytes received 18 bytes 1,868.00 bytes/sec
    total size is 119,834 speedup is 128.30
    ==================== hadoop103 ====================
    sending incremental file list
    hadoop/
    hadoop/.yarn-site.xml.swp
    hadoop/core-site.xml
    hadoop/hdfs-site.xml
    hadoop/mapred-site.xml
    hadoop/yarn-site.xml

    sent 15,638 bytes received 158 bytes 31,592.00 bytes/sec
    total size is 119,834 speedup is 7.59
    ==================== hadoop104 ====================
    sending incremental file list
    hadoop/
    hadoop/.yarn-site.xml.swp
    hadoop/capacity-scheduler.xml
    hadoop/configuration.xsl
    hadoop/container-executor.cfg
    hadoop/core-site.xml
    hadoop/hadoop-env.cmd
    hadoop/hadoop-env.sh
    hadoop/hadoop-metrics2.properties
    hadoop/hadoop-policy.xml
    hadoop/hadoop-user-functions.sh.example
    hadoop/hdfs-site.xml
    hadoop/httpfs-env.sh
    hadoop/httpfs-log4j.properties
    hadoop/httpfs-signature.secret
    hadoop/httpfs-site.xml
    hadoop/kms-acls.xml
    hadoop/kms-env.sh
    hadoop/kms-log4j.properties
    hadoop/kms-site.xml
    hadoop/log4j.properties
    hadoop/mapred-env.cmd
    hadoop/mapred-env.sh
    hadoop/mapred-queues.xml.template
    hadoop/mapred-site.xml
    hadoop/ssl-client.xml.example
    hadoop/ssl-server.xml.example
    hadoop/user_ec_policies.xml.template
    hadoop/workers
    hadoop/yarn-env.cmd
    hadoop/yarn-env.sh
    hadoop/yarn-site.xml
    hadoop/yarnservice-log4j.properties
    hadoop/shellprofile.d/
    hadoop/shellprofile.d/example.sh

    sent 17,377 bytes received 1,657 bytes 38,068.00 bytes/sec
    total size is 119,834 speedup is 6.30

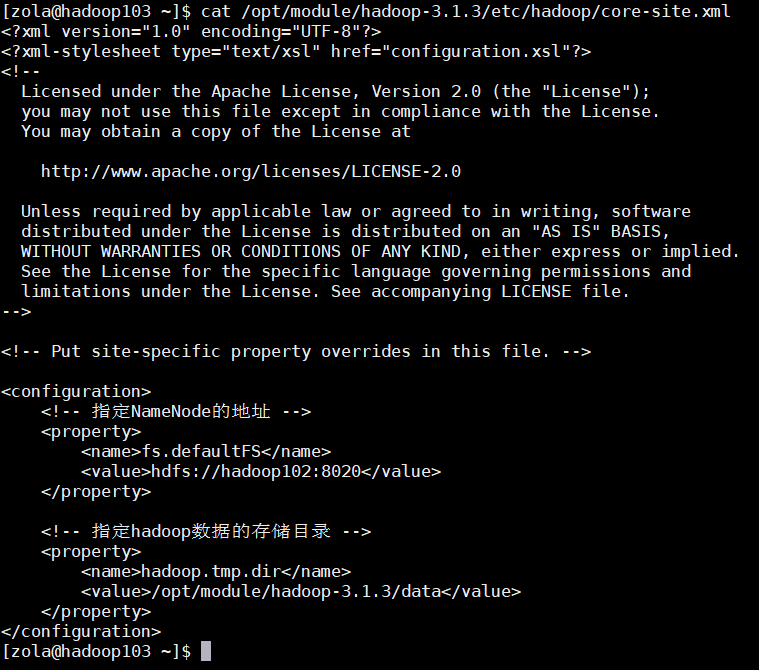
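The xsync script itself is not shown in this section. As a rough idea of what such a distribution script might do, here is a minimal sketch that assumes it wraps rsync over the three hosts. The function name `sync_dir` and the `DRY_RUN` switch are hypothetical and are not taken from the real script. By default the sketch only prints the commands.

```shell
#!/bin/sh
# Assumption: the three hosts from the deployment plan, reachable over ssh.
HOSTS="hadoop102 hadoop103 hadoop104"

# Hypothetical stand-in for xsync: copy one directory to the same path on
# every host. Prints the commands unless DRY_RUN=0 is set.
sync_dir() {
  src=${1%/}                 # drop a trailing slash so rsync copies the directory itself
  parent=$(dirname "$src")
  for host in $HOSTS; do
    echo "==================== $host ===================="
    if [ "${DRY_RUN:-1}" = 0 ]; then
      ssh "$host" "mkdir -p '$parent'" && rsync -av "$src" "$host:$parent"
    else
      echo "rsync -av $src $host:$parent"
    fi
  done
}

sync_dir /opt/module/hadoop-3.1.3/etc/hadoop/
```

Because rsync only transfers files that differ, running the sync a second time sends almost nothing, which matches the short output for hadoop102 in the listing above.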

(6) Verify the distribution on hadoop103 and hadoop104

    cat /opt/module/hadoop-3.1.3/etc/hadoop/core-site.xml
    cat /opt/module/hadoop-3.1.3/etc/hadoop/mapred-site.xml

Result: the files were distributed successfully.
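Reading each file with cat works for a handful of files; comparing checksums scales better. The sketch below assumes passwordless ssh between the nodes and the md5sum command on each of them. The helper names `all_same` and `same_on_all_hosts` are hypothetical.

```shell
#!/bin/sh
# Succeeds when every line read from stdin is identical (and there is at least one).
all_same() {
  [ "$(sort -u | wc -l | tr -d ' ')" = 1 ]
}

# Hypothetical helper: compare one file across hosts by MD5 digest over ssh.
# A host where the digest cannot be read contributes a unique marker line,
# so the comparison fails instead of silently passing.
same_on_all_hosts() {
  file=$1; shift
  for host in "$@"; do
    sum=$(ssh "$host" md5sum "$file" 2>/dev/null | awk '{print $1}')
    echo "${sum:-missing-on-$host}"
  done | all_same
}

# Usage:
#   same_on_all_hosts /opt/module/hadoop-3.1.3/etc/hadoop/core-site.xml \
#       hadoop102 hadoop103 hadoop104 && echo "identical on all nodes"
```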

5. Starting and testing the cluster

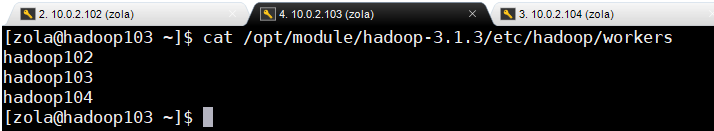

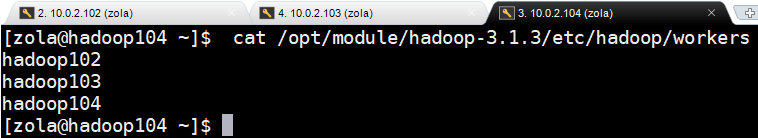

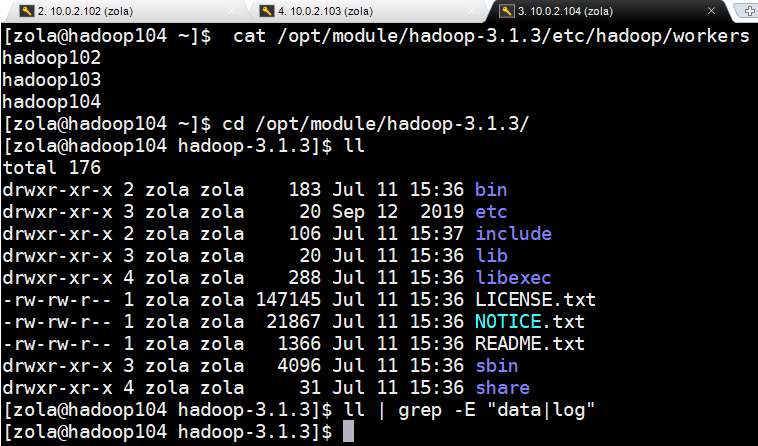

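1) Configure workers

After the cluster is configured and before it is started, the worker nodes must be listed in the workers file. /opt/module/hadoop-3.1.3/etc/hadoop/workers must contain one hostname for every node in the cluster.

(1) On hadoop102, add the following to workers:

    # Before editing, check the existing content; it is localhost, which should be deleted
    cat /opt/module/hadoop-3.1.3/etc/hadoop/workers
    localhost
    # Edit the file
    vim /opt/module/hadoop-3.1.3/etc/hadoop/workers
    hadoop102
    hadoop103
    hadoop104
    # Check the result
    cat /opt/module/hadoop-3.1.3/etc/hadoop/workers
    hadoop102
    hadoop103
    hadoop104

Note: entries in /opt/module/hadoop-3.1.3/etc/hadoop/workers must not have trailing spaces, and the file must not contain blank lines. The startup scripts read the hostnames from this file without trimming whitespace, so a stray space becomes part of the hostname, the host cannot be found, and the cluster fails to start.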
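Trailing spaces and blank lines are invisible in most editors, so a quick check before starting the cluster can save a failed start. The sketch below uses grep to flag any offending line. `check_workers` is a hypothetical helper name.

```shell
#!/bin/sh
# Hypothetical helper: fails (non-zero status) if any line of the workers file
# is blank or has leading or trailing whitespace. Offending lines are printed
# with their line numbers.
check_workers() {
  if grep -n -E '^[[:space:]]*$|^[[:space:]]|[[:space:]]$' "$1"; then
    echo "workers file has blank lines or stray whitespace" >&2
    return 1
  fi
}

# Usage:
#   check_workers /opt/module/hadoop-3.1.3/etc/hadoop/workers && echo "workers file looks clean"
```

The `[[:space:]]` class also matches a carriage return, so a file saved with Windows line endings is flagged as well.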

(2) From hadoop102, sync the workers file to all cluster nodes

    # Run the sync script
    xsync /opt/module/hadoop-3.1.3/etc
    # Output
    ==================== hadoop102 ====================
    sending incremental file list

    sent 944 bytes received 19 bytes 1,926.00 bytes/sec
    total size is 119,854 speedup is 124.46
    ==================== hadoop103 ====================
    sending incremental file list
    etc/hadoop/
    etc/hadoop/workers

    sent 1,024 bytes received 51 bytes 2,150.00 bytes/sec
    total size is 119,854 speedup is 111.49
    ==================== hadoop104 ====================
    sending incremental file list
    etc/
    etc/hadoop/
    etc/hadoop/workers

    sent 1,027 bytes received 54 bytes 2,162.00 bytes/sec
    total size is 119,854 speedup is 110.87

(3) On the other nodes, hadoop103 and hadoop104, verify the workers file distributed from hadoop102

    cat /opt/module/hadoop-3.1.3/etc/hadoop/workers

Result: the file was distributed correctly.

2) Start the cluster

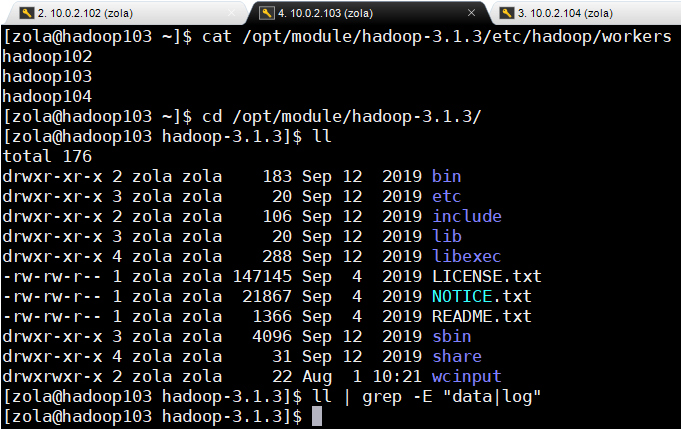

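(1) The first time the cluster is started, it must be initialized (this clears the NameNode's ledger)

Before the first start, the cluster has to be initialized, much like formatting a newly bought USB drive before its first use. Initialization is needed only for the first start. Later starts do not need it, because initializing wipes the cluster's existing data, just as formatting a USB drive every time you use it would destroy its contents.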

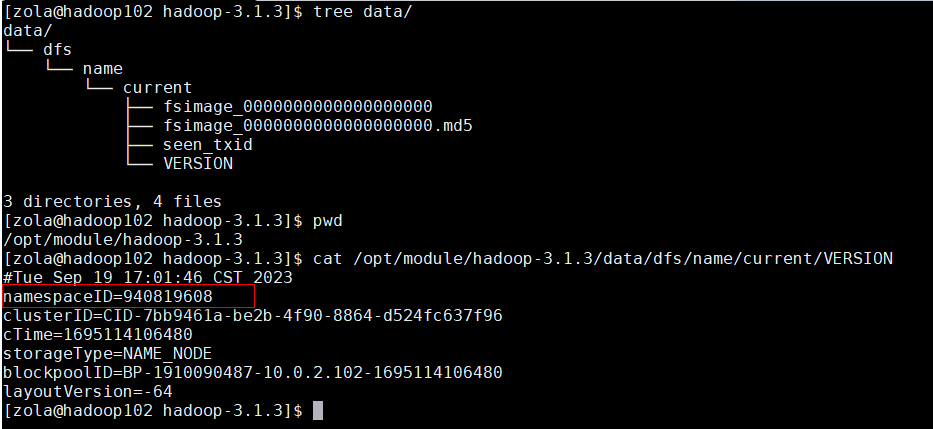
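One way to avoid formatting by accident is to check whether the NameNode has already been formatted before running the command. The sketch below looks for the VERSION file that formatting creates. `needs_format` is a hypothetical helper, and the directory assumes hadoop.tmp.dir is /opt/module/hadoop-3.1.3/data as configured in core-site.xml.

```shell
#!/bin/sh
# Assumption: with hadoop.tmp.dir=/opt/module/hadoop-3.1.3/data, the NameNode
# metadata lives under data/dfs/name.
NAME_DIR=${NAME_DIR:-/opt/module/hadoop-3.1.3/data/dfs/name}

# Hypothetical guard: succeeds only when the NameNode has never been formatted,
# i.e. no VERSION file exists yet.
needs_format() {
  [ ! -f "$1/current/VERSION" ]
}

if needs_format "$NAME_DIR"; then
  echo "No VERSION file under $NAME_DIR: first start, format is required"
else
  echo "NameNode already formatted: skip 'hdfs namenode -format'"
fi
```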

A. Format the NameNode on the hadoop102 node. (Note: formatting the NameNode generates a new cluster ID. If the DataNodes still hold the old ID, the NameNode and DataNode cluster IDs no longer match and the cluster cannot find its previous data. If the cluster reports errors while running and the NameNode has to be re-formatted, first stop the NameNode and DataNode processes and delete the data and logs directories on every machine, and only then format.)

    # Go to the Hadoop home directory
    cd /opt/module/hadoop-3.1.3/
    ls
    # Output
    bin etc include lib libexec LICENSE.txt NOTICE.txt README.txt sbin share wcinput
    ll
    # Output
    total 176
    drwxr-xr-x 2 zola zola 183 Sep 12 2019 bin
    drwxr-xr-x 3 zola zola 20 Sep 12 2019 etc
    drwxr-xr-x 2 zola zola 106 Sep 12 2019 include
    drwxr-xr-x 3 zola zola 20 Sep 12 2019 lib
    drwxr-xr-x 4 zola zola 288 Sep 12 2019 libexec
    -rw-rw-r-- 1 zola zola 147145 Sep 4 2019 LICENSE.txt
    -rw-rw-r-- 1 zola zola 21867 Sep 4 2019 NOTICE.txt
    -rw-rw-r-- 1 zola zola 1366 Sep 4 2019 README.txt
    drwxr-xr-x 3 zola zola 4096 Sep 12 2019 sbin
    drwxr-xr-x 4 zola zola 31 Sep 12 2019 share
    drwxrwxr-x 2 zola zola 22 Aug 1 10:21 wcinput
    # Check whether the data and logs directories exist
    ll | grep -E "data|logs"
    # Format the NameNode; it is enough that no errors are reported. This command is the equivalent of clearing the NameNode's ledger
    hdfs namenode -format
    # Output
    WARNING: /opt/module/hadoop-3.1.3/logs does not exist. Creating.
    2023-09-19 17:01:44,637 INFO namenode.NameNode: STARTUP_MSG:
    /************************************************************
    STARTUP_MSG: Starting NameNode
    STARTUP_MSG: host = hadoop102/10.0.2.102
    STARTUP_MSG: args = [-format]
    STARTUP_MSG: version = 3.1.3
    STARTUP_MSG: classpath = /opt/module/hadoop-3.1.3/etc/hadoop:/opt/module/hadoop-3.1.3/share/hadoop/common/lib/accessors-smart-1.2.jar:[long list of jar paths under /opt/module/hadoop-3.1.3/share/hadoop omitted]:/opt/module/hadoop-3.1.3/share/hadoop/yarn/hadoop-yarn-services-core-3.1.3.jar
    STARTUP_MSG: build = https://gitbox.apache.org/repos/asf/hadoop.git -r ba631c436b806728f8ec2f54ab1e289526c90579; compiled by 'ztang' on 2019-09-12T02:47Z
    STARTUP_MSG: java = 1.8.0_212
    ************************************************************/
    2023-09-19 17:01:44,654 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT]
    2023-09-19 17:01:44,914 INFO namenode.NameNode: createNameNode [-format]
    Formatting using clusterid: CID-7bb9461a-be2b-4f90-8864-d524fc637f96
    2023-09-19 17:01:46,096 INFO namenode.FSEditLog: Edit logging is async:true
    2023-09-19 17:01:46,121 INFO namenode.FSNamesystem: KeyProvider: null
    2023-09-19 17:01:46,122 INFO namenode.FSNamesystem: fsLock is fair: true
    2023-09-19 17:01:46,122 INFO namenode.FSNamesystem: Detailed lock hold time metrics enabled: false
    2023-09-19 17:01:46,137 INFO namenode.FSNamesystem: fsOwner = zola (auth:SIMPLE)
    2023-09-19 17:01:46,137 INFO namenode.FSNamesystem: supergroup = supergroup
    2023-09-19 17:01:46,137 INFO namenode.FSNamesystem: isPermissionEnabled = true
    2023-09-19 17:01:46,137 INFO namenode.FSNamesystem: HA Enabled: false
    2023-09-19 17:01:46,205 INFO common.Util: dfs.datanode.fileio.profiling.sampling.percentage set to 0. Disabling file IO profiling
    2023-09-19 17:01:46,218 INFO blockmanagement.DatanodeManager: dfs.block.invalidate.limit: configured=1000, counted=60, effected=1000
    2023-09-19 17:01:46,234 INFO blockmanagement.DatanodeManager: dfs.namenode.datanode.registration.ip-hostname-check=true
    2023-09-19 17:01:46,240 INFO blockmanagement.BlockManager: dfs.namenode.startup.delay.block.deletion.sec is set to 000:00:00:00.000
    2023-09-19 17:01:46,240 INFO blockmanagement.BlockManager: The block deletion will start around 2023 Sep 19 17:01:46
    2023-09-19 17:01:46,243 INFO util.GSet: Computing capacity for map BlocksMap
    2023-09-19 17:01:46,243 INFO util.GSet: VM type = 64-bit
    2023-09-19 17:01:46,246 INFO util.GSet: 2.0% max memory 235.9 MB = 4.7 MB
    2023-09-19 17:01:46,246 INFO util.GSet: capacity = 2^19 = 524288 entries
    2023-09-19 17:01:46,256 INFO blockmanagement.BlockManager: dfs.block.access.token.enable = false
    2023-09-19 17:01:46,276 INFO Configuration.deprecation: No unit for dfs.namenode.safemode.extension(30000) assuming MILLISECONDS
    2023-09-19 17:01:46,276 INFO blockmanagement.BlockManagerSafeMode: dfs.namenode.safemode.threshold-pct = 0.9990000128746033
    2023-09-19 17:01:46,276 INFO blockmanagement.BlockManagerSafeMode: dfs.namenode.safemode.min.datanodes = 0
    2023-09-19 17:01:46,276 INFO blockmanagement.BlockManagerSafeMode: dfs.namenode.safemode.extension = 30000
    2023-09-19 17:01:46,277 INFO blockmanagement.BlockManager: defaultReplication = 3
    2023-09-19 17:01:46,277 INFO blockmanagement.BlockManager: maxReplication = 512
    2023-09-19 17:01:46,277 INFO blockmanagement.BlockManager: minReplication = 1
    2023-09-19 17:01:46,277 INFO blockmanagement.BlockManager: maxReplicationStreams = 2
    2023-09-19 17:01:46,277 INFO blockmanagement.BlockManager: redundancyRecheckInterval = 3000ms
    2023-09-19 17:01:46,277 INFO blockmanagement.BlockManager: encryptDataTransfer = false
    2023-09-19 17:01:46,277 INFO blockmanagement.BlockManager: maxNumBlocksToLog = 1000
    2023-09-19 17:01:46,361 INFO namenode.FSDirectory: GLOBAL serial map: bits=24 maxEntries=16777215
    2023-09-19 17:01:46,406 INFO util.GSet: Computing capacity for map INodeMap
    2023-09-19 17:01:46,406 INFO util.GSet: VM type = 64-bit
    2023-09-19 17:01:46,407 INFO util.GSet: 1.0% max memory 235.9 MB = 2.4 MB
    2023-09-19 17:01:46,407 INFO util.GSet: capacity = 2^18 = 262144 entries
    2023-09-19 17:01:46,407 INFO namenode.FSDirectory: ACLs enabled? false
    2023-09-19 17:01:46,407 INFO namenode.FSDirectory: POSIX ACL inheritance enabled? true
    2023-09-19 17:01:46,407 INFO namenode.FSDirectory: XAttrs enabled? true
    2023-09-19 17:01:46,408 INFO namenode.NameNode: Caching file names occurring more than 10 times
    2023-09-19 17:01:46,416 INFO snapshot.SnapshotManager: Loaded config captureOpenFiles: false, skipCaptureAccessTimeOnlyChange: false, snapshotDiffAllowSnapRootDescendant: true, maxSnapshotLimit: 65536
    2023-09-19 17:01:46,418 INFO snapshot.SnapshotManager: SkipList is disabled
    2023-09-19 17:01:46,423 INFO util.GSet: Computing capacity for map cachedBlocks
    2023-09-19 17:01:46,423 INFO util.GSet: VM type = 64-bit
    2023-09-19 17:01:46,424 INFO util.GSet: 0.25% max memory 235.9 MB = 603.8 KB
    2023-09-19 17:01:46,424 INFO util.GSet: capacity = 2^16 = 65536 entries
    2023-09-19 17:01:46,436 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.window.num.buckets = 10
    2023-09-19 17:01:46,436 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.num.users = 10
    2023-09-19 17:01:46,436 INFO metrics.TopMetrics: NNTop conf: dfs.namenode.top.windows.minutes = 1,5,25
    2023-09-19 17:01:46,443 INFO namenode.FSNamesystem: Retry cache on namenode is enabled
    2023-09-19 17:01:46,443 INFO namenode.FSNamesystem: Retry cache will use 0.03 of total heap and retry cache entry expiry time is 600000 millis
    2023-09-19 17:01:46,445 INFO util.GSet: Computing capacity for map NameNodeRetryCache
    2023-09-19 17:01:46,445 INFO util.GSet: VM type = 64-bit
    2023-09-19 17:01:46,445 INFO util.GSet: 0.029999999329447746% max memory 235.9 MB = 72.5 KB
    2023-09-19 17:01:46,445 INFO util.GSet: capacity = 2^13 = 8192 entries
    2023-09-19 17:01:46,496 INFO namenode.FSImage: Allocated new BlockPoolId: BP-1910090487-10.0.2.102-1695114106480
    2023-09-19 17:01:46,512 INFO common.Storage: Storage directory /opt/module/hadoop-3.1.3/data/dfs/name has been successfully formatted.
    2023-09-19 17:01:46,556 INFO namenode.FSImageFormatProtobuf: Saving image file /opt/module/hadoop-3.1.3/data/dfs/name/current/fsimage.ckpt_0000000000000000000 using no compression
    2023-09-19 17:01:46,703 INFO namenode.FSImageFormatProtobuf: Image file /opt/module/hadoop-3.1.3/data/dfs/name/current/fsimage.ckpt_0000000000000000000 of size 391 bytes saved in 0 seconds .
    2023-09-19 17:01:46,719 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0
    2023-09-19 17:01:46,727 INFO namenode.FSImage: FSImageSaver clean checkpoint: txid = 0 when meet shutdown.
    2023-09-19 17:01:46,728 INFO namenode.NameNode: SHUTDOWN_MSG:
    /************************************************************
    SHUTDOWN_MSG: Shutting down NameNode at hadoop102/10.0.2.102
    ************************************************************/
    # Check again for the data and logs directories; both have now been created
    ll | grep -E "data|logs"
    # Output
    drwxrwxr-x 3 zola zola 17 Sep 19 17:01 data
    drwxrwxr-x 2 zola zola 37 Sep 19 17:01 logs
    [zola@hadoop102 hadoop-3.1.3]$ ll
    total 176
    drwxr-xr-x 2 zola zola 183 Sep 12 2019 bin
    drwxrwxr-x 3 zola zola 17 Sep 19 17:01 data
    drwxr-xr-x 3 zola zola 20 Sep 12 2019 etc
    drwxr-xr-x 2 zola zola 106 Sep 12 2019 include
    drwxr-xr-x 3 zola zola 20 Sep 12 2019 lib
    drwxr-xr-x 4 zola zola 288 Sep 12 2019 libexec
    -rw-rw-r-- 1 zola zola 147145 Sep 4 2019 LICENSE.txt
    drwxrwxr-x 2 zola zola 37 Sep 19 17:01 logs
    -rw-rw-r-- 1 zola zola 21867 Sep 4 2019 NOTICE.txt
    -rw-rw-r-- 1 zola zola 1366 Sep 4 2019 README.txt
    drwxr-xr-x 3 zola zola 4096 Sep 12 2019 sbin
    drwxr-xr-x 4 zola zola 31 Sep 12 2019 share
    drwxrwxr-x 2 zola zola 22 Aug 1 10:21 wcinput

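B. For comparison, check hadoop103 and hadoop104: no data or logs directory has been created under the Hadoop home directory there, because so far only hadoop102 has been formatted.

C. Inspect the corresponding VERSION file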

[zola@hadoop102 hadoop-3.1.3]$ cat /opt/module/hadoop-3.1.3/data/dfs/name/current/VERSION
# Output:
#Tue Sep 19 17:01:46 CST 2023
namespaceID=940819608
clusterID=CID-7bb9461a-be2b-4f90-8864-d524fc637f96
cTime=1695114106480
storageType=NAME_NODE
blockpoolID=BP-1910090487-10.0.2.102-1695114106480
layoutVersion=-64

Make a note of the current clusterID and namespaceID; they will be needed later.
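Rather than copying the two values by hand, they can be read out of the VERSION file. The following is a minimal sketch, assuming the file keeps the plain `key=value` layout shown above; `version_field` is a hypothetical helper name, not a Hadoop command.

```shell
#!/usr/bin/env bash
# version_field FILE KEY
# Print the value of one key from a Hadoop storage VERSION file.
# (Hypothetical helper for illustration; not part of Hadoop.)
version_field() {
    local file="$1" key="$2"
    # Entries look like key=value; the leading "#<date>" line is skipped
    # because it never matches "^key=".
    grep -m1 "^${key}=" "$file" | cut -d= -f2-
}

# Path taken from the listing above; adjust it if hadoop.tmp.dir differs.
VERSION_FILE=/opt/module/hadoop-3.1.3/data/dfs/name/current/VERSION
if [ -r "$VERSION_FILE" ]; then
    echo "clusterID=$(version_field "$VERSION_FILE" clusterID)"
    echo "namespaceID=$(version_field "$VERSION_FILE" namespaceID)"
fi
```

The same helper can be pointed at any other VERSION file in the cluster, which makes it easy to compare a recorded clusterID with the one a node reports later.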

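(2) Start HDFS

A. Once the initialization in step (1) is complete, start the cluster proper, beginning with HDFS. The startup scripts are in the sbin directory under the Hadoop home directory.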

[zola@hadoop102 hadoop-3.1.3]$ pwd
/opt/module/hadoop-3.1.3
[zola@hadoop102 hadoop-3.1.3]$ ll sbin/
total 108
-rwxr-xr-x 1 zola zola 2756 Sep 12 2019 distribute-exclude.sh
drwxr-xr-x 4 zola zola 36 Sep 12 2019 FederationStateStore
-rwxr-xr-x 1 zola zola 1983 Sep 12 2019 hadoop-daemon.sh
-rwxr-xr-x 1 zola zola 2522 Sep 12 2019 hadoop-daemons.sh
-rwxr-xr-x 1 zola zola 1542 Sep 12 2019 httpfs.sh
-rwxr-xr-x 1 zola zola 1500 Sep 12 2019 kms.sh
-rwxr-xr-x 1 zola zola 1841 Sep 12 2019 mr-jobhistory-daemon.sh
-rwxr-xr-x 1 zola zola 2086 Sep 12 2019 refresh-namenodes.sh
-rwxr-xr-x 1 zola zola 1779 Sep 12 2019 start-all.cmd
-rwxr-xr-x 1 zola zola 2221 Sep 12 2019 start-all.sh
-rwxr-xr-x 1 zola zola 1880 Sep 12 2019 start-balancer.sh
-rwxr-xr-x 1 zola zola 1401 Sep 12 2019 start-dfs.cmd
-rwxr-xr-x 1 zola zola 5170 Sep 12 2019 start-dfs.sh
-rwxr-xr-x 1 zola zola 1793 Sep 12 2019 start-secure-dns.sh
-rwxr-xr-x 1 zola zola 1571 Sep 12 2019 start-yarn.cmd
-rwxr-xr-x 1 zola zola 3342 Sep 12 2019 start-yarn.sh
-rwxr-xr-x 1 zola zola 1770 Sep 12 2019 stop-all.cmd
-rwxr-xr-x 1 zola zola 2166 Sep 12 2019 stop-all.sh
-rwxr-xr-x 1 zola zola 1783 Sep 12 2019 stop-balancer.sh
-rwxr-xr-x 1 zola zola 1455 Sep 12 2019 stop-dfs.cmd
-rwxr-xr-x 1 zola zola 3898 Sep 12 2019 stop-dfs.sh
-rwxr-xr-x 1 zola zola 1756 Sep 12 2019 stop-secure-dns.sh
-rwxr-xr-x 1 zola zola 1642 Sep 12 2019 stop-yarn.cmd
-rwxr-xr-x 1 zola zola 3083 Sep 12 2019 stop-yarn.sh
-rwxr-xr-x 1 zola zola 1982 Sep 12 2019 workers.sh
-rwxr-xr-x 1 zola zola 1814 Sep 12 2019 yarn-daemon.sh
-rwxr-xr-x 1 zola zola 2328 Sep 12 2019 yarn-daemons.sh
[zola@hadoop102 hadoop-3.1.3]$ sbin/start-dfs.sh
Starting namenodes on [hadoop102]
Starting datanodes
hadoop103: WARNING: /opt/module/hadoop-3.1.3/logs does not exist. Creating.
hadoop104: WARNING: /opt/module/hadoop-3.1.3/logs does not exist. Creating.
Starting secondary namenodes [hadoop104]

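B. After the cluster has started, verify that it matches the original plan. The plan from this article (4. Cluster configuration, 1) Cluster deployment plan, (1) Notes) is shown in the figure below.

The actual deployment is as follows: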

#Hadoop102
[zola@hadoop102 hadoop-3.1.3]$ jps
1955 DataNode
2203 Jps
1839 NameNode
#Hadoop103
[zola@hadoop103 hadoop-3.1.3]$ jps
1572 Jps
1511 DataNode
[zola@hadoop103 hadoop-3.1.3]$ ll
total 176
drwxr-xr-x 2 zola zola 183 Sep 12 2019 bin
drwxrwxr-x 3 zola zola 17 Sep 19 17:25 data
drwxr-xr-x 3 zola zola 20 Sep 12 2019 etc
drwxr-xr-x 2 zola zola 106 Sep 12 2019 include
drwxr-xr-x 3 zola zola 20 Sep 12 2019 lib
drwxr-xr-x 4 zola zola 288 Sep 12 2019 libexec
-rw-rw-r-- 1 zola zola 147145 Sep 4 2019 LICENSE.txt
drwxrwxr-x 2 zola zola 121 Sep 19 17:25 logs
-rw-rw-r-- 1 zola zola 21867 Sep 4 2019 NOTICE.txt
-rw-rw-r-- 1 zola zola 1366 Sep 4 2019 README.txt
drwxr-xr-x 3 zola zola 4096 Sep 12 2019 sbin
drwxr-xr-x 4 zola zola 31 Sep 12 2019 share
drwxrwxr-x 2 zola zola 22 Aug 1 10:21 wcinput
[zola@hadoop103 hadoop-3.1.3]$ ll | grep -E "data|log"
drwxrwxr-x 3 zola zola 17 Sep 19 17:25 data
drwxrwxr-x 2 zola zola 121 Sep 19 17:25 logs
#Hadoop104
[zola@hadoop104 hadoop-3.1.3]$ jps
1618 SecondaryNameNode
1666 Jps
1513 DataNode
[zola@hadoop104 hadoop-3.1.3]$ ll
total 176
drwxr-xr-x 2 zola zola 183 Jul 11 15:36 bin
drwxrwxr-x 3 zola zola 17 Sep 19 17:25 data
drwxr-xr-x 3 zola zola 20 Sep 12 2019 etc
drwxr-xr-x 2 zola zola 106 Jul 11 15:37 include
drwxr-xr-x 3 zola zola 20 Jul 11 15:36 lib
drwxr-xr-x 4 zola zola 288 Jul 11 15:36 libexec
-rw-rw-r-- 1 zola zola 147145 Jul 11 15:36 LICENSE.txt
drwxrwxr-x 2 zola zola 223 Sep 19 17:25 logs
-rw-rw-r-- 1 zola zola 21867 Jul 11 15:36 NOTICE.txt
-rw-rw-r-- 1 zola zola 1366 Jul 11 15:36 README.txt
drwxr-xr-x 3 zola zola 4096 Jul 11 15:36 sbin
drwxr-xr-x 4 zola zola 31 Jul 11 15:36 share
[zola@hadoop104 hadoop-3.1.3]$ ll | grep -E "data|log"
drwxrwxr-x 3 zola zola 17 Sep 19 17:25 data
drwxrwxr-x 2 zola zola 223 Sep 19 17:25 logs

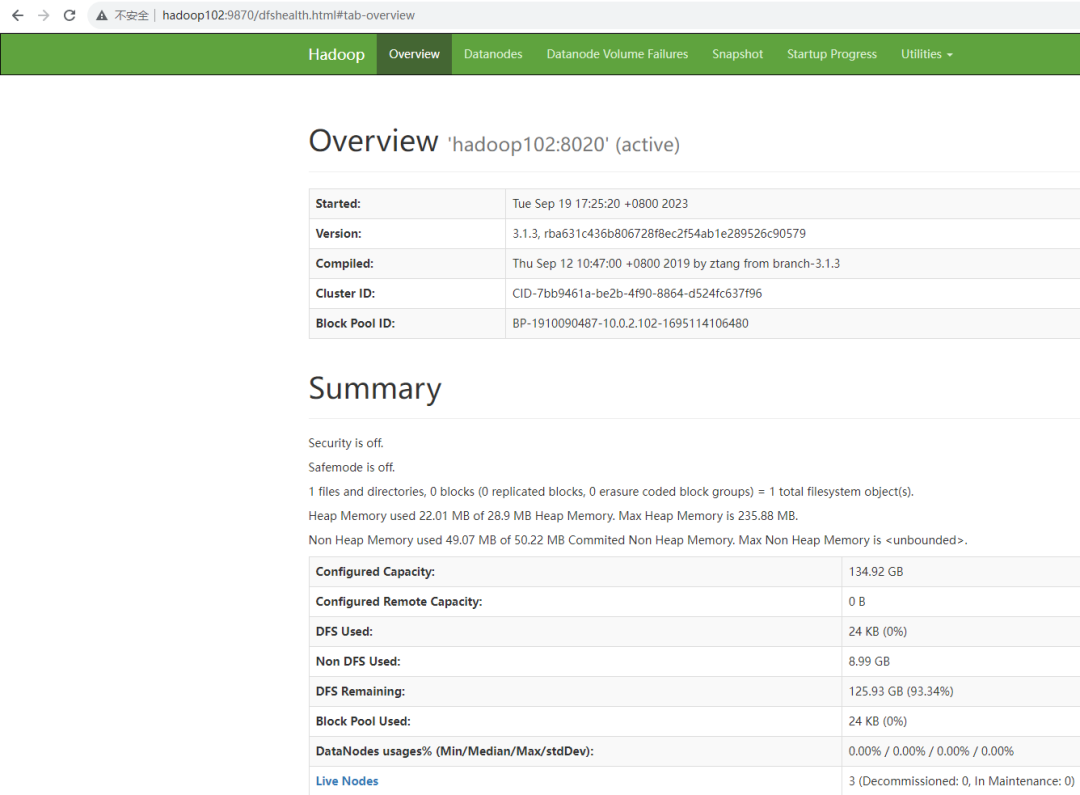

C. View the HDFS NameNode in the web UI

Open the web page provided by the HDFS component

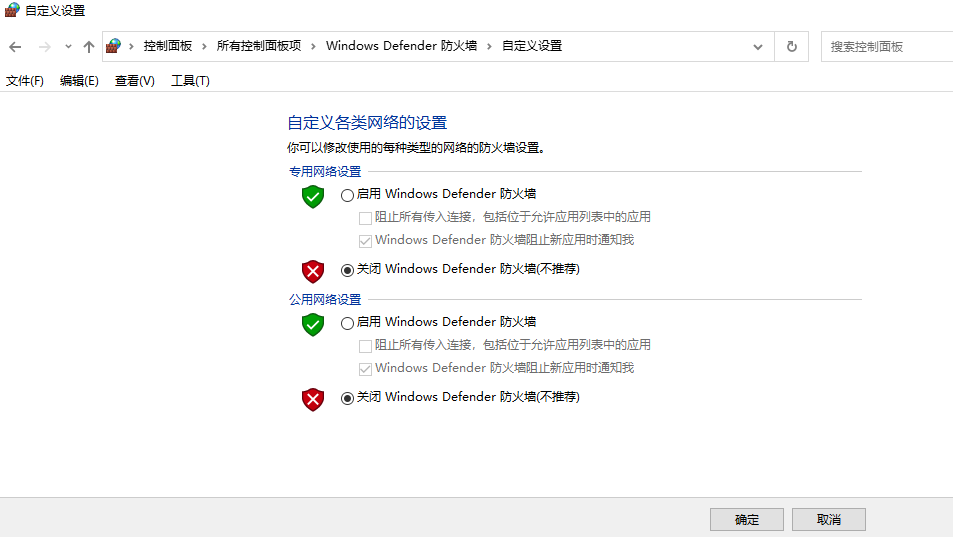

Configure the hosts file on the local PC

C:\Windows\System32\drivers\etc\hosts
# Hadoop host mappings
10.0.2.100 hadoop100
10.0.2.101 hadoop101
10.0.2.102 hadoop102
10.0.2.103 hadoop103
10.0.2.104 hadoop104
10.0.2.105 hadoop105
10.0.2.106 hadoop106
10.0.2.107 hadoop107
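The mappings follow a regular pattern, so they can be generated instead of typed. Below is a minimal sketch, assuming the 10.0.2.N to hadoopN convention used above and a Bash-compatible shell on the PC (Git Bash or WSL, for example); `hadoop_hosts` is a hypothetical helper name.

```shell
#!/usr/bin/env bash
# hadoop_hosts FIRST LAST
# Print one hosts-file entry per node number in [FIRST, LAST], assuming the
# 10.0.2.N <-> hadoopN naming convention used in this article.
# (Hypothetical helper for illustration.)
hadoop_hosts() {
    local i="$1" last="$2"
    while [ "$i" -le "$last" ]; do
        printf '10.0.2.%d hadoop%d\n' "$i" "$i"
        i=$((i + 1))
    done
}

# Print the eight entries listed above; paste them into the hosts file.
hadoop_hosts 100 107
```

The output is only printed, not written anywhere, so it can be reviewed before it is pasted into the hosts file with administrator rights.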

Disable the firewall on the local PC

The corresponding access port, 9870, is set in the hdfs-site.xml configuration file

Browser address: http://hadoop102:9870/

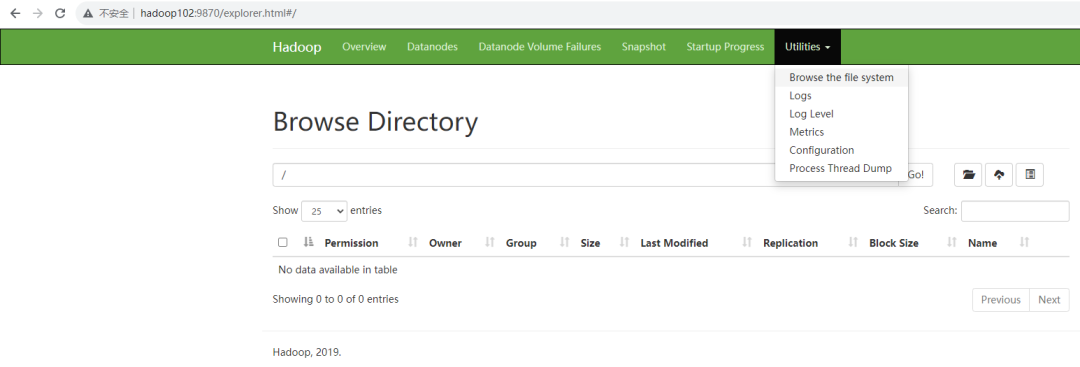

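View the data stored on HDFS. The page used most often is Browse the file system, which lists the files under a given path. The path is currently empty because no files have been uploaded yet.

(3) Start YARN on the node where ResourceManager is configured (hadoop103)

A. The original plan is shown in the figure below:

ResourceManager is deployed on hadoop103

B. Start YARN on hadoop103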

[zola@hadoop103 ~]$ cd /opt/module/hadoop-3.1.3/
[zola@hadoop103 hadoop-3.1.3]$ ll
total 176
drwxr-xr-x 2 zola zola 183 Sep 12 2019 bin
drwxrwxr-x 3 zola zola 17 Sep 19 17:25 data
drwxr-xr-x 3 zola zola 20 Sep 12 2019 etc
drwxr-xr-x 2 zola zola 106 Sep 12 2019 include
drwxr-xr-x 3 zola zola 20 Sep 12 2019 lib
drwxr-xr-x 4 zola zola 288 Sep 12 2019 libexec
-rw-rw-r-- 1 zola zola 147145 Sep 4 2019 LICENSE.txt
drwxrwxr-x 2 zola zola 121 Sep 19 17:25 logs
-rw-rw-r-- 1 zola zola 21867 Sep 4 2019 NOTICE.txt
-rw-rw-r-- 1 zola zola 1366 Sep 4 2019 README.txt
drwxr-xr-x 3 zola zola 4096 Sep 12 2019 sbin
drwxr-xr-x 4 zola zola 31 Sep 12 2019 share
drwxrwxr-x 2 zola zola 22 Aug 1 10:21 wcinput
[zola@hadoop103 hadoop-3.1.3]$ sbin/start-yarn.sh
Starting resourcemanager
Starting nodemanagers

C. Check the running daemons on hadoop103, hadoop102 and hadoop104. They match the original deployment plan exactly.

#hadoop103
[zola@hadoop103 hadoop-3.1.3]$ jps
2066 NodeManager
1511 DataNode
2407 Jps
1960 ResourceManager
#hadoop102
[zola@hadoop102 ~]$ jps
1955 DataNode
2540 NodeManager
2638 Jps
1839 NameNode
#hadoop104
[zola@hadoop104 ~]$ jps
1618 SecondaryNameNode
2472 Jps
1513 DataNode
2348 NodeManager
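Comparing the jps output with the plan by eye works for three nodes, but the check can also be scripted. The following is a rough sketch, assuming the daemon-to-host mapping from the deployment plan table; `missing_daemons`, `expected_for` and `check_cluster` are hypothetical helper names, and `check_cluster` additionally assumes passwordless SSH and that `jps` is on the PATH of non-interactive SSH sessions.

```shell
#!/usr/bin/env bash
# missing_daemons JPS_OUTPUT DAEMON...
# Print every expected daemon that is absent from a block of jps output.
# (Hypothetical helper for illustration; not a Hadoop command.)
missing_daemons() {
    local jps_output="$1"
    shift
    local daemon
    for daemon in "$@"; do
        # jps prints "<pid> <ClassName>"; match the class name as a whole
        # line so that NameNode does not match SecondaryNameNode.
        if ! printf '%s\n' "$jps_output" | awk '{print $2}' | grep -qx "$daemon"; then
            echo "$daemon"
        fi
    done
}

# Expected daemons per host, copied from the deployment plan table.
expected_for() {
    case "$1" in
        hadoop102) echo "NameNode DataNode NodeManager" ;;
        hadoop103) echo "DataNode ResourceManager NodeManager" ;;
        hadoop104) echo "SecondaryNameNode DataNode NodeManager" ;;
    esac
}

# Assumption: passwordless SSH works and jps is on the remote PATH.
check_cluster() {
    local host missing
    for host in hadoop102 hadoop103 hadoop104; do
        # expected_for output is left unquoted on purpose so that it
        # splits into one argument per daemon name.
        missing=$(missing_daemons "$(ssh "$host" jps)" $(expected_for "$host"))
        echo "$host: ${missing:-all expected daemons running}"
    done
}
```

Calling `check_cluster` from any node prints one line per host, listing the daemons that should be running there but are not.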

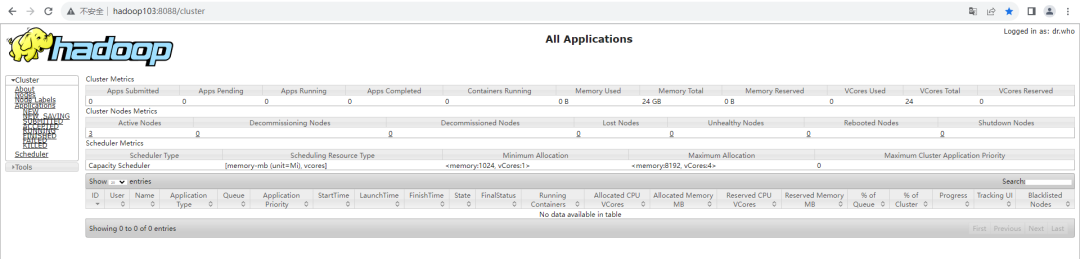

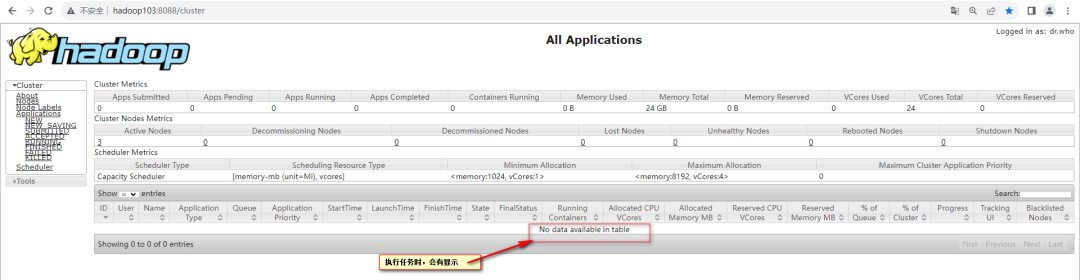

D. View the YARN ResourceManager in the web UI

Browser address: http://hadoop103:8088

View information about the jobs running on YARN
