Environment setup

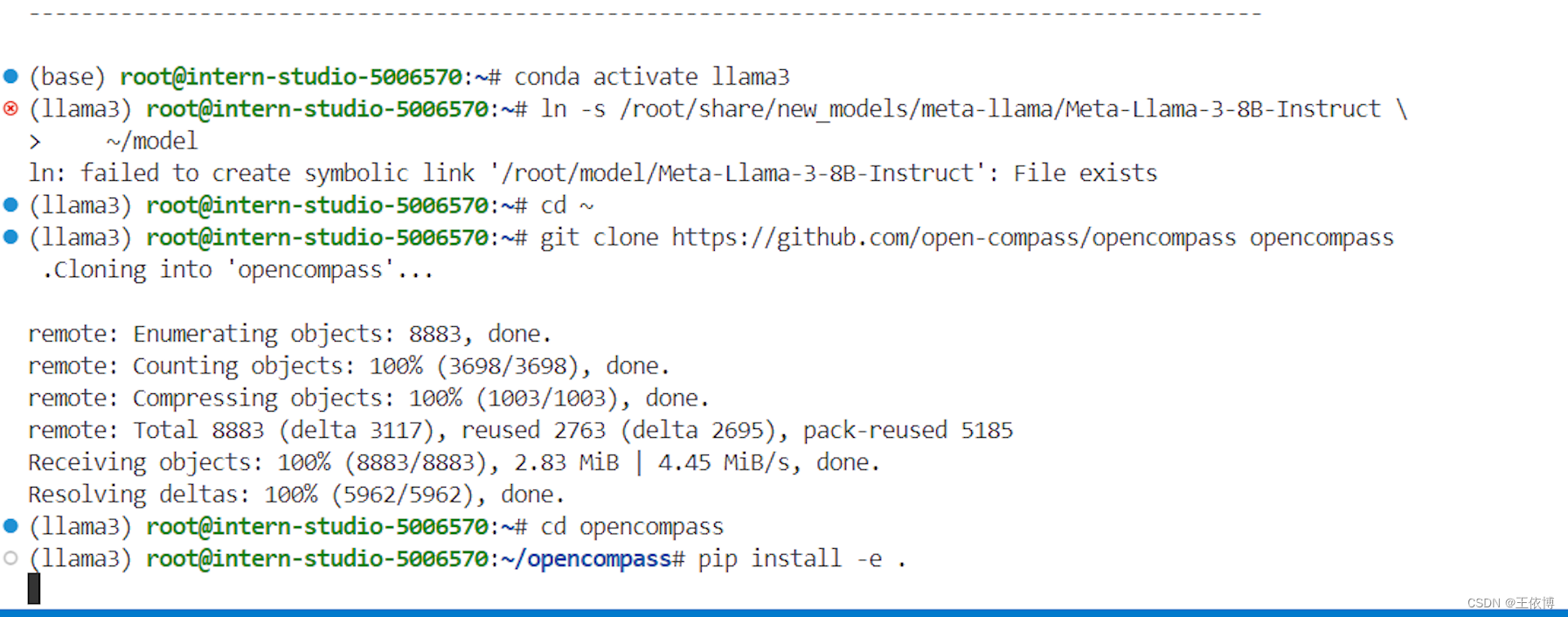

Prerequisites: the llama3 environment, plus a symlink to the Llama-3-8B-Instruct model.
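The symlink step is not spelled out in this post, so here is a minimal sketch of one way to create it from Python. The target path is the one used by the commands in this post; the source path in the commented usage is a hypothetical placeholder, and `link_model` is a helper name invented for this example.

```python
import os
from pathlib import Path


def link_model(source: str, target: str) -> Path:
    """Create (or refresh) a symlink at `target` that points to `source`."""
    src = Path(source)
    dst = Path(target)
    if not src.is_dir():
        raise FileNotFoundError(f"model directory not found: {src}")
    # Make sure the directory that will hold the link exists.
    dst.parent.mkdir(parents=True, exist_ok=True)
    if dst.is_symlink():
        # Replace a stale link so the call is safe to repeat.
        dst.unlink()
    elif dst.exists():
        raise FileExistsError(f"{dst} exists and is not a symlink")
    os.symlink(src, dst, target_is_directory=True)
    return dst


# Usage (the source path below is hypothetical; point it at your own copy of the weights):
# link_model("/path/to/shared/Meta-Llama-3-8B-Instruct",
#            "/root/model/Meta-Llama-3-8B-Instruct")
```

Refusing to overwrite a real directory keeps the helper from silently hiding an existing model copy.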

Install OpenCompass

cd ~

git clone https://github.com/open-compass/opencompass opencompass

cd opencompass

pip install -e .
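After the editable install, a quick sanity check is to confirm that the package can be found by the active Python interpreter. This is only a sketch; `is_installed` is a helper name made up for this example, and it relies on `opencompass` being the import name, as used by the config file later in this post.

```python
import importlib.util


def is_installed(package: str) -> bool:
    """Return True if the top-level `package` can be found on the Python path."""
    return importlib.util.find_spec(package) is not None


# Prints True once `pip install -e .` has succeeded in the active environment.
print("opencompass importable:", is_installed("opencompass"))
```

If this prints False, the most likely cause is that pip ran in a different environment than the one currently activated.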

Then run the following commands:

pip install -r requirements.txt

pip install protobuf

export MKL_SERVICE_FORCE_INTEL=1

export MKL_THREADING_LAYER=GNU

Data preparation

Download the datasets into data/:

wget https://github.com/open-compass/opencompass/releases/download/0.2.2.rc1/OpenCompassData-core-20240207.zip

unzip OpenCompassData-core-20240207.zip

Quick evaluation from the command line

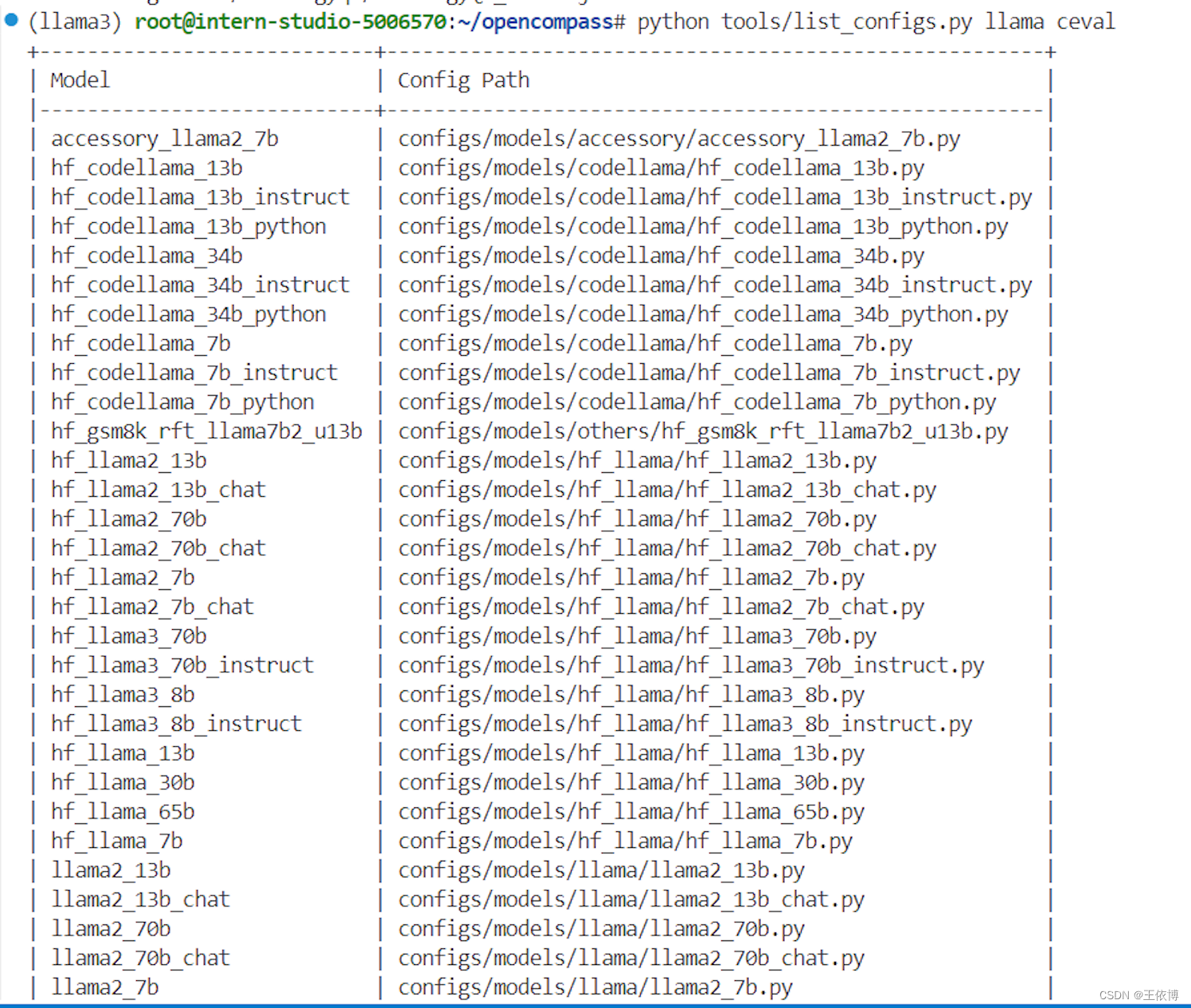

List the configuration files and the supported dataset names

# List all configs

# python tools/list_configs.py

# List all configs related to llama (model) and ceval (dataset)

python tools/list_configs.py llama ceval

Evaluation using C-Eval_gen as an example

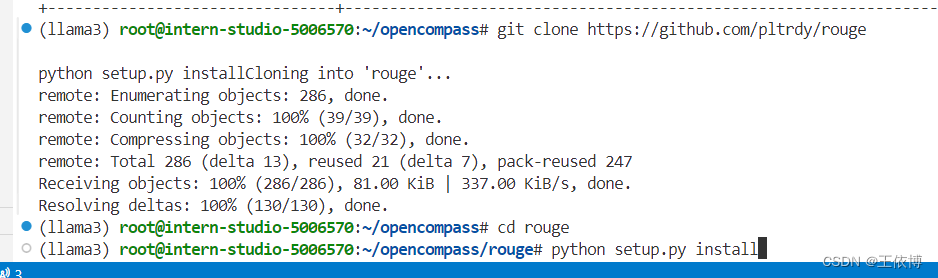

- Run the following commands

git clone https://github.com/pltrdy/rouge

cd rouge

python setup.py install

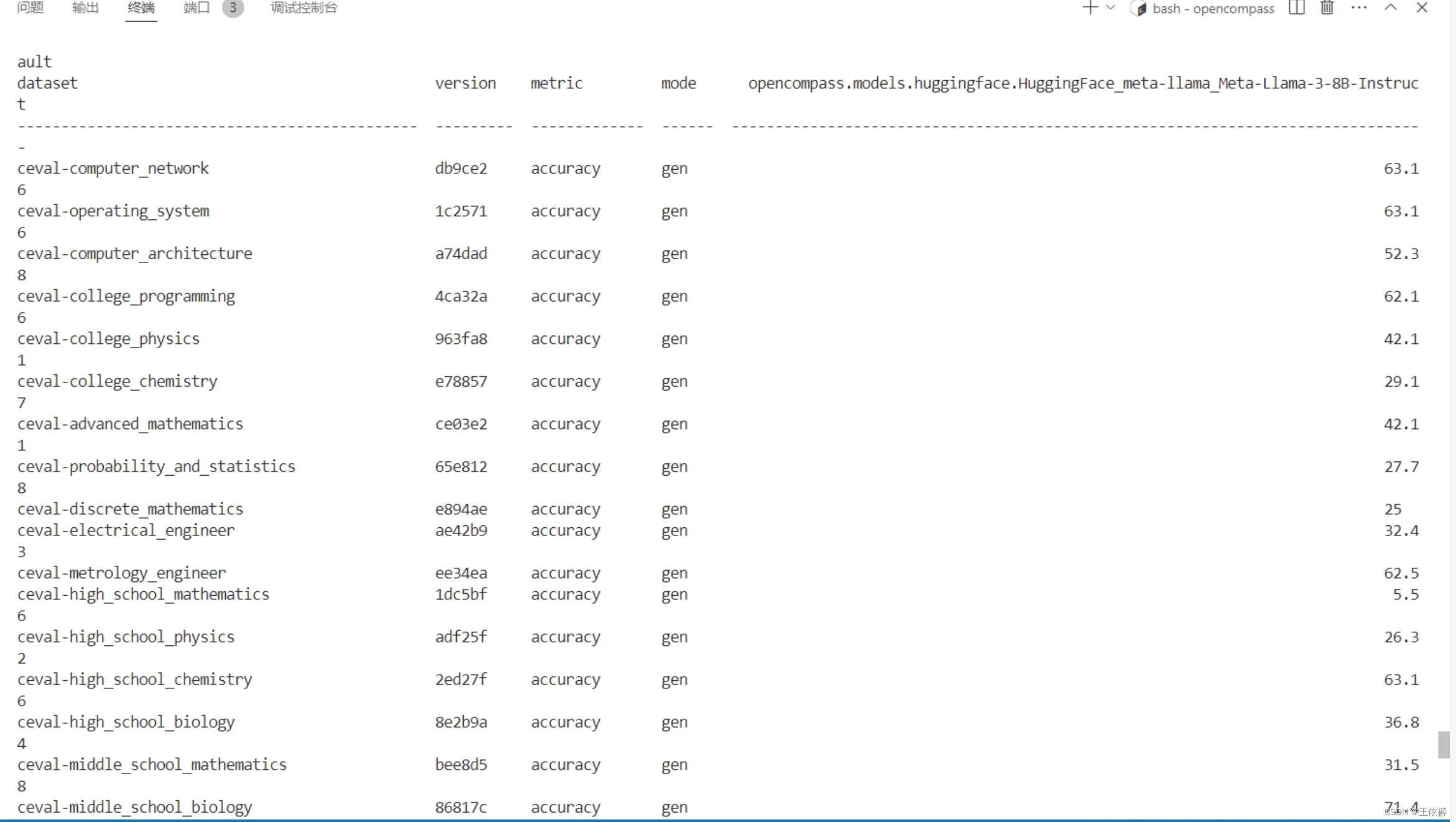

- Run the evaluation

python run.py --datasets ceval_gen --hf-path /root/model/Meta-Llama-3-8B-Instruct --tokenizer-path /root/model/Meta-Llama-3-8B-Instruct --tokenizer-kwargs padding_side='left' truncation='left' trust_remote_code=True --model-kwargs trust_remote_code=True device_map='auto' --max-seq-len 2048 --max-out-len 16 --batch-size 4 --num-gpus 1 --debug

- Result

Quick evaluation with a config file

1. Add the model configuration file eval_llama3_8b_demo.py under configs/

from mmengine.config import read_base

with read_base():

from .datasets.mmlu.mmlu_gen_4d595a import mmlu_datasets

datasets = [*mmlu_datasets]

from opencompass.models import HuggingFaceCausalLM

models = [

dict(

type=HuggingFaceCausalLM,

abbr='Llama3_8b', # 运行完结果展示的名称

path='/root/model/Meta-Llama-3-8B-Instruct', # 模型路径

tokenizer_path='/root/model/Meta-Llama-3-8B-Instruct', # 分词器路径

model_kwargs=dict(

device_map='auto',

trust_remote_code=True

),

tokenizer_kwargs=dict(

padding_side='left',

truncation_side='left',

trust_remote_code=True,

use_fast=False

),

generation_kwargs={"eos_token_id": [128001, 128009]},

batch_padding=True,

max_out_len=100,

max_seq_len=2048,

batch_size=16,

run_cfg=dict(num_gpus=1),

)

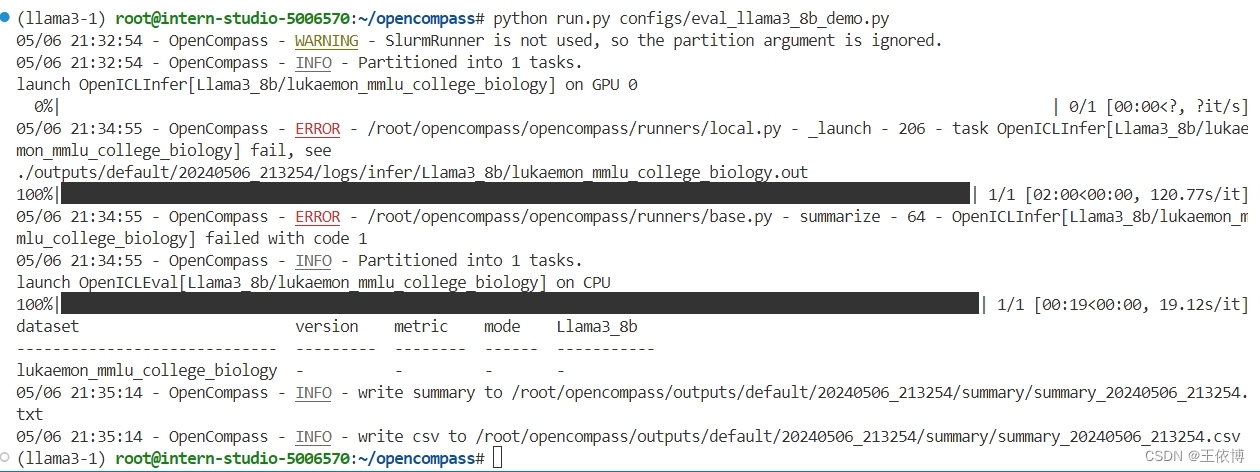

]2.运行测评文件进行测评

python run.py configs/eval_llama3_8b_demo.py

In /root/opencompass/configs/datasets/mmlu/mmlu_gen_4d595a.py, edit mmlu_all_sets so that only the first subset is tested for now.
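As an alternative to editing the dataset file (this is not the approach taken in this post), the dataset list could be sliced inside the evaluation config instead. The sketch below assumes that `mmlu_datasets` holds one config dict per MMLU subset; the stand-in list and its `abbr` values are invented so that the snippet runs on its own.

```python
# Stand-in for `mmlu_datasets`; in the real config it is imported inside
# `with read_base():`. The abbr values here are made up for illustration.
mmlu_datasets = [
    dict(abbr="mmlu_subset_a"),
    dict(abbr="mmlu_subset_b"),
    dict(abbr="mmlu_subset_c"),
]

# Keep only the first subset for a quick smoke test,
# in place of `datasets = [*mmlu_datasets]`.
datasets = mmlu_datasets[:1]

print([d["abbr"] for d in datasets])
```

Slicing in the config leaves the shared dataset file untouched, so restoring the full benchmark only requires changing one line back.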

The run failed, so rerun it with --debug.


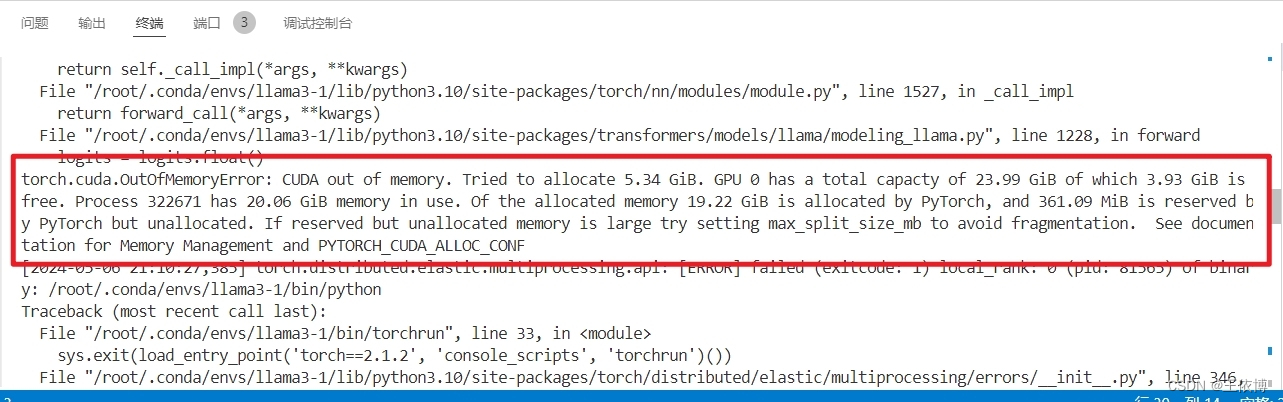

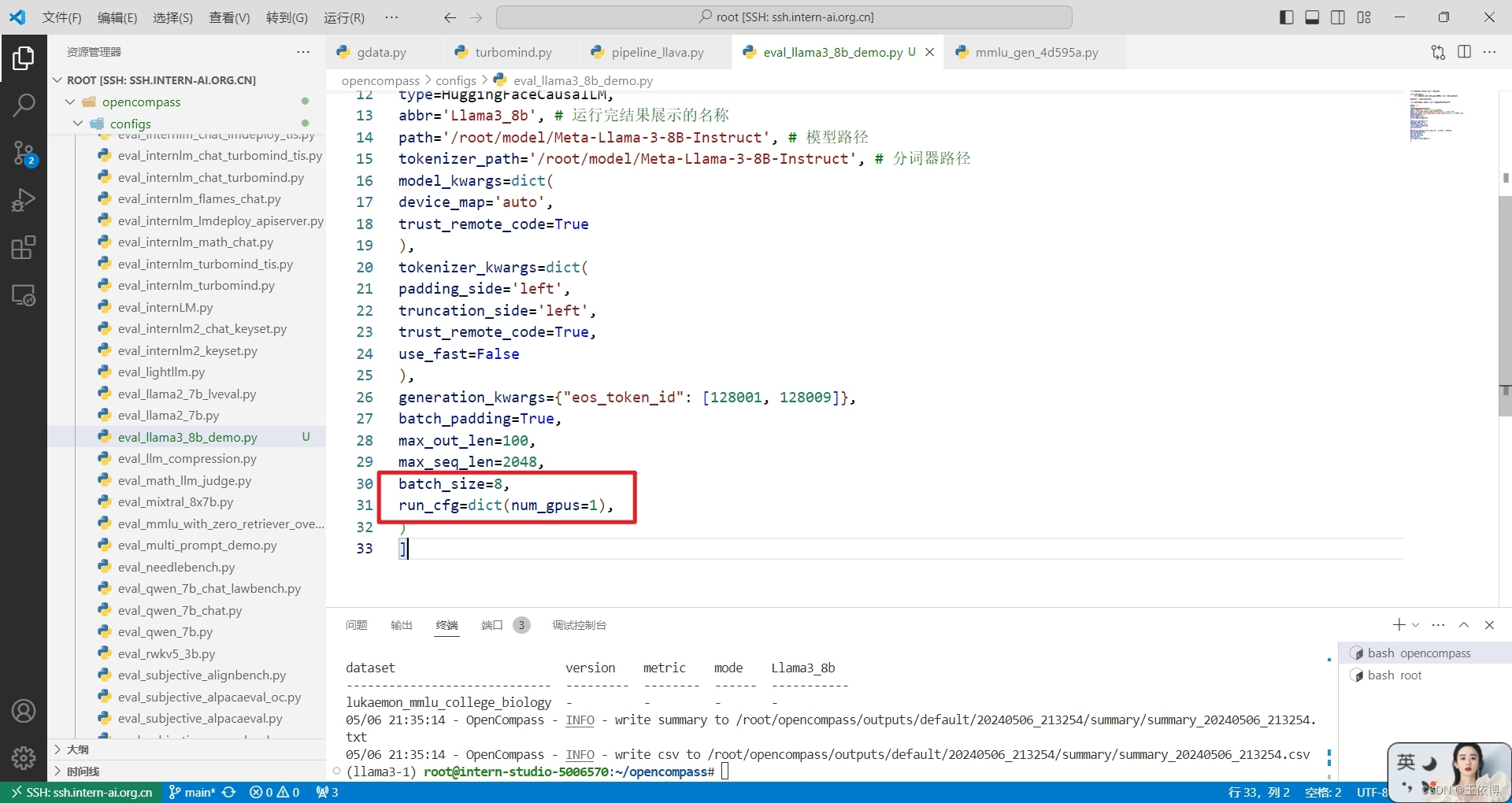

The 30% A100 allocation does not have enough GPU memory 🙃, so reduce batch_size and retry.
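A minimal sketch of how smaller batch sizes could be tried in an orderly way. The helper names `with_batch_size` and `halving_schedule` are invented for this example, the model dict is a trimmed stand-in for the entry in eval_llama3_8b_demo.py, and halving is just one reasonable schedule rather than the value actually used here.

```python
import copy


def with_batch_size(model_cfg: dict, batch_size: int) -> dict:
    """Return a copy of a model config dict with a different batch_size."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    new_cfg = copy.deepcopy(model_cfg)
    new_cfg["batch_size"] = batch_size
    return new_cfg


def halving_schedule(start: int):
    """Yield start, start // 2, ... down to 1 as candidate batch sizes."""
    size = start
    while size >= 1:
        yield size
        size //= 2


# Trimmed stand-in for the model entry in eval_llama3_8b_demo.py.
model = dict(abbr="Llama3_8b", batch_size=16, max_out_len=100)

print(list(halving_schedule(model["batch_size"])))  # [16, 8, 4, 2, 1]
print(with_batch_size(model, 4)["batch_size"])      # 4
```

Working on a copy keeps the original entry intact, so the starting value is still available for comparison after a successful run.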

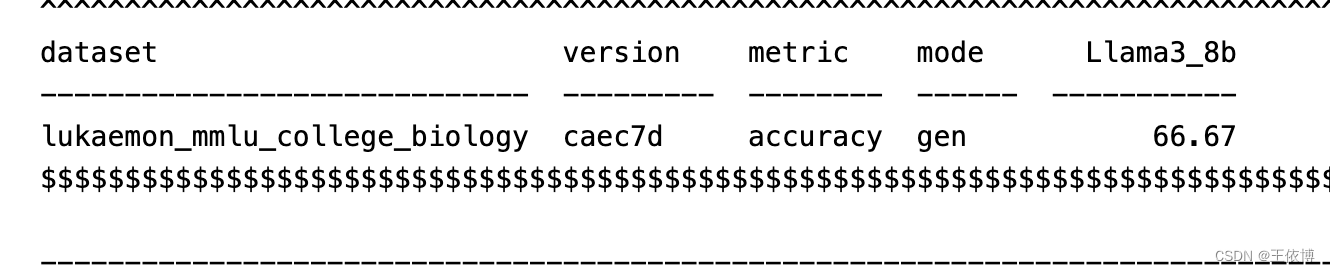

The evaluation is complete.
