1. Introduction

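CLIP score is a metric for evaluating text2img and img2img models: it measures how closely a generated image relates to the original text (the prompt) or to the original image.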

Most Text2Image papers report CLIP Score. This post implements two variants: text-to-image similarity and image-to-image similarity.
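CLIP score is commonly computed as the cosine similarity between two CLIP embeddings (image and text, or image and image), often clamped at zero and rescaled. The sketch below illustrates that arithmetic with toy vectors standing in for real embeddings. The function names and the scale factor of 100 are assumptions made for illustration, not something this post prescribes.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def clip_style_score(embedding_a, embedding_b, scale=100.0):
    """Clamp the cosine similarity at zero, then rescale it.

    scale=100.0 is an assumed convention; other scale factors are also in use.
    """
    return scale * max(cosine_similarity(embedding_a, embedding_b), 0.0)
```

With real CLIP embeddings, a higher value means the image and the prompt (or the two images) are more closely related.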

2. Environment Setup

First, you need a basic PyTorch environment installed; I won't go into the details here.

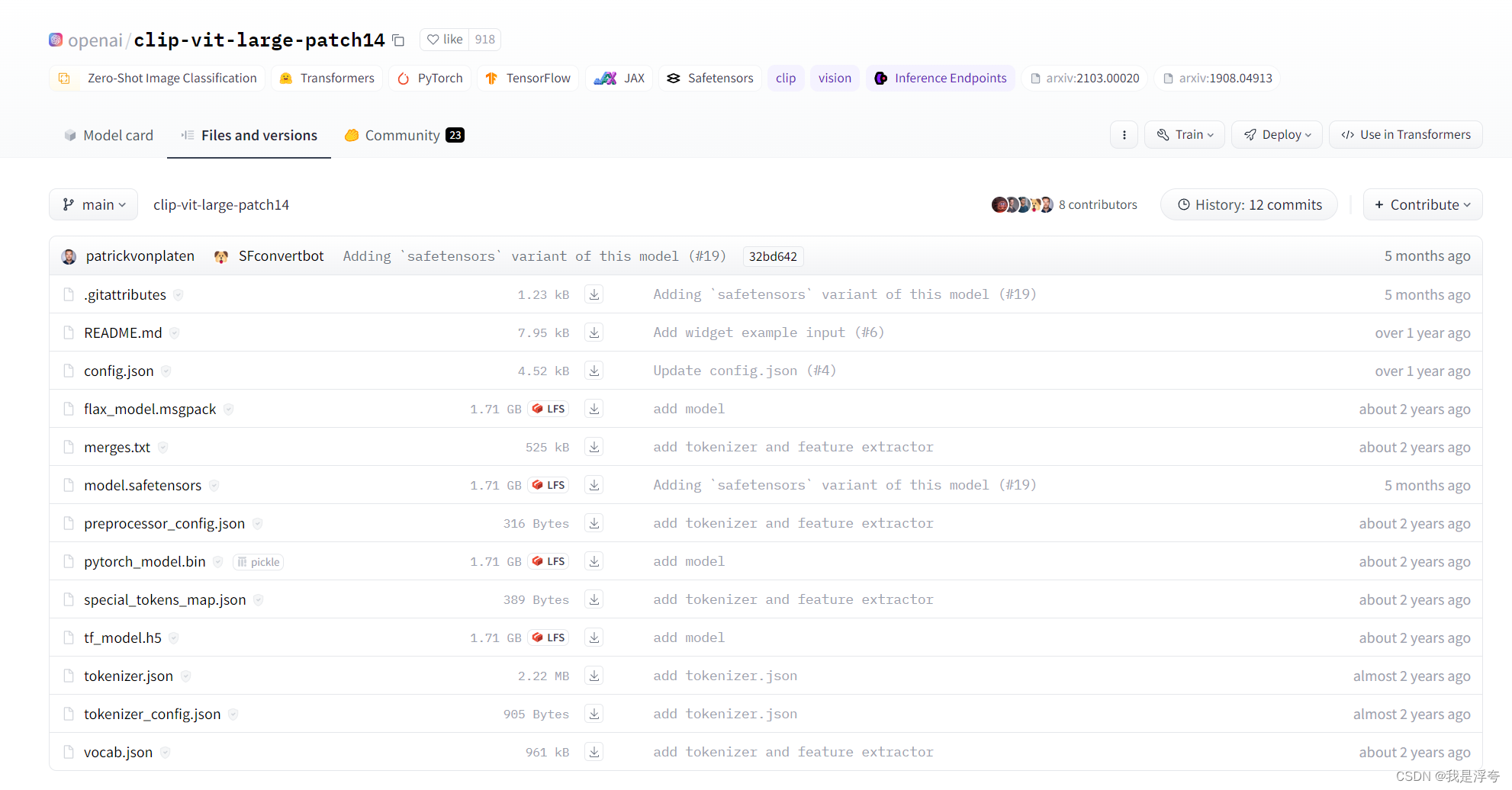

The code uses the CLIP model. I use openai/clip-vit-large-patch14 from Hugging Face; download it and place it in your project folder (be sure to download every file, don't miss any).
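Since a missing file is an easy mistake to make, a quick check of the download folder can help. The sketch below is a hypothetical helper: the function name and the default file list are assumptions, and the list should be compared against the file listing on the model page rather than trusted as complete.

```python
import os

# Assumed file names for illustration; verify them against the model page.
ASSUMED_REQUIRED_FILES = (
    "config.json",
    "preprocessor_config.json",
    "tokenizer_config.json",
    "vocab.json",
    "merges.txt",
)


def find_missing_files(model_dir, required=ASSUMED_REQUIRED_FILES):
    """Return the names in `required` that are not present in model_dir."""
    present = set(os.listdir(model_dir)) if os.path.isdir(model_dir) else set()
    return [name for name in required if name not in present]
```

An empty list means every listed file was found. Note that from_pretrained accepts a local directory path as well as a model id, so the downloaded folder can be passed to it directly.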

3. Text2Image Similarity

A quick walkthrough of the code:

First, the pretrained CLIP model and its processor are loaded. Then several functions are defined to handle the image and text data.

get_all_folders returns all subfolders of a given folder, get_all_images returns all image files in a given folder, and get_clip_score computes the CLIP score between an image and a text.
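The two listing helpers described above might look like the following minimal sketch. It assumes they return sorted names rather than full paths and that images are recognised by file extension; both choices, and the extension list, are assumptions rather than the author's exact implementation.

```python
import os

# Assumed set of image extensions; adjust to match your dataset.
IMAGE_EXTENSIONS = (".png", ".jpg", ".jpeg", ".bmp", ".webp")


def get_all_folders(folder_path):
    """Return the sorted names of the immediate subfolders of folder_path."""
    return sorted(
        name for name in os.listdir(folder_path)
        if os.path.isdir(os.path.join(folder_path, name))
    )


def get_all_images(folder_path):
    """Return the sorted names of image files directly inside folder_path."""
    return sorted(
        name for name in os.listdir(folder_path)
        if os.path.isfile(os.path.join(folder_path, name))
        and name.lower().endswith(IMAGE_EXTENSIONS)
    )
```

Sorting keeps the output deterministic, since os.listdir returns entries in arbitrary order.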

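calculate_clip_scores_for_all_categories is the main processing function. It walks the given image folder, computes the CLIP score for every image in each subfolder (that is, each category), and averages the scores per category.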
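The per-category averaging can be sketched as below. The signature is an assumption: prompts is taken to be a dict mapping each subfolder name to its prompt, and score_fn is a stand-in for any callable that scores one image path against one text (for example a CLIP scoring function). The extension list is likewise assumed.

```python
import os
from statistics import mean


def calculate_clip_scores_for_all_categories(
    image_root, prompts, score_fn, image_exts=(".png", ".jpg", ".jpeg")
):
    """Score every image in each category subfolder and average per category.

    prompts:  dict of category (subfolder) name -> prompt text (assumed layout)
    score_fn: callable (image_path, text) -> float
    Returns (dict of per-category means, mean over all scored images).
    """
    category_means = {}
    all_scores = []
    for category in sorted(os.listdir(image_root)):
        category_dir = os.path.join(image_root, category)
        # Skip plain files and folders that have no prompt
        if not os.path.isdir(category_dir) or category not in prompts:
            continue
        scores = [
            score_fn(os.path.join(category_dir, name), prompts[category])
            for name in sorted(os.listdir(category_dir))
            if name.lower().endswith(image_exts)
        ]
        if scores:
            category_means[category] = mean(scores)
            all_scores.extend(scores)
    overall = mean(all_scores) if all_scores else float("nan")
    return category_means, overall
```

Passing the scoring function in as a parameter keeps the folder traversal independent of the model, so it can be exercised with a dummy scorer before running CLIP on a GPU.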

Finally, we read the text prompts from a file, call calculate_clip_scores_for_all_categories to compute the CLIP scores and the average score for all categories, and print the results.
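Reading the prompts could be as simple as the sketch below. The file format is an assumption: one prompt per line in a UTF-8 text file, with blank lines ignored. The function name read_prompts is hypothetical.

```python
def read_prompts(prompt_file, encoding="utf-8"):
    """Return the non-empty lines of prompt_file, stripped of whitespace."""
    with open(prompt_file, "r", encoding=encoding) as handle:
        return [line.strip() for line in handle if line.strip()]
```

Stripping each line removes the trailing newline, which would otherwise become part of the text passed to the model.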

    import os

    import numpy as np
    import torch
    from PIL import Image
    from tqdm import tqdm
    from transformers import CLIPProcessor, CLIPModel

    # Select the device: use the GPU when available, otherwise the CPU
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    # GPU index 6 is specific to the author's machine; change it to match yours.
    # The guard avoids an error on CPU-only hosts.
    if torch.cuda.is_available():
        torch.cuda.set_device(6)

    # Load the model
    model = CLIPModel.from_pretrained("openai/clip-vit-large-patch14").to(device)
    # Load the processor
    processor = CLIPProcessor.from_pretrained("openai/clip-vit-large-patch14")

    def get_all_folders(folder_path):
        # List every file and folder inside folder_path
        all_files = os.listdir(folder_path)
        # Keep only the folders
        folder_files = [file for file in all_files if os.path.isdir(os.pat

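This article shows how to use the CLIP model to compute similarity for text-to-image (Text2Image) and image-to-image (Image2Image) tasks. It covers environment setup, the code implementation, and what each function does: collecting the images in a folder, computing how closely an image relates to a text, and averaging similarity per category.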
