说在前面

建议在本地模式下运行Hive,数据量不够大的情况下本地模式反而快哦!

设置本地模式相关可以去看

第一题

难点

开窗函数的运用,系统内置函数的运用

数据准备

我们有如下的用户访问数据

| userId | visitDate | visitCount |

|---|---|---|

| u01 | 2017/1/21 | 5 |

| u02 | 2017/1/23 | 6 |

| u03 | 2017/1/22 | 8 |

| u04 | 2017/1/20 | 3 |

| u01 | 2017/1/23 | 6 |

| u01 | 2017/2/21 | 8 |

| u02 | 2017/1/23 | 6 |

| u01 | 2017/2/22 | 4 |

要求使用SQL统计出每个用户的累积访问次数,如下表所示:

| 用户id | 月份 | 小计 | 累积 |

|---|---|---|---|

| u01 | 2017-01 | 11 | 11 |

| u01 | 2017-02 | 12 | 23 |

| u02 | 2017-01 | 12 | 12 |

| u03 | 2017-01 | 8 | 8 |

| u04 | 2017-01 | 3 | 3 |

数据:

u01 2017/1/21 5

u02 2017/1/23 6

u03 2017/1/22 8

u04 2017/1/20 3

u01 2017/1/23 6

u01 2017/2/21 8

u02 2017/1/23 6

u01 2017/2/22 4

创建表

create table action

(userId string,

visitDate string,

visitCount int)

row format delimited fields terminated by "\t";

加载数据

根据自己需求加载,我使用的是load data语法,有不了解的可以去看

实战之路

需求一:统计出每个用户的每月访问次数及累计访问次数

思路

需要统计的是每个用户的每月访问次数及累计访问次数。

很简单,拆需求!

第一步要统计每个用户的每月访问次数。但我们观察上文中结果集可知访问的时间需要从yyyy/M/dd转变为yyyy-MM,所以可以先转变字段格式,再来求,因此:

第一步:修改月份格式

第二步:根据第一步的结果集求出每个用户的每月访问次数

第三步:根据第二步的结果集求出每个用户的累计访问次数

OK,思路清晰,直奔主题!

1:修改月份格式

select userid,date_format(regexp_replace(visitdate,"/","-"),"yyyy-MM") the_month,visitcount

from extend1_visit; #作为t1

2:根据第一步的结果集求出每个用户的每月访问次数

select

userid,the_month,sum(visitcount)

from

()t1

group by userid,the_month;

即:

select

userid,the_month,sum(visitcount) month_visit

from

(select userid,date_format(regexp_replace(visitdate,"/","-"),"yyyy-MM") the_month,visitcount

from extend1_visit)t1

group by userid,the_month #作为t2

3:根据2求出每个用户的累计访问次数

select

userid,the_month,month_visit,sum(month_visit) over(partition by userid rows between unbounded preceding and current row) all_visit

from ()t2

即:

select

userid,the_month,month_visit,sum(month_visit) over(partition by userid rows between unbounded preceding and current row) all_visit

from (select

userid,the_month,sum(visitcount) month_visit

from

(select userid,date_format(regexp_replace(visitdate,"/","-"),"yyyy-MM") the_month,visitcount

from extend1_visit)t1

group by userid,the_month)t2;

OK,需求解决,如果你直接看结果的SQL的话难免觉得长,但是在清晰的思路下,一步一步生成它,就很容易了!

第二题

难点

rank()函数配合开窗函数的运用

数据准备

有50W个京东店铺,每个顾客访问任何一个店铺的任何一个商品时都会产生一条访问日志,访问日志存储的表名为visit,访客的用户id为user_id,被访问的店铺名称为shop,请统计:

u1 a

u2 b

u1 b

u1 a

u3 c

u4 b

u1 a

u2 c

u5 b

u4 b

u6 c

u2 c

u1 b

u2 a

u2 a

u3 a

u5 a

u5 a

u5 a

咳咳,数据仅作为练习使用,因此数据量较小,问题不大问题不大。

创建表

create table visit(user_id string,shop string) row format delimited fields terminated

by '\';

加载数据

根据自己需求加载,我使用的是load data语法,有不了解的可以去看

实战之路

需求一:每个店铺的UV(访问数)

思路:即按照店铺分组,获取用户名的数量(不去重)

select

shop,count(user_id) num

from extend2_visit

group by

shop;

so easy!

需求二:每个店铺访问次数top3的访客信息。输出店铺名称、访客id、访问次数

思路:

1 首先获取每个店铺的访客信息

2 获取rank

3 获取top3

1 首先获取每个店铺的访客信息

select shop,user_id,count(user_id) vt

from

extend2_visit

group by shop,user_id; #-->t1

2 获取rank

select

shop,user_id,vt,rank() over(partition by shop,user_id order by vt) rk

from

()t1

where rk<=3;

即:

select

shop,user_id,vt,rank() over(partition by shoporder by vt) rk

from

(select shop,user_id,count(user_id) vt

from

extend2_visit

group by shop,user_id)t1; #-->t2

3 获取top3

select shop,user_id,rk

from () t2

where rk <=3;

即:

select shop,user_id,rk

from (select

shop,user_id,vt,rank() over(partition by shop order by vt) rk

from

(select shop,user_id,count(user_id) vt

from

extend2_visit

group by shop,user_id)t1) t2

where rk <=3;

结果集如下:我从Linux复制粘贴来的。。。。

±------±---------±----+

| shop | user_id | rk |

±------±---------±----+

| a | u3 | 1 |

| a | u2 | 2 |

| a | u1 | 3 |

| a | u5 | 3 |

| b | u2 | 1 |

| b | u5 | 1 |

| b | u1 | 3 |

| b | u4 | 3 |

| c | u3 | 1 |

| c | u6 | 1 |

| c | u2 | 3 |

±------±---------±----+

第三题

难点

很多,思路也比较发散~~~

数据准备

以下表记录了用户每天的蚂蚁森林低碳生活领取的记录流水。

table_name:user_low_carbon

user_id data_dt low_carbon

用户 日期 减少碳排放(g)

蚂蚁森林植物换购表,用于记录申领环保植物所需要减少的碳排放量

table_name: plant_carbon

plant_id plant_name low_carbon

植物编号 植物名 换购植物所需要的碳

提供的数据说明:

(使用的话请去除每个记录之间的空格)

user_low_carbon:

u_001 2017/1/1 10

u_001 2017/1/2 150

u_001 2017/1/2 110

u_001 2017/1/2 10

u_001 2017/1/4 50

u_001 2017/1/4 10

u_001 2017/1/6 45

u_001 2017/1/6 90

u_002 2017/1/1 10

u_002 2017/1/2 150

u_002 2017/1/2 70

u_002 2017/1/3 30

u_002 2017/1/3 80

u_002 2017/1/4 150

u_002 2017/1/5 101

u_002 2017/1/6 68

u_003 2017/1/1 20

u_003 2017/1/2 10

u_003 2017/1/2 150

u_003 2017/1/3 160

u_003 2017/1/4 20

u_003 2017/1/5 120

u_003 2017/1/6 20

u_003 2017/1/7 10

u_003 2017/1/7 110

u_004 2017/1/1 110

u_004 2017/1/2 20

u_004 2017/1/2 50

u_004 2017/1/3 120

u_004 2017/1/4 30

u_004 2017/1/5 60

u_004 2017/1/6 120

u_004 2017/1/7 10

u_004 2017/1/7 120

u_005 2017/1/1 80

u_005 2017/1/2 50

u_005 2017/1/2 80

u_005 2017/1/3 180

u_005 2017/1/4 180

u_005 2017/1/4 10

u_005 2017/1/5 80

u_005 2017/1/6 280

u_005 2017/1/7 80

u_005 2017/1/7 80

u_006 2017/1/1 40

u_006 2017/1/2 40

u_006 2017/1/2 140

u_006 2017/1/3 210

u_006 2017/1/3 10

u_006 2017/1/4 40

u_006 2017/1/5 40

u_006 2017/1/6 20

u_006 2017/1/7 50

u_006 2017/1/7 240

u_007 2017/1/1 130

u_007 2017/1/2 30

u_007 2017/1/2 330

u_007 2017/1/3 30

u_007 2017/1/4 530

u_007 2017/1/5 30

u_007 2017/1/6 230

u_007 2017/1/7 130

u_007 2017/1/7 30

u_008 2017/1/1 160

u_008 2017/1/2 60

u_008 2017/1/2 60

u_008 2017/1/3 60

u_008 2017/1/4 260

u_008 2017/1/5 360

u_008 2017/1/6 160

u_008 2017/1/7 60

u_008 2017/1/7 60

u_009 2017/1/1 70

u_009 2017/1/2 70

u_009 2017/1/2 70

u_009 2017/1/3 170

u_009 2017/1/4 270

u_009 2017/1/5 70

u_009 2017/1/6 70

u_009 2017/1/7 70

u_009 2017/1/7 70

u_010 2017/1/1 90

u_010 2017/1/2 90

u_010 2017/1/2 90

u_010 2017/1/3 90

u_010 2017/1/4 90

u_010 2017/1/4 80

u_010 2017/1/5 90

u_010 2017/1/5 90

u_010 2017/1/6 190

u_010 2017/1/7 90

u_010 2017/1/7 90

u_011 2017/1/1 110

u_011 2017/1/2 100

u_011 2017/1/2 100

u_011 2017/1/3 120

u_011 2017/1/4 100

u_011 2017/1/5 100

u_011 2017/1/6 100

u_011 2017/1/7 130

u_011 2017/1/7 100

u_012 2017/1/1 10

u_012 2017/1/2 120

u_012 2017/1/2 10

u_012 2017/1/3 10

u_012 2017/1/4 50

u_012 2017/1/5 10

u_012 2017/1/6 20

u_012 2017/1/7 10

u_012 2017/1/7 10

u_013 2017/1/1 50

u_013 2017/1/2 150

u_013 2017/1/2 50

u_013 2017/1/3 150

u_013 2017/1/4 550

u_013 2017/1/5 350

u_013 2017/1/6 50

u_013 2017/1/7 20

u_013 2017/1/7 60

u_014 2017/1/1 220

u_014 2017/1/2 120

u_014 2017/1/2 20

u_014 2017/1/3 20

u_014 2017/1/4 20

u_014 2017/1/5 250

u_014 2017/1/6 120

u_014 2017/1/7 270

u_014 2017/1/7 20

u_015 2017/1/1 10

u_015 2017/1/2 20

u_015 2017/1/2 10

u_015 2017/1/3 10

u_015 2017/1/4 20

u_015 2017/1/5 70

u_015 2017/1/6 10

u_015 2017/1/7 80

u_015 2017/1/7 60

plant_carbon:

p001 梭梭树 17

p002 沙柳 19

p003 樟子树 146

p004 胡杨 215

创建表

create table user_low_carbon(user_id String,data_dt String,low_carbon int) row format delimited fields terminated by '\t';

create table plant_carbon(plant_id string,plant_name String,low_carbon int) row format delimited fields terminated by '\t';

加载数据

根据自己需求加载,我使用的是load data语法,有不了解的可以去看

实战之路

需求一:

问题:假设2017年1月1日开始记录低碳数据(user_low_carbon),

假设2017年10月1日之前满足申领条件的用户都申领了一颗p004-胡杨,

剩余的能量全部用来领取“p002-沙柳”。

统计在10月1日累计申领“p002-沙柳"排名前10的用户信息;以及他比后一名多领了几颗沙柳。

令小白望而生畏的需求~~~~

让我们一起来拆分吧!

我的思路:

1 查找 日期小于等于10月1日的信息

2 根据1的结果 查找 每个用户的总能量group by后having 条件 总能量>查询plant表胡杨的能量

3 根据2的结果 查找总能量排名前十的用户信息

4 根据3的结果 查找(总能量-胡杨的能量)/沙柳的能量 别名兑换的沙柳数

5 根据4的结果 查找当前行的沙柳数和下一行沙柳数的数目

6 根据5的结果 查找当前行的沙柳数减去上一行的数目

1 查找 日期小于等于10月1日的信息

① 转换日期格式(Hive只认识以’-'分隔的日期,因此需要转换)

select

user_id,regexp_replace(data_dt,'/','-') u_date,low_carbon

from user_low_carbon; -->t1

②根据①的结果筛选日期小于‘2017-10-1’的信息

select user_id,u_date,low_carbon

from ()t1

where u_date<='2017-10-1';

②根据①的结果筛选日期小于‘2017-10-1’的信息

select user_id,u_date,low_carbon

from ()t1

where u_date<=‘2017-10-1’;

即:

select user_id,u_date,low_carbon

from (

select

user_id,regexp_replace(data_dt,'/','-') u_date,low_carbon

from user_low_carbon)t1

where datediff(u_date,'2017-10-1')<0; -->t2

2 根据1的结果 查找 每个用户的总能量group by后having 条件 总能量>查询plant表胡杨的能量

select user_id,sum(low_carbon) all_carbon

from ()t2

group by user_id

having all_carbon>(select low_carbon from plant_carbon where plant_id ='p004');

即:

select user_id,sum(low_carbon) all_carbon

from (select user_id,u_date,low_carbon

from (

select

user_id,regexp_replace(data_dt,'/','-') u_date,low_carbon

from user_low_carbon)t1

where datediff(u_date,'2017-10-1')<0)t2

group by user_id

having all_carbon>(select low_carbon from plant_carbon where plant_id ='p004')

-->t3

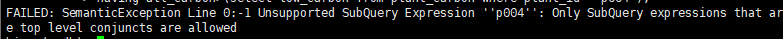

但是很遗憾,Hive的子查询不允许作用在Having子句中。

因此我决定先分组,然后以分组后的结果作为数据源使用where过滤。

第二步正确做法如下:

①分组

select user_id,sum(low_carbon) all_carbon

from ()t2

group by user_id

即:

select user_id,sum(low_carbon) all_carbon

from (select user_id,u_date,low_carbon

from (

select

user_id,regexp_replace(data_dt,'/','-') u_date,low_carbon

from user_low_carbon)t1

where datediff(u_date,'2017-10-1')<0)t2

group by user_id

; -->t3

②过滤

select user_id,all_carbon

from ()t3

where all_carbon>(select low_carbon from plant_carbon where plant_id='p004')

即:

select user_id,all_carbon

from (select user_id,sum(low_carbon) all_carbon

from (select user_id,u_date,low_carbon

from (

select

user_id,regexp_replace(data_dt,'/','-') u_date,low_carbon

from user_low_carbon)t1

where datediff(u_date,'2017-10-1')<0)t2

group by user_id)t3

where all_carbon>(select low_carbon from plant_carbon where plant_id='p004') -->t4

3 根据2的结果 查找总能量排名前十的用户信息

select user_id,all_carbon

from ()t4

order by all_carbon desc limit 10; -->t5

即:

即:

select user_id,all_carbon

from (select user_id,all_carbon

from (select user_id,sum(low_carbon) all_carbon

from (select user_id,u_date,low_carbon

from (

select

user_id,regexp_replace(data_dt,'/','-') u_date,low_carbon

from user_low_carbon)t1

where datediff(u_date,'2017-10-1')<0)t2

group by user_id)t3

where all_carbon>(select low_carbon from plant_carbon where plant_id='p004'))t4

order by all_carbon desc limit 10; -->t5

4 根据3的结果 查找(总能量-胡杨的能量)/沙柳的能量 别名兑换的沙柳数

select user_id,all_carbon,(all_carbon-(select low_carbon from plant_carbon where plant_id='p004'))/(select low_carbon from plant_carbon where plant_id='p002') sls

frin ()t5;

即:

select user_id,all_carbon,(all_carbon-(select low_carbon from plant_carbon where plant_id='p004'))/(select low_carbon from plant_carbon where plant_id='p002') sls

from (select user_id,all_carbon

from (select user_id,all_carbon

from (select user_id,sum(low_carbon) all_carbon

from (select user_id,u_date,low_carbon

from (

select

user_id,regexp_replace(data_dt,'/','-') u_date,low_carbon

from user_low_carbon)t1

where datediff(u_date,'2017-10-1')<0)t2

group by user_id)t3

where all_carbon>(select low_carbon from plant_carbon where plant_id='p004'))t4

order by all_carbon desc limit 10)t5; -->t6

5 根据4的结果 查找当前行的沙柳数和下一行沙柳数的数目

select user_id,all_carbon,sls,lead(sls,1) over(order by sls desc) morethannext

from ()t6;

即:

select user_id,all_carbon,sls,lead(sls,1) over(order by sls desc) morethannext

from (select user_id,all_carbon,(all_carbon-(select low_carbon from plant_carbon where plant_id='p004'))/(select low_carbon from plant_carbon where plant_id='p002') sls

from (select user_id,all_carbon

from (select user_id,all_carbon

from (select user_id,sum(low_carbon) all_carbon

from (select user_id,u_date,low_carbon

from (

select

user_id,regexp_replace(data_dt,'/','-') u_date,low_carbon

from user_low_carbon)t1

where datediff(u_date,'2017-10-1')<0)t2

group by user_id)t3

where all_carbon>(select low_carbon from plant_carbon where plant_id='p004'))t4

order by all_carbon desc limit 10)t5)t6; -->t7

6 根据5的结果 查找当前行的沙柳数减去上一行的数目

select user_id,all_carbon,sls,(sls-morethannext) more_thannext

from (select user_id,all_carbon,sls,lead(sls,1) over(order by sls desc) morethannext

from (select user_id,all_carbon,(all_carbon-(select low_carbon from plant_carbon where plant_id='p004'))/(select low_carbon from plant_carbon where plant_id='p002') sls

from (select user_id,all_carbon

from (select user_id,all_carbon

from (select user_id,sum(low_carbon) all_carbon

from (select user_id,u_date,low_carbon

from (

select

user_id,regexp_replace(data_dt,'/','-') u_date,low_carbon

from user_low_carbon)t1

where datediff(u_date,'2017-10-1')<0)t2

group by user_id)t3

where all_carbon>(select low_carbon from plant_carbon where plant_id='p004'))t4

order by all_carbon desc limit 10)t5)t6)t7;

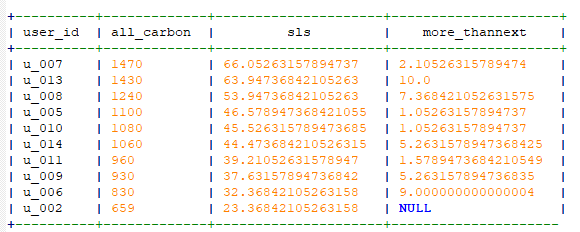

结果如下:

需求二:

问题:查询user_low_carbon表中每日流水记录,条件为:

用户在2017年,连续三天(或以上)的天数里,

每天减少碳排放(low_carbon)都超过100g的用户低碳流水。

思路:

步骤1:筛选2017年的数据及每天的流水

步骤2:按user_id分区,求前天,昨天,当天、明天、后天的流水

步骤3:根据2的结果判断

在当天流水>100的前提下:

明天>100 and 后天>100

or

昨天>100 and 明天>100

or

昨天>100 and 前天>100

步骤1:筛选2017年的数据及每天的流水

select user_id,data_dt,sum(low_carbon) day_carbon

from user_low_carbon

where year(regexp_replace(data_dt,"/","-"))="2017"

group by data_dt,user_id -->t1

步骤2:按user_id分区,求前天,昨天,当天、明天、后天的流水

select user_id,data_dt,day_carbon,lag(day_carbon,2) over(partition by user_id) least_low,lag(day_carbon,1) over(partition by user_id) last_low,lead(day_carbon,1) over(partition by user_id) tomor_low,lead(day_carbon,2) over(partition by user_id) next_low

from

()t1;

即:

select user_id,data_dt,day_carbon,lag(day_carbon,2) over(partition by user_id) least_low,lag(day_carbon,1) over(partition by user_id) last_low,lead(day_carbon,1) over(partition by user_id) tomor_low,lead(day_carbon,2) over(partition by user_id) next_low

from

(select user_id,data_dt,sum(low_carbon) day_carbon

from user_low_carbon

where year(regexp_replace(data_dt,"/","-"))="2017"

group by data_dt,user_id)t1; -->t2

步骤3:根据2的结果判断

在当天流水>100的前提下:

明天>100 and 后天>100

or

昨天>100 and 明天>100

or

昨天>100 and 前天>100

select user_id,data_dt,day_carbon

from ()t2

where day_carbon >100 and (tomor_low>100 and next_low >100) or (last_low>100 and tomor_low>100) or (last_low>100 and least_low>100);

即:

select user_id,data_dt,day_carbon

from (select user_id,data_dt,day_carbon,lag(day_carbon,2) over(partition by user_id) least_low,lag(day_carbon,1) over(partition by user_id) last_low,lead(day_carbon,1) over(partition by user_id) tomor_low,lead(day_carbon,2) over(partition by user_id) next_low

from

(select user_id,data_dt,sum(low_carbon) day_carbon

from user_low_carbon

where year(regexp_replace(data_dt,"/","-"))="2017"

group by data_dt,user_id)t1)t2

where day_carbon >100 and ((tomor_low>100 and next_low >100) or (last_low>100 and tomor_low>100) or (last_low>100 and least_low>100));

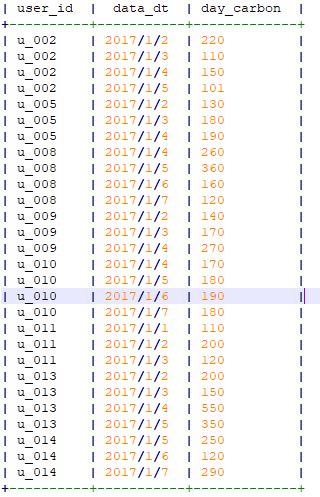

结果如下:

说在最后

以前学习Mysql的时候遇到这种题目难免头大,当我能够将需求拆分,这些很长很长的sql在我眼中反而变成了将一个方法的结果作为参数代入下一个方法。不怕它长,只要能运行出来就是好代码!

本文通过三个复杂的数据分析案例,详细解析了如何使用Hive SQL进行用户访问统计和能量兑换分析。首先,统计用户每月访问次数及累计访问次数,利用开窗函数和日期格式转换;其次,计算每个店铺的UV和访问次数Top3的访客信息,应用rank()函数;最后,处理蚂蚁森林能量领取记录,找出在特定日期前满足条件的用户及其兑换沙柳数量,并计算相邻用户间的差距。整个过程展示了Hive SQL在大数据处理中的实用性和灵活性。

本文通过三个复杂的数据分析案例,详细解析了如何使用Hive SQL进行用户访问统计和能量兑换分析。首先,统计用户每月访问次数及累计访问次数,利用开窗函数和日期格式转换;其次,计算每个店铺的UV和访问次数Top3的访客信息,应用rank()函数;最后,处理蚂蚁森林能量领取记录,找出在特定日期前满足条件的用户及其兑换沙柳数量,并计算相邻用户间的差距。整个过程展示了Hive SQL在大数据处理中的实用性和灵活性。

1661

1661