简要:爬取网站近期疫情数据,并存入数据库中。

一、建立数据库

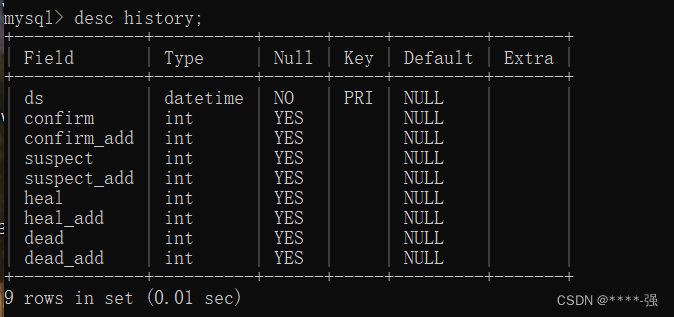

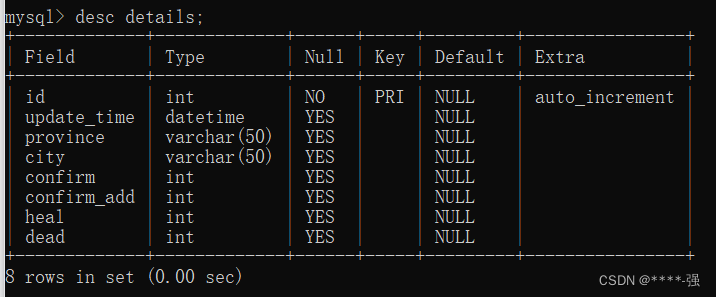

数据库包含history表,detail表

history表:

CREATE TABLE `history` (

`ds` datetime NOT NULL COMMENT '日期',

`confirm` int(11) DEFAULT NULL COMMENT '累计确诊',

`confirm_add` int(11) DEFAULT NULL COMMENT '当日新增确诊',

`suspect` int(11) DEFAULT NULL COMMENT '剩余疑似',

`suspect_add` int(11) DEFAULT NULL COMMENT '当日新增疑似',

`heal` int(11) DEFAULT NULL COMMENT '累计治愈',

`heal_add` int(11) DEFAULT NULL COMMENT '当日新增治愈',

`dead` int(11) DEFAULT NULL COMMENT '累计死亡',

`dead_add` int(11) DEFAULT NULL COMMENT '当日新增死亡',

PRIMARY KEY (`ds`) USING BTREE )

ENGINE=InnoDB DEFAULT CHARSET=utf8mb4;查看:

detail表:

CREATE TABLE `details` (

`id` int(11) NOT NULL AUTO_INCREMENT,

`update_time` datetime DEFAULT NULL COMMENT '数据最后更新时间',

`province` varchar(50) DEFAULT NULL COMMENT '省',

`city` varchar(50) DEFAULT NULL COMMENT '市',

`confirm` int(11) DEFAULT NULL COMMENT '累计确诊',

`confirm_add` int(11) DEFAULT NULL COMMENT '新增确诊',

`heal` int(11) DEFAULT NULL COMMENT '累计治愈',

`dead` int(11) DEFAULT NULL COMMENT '累计死亡',

PRIMARY KEY (`id`) )

ENGINE=InnoDB DEFAULT CHARSET=utf8mb4; 查看:

二、爬取数据,并导入数据库

rt_02_util.py(封装mysql数据的公共操作)

import pymysql

# 获取数据库连接

def get_conn():

conn = pymysql.connect(

host="localhost",port=3306,

user="root",password="******",

database="sn_python",charset="utf8"

)

cursor = conn.cursor()

# 返回数据库连接和cursor

return conn,cursor

# 释放资源

def close(conn,curosr):

conn.close()

curosr.close()rt_01_爬取疫情数据.py

def get_history():爬取history表中的数据

def insert_history():插入数据到history表

def get_details():爬取details表中的数据

def insert_details():插入数据到details表

import requests

import json

import time

import day07.rt_util as util

# https://view.inews.qq.com/g2/getOnsInfo?name=disease_h5:details表的数据

# https://view.inews.qq.com/g2/getOnsInfo?name=disease_other:history表的数据

def get_history():

"""爬取history表中的数据"""

# 确定爬取数据的url地址

url = "https://view.inews.qq.com/g2/getOnsInfo?name=disease_other"

# 发送请求,获取响应

response = requests.get(url)

# 获取响应体中的数据

json_data = response.text

print(json_data)

# 把json字符串转换成字典格式

datas = json.loads(json_data)

print(datas)

print(type(json_data), type(datas))

datas = datas['data']

print(datas)

print(type(datas))

datas = json.loads(datas)

print(type(datas))

# 遍历字典获取字典中的数据

# for key in datas.keys():

# print(key)

# chinaDayList:history表中的累计数据(4条)

# 定义字典,存储爬取到的history表的数据

history = {}

for day in datas['chinaDayList']:

# print(day)

# 累计数据:日期,累计确诊,先有疑似,累计治愈,累计死亡

ds = day['y']+"."+day['date']

# 把日期字符串处理成数据表中需要的格式

tup_ds = time.strptime(ds, "%Y.%m.%d")

ds = time.strftime("%Y-%m-%d", tup_ds)

confirm = day['confirm']

suspect = day['suspect']

dead = day['dead']

heal = day['heal']

# print(ds, confirm, suspect, dead, heal)

history[ds] = {"confirm": confirm, "suspect": suspect, "heal": heal, "dead": dead}

print("*"*100)

# chinaDayAddList:history表中的新增数据(4条)

for day_add in datas['chinaDayAddList']:

# print(day_add)

# 新增数据:日期,新增确诊,新增疑似,新增治愈,新增死亡

ds = day_add['y']+"."+day_add['date']

tup_ds = time.strptime(ds, "%Y.%m.%d")

ds = time.strftime("%Y-%m-%d", tup_ds)

confirm_add = day_add['confirm']

suspect_add = day_add['suspect']

heal_add = day_add['heal']

dead_add = day_add['dead']

# print(ds, confirm_add, suspect_add, heal_add, dead_add)

history[ds].update({"confirm_add": confirm_add, "suspect_add": suspect_add, "heal_add": heal_add, "dead_add": dead_add})

for item in history.items():

print(item)

# 返回爬取到的数据字典

return history

def insert_history():

"""插入数据到history表"""

# 获取数据

history = get_history()

# 获取数据库连接和cursor

conn, cursor = util.get_conn()

# 执行添加操作

sql = "insert into history values(%s, %s, %s, %s, %s, %s, %s, %s, %s)"

for key,value in history.items():

cursor.execute(sql, [key, value['confirm'], value['confirm_add'],

value['suspect'], value['suspect_add'],

value['heal'], value['heal_add'],

value['dead'], value['dead_add']])

# 提交事务

conn.commit()

# 释放资源

util.close(conn, cursor)

print("history表数据添加完成!")

def get_details():

"""爬取details表中的数据"""

# url = "https://view.inews.qq.com/g2/getOnsInfo?name=disease_h5"

url = "https://voice.baidu.com/api/newpneumonia?from=page"

response = requests.get(url)

json_str = response.text

print(json_str)

# 把json字符串转换为字典格式的数据

datas = json.loads(json_str)

print(datas)

datas = datas['data']

print(datas)

print(type(datas))

# 获取最后更新时间

upateTime = datas['upateTime']

print(upateTime)

# for key in datas.keys():

# print(key)

# 存储爬取到details表中的数据

details = []

for item in datas['caseList']:

# print(item)

province = item['area']

# print(province)

# print("*"*50)

for city in item['subList']:

c_name = city['city']

confirm = city['confirmed']

if len(confirm) == 0:

confirm = 0

confirm_add = city['confirmedRelative']

if len(confirm_add) == 0:

confirm_add = 0

# print(type(confirm_add), len(confirm_add))

dead = city['died']

if len(dead) == 0:

dead = 0

heal = city['crued']

if len(heal) == 0:

heal = 0

# 追加数据

details.append([upateTime, province, c_name, confirm, confirm_add, heal, dead])

# 返回爬取到的数据

# for data in details:

# print(data)

return details

def insert_details():

"""插入数据到details表"""

# 获取数据

details = get_details()

# 获取数据库连接

conn, cursor = util.get_conn()

sql = "insert into details values(null, %s, %s, %s, %s, %s, %s, %s)"

for item in details:

cursor.execute(sql, item)

# 提交事务

conn.commit()

# 释放资源

util.close(conn, cursor)

print("details表中数据添加成功!")

#测试模块

if __name__ == '__main__':

# get_history()

insert_history()

# get_details()

# insert_details()查看:

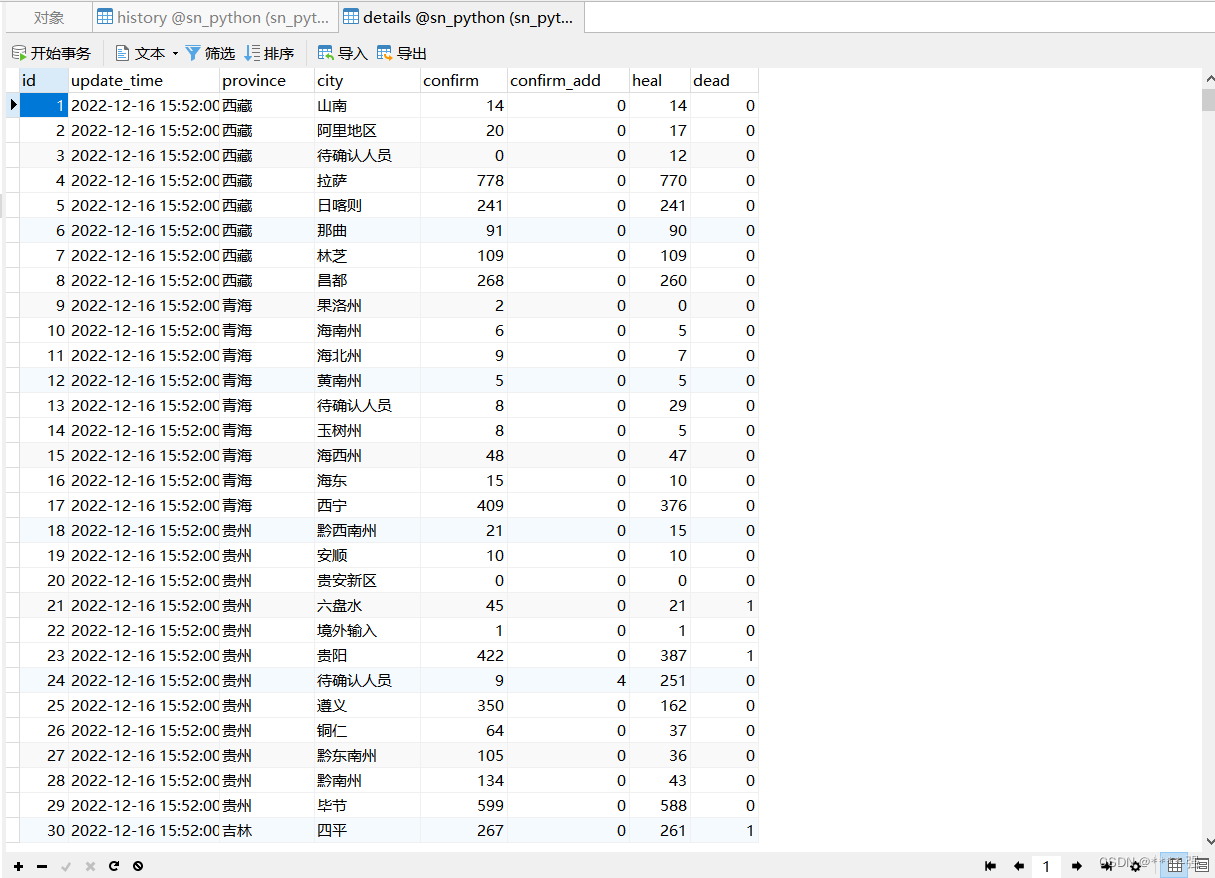

history表:

details表:

1574

1574

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?