🍨 This post is a learning-log entry from the 365-Day Deep Learning Training Camp (365天深度学习训练营)

🍖 Original author: K同学啊

My environment

Python 3.9

Jupyter

torch==1.13.0+cu116

torchvision==0.14.0+cu116

Code

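import os
import warnings

import matplotlib.pyplot as plt
import numpy as np
import torch
import torch.nn as nn
import torchvision
from torchinfo import summary

# Allow duplicate OpenMP runtimes, which otherwise abort the process on some setups
os.environ["KMP_DUPLICATE_LIB_OK"] = "TRUE"

# Use the GPU when one is available, otherwise fall back to the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(device)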

# Load the dataset (CIFAR-10 ships with a fixed train/test split)
train_ds = torchvision.datasets.CIFAR10('re/data', train=True,
                                        transform=torchvision.transforms.ToTensor(),
                                        download=True)
test_ds = torchvision.datasets.CIFAR10('re/data', train=False,
                                       transform=torchvision.transforms.ToTensor(),
                                       download=True)

# Data loaders
batch_size = 32
# shuffle=True reshuffles the training data at the start of every epoch
train_loader = torch.utils.data.DataLoader(train_ds,
                                           batch_size=batch_size,
                                           shuffle=True)
test_loader = torch.utils.data.DataLoader(test_ds,
                                          batch_size=batch_size)
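The `ToTensor()` transform used above does two things to each image: it rescales 0-255 pixel values to floats in [0, 1], and it changes the layout from (height, width, channels) to (channels, height, width). Here is a minimal pure-Python sketch of that idea on nested lists. The helper names `to_chw_float` and `to_hwc` are made up for illustration and are not part of torchvision.

```python
# Illustrative sketch only: mimics the scaling and layout change of ToTensor
# on plain nested lists. The helper names are hypothetical.

def to_chw_float(image_hwc):
    """Convert a nested list [H][W][C] of 0-255 ints to [C][H][W] floats in [0, 1]."""
    height = len(image_hwc)
    width = len(image_hwc[0])
    channels = len(image_hwc[0][0])
    return [
        [[image_hwc[h][w][c] / 255.0 for w in range(width)] for h in range(height)]
        for c in range(channels)
    ]


def to_hwc(image_chw):
    """Reverse the layout change: [C][H][W] -> [H][W][C], the layout plt.imshow expects."""
    channels = len(image_chw)
    height = len(image_chw[0])
    width = len(image_chw[0][0])
    return [
        [[image_chw[c][h][w] for c in range(channels)] for w in range(width)]
        for h in range(height)
    ]


# A tiny 1x2 RGB "image": one red pixel and one bluish pixel
tiny_hwc = [[[255, 0, 0], [0, 128, 255]]]

tiny_chw = to_chw_float(tiny_hwc)
print(len(tiny_chw), len(tiny_chw[0]), len(tiny_chw[0][0]))  # channels, height, width
print(tiny_chw[0])  # red channel, scaled to [0, 1]

restored = to_hwc(tiny_chw)
print(restored[0][1])  # second pixel, back in (R, G, B) order
```

The second helper is the same reordering that `transpose((1, 2, 0))` performs on a NumPy array before plotting.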

# Take one batch to inspect the data format
imgs, labels = next(iter(train_loader))
print(imgs.shape)  # shape: [batch_size, channels, height, width]

# Visualize the first 20 images of the batch
plt.figure(figsize=(20, 5))
for i, img in enumerate(imgs[:20]):
    # Convert the tensor to NumPy and reorder (channels, height, width)
    # to (height, width, channels), the layout imshow expects
    npimg = img.numpy().transpose((1, 2, 0))
    plt.subplot(2, 10, i + 1)
    plt.imshow(npimg)  # a colormap would be ignored for RGB data
    plt.axis('off')
plt.show()

# Build the network
num_classes = 10  # number of image classes


class Model(nn.Module):
    def __init__(self):
        super().__init__()
        self.module1 = nn.Sequential(
            # Convolution + pooling
            nn.Conv2d(3, 64, 3, 1),
            nn.ReLU(),
            nn.MaxPool2d(2),
            nn.Conv2d(64, 64, 3, 1),
            nn.ReLU(),
            nn.MaxPool2d(2),
            nn.Conv2d(64, 128, 3, 1),
            nn.ReLU(),
            nn.MaxPool2d(2),
            # Fully connected layers
            nn.Flatten(start_dim=1),
            nn.Linear(512, 256),  # 128 channels * 2 * 2 after the last pooling layer
            nn.ReLU(),
            nn.Linear(256, num_classes)
        )

    # Forward pass
    def forward(self, x):
        x = self.module1(x)
        return x
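The 512 input features of the first `nn.Linear` follow from the layer arithmetic: an unpadded 3x3 convolution with stride 1 shrinks each side by 2, and `nn.MaxPool2d(2)` halves it, rounding down. The sketch below traces the feature-map size through the three conv/pool stages for a 32x32 input and also counts the learnable parameters by hand. The helper names are my own and are not PyTorch APIs.

```python
# Hand calculation of feature-map sizes and parameter counts for the model above.
# Helper names are hypothetical; only the formulas matter.

def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution: floor((size + 2p - k) / s) + 1."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel):
    """Output side length of max pooling with stride equal to the kernel size."""
    return (size - kernel) // kernel + 1


def conv_params(in_channels, out_channels, kernel):
    """Weights plus one bias per output channel."""
    return in_channels * out_channels * kernel * kernel + out_channels


def linear_params(in_features, out_features):
    """Weights plus one bias per output feature."""
    return in_features * out_features + out_features


size = 32  # CIFAR-10 images are 32x32
trace = []
for out_channels in (64, 64, 128):
    size = conv_out(size, kernel=3)   # 3x3 conv, stride 1, no padding
    size = pool_out(size, kernel=2)   # 2x2 max pooling
    trace.append((out_channels, size, size))

flattened = trace[-1][0] * trace[-1][1] * trace[-1][2]

total_params = (
    conv_params(3, 64, 3)
    + conv_params(64, 64, 3)
    + conv_params(64, 128, 3)
    + linear_params(flattened, 256)
    + linear_params(256, 10)
)

print(trace)         # [(64, 15, 15), (64, 6, 6), (128, 2, 2)]
print(flattened)     # 512
print(total_params)  # 246474
```

If the input resolution or any kernel size changes, the flattened size changes with it, and the first `nn.Linear` has to be adjusted to match.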

# Instantiate the model and print its summary
model = Model().to(device)  # move to the GPU when one is available
summary(model)

# Training setup
loss_fn = nn.CrossEntropyLoss()
learn_rate = 1e-2
opt = torch.optim.SGD(model.parameters(), lr=learn_rate)
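To make the loss and optimizer less of a black box, here is a toy pure-Python sketch of what cross-entropy on raw logits computes for one sample, followed by a single plain SGD update. As a simplification for illustration, the logits themselves are treated as the trainable parameters; in the real model the gradients flow back into the convolution and linear weights. The function names are hypothetical.

```python
import math

# Toy sketch: per-sample cross-entropy on raw logits and one plain SGD step.
# Assumption for illustration: the logits are the parameters being updated.

def cross_entropy(logits, target):
    """Log-softmax followed by negative log-likelihood for a single sample."""
    peak = max(logits)  # subtract the max for numerical stability
    log_sum_exp = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum_exp - logits[target]


def cross_entropy_grad(logits, target):
    """Gradient of the loss w.r.t. the logits: softmax(logits) - one_hot(target)."""
    peak = max(logits)
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total - (1.0 if i == target else 0.0) for i, e in enumerate(exps)]


def sgd_step(params, grads, lr):
    """Plain SGD without momentum: p <- p - lr * grad."""
    return [p - lr * g for p, g in zip(params, grads)]


logits = [0.0] * 10  # an untrained model that has no preference among 10 classes
target = 3

loss_before = cross_entropy(logits, target)
logits_after = sgd_step(logits, cross_entropy_grad(logits, target), lr=1e-2)
loss_after = cross_entropy(logits_after, target)

print(round(loss_before, 3))     # 2.303, i.e. ln(10)
print(loss_after < loss_before)  # True
```

The starting value ln(10) ≈ 2.303 is a handy sanity check: a 10-class model whose loss in the first few batches is far above that is usually misconfigured.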

# Training function
def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # number of training samples
    num_batches = len(dataloader)   # number of training batches
    train_loss, train_acc = 0, 0

    for X, y in dataloader:
        X, y = X.to(device), y.to(device)

        # Forward pass and loss
        pred = model(X)
        loss = loss_fn(pred, y)

        # Backpropagation
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        # Accumulate the number of correct predictions and the batch loss
        train_acc = train_acc + y.eq(pred.argmax(dim=1)).type(torch.float).sum().item()
        train_loss = train_loss + loss.item()

    train_acc = train_acc / size
    train_loss = train_loss / num_batches
    return train_acc, train_loss
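Note how `train` normalizes its two metrics differently: the count of correct predictions is divided by the number of samples, while the summed loss is divided by the number of batches, because each `loss.item()` is already a per-batch mean. The small sketch below reproduces that bookkeeping on made-up numbers. `epoch_metrics` and the sample values are hypothetical, chosen only to show the arithmetic.

```python
import math

# Sketch of the epoch-level bookkeeping with invented per-batch results.

def epoch_metrics(batch_results, dataset_size):
    """batch_results: list of (num_correct, mean_batch_loss) tuples, one per batch."""
    num_batches = len(batch_results)
    correct = sum(num_correct for num_correct, _ in batch_results)
    loss_sum = sum(batch_loss for _, batch_loss in batch_results)
    # Accuracy is averaged per sample, loss per batch
    return correct / dataset_size, loss_sum / num_batches


# Three batches covering 80 samples (32 + 32 + 16)
batches = [(20, 1.5), (24, 1.0), (10, 0.5)]
acc, loss = epoch_metrics(batches, dataset_size=80)
print(acc, loss)

# With the default drop_last=False, the DataLoader keeps the final partial batch,
# so the batch count is the ceiling of dataset size / batch size.
num_train_batches = math.ceil(50000 / 32)
num_test_batches = math.ceil(10000 / 32)
print(num_train_batches, num_test_batches)  # 1563 313
```

One consequence of averaging over batches is that the smaller final batch weighs as much as a full one in the reported loss. With 1563 batches the effect is negligible.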

# Test function
def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)
    num_batches = len(dataloader)
    test_loss, test_acc = 0, 0

    # No gradients are needed for evaluation
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)
            test_loss += loss.item()

            # Count correct predictions
            test_acc += target.eq(target_pred.argmax(1)).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches
    return test_acc, test_loss

# Run training

epochs = 10
train_loss = []
train_acc = []
test_loss = []
test_acc = []

template = ('Epoch:{:2d},Train_acc:{:.1f}%,Train_loss:{:.3f},Test_acc:{:.1f}%,Test_loss:{:.3f}')

for epoch in range(epochs):
    model.train()
    epoch_train_acc, epoch_train_loss = train(train_loader, model, loss_fn, opt)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_loader, model, loss_fn)

    train_loss.append(epoch_train_loss)
    train_acc.append(epoch_train_acc)
    test_loss.append(epoch_test_loss)
    test_acc.append(epoch_test_acc)

    print(template.format(epoch + 1, epoch_train_acc * 100, epoch_train_loss,
                          epoch_test_acc * 100, epoch_test_loss))

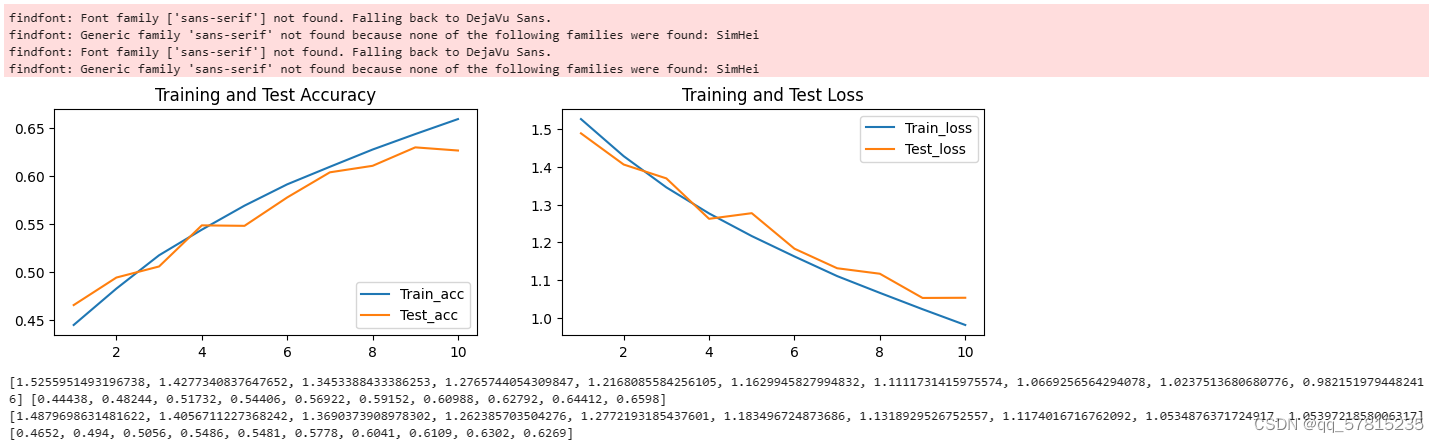

# Visualize the results
warnings.filterwarnings('ignore')
plt.rcParams['font.sans-serif'] = ['SimHei']  # font that can render Chinese labels
plt.rcParams['axes.unicode_minus'] = False    # render the minus sign correctly
plt.rcParams['figure.dpi'] = 100              # figure resolution

epochs_range = range(1, epochs + 1)

plt.figure(figsize=(12, 3))

plt.subplot(1, 2, 1)
plt.plot(epochs_range, train_acc, label='Train_acc')
plt.plot(epochs_range, test_acc, label='Test_acc')
plt.legend(loc='lower right')
plt.title('Training and Test Accuracy')

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Train_loss')
plt.plot(epochs_range, test_loss, label='Test_loss')
plt.legend(loc='upper right')
plt.title('Training and Test Loss')

plt.show()

print(train_loss, train_acc)
print(test_loss, test_acc)

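Summary

CIFAR-10 is a popular computer vision dataset used mainly for training and testing image recognition and classification models. It contains 60,000 color images of 32x32 pixels, divided into 10 classes of 6,000 images each: airplane, automobile, bird, cat, deer, dog, frog, horse, ship, and truck. To train on CIFAR-10, the data is first preprocessed (here, converted to tensors with ToTensor), and then an SGD optimizer minimizes a cross-entropy loss. My main takeaways were learning to build a CNN and to derive the output-size calculations for convolution and pooling layers.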
