Oracle 11g RAC cluster installation (Red Hat 7.9)

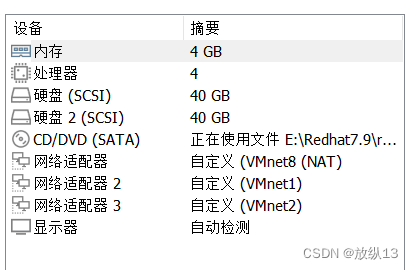

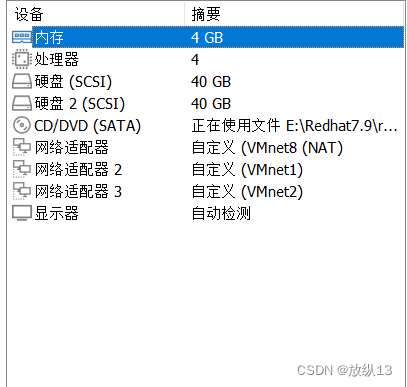

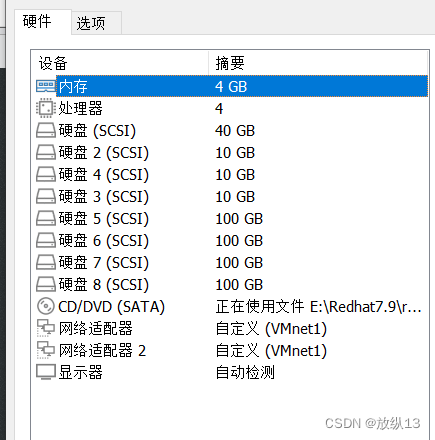

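1. Virtual machine planning

The following is the configuration the virtual machines were initially created with; it is not the final configuration.

| Hostname | Memory | CPUs | Disk |
|---|---|---|---|
| racnode1 | 4G | 4 | 40G |
| racnode2 | 4G | 4 | 40G |
| storage (shared storage) | 4G | 4 | 40G |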

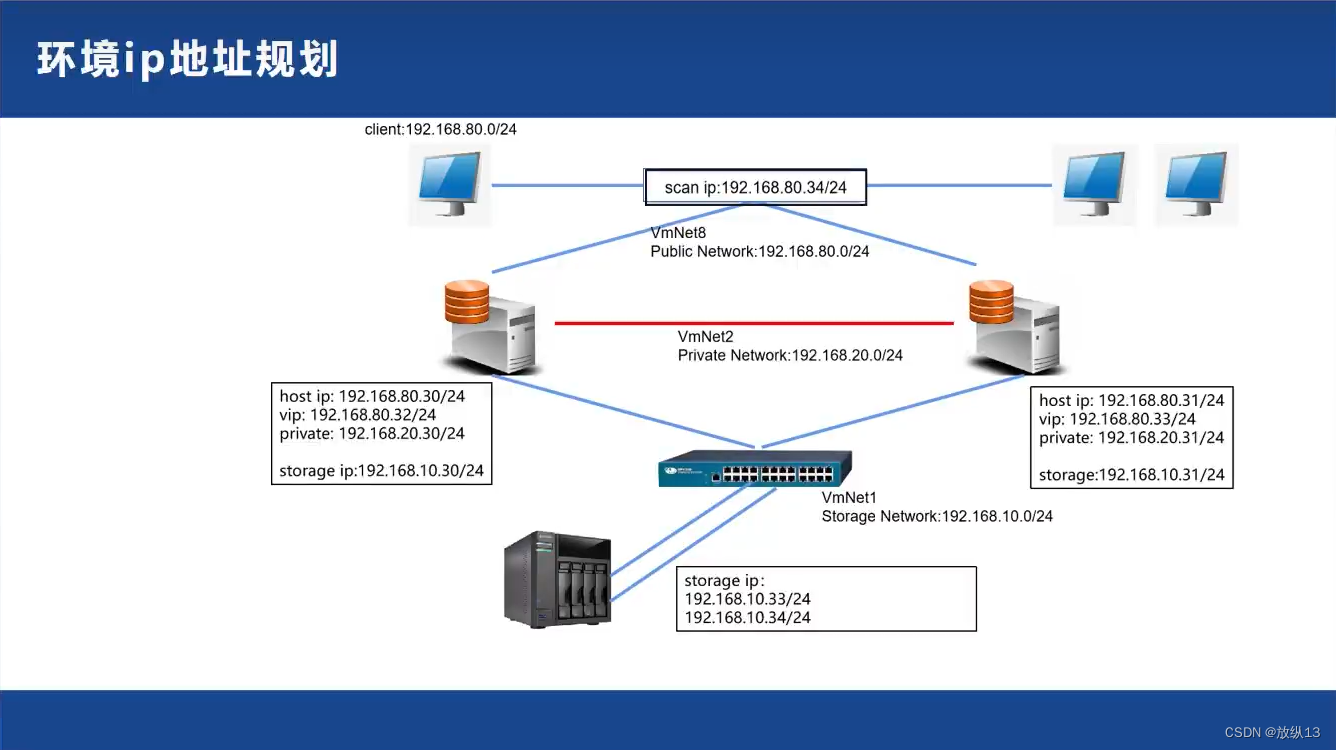

2. Network planning

The network plan diagram is as follows.

The final result is as follows:

racnode1:

racnode2:

storage:

3. Network configuration

Steps:

Copy the primary NIC file (for example, ifcfg-ens33) to a new NIC file

Edit the parameters in the new NIC file

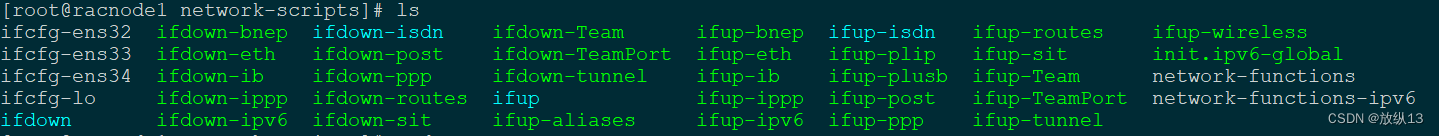

cd /etc/sysconfig/network-scripts

cp ifcfg-ens33 ifcfg-ens34

# Below are the files I have already copied

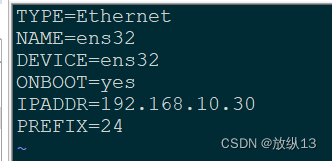

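After copying, edit the relevant parameters

I kept only the important parameters

# Remember to restart the network service after editing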

systemctl restart network.service
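The parameter list itself only survives as a screenshot, so here is a minimal sketch of what a trimmed static ifcfg file might look like. The interface name, the address (borrowed from the racnode1-priv entry in /etc/hosts later in this post) and the /24 prefix are assumptions for illustration, not the author's actual values. Note that a file copied from ifcfg-ens33 usually still carries the original UUID and HWADDR lines, which generally need to be removed or changed.

```shell
# Print a minimal static ifcfg file for one interface.
# All arguments are placeholders; adjust them to your own network plan.
render_ifcfg() {
    dev=$1; ip=$2; prefix=$3
    printf 'TYPE=Ethernet\n'
    printf 'BOOTPROTO=static\n'
    printf 'NAME=%s\n' "$dev"
    printf 'DEVICE=%s\n' "$dev"
    printf 'ONBOOT=yes\n'
    printf 'IPADDR=%s\n' "$ip"
    printf 'PREFIX=%s\n' "$prefix"
}

# Preview the file for ens34; nothing is written to disk here.
render_ifcfg ens34 192.168.20.30 24
```

Once the preview looks right, the output can be redirected as root into /etc/sysconfig/network-scripts/ifcfg-ens34 before restarting the network service.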

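4. Configure shared storage

The required packages have to be installed, so configure a local yum repository first; if you are unsure how, see my earlier blog post

1. On the storage server (server side), install the target packages

Configure the server side first

# Remount the installation media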

mount /dev/cdrom /mnt/cdrom/

yum -y install targetd targetcli

# Start the target service, check its status, and enable it at boot

systemctl start target

systemctl status target

systemctl enable target

# First create the block backstores to be shared, then create a target, then create LUNs on the target

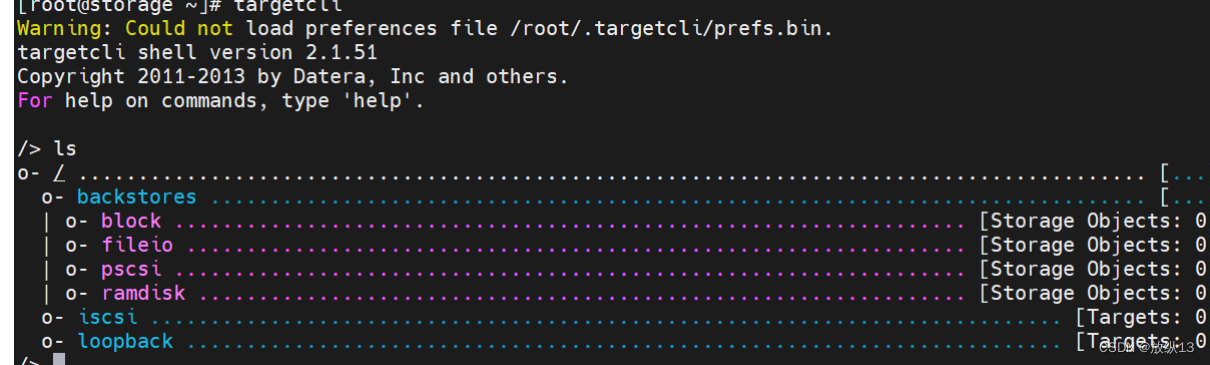

targetcli

# You are now at the root path. Run ls (like the Linux ls) to view all paths and their configuration, and run pwd (like the Linux pwd) to show the current path.

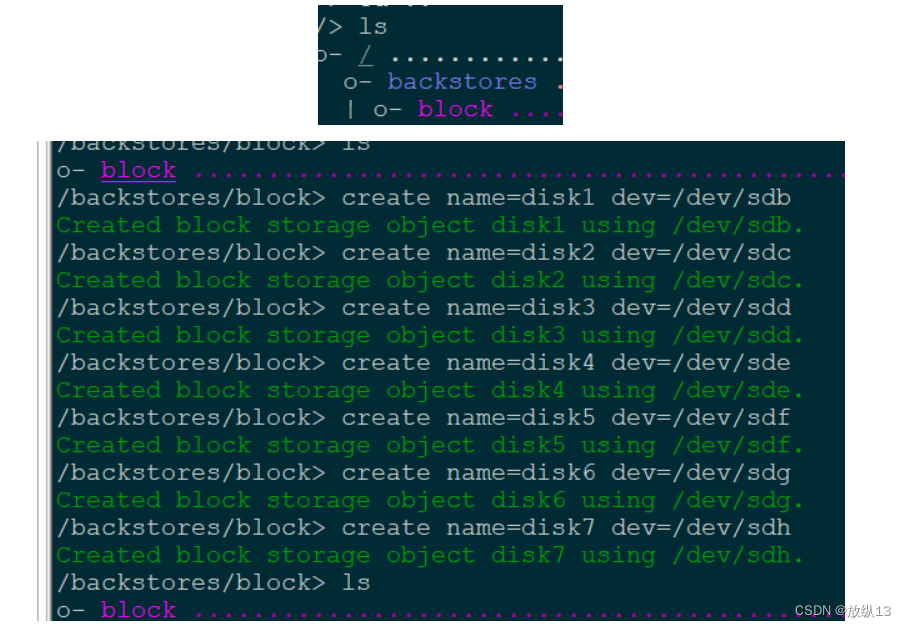

cd /backstores/block

Create the block backstores

create name=disk1 dev=/dev/sdb

create name=disk2 dev=/dev/sdc

create name=disk3 dev=/dev/sdd

create name=disk4 dev=/dev/sde

create name=disk5 dev=/dev/sdf

create name=disk6 dev=/dev/sdg

create name=disk7 dev=/dev/sdh

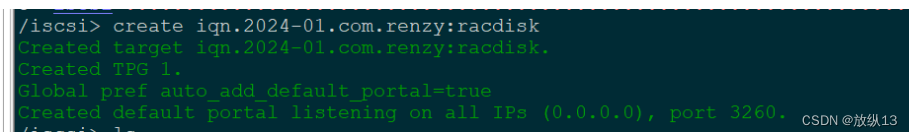

# Go to the /iscsi path and create the iSCSI name (IQN)

cd /iscsi

create iqn.2024-01.com.renzy:racdisk

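# After the iSCSI name is created, a tpg1 path is created under it by default. Three paths under tpg1 matter most:

# 1. acls (client access names, for access without authentication)

# 2. luns (the shared LUN pool, backed by the block backstores)

# 3. portals (the address and port the storage is shared on)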

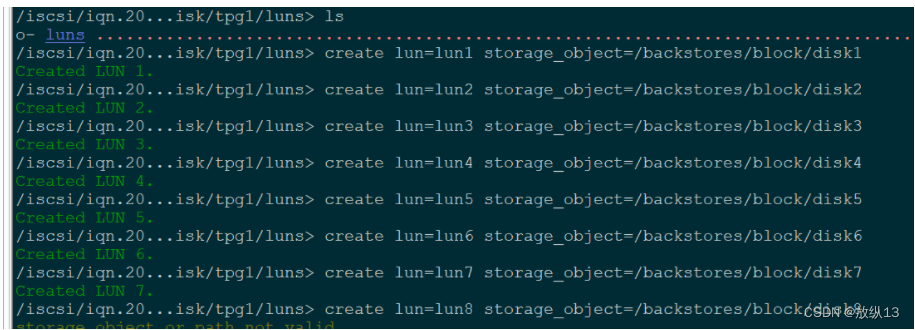

# Bind the LUNs

cd /iscsi/iqn.2024-01.com.renzy:racdisk/tpg1/luns

create lun=lun1 storage_object=/backstores/block/disk1

create lun=lun2 storage_object=/backstores/block/disk2

create lun=lun3 storage_object=/backstores/block/disk3

create lun=lun4 storage_object=/backstores/block/disk4

create lun=lun5 storage_object=/backstores/block/disk5

create lun=lun6 storage_object=/backstores/block/disk6

create lun=lun7 storage_object=/backstores/block/disk7

cd /iscsi/iqn.2024-01.com.renzy:racdisk/tpg1/acls

create wwn=iqn.2024-01.com.renzy:racnode1

create wwn=iqn.2024-01.com.renzy:racnode2

# After configuration, save the configuration

cd /

saveconfig
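The interactive session above can also be scripted, because targetcli accepts a path and a command as arguments. The following is a dry-run sketch that only prints the commands for a list of backing devices; the function name is made up for illustration, the ACL step is left out, and the output should be reviewed before being run as root.

```shell
# Emit non-interactive targetcli commands for a list of backing devices.
# Nothing is executed here; the function only prints the commands.
IQN=iqn.2024-01.com.renzy:racdisk

gen_target_cmds() {
    # One block backstore per device: disk1, disk2, ...
    n=1
    for dev in "$@"; do
        echo "targetcli /backstores/block create name=disk$n dev=$dev"
        n=$((n + 1))
    done
    # The target itself, which also creates tpg1.
    echo "targetcli /iscsi create $IQN"
    # One LUN per backstore, numbered the same way as in the post.
    n=1
    for dev in "$@"; do
        echo "targetcli /iscsi/$IQN/tpg1/luns create lun=lun$n storage_object=/backstores/block/disk$n"
        n=$((n + 1))
    done
    echo "targetcli saveconfig"
}

gen_target_cmds /dev/sdb /dev/sdc /dev/sdd
```

Generating the commands instead of typing them keeps the disk and LUN numbering consistent when there are many devices.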

# Now configure the clients. Edit the initiator configuration file (racnode1)

vi /etc/iscsi/initiatorname.iscsi

InitiatorName=iqn.2024-01.com.renzy:racnode1

# Edit the initiator configuration file (racnode2)

vi /etc/iscsi/initiatorname.iscsi

InitiatorName=iqn.2024-01.com.renzy:racnode2

# After configuration, restart the client services

systemctl restart iscsid.service

systemctl restart iscsi.service

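# Connect the clients to the network storage

# Discover the network storage (-t st is shorthand for -t sendtargets; either form works)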

iscsiadm -m discovery -t st -p 192.168.10.33

iscsiadm -m discovery -t sendtargets -p 192.168.10.33

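5. Configure multipath

First install the multipath software, device-mapper-multipath

Then record the ID of each disk (every LUN shows up as two disks of the same size; the two devices that are paths to the same LUN share one ID, and that ID is unique to the LUN)

Finally, enter the multipath definitions in the configuration file

The purpose of multipathing is to keep the cluster working if one storage NIC fails, which is why two NICs point to the same shared storage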


# Connect to the shared storage

iscsiadm -m node -T iqn.2024-01.com.renzy:racdisk -p 192.168.10.33:3260 -l

# The second path to the storage

iscsiadm -m discovery -t sendtargets -p 192.168.10.32

iscsiadm -m node -T iqn.2024-01.com.renzy:racdisk -p 192.168.10.32:3260 -l
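After logging in over both portals, it may help to confirm that two sessions really exist before moving on to multipath. The sketch below counts the distinct portals in `iscsiadm -m session` output; the sample text is illustrative and was not captured from the author's machines, and the function name is hypothetical.

```shell
# Count distinct portals in "iscsiadm -m session" output read from stdin.
# The third field looks like "192.168.10.33:3260,1"; the part before the
# comma is the portal address and port.
count_portals() {
    awk '{ split($3, a, ","); seen[a[1]] = 1 }
         END { n = 0; for (p in seen) n++; print n }'
}

# Illustrative sample only.
sample='tcp: [1] 192.168.10.33:3260,1 iqn.2024-01.com.renzy:racdisk (non-flash)
tcp: [2] 192.168.10.32:3260,1 iqn.2024-01.com.renzy:racdisk (non-flash)'

printf '%s\n' "$sample" | count_portals
```

On a real node the same check would be `iscsiadm -m session | count_portals`, and a result below 2 would suggest one of the two paths is not logged in.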

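# Bind identical disks

# Because there are two paths to the shared storage, each disk appears twice; we bind the duplicates together before use

# Packages that provide this: device-mapper device-mapper-multipath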

yum install device-mapper-multipath device-mapper -y

# Start the service (note: the following command addresses this error on RHEL 7: ConditionPathExists=/etc/multipath.conf was not met)

mpathconf --enable # this command generates /etc/multipath.conf

systemctl restart multipathd.service

systemctl status multipathd.service

# Show the ID of each disk

for i in b c d e f g h i j k l m n o

do

echo "sd$i $(/usr/lib/udev/scsi_id --whitelisted --replace-whitespace --device=/dev/sd$i)"

done

sdb 36001405918d3ac03e31492bb9ae8847c

sdc 36001405580432cdee1d4d72b3a35a4bb

sdd 3600140598cc86c64520430facfa0f3c5

sde 360014050bae87632ef042e4abbc87188

sdf 36001405556c924993d2423fad0e01a5b

sdg 36001405407eafc26052451aad249bbdb

sdh 3600140537149623de5e4284baf922272

sdi 36001405918d3ac03e31492bb9ae8847c

sdj 36001405580432cdee1d4d72b3a35a4bb

sdk 3600140598cc86c64520430facfa0f3c5

sdl 360014050bae87632ef042e4abbc87188

sdm 36001405556c924993d2423fad0e01a5b

sdn 36001405407eafc26052451aad249bbdb

sdo 3600140537149623de5e4284baf922272
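To make the pairing in the listing above explicit, the device names can be grouped by ID. This is a small sketch that reads `device wwid` lines like the ones printed by the loop; the function name is made up, and only four of the lines above are fed in as sample input.

```shell
# Group "device wwid" lines by WWID.
# Each output line is one LUN followed by the devices that are paths to it.
group_by_wwid() {
    awk 'NF == 2 { paths[$2] = paths[$2] " " $1 }
         END { for (w in paths) print w ":" paths[w] }' | sort
}

printf '%s\n' \
    'sdb 36001405918d3ac03e31492bb9ae8847c' \
    'sdc 36001405580432cdee1d4d72b3a35a4bb' \
    'sdi 36001405918d3ac03e31492bb9ae8847c' \
    'sdj 36001405580432cdee1d4d72b3a35a4bb' | group_by_wwid
```

Every ID should end up with exactly two devices; an ID with only one device would point to a missing path.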

cat /etc/multipath/bindings

mpatha 36001405918d3ac03e31492bb9ae8847c

mpathb 36001405580432cdee1d4d72b3a35a4bb

mpathc 3600140598cc86c64520430facfa0f3c5

mpathd 360014050bae87632ef042e4abbc87188

mpathe 36001405556c924993d2423fad0e01a5b

mpathf 36001405407eafc26052451aad249bbdb

mpathg 3600140537149623de5e4284baf922272

Disk /dev/mapper/mpatha: 10.7 GB, 10737418240 bytes, 20971520 sectors

Disk /dev/mapper/mpathb: 10.7 GB, 10737418240 bytes, 20971520 sectors

Disk /dev/mapper/mpathc: 10.7 GB, 10737418240 bytes, 20971520 sectors

Disk /dev/mapper/mpathd: 107.4 GB, 107374182400 bytes, 209715200 sectors

Disk /dev/mapper/mpathe: 107.4 GB, 107374182400 bytes, 209715200 sectors

Disk /dev/mapper/mpathf: 107.4 GB, 107374182400 bytes, 209715200 sectors

Disk /dev/mapper/mpathg: 107.4 GB, 107374182400 bytes, 209715200 sectors

# Edit the configuration file

vim /etc/multipath.conf

defaults {

user_friendly_names yes

find_multipaths yes

}

blacklist {

devnode "^sd[a]"

}

multipaths {

multipath {

wwid 360014050bae87632ef042e4abbc87188

alias oracle-data01

path_grouping_policy multibus

path_selector "round-robin 0"

failback immediate

}

multipath {

wwid 36001405556c924993d2423fad0e01a5b

alias oracle-data02

path_grouping_policy multibus

path_selector "round-robin 0"

failback immediate

}

multipath {

wwid 36001405407eafc26052451aad249bbdb

alias oracle-data03

path_grouping_policy multibus

path_selector "round-robin 0"

failback immediate

}

multipath {

wwid 3600140537149623de5e4284baf922272

alias oracle-data04

path_grouping_policy multibus

path_selector "round-robin 0"

failback immediate

}

multipath {

wwid 36001405918d3ac03e31492bb9ae8847c

alias oracle-ocr01

path_grouping_policy multibus

path_selector "round-robin 0"

failback immediate

}

multipath {

wwid 36001405580432cdee1d4d72b3a35a4bb

alias oracle-ocr02

path_grouping_policy multibus

path_selector "round-robin 0"

failback immediate

}

multipath {

wwid 3600140598cc86c64520430facfa0f3c5

alias oracle-ocr03

path_grouping_policy multibus

path_selector "round-robin 0"

failback immediate

}

}

devices {

device {

vendor "openfiler "

product "virtual-disk"

path_grouping_policy multibus

path_checker readsector0

path_selector "round-robin 0"

hardware_handler "0"

}

}
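The seven multipath stanzas above differ only in WWID and alias, so they can be generated rather than typed. The following is a sketch under that assumption: it reads `wwid alias` pairs and prints a multipaths section with the same three policy settings used in this post. The function name and the indentation are my own choices.

```shell
# Print a multipaths { ... } section with one stanza per "wwid alias"
# line read from stdin.
gen_multipaths() {
    echo 'multipaths {'
    while read -r wwid name; do
        [ -n "$wwid" ] || continue   # skip blank lines
        printf '    multipath {\n'
        printf '        wwid %s\n' "$wwid"
        printf '        alias %s\n' "$name"
        printf '        path_grouping_policy multibus\n'
        printf '        path_selector "round-robin 0"\n'
        printf '        failback immediate\n'
        printf '    }\n'
    done
    echo '}'
}

printf '%s\n' \
    '36001405918d3ac03e31492bb9ae8847c oracle-ocr01' \
    '360014050bae87632ef042e4abbc87188 oracle-data01' | gen_multipaths
```

After the real file is updated and multipathd is restarted, `multipath -ll` should list each alias with two paths under it.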

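# Add a 40G disk to each of the two nodes in preparation for installing Oracle

6. Set up the operating system

Prepare the prerequisites for the GI installation so that the later GI installation goes more smoothly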

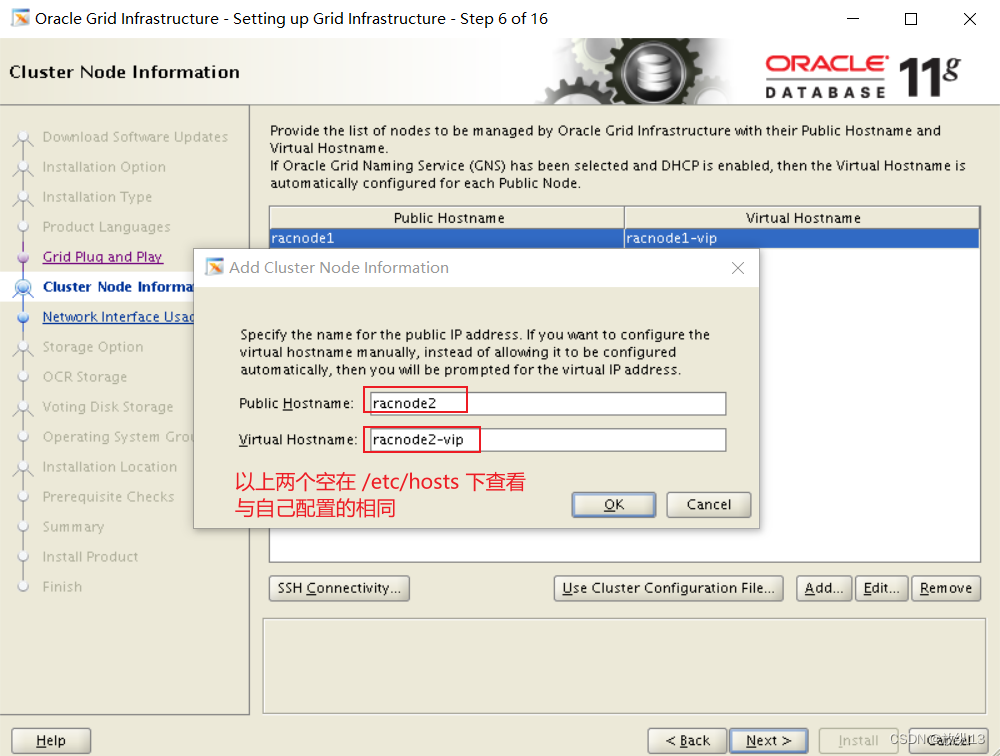

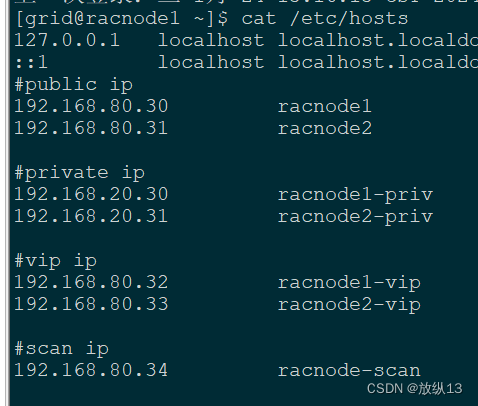

1. Configure /etc/hosts

# Operating system configuration

vi /etc/hosts

cat /etc/hosts

#public ip

192.168.80.30 racnode1

192.168.80.31 racnode2

#private ip

192.168.20.30 racnode1-priv

192.168.20.31 racnode2-priv

#vip ip

192.168.80.32 racnode1-vip

192.168.80.33 racnode2-vip

#scan ip

192.168.80.34 racnode-scan
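Since the same entries have to exist on both nodes, a quick check for missing names can catch typos before the installer does. This is a minimal sketch; the function name is hypothetical and the list of names is taken from the entries above.

```shell
# Print each required name that does not appear in a hosts-format file.
# Usage: missing_hosts FILE NAME...
missing_hosts() {
    file=$1; shift
    for name in "$@"; do
        awk -v n="$name" '
            /^[[:space:]]*#/ { next }                         # ignore comment lines
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }' "$file" || echo "$name"
    done
}

# Prints nothing when every name is present.
missing_hosts /etc/hosts racnode1 racnode2 racnode1-priv racnode2-priv \
    racnode1-vip racnode2-vip racnode-scan
```

Running it on both racnode1 and racnode2 should print nothing on either node.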

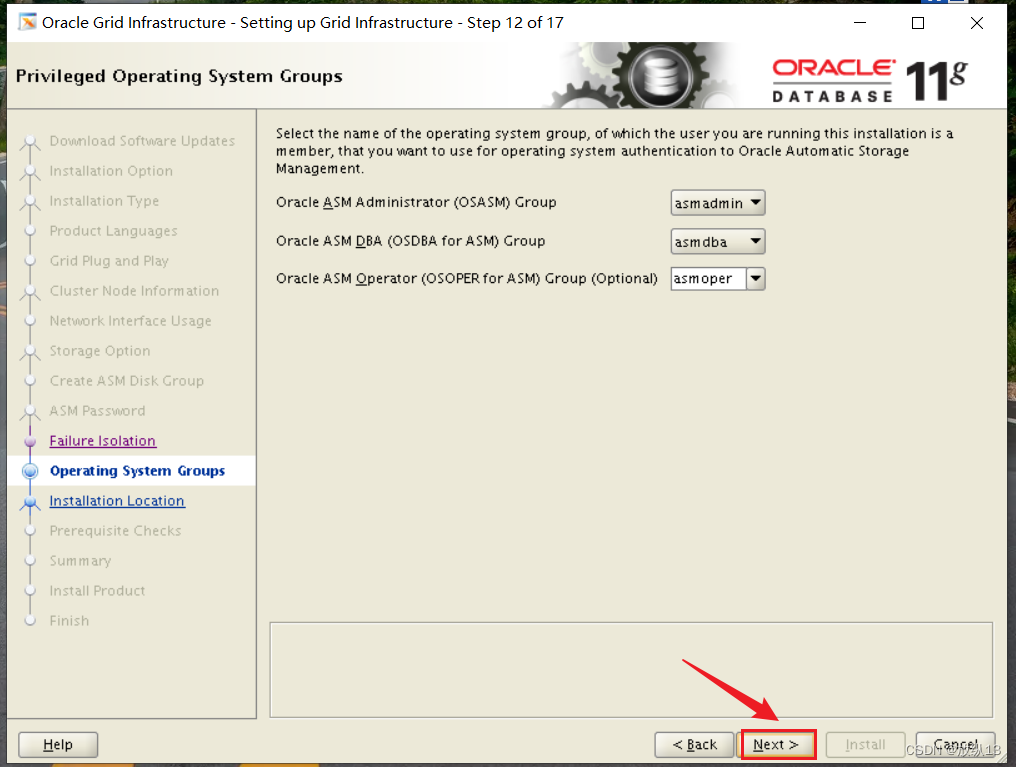

2. Create users and groups

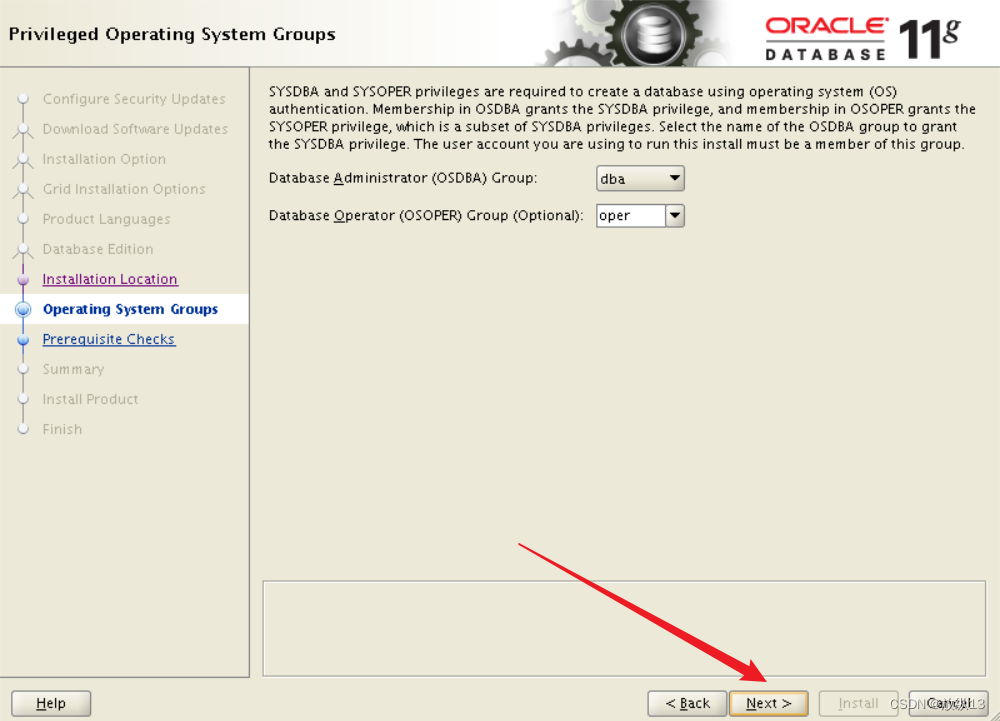

groupadd -g 10001 oinstall

groupadd -g 10002 dba

groupadd -g 10003 oper

groupadd -g 10004 asmadmin

groupadd -g 10005 asmoper

groupadd -g 10006 asmdba

useradd -g oinstall -G dba,asmdba,oper oracle

useradd -g oinstall -G asmadmin,asmdba,asmoper,oper,dba grid

3. Set passwords for the users

echo '123456' | passwd --stdin grid

echo '123456' | passwd --stdin oracle

4. Create directories

fdisk /dev/sdb

mkfs.xfs /dev/sdb1

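# Mount sdb1 on /u01

mkdir /u01

# Look up the UUID of sdb1, then add an entry for /u01 to /etc/fstab

blkid /dev/sdb1

vim /etc/fstab

# Mount everything listed in /etc/fstab

mount -a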

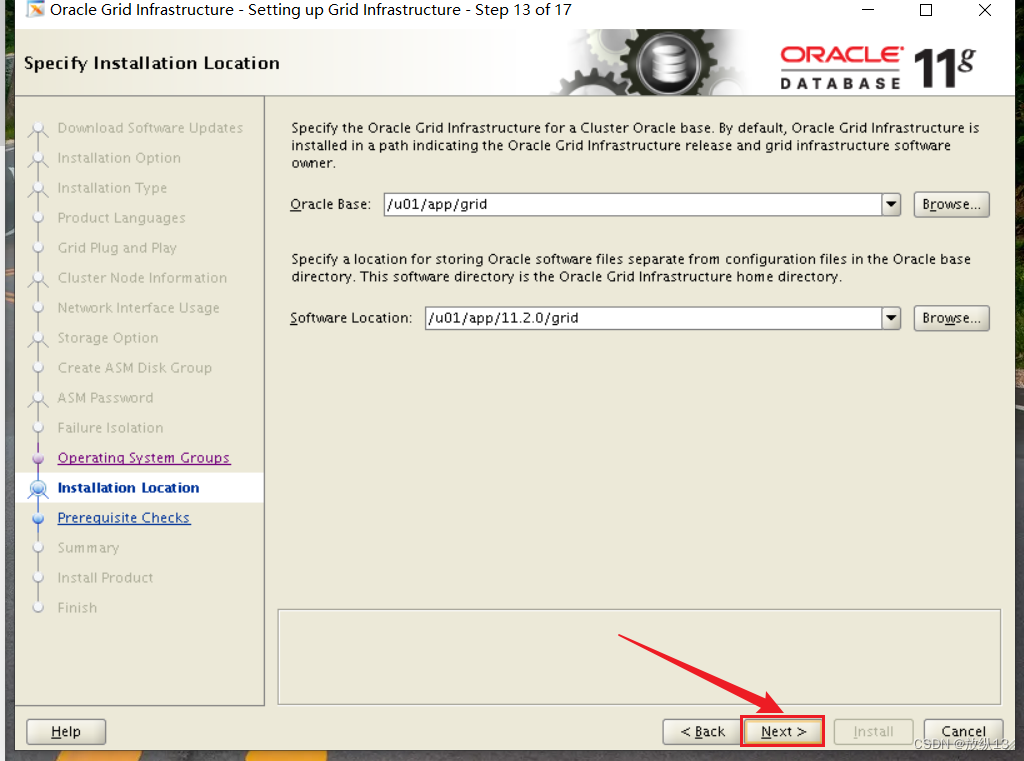

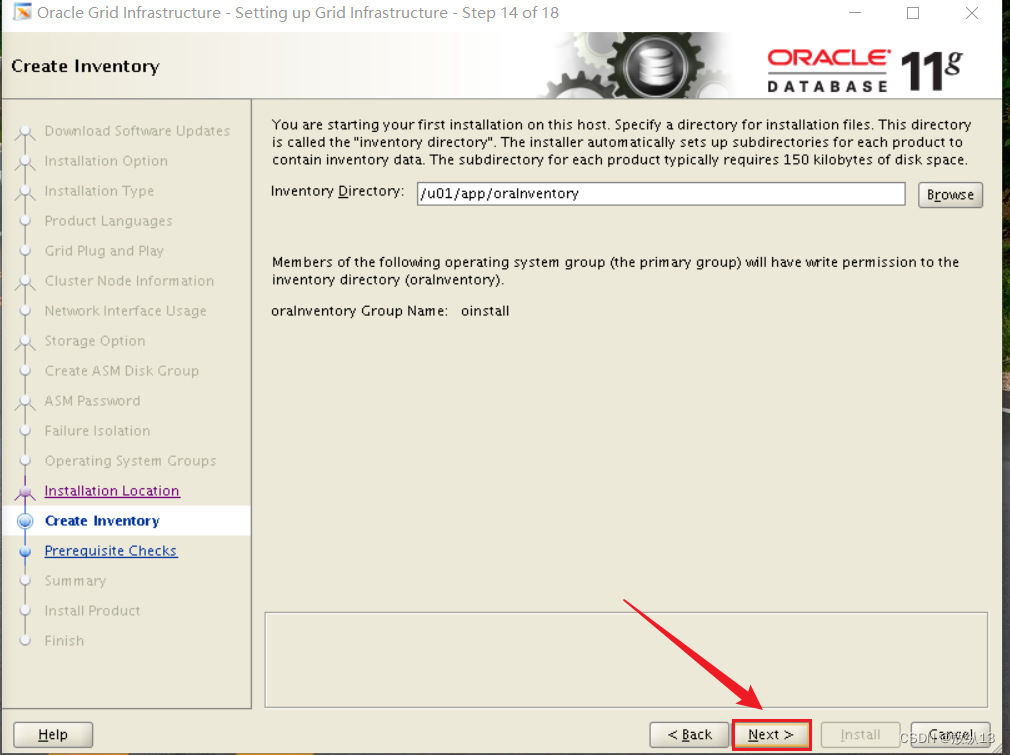

# Create the Oracle directories

mkdir -p /u01/app/11.2.0/grid

mkdir -p /u01/app/grid

chown -R grid:oinstall /u01

mkdir -p /u01/app/oracle

chown -R oracle:oinstall /u01/app/oracle

chmod -R 775 /u01/

# Install the dependency packages

yum install binutils -y

yum install compat-libcap1 -y

yum install compat-libstdc++-33 -y

yum install gcc -y

yum install gcc-c++ -y

yum install glibc -y

yum install glibc-devel -y

yum install ksh -y

yum install libgcc -y

yum install libstdc++ -y

yum install libstdc++-devel -y

yum install libaio -y

yum install libaio-devel -y

yum install libXext -y

yum install libXtst -y

yum install libX11 -y

yum install libXau -y

yum install libxcb -y

yum install libXi -y

yum install make -y

yum install sysstat -y

yum install unixODBC -y

yum install unixODBC-devel -y

yum install unzip -y
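The list above is long enough that a package can silently fail to install. The sketch below reports which packages from the same list are still missing; the `QUERY` variable and function name are my own, with `rpm -q` as the assumed default query command.

```shell
# Packages taken from the yum commands in this post.
PKGS="binutils compat-libcap1 compat-libstdc++-33 gcc gcc-c++ glibc glibc-devel
ksh libgcc libstdc++ libstdc++-devel libaio libaio-devel libXext libXtst libX11
libXau libxcb libXi make sysstat unixODBC unixODBC-devel unzip"

# Command used to test whether a package is installed.
QUERY=${QUERY:-"rpm -q"}

# Print every package name for which the query command fails.
missing_pkgs() {
    for p in "$@"; do
        $QUERY "$p" >/dev/null 2>&1 || echo "$p"
    done
}

missing_pkgs $PKGS
```

The result can be handed straight back to yum, for example `yum install -y $(missing_pkgs $PKGS)`, and an empty result means nothing is left to install.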

5. Set resource limits

vi /etc/security/limits.conf

grid soft nproc 16384

grid hard nproc 16384

grid soft nofile 65536

grid hard nofile 65536

grid soft stack 32768

grid hard stack 32768

oracle soft nproc 16384

oracle hard nproc 16384

oracle soft nofile 65536

oracle hard nofile 65536

oracle soft stack 32768

oracle hard stack 32768

echo "session required pam_limits.so" >> /etc/pam.d/login
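To double-check what was actually written for each user, the file can be filtered by user name. This is a small sketch with a hypothetical function name; it only reads the file and changes nothing.

```shell
# Print "type item value" for one user from a limits.conf-style file.
# Usage: limits_for FILE USER
limits_for() {
    awk -v u="$2" '$1 == u && NF == 4 { print $2, $3, $4 }' "$1"
}

# Show the entries for grid if the file is readable.
if [ -r /etc/security/limits.conf ]; then
    limits_for /etc/security/limits.conf grid
fi
```

The effective values can then be compared after a fresh login, for example with `su - grid -c 'ulimit -n'` for the open-file limit.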

6. Set kernel parameters

vi /etc/sysctl.conf

fs.aio-max-nr = 1048576

fs.file-max = 6815744

kernel.sem = 250 32000 100 128

net.ipv4.ip_local_port_range = 9000 65500

net.core.rmem_default = 262144

net.core.rmem_max = 4194304

net.core.wmem_default = 262144

net.core.wmem_max = 1048576

kernel.panic_on_oops = 1

kernel.shmmax = 2348810240

kernel.shmall = 573440

kernel.shmmni = 4096

vm.swappiness = 10

vm.nr_hugepages = 1120

# Apply the kernel parameters

sysctl -p
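The shared memory and huge page values above line up with each other, which is easier to see with the arithmetic written out. The sketch assumes a 4096-byte page and a 2048 kB huge page, the usual x86_64 defaults; on a real host they can be read with `getconf PAGE_SIZE` and from /proc/meminfo.

```shell
PAGE_SIZE=4096        # bytes per page (assumed)
HUGEPAGE_KB=2048      # kB per huge page (assumed)

# kernel.shmall is counted in pages; convert it to bytes.
shmall_bytes() { echo $(( $1 * PAGE_SIZE )); }

# vm.nr_hugepages is a page count; convert it to bytes.
hugepages_bytes() { echo $(( $1 * HUGEPAGE_KB * 1024 )); }

shmall_bytes 573440      # prints 2348810240, equal to kernel.shmmax
hugepages_bytes 1120     # prints 2348810240 as well (2240 MB)
```

So kernel.shmall, kernel.shmmax and vm.nr_hugepages all describe the same 2240 MB, and changing one of them generally means revisiting the other two.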

7. Disable transparent huge pages and NUMA

# Set it to never at boot

vi /etc/rc.d/rc.local

if test -f /sys/kernel/mm/transparent_hugepage/enabled; then

echo never > /sys/kernel/mm/transparent_hugepage/enabled

fi

if test -f /sys/kernel/mm/transparent_hugepage/defrag; then

echo never > /sys/kernel/mm/transparent_hugepage/defrag

fi

# On RHEL 7, rc.local only runs at boot if it is executable

chmod +x /etc/rc.d/rc.local

source /etc/rc.d/rc.local

vi /etc/default/grub

GRUB_TIMEOUT=5

GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"

GRUB_DEFAULT=saved

GRUB_DISABLE_SUBMENU=true

GRUB_TERMINAL_OUTPUT="console"

GRUB_CMDLINE_LINUX="crashkernel=auto rhgb quiet numa=off transparent_hugepage=never"

GRUB_DISABLE_RECOVERY="true"

# Run grub2-mkconfig to regenerate grub.cfg

grub2-mkconfig -o /boot/grub2/grub.cfg
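After a reboot it is worth confirming that the setting took effect. The kernel marks the active transparent huge page mode with square brackets, so a small filter can pull it out. The function name below is made up and the sample line is illustrative.

```shell
# Extract the active (bracketed) value from a THP status line on stdin.
thp_active() {
    sed -n 's/.*\[\(.*\)\].*/\1/p'
}

# Illustrative input; prints "never".
echo 'always madvise [never]' | thp_active
```

On a node the real check would be `thp_active < /sys/kernel/mm/transparent_hugepage/enabled`, and `cat /proc/cmdline` should show `numa=off` once the new grub.cfg has been booted.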

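# Environment variables for the grid user

# On both nodes (the first block below is for racnode1 and the second for racnode2; only ORACLE_SID differs)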

su - grid

vi .bash_profile

alias sqlplus="rlwrap sqlplus"

export TMP=/tmp

export ORACLE_BASE=/u01/app/grid

export ORACLE_HOME=/u01/app/11.2.0/grid

export GRID_HOME=/u01/app/11.2.0/grid

export ORACLE_SID=+ASM1

export NLS_DATE_FORMAT="yyyy-mm-dd HH24:MI:SS"

export PATH=$PATH:$ORACLE_HOME/bin:$ORACLE_HOME/OPatch

alias sqlplus="rlwrap sqlplus"

export TMP=/tmp

export ORACLE_BASE=/u01/app/grid

export ORACLE_HOME=/u01/app/11.2.0/grid

export GRID_HOME=/u01/app/11.2.0/grid

export ORACLE_SID=+ASM2

export NLS_DATE_FORMAT="yyyy-mm-dd HH24:MI:SS"

export PATH=$PATH:$ORACLE_HOME/bin:$ORACLE_HOME/OPatch

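# Environment variables for the oracle user

# On both nodes (the first block below is for racnode1 and the second for racnode2; only ORACLE_SID differs)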

su - oracle

vi .bash_profile

alias sqlplus="rlwrap sqlplus"

export TMP=/tmp

export TMPDIR=$TMP

export LANG=en_US

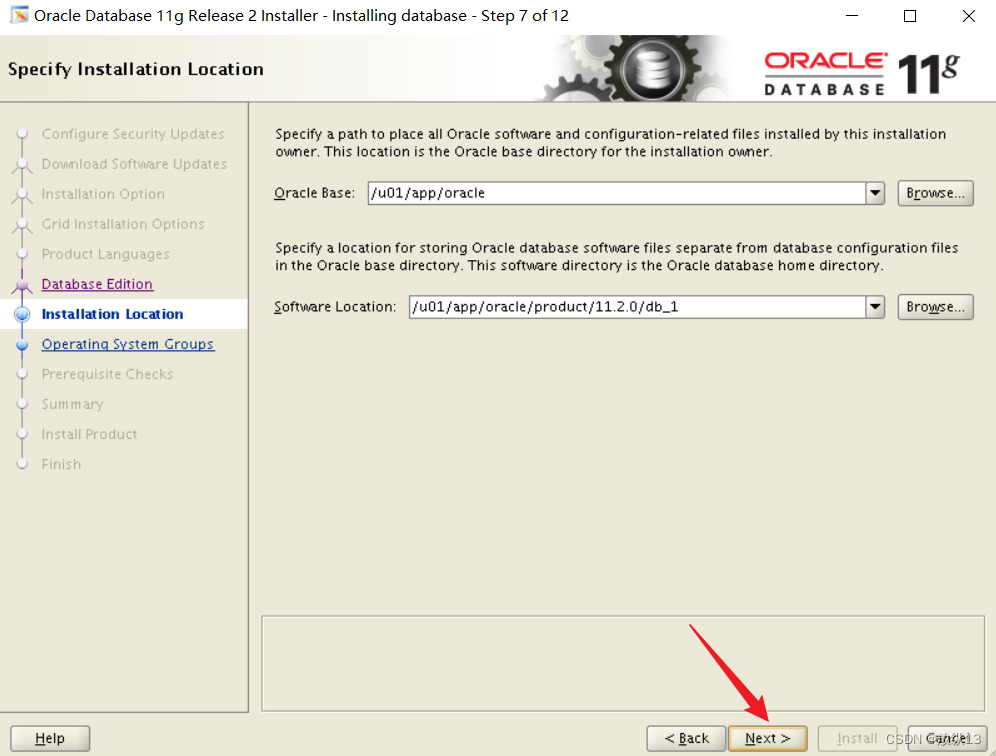

export ORACLE_BASE=/u01/app/oracle

export ORACLE_HOME=/u01/app/oracle/product/11.2.0/db_1

export ORACLE_SID=racdb1

export PATH=$PATH:$ORACLE_HOME/bin:$ORACLE_HOME/OPatch

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

export CLASSPATH=$ORACLE_HOME/JRE:$ORACLE_HOME/jlib:$ORACLE_HOME/rdbms/jlib

export NLS_DATE_FORMAT="yyyy-mm-dd HH24:MI:SS"

export NLS_LANG=AMERICAN_AMERICA.UTF8

vi .bash_profile

alias sqlplus="rlwrap sqlplus"

export TMP=/tmp

export TMPDIR=$TMP

export LANG=en_US

export ORACLE_BASE=/u01/app/oracle

export ORACLE_HOME=/u01/app/oracle/product/11.2.0/db_1

export ORACLE_SID=racdb2

export PATH=$PATH:$ORACLE_HOME/bin:$ORACLE_HOME/OPatch

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

export CLASSPATH=$ORACLE_HOME/JRE:$ORACLE_HOME/jlib:$ORACLE_HOME/rdbms/jlib

export NLS_DATE_FORMAT="yyyy-mm-dd HH24:MI:SS"

export NLS_LANG=AMERICAN_AMERICA.UTF8
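Because the profiles on the two nodes differ only in the trailing digit of ORACLE_SID, one way to keep a single profile is to derive that digit from the hostname. This is only a sketch that assumes hostnames follow the racnodeN pattern used in this post; the function names are hypothetical.

```shell
# Extract the first run of digits from a hostname such as racnode1.
node_number() {
    printf '%s\n' "$1" | sed -n 's/^[^0-9]*\([0-9][0-9]*\).*$/\1/p'
}

# SID for the grid user's ASM instance on the given host.
asm_sid() { echo "+ASM$(node_number "$1")"; }

# SID for the oracle user's database instance on the given host.
db_sid() { echo "racdb$(node_number "$1")"; }

asm_sid racnode1    # prints +ASM1
db_sid racnode2     # prints racdb2
```

In a profile this could be used as `export ORACLE_SID=$(asm_sid "$(hostname -s)")`, so the same file can be copied to both nodes unchanged.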

# Configure access permissions for the ASM disks

vi /etc/udev/rules.d/99-oracle-asmdevices.rules

ENV{DM_NAME}=="oracle-ocr01", OWNER:="grid", GROUP:="asmadmin", MODE:="660"

ENV{DM_NAME}=="oracle-ocr02", OWNER:="grid", GROUP:="asmadmin", MODE:="660"

ENV{DM_NAME}=="oracle-ocr03", OWNER:="grid", GROUP:="asmadmin", MODE:="660"

ENV{DM_NAME}=="oracle-data01", OWNER:="grid", GROUP:="asmadmin", MODE:="660"

ENV{DM_NAME}=="oracle-data02", OWNER:="grid", GROUP:="asmadmin", MODE:="660"

ENV{DM_NAME}=="oracle-data03", OWNER:="grid", GROUP:="asmadmin", MODE:="660"

ENV{DM_NAME}=="oracle-data04", OWNER:="grid", GROUP:="asmadmin", MODE:="660"

/sbin/udevadm control --reload

/sbin/udevadm trigger --type=devices --action=change

udevadm trigger
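The udev rules follow one pattern per multipath alias, so they can be produced from the alias list to avoid a mismatch with multipath.conf. A minimal sketch, with a hypothetical function name and the owner, group and mode used in this post:

```shell
# Print one udev rule per multipath alias, giving grid:asmadmin ownership.
gen_asm_rules() {
    for name in "$@"; do
        printf 'ENV{DM_NAME}=="%s", OWNER:="grid", GROUP:="asmadmin", MODE:="660"\n' "$name"
    done
}

gen_asm_rules oracle-ocr01 oracle-ocr02 oracle-ocr03 \
    oracle-data01 oracle-data02 oracle-data03 oracle-data04
```

After the rules are reloaded, `ls -lL /dev/mapper/oracle-*` should show grid and asmadmin as owner and group of each device.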

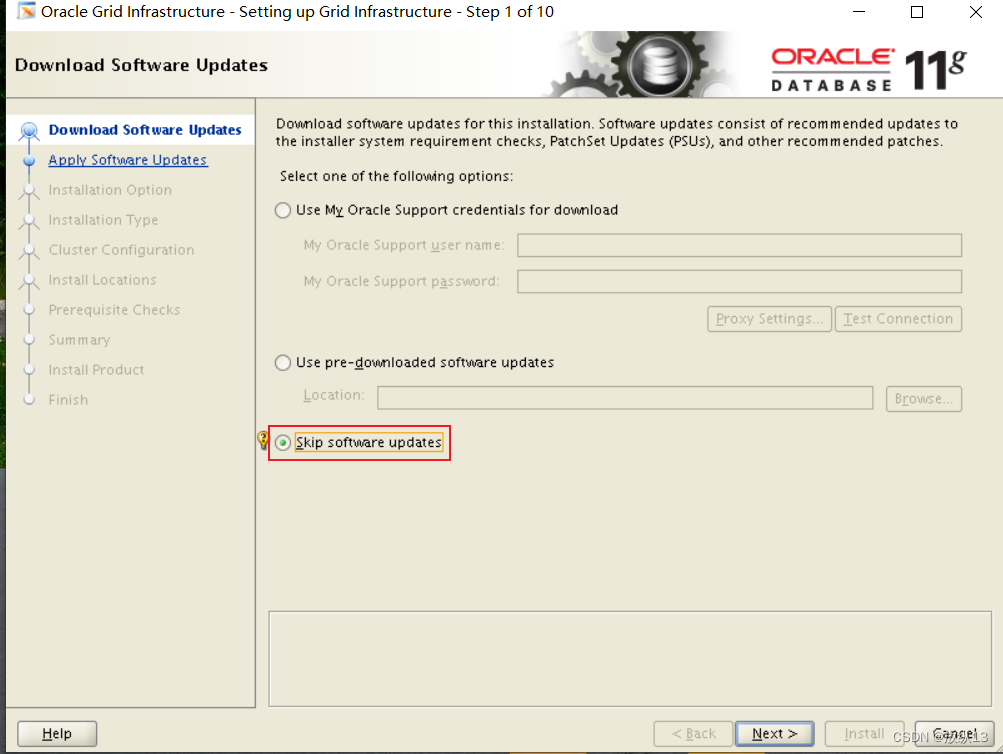

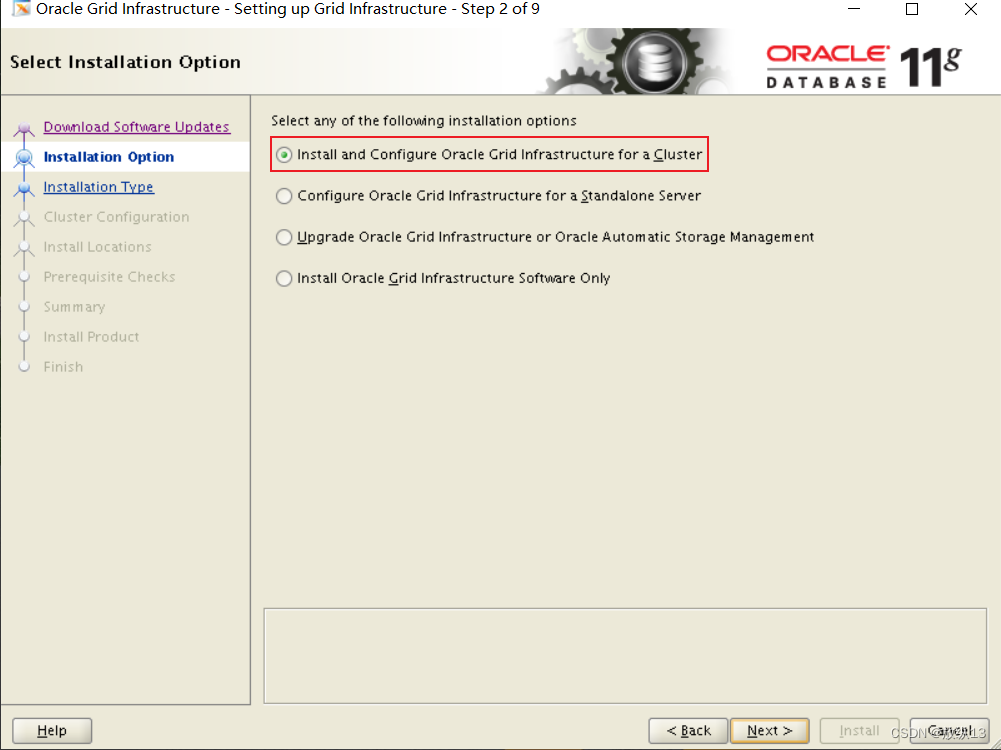

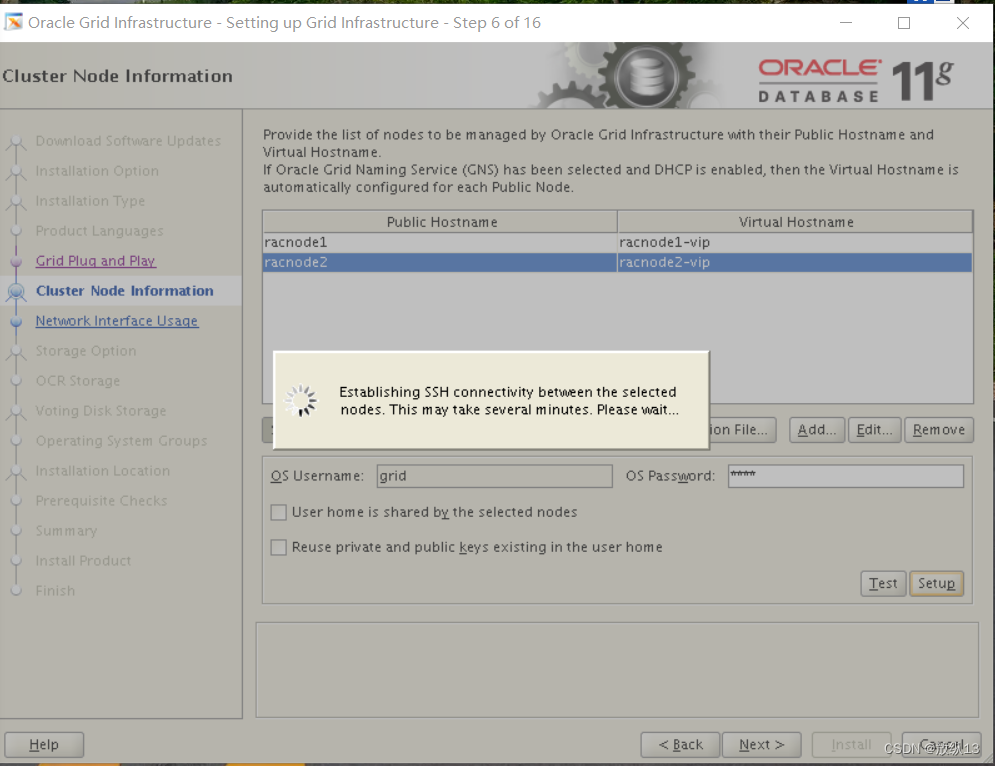

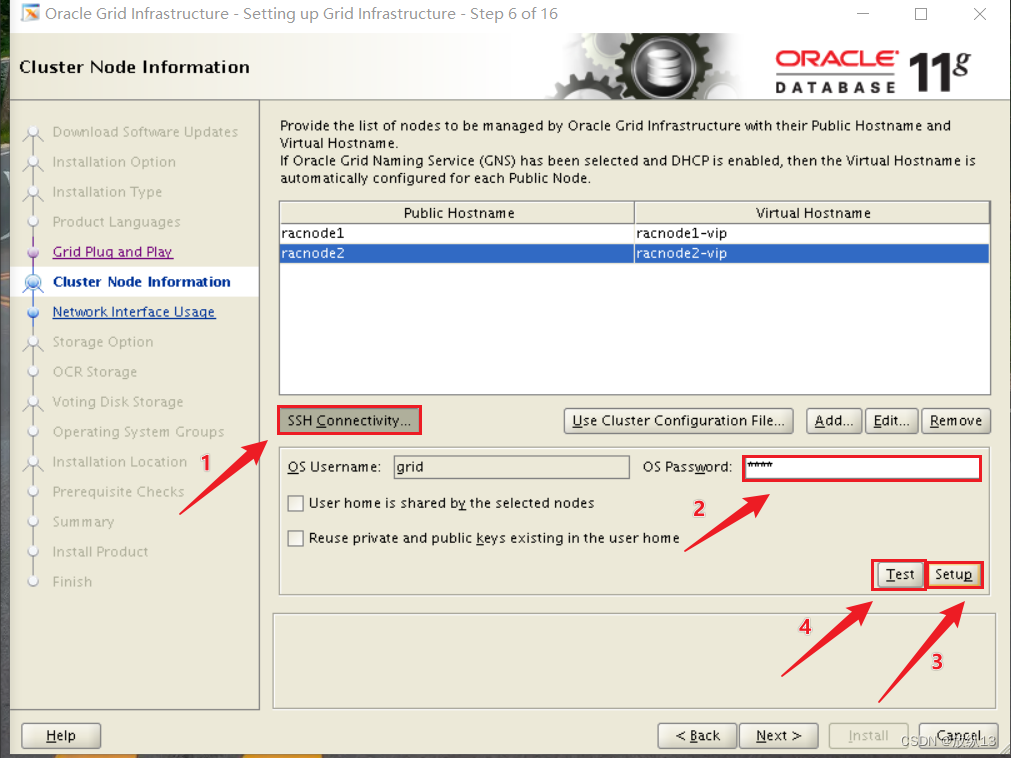

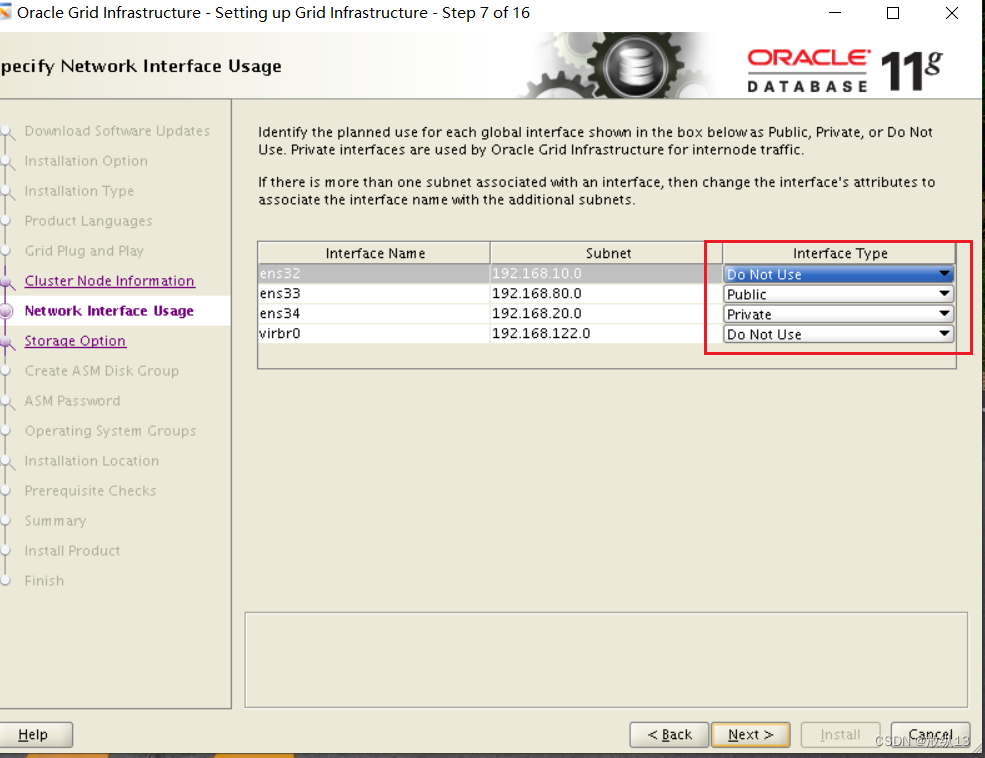

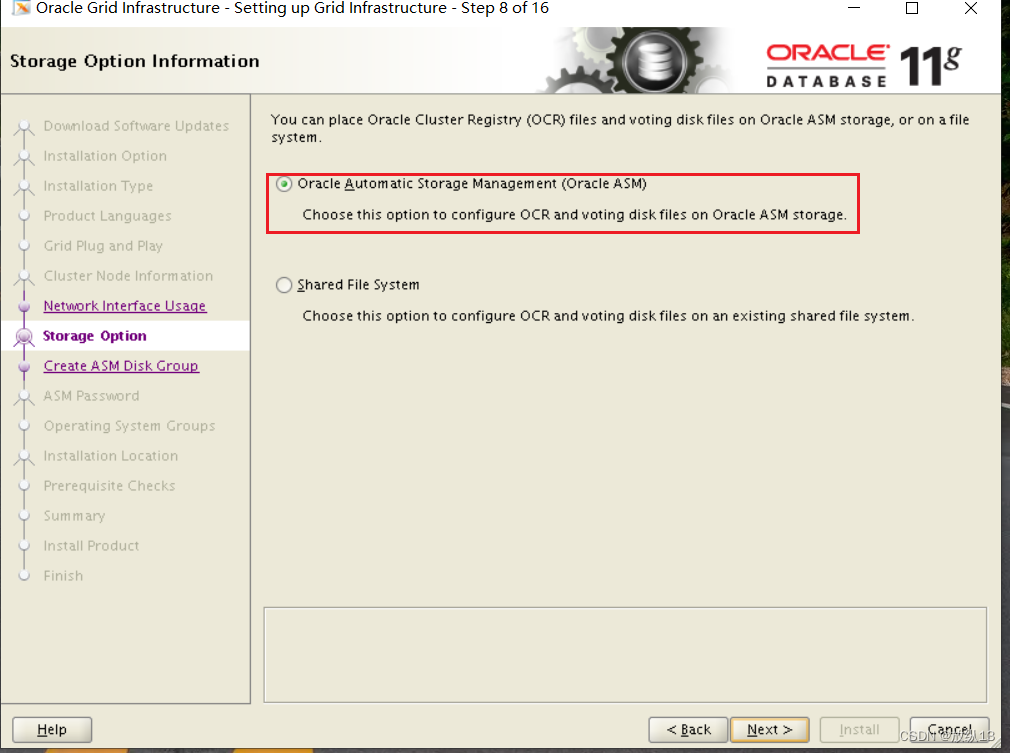

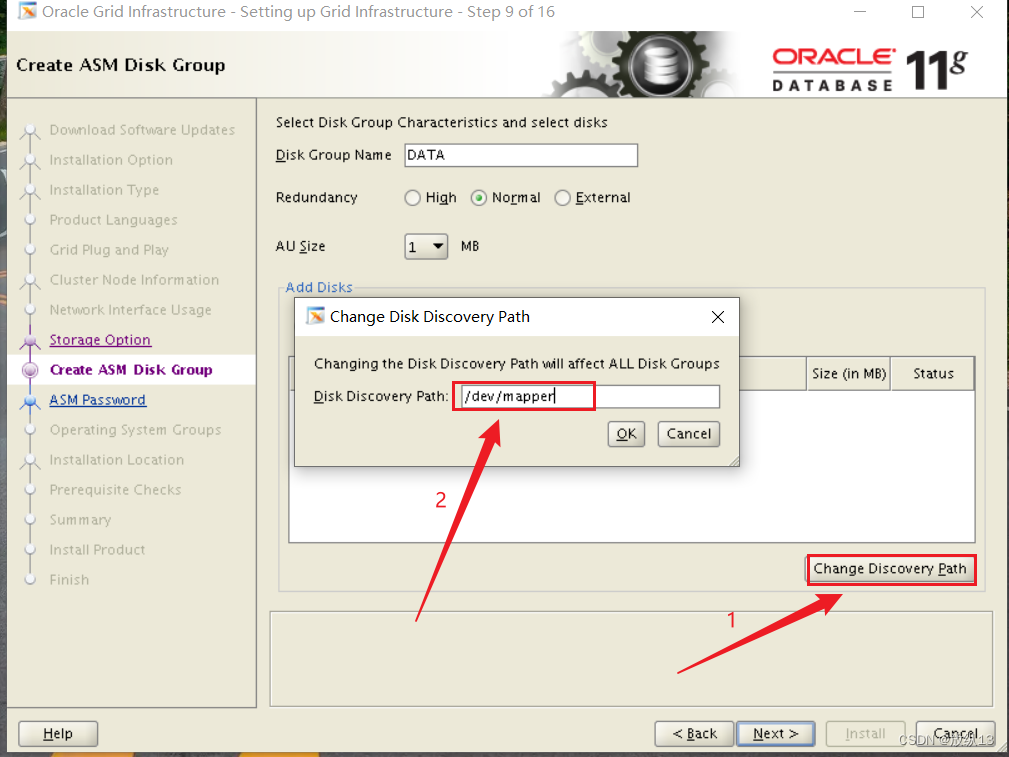

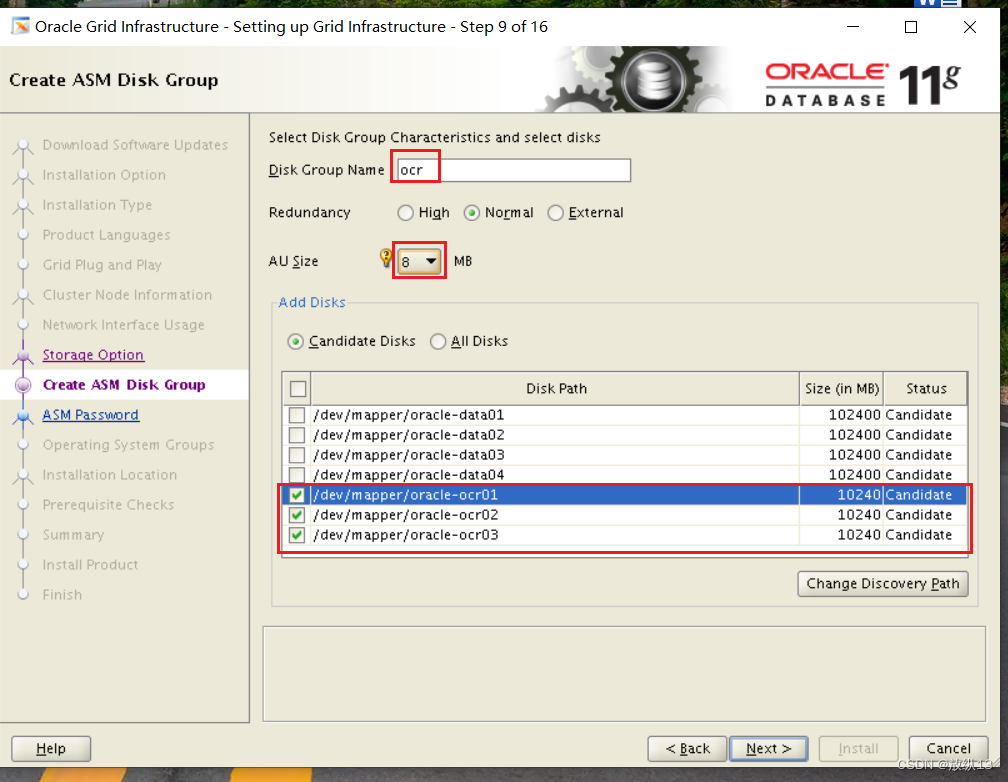

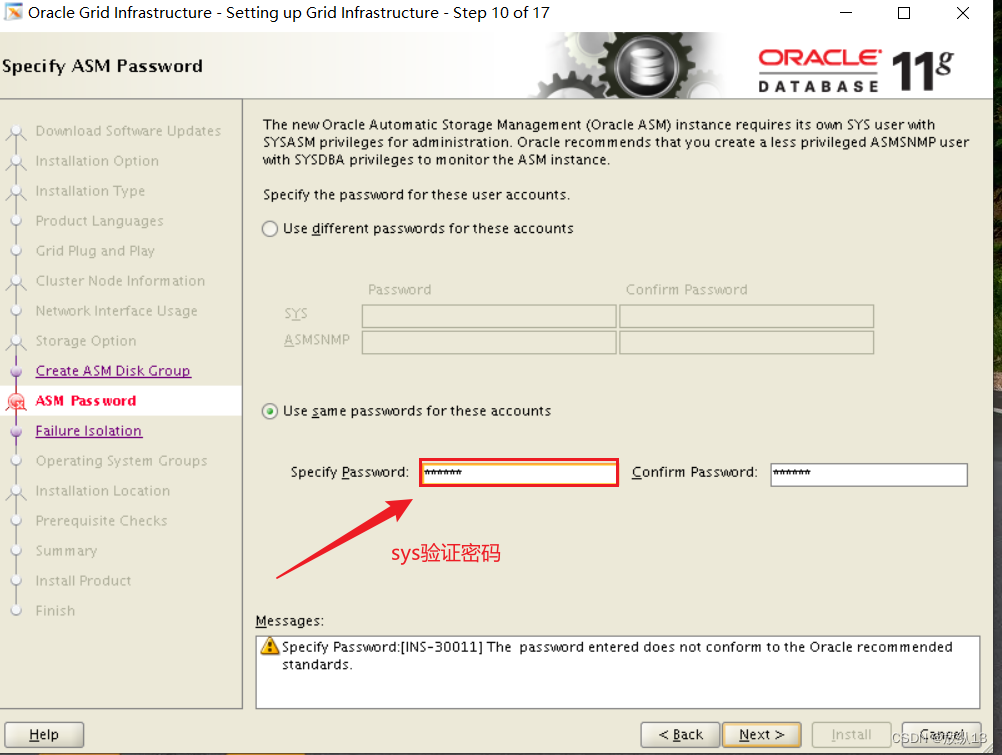

7. Install the cluster software (GI)

# Create a partition on the newly added disk

fdisk /dev/sdc

# Format it

mkfs.xfs /dev/sdc1

mkdir /soft

chmod -R 777 /soft

mount /dev/sdc1 /soft/

cd /soft/

mkdir grid

cd grid

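# Upload the cluster software to /soft/grid

# Ownership of the uploaded files must be given to grid

chown -R grid:oinstall /soft/grid

# Unzip the cluster software as the grid user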

unzip p13390677_112040_Linux-x86-64_3of7.zip

# Then change into the extracted grid directory

cd grid

./runInstaller

# The packages flagged in the screenshot need to be installed

rpm -ivh compat-libstdc++-33-3.2.3-72.el7.x86_64.rpm

rpm -ivh --force --nodeps pdksh-5.2.14-37.el5.x86_64.rpm

yum install elfutils-libelf-devel.x86_64 -y

# Then go to this directory and install one more package

cd /soft/grid/grid/rpm

rpm -ivh cvuqdisk-1.0.9-1.rpm

# Copy these packages to racnode2 and install them there as well

scp compat-libstdc++-33-3.2.3-72.el7.x86_64.rpm root@192.168.80.31:/tmp

scp pdksh-5.2.14-37.el5.x86_64.rpm root@192.168.80.31:/tmp

scp cvuqdisk-1.0.9-1.rpm root@192.168.80.31:/tmp

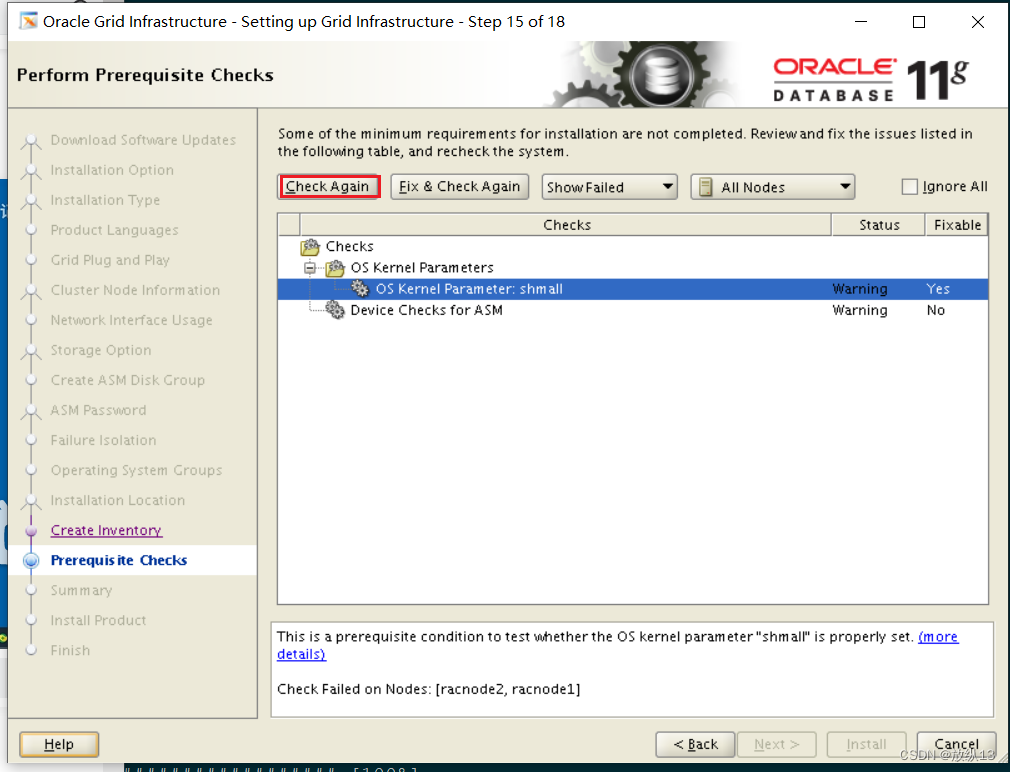

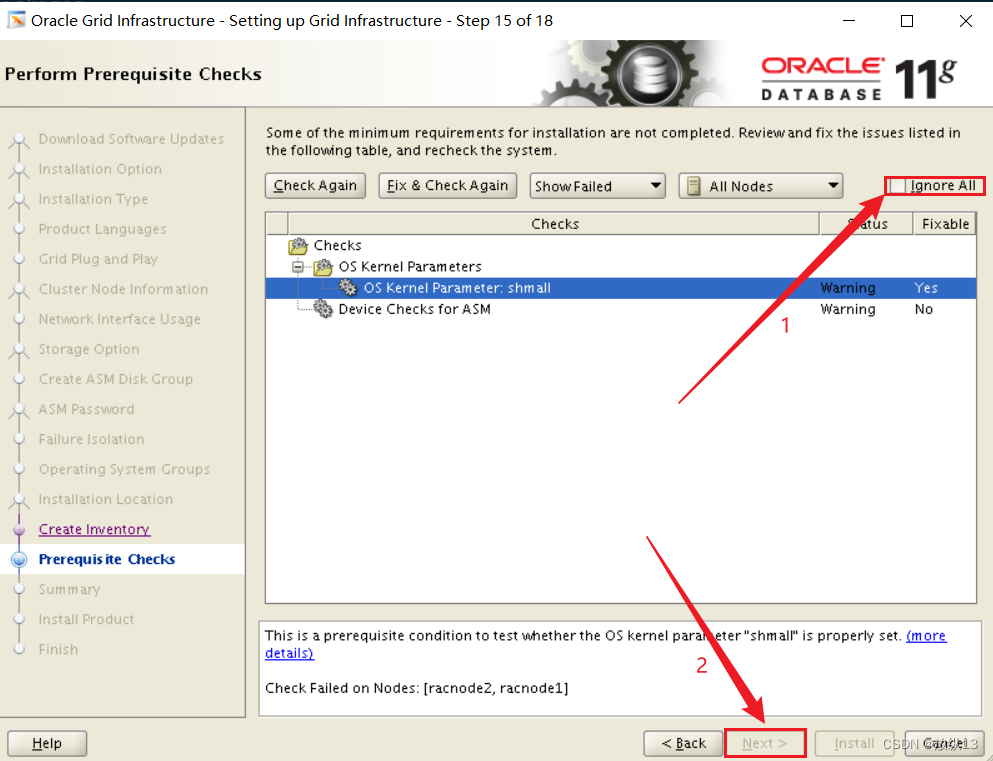

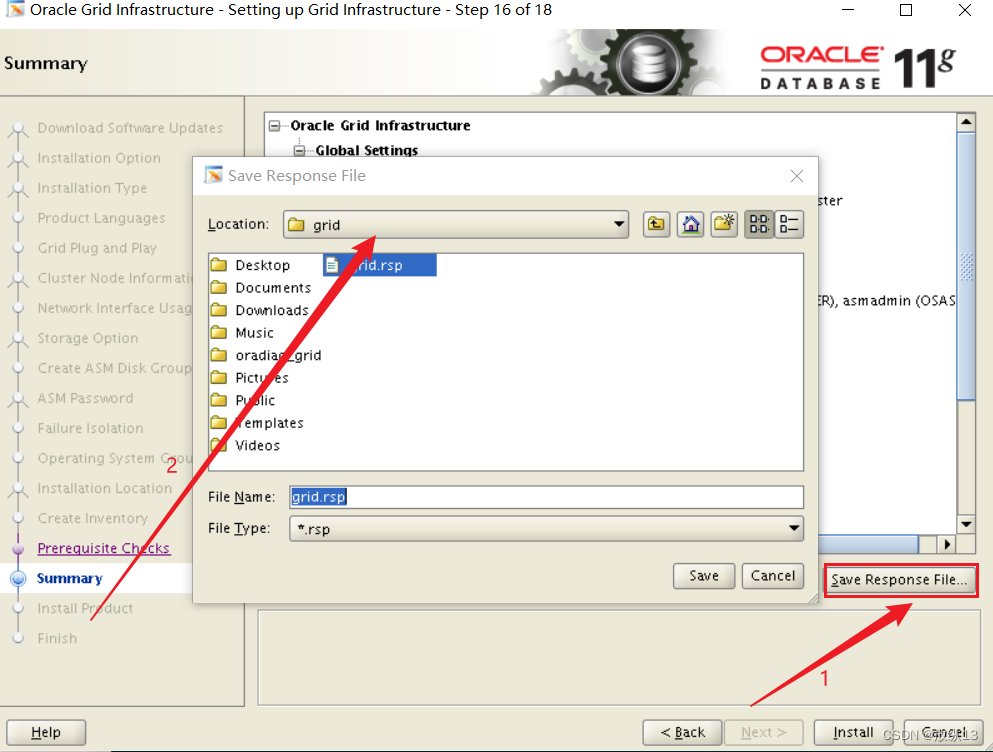

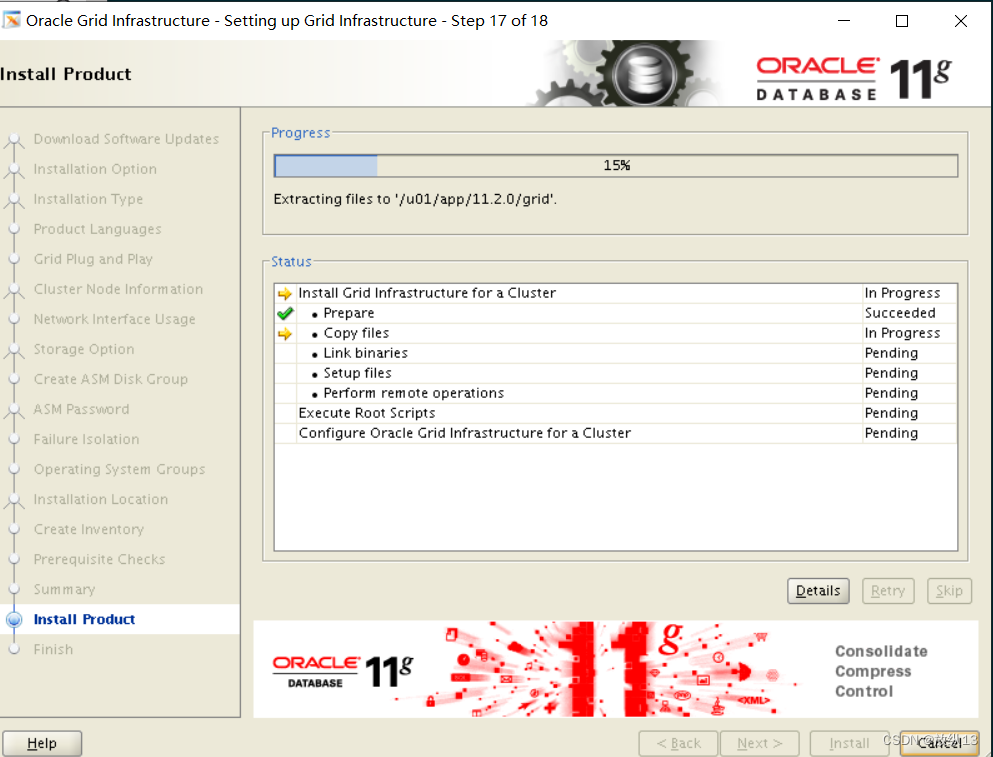

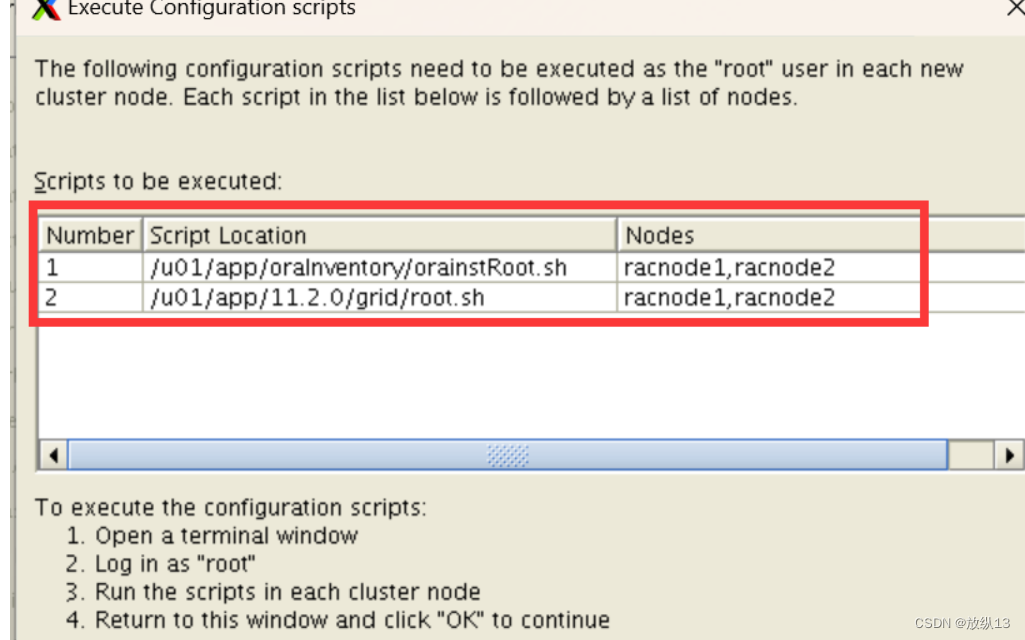

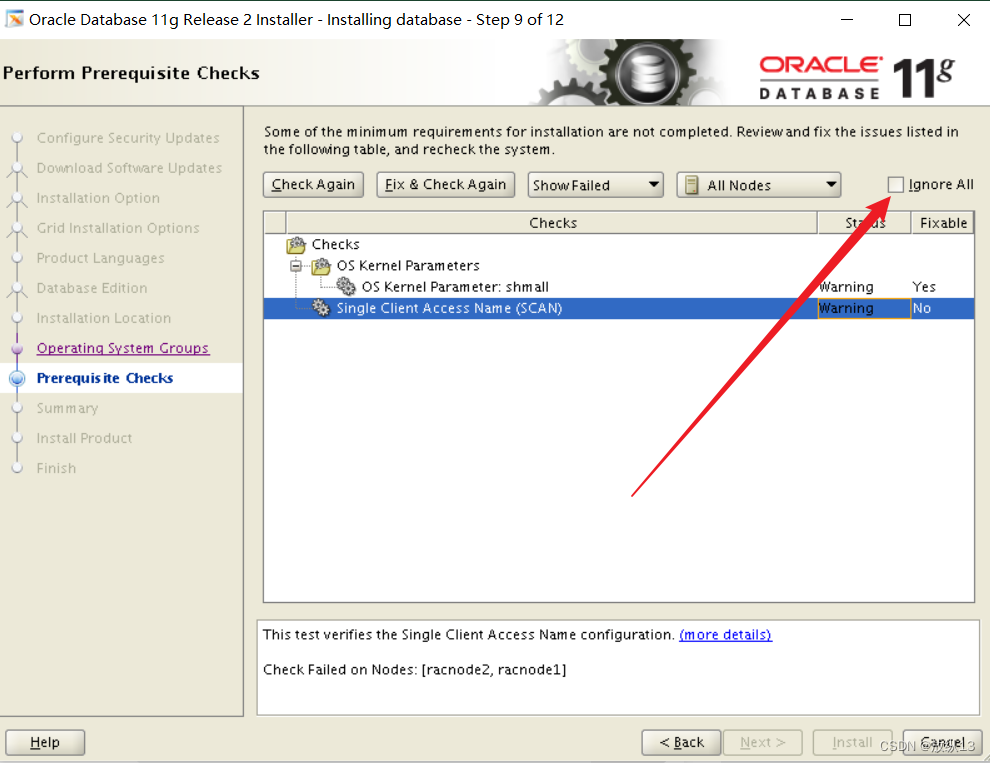

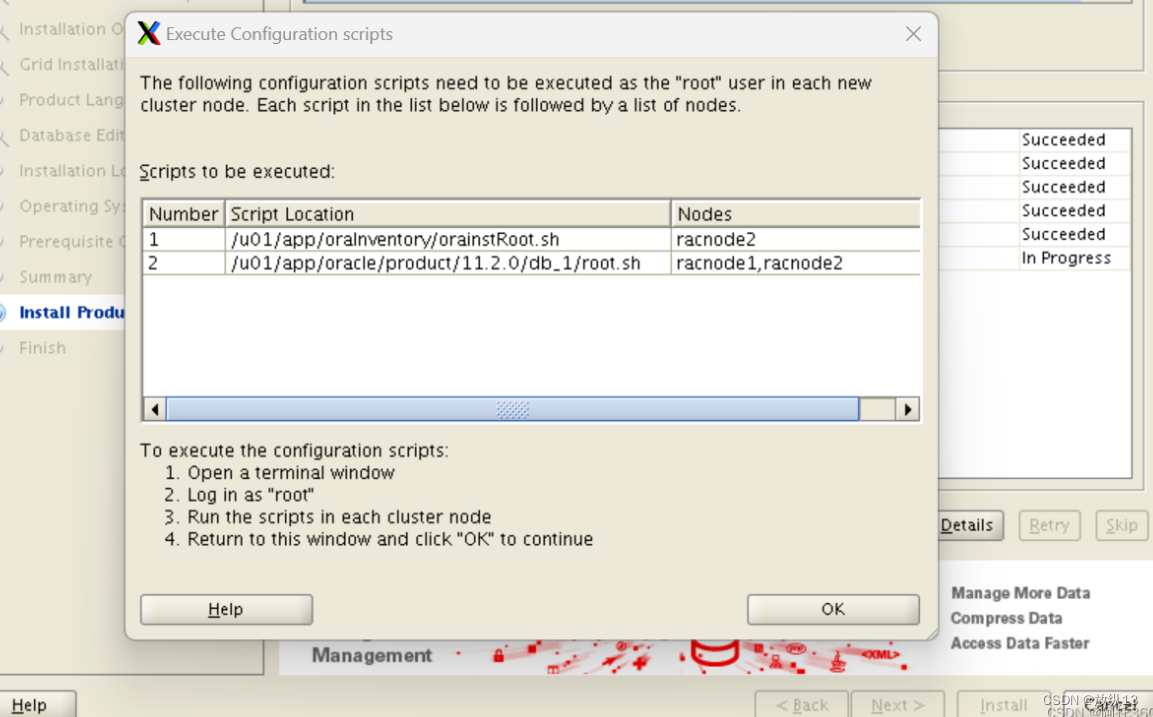

# After installing them, continue the GI installation and click Check Again

# Run the corresponding scripts

/u01/app/oraInventory/orainstRoot.sh

/u01/app/11.2.0/grid/root.sh

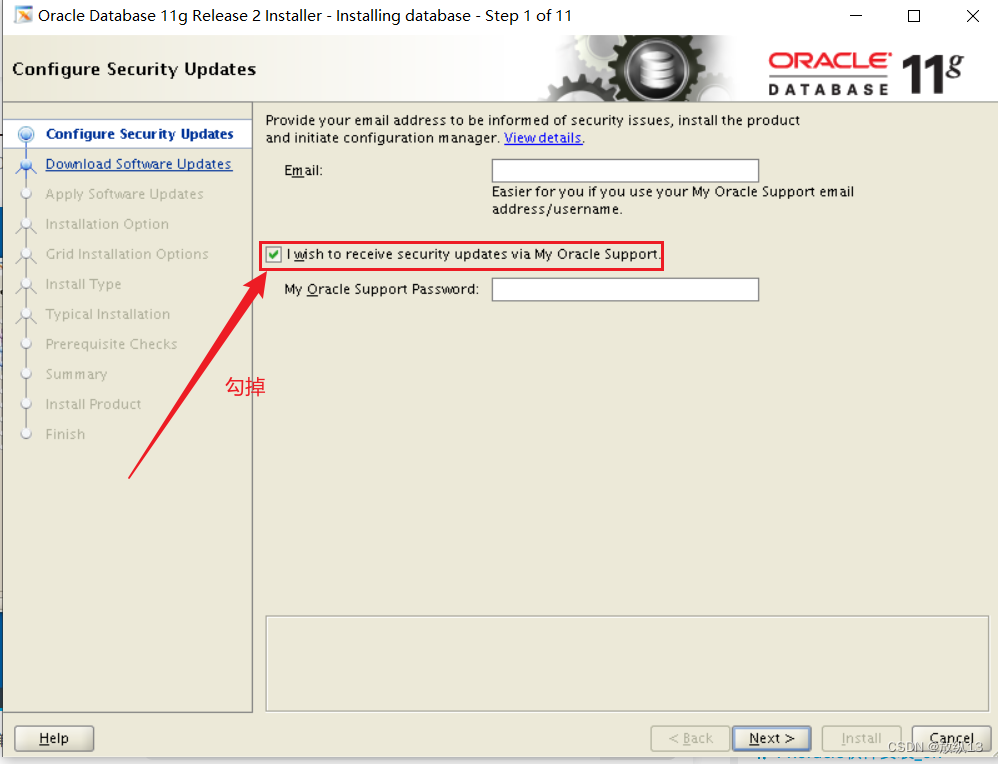

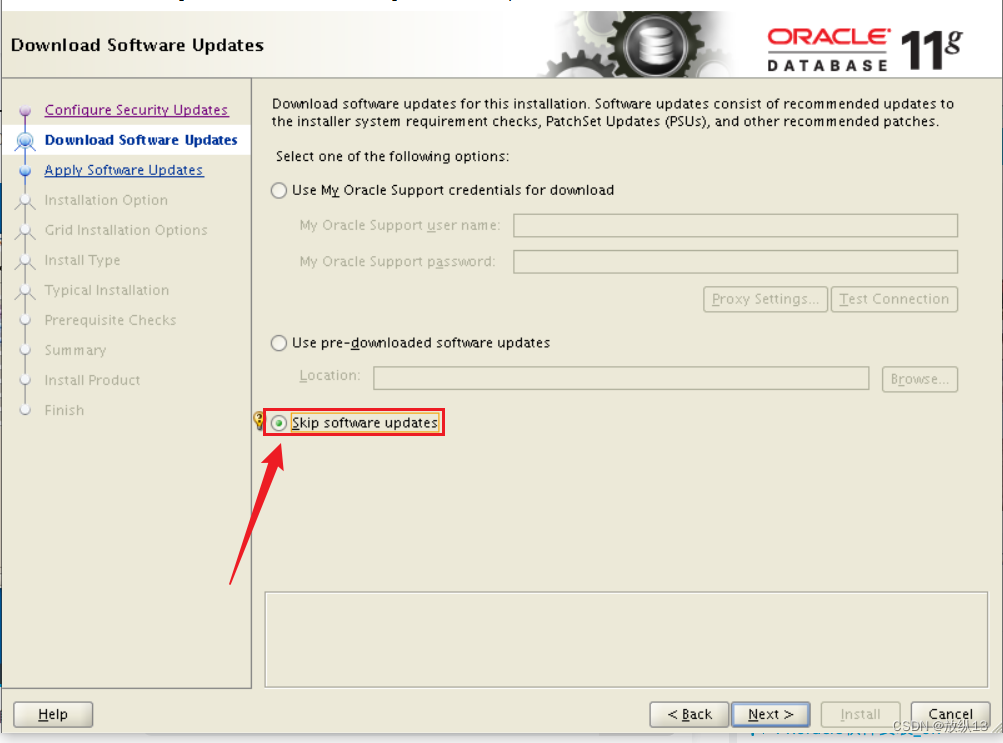

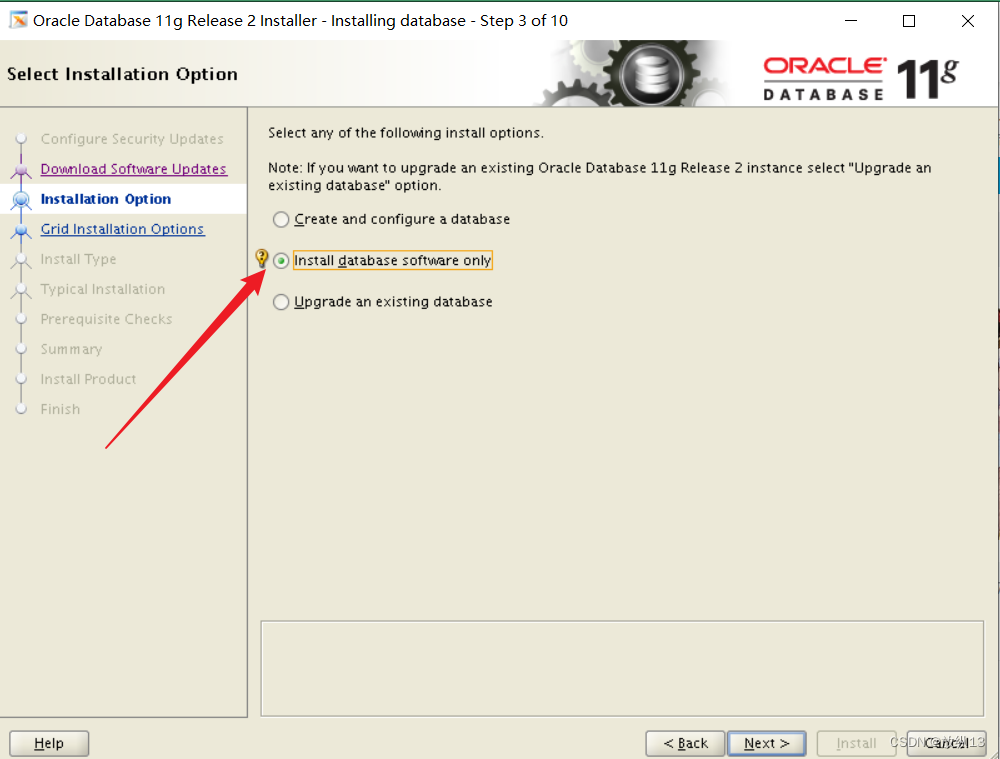

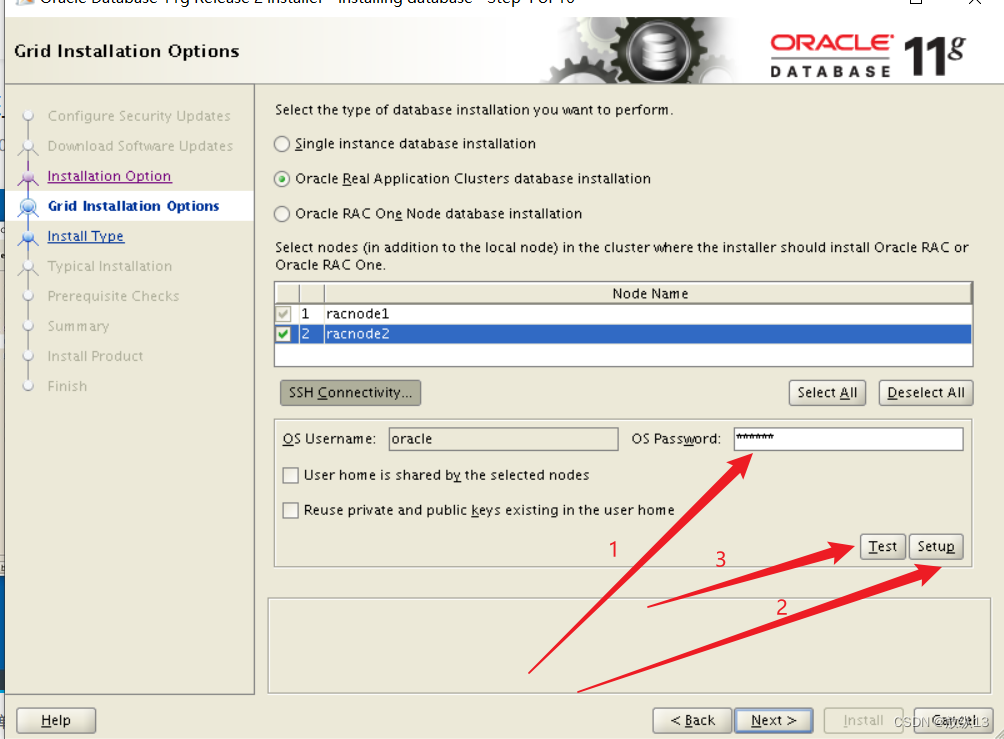

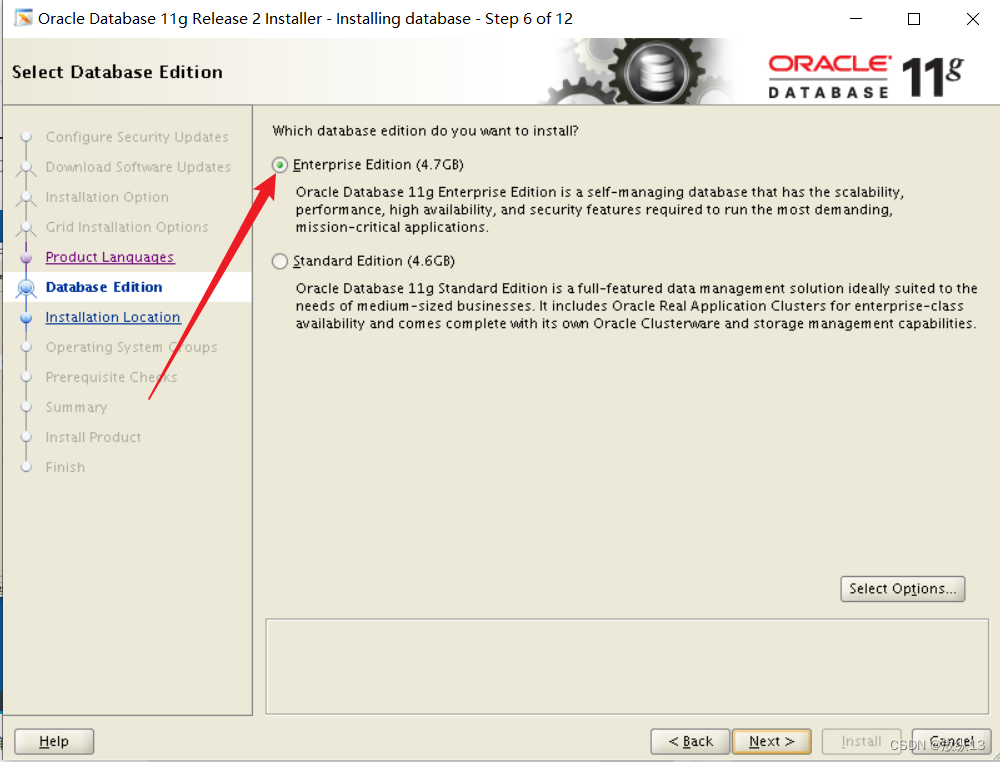

8. Install Oracle

su - oracle

cd /soft/

mkdir oracle

cd oracle/

# Upload the database software, set the appropriate ownership, then unzip the packages

unzip p13390677_112040_Linux-x86-64_1of7.zip

unzip p13390677_112040_Linux-x86-64_2of7.zip

cd database/

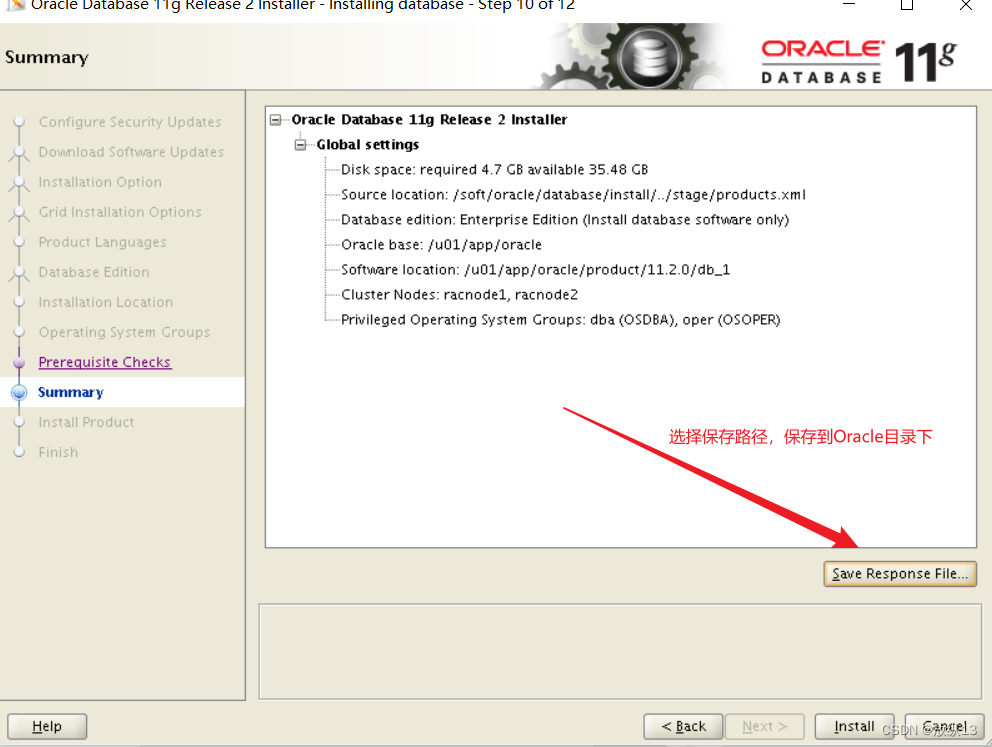

./runInstaller

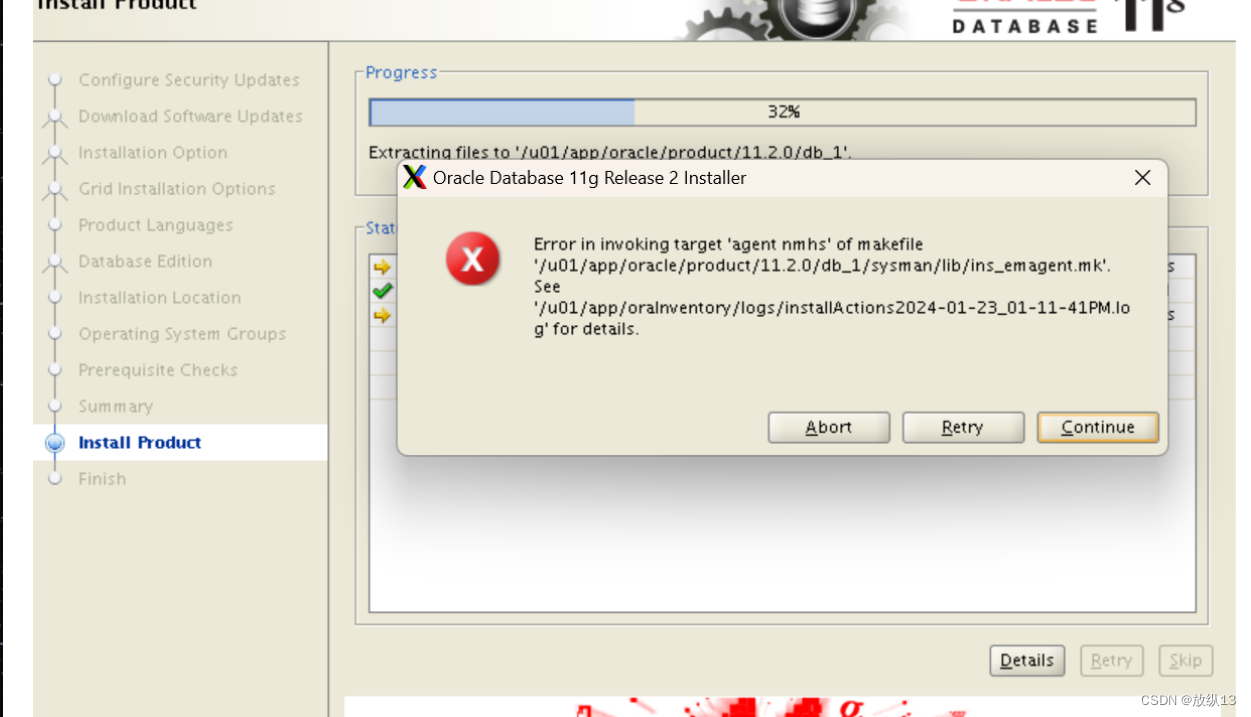

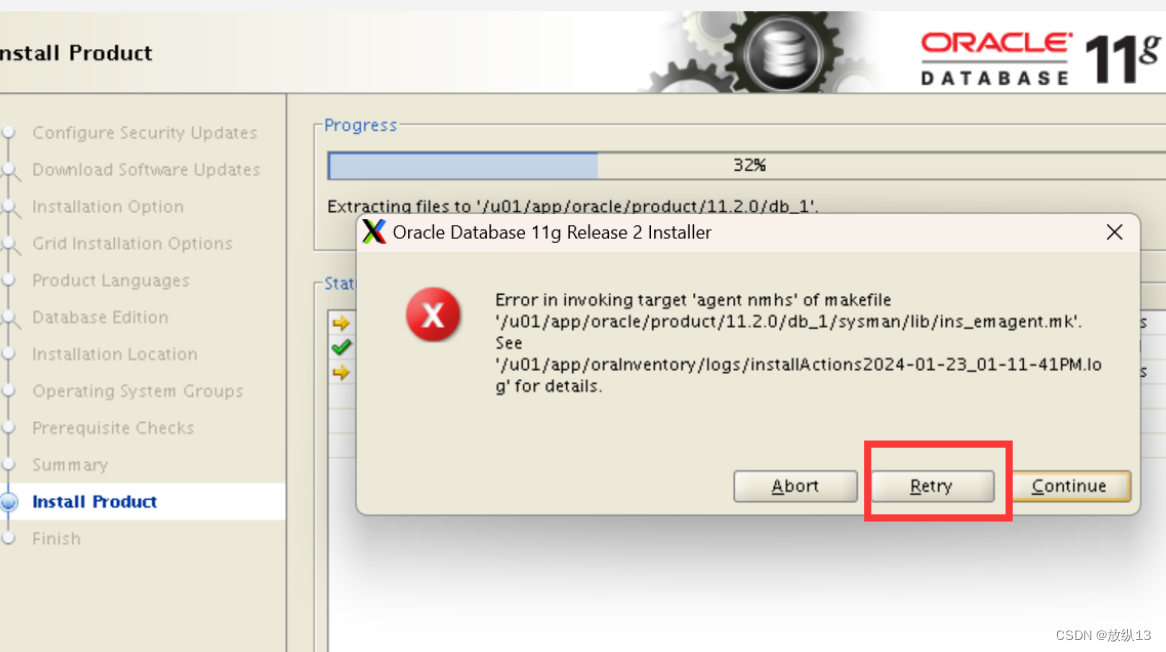

# If the error shown above appears, fix it as follows

cd $ORACLE_HOME

cd sysman/lib/

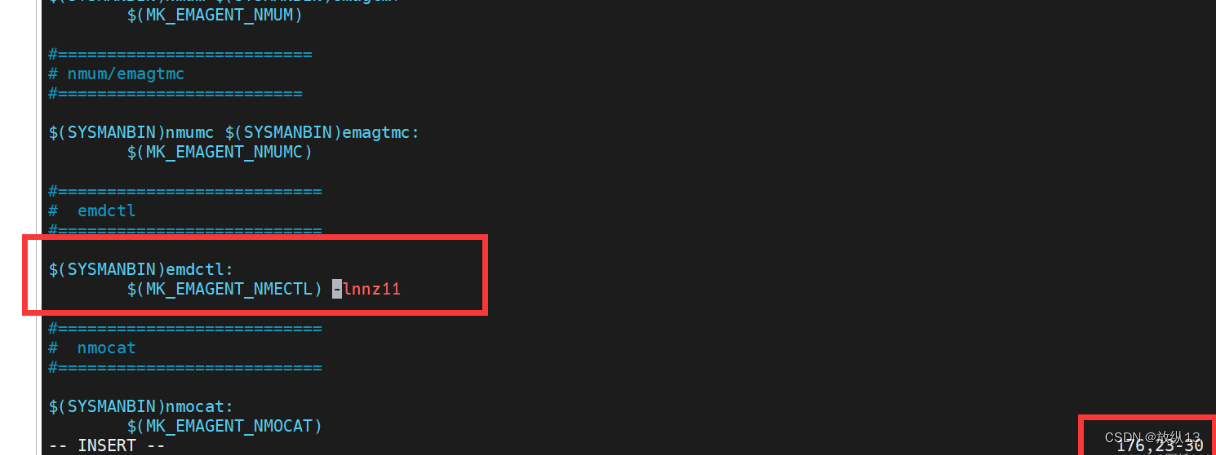

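# Back up the file above first, then edit it and add the string shown in the screenshot

cp ins_emagent.mk ins_emagent.mk.bak

# The string to add (typically appended after $(MK_EMAGENT_NMECTL) in ins_emagent.mk)

-lnnz11

Run the two scripts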

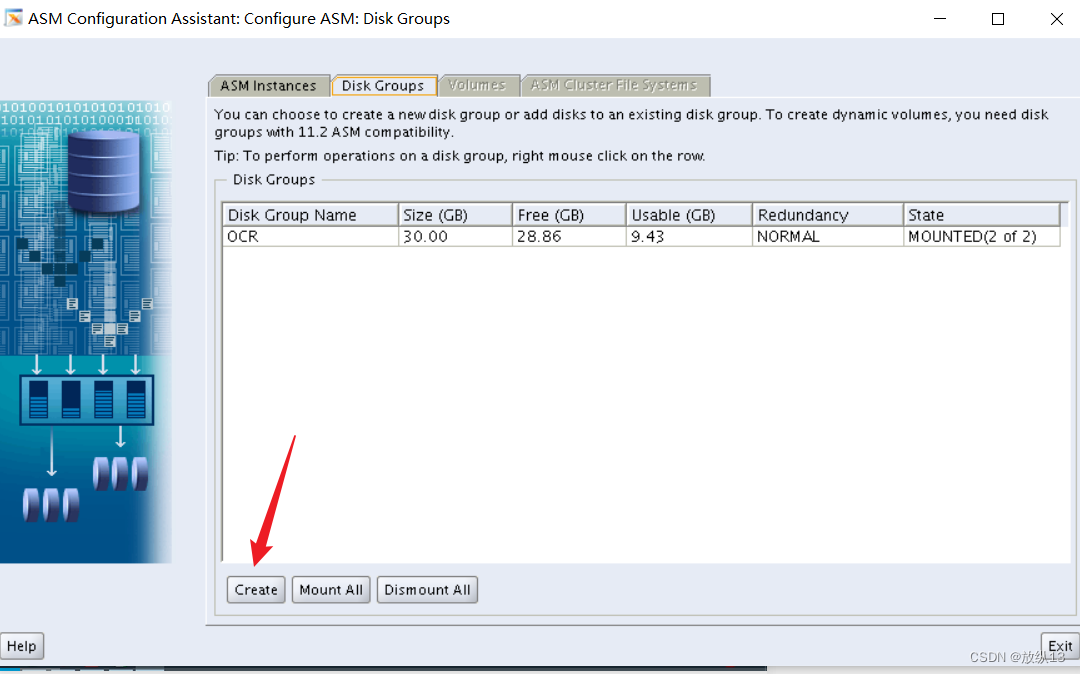

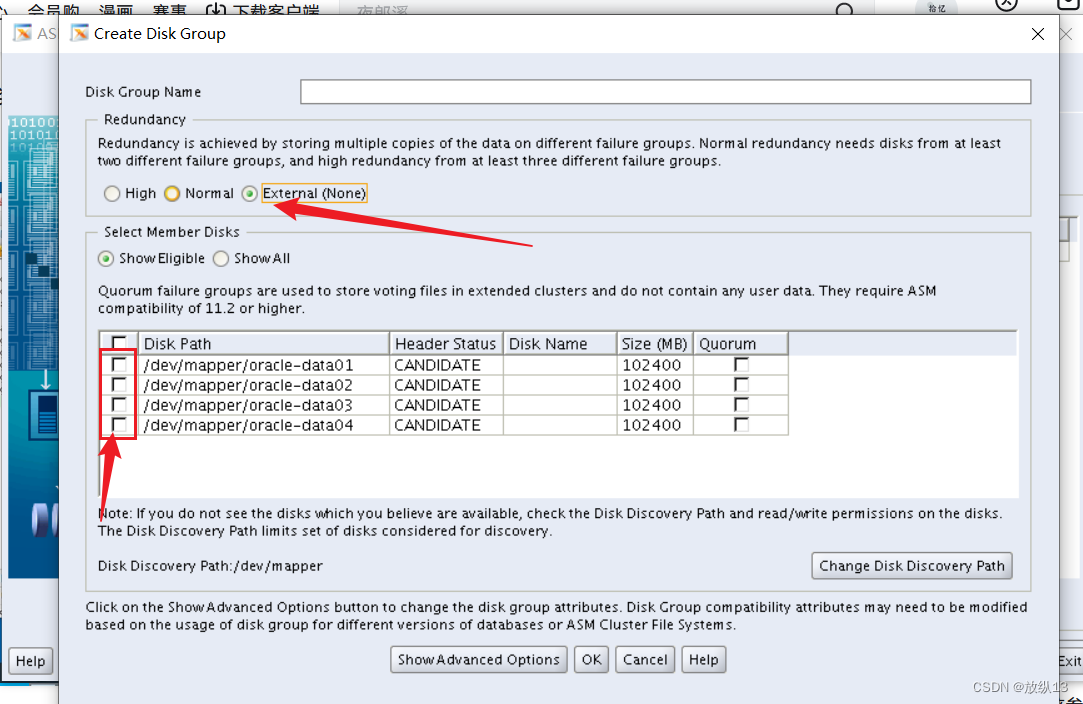

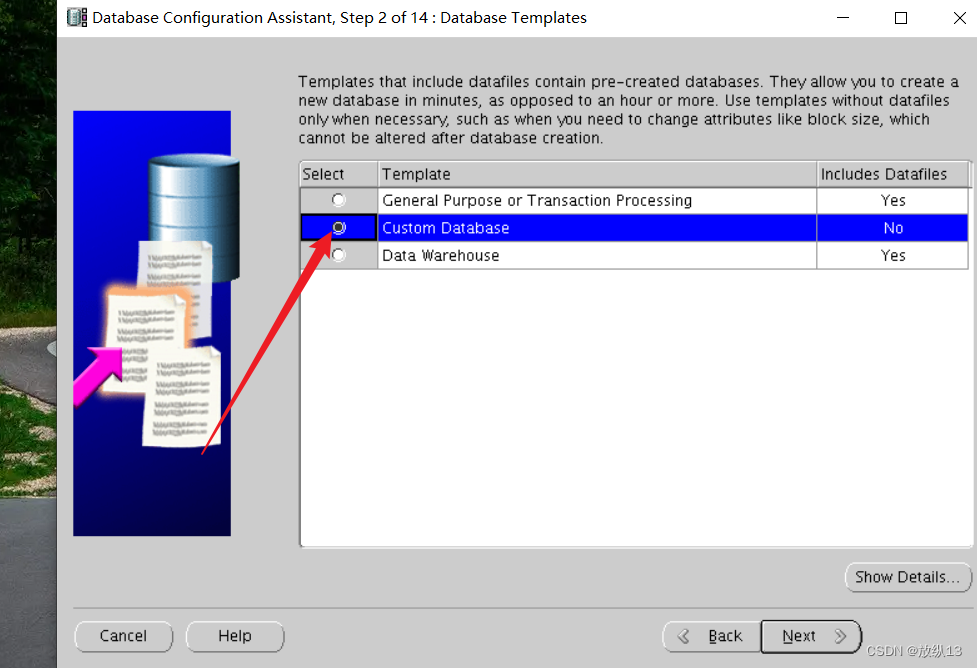

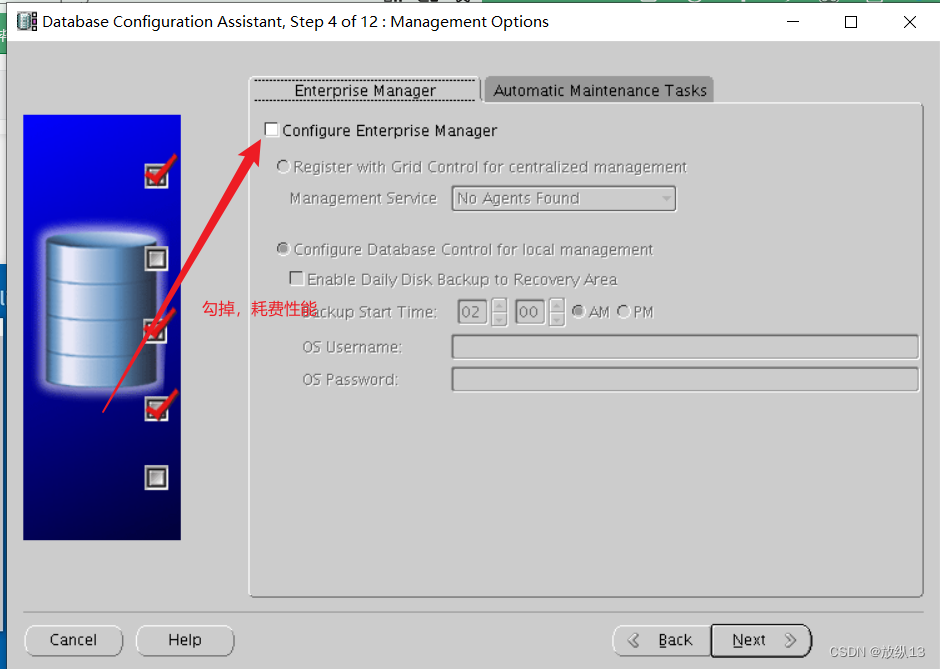

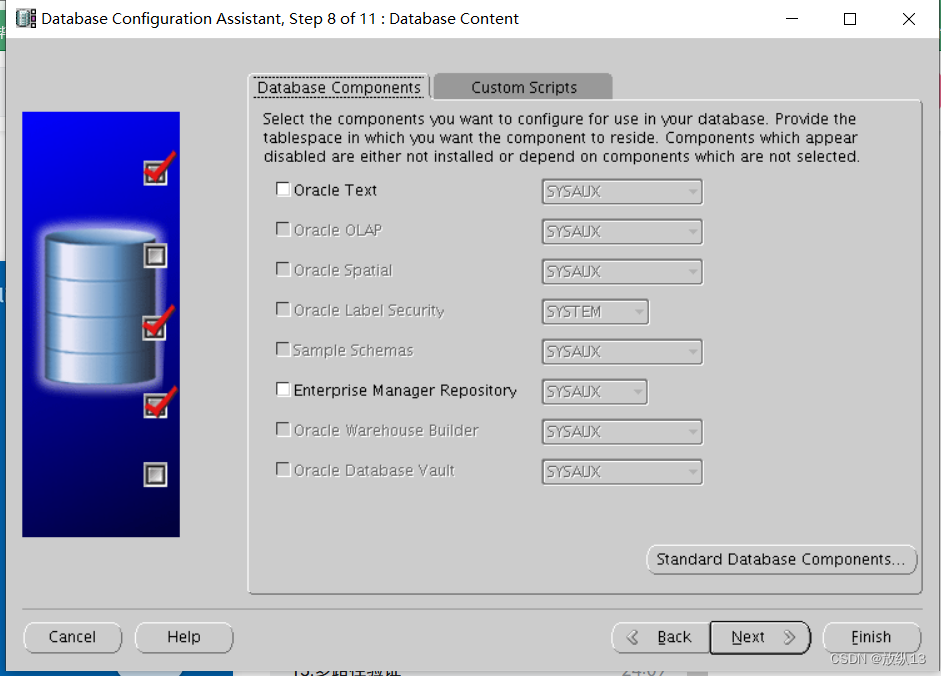

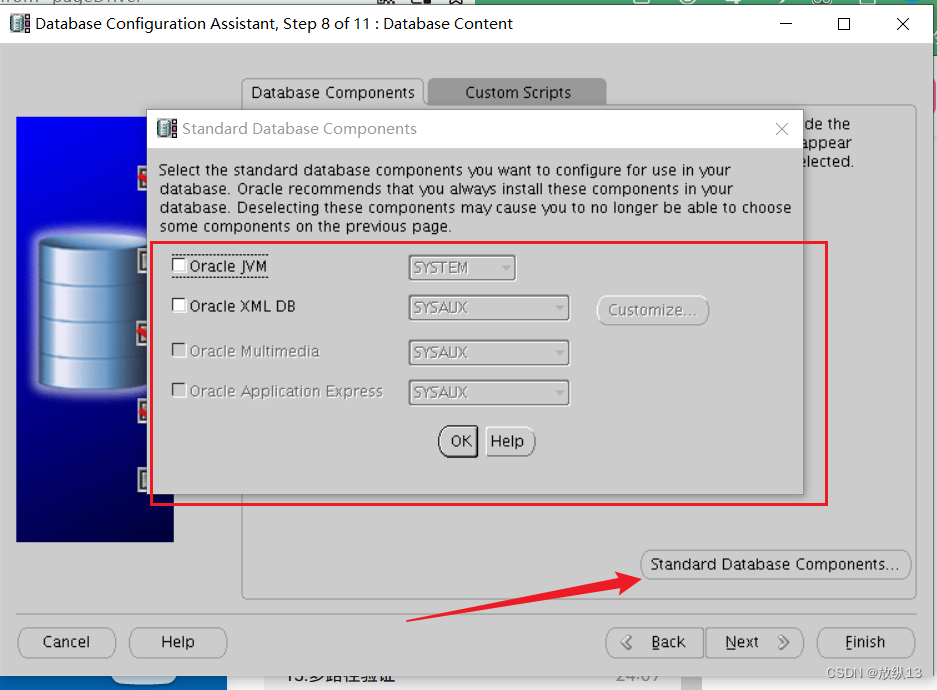

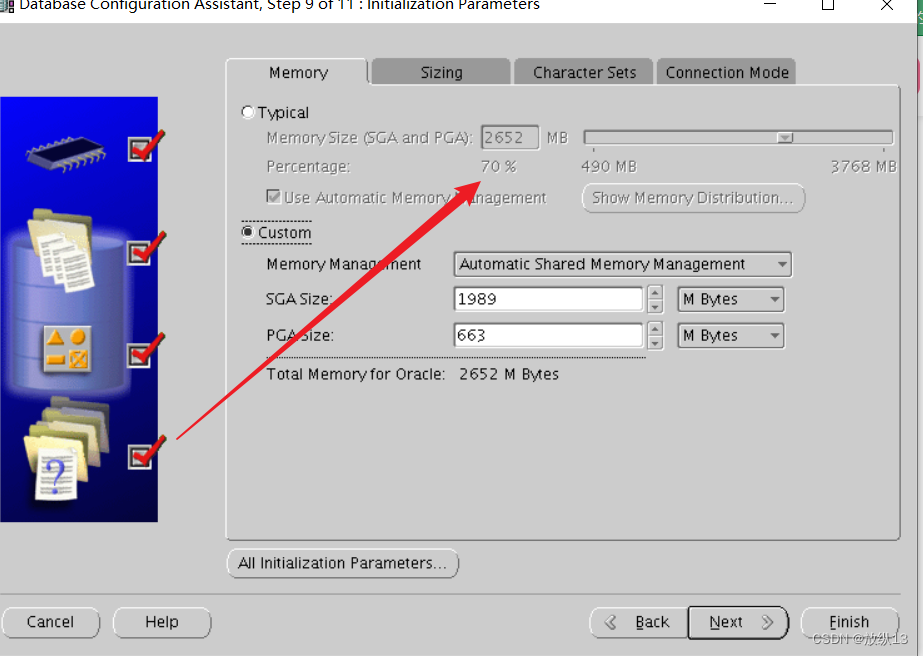

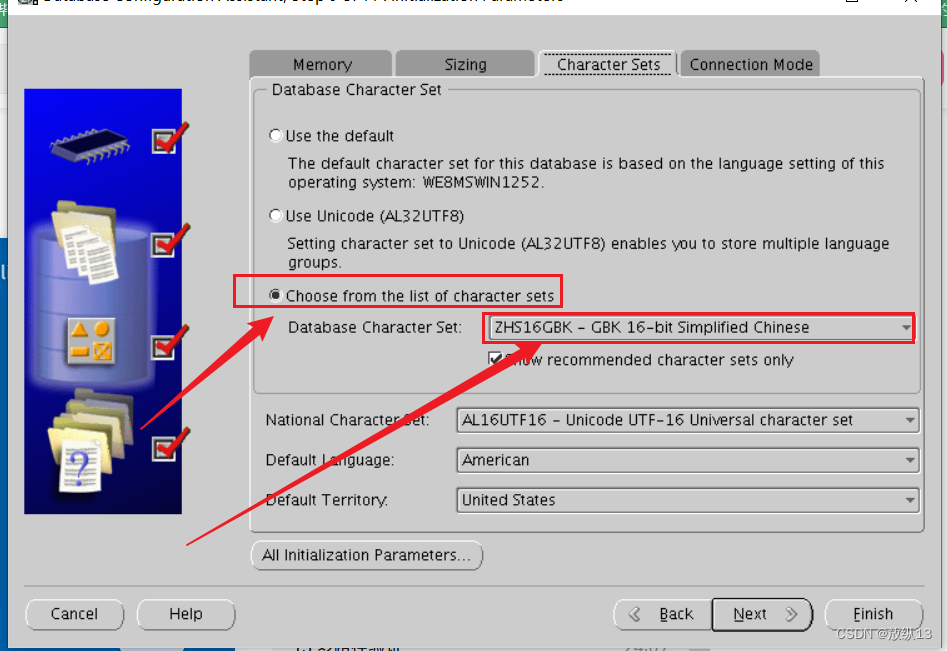

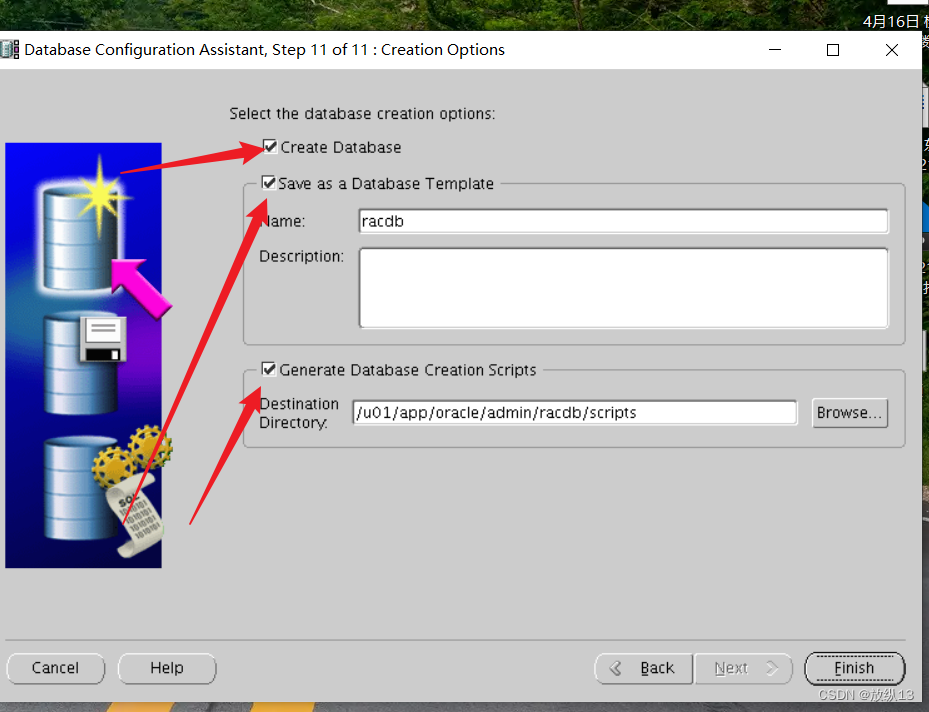

9. Create the database with DBCA

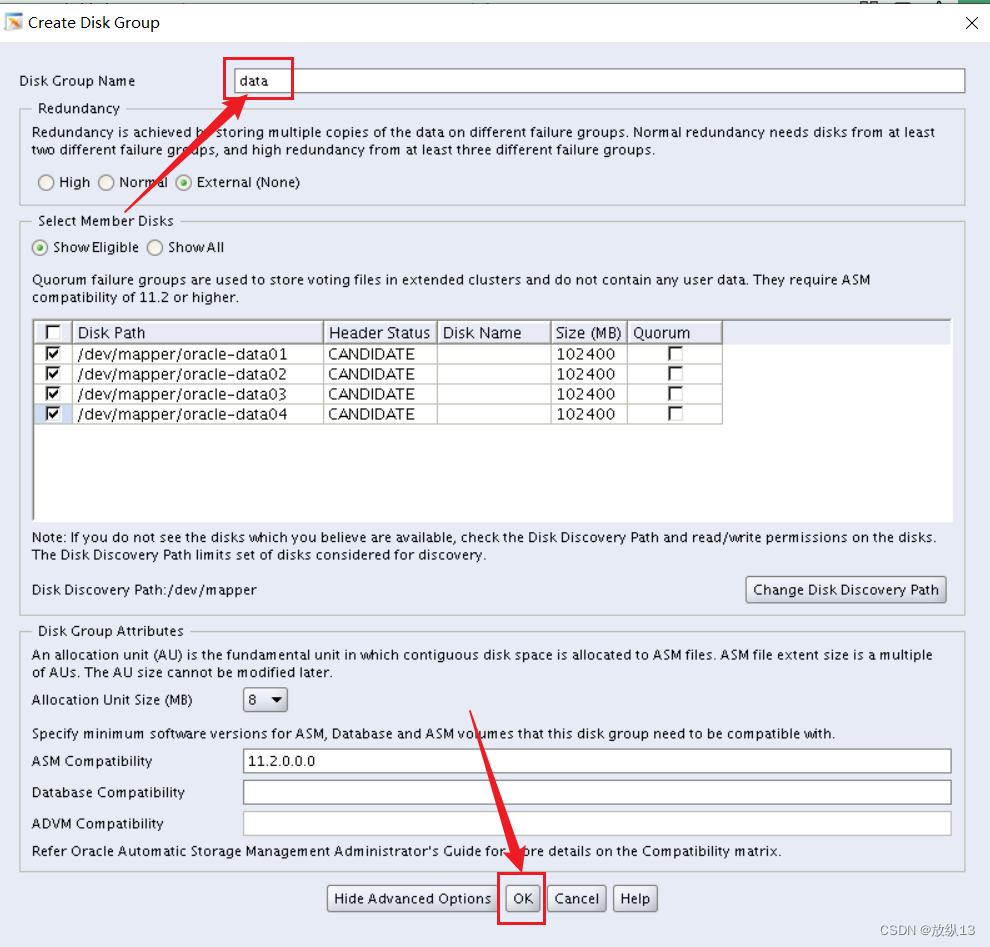

Before configuring the Oracle database, connect as the grid user and configure the ASM disk groups

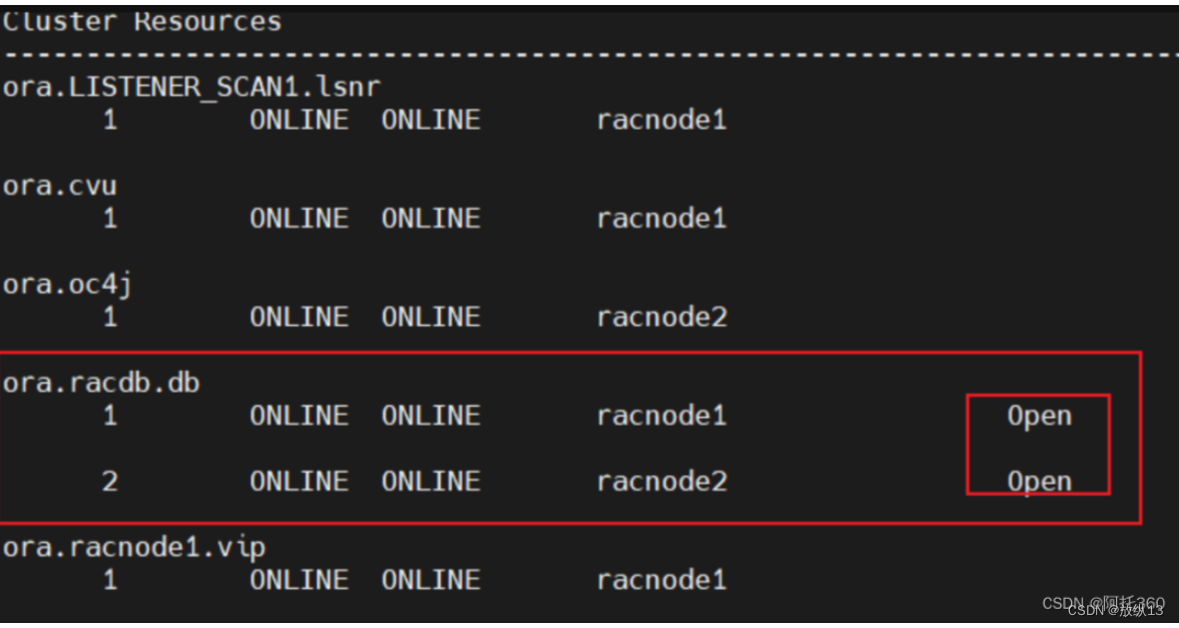

The cluster should be up and running

At this point, the cluster setup is complete!

This setup mainly follows: https://www.bilibili.com/video/BV1FV4y1L7Kt/?spm_id_from=333.788&vd_source=777307e959c73e566042a94ab6c90d38
