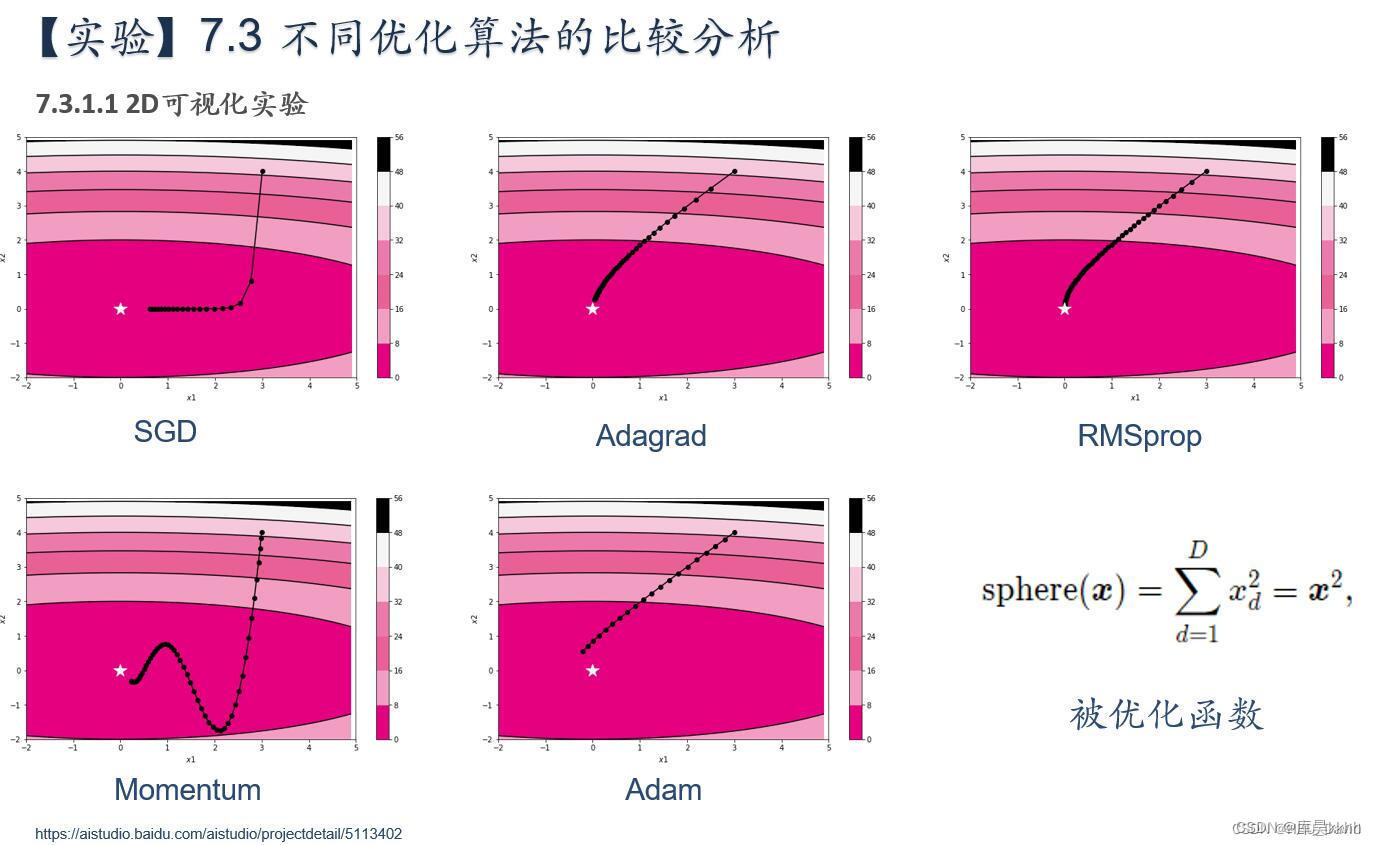

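Briefly introduce the optimization algorithms shown in the figure, implement them in code, and visualize them in 2D.

1. Function to optimize: x^2

(1) SGD: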

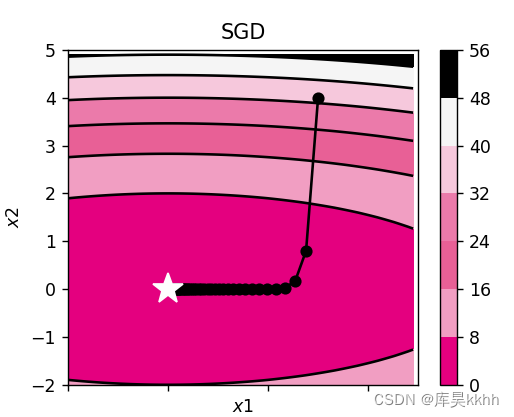

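Stochastic gradient descent (SGD) is a gradient-based optimization algorithm for finding the parameters that minimize a loss function. At each update it randomly selects a single sample or a mini-batch of samples, computes the gradient of the loss on that selection only, and moves the weights a small step against that gradient.

Result: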

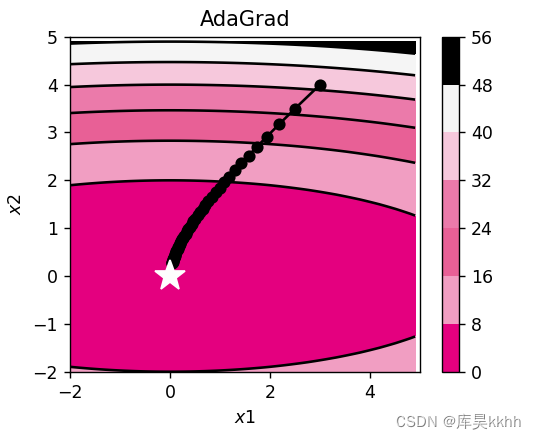
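The sampling step described above can be sketched as follows. This is a minimal illustration on a made-up least-squares problem, not the code that produced the figure; the function name `minibatch_sgd`, the synthetic data, and all hyperparameters are assumptions chosen for the example.

```python
import numpy as np


def minibatch_sgd(X, y, lr=0.1, batch_size=8, epochs=50, seed=0):
    """Fit y ~ X @ w by mini-batch SGD on the mean squared error (illustrative)."""
    rng = np.random.default_rng(seed)
    w = np.zeros(X.shape[1])
    n = X.shape[0]
    for _ in range(epochs):
        order = rng.permutation(n)  # visit the samples in a random order
        for start in range(0, n, batch_size):
            idx = order[start:start + batch_size]
            residual = X[idx] @ w - y[idx]
            # Gradient of the squared error on this batch only
            grad = 2.0 * X[idx].T @ residual / len(idx)
            w -= lr * grad
    return w


# Synthetic, noise-free data generated from known weights (hypothetical example).
rng = np.random.default_rng(1)
X_demo = rng.normal(size=(200, 2))
w_true = np.array([1.5, -0.5])
y_demo = X_demo @ w_true

w_hat = minibatch_sgd(X_demo, y_demo)
print(w_hat)
```

Each update sees only `batch_size` samples, so individual steps are noisy, but on this noise-free problem the estimate should still approach `w_true`.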

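(2) Adagrad:

Adagrad is an adaptive learning rate algorithm. It adjusts the learning rate of each dimension according to the magnitude of the gradients seen in that dimension, which avoids the problem that a single global learning rate rarely suits every dimension.

Result: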

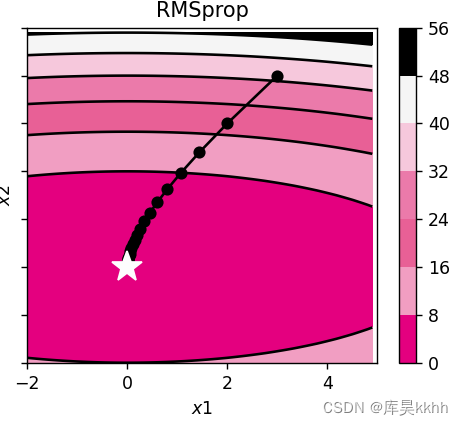
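Written out, the per-dimension scaling described above looks like this. The notation follows the `AdaGrad` class in the full listing (`h` is the accumulator, `lr` the learning rate, and the listing uses $\epsilon = 10^{-7}$); $g$ denotes the current gradient and $\odot$ element-wise multiplication.

$$
h \leftarrow h + g \odot g, \qquad
x \leftarrow x - \frac{\mathrm{lr}}{\sqrt{h} + \epsilon} \odot g
$$

A dimension that has seen large gradients has a large $h$ and therefore takes smaller steps.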

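(3) RMSprop:

RMSprop is an extension of gradient descent designed to address the rapid decay of the learning rate in AdaGrad. Instead of summing all past squared gradients, it keeps an exponentially weighted moving average of the squared mini-batch gradients and uses it to scale the learning rate of each dimension. Because old gradients are gradually forgotten, the effective learning rate no longer decreases monotonically or collapses too quickly, which speeds up optimization.

Result: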

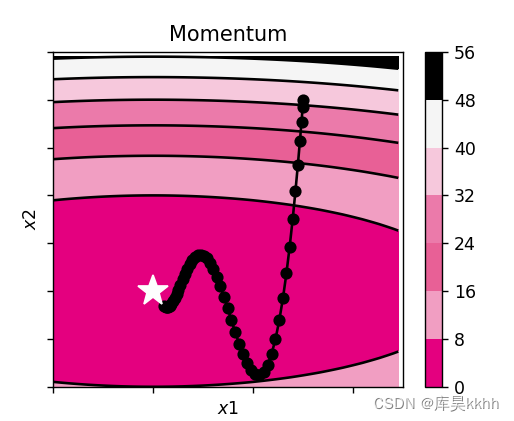
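In the notation of the `RMSprop` class in the full listing, where $\rho$ stands for `decay_rate` and $g$ for the current gradient, the moving average and the update can be written as:

$$
h \leftarrow \rho\, h + (1 - \rho)\, g \odot g, \qquad
x \leftarrow x - \frac{\mathrm{lr}}{\sqrt{h} + \epsilon} \odot g
$$

The only difference from AdaGrad is the factor $\rho$, which lets old squared gradients fade instead of piling up.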

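(4) Momentum:

Momentum is a refinement of gradient descent modeled on momentum in physics. Its core idea is to accumulate past gradients into a velocity term, an exponentially weighted average of the gradient vectors over recent steps, and to update the parameters (for example w and b) along that averaged direction. This damps much of the erratic behavior of plain updates, such as oscillation, so the updates move in an increasingly consistent direction.

Result: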

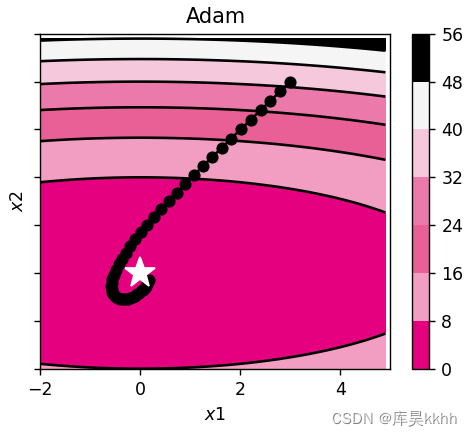
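The weighted averaging is easiest to see from the update rule. Using the names of the `Momentum` class in the full listing ($\mu$ for `momentum`, $v$ for the velocity, $g$ for the current gradient):

$$
v \leftarrow \mu\, v - \mathrm{lr}\, g, \qquad x \leftarrow x + v
$$

Unrolling the recursion from $v_0 = 0$ gives

$$
v_t = -\mathrm{lr} \sum_{k=1}^{t} \mu^{\,t-k}\, g_k ,
$$

so each past gradient $g_k$ still contributes, with a weight that decays geometrically with its age.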

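(5) Adam:

Adam is a first-order optimization algorithm that can replace classical stochastic gradient descent. It iteratively updates the network weights from the training data and is an adaptive learning rate method that combines the ideas of Momentum and RMSprop. When training neural networks, Adam adjusts the learning rate of each parameter dynamically, which helps with nonlinear problems and sparse gradients.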
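The combination of the two ideas can be written out as follows. This mirrors the `Adam` class in the full listing, which folds the bias correction into a per-step learning rate $\mathrm{lr}_t$; $g_t$ is the gradient at step $t$ and $m_0 = v_0 = 0$.

$$
\begin{aligned}
m_t &= \beta_1 m_{t-1} + (1 - \beta_1)\, g_t \\
v_t &= \beta_2 v_{t-1} + (1 - \beta_2)\, g_t \odot g_t \\
\mathrm{lr}_t &= \mathrm{lr}\, \frac{\sqrt{1 - \beta_2^{\,t}}}{1 - \beta_1^{\,t}} \\
x_t &= x_{t-1} - \mathrm{lr}_t\, \frac{m_t}{\sqrt{v_t} + \epsilon}
\end{aligned}
$$

The first line is the Momentum-style average of gradients, the second is the RMSprop-style average of squared gradients.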

Result:

Full code:

# coding: utf-8
import copy
import warnings

import numpy as np
import torch
from matplotlib import pyplot as plt

# Op is imported from a local op.py module that is not shown in this post
from op import Op

# Silence all warnings
warnings.filterwarnings('ignore')

class OptimizedFunction(Op):
    """Weighted quadratic f(x) = w^T x^2, with the square taken element-wise."""

    def __init__(self, w):
        super(OptimizedFunction, self).__init__()
        self.w = w
        self.params = {'x': 0}
        self.grads = {'x': 0}

    def forward(self, x):
        self.params['x'] = x
        # Cast to float32 so the dtype matches w
        return torch.matmul(self.w, torch.square(self.params['x']).to(torch.float32))

    def backward(self):
        # df/dx = 2 * w * x (element-wise)
        self.grads['x'] = 2 * torch.multiply(self.w, self.params['x'])

class SGD:
    """Stochastic gradient descent (SGD)."""

    def __init__(self, lr=0.01):
        self.lr = torch.tensor(lr)

    def update(self, params, grads):
        for key in params.keys():
            params[key] -= self.lr * grads[key]

class Momentum:
    """Momentum SGD"""

    def __init__(self, lr=0.01, momentum=0.9):
        self.lr = torch.tensor(lr)
        self.momentum = torch.tensor(momentum)
        self.v = None

    def update(self, params, grads):
        if self.v is None:
            # Lazily create one velocity tensor per parameter
            self.v = {}
            for key, val in params.items():
                self.v[key] = torch.zeros_like(val)
        for key in params.keys():
            self.v[key] = self.momentum * self.v[key] - self.lr * grads[key]
            params[key] += self.v[key]

class Nesterov:
    """Nesterov's Accelerated Gradient (http://arxiv.org/abs/1212.0901)"""

    def __init__(self, lr=0.01, momentum=0.9):
        self.lr = lr
        self.momentum = torch.tensor(momentum)
        self.v = None

    def update(self, params, grads):
        if self.v is None:
            self.v = {}
            for key, val in params.items():
                self.v[key] = torch.zeros_like(val)
        for key in params.keys():
            self.v[key] *= self.momentum
            self.v[key] -= self.lr * grads[key]
            params[key] += self.momentum * self.momentum * self.v[key]
            params[key] -= (1 + self.momentum) * self.lr * grads[key]

class AdaGrad:
    """AdaGrad"""

    def __init__(self, lr=0.5):
        self.lr = torch.tensor(lr)
        self.h = None

    def update(self, params, grads):
        if self.h is None:
            self.h = {}
            for key, val in params.items():
                self.h[key] = torch.zeros_like(val)
        for key in params.keys():
            # Accumulate squared gradients; the sum never decreases
            self.h[key] += grads[key] * grads[key]
            params[key] -= self.lr * grads[key] / (torch.sqrt(self.h[key]) + 1e-7)

class RMSprop:
    """RMSprop"""

    def __init__(self, lr=0.1, decay_rate=0.99):
        self.lr = torch.tensor(lr)
        self.decay_rate = torch.tensor(decay_rate)
        self.h = None

    def update(self, params, grads):
        if self.h is None:
            self.h = {}
            for key, val in params.items():
                self.h[key] = torch.zeros_like(val)
        for key in params.keys():
            # Exponentially weighted moving average of squared gradients
            self.h[key] *= self.decay_rate
            self.h[key] += (1 - self.decay_rate) * grads[key] * grads[key]
            params[key] -= self.lr * grads[key] / (torch.sqrt(self.h[key]) + 1e-7)

class Adam:
    """Adam (http://arxiv.org/abs/1412.6980v8)"""

    def __init__(self, lr=0.2, beta1=0.9, beta2=0.99):
        self.lr = torch.tensor(lr)
        self.beta1 = torch.tensor(beta1)
        self.beta2 = torch.tensor(beta2)
        self.iter = 0
        self.m = None
        self.v = None

    def update(self, params, grads):
        if self.m is None:
            self.m, self.v = {}, {}
            for key, val in params.items():
                self.m[key] = torch.zeros_like(val)
                self.v[key] = torch.zeros_like(val)
        self.iter += 1
        # Bias correction folded into the step size
        lr_t = self.lr * torch.sqrt(1.0 - self.beta2 ** self.iter) / (1.0 - self.beta1 ** self.iter)
        for key in params.keys():
            self.m[key] += (1 - self.beta1) * (grads[key] - self.m[key])
            self.v[key] += (1 - self.beta2) * (grads[key] ** 2 - self.v[key])
            params[key] -= lr_t * self.m[key] / (torch.sqrt(self.v[key]) + 1e-7)

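def train_f(model, optimizer, x_init, epoch):
    """Run `epoch` update steps from x_init; return the visited points and the losses."""
    x = x_init
    all_x = []
    losses = []
    for i in range(epoch):
        # Copy the current point, because the optimizer updates x in place
        all_x.append(x.numpy().copy())
        loss = model(x)
        losses.append(loss)
        model.backward()
        optimizer.update(params=model.params, grads=model.grads)
        x = model.params['x']
    return torch.tensor(np.array(all_x)), losses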

# Visualize the parameter updates
class Visualization(object):
    def __init__(self):
        """Build the grid on which the contours are drawn."""
        # Only draw the surface for x1 and x2 in the interval [-5, 5]
        x1 = np.arange(-5, 5, 0.1)
        x2 = np.arange(-5, 5, 0.1)
        x1, x2 = np.meshgrid(x1, x2)
        self.init_x = torch.tensor(np.array([x1, x2]))

    def plot_2d(self, ax, model, x, ax_i, ax_j, fig_name):
        """Plot the parameter update trajectory on top of the contours."""
        z = model(self.init_x.transpose(0, 1))
        cp = ax[ax_i, ax_j].contourf(self.init_x[0], self.init_x[1], z,
                                     colors=['#e4007f', '#f19ec2', '#e86096', '#eb7aaa', '#f6c8dc', '#f5f5f5',
                                             '#000000'])
        c = ax[ax_i, ax_j].contour(self.init_x[0], self.init_x[1], z, colors='black')
        # fig is the module-level figure created below
        cbar = fig.colorbar(cp, ax=ax[ax_i, ax_j])
        ax[ax_i, ax_j].plot(x[:, 0], x[:, 1], '-o', color='#000000')
        # Mark the minimum at the origin
        ax[ax_i, ax_j].plot(0, 0, '*', markersize=18, color='#fefefe')
        ax[ax_i, ax_j].set_xlabel('$x1$')
        ax[ax_i, ax_j].set_ylabel('$x2$')
        ax[ax_i, ax_j].set_xlim((-2, 5))
        ax[ax_i, ax_j].set_ylim((-2, 5))
        ax[ax_i, ax_j].set_title(fig_name)

def train_and_plot_f(ax, model, optimizer, epoch, ax_i, ax_j, fig_name):
    """Train the model and visualize the parameter update trajectory."""
    # Set the initial value of x
    x_init = torch.tensor([3, 4], dtype=torch.float32)
    x, losses = train_f(model, optimizer, x_init, epoch)
    # Show the update trajectory of x1 and x2
    vis = Visualization()
    vis.plot_2d(ax, model, x, ax_i, ax_j, fig_name)

# Fix the random seed
torch.manual_seed(0)
w = torch.tensor([0.2, 2])
# Instantiate the model
model = OptimizedFunction(w)
# Create the figure and split it into panels
fig, ax = plt.subplots(2, 3, sharex=True, sharey=True, figsize=(10, 6))
opt1 = SGD(lr=0.2)
train_and_plot_f(ax, model, opt1, epoch=50, ax_i=0, ax_j=0, fig_name='SGD')
opt2 = Momentum()
train_and_plot_f(ax, model, opt2, epoch=50, ax_i=0, ax_j=1, fig_name='Momentum')
opt3 = AdaGrad()
train_and_plot_f(ax, model, opt3, epoch=50, ax_i=0, ax_j=2, fig_name='AdaGrad')
opt4 = RMSprop()
train_and_plot_f(ax, model, opt4, epoch=50, ax_i=1, ax_j=0, fig_name='RMSprop')
opt5 = Adam()
train_and_plot_f(ax, model, opt5, epoch=50, ax_i=1, ax_j=1, fig_name='Adam')

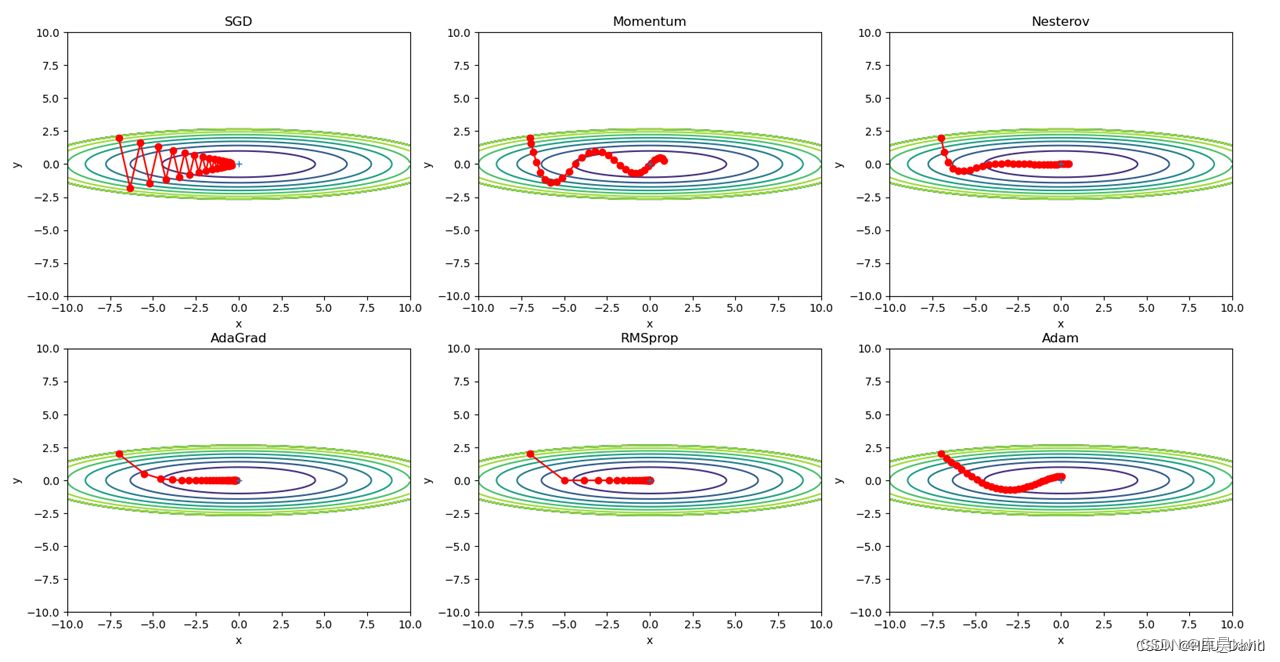

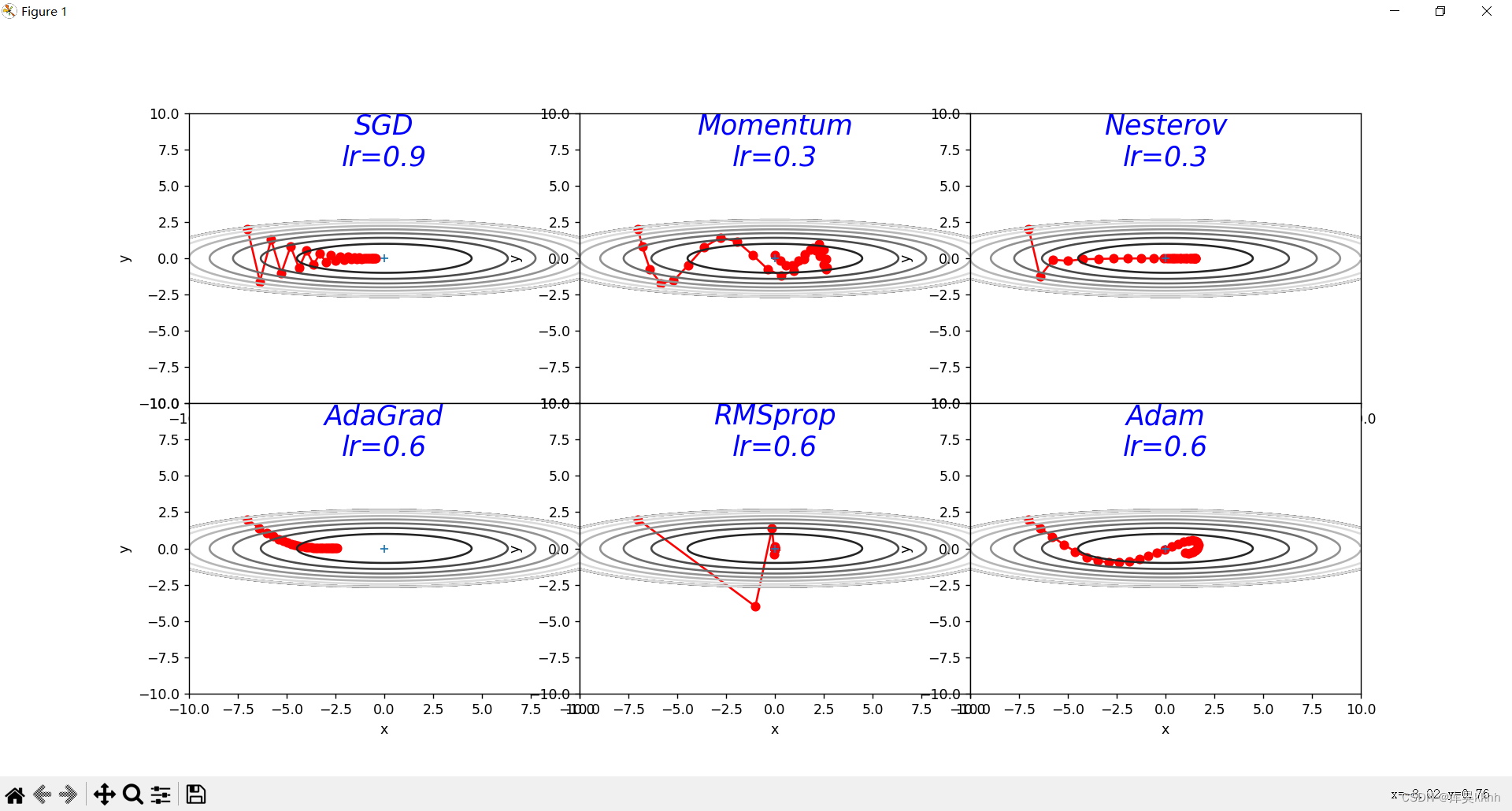

plt.show()2. 被优化函数  x^2/20+y^2

x^2/20+y^2

Code:

# coding: utf-8
import numpy as np
import matplotlib.pyplot as plt
from collections import OrderedDict

class SGD:
    """Stochastic gradient descent (SGD)."""

    def __init__(self, lr=0.01):
        self.lr = lr

    def update(self, params, grads):
        for key in params.keys():
            params[key] -= self.lr * grads[key]

class Momentum:
    """Momentum SGD"""

    def __init__(self, lr=0.01, momentum=0.9):
        self.lr = lr
        self.momentum = momentum
        self.v = None

    def update(self, params, grads):
        if self.v is None:
            self.v = {}
            for key, val in params.items():
                self.v[key] = np.zeros_like(val)
        for key in params.keys():
            self.v[key] = self.momentum * self.v[key] - self.lr * grads[key]
            params[key] += self.v[key]

class Nesterov:
    """Nesterov's Accelerated Gradient (http://arxiv.org/abs/1212.0901)"""

    def __init__(self, lr=0.01, momentum=0.9):
        self.lr = lr
        self.momentum = momentum
        self.v = None

    def update(self, params, grads):
        if self.v is None:
            self.v = {}
            for key, val in params.items():
                self.v[key] = np.zeros_like(val)
        for key in params.keys():
            self.v[key] *= self.momentum
            self.v[key] -= self.lr * grads[key]
            params[key] += self.momentum * self.momentum * self.v[key]
            params[key] -= (1 + self.momentum) * self.lr * grads[key]

class AdaGrad:
    """AdaGrad"""

    def __init__(self, lr=0.01):
        self.lr = lr
        self.h = None

    def update(self, params, grads):
        if self.h is None:
            self.h = {}
            for key, val in params.items():
                self.h[key] = np.zeros_like(val)
        for key in params.keys():
            self.h[key] += grads[key] * grads[key]
            params[key] -= self.lr * grads[key] / (np.sqrt(self.h[key]) + 1e-7)

class RMSprop:
    """RMSprop"""

    def __init__(self, lr=0.01, decay_rate=0.99):
        self.lr = lr
        self.decay_rate = decay_rate
        self.h = None

    def update(self, params, grads):
        if self.h is None:
            self.h = {}
            for key, val in params.items():
                self.h[key] = np.zeros_like(val)
        for key in params.keys():
            self.h[key] *= self.decay_rate
            self.h[key] += (1 - self.decay_rate) * grads[key] * grads[key]
            params[key] -= self.lr * grads[key] / (np.sqrt(self.h[key]) + 1e-7)

class Adam:
    """Adam (http://arxiv.org/abs/1412.6980v8)"""

    def __init__(self, lr=0.001, beta1=0.9, beta2=0.999):
        self.lr = lr
        self.beta1 = beta1
        self.beta2 = beta2
        self.iter = 0
        self.m = None
        self.v = None

    def update(self, params, grads):
        if self.m is None:
            self.m, self.v = {}, {}
            for key, val in params.items():
                self.m[key] = np.zeros_like(val)
                self.v[key] = np.zeros_like(val)
        self.iter += 1
        lr_t = self.lr * np.sqrt(1.0 - self.beta2 ** self.iter) / (1.0 - self.beta1 ** self.iter)
        for key in params.keys():
            self.m[key] += (1 - self.beta1) * (grads[key] - self.m[key])
            self.v[key] += (1 - self.beta2) * (grads[key] ** 2 - self.v[key])
            params[key] -= lr_t * self.m[key] / (np.sqrt(self.v[key]) + 1e-7)

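def f(x, y):
    """Function to optimize: f(x, y) = x^2 / 20 + y^2."""
    return x ** 2 / 20.0 + y ** 2

def df(x, y):
    """Gradient of f: (df/dx, df/dy) = (x / 10, 2y)."""
    return x / 10.0, 2.0 * y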

init_pos = (-7.0, 2.0)
params = {}
params['x'], params['y'] = init_pos[0], init_pos[1]
grads = {}
grads['x'], grads['y'] = 0, 0

# One learning rate per optimizer, in the order they are registered below
learningrate = [0.9, 0.3, 0.3, 0.6, 0.6, 0.6]
optimizers = OrderedDict()
optimizers["SGD"] = SGD(lr=learningrate[0])
optimizers["Momentum"] = Momentum(lr=learningrate[1])
optimizers["Nesterov"] = Nesterov(lr=learningrate[2])
optimizers["AdaGrad"] = AdaGrad(lr=learningrate[3])
optimizers["RMSprop"] = RMSprop(lr=learningrate[4])
optimizers["Adam"] = Adam(lr=learningrate[5])

idx = 1    # index of the next subplot
id_lr = 0  # index into learningrate, used for the panel label

for key in optimizers:
    optimizer = optimizers[key]
    lr = learningrate[id_lr]
    id_lr = id_lr + 1
    x_history = []
    y_history = []
    # Restart every optimizer from the same initial point
    params['x'], params['y'] = init_pos[0], init_pos[1]
    for i in range(30):
        x_history.append(params['x'])
        y_history.append(params['y'])
        grads['x'], grads['y'] = df(params['x'], params['y'])
        optimizer.update(params, grads)

    x = np.arange(-10, 10, 0.01)
    y = np.arange(-5, 5, 0.01)
    X, Y = np.meshgrid(x, y)
    Z = f(X, Y)
    # for simple contour line
    mask = Z > 7
    Z[mask] = 0

    # plot
    plt.subplot(2, 3, idx)
    idx += 1
    plt.plot(x_history, y_history, 'o-', color="r")
    # plt.contour(X, Y, Z)  # contour lines with the default colormap
    plt.contour(X, Y, Z, cmap='gray')  # contour lines in grayscale
    plt.ylim(-10, 10)
    plt.xlim(-10, 10)
    plt.plot(0, 0, '+')
    # plt.axis('off')
    # plt.title(key+'\nlr='+str(lr), fontstyle='italic')
    plt.text(0, 10, key + '\nlr=' + str(lr), fontsize=20, color="b",
             verticalalignment='top', horizontalalignment='center', fontstyle='italic')
    plt.xlabel("x")
    plt.ylabel("y")

plt.subplots_adjust(wspace=0, hspace=0)  # adjust the spacing between subplots
plt.show()

Result:

3. Explain how the different trajectories arise

Analyze the strengths and weaknesses of each algorithm

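(1) SGD:

Why the trajectory looks this way: the path zigzags up and down toward the center, with the swings getting smaller and more tightly packed. The negative gradient does not in general point at the minimum, so SGD can follow an inefficient path. When the surface changes steeply in y but only gently in x, the gradient is dominated by its y component, so each step mostly jumps across the valley and makes little progress along it, which produces the zigzag.

Pros: each update is cheap to compute, so the parameters are updated quickly and training is fast.

Cons: with a fixed learning rate the updates can keep oscillating instead of settling as training proceeds; it may also get stuck in a local optimum, or fail to converge properly.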
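The zigzag can be checked by hand for the second test function. The sketch below assumes the starting point (-7, 2) and the SGD learning rate 0.9 from the second listing, and simply repeats the plain gradient step a few times; the variable names are chosen for this illustration.

```python
def df(x, y):
    """Gradient of f(x, y) = x**2 / 20 + y**2."""
    return x / 10.0, 2.0 * y


lr = 0.9          # SGD learning rate used in the second listing
x, y = -7.0, 2.0  # starting point used in the second listing
path = [(x, y)]
for _ in range(5):
    gx, gy = df(x, y)
    x, y = x - lr * gx, y - lr * gy
    path.append((x, y))

# Each step multiplies x by (1 - lr / 10) = 0.91 and y by (1 - 2 * lr) = -0.8,
# so y flips sign on every step while x only creeps toward 0.
for px, py in path:
    print(f"x = {px:+.3f}, y = {py:+.3f}")
```

The sign flip in y is the vertical oscillation seen in the plot, and the factor 0.91 in x is why horizontal progress is slow.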

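(2) Adagrad:

Why the trajectory looks this way: the path becomes smoother and the steps become shorter, because the effective learning rate keeps shrinking in the later stages of training, so each update moves the parameters less and less.

Pros: the learning rate adapts automatically, so it does not have to be reset by hand repeatedly; rarely updated parameters receive larger updates and frequently updated parameters smaller ones, which works well for sparse data.

Cons: the sum of squared gradients in the denominator only grows, so the step size keeps shrinking and the effective learning rate eventually becomes vanishingly small.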
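The shrinking step size can be made concrete by tracking the factor lr / (sqrt(h) + eps) along one coordinate. This is a small sketch for the y coordinate of the second test function only, assuming lr = 0.6 and the starting value y = 2 from the second listing; `effective_lr` is a name introduced here.

```python
import math

lr, eps = 0.6, 1e-7  # lr as in the second listing, eps as in the AdaGrad class
y, h = 2.0, 0.0      # y coordinate of the starting point, empty accumulator
effective_lr = []
for _ in range(10):
    g = 2.0 * y                        # df/dy for f(x, y) = x**2 / 20 + y**2
    h += g * g                         # the accumulator only ever grows
    scale = lr / (math.sqrt(h) + eps)  # learning rate actually applied to g
    effective_lr.append(scale)
    y -= scale * g

print([round(s, 4) for s in effective_lr])
```

Because `h` never decreases, the printed values can only go down, which is the drawback named above.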

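(3) RMSprop:

Why the trajectory looks this way: the algorithm scales the learning rate by a moving average of recent squared gradients, so each step depends strongly on the most recent gradients, and the size of the trajectory's displacement can change sharply from step to step.

Pros: it is an adaptive learning rate method that converges faster and mitigates a learning rate that is too large or too small, so the learning rate needs less manual adjustment.

Cons: it is sensitive to the choice of hyperparameters and may need considerable tuning to get the best result. It can oscillate, and it may still run into exploding or vanishing gradients.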
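One concrete source of the large displacements is the very first step. With the accumulator starting at zero and decay_rate = 0.99, the first moving average is only 1% of the squared gradient, so the step is about ten times the learning rate in every coordinate. The sketch below works this out for the starting point and lr = 0.6 of the second listing; the dictionaries are illustrative.

```python
import math

lr, decay, eps = 0.6, 0.99, 1e-7  # lr from the second listing, the rest from the RMSprop class
pos = {'x': -7.0, 'y': 2.0}       # starting point of the second listing
h = {'x': 0.0, 'y': 0.0}          # moving averages start at zero
grad = {'x': pos['x'] / 10.0, 'y': 2.0 * pos['y']}

step = {}
for k in pos:
    h[k] = decay * h[k] + (1 - decay) * grad[k] ** 2
    # sqrt(h) = 0.1 * |grad| on the first step, so |step| is close to 10 * lr
    step[k] = lr * grad[k] / (math.sqrt(h[k]) + eps)
    pos[k] -= step[k]

print(step, pos)
```

Both coordinates move by roughly the same distance regardless of how large their gradients are, which is why the first RMSprop step can jump straight across the valley.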

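(4) Momentum:

Why the trajectory looks this way: the algorithm introduces momentum, which gives gradient descent a kind of "inertia". Each update considers not only the current gradient but also the direction of the previous update: the velocity is combined with the current gradient in a weighted sum, and the parameters move along the combined direction.

Pros: when the gradients are small, consistent, and noisy, it accelerates learning and smooths out the noise.

Cons: because the update accumulates decayed past gradients, it can lag behind the current gradient, so the trajectory may overshoot the minimum.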
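Both the acceleration and the risk of overshooting follow from how the velocity builds up. As a simplified sketch, assume the gradient stays constant (g = 1, purely for illustration) and use lr = 0.3 from the second listing with the default momentum of 0.9.

```python
lr, momentum = 0.3, 0.9  # lr from the second listing, default momentum of the Momentum class
g = 1.0                  # constant gradient, an assumption made only for this illustration
v = 0.0
velocities = []
for _ in range(200):
    v = momentum * v - lr * g
    velocities.append(v)

# Geometric series: the velocity tends to -lr * g / (1 - momentum)
limit = -lr * g / (1 - momentum)
print(velocities[0], velocities[-1], limit)
```

Along a direction where the gradient keeps its sign, the step grows to 1 / (1 - momentum) = 10 times the plain SGD step. That speeds up progress along the valley, but the same stored velocity keeps pushing after the gradient changes sign.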

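(5) Adam:

Why the trajectory looks this way: both the momentum term and the exponential moving average of squared gradients are used to set the direction and size of each update. Combining momentum with gradient scaling makes Adam more stable, faster to converge, and able to adapt the learning rate.

Pros: it adapts the learning rate of each parameter, which makes learning more efficient; it usually needs little manual hyperparameter tuning; its memory requirements are modest; it stays comparatively stable during optimization, which helps avoid poor fits caused by unstable training; it suits large datasets and models and converges quickly.

Cons: with badly chosen initial parameter values it may fail to converge, and it may miss the global optimum.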
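How Adam sets the step size can be seen from its first update. With both moment estimates starting at zero, the bias-corrected step works out to roughly lr times the sign of the gradient, whatever the gradient's magnitude. The sketch below checks this for the x coordinate of the second listing (x = -7, lr = 0.6, default betas); the variable names are chosen for the example.

```python
import math

lr, beta1, beta2, eps = 0.6, 0.9, 0.999, 1e-7  # lr from the second listing, betas are the class defaults
x = -7.0
g = x / 10.0                      # df/dx for f(x, y) = x**2 / 20 + y**2

m = (1 - beta1) * g               # first-moment estimate after one step (starts from 0)
v = (1 - beta2) * g ** 2          # second-moment estimate after one step (starts from 0)
lr_t = lr * math.sqrt(1 - beta2) / (1 - beta1)  # bias-corrected step size at t = 1
step = lr_t * m / (math.sqrt(v) + eps)

print(step)  # close to lr * sign(g)
```

So the flat x direction and the steep y direction start with steps of similar length, which is why Adam's path is less dominated by the y oscillation than SGD's.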
