1. How to Use and Modify an Existing Network Model

1.1 What is the difference between pretrained=False and pretrained=True?

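vgg16_false = torchvision.models.vgg16(pretrained=False)  # False: only the network architecture, with randomly initialised parameters; nothing is downloaded

vgg16_true = torchvision.models.vgg16(pretrained=True)  # True: the architecture plus parameters already trained on a dataset (downloaded)

1) With pretrained=False, only the network model is built: the code that defines the network is loaded, and its parameters keep their default (random) initialisation, so nothing needs to be downloaded.

2) With pretrained=True, the trained parameters have to be downloaded from the internet, for example the weights of every convolutional and fully connected layer (pooling layers have no learnable parameters). These parameters were obtained by training on the ImageNet dataset.

Since torchvision 0.13, pretrained is deprecated in favour of the weights argument: pretrained=False corresponds to weights=None, and pretrained=True to weights=torchvision.models.VGG16_Weights.IMAGENET1K_V1.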

1.2 Using and modifying an existing network model (example: vgg16)

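import torchvision
from torch import nn

# weights=None is the current form of pretrained=False;
# weights=VGG16_Weights.IMAGENET1K_V1 is the current form of pretrained=True
vgg16_false = torchvision.models.vgg16(weights=None)
vgg16_true = torchvision.models.vgg16(weights=torchvision.models.VGG16_Weights.IMAGENET1K_V1)
print(vgg16_true)

train_data = torchvision.datasets.CIFAR10('./dataset_ts', train=True, transform=torchvision.transforms.ToTensor(), download=True)

# VGG16 was trained on ImageNet and outputs 1000 classes, while CIFAR10 has 10 classes. How do we adapt it?
# Either append one more linear layer after the last one, or modify the last layer directly.

# 1) Append a layer to vgg16's classifier:
#    a module named add_linear with in_features=1000, out_features=10
vgg16_true.classifier.add_module('add_linear', nn.Linear(1000, 10))
print(vgg16_true)

print(vgg16_false)
# 2) Replace the last layer (classifier[6], originally Linear(4096, 1000)) with one whose out_features=10
vgg16_false.classifier[6] = nn.Linear(4096, 10)
print(vgg16_false)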

2. Saving and Loading Models

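import torch
import torchvision

vgg16 = torchvision.models.vgg16(weights=None)

# Saving method 1: save the model structure together with its parameters
torch.save(vgg16, "vgg16_method1.pth")

# Saving method 2: save only the parameters, as a dict (state_dict); officially recommended
torch.save(vgg16.state_dict(), "vgg16_method2.pth")

# Loading method 1:
# PyTorch 2.6 and later default to weights_only=True, which refuses whole pickled models,
# so weights_only=False is required here (only do this for files you trust)
model1 = torch.load("vgg16_method1.pth", weights_only=False)
print(model1)

# Loading method 2:
# With saving method 2, torch.load returns only a dict of parameters. To restore a model,
# build the architecture first and then call load_state_dict
model2 = torchvision.models.vgg16(weights=None)
model2.load_state_dict(torch.load("vgg16_method2.pth"))
print(model2)

# Loading method 1 has a pitfall:
# when loading a model you defined yourself, its class must be available at load time,
# e.g. import it first: from <your model module> import *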
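A minimal round trip of saving method 2. It uses a small hypothetical nn.Sequential model instead of VGG16 so that it runs quickly, and it writes only to a temporary directory. It assumes PyTorch 1.13 or later for the weights_only argument of torch.load, which is safe here because a state_dict contains only tensors.

```python
import os
import tempfile

import torch
from torch import nn

# Hypothetical stand-in model; parameters live in layers 0 and 2 (ReLU has none)
model = nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2))

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "tiny_method2.pth")
    torch.save(model.state_dict(), path)          # saving method 2: parameters only

    state = torch.load(path, weights_only=True)   # a dict: parameter name -> tensor
    print(list(state.keys()))  # ['0.weight', '0.bias', '2.weight', '2.bias']

    # Rebuild the same architecture, then copy the saved parameters into it
    restored = nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2))
    restored.load_state_dict(state)

same = all(torch.equal(a, b)
           for a, b in zip(model.state_dict().values(), restored.state_dict().values()))
print(same)  # True
```

Because the file holds only names and tensors, loading it never needs the model class; the class is needed only when load_state_dict is called on a freshly built model, which is exactly what avoids the pitfall of loading method 1.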

3. The Complete Model Training Workflow

3.1 Building your own model: model.py

import torch
from torch import nn
from torch.nn import Sequential, Conv2d, MaxPool2d, Flatten, Linear


class Mymodel(nn.Module):
    def __init__(self):
        super(Mymodel, self).__init__()
        # Network structure for CIFAR-10
        self.model = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),  # 64*4*4 = 1024
            Linear(64, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x


if __name__ == '__main__':
    # Sanity check: a batch of 64 fake 3x32x32 images should produce a 64 x 10 output
    mymodel = Mymodel()
    x = torch.ones((64, 3, 32, 32))
    output = mymodel(x)
    print(output.shape)  # torch.Size([64, 10])
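The 64*4*4 = 1024 comment follows from the standard output-size formula for convolution and pooling, out = floor((in + 2 * padding - kernel) / stride) + 1 (with dilation 1). The plain-Python sketch below, using a hypothetical helper out_size and no PyTorch at all, traces one 32 x 32 CIFAR-10 image through the six spatial layers of Mymodel. MaxPool2d(2) uses stride 2 because its stride defaults to the kernel size.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial output size of Conv2d / MaxPool2d along one dimension (dilation 1, floor rounding)."""
    return (size + 2 * padding - kernel) // stride + 1

# (description, kernel, stride, padding) for the spatial layers of Mymodel, in order
layers = [
    ("Conv2d(3, 32, 5, padding=2)", 5, 1, 2),
    ("MaxPool2d(2)", 2, 2, 0),
    ("Conv2d(32, 32, 5, padding=2)", 5, 1, 2),
    ("MaxPool2d(2)", 2, 2, 0),
    ("Conv2d(32, 64, 5, padding=2)", 5, 1, 2),
    ("MaxPool2d(2)", 2, 2, 0),
]

size = 32  # CIFAR-10 images are 32 x 32
for name, kernel, stride, padding in layers:
    size = out_size(size, kernel, stride, padding)
    print(f"{name:30s} -> {size} x {size}")

flat_features = 64 * size * size  # 64 channels left after the last convolution
print(flat_features)  # 1024
```

Each 5 x 5 convolution with padding=2 keeps the size unchanged, and each pooling layer halves it: 32, 16, 8, 4.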

3.2 The training process

import torch
import torchvision
from torch import nn
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter

from model import *  # Mymodel, defined in model.py (section 3.1)

# 1. Prepare the datasets
train_data = torchvision.datasets.CIFAR10("./dataset", train=True,
                                          transform=torchvision.transforms.ToTensor(), download=True)
test_data = torchvision.datasets.CIFAR10("./dataset", train=False,
                                         transform=torchvision.transforms.ToTensor(), download=True)

# 2. Dataset sizes
train_data_size = len(train_data)
test_data_size = len(test_data)
# str.format is a common way to print them
print("Training set size: {}".format(train_data_size))
print("Test set size: {}".format(test_data_size))

# 3. Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)

# 4. Build the network
mymodel = Mymodel()

# 5. Loss function
loss_fn = nn.CrossEntropyLoss()

# 6. Optimizer: learning rate 0.01
learning_rate = 1e-2
optimizer = torch.optim.SGD(mymodel.parameters(), lr=learning_rate)

# 7. Training bookkeeping: number of training steps, number of test rounds, number of epochs
total_train_step = 0
total_test_step = 0
epoch = 10

# TensorBoard writer
writer = SummaryWriter("logs")

# 8. Training
for i in range(epoch):
    print("----- Epoch {} training starts -----".format(i + 1))

    # 1) Training phase
    mymodel.train()  # only has an effect on special layers such as Dropout and BatchNorm
    for data in train_dataloader:
        imgs, targets = data
        outputs = mymodel(imgs)
        loss = loss_fn(outputs, targets)

        # Optimization step
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        total_train_step = total_train_step + 1
        if total_train_step % 100 == 0:
            print("Training step: {}, loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # 2) Test phase
    mymodel.eval()  # only has an effect on special layers such as Dropout and BatchNorm
    total_test_loss = 0
    total_accuracy = 0
    with torch.no_grad():  # gradients are not tracked, so no computation graph is built while testing
        for data in test_dataloader:
            imgs, targets = data
            outputs = mymodel(imgs)
            # loss
            loss = loss_fn(outputs, targets)
            total_test_loss = total_test_loss + loss.item()
            # Accuracy:
            # argmax(1) returns the index of the largest value in each row (per sample, across classes);
            # argmax(0) would search down each column instead.
            # Count how many predicted classes match the target labels
            accuracy = (outputs.argmax(1) == targets).sum().item()
            total_accuracy = total_accuracy + accuracy

    print("Total loss on the test set: {}".format(total_test_loss))
    writer.add_scalar("test_loss", total_test_loss, total_test_step)
    print("Accuracy on the test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step = total_test_step + 1

    # Save the model, using saving method 1 (whole model)
    torch.save(mymodel, "mymodel_{}.pth".format(i))
    print("Model saved")

writer.close()
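The accuracy line relies on argmax along dimension 1. A small sketch with made-up logits for 2 samples and 3 classes (the values are hypothetical) shows the difference between argmax(1) and argmax(0), and how the number of correct predictions is counted:

```python
import torch

# Hypothetical logits: 2 samples (rows) x 3 classes (columns)
outputs = torch.tensor([[0.1, 0.7, 0.2],
                        [0.8, 0.1, 0.1]])
targets = torch.tensor([1, 2])  # true labels

preds = outputs.argmax(1)        # best class per sample (across each row)
print(preds)                     # tensor([1, 0])
print(outputs.argmax(0))         # best sample per class (down each column): tensor([1, 0, 0])

correct = (preds == targets).sum().item()  # [True, False] -> 1
accuracy = correct / len(targets)
print(correct, accuracy)         # 1 0.5
```

Calling .item() turns the count into a plain Python number, so summing it over batches and dividing by the dataset size gives an ordinary float.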

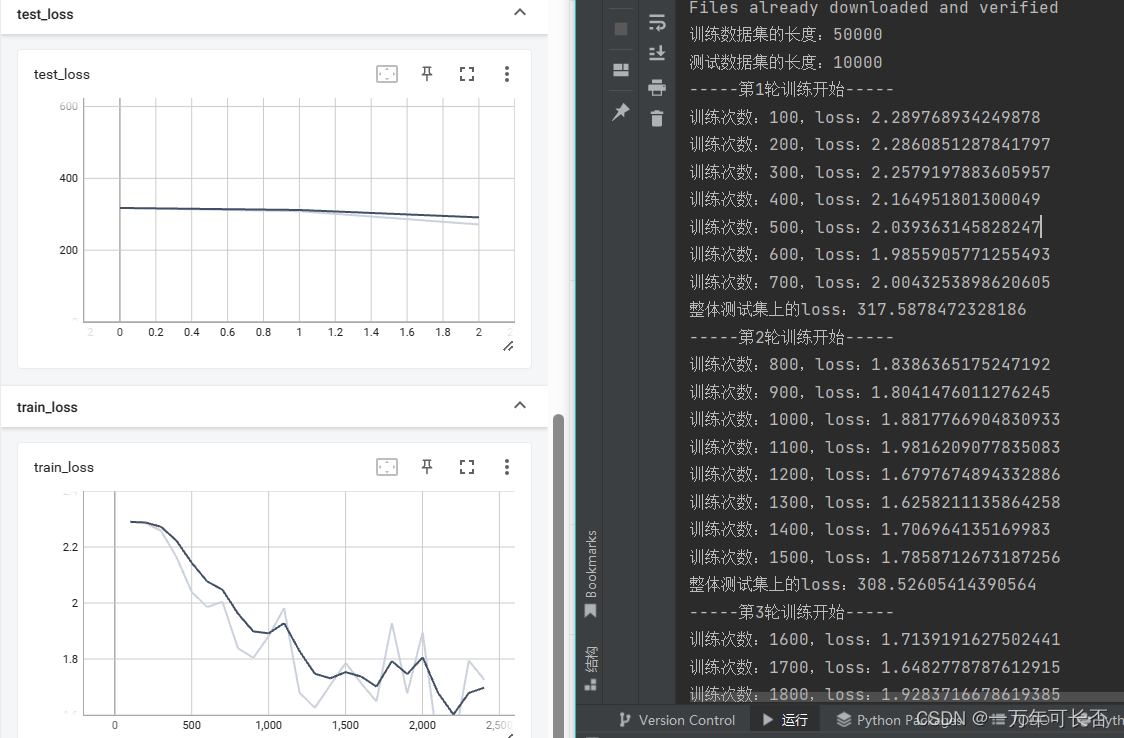

3.3 Results

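The results show that the loss keeps decreasing as training proceeds. Because training takes a long time, only the first few test rounds are shown here.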
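The x-axis of the training-loss curve counts optimizer steps, not epochs. With CIFAR-10's 50,000 training and 10,000 test images and batch_size=64, one epoch consists of ceil(50000 / 64) batches, the last one being smaller. A quick check, assuming only those split sizes and the batch size from the script (the list(range(...)) dataset is a hypothetical stand-in used just for its length):

```python
import math

from torch.utils.data import DataLoader

train_size, test_size, batch_size = 50000, 10000, 64  # CIFAR-10 split sizes, batch size from the script

steps_per_epoch = math.ceil(train_size / batch_size)  # the last batch holds only 16 images
test_steps = math.ceil(test_size / batch_size)
print(steps_per_epoch, test_steps)  # 782 157
print(steps_per_epoch * 10)         # 7820 optimizer steps over 10 epochs

# Cross-check: DataLoader reports the same number of batches for a dataset of that length
loader = DataLoader(list(range(train_size)), batch_size=batch_size)
print(len(loader))                  # 782
```

Since train_loss is logged every 100 steps, each epoch adds about 7 points to the curve.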

4. GPU Acceleration

Using the GPU:

- Method 1: call .cuda() on the network model, the loss function, and the data

import torch
import torchvision
from torch import nn
from torch.nn import Sequential, Conv2d, MaxPool2d, Flatten, Linear
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter
import time

# Using the GPU:
# Method 1: call .cuda() on the network model, the loss function, and the data
# To get a GPU online, Google Colab is one option (other services exist as well)

# 1. Prepare the datasets
train_data = torchvision.datasets.CIFAR10("./dataset", train=True,
                                          transform=torchvision.transforms.ToTensor(), download=True)
test_data = torchvision.datasets.CIFAR10("./dataset", train=False,
                                         transform=torchvision.transforms.ToTensor(), download=True)

# 2. Dataset sizes
train_data_size = len(train_data)
test_data_size = len(test_data)
# str.format is a common way to print them
print("Training set size: {}".format(train_data_size))
print("Test set size: {}".format(test_data_size))

# 3. Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)


# 4. Build the network
class Mymodel(nn.Module):
    def __init__(self):
        super(Mymodel, self).__init__()
        # Network structure for CIFAR-10
        self.model = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),  # 64*4*4 = 1024
            Linear(64, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x


mymodel = Mymodel()
if torch.cuda.is_available():
    mymodel = mymodel.cuda()

# 5. Loss function
loss_fn = nn.CrossEntropyLoss()
if torch.cuda.is_available():
    loss_fn = loss_fn.cuda()

# 6. Optimizer: learning rate 0.01 (created after the model has been moved to the GPU)
learning_rate = 1e-2
optimizer = torch.optim.SGD(mymodel.parameters(), lr=learning_rate)

# 7. Training bookkeeping: number of training steps, number of test rounds, number of epochs
total_train_step = 0
total_test_step = 0
epoch = 10

# TensorBoard writer
writer = SummaryWriter("logs")

# 8. Training
start_time = time.time()
for i in range(epoch):
    print("----- Epoch {} training starts -----".format(i + 1))

    # 1) Training phase
    mymodel.train()  # only has an effect on special layers such as Dropout and BatchNorm
    for data in train_dataloader:
        imgs, targets = data
        if torch.cuda.is_available():
            imgs = imgs.cuda()
            targets = targets.cuda()
        outputs = mymodel(imgs)
        loss = loss_fn(outputs, targets)

        # Optimization step
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        total_train_step = total_train_step + 1
        if total_train_step % 100 == 0:
            end_time = time.time()
            print("Elapsed time: {}".format(end_time - start_time))
            print("Training step: {}, loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # 2) Test phase
    mymodel.eval()  # only has an effect on special layers such as Dropout and BatchNorm
    total_test_loss = 0
    total_accuracy = 0
    with torch.no_grad():  # gradients are not tracked, so no computation graph is built while testing
        for data in test_dataloader:
            imgs, targets = data
            if torch.cuda.is_available():
                imgs = imgs.cuda()
                targets = targets.cuda()
            outputs = mymodel(imgs)
            # loss
            loss = loss_fn(outputs, targets)
            total_test_loss = total_test_loss + loss.item()
            # Accuracy: argmax(1) gives the predicted class of each sample; count matches with the targets
            accuracy = (outputs.argmax(1) == targets).sum().item()
            total_accuracy = total_accuracy + accuracy

    print("Total loss on the test set: {}".format(total_test_loss))
    writer.add_scalar("test_loss", total_test_loss, total_test_step)
    print("Accuracy on the test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step = total_test_step + 1

    # Save the model, using saving method 1 (whole model)
    torch.save(mymodel, "mymodel_{}.pth".format(i))
    print("Model saved")

writer.close()
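The script measures elapsed wall-clock time with time.time(). CUDA kernels are launched asynchronously, so when timing a specific GPU operation it is common to call torch.cuda.synchronize() before reading the clock; otherwise the measurement may only cover the kernel launches. A minimal sketch with a hypothetical helper timed, falling back to the CPU when no GPU is present:

```python
import time

import torch


def timed(fn, *args):
    """Run fn(*args) and return (result, seconds), waiting for queued CUDA work before each clock read."""
    if torch.cuda.is_available():
        torch.cuda.synchronize()
    start = time.perf_counter()
    result = fn(*args)
    if torch.cuda.is_available():
        torch.cuda.synchronize()  # make sure the GPU has actually finished
    return result, time.perf_counter() - start


device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
a = torch.randn(512, 512, device=device)
result, seconds = timed(torch.matmul, a, a)
print(f"{device}: {seconds:.4f} s")
```

For the coarse per-100-steps timing in the training script this matters less, since the measured interval spans many steps.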

- Method 2:

1) Define a device: device = torch.device("cuda")

2) Then call .to(device) on the network model, the loss function, and the data
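A minimal sketch of the .to(device) pattern with a CPU fallback, so that it also runs on a machine without a GPU (the tiny nn.Linear model is hypothetical). Note the asymmetry: nn.Module.to() moves the module's parameters in place, whereas Tensor.to() returns a tensor on the target device, which is why the data has to be reassigned.

```python
import torch
from torch import nn

# Use the GPU when one is available, otherwise the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

model = nn.Linear(8, 2)
model.to(device)   # moves the parameters in place (and also returns the module)

x = torch.randn(4, 8)
x = x.to(device)   # returns a tensor on the target device; reassign it
y = model(x)
print(device, y.device)
```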

import torch
import torchvision
from torch import nn
from torch.nn import Sequential, Conv2d, MaxPool2d, Flatten, Linear
from torch.utils.data import DataLoader
from torch.utils.tensorboard import SummaryWriter
import time

# Using the GPU:
# Method 2:
# 1) define a device, e.g. device = torch.device("cuda")
# 2) then call .to(device) on the network model, the loss function, and the data

# Define the training device. torch.device("cuda") alone fails as soon as something is moved
# to it on a machine without CUDA, so fall back to the CPU in that case
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# 1. Prepare the datasets
train_data = torchvision.datasets.CIFAR10("./dataset", train=True,
                                          transform=torchvision.transforms.ToTensor(), download=True)
test_data = torchvision.datasets.CIFAR10("./dataset", train=False,
                                         transform=torchvision.transforms.ToTensor(), download=True)

# 2. Dataset sizes
train_data_size = len(train_data)
test_data_size = len(test_data)
# str.format is a common way to print them
print("Training set size: {}".format(train_data_size))
print("Test set size: {}".format(test_data_size))

# 3. Load the datasets with DataLoader
train_dataloader = DataLoader(train_data, batch_size=64)
test_dataloader = DataLoader(test_data, batch_size=64)


# 4. Build the network
class Mymodel(nn.Module):
    def __init__(self):
        super(Mymodel, self).__init__()
        # Network structure for CIFAR-10
        self.model = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),  # 64*4*4 = 1024
            Linear(64, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x


mymodel = Mymodel()
mymodel = mymodel.to(device)

# 5. Loss function (CrossEntropyLoss has no parameters, so .to(device) is harmless but not strictly needed)
loss_fn = nn.CrossEntropyLoss()
loss_fn = loss_fn.to(device)

# 6. Optimizer: learning rate 0.01
learning_rate = 1e-2
optimizer = torch.optim.SGD(mymodel.parameters(), lr=learning_rate)

# 7. Training bookkeeping: number of training steps, number of test rounds, number of epochs
total_train_step = 0
total_test_step = 0
epoch = 10

# TensorBoard writer
writer = SummaryWriter("logs")

# 8. Training
start_time = time.time()
for i in range(epoch):
    print("----- Epoch {} training starts -----".format(i + 1))

    # 1) Training phase
    mymodel.train()  # only has an effect on special layers such as Dropout and BatchNorm
    for data in train_dataloader:
        imgs, targets = data
        imgs = imgs.to(device)
        targets = targets.to(device)
        outputs = mymodel(imgs)
        loss = loss_fn(outputs, targets)

        # Optimization step
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        total_train_step = total_train_step + 1
        if total_train_step % 100 == 0:
            end_time = time.time()
            print("Elapsed time: {}".format(end_time - start_time))
            print("Training step: {}, loss: {}".format(total_train_step, loss.item()))
            writer.add_scalar("train_loss", loss.item(), total_train_step)

    # 2) Test phase
    mymodel.eval()  # only has an effect on special layers such as Dropout and BatchNorm
    total_test_loss = 0
    total_accuracy = 0
    with torch.no_grad():  # gradients are not tracked, so no computation graph is built while testing
        for data in test_dataloader:
            imgs, targets = data
            imgs = imgs.to(device)
            targets = targets.to(device)
            outputs = mymodel(imgs)
            # loss
            loss = loss_fn(outputs, targets)
            total_test_loss = total_test_loss + loss.item()
            # Accuracy: argmax(1) gives the predicted class of each sample; count matches with the targets
            accuracy = (outputs.argmax(1) == targets).sum().item()
            total_accuracy = total_accuracy + accuracy

    print("Total loss on the test set: {}".format(total_test_loss))
    writer.add_scalar("test_loss", total_test_loss, total_test_step)
    print("Accuracy on the test set: {}".format(total_accuracy / test_data_size))
    writer.add_scalar("test_accuracy", total_accuracy / test_data_size, total_test_step)
    total_test_step = total_test_step + 1

    # Save the model, using saving method 1 (whole model)
    torch.save(mymodel, "mymodel_{}.pth".format(i))
    print("Model saved")

writer.close()
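The device-agnostic loop can be exercised without downloading CIFAR-10. The sketch below swaps in a hypothetical synthetic dataset of random images and random labels (so the resulting accuracy carries no meaning), rebuilds Mymodel's layers as a plain nn.Sequential, and runs one training pass and one evaluation pass on whichever device is available:

```python
import torch
from torch import nn
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

# Hypothetical synthetic stand-in for CIFAR-10: 256 random 3x32x32 images with labels 0..9
images = torch.randn(256, 3, 32, 32)
labels = torch.randint(0, 10, (256,))
loader = DataLoader(TensorDataset(images, labels), batch_size=64, shuffle=True)

# Same layers as Mymodel, written as a Sequential for brevity
model = nn.Sequential(
    nn.Conv2d(3, 32, 5, padding=2), nn.MaxPool2d(2),
    nn.Conv2d(32, 32, 5, padding=2), nn.MaxPool2d(2),
    nn.Conv2d(32, 64, 5, padding=2), nn.MaxPool2d(2),
    nn.Flatten(), nn.Linear(1024, 64), nn.Linear(64, 10),
).to(device)
loss_fn = nn.CrossEntropyLoss()
optimizer = torch.optim.SGD(model.parameters(), lr=1e-2)

# One training pass
model.train()
for imgs, targets in loader:
    imgs, targets = imgs.to(device), targets.to(device)
    loss = loss_fn(model(imgs), targets)
    optimizer.zero_grad()
    loss.backward()
    optimizer.step()

# One evaluation pass
model.eval()
correct, total_loss = 0, 0.0
with torch.no_grad():
    for imgs, targets in loader:
        imgs, targets = imgs.to(device), targets.to(device)
        outputs = model(imgs)
        total_loss += loss_fn(outputs, targets).item()
        correct += (outputs.argmax(1) == targets).sum().item()

accuracy = correct / len(loader.dataset)
print(f"device={device} loss={total_loss:.3f} accuracy={accuracy:.3f}")
```

Replacing the synthetic TensorDataset with the CIFAR-10 datasets from the script gives back the real training run.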

5. The Complete Model Validation (Testing / Demo) Workflow

Feed an input to an already trained model:

import torchvision
import torch
from torch import nn
from torch.nn import Sequential, Conv2d, MaxPool2d, Flatten, Linear
from PIL import Image

# 1. Read the image to classify
image_path = "../imgs/dog.png"
image = Image.open(image_path)
# The original PNG has 4 channels (RGBA); convert it to 3 channels (RGB)
image = image.convert('RGB')
print(image)

transform = torchvision.transforms.Compose(
    [torchvision.transforms.Resize((32, 32)),
     torchvision.transforms.ToTensor()])
image = transform(image)
print(image.shape)

# image is 3-D [3, 32, 32]; the model expects a 4-D batch [1, 3, 32, 32]
image = torch.reshape(image, (1, 3, 32, 32))


# 2. Network model: the class must be defined (or imported) here so that torch.load
#    can rebuild a model saved with saving method 1
class Mymodel(nn.Module):
    def __init__(self):
        super(Mymodel, self).__init__()
        # Network structure for CIFAR-10
        self.model = Sequential(
            Conv2d(3, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 32, 5, padding=2),
            MaxPool2d(2),
            Conv2d(32, 64, 5, padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024, 64),  # 64*4*4 = 1024
            Linear(64, 10)
        )

    def forward(self, x):
        x = self.model(x)
        return x


# 3. Load the trained model, mapping tensors saved on the GPU onto the CPU, and classify
#    (weights_only=False is needed on PyTorch 2.6 and later to load a whole pickled model)
model = torch.load("mymodel_0.pth", map_location=torch.device('cpu'), weights_only=False)
model.eval()
with torch.no_grad():
    output = model(image)
print(output)
print(output.argmax(1))

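The output shows that the prediction is wrong: index 6 is "frog" in CIFAR-10's class order, while the image shows a dog (index 5). This is because the model used here (mymodel_0.pth) was trained for only one epoch and its accuracy is low; readers can load a checkpoint with higher accuracy instead.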

<PIL.Image.Image image mode=RGB size=456x336 at 0x266FF3CBC70>

torch.Size([3, 32, 32])

tensor([[-1.7129, -0.1896, 0.6489, 0.7595, 0.8513, 0.9252, 1.1398, 0.4540,

-2.0718, -1.0213]])

tensor([6])
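To turn the predicted index into a label, map it through CIFAR-10's class order. The sketch hard-codes that order (it matches the classes attribute of torchvision.datasets.CIFAR10, which is not instantiated here to avoid a download) and reuses the logits printed above; torch.softmax converts them into probabilities:

```python
import torch

# CIFAR-10 class order: index -> name
cifar10_classes = ["airplane", "automobile", "bird", "cat", "deer",
                   "dog", "frog", "horse", "ship", "truck"]

# The logits printed above
output = torch.tensor([[-1.7129, -0.1896, 0.6489, 0.7595, 0.8513,
                        0.9252, 1.1398, 0.4540, -2.0718, -1.0213]])

probs = torch.softmax(output, dim=1)  # each row sums to 1
pred = output.argmax(1).item()
print(pred, cifar10_classes[pred])    # 6 frog
print(f"p(frog) = {probs[0, pred].item():.3f}, p(dog) = {probs[0, 5].item():.3f}")
```

The logits for "dog" (0.9252) and "frog" (1.1398) are close, which fits a model that has seen only one epoch of training.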
