LHL's Python: A Classic Web-Scraping Tutorial (Douban)

```python
# -*- coding: utf-8 -*-
# 2020.5.8 14:01:00
# A brand-new work by 罗宏亮 (Luo Hongliang)

import re
import urllib.error
import urllib.request

import xlwt
from bs4 import BeautifulSoup


def main():
    baseurl = "https://movie.douban.com/top250?start="
    # 1. Crawl the pages and parse each film
    datalist = getData(baseurl)
    # 2. Save the results to an Excel (.xls) file
    savepath = '罗宏亮船新爬虫之豆瓣电影TOP250.xls'
    saveData(savepath, datalist)


# Detail-page link
findLink = re.compile(r'<a href="(.*?)">')
# Poster image URL (re.S lets "." also match newlines)
findImgSrc = re.compile(r'<img.*src="(.*?)"', re.S)
# Title(s): the Chinese title, plus a foreign title if there is one
findTitle = re.compile(r'<span class="title">(.*?)</span>')
# Rating
findRating = re.compile(r'<span class="rating_num" property="v:average">(.*?)</span>')
# Number of people who rated the film
findJudge = re.compile(r'<span>(\d*)人评价</span>')
# One-line summary (quote)
findInq = re.compile(r'<span class="inq">(.*?)</span>')
# Director, cast, year, country, genre
findBd = re.compile(r'<p class="">(.*?)</p>', re.S)


def getData(baseurl):
    datalist = []
    # 10 pages x 25 films per page = 250 films
    for i in range(0, 10):
        url = baseurl + str(i * 25)
        html = askURL(url)
        soup = BeautifulSoup(html, "html.parser")
        # Each film sits in its own <div class="item">
        for item in soup.find_all('div', class_="item"):
            data = []
            item = str(item)

            link = re.findall(findLink, item)[0]
            data.append(link)

            ImgSrc = re.findall(findImgSrc, item)[0]
            data.append(ImgSrc)

            titles = re.findall(findTitle, item)
            if len(titles) == 2:
                ctitle = titles[0]                   # Chinese title
                data.append(ctitle)
                otitle = titles[1].replace("/", "")  # foreign title without the "/" separator
                data.append(otitle)
            else:
                data.append(titles[0])
                data.append(' ')                     # no foreign title: leave it blank

            rating = re.findall(findRating, item)[0]
            data.append(rating)

            judge = re.findall(findJudge, item)[0]
            data.append(judge)

            inq = re.findall(findInq, item)
            if len(inq) != 0:
                inq = inq[0].replace("。", '')       # drop the trailing Chinese full stop
                data.append(inq)
            else:
                data.append(' ')

            bd = re.findall(findBd, item)[0]
            bd = re.sub(r'<br(\s+)?/>(\s+)?', " ", bd)  # turn <br/> tags into spaces
            bd = re.sub('/', " ", bd)
            data.append(bd.strip())

            datalist.append(data)
    return datalist


def askURL(url):
    # Send a browser-like User-Agent; Douban typically refuses urllib's default one
    head = {
        "User-Agent": "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/78.0.3904.108 Safari/537.36"
    }
    request = urllib.request.Request(url, headers=head)
    html = ""
    try:
        response = urllib.request.urlopen(request)
        html = response.read().decode("utf-8")
    except urllib.error.URLError as e:
        if hasattr(e, "code"):
            print(e.code)
        if hasattr(e, "reason"):
            print(e.reason)
    return html
```
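Because `getData()` depends on live pages, it can help to check the regular expressions offline before crawling. The sketch below runs the same patterns and the same cleanup steps on a hand-written fragment. The fragment `SAMPLE_ITEM` and the helper `parse_item` are hypothetical: they only imitate the shape of one `<div class="item">` block, so real Douban markup may differ.

```python
import re

# Same patterns as in the script above
findLink = re.compile(r'<a href="(.*?)">')
findImgSrc = re.compile(r'<img.*src="(.*?)"', re.S)
findTitle = re.compile(r'<span class="title">(.*?)</span>')
findRating = re.compile(r'<span class="rating_num" property="v:average">(.*?)</span>')
findJudge = re.compile(r'<span>(\d*)人评价</span>')
findInq = re.compile(r'<span class="inq">(.*?)</span>')
findBd = re.compile(r'<p class="">(.*?)</p>', re.S)

# Hypothetical fragment imitating one <div class="item"> block (not real Douban HTML)
SAMPLE_ITEM = '''<div class="item">
<a href="https://movie.douban.com/subject/123/">
<img alt="Example" src="https://img.example.com/p1.jpg" width="100"/>
</a>
<span class="title">示例电影</span>
<span class="title"> / Example Movie</span>
<p class="">
    Director: Someone<br/>
    1994 / USA / Drama
</p>
<span class="rating_num" property="v:average">9.7</span>
<span>123456人评价</span>
<span class="inq">一句话简介。</span>
</div>'''


def parse_item(item):
    """Hypothetical helper: the extraction steps of getData() for one item."""
    data = [re.findall(findLink, item)[0], re.findall(findImgSrc, item)[0]]
    titles = re.findall(findTitle, item)
    if len(titles) == 2:
        data += [titles[0], titles[1].replace("/", "")]
    else:
        data += [titles[0], ' ']
    data.append(re.findall(findRating, item)[0])
    data.append(re.findall(findJudge, item)[0])
    inq = re.findall(findInq, item)
    data.append(inq[0].replace("。", '') if inq else ' ')
    bd = re.findall(findBd, item)[0]
    bd = re.sub(r'<br(\s+)?/>(\s+)?', " ", bd)  # <br/> plus following whitespace -> one space
    bd = re.sub('/', " ", bd)
    data.append(bd.strip())
    return data


row = parse_item(SAMPLE_ITEM)
for field in row:
    print(repr(field))
```

If a pattern stops matching real pages, `re.findall(...)[0]` raises an `IndexError`; testing on a saved copy of one page makes that easier to spot than a full 10-page crawl.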

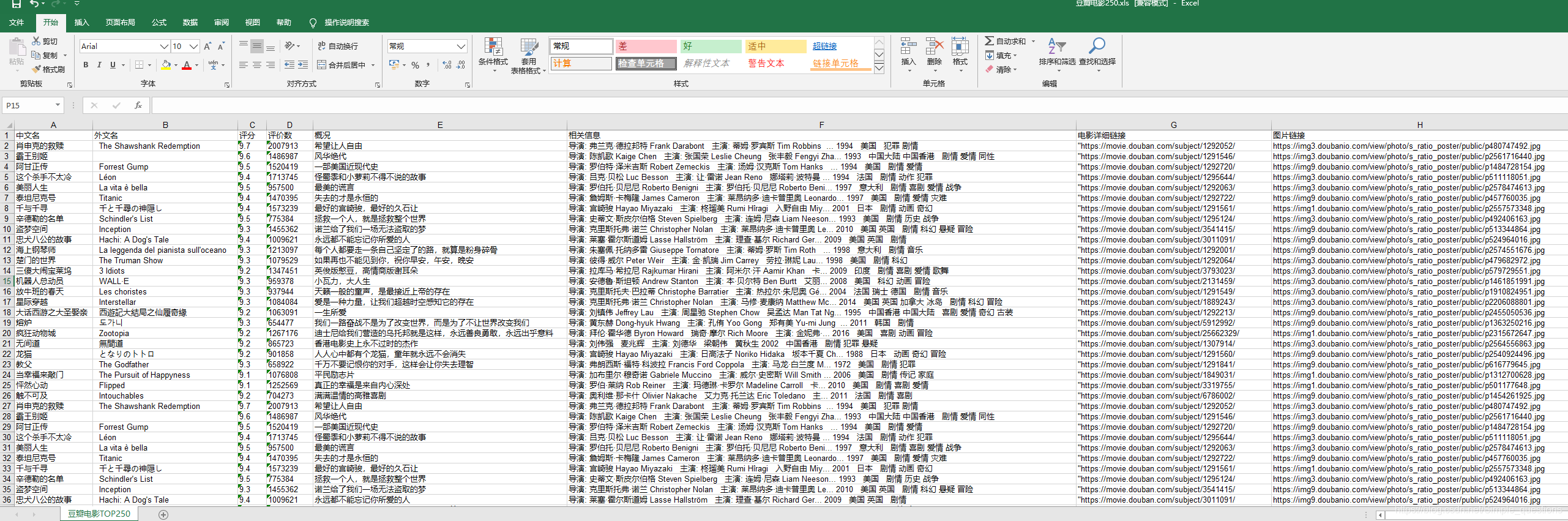

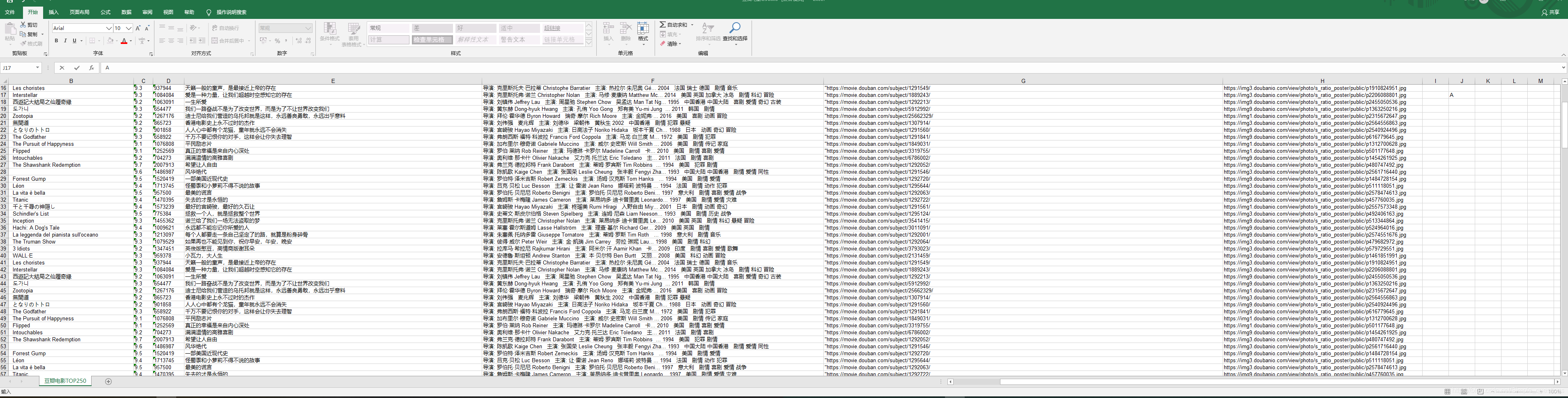

```python
def saveData(savepath, datalist):
    print("Saving...")
    book = xlwt.Workbook(encoding="utf-8", style_compression=0)
    sheet = book.add_sheet('罗宏亮船新爬虫之豆瓣电影TOP250', cell_overwrite_ok=True)
    # Header row
    col = ("Detail link", "Poster URL", "Chinese title", "Foreign title",
           "Rating", "Number of ratings", "Summary", "Details")
    for i in range(0, 8):
        sheet.write(0, i, col[i])
    # One row per film; enumerate() avoids an IndexError if fewer than 250 films were scraped
    for i, data in enumerate(datalist):
        print("Item %d" % (i + 1))
        for j in range(0, 8):
            sheet.write(i + 1, j, data[j])
    book.save(savepath)


if __name__ == "__main__":
    main()
    print("Scraping complete!")
```
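`saveData()` writes an `.xls` file with `xlwt`. If a database is preferred, the same `datalist` rows could also be stored with Python's built-in `sqlite3` module. This is a sketch under assumptions, not part of the original script: the function `saveData2DB`, the table `movie250` and its column names are hypothetical, and each row is assumed to hold the eight fields in the order `getData()` produces them.

```python
import sqlite3


def saveData2DB(conn, datalist):
    """Hypothetical helper: store scraped rows in a SQLite table."""
    conn.execute('''
        CREATE TABLE IF NOT EXISTS movie250 (
            id INTEGER PRIMARY KEY AUTOINCREMENT,
            info_link TEXT,
            pic_link TEXT,
            cname TEXT,
            ename TEXT,
            score NUMERIC,      -- NUMERIC affinity stores "9.7" as a number
            rated INTEGER,      -- INTEGER affinity stores "123456" as an integer
            introduction TEXT,
            info TEXT
        )
    ''')
    # Parameterised INSERT: sqlite3 does the quoting, so titles containing ' are safe
    conn.executemany(
        'INSERT INTO movie250 (info_link, pic_link, cname, ename, score, rated, '
        'introduction, info) VALUES (?, ?, ?, ?, ?, ?, ?, ?)',
        [tuple(row[:8]) for row in datalist],
    )
    conn.commit()


# Usage with an in-memory database and one made-up row
sample = [["https://movie.douban.com/subject/123/", "https://img.example.com/p1.jpg",
           "示例电影", "Example Movie", "9.7", "123456", "一句话简介", "Director: Someone"]]
conn = sqlite3.connect(":memory:")
saveData2DB(conn, sample)
print(conn.execute("SELECT cname, score, rated FROM movie250").fetchone())
```

For a real run, `sqlite3.connect("movie250.db")` would create a file on disk instead, and `conn.close()` should be called once the rows are saved.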

Tactical image bait, to keep Gang'er from peeking.
