Building your Deep Neural Network: Step by Step

3.2 - L-layer Neural Network

The initialization for a deeper L-layer neural network is more complicated because there are many more weight matrices and bias vectors. When completing `initialize_parameters_deep`, make sure that the dimensions match between each layer. Recall that $n^{[l]}$ is the number of units in layer $l$. Thus, for example, if the size of the input $X$ is $(12288, 209)$ (with $m = 209$ examples), then:

| | **Shape of W** | **Shape of b** | **Activation** | **Shape of Activation** |
|---|---|---|---|---|
| **Layer 1** | $(n^{[1]}, 12288)$ | $(n^{[1]}, 1)$ | $Z^{[1]} = W^{[1]} X + b^{[1]}$ | $(n^{[1]}, 209)$ |
| **Layer 2** | $(n^{[2]}, n^{[1]})$ | $(n^{[2]}, 1)$ | $Z^{[2]} = W^{[2]} A^{[1]} + b^{[2]}$ | $(n^{[2]}, 209)$ |
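The shape rules above can be checked with a short sketch. This is one possible way to write `initialize_parameters_deep`, not necessarily the notebook's reference solution: the hidden-layer sizes in `layer_dims`, the `seed` argument, the 0.01 scaling of the weights, and the zero-initialized biases are all assumptions made for illustration.

```python
import numpy as np

def initialize_parameters_deep(layer_dims, seed=3):
    """Return a dict holding W1, b1, ..., WL, bL for the given layer sizes.

    layer_dims[0] is the input size n[0]; layer_dims[l] is n[l].
    The seed and the 0.01 scale are illustrative assumptions.
    """
    rng = np.random.default_rng(seed)
    parameters = {}
    L = len(layer_dims) - 1  # number of layers, not counting the input

    for l in range(1, L + 1):
        # W[l] has shape (n[l], n[l-1]); small random values break symmetry.
        parameters[f"W{l}"] = rng.standard_normal(
            (layer_dims[l], layer_dims[l - 1])
        ) * 0.01
        # b[l] has shape (n[l], 1) and broadcasts across the m examples.
        parameters[f"b{l}"] = np.zeros((layer_dims[l], 1))

    return parameters

# Hypothetical layer sizes: 12288 inputs, two hidden layers, one output unit.
layer_dims = [12288, 20, 7, 1]
params = initialize_parameters_deep(layer_dims)

# Check the first row of the table with m = 209 examples.
X = np.zeros((12288, 209))
Z1 = params["W1"] @ X + params["b1"]
print(Z1.shape)  # (20, 209), i.e. (n[1], 209)
```

Because `b1` has shape $(n^{[1]}, 1)$, NumPy broadcasting adds it to every one of the 209 columns of `W1 @ X`, which is why the bias does not need a column per example.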

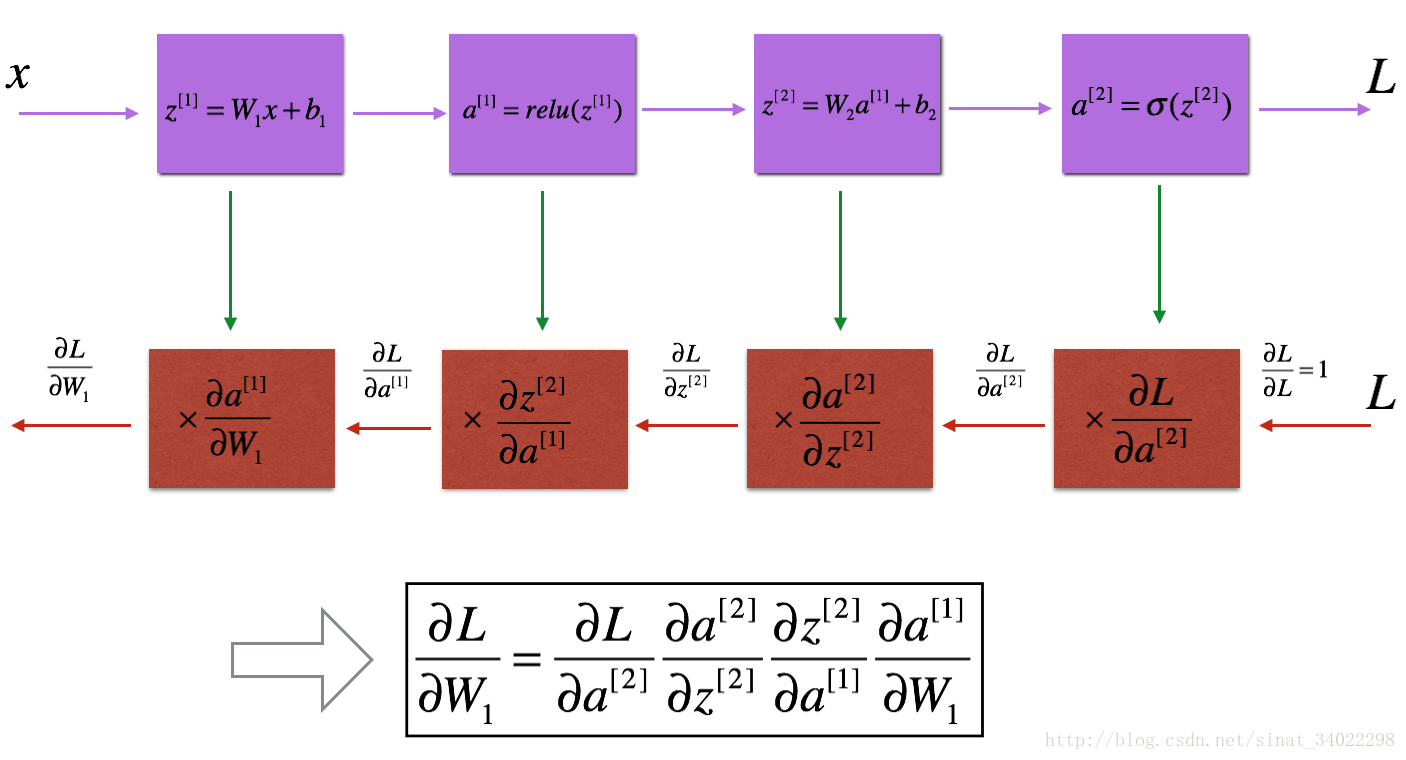

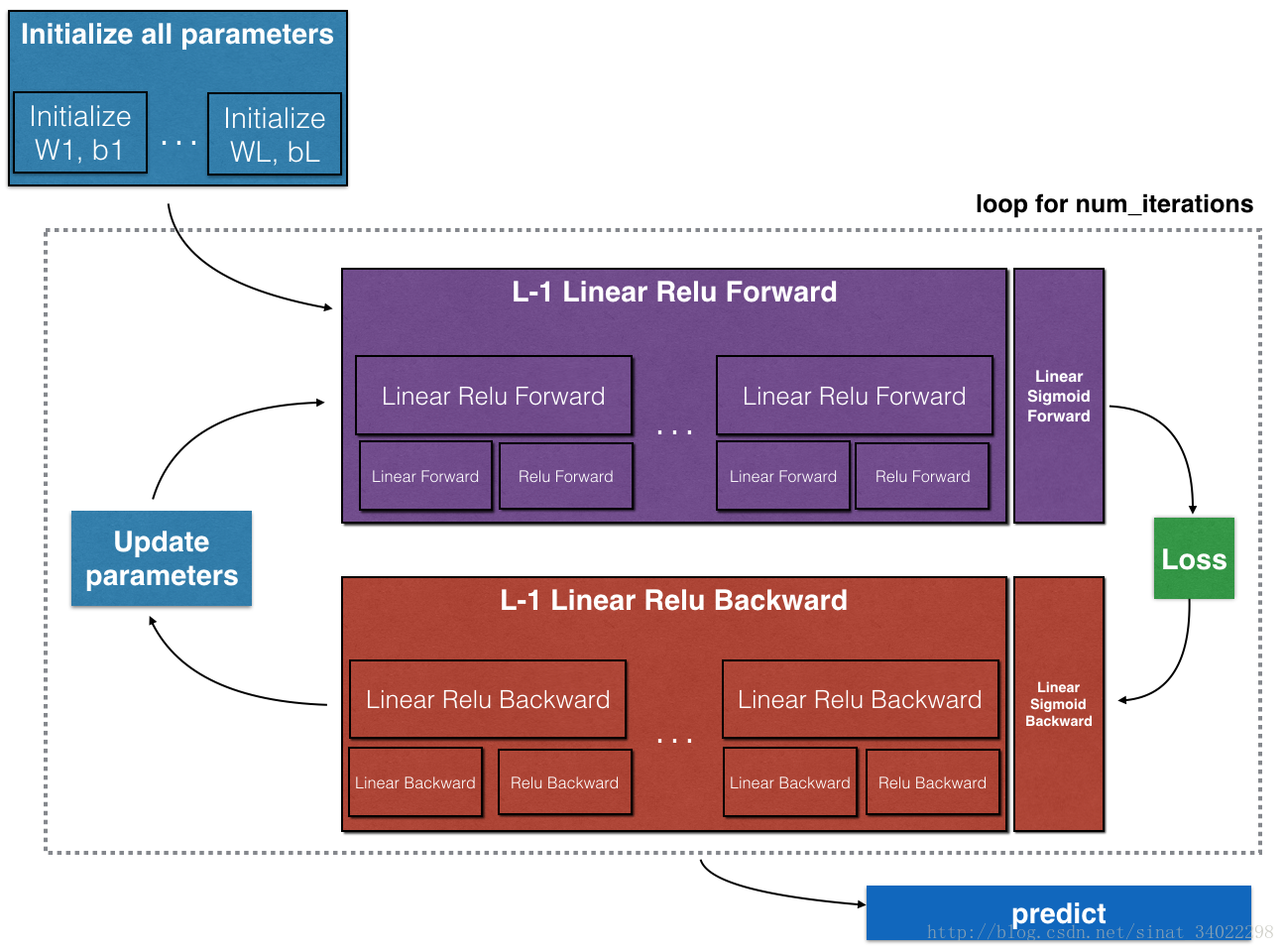

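This article walks through the steps for building an L-layer neural network: parameter initialization, linear-activation forward propagation, backward propagation, and the forward and backward passes of the whole network. It uses examples to explain how to handle the weight matrices and bias vectors between layers and how to compute the gradients of the linear part, covering both deep learning fundamentals and practical techniques.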
