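Series index

Data lake Iceberg in practice, Lesson 1: Getting started

Data lake Iceberg in practice, Lesson 2: Iceberg's underlying data layout on Hadoop

Data lake Iceberg in practice, Lesson 3: Reading from Kafka into Iceberg with SQL in the SQL client

Data lake Iceberg in practice, Lesson 4: Reading from Kafka into Iceberg with SQL in the SQL client (upgraded to flink1.12.7)

Data lake Iceberg in practice, Lesson 5: Characteristics of the Hive catalog

Data lake Iceberg in practice, Lesson 6: Fixing failed writes from Kafka to Iceberg

Data lake Iceberg in practice, Lesson 7: Real-time writes to Iceberg

Data lake Iceberg in practice, Lesson 8: Integrating Hive with Iceberg

Data lake Iceberg in practice, Lesson 9: Compacting small files

Data lake Iceberg in practice, Lesson 10: Deleting snapshots

Data lake Iceberg in practice, Lesson 11: End-to-end test of a partitioned table (generating data, creating the table, compaction, snapshot deletion)

Data lake Iceberg in practice, Lesson 12: What a catalog is

Data lake Iceberg in practice, Lesson 13: Metadata many times larger than the data files

Data lake Iceberg in practice, Lesson 14: Metadata compaction (fixing metadata that bloats over time)

Data lake Iceberg in practice, Lesson 15: Installing Spark and integrating Iceberg (jersey jar conflict)

Data lake Iceberg in practice, Lesson 16: Opening the door to understanding Iceberg with spark3

Data lake Iceberg in practice, Lesson 17: Configuring Iceberg on hadoop2.7 with spark3 on yarn

Data lake Iceberg in practice, Lesson 18: Startup commands for the various clients that talk to Iceberg (common commands)

Data lake Iceberg in practice, Lesson 19: flink count on Iceberg returns no result

Data lake Iceberg in practice, Lesson 20: flink + iceberg CDC scenario (version problems, test failed)

Data lake Iceberg in practice, Lesson 21: flink1.13.5 + iceberg0.131 CDC (INSERT test succeeded, change operations failed)

Data lake Iceberg in practice, Lesson 22: flink1.13.5 + iceberg0.131 CDC (CRUD test succeeded)

Data lake Iceberg in practice, Lesson 23: Restarting flink-sql from a checkpoint

Data lake Iceberg in practice, Lesson 24: Iceberg metadata in detail

Data lake Iceberg in practice, Lesson 25: Effect of inserts, deletes, and updates with flink sql running in the background

Data lake Iceberg in practice, Lesson 26: How to configure checkpoints

Data lake Iceberg in practice, Lesson 27: Restarting the flink cdc test program after a failure: it resumes from the last checkpoint

Data lake Iceberg in practice, Lesson 28: Deploying packages that are missing from public repositories to a local repository

Data lake Iceberg in practice, Lesson 29: Getting the flink jobId elegantly and efficiently

Data lake Iceberg in practice, Lesson 30: mysql->iceberg, time zone problems across different clients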


前言

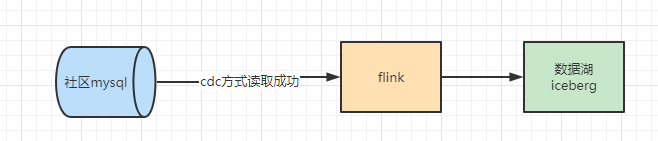

mysql->flink-sql-cdc->iceberg。从flink查数据时间没问题,从spark-sql查,时区+8了。对这个问题进行记录

最后解决方案: 源表没有timezone, 下游表需要设置local timezone,这样就没问题了!

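I. Querying Iceberg data from Spark: timestamps are 8 hours ahead because of the time zone

1. A spark-sql query on an Iceberg table with timestamp columns fails with a time zone error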

java.lang.IllegalArgumentException: Cannot handle timestamp without timezone fields in Spark. Spark does not natively support this type but if you would like to handle all timestamps as timestamp with timezone set 'spark.sql.iceberg.handle-timestamp-without-timezone' to true. This will not change the underlying values stored but will change their displayed values in Spark. For more information please see https://docs.databricks.com/spark/latest/dataframes-datasets/dates-timestamps.html#ansi-sql-and-spark-sql-timestamps

at org.apache.iceberg.relocated.com.google.common.base.Preconditions.checkArgument(Preconditions.java:142)

at org.apache.iceberg.spark.source.SparkBatchScan.readSchema(SparkBatchScan.java:127)

at org.apache.spark.sql.execution.datasources.v2.PushDownUtils$.pruneColumns(PushDownUtils.scala:136)

at org.apache.spark.sql.execution.datasources.v2.V2ScanRelationPushDown$$anonfun$applyColumnPruning$1.applyOrElse(V2ScanRelationPushDown.scala:191)

at org.apache.spark.sql.execution.datasources.v2.V2ScanRelationPushDown$$anonfun$applyColumnPruning$1.applyOrElse(V2ScanRelationPushDown.scala:184)

at org.apache.spark.sql.catalyst.trees.TreeNode.$anonfun$transformDownWithPruning$1(TreeNode.scala:481)

at org.apache.spark.sql.catalyst.trees.CurrentOrigin$.withOrigin(TreeNode.scala:82)

at org.apache.spark.sql.catalyst.trees.TreeNode.transformDownWithPruning(TreeNode.scala:481)

at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.org$apache$spark$sql$catalyst$plans$logical$AnalysisHelper$$super$transformDownWithPruning(LogicalPlan.scala:30)

at org.apache.spark.sql.catalyst.plans.logical.AnalysisHelper.transformDownWithPruning(AnalysisHelper.scala:267)

at org.apache.spark.sql.catalyst.plans.logical.AnalysisHelper.transformDownWithPruning$(AnalysisHelper.scala:263)

at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.transformDownWithPruning(LogicalPlan.scala:30)

at org.apache.spark.sql.catalyst.plans.logical.LogicalPlan.transformDownWithPruning(LogicalPlan.scala:30)

at org.apache.spark.sql.catalyst.trees.TreeNode.transformDown(TreeNode.scala:457)

at org.apache.spark.sql.catalyst.trees.TreeNode.transform(TreeNode.scala:425)

at org.apache.spark.sql.execution.datasources.v2.V2ScanRelationPushDown$.applyColumnPruning(V2ScanRelationPushDown.scala:184)

at org.apache.spark.sql.execution.datasources.v2.V2ScanRelationPushDown$.apply(V2ScanRelationPushDown.scala:39)

at org.apache.spark.sql.execution.datasources.v2.V2ScanRelationPushDown$.apply(V2ScanRelationPushDown.scala:35)

at org.apache.spark.sql.catalyst.rules.RuleExecutor.$anonfun$execute$2(RuleExecutor.scala:211)

at scala.collection.LinearSeqOptimized.foldLeft(LinearSeqOptimized.scala:126)

at scala.collection.LinearSeqOptimized.foldLeft$(LinearSeqOptimized.scala:122)

at scala.collection.immutable.List.foldLeft(List.scala:91)

at org.apache.spark.sql.catalyst.rules.RuleExecutor.$anonfun$execute$1(RuleExecutor.scala:208)

at org.apache.spark.sql.catalyst.rules.RuleExecutor.$anonfun$execute$1$adapted(RuleExecutor.scala:200)

at scala.collection.immutable.List.foreach(List.scala:431)

at org.apache.spark.sql.catalyst.rules.RuleExecutor.execute(RuleExecutor.scala:200)

at org.apache.spark.sql.catalyst.rules.RuleExecutor.$anonfun$executeAndTrack$1(RuleExecutor.scala:179)

at org.apache.spark.sql.catalyst.QueryPlanningTracker$.withTracker(QueryPlanningTracker.scala:88)

at org.apache.spark.sql.catalyst.rules.RuleExecutor.executeAndTrack(RuleExecutor.scala:179)

at org.apache.spark.sql.execution.QueryExecution.$anonfun$optimizedPlan$1(QueryExecution.scala:138)

at org.apache.spark.sql.catalyst.QueryPlanningTracker.measurePhase(QueryPlanningTracker.scala:111)

at org.apache.spark.sql.execution.QueryExecution.$anonfun$executePhase$1(QueryExecution.scala:196)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.execution.QueryExecution.executePhase(QueryExecution.scala:196)

at org.apache.spark.sql.execution.QueryExecution.optimizedPlan$lzycompute(QueryExecution.scala:134)

at org.apache.spark.sql.execution.QueryExecution.optimizedPlan(QueryExecution.scala:130)

at org.apache.spark.sql.execution.QueryExecution.assertOptimized(QueryExecution.scala:148)

at org.apache.spark.sql.execution.QueryExecution.$anonfun$executedPlan$1(QueryExecution.scala:166)

at org.apache.spark.sql.execution.QueryExecution.withCteMap(QueryExecution.scala:73)

at org.apache.spark.sql.execution.QueryExecution.executedPlan$lzycompute(QueryExecution.scala:163)

at org.apache.spark.sql.execution.QueryExecution.executedPlan(QueryExecution.scala:163)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$5(SQLExecution.scala:101)

at org.apache.spark.sql.execution.SQLExecution$.withSQLConfPropagated(SQLExecution.scala:163)

at org.apache.spark.sql.execution.SQLExecution$.$anonfun$withNewExecutionId$1(SQLExecution.scala:90)

at org.apache.spark.sql.SparkSession.withActive(SparkSession.scala:775)

at org.apache.spark.sql.execution.SQLExecution$.withNewExecutionId(SQLExecution.scala:64)

at org.apache.spark.sql.hive.thriftserver.SparkSQLDriver.run(SparkSQLDriver.scala:69)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.processCmd(SparkSQLCLIDriver.scala:384)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.$anonfun$processLine$1(SparkSQLCLIDriver.scala:504)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.$anonfun$processLine$1$adapted(SparkSQLCLIDriver.scala:498)

at scala.collection.Iterator.foreach(Iterator.scala:943)

at scala.collection.Iterator.foreach$(Iterator.scala:943)

at scala.collection.AbstractIterator.foreach(Iterator.scala:1431)

at scala.collection.IterableLike.foreach(IterableLike.scala:74)

at scala.collection.IterableLike.foreach$(IterableLike.scala:73)

at scala.collection.AbstractIterable.foreach(Iterable.scala:56)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.processLine(SparkSQLCLIDriver.scala:498)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver$.main(SparkSQLCLIDriver.scala:287)

at org.apache.spark.sql.hive.thriftserver.SparkSQLCLIDriver.main(SparkSQLCLIDriver.scala)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)

at org.apache.spark.deploy.SparkSubmit.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:955)

at org.apache.spark.deploy.SparkSubmit.doRunMain$1(SparkSubmit.scala:180)

at org.apache.spark.deploy.SparkSubmit.submit(SparkSubmit.scala:203)

at org.apache.spark.deploy.SparkSubmit.doSubmit(SparkSubmit.scala:90)

at org.apache.spark.deploy.SparkSubmit$$anon$2.doSubmit(SparkSubmit.scala:1043)

at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:1052)

at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)

2. Following the hint in the error, handle timestamps without a time zone

set spark.sql.iceberg.handle-timestamp-without-timezone=true;

spark-sql (default)> set `spark.sql.iceberg.handle-timestamp-without-timezone`=true;

key value

spark.sql.iceberg.handle-timestamp-without-timezone true

Time taken: 0.016 seconds, Fetched 1 row(s)

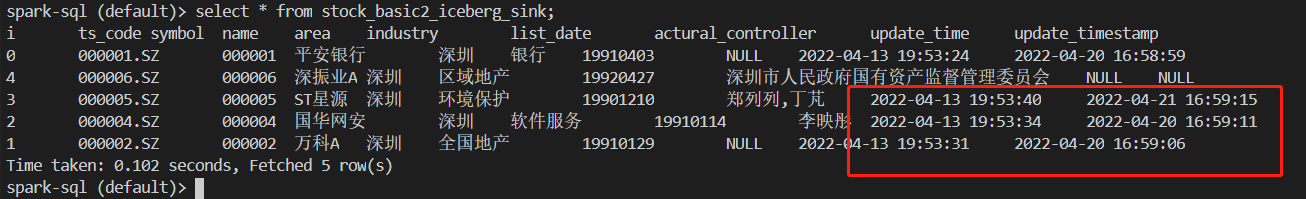

spark-sql (default)> select * from stock_basic2_iceberg_sink;

i ts_code symbol name area industry list_date actural_controller update_time update_timestamp

0 000001.SZ 000001 平安银行 深圳 银行 19910403 NULL 2022-04-14 03:53:24 2022-04-21 00:58:59

1 000002.SZ 000002 万科A 深圳 全国地产 19910129 NULL 2022-04-14 03:53:31 2022-04-21 00:59:06

4 000006.SZ 000006 深振业A 深圳 区域地产 19920427 深圳市人民政府国有资产监督管理委员会 NULL NULL

2 000004.SZ 000004 国华网安 深圳 软件服务 19910114 李映彤 2022-04-14 03:53:34 2022-04-21 00:59:11

3 000005.SZ 000005 ST星源 深圳 环境保护 19901210 郑列列,丁芃 2022-04-14 03:53:40 2022-04-22 00:59:15

Time taken: 1.856 seconds, Fetched 5 row(s)

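The times in the data lake clearly disagree with the times in MySQL: Spark shows the Iceberg timestamps 8 hours later.

Conclusion: simply telling Spark to ignore the missing time zone is not enough.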
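The shift can be reproduced with plain `java.time`, outside Spark. This is a minimal sketch and not Spark's actual code path. It assumes the MySQL wall-clock value was `2022-04-13 19:53:31` (inferred by subtracting 8 hours from the row with `i` = 1 in the output above) and that the Spark session displays timestamps in `Asia/Shanghai`. The class and method names are made up for illustration.

```java
import java.time.LocalDateTime;
import java.time.ZoneId;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;

class SparkShiftDemo {
    static final DateTimeFormatter FMT = DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    // Treat a zone-less wall-clock value as if it were a UTC instant,
    // then render that instant in the given display zone.
    static String renderAsInstant(String wallClock, String displayZone) {
        LocalDateTime local = LocalDateTime.parse(wallClock, FMT);
        return local.atOffset(ZoneOffset.UTC)
                .atZoneSameInstant(ZoneId.of(displayZone))
                .format(FMT);
    }

    public static void main(String[] args) {
        // Assumed MySQL value (not shown as text in this post).
        String assumedMysqlValue = "2022-04-13 19:53:31";
        // Rendered in UTC the value is unchanged.
        System.out.println(renderAsInstant(assumedMysqlValue, "UTC"));
        // Rendered in Asia/Shanghai it moves 8 hours forward,
        // which matches what spark-sql printed for that row.
        System.out.println(renderAsInstant(assumedMysqlValue, "Asia/Shanghai"));
    }
}
```

Under these assumptions the stored value itself is untouched; only the rendering differs, which is consistent with the note in the Spark error message that the flag changes displayed values but not the underlying data.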

3. Changing the local time zone

SET `table.local-time-zone` = 'Asia/Shanghai';

With set spark.sql.iceberg.handle-timestamp-without-timezone=true; already applied, the time zone was set as well, but this had no effect.

Set to the Shanghai time zone:

spark-sql (default)> SET `table.local-time-zone` = 'Asia/Shanghai';

key value

table.local-time-zone 'Asia/Shanghai'

Time taken: 0.014 seconds, Fetched 1 row(s)

spark-sql (default)> select * from stock_basic2_iceberg_sink;

i ts_code symbol name area industry list_date actural_controller update_time update_timestamp

0 000001.SZ 000001 平安银行 深圳 银行 19910403 NULL 2022-04-14 03:53:24 2022-04-21 00:58:59

1 000002.SZ 000002 万科A 深圳 全国地产 19910129 NULL 2022-04-14 03:53:31 2022-04-21 00:59:06

4 000006.SZ 000006 深振业A 深圳 区域地产 19920427 深圳市人民政府国有资产监督管理委员会 NULL NULL

2 000004.SZ 000004 国华网安 深圳 软件服务 19910114 李映彤 2022-04-14 03:53:34 2022-04-21 00:59:11

3 000005.SZ 000005 ST星源 深圳 环境保护 19901210 郑列列,丁芃 2022-04-14 03:53:40 2022-04-22 00:59:15

Time taken: 0.187 seconds, Fetched 5 row(s)

Set to the UTC time zone:

spark-sql (default)> SET `table.local-time-zone` = 'UTC';

key value

table.local-time-zone 'UTC'

Time taken: 0.015 seconds, Fetched 1 row(s)

spark-sql (default)> select * from stock_basic2_iceberg_sink;

i ts_code symbol name area industry list_date actural_controller update_time update_timestamp

0 000001.SZ 000001 平安银行 深圳 银行 19910403 NULL 2022-04-14 03:53:24 2022-04-21 00:58:59

1 000002.SZ 000002 万科A 深圳 全国地产 19910129 NULL 2022-04-14 03:53:31 2022-04-21 00:59:06

4 000006.SZ 000006 深振业A 深圳 区域地产 19920427 深圳市人民政府国有资产监督管理委员会 NULL NULL

2 000004.SZ 000004 国华网安 深圳 软件服务 19910114 李映彤 2022-04-14 03:53:34 2022-04-21 00:59:11

3 000005.SZ 000005 ST星源 深圳 环境保护 19901210 郑列列,丁芃 2022-04-14 03:53:40 2022-04-22 00:59:15

Time taken: 0.136 seconds, Fetched 5 row(s)

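Setting the time zone this way has no effect: the output is identical for Asia/Shanghai and UTC. Note that `table.local-time-zone` is a Flink SQL option rather than a Spark one, which would explain why spark-sql accepts the key but ignores it.

The Spark-side setting, spark.sql.session.timeZone, has not been tested yet.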

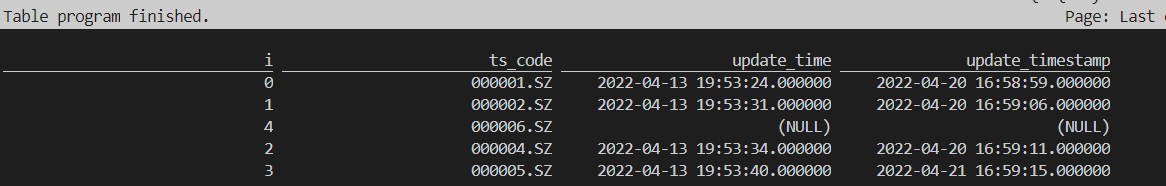

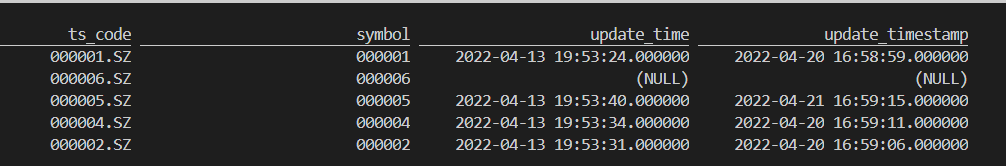

二、 使用flink-sql查询,发现时间没问题

结论: 时间语义与mysql端是一致的:

- flink sql 端查询结果:

Flink SQL> select i,ts_code,update_time,update_timestamp from stock_basic2_iceberg_sink;

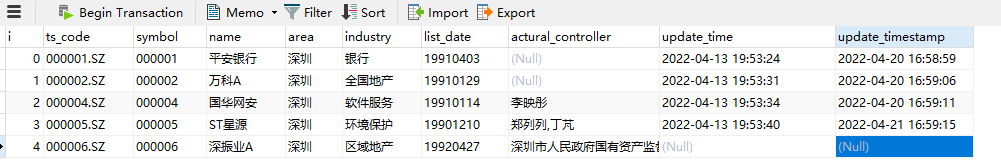

2. mysql端查询结果:

结论:时间语义与mysql端是一致的

III. Forcing a time zone onto the source table fails

Change TIMESTAMP to TIMESTAMP_LTZ in the source table:

String createSql = "CREATE TABLE stock_basic_source(\n" +

" `i` INT NOT NULL,\n" +

" `ts_code` CHAR(10) NOT NULL,\n" +

" `symbol` CHAR(10) NOT NULL,\n" +

" `name` char(10) NOT NULL,\n" +

" `area` CHAR(20) NOT NULL,\n" +

" `industry` CHAR(20) NOT NULL,\n" +

" `list_date` CHAR(10) NOT NULL,\n" +

" `actural_controller` CHAR(100),\n" +

" `update_time` TIMESTAMP_LTZ\n," +

" `update_timestamp` TIMESTAMP_LTZ\n," +

" PRIMARY KEY(i) NOT ENFORCED\n" +

") WITH (\n" +

" 'connector' = 'mysql-cdc',\n" +

" 'hostname' = 'hadoop103',\n" +

" 'port' = '3306',\n" +

" 'username' = 'xxxxx',\n" +

" 'password' = 'XXXx',\n" +

" 'database-name' = 'xxzh_stock',\n" +

" 'table-name' = 'stock_basic2'\n" +

")" ;

Running the job fails with:

Caused by: java.lang.IllegalArgumentException: Unable to convert to TimestampData from unexpected value '1649879611000' of type java.lang.Long

at com.ververica.cdc.debezium.table.RowDataDebeziumDeserializeSchema$12.convert(RowDataDebeziumDeserializeSchema.java:504)

at com.ververica.cdc.debezium.table.RowDataDebeziumDeserializeSchema$17.convert(RowDataDebeziumDeserializeSchema.java:641)

at com.ververica.cdc.debezium.table.RowDataDebeziumDeserializeSchema.convertField(RowDataDebeziumDeserializeSchema.java:626)

at com.ververica.cdc.debezium.table.RowDataDebeziumDeserializeSchema.access$000(RowDataDebeziumDeserializeSchema.java:63)

at com.ververica.cdc.debezium.table.RowDataDebeziumDeserializeSchema$16.convert(RowDataDebeziumDeserializeSchema.java:611)

at com.ververica.cdc.debezium.table.RowDataDebeziumDeserializeSchema$17.convert(RowDataDebeziumDeserializeSchema.java:641)

at com.ververica.cdc.debezium.table.RowDataDebeziumDeserializeSchema.extractAfterRow(RowDataDebeziumDeserializeSchema.java:146)

at com.ververica.cdc.debezium.table.RowDataDebeziumDeserializeSchema.deserialize(RowDataDebeziumDeserializeSchema.java:121)

at com.ververica.cdc.connectors.mysql.source.reader.MySqlRecordEmitter.emitElement(MySqlRecordEmitter.java:118)

at com.ververica.cdc.connectors.mysql.source.reader.MySqlRecordEmitter.emitRecord(MySqlRecordEmitter.java:100)

at com.ververica.cdc.connectors.mysql.source.reader.MySqlRecordEmitter.emitRecord(MySqlRecordEmitter.java:54)

at org.apache.flink.connector.base.source.reader.SourceReaderBase.pollNext(SourceReaderBase.java:128)

at org.apache.flink.streaming.api.operators.SourceOperator.emitNext(SourceOperator.java:294)

at org.apache.flink.streaming.runtime.io.StreamTaskSourceInput.emitNext(StreamTaskSourceInput.java:69)

at org.apache.flink.streaming.runtime.io.StreamOneInputProcessor.processInput(StreamOneInputProcessor.java:66)

at org.apache.flink.streaming.runtime.tasks.StreamTask.processInput(StreamTask.java:423)

at org.apache.flink.streaming.runtime.tasks.mailbox.MailboxProcessor.runMailboxLoop(MailboxProcessor.java:204)

at org.apache.flink.streaming.runtime.tasks.StreamTask.runMailboxLoop(StreamTask.java:684)

at org.apache.flink.streaming.runtime.tasks.StreamTask.executeInvoke(StreamTask.java:639)

at org.apache.flink.streaming.runtime.tasks.StreamTask.runWithCleanUpOnFail(StreamTask.java:650)

at org.apache.flink.streaming.runtime.tasks.StreamTask.invoke(StreamTask.java:623)

at org.apache.flink.runtime.taskmanager.Task.doRun(Task.java:779)

at org.apache.flink.runtime.taskmanager.Task.run(Task.java:566)

at java.lang.Thread.run(Thread.java:748)

22/04/21 10:49:30 INFO akka.AkkaRpcService: Stopping Akka RPC service.
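The value in the error message, `1649879611000`, looks like epoch milliseconds. The sketch below only decodes that number with `java.time`. It is not the connector's conversion code, and the class and method names are hypothetical. Reading the value as UTC is an assumption that happens to line up with the spark-sql output earlier in this post.

```java
import java.time.Instant;
import java.time.LocalDateTime;
import java.time.ZoneOffset;

class EpochMillisDemo {
    // Decode epoch milliseconds into a wall-clock value, read as UTC.
    static LocalDateTime decodeUtc(long epochMillis) {
        return LocalDateTime.ofInstant(Instant.ofEpochMilli(epochMillis), ZoneOffset.UTC);
    }

    public static void main(String[] args) {
        // Value copied from the exception message above.
        System.out.println(decodeUtc(1649879611000L)); // 2022-04-13T19:53:31
    }
}
```

The decoded value is exactly 8 hours before the `2022-04-14 03:53:31` that spark-sql printed for the row with `i` = 1. So the source column appears to arrive as a zone-less timestamp encoded as a Long, which the TIMESTAMP_LTZ converter in this connector version does not accept.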

Can the time zone be added to the downstream table instead?

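IV. Upstream table without a time zone, downstream table with a time zone

Downstream table: the columns that correspond to MySQL datetime and timestamp change from TIMESTAMP to TIMESTAMP_LTZ.

LTZ stands for LOCAL TIME ZONE.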

MySQL source table:

String createSql = "CREATE TABLE stock_basic_source(\n" +

" `i` INT NOT NULL,\n" +

" `ts_code` CHAR(10) NOT NULL,\n" +

" `symbol` CHAR(10) NOT NULL,\n" +

" `name` char(10) NOT NULL,\n" +

" `area` CHAR(20) NOT NULL,\n" +

" `industry` CHAR(20) NOT NULL,\n" +

" `list_date` CHAR(10) NOT NULL,\n" +

" `actural_controller` CHAR(100),\n" +

" `update_time` TIMESTAMP\n," +

" `update_timestamp` TIMESTAMP\n," +

" PRIMARY KEY(i) NOT ENFORCED\n" +

") WITH (\n" +

" 'connector' = 'mysql-cdc',\n" +

" 'hostname' = 'hadoop103',\n" +

" 'port' = '3306',\n" +

" 'username' = 'XX',\n" +

" 'password' = 'XX',\n" +

" 'database-name' = 'xxzh_stock',\n" +

" 'table-name' = 'stock_basic2'\n" +

")" ;

Downstream table:

String createSQl = "CREATE TABLE if not exists stock_basic2_iceberg_sink(\n" +

" `i` INT NOT NULL,\n" +

" `ts_code` CHAR(10) NOT NULL,\n" +

" `symbol` CHAR(10) NOT NULL,\n" +

" `name` char(10) NOT NULL,\n" +

" `area` CHAR(20) NOT NULL,\n" +

" `industry` CHAR(20) NOT NULL,\n" +

" `list_date` CHAR(10) NOT NULL,\n" +

" `actural_controller` CHAR(100) ,\n" +

" `update_time` TIMESTAMP_LTZ\n," +

" `update_timestamp` TIMESTAMP_LTZ\n," +

" PRIMARY KEY(i) NOT ENFORCED\n" +

") with(\n" +

" 'write.metadata.delete-after-commit.enabled'='true',\n" +

" 'write.metadata.previous-versions-max'='5',\n" +

" 'format-version'='2'\n" +

")";

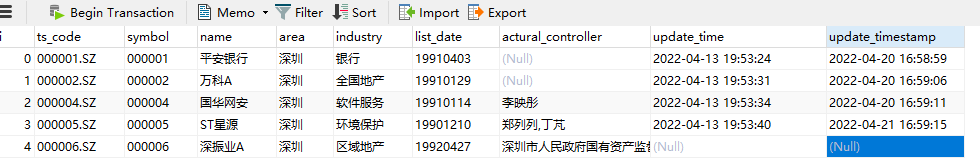

After testing, this works.
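One way to see why this combination works is to model it with `java.time`. This is a sketch under stated assumptions, not the actual Flink or Spark code: it assumes that Flink converts a zone-less TIMESTAMP to TIMESTAMP_LTZ by interpreting the wall-clock value in the session time zone, that both the Flink and Spark sessions use `Asia/Shanghai`, and that the MySQL value is `2022-04-13 19:53:31`. The class and method names are hypothetical.

```java
import java.time.Instant;
import java.time.LocalDateTime;
import java.time.ZoneId;

class LtzRoundTripDemo {
    // Write side: interpret the zone-less wall-clock value in the session zone
    // to obtain the absolute instant that a TIMESTAMP_LTZ column stores.
    static Instant toInstant(LocalDateTime wallClock, ZoneId sessionZone) {
        return wallClock.atZone(sessionZone).toInstant();
    }

    // Read side: render the stored instant in the reader's session zone.
    static LocalDateTime render(Instant stored, ZoneId sessionZone) {
        return LocalDateTime.ofInstant(stored, sessionZone);
    }

    public static void main(String[] args) {
        ZoneId shanghai = ZoneId.of("Asia/Shanghai");
        LocalDateTime mysqlValue = LocalDateTime.of(2022, 4, 13, 19, 53, 31); // assumed
        Instant stored = toInstant(mysqlValue, shanghai);
        System.out.println(stored);                   // 2022-04-13T11:53:31Z
        System.out.println(render(stored, shanghai)); // 2022-04-13T19:53:31
    }
}
```

Because the stored value is now an absolute instant, any client whose session zone is `Asia/Shanghai` renders the same wall-clock time as MySQL. A client in a different zone would show a different wall-clock time for the same instant.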

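Query result in spark-sql:

Query result in flink-sql:

Conclusion

On the date problem: when the source table has no time zone, the downstream table needs to declare its timestamp columns with a local time zone (TIMESTAMP_LTZ).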
