Android StagefrightPlayer Call Flow

Starting with Android 2.3, Android MediaPlayer uses the Stagefright framework. This article is based on Android 4.0.1.

The function that creates the StagefrightPlayer is shown below (MediaPlayerService.cpp; for the detailed process, see the article "Android Audio Data Flow Explained"):


```cpp
static sp<MediaPlayerBase> createPlayer(player_type playerType, void* cookie,
        notify_callback_f notifyFunc)
{
    sp<MediaPlayerBase> p;
    switch (playerType) {
        case SONIVOX_PLAYER:
            LOGV(" create MidiFile");
            p = new MidiFile();
            break;
        case STAGEFRIGHT_PLAYER:
            LOGV(" create StagefrightPlayer");
            p = new StagefrightPlayer;
            break;
        case NU_PLAYER:
            LOGV(" create NuPlayer");
            p = new NuPlayerDriver;
            break;
        case TEST_PLAYER:
            LOGV("Create Test Player stub");
            p = new TestPlayerStub();
            break;
        default:
            LOGE("Unknown player type: %d", playerType);
            return NULL;
    }
    if (p != NULL) {
        if (p->initCheck() == NO_ERROR) {
            p->setNotifyCallback(cookie, notifyFunc);
        } else {
            p.clear();
        }
    }
    if (p == NULL) {
        LOGE("Failed to create player object");
    }
    return p;
}
```

First, let's look at the system's own call flow. StagefrightPlayer is implemented with AwesomePlayer. We start with the demuxer: formats the system does not natively support have no demuxer. See the code for details (this article uses local file playback as its example).

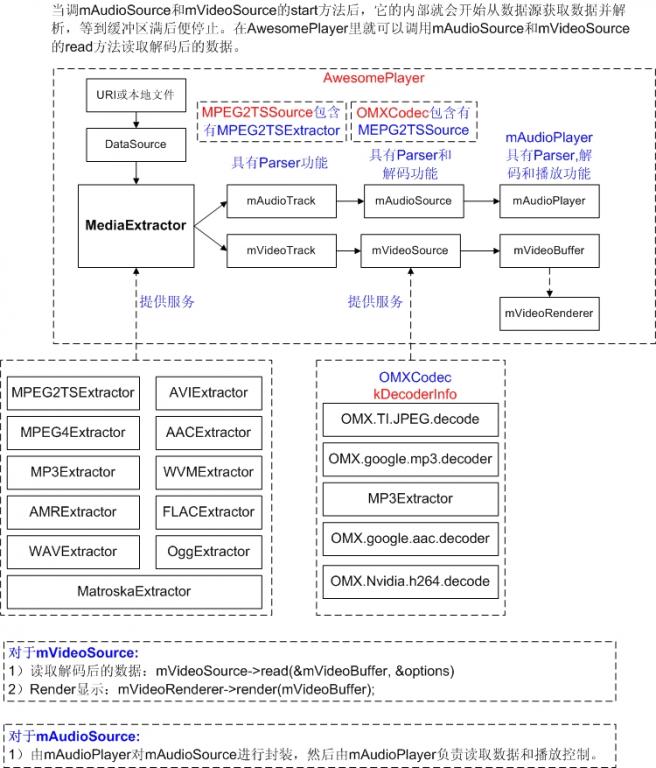

1. Demuxer implementation

In AwesomePlayer::setDataSource_l (AwesomePlayer.cpp):

```cpp
status_t AwesomePlayer::setDataSource_l(
        const sp<DataSource> &dataSource) {
    sp<MediaExtractor> extractor = MediaExtractor::Create(dataSource);
    ...
}
```

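This is where the demuxer is created. Look at MediaExtractor::Create in MediaExtractor.cpp: the DataSource's sniff function automatically detects the container type of the media. If you need to add a media format the stock system cannot recognize, no valid extractor will be found, so you must write your own demuxer. Each format's XXXXExtractor.cpp contains a SniffXXXX function, and the call DataSource::RegisterDefaultSniffers() in the AwesomePlayer constructor registers all of these sniff functions. Write your own XXXXExtractor class together with a matching SniffXXXX function, add that function to RegisterDefaultSniffers, and add a branch for its MIME type in MediaExtractor::Create so the new extractor is actually instantiated. Then implement the demuxer itself. Once the demuxer has split out the audio and video streams, the decoding stage begins.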
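To make the registration mechanism concrete, here is a minimal standalone C++ sketch. It is not the Stagefright API: SnifferRegistry, registerSniffer, SniffXXXX and the MIME string video/x-xxxx are hypothetical, and a plain byte vector stands in for DataSource. As a simplification of DataSource::sniff, it assumes every registered sniffer is tried and the one reporting the highest confidence wins.

```cpp
#include <cassert>
#include <cstdint>
#include <cstring>
#include <functional>
#include <string>
#include <utility>
#include <vector>

// Stand-in for a DataSource: the file contents held in memory.
using Bytes = std::vector<uint8_t>;

// A sniffer returns true if it recognizes the data and reports a MIME type
// plus a confidence value.
using SniffFn = std::function<bool(const Bytes &, std::string *, float *)>;

// Hypothetical registry mirroring the RegisterSniffer/sniff pattern.
class SnifferRegistry {
public:
    void registerSniffer(SniffFn fn) { sniffers_.push_back(std::move(fn)); }

    // Runs every registered sniffer and keeps the most confident match.
    bool sniff(const Bytes &data, std::string *mime, float *confidence) const {
        mime->clear();
        *confidence = 0.0f;
        for (const SniffFn &fn : sniffers_) {
            std::string newMime;
            float newConfidence = 0.0f;
            if (fn(data, &newMime, &newConfidence) && newConfidence > *confidence) {
                *mime = newMime;
                *confidence = newConfidence;
            }
        }
        return *confidence > 0.0f;
    }

private:
    std::vector<SniffFn> sniffers_;
};

// Hypothetical sniffer for a made-up container whose files begin with "XXXX".
bool SniffXXXX(const Bytes &data, std::string *mime, float *confidence) {
    if (data.size() < 4 || std::memcmp(data.data(), "XXXX", 4) != 0) {
        return false;
    }
    *mime = "video/x-xxxx";  // hypothetical MIME type
    *confidence = 0.5f;
    return true;
}
```

A custom format would be supported by calling registry.registerSniffer(SniffXXXX) once at startup, just as RegisterDefaultSniffers is called once from the AwesomePlayer constructor.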

2. AV Decoder

```cpp
status_t AwesomePlayer::initVideoDecoder() {
    mVideoSource = OMXCodec::Create(
            mClient.interface(), mVideoTrack->getFormat(),
            false,          // createEncoder
            mVideoTrack);
    ...
}
```

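This is where the decoder is created. The last argument to Create, mVideoTrack, is the separate video stream split out by the demuxer. Its most important interface is read, which is implemented in the demuxer; the track itself is obtained through getTrack in XXXXExtractor.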

The decoder logic lives mainly in OMXCodec.cpp. OMXCodec::Create is responsible for finding a matching codec and creating it.

Finding a matching codec:


```cpp
Vector<String8> matchingCodecs;
findMatchingCodecs(
        mime, createEncoder, matchComponentName, flags, &matchingCodecs);
if (matchingCodecs.isEmpty()) {
    return NULL;
}
```

Creating the codec:


```cpp
status_t err = omx->allocateNode(componentName, observer, &node);
if (err == OK) {
    LOGV("Successfully allocated OMX node '%s'", componentName);
    sp<OMXCodec> codec = new OMXCodec(
            omx, node, quirks, flags,
            createEncoder, mime, componentName,
            source, nativeWindow);
    observer->setCodec(codec);
    err = codec->configureCodec(meta);
    if (err == OK) {
        if (!strcmp("OMX.Nvidia.mpeg2v.decode", componentName)) {
            codec->mFlags |= kOnlySubmitOneInputBufferAtOneTime;
        }
        return codec;
    }
    LOGV("Failed to configure codec '%s'", componentName);
}
```

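findMatchingCodecs is the function that looks up decoders: it searches the kDecoderInfo array for suitable decoders (or kEncoderInfo when an encoder is requested).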


```cpp
void OMXCodec::findMatchingCodecs(
        const char *mime,
        bool createEncoder, const char *matchComponentName,
        uint32_t flags,
        Vector<String8> *matchingCodecs) {
    matchingCodecs->clear();
    for (int index = 0;; ++index) {
        const char *componentName;
        if (createEncoder) {
            componentName = GetCodec(
                    kEncoderInfo,
                    sizeof(kEncoderInfo) / sizeof(kEncoderInfo[0]),
                    mime, index);
        } else {
            componentName = GetCodec(
                    kDecoderInfo,
                    sizeof(kDecoderInfo) / sizeof(kDecoderInfo[0]),
                    mime, index);
        }
        if (!componentName) {
            break;
        }
        // If a specific codec is requested, skip the non-matching ones.
        if (matchComponentName && strcmp(componentName, matchComponentName)) {
            continue;
        }
        // When requesting software-only codecs, only push software codecs
        // When requesting hardware-only codecs, only push hardware codecs
        // When there is request neither for software-only nor for
        // hardware-only codecs, push all codecs
        if (((flags & kSoftwareCodecsOnly) && IsSoftwareCodec(componentName)) ||
            ((flags & kHardwareCodecsOnly) && !IsSoftwareCodec(componentName)) ||
            (!(flags & (kSoftwareCodecsOnly | kHardwareCodecsOnly)))) {
            matchingCodecs->push(String8(componentName));
        }
    }
    if (flags & kPreferSoftwareCodecs) {
        matchingCodecs->sort(CompareSoftwareCodecsFirst);
    }
}
```
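The filtering rules in findMatchingCodecs can be reproduced outside Android with a small table. In this sketch the table contents, component names such as OMX.vendor.avc.decoder, and the numeric flag values are illustrative assumptions. IsSoftwareCodec assumes the same naming rule that the renderer check later in this article relies on (OMX.google.* and names without the OMX. prefix are software), and std::stable_partition stands in for sorting with CompareSoftwareCodecsFirst.

```cpp
#include <algorithm>
#include <cassert>
#include <cstdint>
#include <string>
#include <strings.h>  // strcasecmp (POSIX)
#include <vector>

// Simplified kDecoderInfo-style entry: MIME type -> component name.
struct CodecInfo {
    const char *mime;
    const char *codec;
};

// Flag names follow OMXCodec; the numeric values here are arbitrary.
enum : uint32_t {
    kPreferSoftwareCodecs = 1,
    kSoftwareCodecsOnly = 2,
    kHardwareCodecsOnly = 4,
};

// Assumption: "OMX.google.*" components and names without the "OMX."
// prefix are software codecs; other "OMX.*" components are hardware.
bool IsSoftwareCodec(const std::string &name) {
    if (name.compare(0, 11, "OMX.google.") == 0) return true;
    return name.compare(0, 4, "OMX.") != 0;
}

std::vector<std::string> findMatchingCodecs(
        const std::vector<CodecInfo> &table, const char *mime,
        const char *matchComponentName, uint32_t flags) {
    std::vector<std::string> matching;
    for (const CodecInfo &info : table) {
        if (strcasecmp(info.mime, mime) != 0) continue;
        const std::string name = info.codec;
        // If a specific codec is requested, skip the non-matching ones.
        if (matchComponentName != nullptr && name != matchComponentName) continue;
        const bool sw = IsSoftwareCodec(name);
        if (((flags & kSoftwareCodecsOnly) && sw) ||
                ((flags & kHardwareCodecsOnly) && !sw) ||
                !(flags & (kSoftwareCodecsOnly | kHardwareCodecsOnly))) {
            matching.push_back(name);
        }
    }
    if (flags & kPreferSoftwareCodecs) {
        // Software codecs first; table order is otherwise preserved.
        std::stable_partition(matching.begin(), matching.end(),
                              [](const std::string &n) { return IsSoftwareCodec(n); });
    }
    return matching;
}
```

A stable partition is used so that, within the software and hardware groups, the table's own priority order survives the reordering.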

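That is roughly the whole flow. You need not worry about the display side: the decoder's connection to the renderer should already be handled. The decoder's most important external interface is read, which returns decoded data one frame at a time.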
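The pull model behind read can be illustrated with a toy source. This is a sketch under stated assumptions: Source, FakeDecoder, Frame, and the kOk/kEndOfStream codes are hypothetical simplifications of MediaSource, MediaBuffer, and status_t, and no real decoding takes place.

```cpp
#include <cassert>
#include <cstdint>
#include <vector>

// Hypothetical, simplified status codes (the real code uses status_t).
enum Status { kOk = 0, kEndOfStream = -1 };

struct Frame {
    int64_t timeUs = 0;            // presentation timestamp
    std::vector<uint8_t> data;     // decoded payload, e.g. YUV bytes
};

// Pull-model interface in the spirit of MediaSource::read: the consumer
// asks for the next decoded frame; the source never pushes frames.
class Source {
public:
    virtual ~Source() = default;
    virtual Status read(Frame *out) = 0;
};

// Fake "decoder" that yields `count` frames, 33 ms apart, then ends.
class FakeDecoder : public Source {
public:
    explicit FakeDecoder(int count) : remaining_(count) {}

    Status read(Frame *out) override {
        if (remaining_ == 0) return kEndOfStream;
        out->timeUs = next_ * 33000;
        out->data.assign(16, static_cast<uint8_t>(next_));
        ++next_;
        --remaining_;
        return kOk;
    }

private:
    int remaining_;
    int64_t next_ = 0;
};

// Consumer loop: call read() until the end of the stream.
int drain(Source *src, int64_t *lastTimeUs) {
    int frames = 0;
    Frame frame;
    while (src->read(&frame) == kOk) {
        *lastTimeUs = frame.timeUs;  // a real player would render here
        ++frames;
    }
    return frames;
}
```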

http://blog.csdn.net/myarrow/article/details/7043494

--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

Android Audio Data Flow Explained

The Android audio architecture diagram is shown below.

The detailed call paths are as follows.

1. Audio playback

Sample code:

```java
MediaPlayer mp = new MediaPlayer();
mp.setDataSource(PATH_TO_FILE);
mp.prepare();
mp.start();
```

1.1 MediaPlayer mp = new MediaPlayer()

The implementation flow is shown in the table below:

| Function | File |
| --- | --- |
| MediaPlayer::MediaPlayer | MediaPlayer.java |
| MediaPlayer::native_setup | MediaPlayer.java |
| android_media_MediaPlayer_native_setup | android_media_MediaPlayer.cpp |
| MediaPlayer::MediaPlayer | MediaPlayer.cpp |

1.2 mp.setDataSource(PATH_TO_FILE);

Implementation flow:

| Function | File |
| --- | --- |
| MediaPlayer::setDataSource | MediaPlayer.java |
| android_media_MediaPlayer_setDataSource | android_media_MediaPlayer.cpp |
| MediaPlayer::setDataSource | MediaPlayer.cpp |
| -MediaPlayer::getMediaPlayerService | MediaPlayer.cpp |
| -IMediaPlayerService | IMediaPlayerService.h |
| -IMediaPlayerService::create | MediaPlayer.cpp |
| --MediaPlayerService::create | MediaPlayerService.cpp |
| -IMediaPlayer::setDataSource | MediaPlayer.cpp |
| --BpMediaPlayer::setDataSource | IMediaPlayer.cpp |
| --MediaPlayerService::Client::setDataSource (does the actual work) | MediaPlayerService.cpp |
| --android::createPlayer | MediaPlayerService.cpp |
| --new StagefrightPlayer | MediaPlayerService.cpp |
| --new AudioOutput | MediaPlayerService.cpp |
| --StagefrightPlayer::setDataSource | StagefrightPlayer.cpp |
| --AwesomePlayer::setDataSource | AwesomePlayer.cpp |
| --AwesomePlayer::setDataSource_l | AwesomePlayer.cpp |

1.3 mp.prepare()

| Function | File |
| --- | --- |
| MediaPlayer::prepare | MediaPlayer.java |
| android_media_MediaPlayer_prepare | android_media_MediaPlayer.cpp |
| MediaPlayer::prepare | MediaPlayer.cpp |
| MidiFile::prepare | MidiFile.cpp |
| VorbisPlayer::prepare | VorbisPlayer.cpp |
| VorbisPlayer::createOutputTrack | VorbisPlayer.cpp |
| AudioOutput::open | MediaPlayerService.cpp |
| AudioTrack::AudioTrack | AudioTrack.cpp |
| AudioSystem::get_audio_flinger | AudioSystem.cpp |
| AudioFlinger::createTrack | AudioFlinger.cpp |

1.4 mp.start()

| Function | File |
| --- | --- |
| MediaPlayer::start | MediaPlayer.java |
| android_media_MediaPlayer_start | android_media_MediaPlayer.cpp |
| MediaPlayer::start | MediaPlayer.cpp |
| PVPlayer::start | PVPlayer.h |
| MidiFile::start | MidiFile.cpp |
| VorbisPlayer::start | VorbisPlayer.cpp |
| AudioTrack::start | AudioTrack.cpp |

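MediaPlayerService::MediaPlayerService (MediaPlayerService.cpp) is created when MediaPlayerService::instantiate is called. On devices this call is made from the mediaserver process (main_mediaserver.cpp); system_init() in system_init.cpp (system_server) also calls MediaPlayerService::instantiate, but only for the simulator build.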

getPlayerType (MediaPlayerService.cpp) returns one of three player types:

1) PV_PLAYER: plays MP3

2) SONIVOX_PLAYER: plays MIDI

3) VORBIS_PLAYER: plays Ogg

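In short, the playback flow is:

The call originates on the Java side. MediaPlayer forwards it to MediaPlayerService, which invokes the appropriate decoder and, after decoding, creates an AudioTrack. All AudioTracks waiting for output are mixed in AudioFlinger's AudioMixer and then passed through the audio HAL (the concrete implementation of AudioHardwareInterface) to the actual hardware for playback.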
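The mixing step can be illustrated with the basic technique of summing PCM samples and clamping the result. This is only a sketch of saturating mixing for 16-bit PCM: the real AudioMixer also handles resampling, volume ramps, and several sample formats, none of which appear here, and mixTracks with its per-track volume vector is a hypothetical helper.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

// Mixes 16-bit PCM tracks: scale each sample by its track volume, sum in a
// 32-bit accumulator, then clamp to the int16 range to avoid wrap-around.
// `volumes` must have one entry per track.
std::vector<int16_t> mixTracks(const std::vector<std::vector<int16_t>> &tracks,
                               const std::vector<float> &volumes) {
    size_t frames = 0;
    for (const auto &t : tracks) frames = std::max(frames, t.size());

    std::vector<int16_t> out(frames, 0);
    const int32_t lo = std::numeric_limits<int16_t>::min();
    const int32_t hi = std::numeric_limits<int16_t>::max();
    for (size_t i = 0; i < frames; ++i) {
        int32_t acc = 0;
        for (size_t t = 0; t < tracks.size(); ++t) {
            if (i < tracks[t].size()) {  // shorter tracks contribute silence
                acc += static_cast<int32_t>(tracks[t][i] * volumes[t]);
            }
        }
        out[i] = static_cast<int16_t>(std::min(hi, std::max(lo, acc)));
    }
    return out;
}
```

Clamping instead of letting the sum overflow turns loud passages into clipping rather than into the much harsher noise produced by integer wrap-around.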

http://blog.csdn.net/myarrow/article/details/7036955

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

AwesomePlayer Startup

Following the previous article, "AwesomePlayer Preparation", this article describes what AwesomePlayer does when Java calls mp.start().

1. AwesomePlayer::play_l

The call flow is:

StagefrightPlayer::start->

AwesomePlayer::play->

AwesomePlayer::play_l

The main code of AwesomePlayer::play_l:


```cpp
status_t AwesomePlayer::play_l() {
    modifyFlags(SEEK_PREVIEW, CLEAR);
    modifyFlags(PLAYING, SET);
    modifyFlags(FIRST_FRAME, SET);

    // Create the AudioPlayer
    if (mAudioSource != NULL) {
        if (mAudioPlayer == NULL) {
            if (mAudioSink != NULL) {
                mAudioPlayer = new AudioPlayer(mAudioSink, this);
                mAudioPlayer->setSource(mAudioSource);
                mTimeSource = mAudioPlayer;

                // If there was a seek request before we ever started,
                // honor the request now.
                // Make sure to do this before starting the audio player
                // to avoid a race condition.
                seekAudioIfNecessary_l();
            }
        }

        CHECK(!(mFlags & AUDIO_RUNNING));

        // If only audio is being played, start the AudioPlayer now
        if (mVideoSource == NULL) {
            // We don't want to post an error notification at this point,
            // the error returned from MediaPlayer::start() will suffice.
            status_t err = startAudioPlayer_l(
                    false /* sendErrorNotification */);
            if (err != OK) {
                delete mAudioPlayer;
                mAudioPlayer = NULL;
                modifyFlags((PLAYING | FIRST_FRAME), CLEAR);
                if (mDecryptHandle != NULL) {
                    mDrmManagerClient->setPlaybackStatus(
                            mDecryptHandle, Playback::STOP, 0);
                }
                return err;
            }
        }
    }

    if (mTimeSource == NULL && mAudioPlayer == NULL) {
        mTimeSource = &mSystemTimeSource;
    }

    // Start video playback
    if (mVideoSource != NULL) {
        // Kick off video playback
        postVideoEvent_l();
        if (mAudioSource != NULL && mVideoSource != NULL) {
            postVideoLagEvent_l();
        }
    }
    ...
    return OK;
}
```

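1.1 Creating the AudioPlayer

After the AudioPlayer is created, if only audio is being played, AwesomePlayer::startAudioPlayer_l is called to start audio playback. Starting audio playback mainly involves the following calls: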

AudioPlayer::start->

mSource->start

mSource->read

mAudioSink->open

mAudioSink->start

1.2 Starting video playback

AwesomePlayer::postVideoEvent_l is called to start video playback. Its code is:


```cpp
void AwesomePlayer::postVideoEvent_l(int64_t delayUs) {
    if (mVideoEventPending) {
        return;
    }
    mVideoEventPending = true;
    mQueue.postEventWithDelay(mVideoEvent, delayUs < 0 ? 10000 : delayUs);
}
```

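As discussed earlier, mQueue.postEventWithDelay posts an event to the queue, and the event's fire function is eventually executed. These events are initialized in the AwesomePlayer::AwesomePlayer constructor.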


```cpp
AwesomePlayer::AwesomePlayer()
    : mQueueStarted(false),
      mUIDValid(false),
      mTimeSource(NULL),
      mVideoRendererIsPreview(false),
      mAudioPlayer(NULL),
      mDisplayWidth(0),
      mDisplayHeight(0),
      mFlags(0),
      mExtractorFlags(0),
      mVideoBuffer(NULL),
      mDecryptHandle(NULL),
      mLastVideoTimeUs(-1),
      mTextPlayer(NULL) {
    CHECK_EQ(mClient.connect(), (status_t)OK);

    DataSource::RegisterDefaultSniffers();

    mVideoEvent = new AwesomeEvent(this, &AwesomePlayer::onVideoEvent);
    mVideoEventPending = false;
    mStreamDoneEvent = new AwesomeEvent(this, &AwesomePlayer::onStreamDone);
    mStreamDoneEventPending = false;
    mBufferingEvent = new AwesomeEvent(this, &AwesomePlayer::onBufferingUpdate);
    mBufferingEventPending = false;
    mVideoLagEvent = new AwesomeEvent(this, &AwesomePlayer::onVideoLagUpdate);
    mVideoEventPending = false;  // as quoted; mVideoLagEventPending was probably intended
    mCheckAudioStatusEvent = new AwesomeEvent(
            this, &AwesomePlayer::onCheckAudioStatus);
    mAudioStatusEventPending = false;

    reset();
}
```

So for mVideoEvent, the function ultimately executed is AwesomePlayer::onVideoEvent. One layer wraps another; let's keep digging.

1.2.1 AwesomePlayer::onVideoEvent

The relevant simplified code:


```cpp
void AwesomePlayer::postVideoEvent_l(int64_t delayUs)
{
    mQueue.postEventWithDelay(mVideoEvent, delayUs);
}

void AwesomePlayer::onVideoEvent()
{
    mVideoSource->read(&mVideoBuffer, &options);  // get the decoded YUV data
    // [Check Timestamp]: audio/video synchronization
    mVideoRenderer->render(mVideoBuffer);         // display the decoded YUV data
    postVideoEvent_l();                           // schedule display of the next frame
}
```
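The [Check Timestamp] step compares the frame's timestamp with the master clock. The sketch below shows one way to make that decision. checkTimestamp, FrameAction, and the 40 ms / 10 ms thresholds are assumptions chosen for illustration, not values taken from this article; nowUs would come from the time source, which play_l sets to the AudioPlayer when audio is present.

```cpp
#include <cassert>
#include <cstdint>

enum class FrameAction { kRender, kDrop, kWait };

struct SyncDecision {
    FrameAction action;
    int64_t delayUs;  // only meaningful for kWait
};

// Hypothetical thresholds, for illustration only.
constexpr int64_t kDropThresholdUs = 40000;   // more than 40 ms late
constexpr int64_t kEarlyThresholdUs = 10000;  // more than 10 ms early

// nowUs: current media time from the master clock (e.g. the audio player).
// frameTimeUs: presentation timestamp of the decoded video frame.
SyncDecision checkTimestamp(int64_t nowUs, int64_t frameTimeUs) {
    const int64_t latenessUs = nowUs - frameTimeUs;
    if (latenessUs > kDropThresholdUs) {
        return {FrameAction::kDrop, 0};  // too late: skip this frame
    }
    if (latenessUs < -kEarlyThresholdUs) {
        // Too early: re-post the video event for when the frame is due.
        return {FrameAction::kWait, -latenessUs};
    }
    return {FrameAction::kRender, 0};
}
```

In the loop above, kWait would map to calling postVideoEvent_l with the returned delay instead of rendering, and kDrop to releasing the buffer and reading the next frame.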

1) Call OMXCodec::read to obtain mVideoBuffer.

2) Call AwesomePlayer::initRenderer_l to initialize mVideoRenderer:


```cpp
if (USE_SURFACE_ALLOC  // hardware decoder
        && !strncmp(component, "OMX.", 4)
        && strncmp(component, "OMX.google.", 11)) {
    // Hardware decoders avoid the CPU color conversion by decoding
    // directly to ANativeBuffers, so we must use a renderer that
    // just pushes those buffers to the ANativeWindow.
    mVideoRenderer =
        new AwesomeNativeWindowRenderer(mNativeWindow, rotationDegrees);
} else {  // software decoder
    // Other decoders are instantiated locally and as a consequence
    // allocate their buffers in local address space. This renderer
    // then performs a color conversion and copy to get the data
    // into the ANativeBuffer.
    mVideoRenderer = new AwesomeLocalRenderer(mNativeWindow, meta);
}
```

3) Call AwesomePlayer::startAudioPlayer_l to start audio playback.

4) Then call postVideoEvent_l again to post mVideoEvent, so the loop keeps running.
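The renderer choice in step 2 comes down to a test on the component name. This sketch isolates that test; chooseRenderer and RendererKind are hypothetical names, and the component names used to exercise it are illustrative.

```cpp
#include <cassert>
#include <cstring>

enum class RendererKind { kNativeWindow, kLocal };

// Mirrors the condition in initRenderer_l: with USE_SURFACE_ALLOC enabled,
// an "OMX." component that is not an "OMX.google." software component is
// treated as a hardware decoder that renders straight into ANativeWindow
// buffers; everything else goes through color conversion and a copy.
RendererKind chooseRenderer(bool useSurfaceAlloc, const char *component) {
    if (useSurfaceAlloc
            && !std::strncmp(component, "OMX.", 4)
            && std::strncmp(component, "OMX.google.", 11)) {
        return RendererKind::kNativeWindow;  // like AwesomeNativeWindowRenderer
    }
    return RendererKind::kLocal;             // like AwesomeLocalRenderer
}
```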

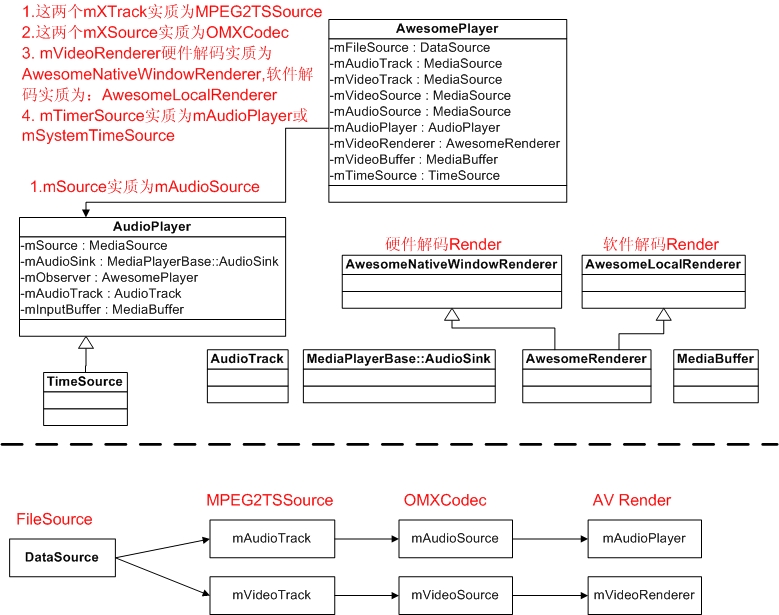

The main objects and their relationships are shown in the figure.

2. AwesomePlayer data flow

----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

AwesomePlayer Preparation

1. Preconditions

This article uses local file playback as its example, with the file's URL passed to setDataSource.

In Java, the code to play a local file is:

```java
MediaPlayer mp = new MediaPlayer();
mp.setDataSource(PATH_TO_FILE);   // (1)
mp.prepareAsync();                // (2), (3)
// Once preparation completes and OnPreparedListener is called back:
mp.start();                       // (4)
```

AwesomePlayer handles each of these calls as described below.

2. AwesomePlayer::setDataSource

To play a local file, AwesomePlayer::setDataSource tells AwesomePlayer which URL to play. AwesomePlayer simply stores this URL in mUri and mStats.mURI for use during prepare.

3. AwesomePlayer::prepareAsync or AwesomePlayer::prepare

3.1 mQueue.start();

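On the surface this starts a queue, but in fact it creates a thread whose entry function is TimedEventQueue::ThreadWrapper. The real thread function is TimedEventQueue::threadEntry, which reads events from the TimedEventQueue::mQueue list and calls event->fire to handle each one. Every event in a TimedEventQueue carries the absolute time at which it should be triggered, and its fire is executed only once that time has arrived.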

TimedEventQueue::Event::fire is a pure virtual function implemented by derived classes. For example, AwesomePlayer::prepareAsync_l creates an AwesomeEvent and posts it to mQueue with mQueue.postEvent; for this event, fire invokes AwesomePlayer::onPrepareAsyncEvent.
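The TimedEventQueue mechanism can be sketched without threads. In this hypothetical TimedQueue, events are kept in a std::multimap keyed by absolute fire time, and runUntil plays the role of threadEntry. The real queue runs on its own thread and waits on a condition until the next event is due; this sketch advances the clock by hand so it stays deterministic. MemberEvent imitates AwesomeEvent by forwarding fire to a member function of its owner.

```cpp
#include <cassert>
#include <cstdint>
#include <map>
#include <memory>
#include <utility>

// Base event: subclasses implement fire(), like TimedEventQueue::Event.
struct Event {
    virtual ~Event() = default;
    virtual void fire(int64_t nowUs) = 0;
};

// Like AwesomeEvent: forwards fire() to a member function of its owner.
template <typename T>
struct MemberEvent : Event {
    MemberEvent(T *owner, void (T::*method)()) : owner_(owner), method_(method) {}
    void fire(int64_t /*nowUs*/) override { (owner_->*method_)(); }
    T *owner_;
    void (T::*method_)();
};

// Single-threaded, manually clocked queue. Events are ordered by absolute
// fire time; events with equal times fire in posting order.
class TimedQueue {
public:
    void postEventWithDelay(std::shared_ptr<Event> e, int64_t nowUs, int64_t delayUs) {
        queue_.emplace(nowUs + delayUs, std::move(e));
    }

    // Fires every event whose time is <= nowUs, earliest first.
    void runUntil(int64_t nowUs) {
        while (!queue_.empty() && queue_.begin()->first <= nowUs) {
            auto node = queue_.begin();
            const int64_t whenUs = node->first;
            std::shared_ptr<Event> e = node->second;
            queue_.erase(node);
            e->fire(whenUs);  // fire() may post further events
        }
    }

    bool empty() const { return queue_.empty(); }

private:
    std::multimap<int64_t, std::shared_ptr<Event>> queue_;
};
```

Erasing the entry before calling fire lets an event safely re-post itself, which is exactly what onVideoEvent does through postVideoEvent_l.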

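3.2 AwesomePlayer::onPrepareAsyncEvent is executed

As described above, once an event has been posted to the queue, the queue thread reads it and executes the event's fire. The fire function of the event from 3.1 is AwesomePlayer::onPrepareAsyncEvent, whose code is: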


```cpp
void AwesomePlayer::onPrepareAsyncEvent() {
    Mutex::Autolock autoLock(mLock);
    ...
    if (mUri.size() > 0) {  // obtain mAudioTrack and mVideoTrack
        status_t err = finishSetDataSource_l();  // see 3.2.1
        ...
    }
    if (mVideoTrack != NULL && mVideoSource == NULL) {  // obtain mVideoSource
        status_t err = initVideoDecoder();  // see 3.2.2
        ...
    }
    if (mAudioTrack != NULL && mAudioSource == NULL) {  // obtain mAudioSource
        status_t err = initAudioDecoder();  // see 3.2.3
        ...
    }
    modifyFlags(PREPARING_CONNECTED, SET);
    if (isStreamingHTTP() || mRTSPController != NULL) {
        postBufferingEvent_l();
    } else {
        finishAsyncPrepare_l();
    }
}
```

3.2.1 finishSetDataSource_l


```cpp
{
    dataSource = DataSource::CreateFromURI(mUri.string(), ...);  // (3.2.1.1)
    sp<MediaExtractor> extractor =
            MediaExtractor::Create(dataSource);                    // (3.2.1.2)
    return setDataSource_l(extractor);                             // (3.2.1.3)
}
```

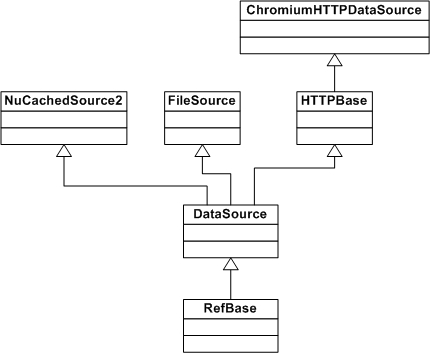

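3.2.1.1 Creating the DataSource

a. For a local file (http://, https://, and rtsp:// are handled differently), it works as follows:

```cpp
dataSource = DataSource::CreateFromURI(mUri.string(), &mUriHeaders);
```

The DataSource is created from the URL; in practice this instantiates a FileSource with new. The FileSource constructor opens the file:

```cpp
mFd = open(filename, O_LARGEFILE | O_RDONLY);
```

b. For http:// and https://, a ChromiumHTTPDataSource is created instead.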
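A FileSource-style reader needs little more than open plus positional reads. This is a sketch, not the Android class: SimpleFileSource is hypothetical, it uses POSIX pread(2) to implement readAt, and it omits the Linux-specific O_LARGEFILE flag.

```cpp
#include <cassert>
#include <cstddef>
#include <fcntl.h>
#include <sys/types.h>
#include <unistd.h>

// Minimal file-backed source in the spirit of FileSource: open the file
// once, then serve random-access reads with pread(2).
class SimpleFileSource {
public:
    explicit SimpleFileSource(const char *filename)
        : fd_(open(filename, O_RDONLY)) {}
    ~SimpleFileSource() {
        if (fd_ >= 0) close(fd_);
    }
    SimpleFileSource(const SimpleFileSource &) = delete;
    SimpleFileSource &operator=(const SimpleFileSource &) = delete;

    // True if the file was opened successfully.
    bool initCheck() const { return fd_ >= 0; }

    // Reads up to `size` bytes at `offset`; returns bytes read, or -1 on error.
    // pread does not move a shared file offset, so reads at different
    // offsets do not interfere with each other.
    ssize_t readAt(off_t offset, void *data, size_t size) const {
        if (fd_ < 0) return -1;
        return pread(fd_, data, size, offset);
    }

private:
    int fd_;
};
```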

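The inheritance relationships among these classes are shown in the figure.

3.2.1.2 Creating a MediaExtractor

MediaExtractor::Create creates the actual MediaExtractor. Take MPEG2TSExtractor, which parses TS streams, as an example. It is itself only a thin shell: it reads data through the mDataSource passed to it and creates an mParser (ATSParser) that does the real parsing. The objects created in this process, and their ownership relationships, are: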

MPEG2TSExtractor->ATSParser->ATSParser::Program->ATSParser::Stream->AnotherPacketSource

extractor = MediaExtractor::Create(dataSource); examines the file specified by source and chooses an extractor (parser) based on its header. The code is:


```cpp
sp<MediaExtractor> MediaExtractor::Create(
        const sp<DataSource> &source, const char *mime) {
    sp<AMessage> meta;
    String8 tmp;
    if (mime == NULL) {
        float confidence;
        if (!source->sniff(&tmp, &confidence, &meta)) {
            return NULL;
        }
        mime = tmp.string();
    }
    ...
    MediaExtractor *ret = NULL;
    if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_MPEG4)
            || !strcasecmp(mime, "audio/mp4")) {
        ret = new MPEG4Extractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_MPEG)) {
        ret = new MP3Extractor(source, meta);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_AMR_NB)
            || !strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_AMR_WB)) {
        ret = new AMRExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_FLAC)) {
        ret = new FLACExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_WAV)) {
        ret = new WAVExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_OGG)) {
        ret = new OggExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_MATROSKA)) {
        ret = new MatroskaExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_MPEG2TS)) {
        ret = new MPEG2TSExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_AVI)) {
        ret = new AVIExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_CONTAINER_WVM)) {
        ret = new WVMExtractor(source);
    } else if (!strcasecmp(mime, MEDIA_MIMETYPE_AUDIO_AAC_ADTS)) {
        ret = new AACExtractor(source);
    }
    if (ret != NULL) {
        if (isDrm) {
            ret->setDrmFlag(true);
        } else {
            ret->setDrmFlag(false);
        }
    }
    ...
    return ret;
}
```

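For a TS stream, it creates and returns an MPEG2TSExtractor.

When new MPEG2TSExtractor(source) executes:

1) The FileSource object passed in is saved in MPEG2TSExtractor's mDataSource member variable.

2) An ATSParser is created and saved in mParser; it is responsible for parsing the TS file.

3) In feedMore, data is read from the file through mDataSource->readAt and passed to mParser->feedTSPacket. This parses the PAT (ATSParser::parseProgramAssociationTable) to find and create the corresponding Programs, which are stored in ATSParser::mPrograms. Each Program has a unique program_number and programMapPID.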

A quick primer: the PAT contains the PIDs of all PMTs; each Program has a corresponding PMT, and the PMT contains the audio and video PIDs.
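To make the PAT/PMT relationship concrete, the sketch below parses a TS packet header and pulls the (program_number, PMT PID) pairs out of a PAT. It assumes the whole PAT fits in one 188-byte packet with no adaptation field and skips the CRC check; parseTSHeader, parsePAT, and the structs are hypothetical helpers, not ATSParser's code.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

constexpr size_t kTSPacketSize = 188;

struct TSHeader {
    bool payloadUnitStart;        // payload_unit_start_indicator
    unsigned pid;                 // 13-bit packet identifier
    unsigned adaptationControl;   // adaptation_field_control (2 bits)
    unsigned continuityCounter;   // 4 bits
};

// Parses the 4-byte header of one TS packet; false if the sync byte is wrong.
bool parseTSHeader(const uint8_t *p, TSHeader *h) {
    if (p[0] != 0x47) return false;
    h->payloadUnitStart = (p[1] & 0x40) != 0;
    h->pid = ((p[1] & 0x1f) << 8) | p[2];
    h->adaptationControl = (p[3] >> 4) & 0x3;
    h->continuityCounter = p[3] & 0x0f;
    return true;
}

struct PATEntry {
    unsigned programNumber;
    unsigned programMapPID;       // PID of this program's PMT
};

// Extracts (program_number, PMT PID) pairs from a PAT carried in one packet
// (PID 0, payload only). No CRC check: a sketch, not a full parser.
std::vector<PATEntry> parsePAT(const uint8_t *packet) {
    std::vector<PATEntry> programs;
    TSHeader h;
    if (!parseTSHeader(packet, &h) || h.pid != 0 || !h.payloadUnitStart
            || h.adaptationControl != 1) {
        return programs;
    }
    size_t offset = 4 + 1 + packet[4];     // skip TS header and pointer_field
    if (offset + 3 > kTSPacketSize) return programs;
    const uint8_t *sec = packet + offset;
    if (sec[0] != 0x00) return programs;   // table_id 0 identifies a PAT
    size_t sectionLength = ((sec[1] & 0x0f) << 8) | sec[2];
    if (sectionLength < 9 || offset + 3 + sectionLength > kTSPacketSize) {
        return programs;
    }
    // 8 bytes of section header precede the loop; a 4-byte CRC follows it.
    const uint8_t *end = sec + 3 + sectionLength - 4;
    for (const uint8_t *e = sec + 8; e + 4 <= end; e += 4) {
        unsigned number = (e[0] << 8) | e[1];
        unsigned pid = ((e[2] & 0x1f) << 8) | e[3];
        if (number != 0) {                 // program_number 0 is the network PID
            programs.push_back({number, pid});
        }
    }
    return programs;
}
```

Each programMapPID found this way names the packets that carry that program's PMT, which is where the audio and video PIDs are then read.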

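In ATSParser::Program::parseProgramMap, the PMT is parsed and a Stream is created for each of the audio and video PIDs. Each new Stream is saved in ATSParser::Program's mStreams member variable.

In the ATSParser::Stream::Stream constructor, an ElementaryStreamQueue object mQueue is created according to the media type, along with an ABuffer object mBuffer (mBuffer = new ABuffer(192 * 1024);) that holds the data.

Note: ATSParser::Stream::mSource (an AnotherPacketSource) is created through the following path: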

MediaExtractor::Create->

MPEG2TSExtractor::MPEG2TSExtractor->

MPEG2TSExtractor::init->

MPEG2TSExtractor::feedMore->

ATSParser::feedTSPacket->

ATSParser::parseTS->

ATSParser::parsePID->

ATSParser::parseProgramAssociationTable

ATSParser::Program::parsePID->

ATSParser::Program::parseProgramMap

ATSParser::Stream::parse->

ATSParser::Stream::flush->

ATSParser::Stream::parsePES->

ATSParser::Stream::onPayloadData

The source->sniff call above is implemented in DataSource::sniff. The sniff functions are registered through DataSource::RegisterSniffer; for example, the MPEG2TS sniff function is SniffMPEG2TS, whose code is:


```cpp
bool SniffMPEG2TS(
        const sp<DataSource> &source, String8 *mimeType, float *confidence,
        sp<AMessage> *) {
    for (int i = 0; i < 5; ++i) {
        char header;
        if (source->readAt(kTSPacketSize * i, &header, 1) != 1
                || header != 0x47) {
            return false;
        }
    }
    *confidence = 0.1f;
    mimeType->setTo(MEDIA_MIMETYPE_CONTAINER_MPEG2TS);
    return true;
}
```

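As you can see, these sniffers identify the file container from the content at the start of the file. For example, a WAV file is recognized by the RIFF and WAVE strings in its header. Note: when the player itself is created, the choice is based on information in the URL, not on the file's content.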
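Both container checks described above can be written over an in-memory buffer. In this sketch the MIME strings are assumed to match MEDIA_MIMETYPE_CONTAINER_MPEG2TS and MEDIA_MIMETYPE_CONTAINER_WAV, the WAV confidence value is an assumption, and sniffMPEG2TS and sniffWAV are hypothetical stand-ins that take a byte vector instead of a DataSource.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <string>
#include <vector>

constexpr size_t kTSPacketSize = 188;

// Same idea as SniffMPEG2TS: the first five 188-byte packets must all
// begin with the 0x47 sync byte. The low confidence leaves room for a
// more specific sniffer to win.
bool sniffMPEG2TS(const std::vector<uint8_t> &data, std::string *mime,
                  float *confidence) {
    for (size_t i = 0; i < 5; ++i) {
        const size_t off = kTSPacketSize * i;
        if (off >= data.size() || data[off] != 0x47) return false;
    }
    *mime = "video/mp2ts";  // assumed value of MEDIA_MIMETYPE_CONTAINER_MPEG2TS
    *confidence = 0.1f;
    return true;
}

// WAV: "RIFF" at offset 0 and "WAVE" at offset 8 of the 12-byte header.
bool sniffWAV(const std::vector<uint8_t> &data, std::string *mime,
              float *confidence) {
    if (data.size() < 12
            || std::memcmp(data.data(), "RIFF", 4) != 0
            || std::memcmp(data.data() + 8, "WAVE", 4) != 0) {
        return false;
    }
    *mime = "audio/wav";    // assumed value of MEDIA_MIMETYPE_CONTAINER_WAV
    *confidence = 0.3f;     // assumed confidence
    return true;
}
```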

3.2.1.3 AwesomePlayer::setDataSource_l(extractor)

The main logic is as follows (here the extractor is actually an MPEG2TSExtractor object):


```cpp
status_t AwesomePlayer::setDataSource_l(const sp<MediaExtractor> &extractor)
{
    for (size_t i = 0; i < extractor->countTracks(); ++i) {
        ...
        if (!haveVideo && !strncasecmp(mime, "video/", 6))
            setVideoSource(extractor->getTrack(i));
        ...
        if (!haveAudio && !strncasecmp(mime, "audio/", 6))
            setAudioSource(extractor->getTrack(i));
        ...
    }
}
```
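The track-selection loop above reduces to a scan for the first video/ and the first audio/ MIME type. selectTracks and TrackSelection are hypothetical; the sketch returns track indices instead of calling setVideoSource and setAudioSource.

```cpp
#include <cassert>
#include <cstddef>
#include <string>
#include <strings.h>  // strncasecmp (POSIX)
#include <vector>

struct TrackSelection {
    int videoIndex = -1;  // -1 means no track of that type was found
    int audioIndex = -1;
};

// Walks the tracks in order and keeps the first "video/" and the first
// "audio/" track, using the same case-insensitive prefix test as above.
TrackSelection selectTracks(const std::vector<std::string> &trackMimes) {
    TrackSelection sel;
    for (size_t i = 0; i < trackMimes.size(); ++i) {
        const char *mime = trackMimes[i].c_str();
        if (sel.videoIndex < 0 && !strncasecmp(mime, "video/", 6)) {
            sel.videoIndex = static_cast<int>(i);
        }
        if (sel.audioIndex < 0 && !strncasecmp(mime, "audio/", 6)) {
            sel.audioIndex = static_cast<int>(i);
        }
    }
    return sel;
}
```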

First, what does extractor->getTrack do?

It creates and returns an MPEG2TSSource object, constructed with the MPEG2TSExtractor and an AnotherPacketSource as arguments. AwesomePlayer then stores it in mVideoTrack or mAudioTrack.

3.2.2 initVideoDecoder

The main code:


```cpp
status_t AwesomePlayer::initVideoDecoder(uint32_t flags) {
    if (mDecryptHandle != NULL) {
        flags |= OMXCodec::kEnableGrallocUsageProtected;
    }
    mVideoSource = OMXCodec::Create(  // 3.2.2.1
            mClient.interface(), mVideoTrack->getFormat(),
            false,  // createEncoder
            mVideoTrack,
            NULL, flags, USE_SURFACE_ALLOC ? mNativeWindow : NULL);
    if (mVideoSource != NULL) {
        int64_t durationUs;
        if (mVideoTrack->getFormat()->findInt64(kKeyDuration, &durationUs)) {
            Mutex::Autolock autoLock(mMiscStateLock);
            if (mDurationUs < 0 || durationUs > mDurationUs) {
                mDurationUs = durationUs;
            }
        }
        status_t err = mVideoSource->start();  // 3.2.2.2
        if (err != OK) {
            mVideoSource.clear();
            return err;
        }
    }
    return mVideoSource != NULL ? OK : UNKNOWN_ERROR;
}
```

It does two main things: 1) creates an OMXCodec object, and 2) calls that OMXCodec's start method. Note that mClient.interface() returns an OMX object, which is obtained as follows:

AwesomePlayer::AwesomePlayer->

mClient.connect->

OMXClient::connect (obtains the OMX object and stores it in mOMX)->

BpMediaPlayerService::getOMX->

BnMediaPlayerService::onTransact(GET_OMX)->

MediaPlayerService::getOMX

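3.2.2.1 Creating the OMXCodec object

As the code above shows, the mVideoTrack argument is an MPEG2TSSource object.

1) Get the MIME type from the MPEG2TSSource's metadata.

2) Call OMXCodec::findMatchingCodecs to search kDecoderInfo for the names of codecs that can decode this MIME type, and put them in the matchingCodecs variable.

3) Create an OMXCodecObserver object.

4) Call OMX::allocateNode with the codec name and the OMXCodecObserver object as arguments. This creates an OMXNodeInstance object and stores the value returned by its makeNodeID in node (a node_id).

5) Create an OMXCodec object with node, the codec name, the MIME type, and the MPEG2TSSource object as arguments, and store this OMXCodec in OMXCodecObserver::mTarget: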


```cpp
OMXCodec::OMXCodec(
        const sp<IOMX> &omx, IOMX::node_id node,
        uint32_t quirks, uint32_t flags,
        bool isEncoder,
        const char *mime,
        const char *componentName,
        const sp<MediaSource> &source,
        const sp<ANativeWindow> &nativeWindow)
```

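6) Call OMXCodec::configureCodec with the MPEG2TSSource's MetaData to configure the codec.

3.2.2.2 Calling OMXCodec::start

1) It calls mSource->start, i.e. MPEG2TSSource::start.

2) That in turn calls Impl->start, i.e. AnotherPacketSource::start, which, disappointingly, does nothing at all: it simply returns OK.

3.2.3 initAudioDecoder

The flow is essentially the same as initVideoDecoder: an OMXCodec is created and stored in mAudioSource.

At this point AwesomePlayer's preparation is complete. Its architecture is shown in the figure.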

http://blog.csdn.net/myarrow/article/details/7067574

----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

What each Player does in Android 4.0.1

1. Android 4.0.1 defines four real Players by default, as follows:

static sp<MediaPlayerBase> createPlayer(player_type playerType, void* cookie,
        notify_callback_f notifyFunc)
{
    sp<MediaPlayerBase> p;
    switch (playerType) {
        case SONIVOX_PLAYER:
            LOGV(" create MidiFile");
            p = new MidiFile();
            break;
        case STAGEFRIGHT_PLAYER:
            LOGV(" create StagefrightPlayer");
            p = new StagefrightPlayer;
            break;
        case NU_PLAYER:
            LOGV(" create NuPlayer");
            p = new NuPlayerDriver;
            break;
        case TEST_PLAYER:
            LOGV("Create Test Player stub");
            p = new TestPlayerStub();
            break;
        default:
            LOGE("Unknown player type: %d", playerType);
            return NULL;
    }
    if (p != NULL) {
        if (p->initCheck() == NO_ERROR) {
            p->setNotifyCallback(cookie, notifyFunc);
        } else {
            p.clear();
        }
    }
    if (p == NULL) {
        LOGE("Failed to create player object");
    }
    return p;
}

2. What is each Player good at?

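| Player Type | What it handles |
|---|---|
| TEST_PLAYER | URLs that start with test:, e.g. test:xxx |
| NU_PLAYER | URLs that start with http:// or https:// and either end with .m3u8 or contain the string m3u8 |
| SONIVOX_PLAYER | Media files whose URL extension is .mid, .midi, .smf, .xmf, .imy, .rtttl, .rtx or .ota |
| STAGEFRIGHT_PLAYER | The good guy: everything the three above cannot handle is passed to it (the default returned by getDefaultPlayerType()); whether it is really that capable remains to be seen |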

The code backs this up. The function that determines the player type is:

player_type getPlayerType(const char* url)
{
    if (TestPlayerStub::canBeUsed(url)) {
        return TEST_PLAYER;
    }

    if (!strncasecmp("http://", url, 7)
            || !strncasecmp("https://", url, 8)) {
        size_t len = strlen(url);
        if (len >= 5 && !strcasecmp(".m3u8", &url[len - 5])) {
            return NU_PLAYER;
        }

        if (strstr(url,"m3u8")) {
            return NU_PLAYER;
        }
    }

    // use MidiFile for MIDI extensions
    int lenURL = strlen(url);
    for (int i = 0; i < NELEM(FILE_EXTS); ++i) {
        int len = strlen(FILE_EXTS[i].extension);
        int start = lenURL - len;
        if (start > 0) {
            if (!strncasecmp(url + start, FILE_EXTS[i].extension, len)) {
                return FILE_EXTS[i].playertype;
            }
        }
    }

    return getDefaultPlayerType();
}
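getPlayerType checks its rules in a fixed order. Test URLs come first, then HTTP(S) m3u8 playlists, then MIDI extensions, and finally the default. A portable sketch can model that order.

PlayerType, kMidiExtsSketch and getPlayerTypeSketch are hypothetical names. The sketch also simplifies three things:
- TestPlayerStub::canBeUsed is approximated as a plain "test:" prefix check.
- The two m3u8 tests are collapsed into one case-insensitive substring test.
- getDefaultPlayerType() is assumed to return the Stagefright player.

```cpp
#include <algorithm>
#include <cctype>
#include <string>

// Hypothetical mirror of the player types used by getPlayerType.
enum class PlayerType { Test, NuPlayer, Sonivox, Stagefright };

// Hypothetical copy of the MIDI extension table (FILE_EXTS).
static const char *const kMidiExtsSketch[] = {
    ".mid", ".midi", ".smf", ".xmf", ".imy", ".rtttl", ".rtx", ".ota",
};

static std::string toLower(std::string s) {
    std::transform(s.begin(), s.end(), s.begin(),
                   [](unsigned char c) { return static_cast<char>(std::tolower(c)); });
    return s;
}

static bool startsWith(const std::string &s, const std::string &prefix) {
    return s.compare(0, prefix.size(), prefix) == 0;
}

static bool endsWith(const std::string &s, const std::string &suffix) {
    return s.size() >= suffix.size() &&
           s.compare(s.size() - suffix.size(), suffix.size(), suffix) == 0;
}

PlayerType getPlayerTypeSketch(const std::string &url) {
    const std::string u = toLower(url);
    // 1. test: URLs (approximation of TestPlayerStub::canBeUsed).
    if (startsWith(u, "test:")) return PlayerType::Test;
    // 2. HTTP(S) URLs that look like an m3u8 playlist go to NuPlayer.
    if (startsWith(u, "http://") || startsWith(u, "https://")) {
        if (u.find("m3u8") != std::string::npos) return PlayerType::NuPlayer;
    }
    // 3. MIDI-family extensions go to MidiFile; like the original `start > 0`,
    //    the URL must be strictly longer than the extension.
    for (const char *ext : kMidiExtsSketch) {
        const std::string e(ext);
        if (u.size() > e.size() && endsWith(u, e)) return PlayerType::Sonivox;
    }
    // 4. Everything else falls through to the default (assumed Stagefright).
    return PlayerType::Stagefright;
}
```

Note that an http:// URL without m3u8 still reaches the extension check, so "http://host/song.mid" is routed to MidiFile, just as in the original.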

If you want to add your own player, both of the functions above need to be modified.
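Concretely, adding a player means one new branch in each function. The sketch below is a hypothetical, self-contained model:
- PlayerKind, MediaPlayerBaseSketch and MyPlayerSketch are illustrative names.
- The rule that ".ts" URLs go to MyPlayer is an illustrative assumption.
- std::unique_ptr stands in for sp<MediaPlayerBase>.

It keeps the original contract that a player which fails initCheck() is discarded.

```cpp
#include <memory>
#include <string>

// Hypothetical stand-ins for player_type and MediaPlayerBase.
enum class PlayerKind { Stagefright, MyPlayer };

struct MediaPlayerBaseSketch {
    virtual ~MediaPlayerBaseSketch() = default;
    virtual bool initCheck() const { return true; }
    virtual std::string name() const = 0;
};

struct StagefrightPlayerSketch : MediaPlayerBaseSketch {
    std::string name() const override { return "StagefrightPlayer"; }
};

// Your own player, e.g. one driving a hardware demuxer/decoder (hypothetical).
struct MyPlayerSketch : MediaPlayerBaseSketch {
    std::string name() const override { return "MyPlayer"; }
};

// Change 1: the type lookup learns which URLs MyPlayer should handle.
// Hypothetical rule: anything ending in ".ts" goes to MyPlayer.
PlayerKind getPlayerKindSketch(const std::string &url) {
    const std::string ext = ".ts";
    if (url.size() > ext.size() &&
        url.compare(url.size() - ext.size(), ext.size(), ext) == 0) {
        return PlayerKind::MyPlayer;
    }
    return PlayerKind::Stagefright;  // default, as in the original
}

// Change 2: the factory gets a new case for the new type.
std::unique_ptr<MediaPlayerBaseSketch> createPlayerSketch(PlayerKind kind) {
    std::unique_ptr<MediaPlayerBaseSketch> p;
    switch (kind) {
        case PlayerKind::Stagefright:
            p.reset(new StagefrightPlayerSketch);
            break;
        case PlayerKind::MyPlayer:
            p.reset(new MyPlayerSketch);
            break;
    }
    // Same contract as createPlayer: drop players that fail initCheck().
    if (p && !p->initCheck()) {
        p.reset();
    }
    return p;
}
```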

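Now that we have a fairly clear picture of the whole media framework, a question comes to mind: how exactly would you add your own hardware decoder to StagefrightPlayer? I'm curious and want to get to the bottom of it...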

http://blog.csdn.net/myarrow/article/details/7055786

-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

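The triangular relationship among MediaPlayer, MediaPlayerService and MediaPlayerService::Client

1. MediaPlayer is the client.

2. MediaPlayerService and MediaPlayerService::Client are on the server side.

2.1 MediaPlayerService implements the business logic defined by IMediaPlayerService. Its main job is to call create with the URL passed to MediaPlayer::setDataSource, which creates the corresponding Player.

2.2 MediaPlayerService::Client implements the business logic defined by IMediaPlayer, mainly start, stop, pause, resume and so on. It implements each of them by calling the corresponding method of the Player created through MediaPlayerService.

2.3 Their "love triangle" is shown in the figure below: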

3. What is IXXX?

Every BnXXX and every BpXXX derives from two classes:

class BpXXX : public IXXX, public BpRefBase

class BnXXX : public IXXX, public BBinder

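3.1 As you can see, both BpXXX and BnXXX derive from IXXX. So what does IXXX do? Put plainly, it defines the business logic, but BpXXX and BnXXX implement it differently:

1) In BpXXX, the corresponding binder_transaction_data is packed and sent out through mRemote (a BpBinder) held in BpRefBase, and the call then waits for the result.

2) In BnXXX, the business logic is carried out by calling methods of BnXXX's derived class, such as MediaPlayerService::Client.

In short, IXXX defines the business logic.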
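The proxy/stub split can be illustrated without Binder at all. In the sketch below:
- IAdder plays the role of IXXX, BpAdder of BpXXX and BnAdder of BnXXX.
- Adder plays the role of a concrete service such as MediaPlayerService::Client.
- FakeParcel and the Transport callback are hypothetical stand-ins for Parcel and for BpBinder::transact / BBinder::onTransact.

Everything runs in one process, so no real IPC happens; only the shape of the pattern is shown.

```cpp
#include <cstddef>
#include <cstdint>
#include <functional>
#include <memory>
#include <utility>
#include <vector>

// Hypothetical stand-in for Parcel: a flat list of 32-bit values read in order.
struct FakeParcel {
    std::vector<std::int32_t> data;
    std::size_t pos = 0;
    void writeInt32(std::int32_t v) { data.push_back(v); }
    std::int32_t readInt32() { return data.at(pos++); }
};

enum : std::uint32_t { ADD = 1 };  // transaction code (hypothetical)

// IXXX: the business-logic interface shared by proxy and stub.
struct IAdder {
    virtual ~IAdder() = default;
    virtual std::int32_t add(std::int32_t a, std::int32_t b) = 0;
};

// BnXXX: unpacks a request and calls the real implementation in a subclass.
struct BnAdder : IAdder {
    int onTransact(std::uint32_t code, FakeParcel &data, FakeParcel *reply) {
        switch (code) {
            case ADD: {
                std::int32_t a = data.readInt32();
                std::int32_t b = data.readInt32();
                reply->writeInt32(add(a, b));  // virtual call into the concrete service
                return 0;
            }
            default:
                return -1;  // unknown transaction code
        }
    }
};

// The concrete service, analogous to MediaPlayerService::Client.
struct Adder : BnAdder {
    std::int32_t add(std::int32_t a, std::int32_t b) override { return a + b; }
};

// Stand-in for mRemote (BpBinder): here it simply hands the parcel to the stub.
using Transport = std::function<int(std::uint32_t, FakeParcel &, FakeParcel *)>;

// BpXXX: packs arguments, sends them through the transport, waits for the reply.
struct BpAdder : IAdder {
    explicit BpAdder(Transport remote) : mRemote(std::move(remote)) {}
    std::int32_t add(std::int32_t a, std::int32_t b) override {
        FakeParcel data, reply;
        data.writeInt32(a);
        data.writeInt32(b);
        mRemote(ADD, data, &reply);
        return reply.readInt32();
    }

private:
    Transport mRemote;
};
```

The caller of BpAdder::add cannot tell that the call went through a transport. That is the same property that lets MediaPlayer use a BpMediaPlayer as if it were the Client itself.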

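3.2 And what does the other base class do?

As the figure above shows, it handles inter-process communication.

BpRefBase holds mRemote (a BpBinder), which is used to interact with the Binder driver.

BBinder receives requests from the Binder driver and processes them.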

4. MediaPlayerService, which deserves a closer look

class MediaPlayerService : public BnMediaPlayerService

It implements the business logic defined in IMediaPlayerService. So what exactly does IMediaPlayerService define?

class IMediaPlayerService: public IInterface
{
public:
    DECLARE_META_INTERFACE(MediaPlayerService);

    virtual sp<IMediaRecorder> createMediaRecorder(pid_t pid) = 0;
    virtual sp<IMediaMetadataRetriever> createMetadataRetriever(pid_t pid) = 0;
    virtual sp<IMediaPlayer> create(pid_t pid, const sp<IMediaPlayerClient>& client, const char* url, const KeyedVector<String8, String8> *headers = NULL) = 0;
    virtual sp<IMediaPlayer> create(pid_t pid, const sp<IMediaPlayerClient>& client, int fd, int64_t offset, int64_t length) = 0;
    virtual sp<IMediaConfig> createMediaConfig(pid_t pid ) = 0;
    virtual sp<IMemory> decode(const char* url, uint32_t *pSampleRate, int* pNumChannels, int* pFormat) = 0;
    virtual sp<IMemory> decode(int fd, int64_t offset, int64_t length, uint32_t *pSampleRate, int* pNumChannels, int* pFormat) = 0;
    virtual sp<IOMX> getOMX() = 0;

    // Take a peek at currently playing audio, for visualization purposes.
    // This returns a buffer of 16 bit mono PCM data, or NULL if no visualization buffer is currently available.
    virtual sp<IMemory> snoop() = 0;
};

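As you can see, it calls itself MediaPlayerService and has even registered with ServiceManager, the "chief steward" of the system. Yet it offers little more than create and decode, so what can it actually do? Where are the familiar start, stop, pause, resume...? Without them, how are the functions MediaPlayer needs implemented?

With these questions in mind, let's first look at how MediaPlayerService implements the functions above, and what it really does.

Let the code speak for itself: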

sp<IMediaPlayer> MediaPlayerService::create(
        pid_t pid, const sp<IMediaPlayerClient>& client, const char* url,
        const KeyedVector<String8, String8> *headers)
{
    int32_t connId = android_atomic_inc(&mNextConnId);
    sp<Client> c = new Client(this, pid, connId, client);
    LOGV("Create new client(%d) from pid %d, url=%s, connId=%d", connId, pid, url, connId);
    if (NO_ERROR != c->setDataSource(url, headers))
    {
        c.clear();
        return c;
    }
    wp<Client> w = c;
    Mutex::Autolock lock(mLock);
    mClients.add(w);
    return c;
}
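The shape of MediaPlayerService::create can be modeled with standard C++. It does four things in order:
1. Take a connection id atomically.
2. Build a Client.
3. Give up if setDataSource fails.
4. Otherwise, remember the Client only weakly, under a lock.

ServiceSketch, ClientSketch and the rule that an empty URL fails setDataSource are hypothetical. The standard types stand in for Android ones: std::atomic::fetch_add for android_atomic_inc (both return the previous value), std::shared_ptr for sp<Client>, and std::weak_ptr for wp<Client>.

```cpp
#include <atomic>
#include <cstddef>
#include <memory>
#include <mutex>
#include <string>
#include <vector>

struct ClientSketch {
    ClientSketch(int pid, int connId) : mPid(pid), mConnId(connId) {}
    // Hypothetical rule: an empty URL is an unusable data source.
    bool setDataSource(const std::string &url) { return !url.empty(); }
    int mPid;
    int mConnId;
};

class ServiceSketch {
public:
    std::shared_ptr<ClientSketch> create(int pid, const std::string &url) {
        // fetch_add returns the old value, like android_atomic_inc; the id is
        // consumed even if setDataSource fails below.
        int connId = mNextConnId.fetch_add(1);
        auto c = std::make_shared<ClientSketch>(pid, connId);
        if (!c->setDataSource(url)) {
            return nullptr;  // corresponds to c.clear(); return c;
        }
        std::lock_guard<std::mutex> lock(mLock);
        mClients.push_back(c);  // weak reference: the service does not keep the client alive
        return c;
    }

    // Counts registered clients that are still held by someone.
    std::size_t liveClients() {
        std::lock_guard<std::mutex> lock(mLock);
        std::size_t n = 0;
        for (const auto &w : mClients) {
            if (!w.expired()) ++n;
        }
        return n;
    }

private:
    std::atomic<int> mNextConnId{1};
    std::mutex mLock;
    std::vector<std::weak_ptr<ClientSketch>> mClients;
};
```

Keeping only weak references means a Client goes away as soon as the caller releases it, while the service can still enumerate the live ones.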

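So that's how it works: it is responsible for creating what MediaPlayer needs, namely a Client (MediaPlayerService::Client). MediaPlayerService::Client is declared as follows:

class Client : public BnMediaPlayer. It implements the business logic defined by IMediaPlayer, so let's look at what IMediaPlayer defines: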

class IMediaPlayer: public IInterface
{
public:
    DECLARE_META_INTERFACE(MediaPlayer);

    virtual void disconnect() = 0;
    virtual status_t setVideoSurface(const sp<ISurface>& surface) = 0;
    virtual status_t setSurfaceXY(int x,int y) = 0; /*add by h00186041 add interface for surface left-up position*/
    virtual status_t setSurfaceWH(int w,int h) = 0; /*add by h00186041 add interface for surface size*/
    virtual status_t prepareAsync() = 0;
    virtual status_t start() = 0;
    virtual status_t stop() = 0;
    virtual status_t pause() = 0;
    virtual status_t isPlaying(bool* state) = 0;
    virtual status_t seekTo(int msec) = 0;
    virtual status_t getCurrentPosition(int* msec) = 0;
    virtual status_t getDuration(int* msec) = 0;
    virtual status_t reset() = 0;
    virtual status_t setAudioStreamType(int type) = 0;
    virtual status_t setLooping(int loop) = 0;
    virtual status_t setVolume(float leftVolume, float rightVolume) = 0;
    virtual status_t suspend() = 0;
    virtual status_t resume() = 0;

    // Invoke a generic method on the player by using opaque parcels
    // for the request and reply.
    // @param request Parcel that must start with the media player
    // interface token.
    // @param[out] reply Parcel to hold the reply data. Cannot be null.
    // @return OK if the invocation was made successfully.
    virtual status_t invoke(const Parcel& request, Parcel *reply) = 0;

    // Set a new metadata filter.
    // @param filter A set of allow and drop rules serialized in a Parcel.
    // @return OK if the invocation was made successfully.
    virtual status_t setMetadataFilter(const Parcel& filter) = 0;

    // Retrieve a set of metadata.
    // @param update_only Include only the metadata that have changed
    // since the last invocation of getMetadata.
    // The set is built using the unfiltered
    // notifications the native player sent to the
    // MediaPlayerService during that period of
    // time. If false, all the metadatas are considered.
    // @param apply_filter If true, once the metadata set has been built based
    // on the value update_only, the current filter is
    // applied.
    // @param[out] metadata On exit contains a set (possibly empty) of metadata.
    // Valid only if the call returned OK.
    // @return OK if the invocation was made successfully.
    virtual status_t getMetadata(bool update_only,
                                 bool apply_filter,
                                 Parcel *metadata) = 0;
};

Here at last are the long-missed start, stop, pause, resume...

So by the time MediaPlayer::setDataSource returns, a BpMediaPlayer corresponding to the MediaPlayerService::Client has been created. MediaPlayer uses it to access the Client's functions.

4.1 How does MediaPlayer find MediaPlayerService::Client? Only MediaPlayerService is registered with ServiceManager. So MediaPlayer must first obtain a BpMediaPlayerService, then use its management method create to have a MediaPlayerService::Client created.
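The lookup in 4.1 has three steps:
1. Find the only registered service by name.
2. Ask it to create a per-session Client.
3. Drive playback through that Client, which forwards each call to its mPlayer.

The sketch below runs all of this in one process. The registry map, the use of "media.player" as its key, and all *Sketch types are assumptions made for illustration. In real code the lookup goes through defaultServiceManager()->getService(...) and interface_cast, and every call crosses Binder.

```cpp
#include <map>
#include <memory>
#include <string>

// The real player created by createPlayer (StagefrightPlayer, MidiFile, ...).
struct PlayerSketch {
    bool playing = false;
    int start() { playing = true; return 0; }
    int pause() { playing = false; return 0; }
};

// Like MediaPlayerService::Client: each call is forwarded to mPlayer.
struct ClientSketch {
    std::shared_ptr<PlayerSketch> mPlayer;
    int start() { return mPlayer ? mPlayer->start() : -1; }  // -1 plays the role of UNKNOWN_ERROR
    int pause() { return mPlayer ? mPlayer->pause() : -1; }
};

// Like MediaPlayerService: essentially a factory for Clients.
struct MediaServiceSketch {
    std::shared_ptr<ClientSketch> create(const std::string &url) {
        if (url.empty()) return nullptr;  // hypothetical setDataSource failure
        auto c = std::make_shared<ClientSketch>();
        c->mPlayer = std::make_shared<PlayerSketch>();  // stands in for createPlayer
        return c;
    }
};

// Stand-in for ServiceManager: service name -> registered service.
using RegistrySketch = std::map<std::string, std::shared_ptr<MediaServiceSketch>>;

// Client-side flow: MediaPlayer::setDataSource followed by start().
std::shared_ptr<ClientSketch> openAndStart(const RegistrySketch &sm, const std::string &url) {
    auto it = sm.find("media.player");          // 1. look up the registered service
    if (it == sm.end()) return nullptr;
    auto client = it->second->create(url);      // 2. ask it for a per-session Client
    if (!client) return nullptr;
    if (client->start() != 0) return nullptr;   // 3. Client forwards start() to mPlayer
    return client;
}
```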

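4.2 Why not define a MediaPlayer service and register it with ServiceManager directly?

Perhaps to keep the system simple. MediaPlayerService provides more than Client: it also contains AudioOutput, AudioCache and MediaConfigClient. So MediaPlayerService is the front door of the media service. It receives the guest first, then assigns different staff according to the guest's needs. It's that simple.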

http://blog.csdn.net/myarrow/article/details/7054936

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

The real Players in Android

1. The earlier articles explained the path from Java to MediaPlayer, across Binder, to MediaPlayerService::Client. The chain inside MediaPlayerService::Client runs as follows: MediaPlayerService::create -> new Client, then MediaPlayerService::Client::setDataSource -> getPlayerType/createPlayer -> android::createPlayer. After android::createPlayer is called and the newly created real Player is saved in the Client's mPlayer member, the story stops there.

2. What does a real Player actually look like?

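As far as I know, there are PVPlayer, StagefrightPlayer, MidiFile and VorbisPlayer. Note, however, that the Android 4.0.1 createPlayer quoted earlier only creates MidiFile, StagefrightPlayer, NuPlayerDriver and TestPlayerStub. Each Player handles different media types, and the work is divided according to the return value of getPlayerType(const char* url). Each Player has its own specialty and does what it is good at. For the exact division of labor, see the function sp<MediaPlayerBase> createPlayer(player_type playerType, void* cookie, notify_callback_f notifyFunc).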

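3. What if you want hardware demuxing and hardware decoding on your own chip (for example, for TS streams in a set-top box)?

As far as I know, there are two approaches:

1) Implement every function of the MediaPlayerInterface interface in your own XXPlayer, and add it to android::createPlayer. Each function can be implemented the same way you previously did in a plain Linux environment. That makes it simple, and your earlier work can be reused.

2) Write Extractor and Decoder plug-ins for an existing Player (such as StagefrightPlayer).

To implement the second approach, you first need to understand StagefrightPlayer's architecture. Why learn both approaches? Because which one you take depends on your chief architect, CTO and the like.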
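For approach 1), most of the work is filling in a player class and enforcing sensible state transitions around your existing driver calls.

The sketch below uses a hypothetical, heavily trimmed interface (PlayerInterfaceSketch), not the real MediaPlayerInterface/MediaPlayerBase. The real interface has many more methods, such as surface handling, invoke, metadata and the notify callback. HwTsPlayer and its hw* helpers are placeholders for calls into your Linux demux/decoder code. The Status values are simplified stand-ins for status_t codes.

```cpp
#include <string>

// Simplified stand-ins for status_t values (hypothetical).
enum class Status { Ok, InvalidOperation, NoInit };

// Hypothetical, trimmed-down player interface.
class PlayerInterfaceSketch {
public:
    virtual ~PlayerInterfaceSketch() = default;
    virtual Status setDataSource(const std::string &url) = 0;
    virtual Status prepare() = 0;
    virtual Status start() = 0;
    virtual Status pause() = 0;
    virtual Status stop() = 0;
    virtual bool isPlaying() const = 0;
};

// A player backed by (hypothetical) hardware TS demux + decoder drivers.
class HwTsPlayer : public PlayerInterfaceSketch {
public:
    Status setDataSource(const std::string &url) override {
        if (mState != State::Idle || url.empty()) return Status::InvalidOperation;
        mUrl = url;
        mState = State::Initialized;
        return Status::Ok;
    }
    Status prepare() override {
        if (mState != State::Initialized && mState != State::Stopped) return Status::InvalidOperation;
        if (!hwOpenDemuxAndDecoder(mUrl)) return Status::NoInit;
        mState = State::Prepared;
        return Status::Ok;
    }
    Status start() override {
        if (mState != State::Prepared && mState != State::Paused) return Status::InvalidOperation;
        hwRun(true);
        mState = State::Started;
        return Status::Ok;
    }
    Status pause() override {
        if (mState != State::Started) return Status::InvalidOperation;
        hwRun(false);
        mState = State::Paused;
        return Status::Ok;
    }
    Status stop() override {
        if (mState == State::Idle || mState == State::Initialized) return Status::InvalidOperation;
        hwRun(false);
        mState = State::Stopped;
        return Status::Ok;
    }
    bool isPlaying() const override { return mState == State::Started; }
    bool hwRunning() const { return mHwRunning; }

private:
    enum class State { Idle, Initialized, Prepared, Started, Paused, Stopped };

    // Placeholders for existing Linux driver code (hypothetical behavior:
    // only ".ts" sources can be opened by the hardware demuxer).
    bool hwOpenDemuxAndDecoder(const std::string &url) { return url.find(".ts") != std::string::npos; }
    void hwRun(bool running) { mHwRunning = running; }

    State mState = State::Idle;
    std::string mUrl;
    bool mHwRunning = false;
};
```

Such a class would then be wired into createPlayer and getPlayerType, which is the "add it to android::createPlayer" step of approach 1).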

http://blog.csdn.net/myarrow/article/details/7054631

------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------

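Android media player framework: diagram

1. AV playback on the platform I use has always been unstable. To find out why, I had to get a real understanding of how MediaPlayer works.

2. The native part of the media player has two interfaces. Toward the upper layer it exposes MediaPlayer; toward the lower layer it exposes the player's hardware abstraction layer. StagefrightPlayer is one implementation of that layer.

You can also implement your own MyPlayer on top of your hardware drivers and add it to createPlayer in MediaPlayerService.cpp. You then also need to modify getPlayerType in the same file to specify which media types MyPlayer handles.

createPlayer creates the player that matches the URL's player_type and stores it in the mPlayer member of MediaPlayerService::Client. From there it is called when BnMediaPlayer::onTransact dispatches requests.

3. The media player framework is shown in the figure below: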

http://blog.csdn.net/myarrow/article/details/7053622

-------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------
