The formula for assigning class labels:
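The labeling formula itself is not reproduced in this text. Going by the statement later in the post that we predict 1 whenever θᵀx ≥ 0, a plausible reading is the rule below; the notation h_θ(x) is an assumption introduced here for illustration.

$$
h_\theta(x) =
\begin{cases}
1 & \text{if } \theta^{T}x \ge 0 \\
0 & \text{otherwise}
\end{cases}
$$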

The design idea behind the loss function:

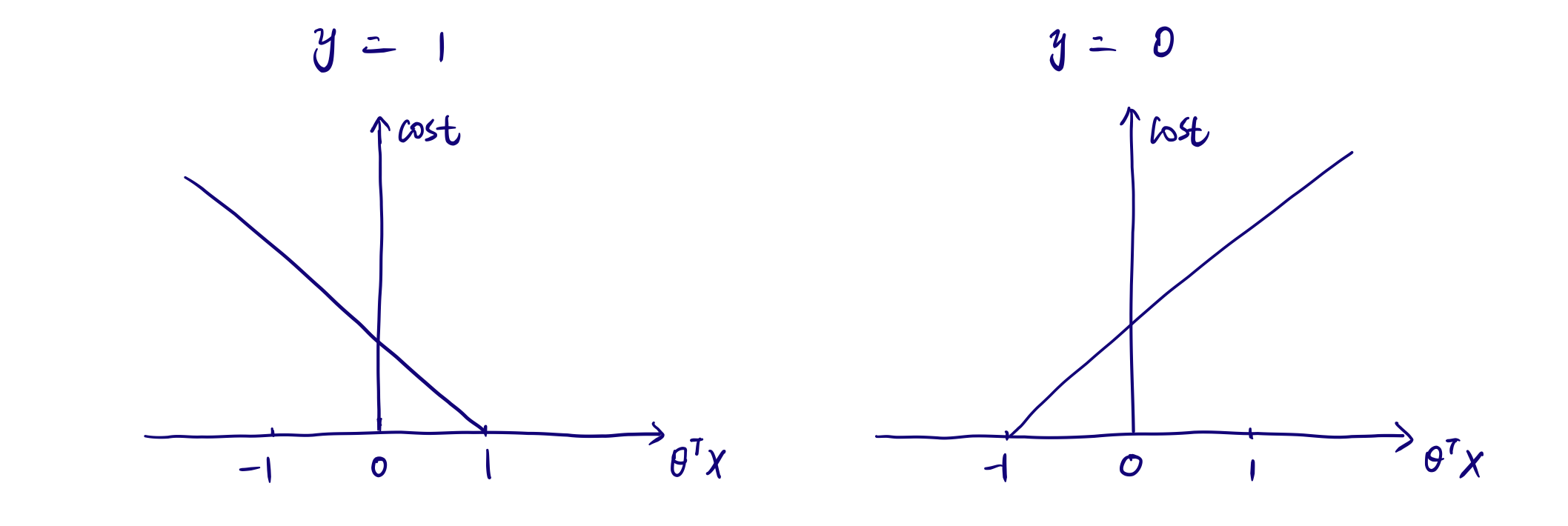

Hinge loss: when the actual label is 1 (left plot), there is no cost at all if θᵀx ≥ 1, and if θᵀx < 1 the cost increases as θᵀx decreases. Wait! When θᵀx ≥ 0 we already predict 1, which is the correct prediction, so why does the cost start to increase from 1 instead of 0? Because SVM penalizes not only incorrect predictions but also correct ones that lie close to the decision boundary (0 < θᵀx < 1); these points are what we call support vectors. Data points exactly on the margin have θᵀx = 1, and data points between the decision boundary and the margin have 0 < θᵀx < 1. I will explain later why some data points end up inside the margin. As for why removing non-support vectors won't affect model performance, we can answer that now. Remember that model fitting is the process of minimizing the cost function. Since non-support vectors incur no cost at all, adding or removing them does not change the total value of the cost function.
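The argument above can be checked numerically with a minimal sketch. The function names and sample scores are made up for illustration, and the cost for label 0 is assumed to be the mirror image of the label-1 case (zero once θᵀx ≤ -1), which the text does not spell out.

```python
def hinge_cost_pos(z):
    """Cost when the actual label is 1; z stands for the raw score theta^T x."""
    return max(0.0, 1.0 - z)


def hinge_cost_neg(z):
    """Cost when the actual label is 0 (assumed mirror image of the label-1 case)."""
    return max(0.0, 1.0 + z)


def sample_cost(z, y):
    """Pick the cost branch that matches the actual label."""
    return hinge_cost_pos(z) if y == 1 else hinge_cost_neg(z)


def total_cost(samples):
    """Sum of per-sample hinge costs (regularization term left out)."""
    return sum(sample_cost(z, y) for z, y in samples)


# Hypothetical (score, label) pairs: some well outside the margin,
# some inside the margin, one misclassified.
samples = [(2.5, 1), (0.4, 1), (-0.3, 1), (-3.0, 0), (-0.2, 0)]

# Keep only the points that actually incur a cost.
costly = [(z, y) for z, y in samples if sample_cost(z, y) > 0.0]

full = total_cost(samples)
reduced = total_cost(costly)

print("cost with all points:      ", full)
print("cost without zero-cost pts:", reduced)
```

Dropping the zero-cost points leaves the data term of the cost unchanged, which is the reason removing them does not move the minimizer.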

Plotting SVM's cost function after this design gives:

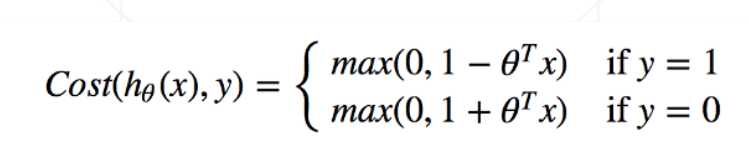

SVM's cost function finally condenses into the following formula:
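The formula itself is missing from this text. A commonly used form that matches the hinge-loss description above is sketched below. The symbols C (penalty weight), m (number of samples), n (number of features), cost₁ and cost₀ are assumptions and may differ from the notation in the original figure.

$$
J(\theta) = C \sum_{i=1}^{m} \left[ y^{(i)} \,\mathrm{cost}_1\!\left(\theta^{T}x^{(i)}\right) + \left(1 - y^{(i)}\right) \mathrm{cost}_0\!\left(\theta^{T}x^{(i)}\right) \right] + \frac{1}{2} \sum_{j=1}^{n} \theta_j^{2}
$$

$$
\mathrm{cost}_1(z) = \max(0,\ 1 - z), \qquad \mathrm{cost}_0(z) = \max(0,\ 1 + z)
$$

Here cost₁ is the hinge loss for actual label 1 described earlier, and cost₀ is its mirror image for actual label 0.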
