博文地址:http://blog.csdn.net/shuzfan/article/details/51460895

Loss Function

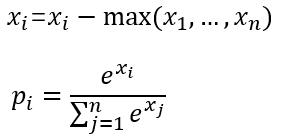

softmax_loss的计算包含2步:

(1)计算softmax归一化概率

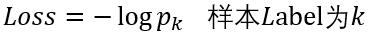

(2)计算损失

这里以batchsize=1的2分类为例:

设最后一层的输出为[1.2 0.8],减去最大值后为[0 -0.4],

然后计算归一化概率得到[0.5987 0.4013],

假如该图片的label为1,则Loss=-log0.4013=0.9130

可选参数

(1) ignore_label

int型变量,默认为空。

如果指定值,则label等于ignore_label的样本将不参与Loss计算,并且反向传播时梯度直接置0.

(2) normalize

bool型变量,即Loss会除以参与计算的样本总数;否则Loss等于直接求和

(3) normalization

enum型变量,默认为VALID,具体代表情况如下面的代码。

enum NormalizationMode {

// Divide by the number of examples in the batch times spatial dimensions.

// Outputs that receive the ignore label will NOT be ignored in computing the normalization factor.

FULL = 0;

// Divide by the total number of output locations that do not take the

// ignore_label. If ignore_label is not set, this behaves like FULL.

VALID = 1;

// Divide by the batch size.

BATCH_SIZE = 2;

//

NONE = 3;

}归一化case的判断:

(1) 如果未设置normalization,但是设置了normalize。

则有normalize==1 -> 归一化方式为VALID

normalize==0 -> 归一化方式为BATCH_SIZE

(2) 一旦设置normalization,归一化方式则由normalization决定,不再考虑normalize。

使用方法

layer {

name: "loss"

type: "SoftmaxWithLoss"

bottom: "fc1"

bottom: "label"

top: "loss"

top: "prob"

loss_param{

ignore_label:0

normalize: 1

normalization: FULL

}

}

1023

1023

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?