Preface

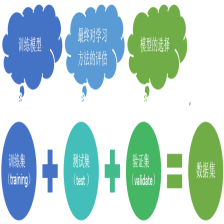

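I think Naive Bayes is probably the best-known classification algorithm, because it is fairly easy to understand: its foundation is just conditional probability (Bayes' theorem), plus one extra assumption that the features are conditionally independent given the class. Under that assumption the posterior is proportional to a product of per-feature conditional probabilities, so a single feature value that never appears with a class in the training data drives the whole product to 0. Smoothing, for example Laplace smoothing, is used to avoid this error.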
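To make the zero-probability problem concrete, here is a minimal sketch in plain Python. The toy corpus, the class names, and the helpers `word_likelihood` and `class_score` are made up for illustration and are not part of the original post. With `alpha=0` (no smoothing) one unseen word wipes out the score of a class; with `alpha=1` (Laplace smoothing) every word keeps a small non-zero probability.

```python
from collections import Counter

# Hypothetical toy corpus: tokenized documents grouped by class label.
docs = {
    "pos": [["good", "movie"], ["great", "good", "plot"]],
    "neg": [["bad", "movie"], ["boring", "bad", "plot"]],
}

# Vocabulary over all classes (6 distinct words in this toy corpus).
vocab = sorted({w for group in docs.values() for d in group for w in d})


def word_likelihood(word, label, alpha):
    """Estimate P(word | label) with add-alpha smoothing (alpha=0 disables it)."""
    counts = Counter(w for d in docs[label] for w in d)
    total = sum(counts.values())
    return (counts[word] + alpha) / (total + alpha * len(vocab))


def class_score(words, label, alpha):
    """Unnormalized posterior: P(label) times the product of P(word | label)."""
    n_docs = sum(len(group) for group in docs.values())
    score = len(docs[label]) / n_docs  # class prior
    for w in words:
        score *= word_likelihood(w, label, alpha)
    return score


query = ["good", "boring", "movie"]
unsmoothed = {c: class_score(query, c, alpha=0) for c in docs}
smoothed = {c: class_score(query, c, alpha=1) for c in docs}
print(unsmoothed)  # both classes score 0: each one has never seen one of the words
print(smoothed)    # both scores are positive, so the classes can be compared
```

In this sketch the smoothed denominator adds `alpha` times the vocabulary size, which is the usual form of add-one smoothing for word counts.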

```python
# -*- coding: utf-8 -*-
# Approach 1: use the Naive Bayes implementation in sklearn
import numpy as np
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.metrics import classification_report, precision_recall_curve
from sklearn.model_selection import train_test_split
from sklearn.naive_bayes import MultinomialNB

# Load the data (np.load needs allow_pickle=True if the arrays were saved with dtype=object)
movie_data = np.load('move_data.npy')
movie_target = np.load('move_target.npy')
x = movie_data
y = movie_target

# TF-IDF weighted vector space model (binary=False keeps term frequencies instead of 0/1 flags).
# Note: the test samples go through transform, not fit_transform.
count_vec = TfidfVectorizer(binary=False, decode_error='ignore', stop_words='english')

# Split the data set: 80% for training, 20% for testing
x_train, x_test, y_train, y_test = train_test_split(x, y, test_size=0.2)
x_train = count_vec.fit_transform(x_train)
x_test = count_vec.transform(x_test)
# print(x_train[0])

# Train the MultinomialNB classifier
clf = MultinomialNB().fit(x_train, y_train)
doc_class_predict = clf.predict(x_test)
# print(doc_class_predict)
# print(y_test)
print(np.mean(doc_class_predict == y_test))  # accuracy on the test set

# Precision and recall
answer = clf.predict_proba(x_test)[:, 1]  # predicted probability of the second class
# The curve needs scores (probabilities), not hard 0/1 predictions
precision, recall, thresholds = precision_recall_curve(y_test, answer)
# print(answer)
report = (answer > 0.5).astype(int)  # threshold the probabilities at 0.5
# print(report)
print(classification_report(y_test, report, target_names=['neg', 'pos']))
```
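The precision and recall reported above depend on the 0.5 threshold applied to the predicted probabilities. As a rough illustration of what those two numbers mean, the sketch below computes them by hand in plain Python. The labels, the scores, and the helper `precision_recall` are hypothetical and only stand in for `y_test` and `answer`; returning 0.0 when a denominator is zero is a convention chosen for this sketch.

```python
def precision_recall(y_true, y_pred, positive=1):
    """Return (precision, recall) for the given positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Hypothetical true labels and predicted probabilities of the positive class.
y_true = [1, 0, 1, 1, 0, 0]
scores = [0.9, 0.6, 0.4, 0.8, 0.2, 0.1]

for threshold in (0.3, 0.5, 0.7):
    y_pred = [int(s > threshold) for s in scores]
    p, r = precision_recall(y_true, y_pred)
    print(f"threshold={threshold}: precision={p:.2f}, recall={r:.2f}")
```

On this toy data, raising the threshold trades recall for precision, which is the trade-off a precision-recall curve traces over all thresholds.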

```python
'''
Naive Bayes implemented in Python, applied to
classifying text into two classes: positive and negative
'''
import numpy as np


class NaiveBayesClassifier(object):
    def __init__(self):
        self.dataMat = list()
        self.labelMat = list()
        self.pLabel1 = 0
        self.p0Vec = list()
        self.p1Vec = list()

    def loadDataSet(self, filename):
        with open(filename) as fr:
            for line in fr:
                dataLine = line.strip().split()
                if not dataLine:  # skip blank lines
                    continue
                label = dataLine.pop()  # the last column is the class label
                self.dataMat.append(dataLine)
                self.labelMat.append(int(label))

    def createVocablist(self, dataSet):  # build the vocabulary
        vocabSet = []
        for docs in dataSet:
            for d in docs:
                if d not in vocabSet:
                    vocabSet.append(d)
        # print(vocabSet)
        return vocabSet

    def bagOfWords2VecMN(self, vocablist, inputSet):
        # Bag-of-words model: each entry is the number of times the word occurs
        returnVec = [0] * len(vocablist)
        for word in inputSet:
            if word in vocablist:
                returnVec[vocablist.index(word)] += 1
        # print(returnVec)
        return returnVec

    def train(self, trainMat):
        dataNum = len(trainMat)
        featureNum = len(trainMat[0])
        self.pLabel1 = np.sum(self.labelMat) / float(dataNum)
        # Initialize every word count to 1 (Laplace smoothing) and the denominators to 2,
        # so that no probability is ever 0.
        # Note: standard add-one smoothing for word counts would add featureNum
        # (the vocabulary size) to the denominator; 2.0 is a simplification.
        p0Num = np.ones(featureNum)
        p1Num = np.ones(featureNum)
        p0Denom = 2.0
        p1Denom = 2.0
        for i in range(dataNum):
            if self.labelMat[i] == 1:
                p1Num += trainMat[i]
                p1Denom += np.sum(trainMat[i])
            else:
                p0Num += trainMat[i]
                p0Denom += np.sum(trainMat[i])
        # Take natural logs to avoid underflow, i.e. the effect of
        # multiplying many very small numbers together
        self.p0Vec = np.log(p0Num / p0Denom)
        self.p1Vec = np.log(p1Num / p1Denom)

    def classify(self, data):
        data = np.asarray(data)
        p1 = np.sum(data * self.p1Vec) + np.log(self.pLabel1)
        p0 = np.sum(data * self.p0Vec) + np.log(1.0 - self.pLabel1)
        if p1 > p0:
            return 1
        else:
            return 0

    def test(self):
        self.loadDataSet('testNB')
        myVocablist = self.createVocablist(self.dataMat)
        trainMat = []
        for post in self.dataMat:
            trainMat.append(self.bagOfWords2VecMN(myVocablist, post))
        self.labelMat = np.array(self.labelMat)
        # print(self.labelMat)
        # print(np.array(trainMat))
        self.train(np.array(trainMat))
        test = ['stupid', 'ladmation', 'dog']
        testVec = self.bagOfWords2VecMN(myVocablist, test)
        print(test, 'is classified as:', self.classify(testVec))


if __name__ == '__main__':
    NB = NaiveBayesClassifier()
    NB.test()
```

Experiment result: ['stupid', 'ladmation', 'dog'] is classified as: 1
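The `train` method stores log-probabilities, and `classify` adds them up instead of multiplying raw probabilities. The small sketch below shows why that matters. The probability values, the document length of 400 words, and the helpers `product` and `log_score` are made-up assumptions for illustration only.

```python
import math

# Hypothetical per-word likelihoods of one 400-word document under two classes.
probs_class0 = [0.01] * 400
probs_class1 = [0.02] * 400


def product(values):
    """Multiply the raw probabilities together (prone to underflow)."""
    result = 1.0
    for v in values:
        result *= v
    return result


def log_score(values, prior):
    """Log of prior times the product of values, computed as a sum of logs."""
    return math.log(prior) + sum(math.log(v) for v in values)


raw0, raw1 = product(probs_class0), product(probs_class1)
print(raw0, raw1)  # both products underflow to 0.0, so the classes cannot be compared

log0 = log_score(probs_class0, prior=0.5)
log1 = log_score(probs_class1, prior=0.5)
print(log0, log1)  # finite negative numbers
print("predicted class:", 1 if log1 > log0 else 0)
```

Because the logarithm is monotonic, comparing the summed logs picks the same class that comparing the true products would, without ever forming the tiny products.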

For other implementations and applications of Bayes, click here.

(End)

The extraordinary is simply the ordinary raised to the Nth power.

-----By Ada
